Essence

Data Source Quality Filtering (DSQF) is the process of validating, cleaning, and aggregating external price feeds before they are used by a decentralized options protocol. In the context of crypto derivatives, DSQF is not a passive data hygiene practice; it is a fundamental security mechanism. The reliability of a derivatives contract (its settlement, collateralization, and liquidation) hinges entirely on the integrity of the reference price.

Flawed data inputs can lead to catastrophic liquidations, protocol insolvency, and systemic risk. DSQF ensures that the on-chain representation of an asset’s price accurately reflects its true market value, protecting the protocol from adversarial manipulation and transient market anomalies.

Data Source Quality Filtering ensures that the reference price used for derivatives settlement accurately reflects market reality, protecting against manipulation and systemic failure.

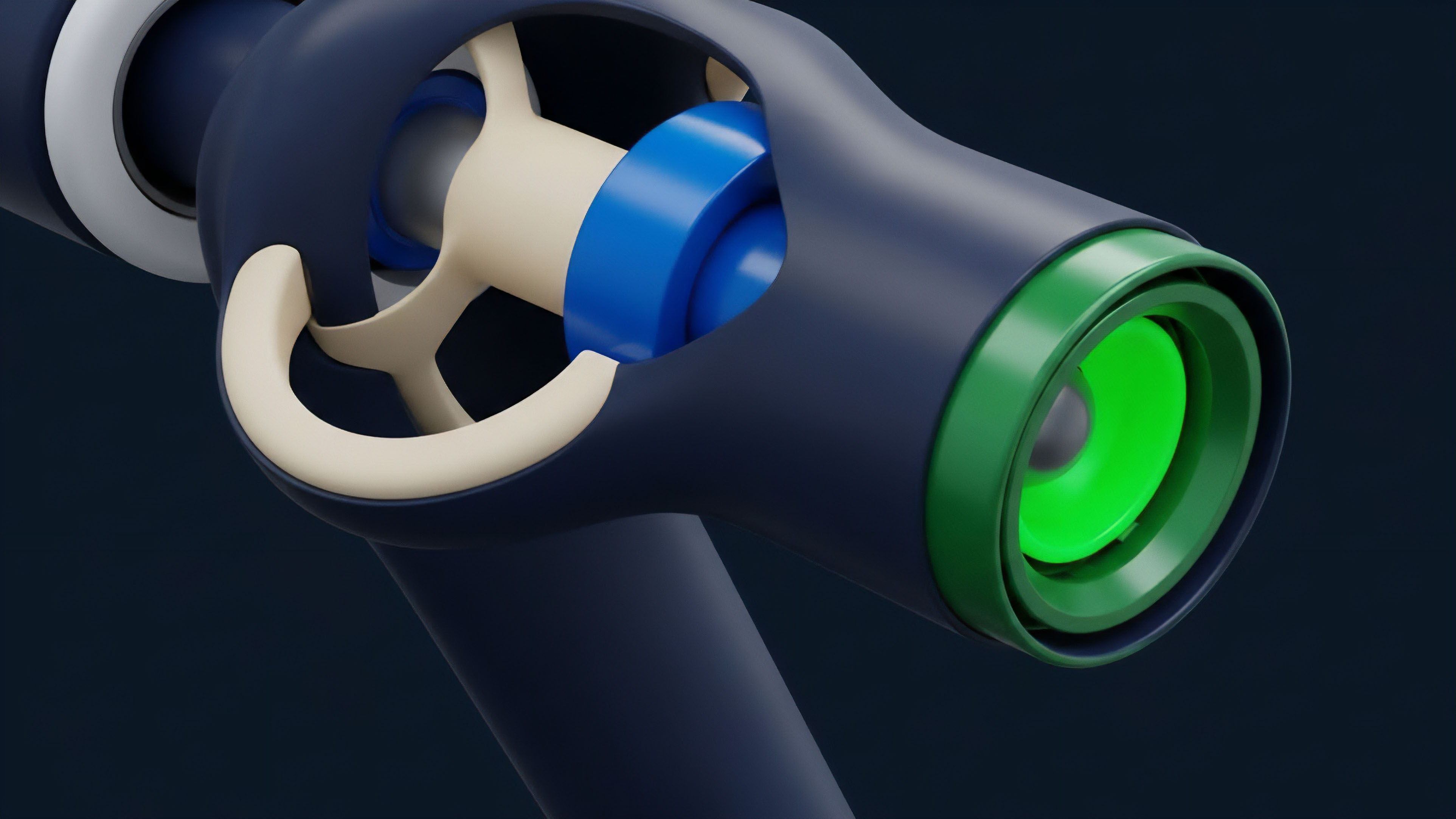

The challenge in decentralized finance (DeFi) options is that the market for the underlying asset is highly fragmented across dozens of exchanges, both centralized and decentralized. A single data feed from one source can be easily manipulated by a large order or a flash loan attack. DSQF addresses this by aggregating data from multiple sources, applying statistical analysis to detect outliers, and ensuring that a single source cannot unilaterally dictate the price.

This process is particularly relevant for options protocols, where small discrepancies in the underlying asset price can lead to large, cascading liquidations due to high leverage.

Origin

The concept of data quality filtering originates in traditional financial markets, where data feeds from exchanges like the NYSE or CME are standardized and regulated. The data quality challenge in TradFi primarily centers on latency and market access, not fundamental data integrity, because exchanges themselves serve as trusted, centralized sources of truth.

The advent of DeFi introduced the “oracle problem,” where smart contracts require off-chain data to execute logic but cannot access it directly. Early solutions involved simple single-source oracles, which quickly proved vulnerable to price manipulation. The necessity for sophisticated DSQF emerged during the “DeFi Summer” of 2020.

Several protocols experienced significant losses due to flash loan attacks that manipulated single-source price oracles. These attacks highlighted a critical flaw in the architecture: a derivatives contract’s security is only as strong as its weakest data input. This led to a paradigm shift from simple oracle solutions to decentralized oracle networks (DONs) that actively filter data.

The focus shifted from merely fetching data to verifying its quality, integrity, and resistance to manipulation before consumption by a smart contract.

Theory

The theoretical underpinnings of DSQF combine elements of statistical finance and adversarial game theory. From a quantitative perspective, the goal is to derive a robust “true price” by analyzing a set of noisy and potentially malicious inputs.

This involves several statistical techniques:

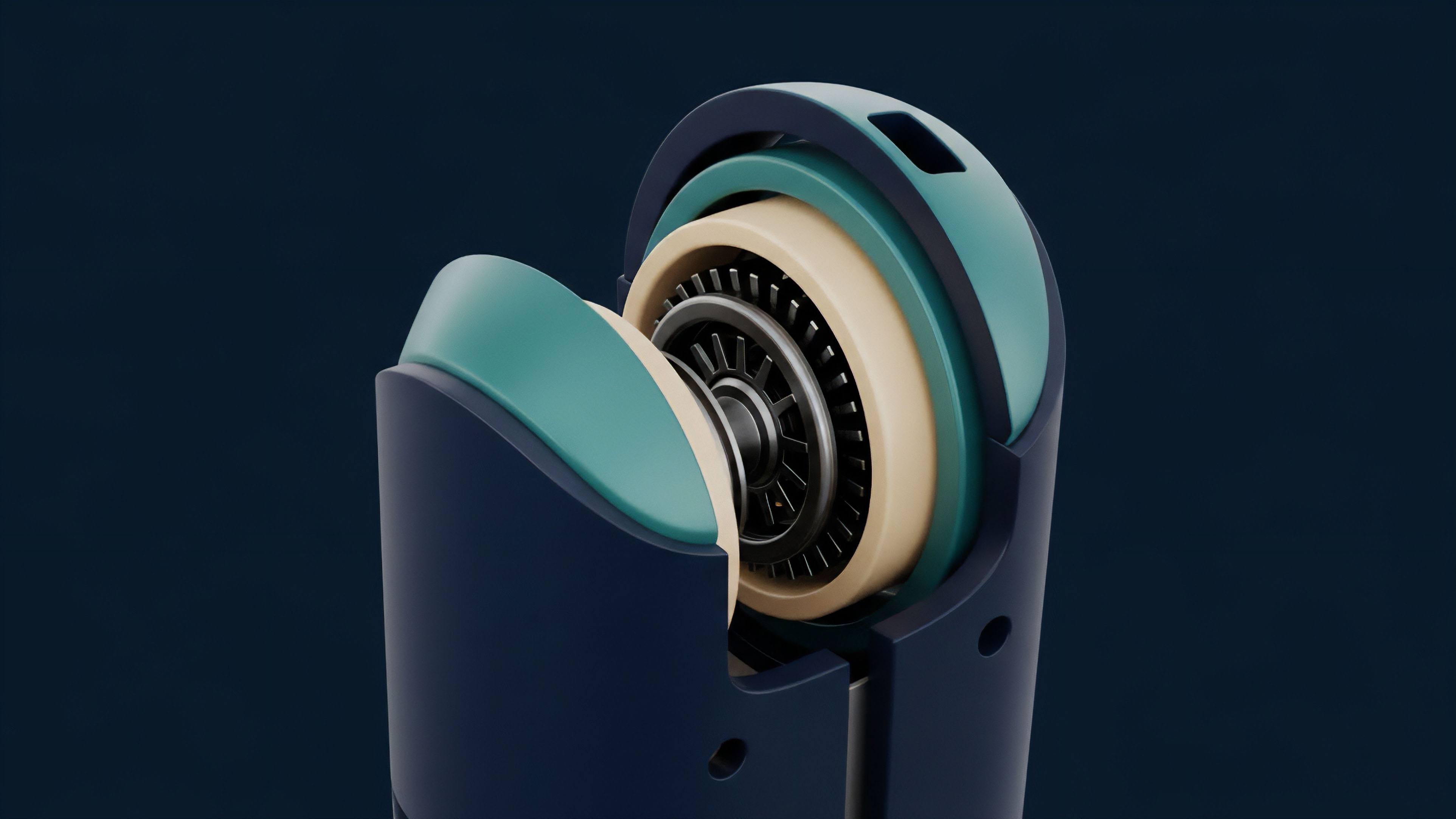

- Outlier Detection: This process identifies data points that deviate significantly from the statistical norm of the aggregated data set. Methods like Z-score analysis or median absolute deviation (MAD) are applied to identify and discard extreme values that could indicate manipulation or data errors.

- Volume-Weighted Average Price (VWAP): To reflect market depth and liquidity, DSQF often calculates a VWAP across the aggregated exchanges. Each price is weighted by the volume traded at that price, so illiquid exchanges have less influence on the final price than highly liquid ones.

- Latency Mitigation: Price data must be fresh. DSQF protocols apply time-based filters, penalizing or discarding data points that exceed a certain age threshold. This prevents stale data from being used in high-frequency liquidation calculations, especially during periods of high volatility.
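The three techniques above can be chained into a single filtering pass. The following is a minimal sketch, not a reference implementation: the `Quote` fields, the 30-second age limit, and the 3.5 cutoff on the MAD-based modified Z-score are all illustrative assumptions rather than values taken from any particular protocol.

```python
from dataclasses import dataclass
from statistics import median


@dataclass(frozen=True)
class Quote:
    source: str    # hypothetical venue label
    price: float   # price reported by the venue
    volume: float  # volume traded over the reporting window
    age_s: float   # seconds since the quote was observed


def drop_stale(quotes, max_age_s=30.0):
    """Latency filter: discard quotes older than max_age_s seconds."""
    return [q for q in quotes if q.age_s <= max_age_s]


def drop_outliers(quotes, threshold=3.5):
    """Outlier filter: discard quotes whose MAD-based modified Z-score exceeds threshold."""
    prices = [q.price for q in quotes]
    med = median(prices)
    mad = median(abs(p - med) for p in prices)
    if mad == 0:
        # No measurable spread around the median, so the score is undefined;
        # this sketch leaves the quotes untouched in that case.
        return list(quotes)
    return [q for q in quotes if 0.6745 * abs(q.price - med) / mad <= threshold]


def vwap(quotes):
    """Volume-weighted average price across the surviving quotes."""
    total_volume = sum(q.volume for q in quotes)
    if total_volume <= 0:
        raise ValueError("no traded volume to weight by")
    return sum(q.price * q.volume for q in quotes) / total_volume


def reference_price(quotes):
    """Apply the staleness filter, then the outlier filter, then VWAP."""
    fresh = drop_stale(quotes)
    if not fresh:
        raise ValueError("all quotes are stale")
    return vwap(drop_outliers(fresh))
```

The ordering is a design choice in this sketch: stale quotes are removed first so they cannot distort the median and MAD used by the outlier filter.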

From a game-theoretic standpoint, DSQF assumes an adversarial environment. The system must be designed to make the cost of manipulation exceed the potential profit. A well-designed filtering mechanism increases the capital required to manipulate a price feed by requiring an attacker to execute large trades across multiple, high-volume exchanges simultaneously to move the aggregated price.
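To make the "multiple exchanges simultaneously" point concrete, the sketch below compares how a mean and a median respond as an attacker corrupts more sources. It assumes five equally weighted sources and a hypothetical helper `attacked_price`; it does not model the capital needed to move each venue.

```python
from statistics import mean, median


def attacked_price(honest_prices, n_corrupted, fake_price, aggregate):
    """Aggregate the reports after the first n_corrupted sources are replaced by fake_price."""
    reports = [fake_price] * n_corrupted + list(honest_prices[n_corrupted:])
    return aggregate(reports)


honest = [100.0, 100.1, 99.9, 100.2, 99.8]

# One corrupted source drags the mean far from the market but barely moves the median.
mean_one = attacked_price(honest, 1, 200.0, mean)
median_one = attacked_price(honest, 1, 200.0, median)

# The median only follows the attacker once a majority of sources is corrupted.
median_two = attacked_price(honest, 2, 200.0, median)
median_three = attacked_price(honest, 3, 200.0, median)
```

With these inputs the median stays at 100.1 and 100.2 for one and two corrupted sources, and jumps to 200.0 only when three of the five are controlled, whereas the mean is already near 120 after a single corrupted source.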

| Filtering Technique | Objective | Risk Mitigation |
|---|---|---|
| Median Calculation | Determine central tendency | Resilience against single-source outliers |
| Volume Weighting (VWAP) | Reflect market depth | Resistance to low-liquidity exchange manipulation |
| Time-Weighted Averaging (TWAP) | Smooth short-term volatility | Protection against flash loan attacks and transient spikes |
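The TWAP row can be illustrated with a short sketch. It assumes observations arrive as `(timestamp, price)` pairs sorted by time and that each price holds until the next observation; the function name and the 12-second spacing in the example are illustrative choices.

```python
def twap(observations, window_end):
    """Time-weighted average price over [first timestamp, window_end].

    observations: (timestamp, price) pairs sorted by ascending timestamp.
    Each price is weighted by how long it remained the latest observation.
    """
    obs = list(observations)
    if not obs:
        raise ValueError("no observations")
    start = obs[0][0]
    if window_end <= obs[-1][0]:
        raise ValueError("window_end must be after the last observation")
    # Pair each observation with the timestamp at which it stops being current.
    end_times = [t for t, _ in obs[1:]] + [window_end]
    weighted_sum = sum(price * (end - t) for (t, price), end in zip(obs, end_times))
    return weighted_sum / (window_end - start)


# A one-interval spike to 150 inside an otherwise flat 60-second window.
spiky = [(0, 100.0), (12, 100.0), (24, 150.0), (36, 100.0), (48, 100.0)]
smoothed = twap(spiky, window_end=60)
```

Here the 50% spike moves the 60-second TWAP to 110; a longer window dilutes the same spike further, at the cost of a slower response to genuine price moves.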

Approach

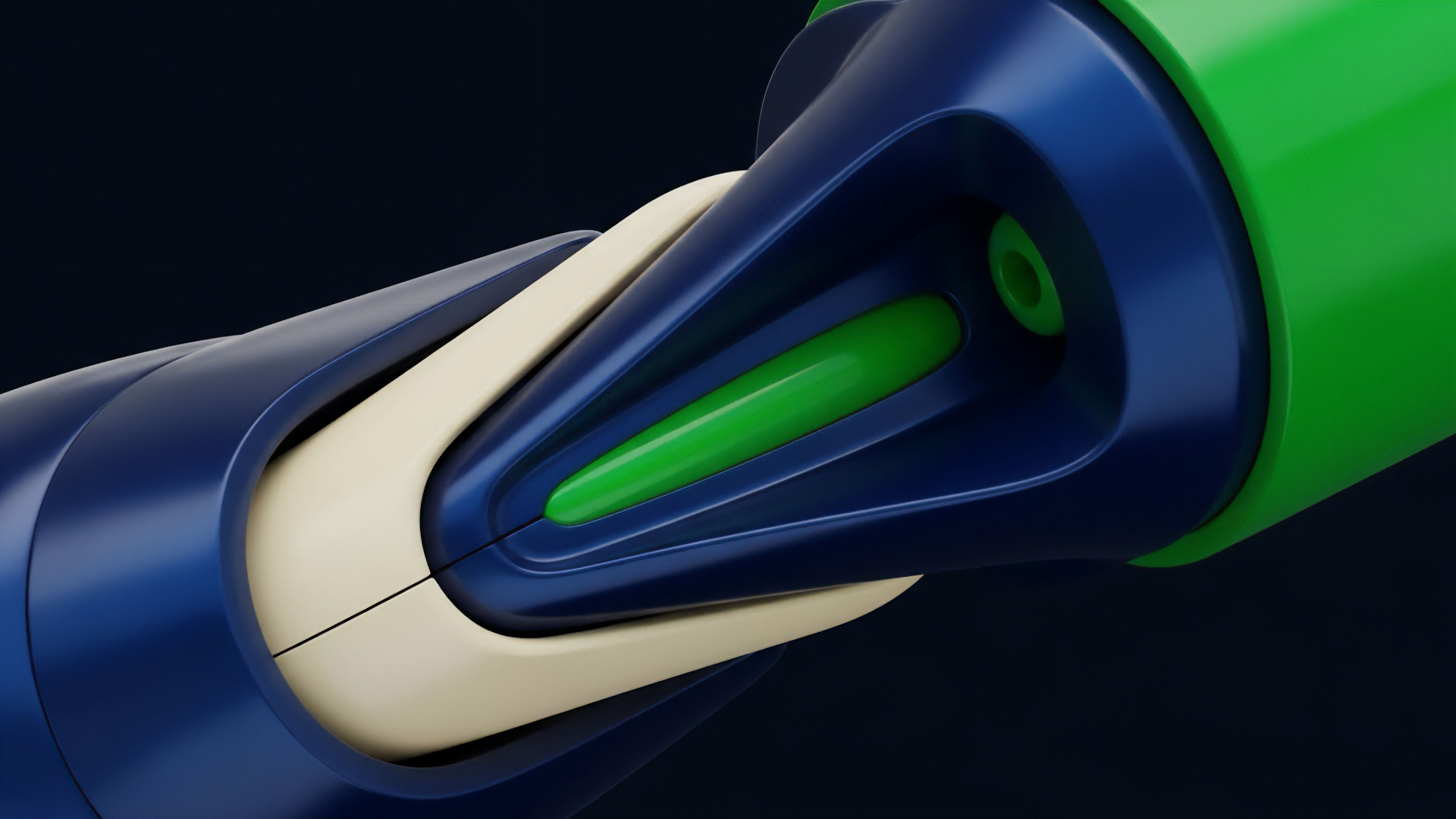

Current implementations of DSQF in crypto options protocols generally follow a multi-layered approach, balancing security with efficiency. The primary approach involves a decentralized oracle network that aggregates data from a diverse set of data providers.

- Data Source Diversity: Protocols select a broad range of data sources, including major centralized exchanges (CEXs) like Binance and Coinbase, as well as decentralized exchanges (DEXs) like Uniswap. The goal is to avoid single points of failure and ensure a robust representation of global liquidity.

- Data Aggregation Layer: The data from these sources is fed into an aggregation layer. This layer performs the core DSQF functions: data normalization (adjusting for different formats and quote conventions), statistical analysis for outlier removal, and calculation of a final reference price (often a median or VWAP).

- On-Chain Validation and Submission: The final filtered price is submitted to the smart contract via a decentralized network of oracle nodes. These nodes are rewarded for submitting accurate data and penalized for submitting malicious data. This economic incentive adds a further layer of security, making it expensive for nodes to collude and manipulate the price.
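As a rough sketch of the aggregation layer described above, the snippet below normalizes venue-specific quotes and then takes a median over node reports once a minimum number have arrived. The `inverted` and `scale` parameters and the quorum of three are assumptions made for illustration; real networks define their own formats and thresholds.

```python
from statistics import median


def normalize(raw_price, inverted=False, scale=1.0):
    """Convert a venue-specific quote into a common 'quote units per base unit' price.

    scale:    multiplier for venues that report fixed-point integers (e.g. 1e-8).
    inverted: True if the venue quotes the pair the other way around.
    """
    price = raw_price * scale
    if price <= 0:
        raise ValueError("price must be positive")
    return 1.0 / price if inverted else price


def aggregate_reports(reports, min_reports=3):
    """Median of node reports, refusing to answer below a minimum quorum."""
    if len(reports) < min_reports:
        raise ValueError("quorum not reached")
    return median(reports)
```

For example, a fixed-point report of `250000000000` with `scale=1e-8` and an inverted quote of `0.0004` both normalize to roughly 2500 before aggregation.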

The choice of filtering parameters (how tightly to filter, how many sources to include, and which weighting algorithm to use) is a trade-off between accuracy and speed. A tightly filtered, highly diverse set of inputs offers greater security against manipulation but introduces latency, potentially causing a lag between the protocol’s reference price and the real-time market price.

The trade-off between data latency and manipulation resistance defines the operational security parameters of a derivatives protocol’s data filtering mechanism.

Evolution

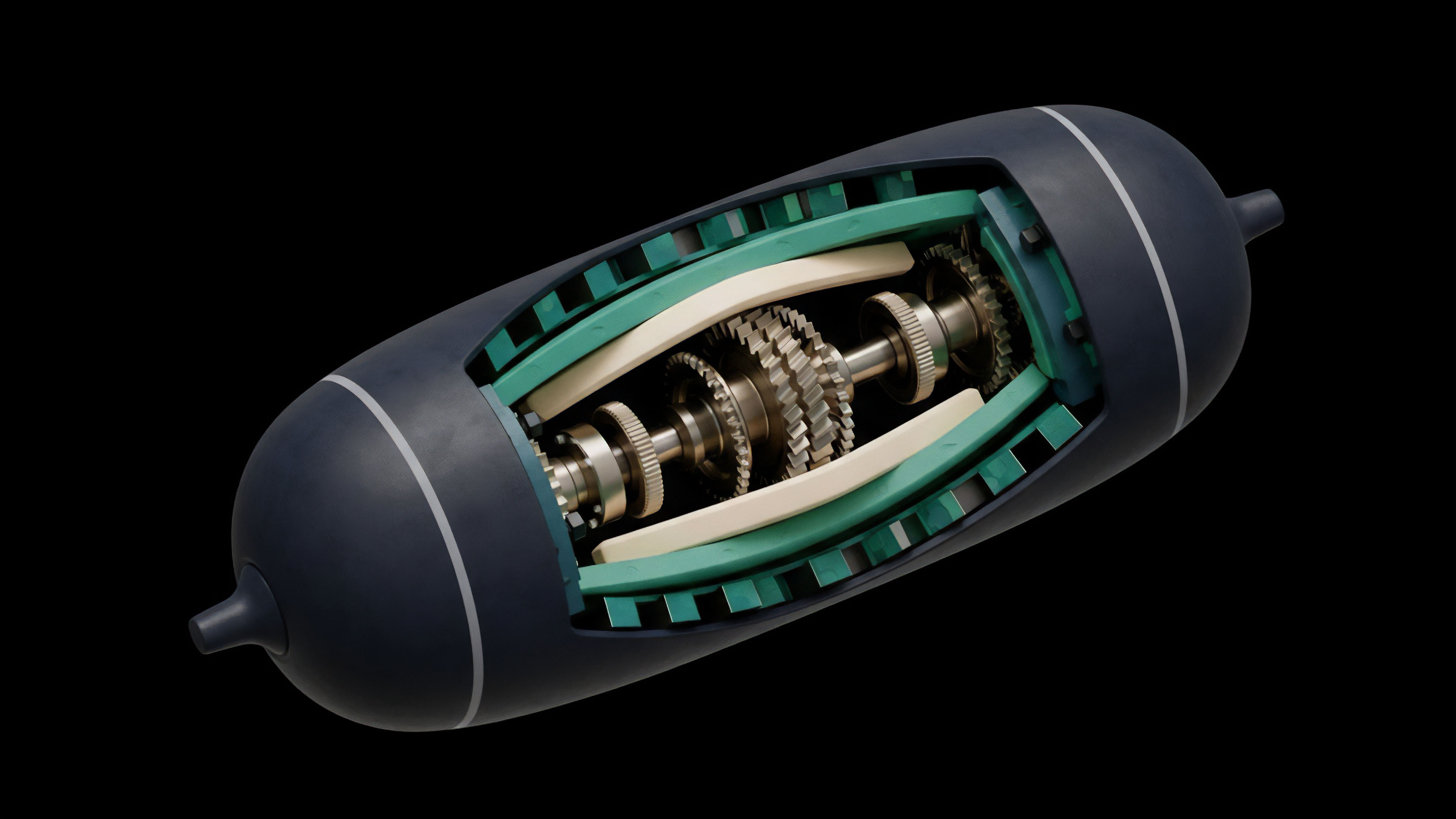

The evolution of DSQF has mirrored the growth in complexity and value locked within the DeFi space. Early oracle designs were simplistic, often relying on a single data source or a small, trusted committee. This architecture proved insufficient as flash loan attacks became prevalent.

The first major evolutionary step was the move to multi-source aggregation, where protocols recognized that data quality required redundancy. The next significant change was the implementation of Time-Weighted Average Price (TWAP) and Volume-Weighted Average Price (VWAP) calculations directly within the oracle mechanism. This shift was a direct response to flash loan attacks that exploit single-block price movements.

By averaging prices over a time window, protocols made it significantly harder for attackers to execute rapid, high-impact manipulations. The current state of DSQF involves a continuous refinement of these aggregation algorithms, incorporating concepts like data source reputation scoring, where data from historically reliable sources receives greater weight than data from new or less proven sources. This creates a feedback loop that improves data quality over time.
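One way to picture reputation scoring is a weighted median combined with a slowly updated score per source. The sketch below is an assumption-laden illustration: the scoring rule (an exponential moving average of hits within a 0.5% tolerance) and the function names are hypothetical, not drawn from a specific oracle network.

```python
def weighted_median(prices, weights):
    """Smallest price at which the cumulative weight reaches half of the total weight."""
    total = sum(weights)
    if total <= 0:
        raise ValueError("weights must sum to a positive value")
    cumulative = 0.0
    for price, weight in sorted(zip(prices, weights)):
        cumulative += weight
        if cumulative >= total / 2:
            return price


def update_reputation(score, reported, reference, tolerance=0.005, alpha=0.1):
    """Move the score toward 1 after an accurate report and toward 0 after a miss."""
    hit = 1.0 if abs(reported - reference) <= tolerance * reference else 0.0
    return (1 - alpha) * score + alpha * hit


# Two established sources (weights 9 and 8) and two new ones (weight 1 each).
prices = [100.0, 100.5, 130.0, 130.0]
weights = [9, 8, 1, 1]
reference = weighted_median(prices, weights)
```

With these inputs the weighted median is 100.5, while an unweighted median of the same four prices would be 115.25, so the two low-reputation sources have little influence until they build a track record.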

Horizon

Looking ahead, the future of DSQF will likely diverge into two primary pathways. The first involves a continued refinement of external data feeds through more advanced statistical modeling. This includes incorporating real-time market depth data from order books, rather than just last-trade prices, to better understand market pressure and prevent manipulation.
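Two simple depth-aware measures illustrate what order book data adds over last-trade prices. This is a sketch under assumptions: `microprice` is the common size-weighted mid of the best bid and ask, and `cost_to_reach` is a hypothetical helper that ignores fees, hidden liquidity, and orders arriving during the attack.

```python
def microprice(bid, bid_size, ask, ask_size):
    """Size-weighted mid price; it leans toward the side with less resting size."""
    total = bid_size + ask_size
    if total <= 0:
        raise ValueError("book has no size at the top level")
    return (bid * ask_size + ask * bid_size) / total


def cost_to_reach(asks, target_price):
    """Quote-currency cost of buying every ask level priced below target_price.

    asks: (price, size) pairs for the sell side of the book.
    """
    return sum(price * size for price, size in asks if price < target_price)


deep_book = [(100.1, 5.0), (100.5, 10.0), (101.0, 20.0), (105.0, 50.0)]
thin_book = [(100.1, 0.5), (105.0, 1.0)]

deep_cost = cost_to_reach(deep_book, 101.0)
thin_cost = cost_to_reach(thin_book, 101.0)
```

In this example, pushing the best ask up to 101.0 costs about 1505.5 on the deeper book and about 50.05 on the thin one, which is the kind of signal a filter could use to discount prices from venues that are cheap to move.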

The second pathway involves a move away from external data feeds entirely for specific derivative types. Protocols may eventually derive prices internally by creating on-chain, self-contained liquidity pools for synthetic assets. This would allow the protocol to calculate prices based on the internal supply and demand dynamics of its own market, eliminating the need for external oracles and DSQF for those specific instruments.
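As an illustration of internally derived pricing, the sketch below uses a constant-product pool, where the price follows from the pool's own reserves. The constant-product rule is an assumption chosen for simplicity (the text does not specify a pricing curve), and fees are omitted.

```python
def spot_price(base_reserve, quote_reserve):
    """Price of one base unit in quote units, implied by the pool's reserves."""
    return quote_reserve / base_reserve


def buy_base(base_reserve, quote_reserve, quote_in):
    """Swap quote_in into the pool, keeping base_reserve * quote_reserve constant.

    Returns (new_base_reserve, new_quote_reserve, base_out).
    """
    if quote_in <= 0:
        raise ValueError("quote_in must be positive")
    k = base_reserve * quote_reserve
    new_quote = quote_reserve + quote_in
    new_base = k / new_quote
    return new_base, new_quote, base_reserve - new_base


# Demand for the base asset raises its internal price without any external feed.
price_before = spot_price(1_000.0, 100_000.0)
new_base, new_quote, base_out = buy_base(1_000.0, 100_000.0, 10_000.0)
price_after = spot_price(new_base, new_quote)
```

In this example the internal price moves from 100 to about 121 after the purchase. The pool's depth then plays the role that filtering plays for external feeds, since a shallow pool is cheap to move.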

The integration of real-world assets (RWAs) into DeFi also poses a new data quality challenge, as these assets require verifiable, off-chain data that is far more difficult to standardize than cryptocurrency prices. This requires new filtering methods that account for data source credibility and regulatory compliance.

The future of data quality filtering in options protocols will likely involve a combination of sophisticated external data aggregation and the development of internal, self-contained pricing mechanisms.

The ultimate goal for DSQF is to achieve a level of data integrity where the system’s resilience is built into its design, rather than relying solely on post-hoc filtering. This involves moving toward verifiable computation, where data providers prove the integrity of their data submission using zero-knowledge proofs.
