Essence

Data reliability in crypto options is the foundational assurance that all inputs (specifically price feeds, volatility surfaces, and collateral valuations) are accurate, timely, and resistant to adversarial manipulation. In a decentralized environment, where a single, trusted central counterparty does not exist, the integrity of the data stream becomes the single point of failure for the entire risk management framework. The system’s ability to calculate margin requirements, determine collateral health, and execute liquidations hinges entirely on the data’s veracity.

If the data feed for the underlying asset is compromised, the option’s pricing model (such as Black-Scholes or its variants) immediately fails to reflect reality, creating an arbitrage opportunity that can lead to a protocol-wide insolvency event. This makes data reliability not a secondary technical consideration, but the primary determinant of a protocol’s financial solvency and systemic resilience. The challenge in a decentralized setting stems from the oracle problem, where off-chain data must be securely brought on-chain for use by smart contracts.

The financial integrity of a crypto options protocol relies on the economic guarantee that the cost of manipulating the data feed is higher than the potential profit from exploiting the protocol. This is a delicate balancing act: options protocols require high-frequency updates to price short-term volatility accurately, and any lag between the market and the feed widens the window of opportunity for manipulation.
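The inequality behind this guarantee can be written down directly. The following is a minimal sketch, not a production risk check: the function name, the inputs, and the `safety_margin` parameter are illustrative assumptions, and estimating the two dollar figures is the hard part in practice.

```python
def manipulation_is_unprofitable(
    cost_to_manipulate: float,
    extractable_profit: float,
    safety_margin: float = 2.0,
) -> bool:
    """Check that attacking the feed costs more than it can earn.

    cost_to_manipulate: estimated capital an attacker must spend to move the feed.
    extractable_profit: estimated value the attacker could take from the protocol.
    safety_margin: hypothetical buffer; 2.0 means the cost must be at least
        twice the profit before the feed is treated as safe.
    """
    if cost_to_manipulate < 0 or extractable_profit < 0:
        raise ValueError("cost and profit must be non-negative")
    if safety_margin < 1.0:
        raise ValueError("safety_margin below 1.0 would accept profitable attacks")
    return cost_to_manipulate >= safety_margin * extractable_profit


print(manipulation_is_unprofitable(5_000_000, 2_000_000))  # True: 5M >= 2 * 2M
print(manipulation_is_unprofitable(5_000_000, 4_000_000))  # False: 5M < 2 * 4M
```

A protocol could apply a check of this kind when setting limits on open interest, since the extractable profit grows with the size of the positions that depend on the feed.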

Data reliability is the non-negotiable prerequisite for maintaining financial solvency in decentralized options markets.

Origin

The necessity for robust data reliability emerged from the early failures of decentralized finance protocols during periods of high market volatility. The initial attempts at creating decentralized options and lending protocols often relied on simple, on-chain price feeds derived from decentralized exchanges (DEXs) like Uniswap. However, these on-chain spot prices were highly susceptible to manipulation within a single block, often funded by flash loans.

An attacker could execute a large trade to artificially spike the price on the DEX, trigger a profitable liquidation against the options protocol at the manipulated price, and then unwind the initial trade, all within a single transaction. The most prominent stress test of these data feeds came during the “Black Thursday” crash of March 2020. Several lending protocols experienced massive liquidations that were triggered by data feeds that were either stale or easily manipulated, resulting in significant losses for users and creating systemic risk.

This event underscored that on-chain data alone was insufficient for robust financial primitives. The subsequent evolution led to the development of dedicated, multi-source oracle networks designed to provide more resilient and manipulation-resistant price feeds, moving away from a single source of truth to a consensus-based model.

Theory

The theoretical foundation of data reliability in options pricing connects directly to the core assumptions of quantitative finance.

Option pricing models, particularly those based on continuous-time processes, assume a specific, verifiable underlying price. When data reliability fails, this assumption breaks down, leading to model risk where the theoretical price deviates significantly from the real-world value. The primary theoretical challenges in decentralized options center on managing latency risk and stale data risk.

Latency risk refers to the delay between a real-world price change and the data feed update on the blockchain. For short-dated options, even a few seconds of latency can drastically alter the implied volatility and subsequently misprice the option. Stale data risk occurs when a price feed update fails to occur during a period of extreme volatility, allowing an attacker to exploit the outdated price for profit.
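A common defense against stale data is to reject any price older than a fixed age before it reaches the risk engine. The sketch below illustrates the idea; `PriceUpdate`, `StalePriceError`, `fresh_price`, and the 60-second default are hypothetical names and values chosen for this example, not part of any specific oracle API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PriceUpdate:
    price: float
    timestamp: float  # Unix seconds at which the oracle published this value


class StalePriceError(Exception):
    """Raised when the latest oracle update is too old to trust."""


def fresh_price(update: PriceUpdate, now: float, max_age_seconds: float = 60.0) -> float:
    """Return the price only if the update is recent enough; otherwise refuse."""
    age = now - update.timestamp
    if age < 0:
        raise ValueError("update timestamp is in the future")
    if age > max_age_seconds:
        raise StalePriceError(
            f"price is {age:.0f}s old, limit is {max_age_seconds:.0f}s"
        )
    return update.price


update = PriceUpdate(price=100.0, timestamp=1_000.0)
print(fresh_price(update, now=1_030.0))  # 30s old, accepted: prints 100.0
```

Refusing to act is usually the safer failure mode here: a paused liquidation can be retried once the feed recovers, while a liquidation executed on an outdated price cannot be undone.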

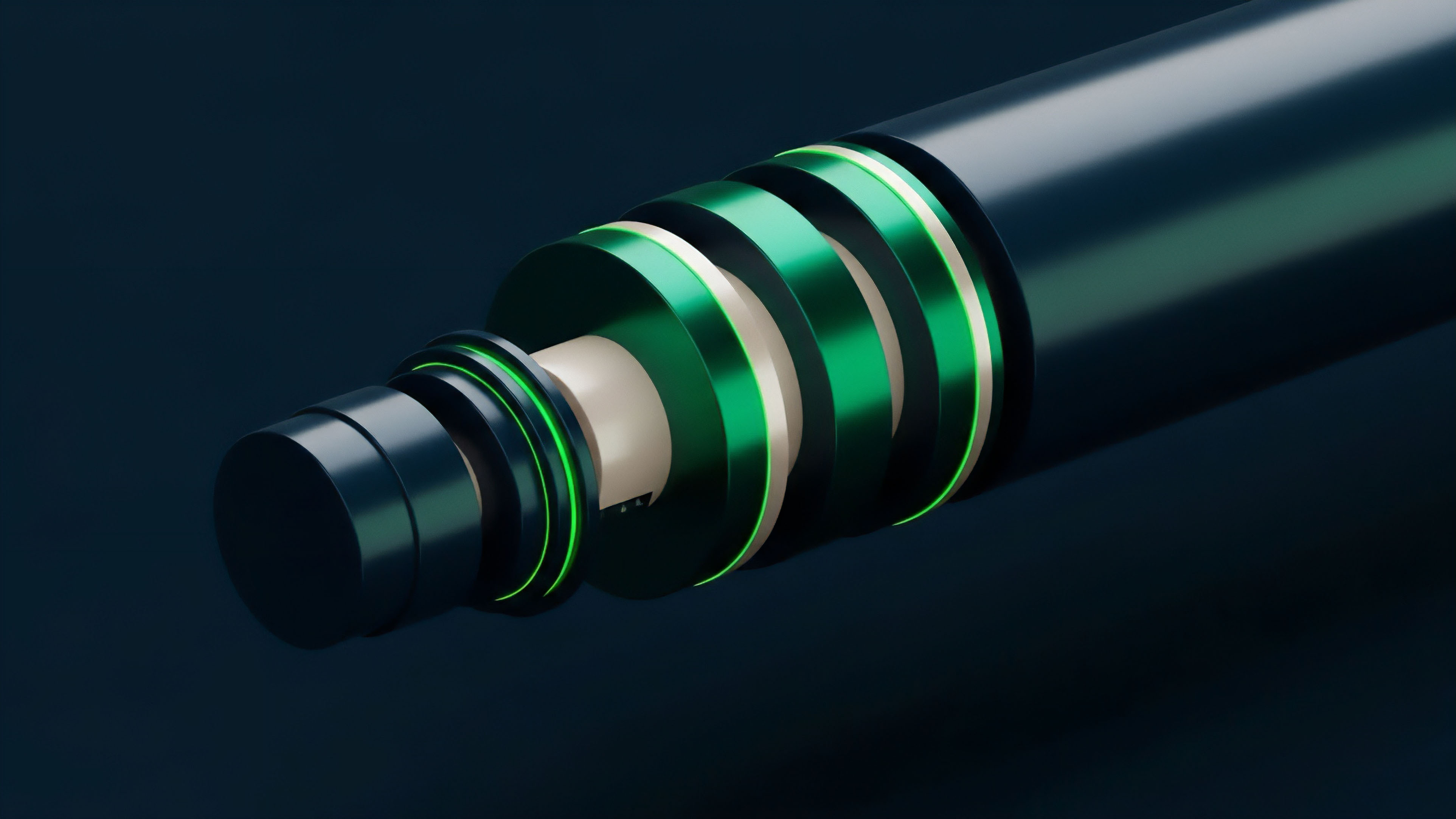

To mitigate these risks, protocols employ sophisticated data aggregation techniques. The use of Time-Weighted Average Price (TWAP) or Volume-Weighted Average Price (VWAP) oracles is common practice. These mechanisms average prices over a defined window, weighting each price by how long it persisted (TWAP) or by the volume traded at it (VWAP), which makes single-block manipulation significantly more expensive.

However, this introduces a trade-off: increased manipulation resistance comes at the cost of higher latency, which can be detrimental for protocols requiring high-frequency updates for accurate risk management.
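The smoothing effect of a TWAP, and the lag it introduces, can be seen in a few lines. This is a simplified sketch that assumes each observed price holds until the next observation; the `twap` function and its input format are illustrative and do not mirror any particular on-chain implementation.

```python
from typing import Sequence, Tuple


def twap(
    observations: Sequence[Tuple[float, float]],
    window_start: float,
    window_end: float,
) -> float:
    """Time-weighted average price over [window_start, window_end].

    observations: (timestamp, price) pairs sorted by timestamp. Each price
    is assumed to hold until the next observation (or the window end).
    """
    if window_end <= window_start:
        raise ValueError("window_end must be after window_start")
    weighted_sum = 0.0
    covered = 0.0
    for i, (ts, price) in enumerate(observations):
        next_ts = observations[i + 1][0] if i + 1 < len(observations) else window_end
        start = max(ts, window_start)  # clip the interval to the window
        end = min(next_ts, window_end)
        if end > start:
            weighted_sum += price * (end - start)
            covered += end - start
    if covered == 0:
        raise ValueError("no observations cover the window")
    return weighted_sum / covered


# A 2-second spike to 150 inside a 30-second window of otherwise flat prices.
obs = [(0, 100.0), (10, 100.0), (20, 150.0), (22, 100.0), (30, 100.0)]
print(round(twap(obs, 0, 30), 2))  # 103.33
```

In this example a 50% spike in the spot price moves the TWAP by only about 3.3%, so an attacker would have to sustain the distorted price for much longer to have the same effect. The same averaging means a genuine price move is also reflected slowly, which is the latency cost described above.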

Greeks and Data Impact

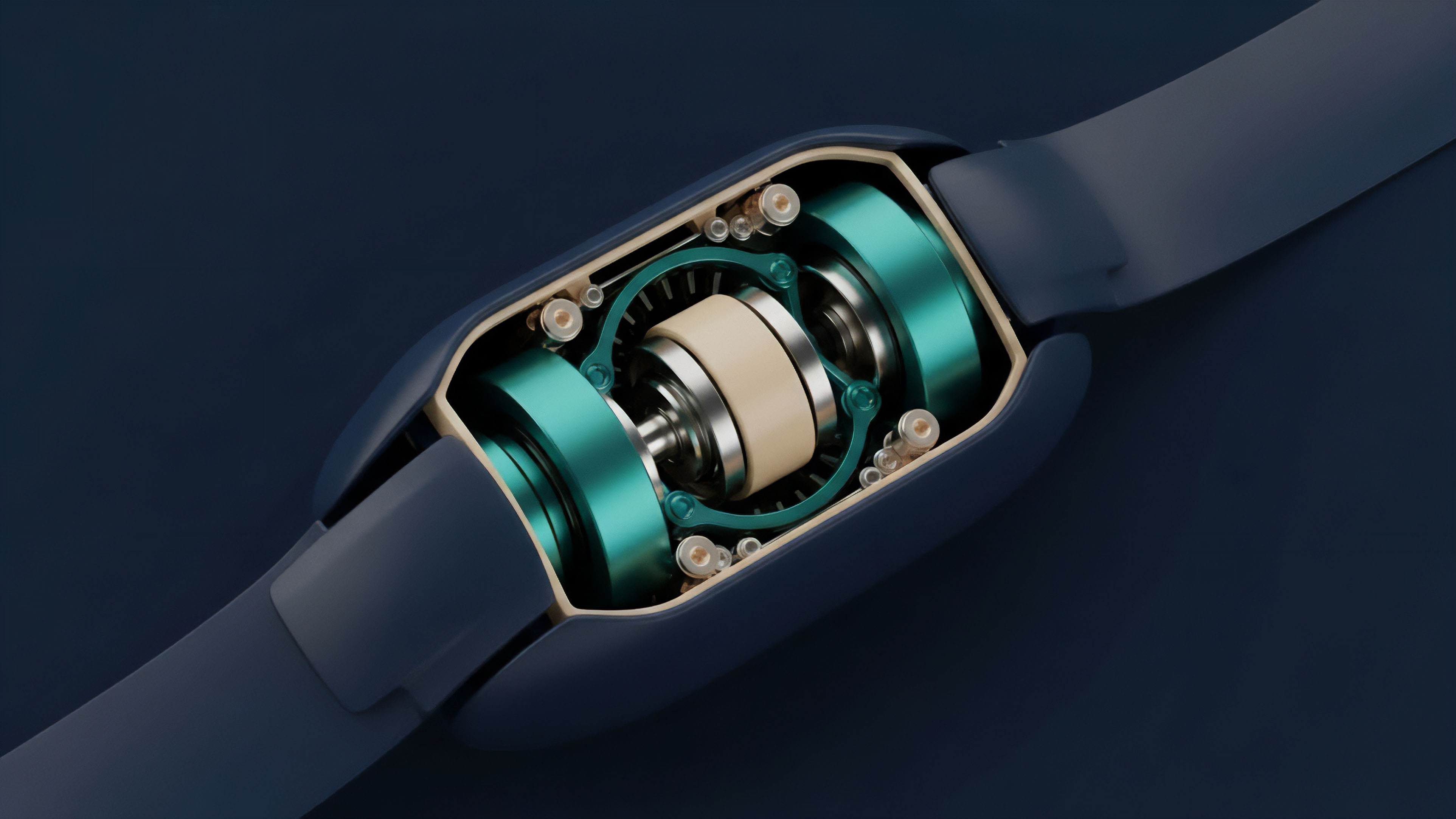

The reliability of data directly influences the calculation of option Greeks, which measure risk sensitivities. The Delta of an option, representing its sensitivity to changes in the underlying asset price, is particularly susceptible to data latency. An options protocol must accurately calculate the Delta of a user’s position to maintain a properly collateralized portfolio.

If the underlying price data is stale, the protocol’s risk engine will calculate an incorrect Delta, leading to an under-collateralized position that can be exploited by other market participants. Similarly, Vega, which measures sensitivity to volatility changes, relies on accurate inputs for implied volatility. If the data feed for the underlying asset’s price history or current market movements is unreliable, the calculated implied volatility will be inaccurate, leading to mispricing of options and incorrect hedging strategies.
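To make the effect of a stale price on the Greeks concrete, the sketch below computes Delta and Vega for a European call under the standard Black-Scholes formulas and compares a stale spot price with the live one. The strike, volatility, expiry, and the 5% price gap are made-up illustrative inputs, and `call_delta_vega` is a hypothetical helper name.

```python
import math


def _norm_cdf(x: float) -> float:
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def _norm_pdf(x: float) -> float:
    """Standard normal probability density function."""
    return math.exp(-0.5 * x * x) / math.sqrt(2.0 * math.pi)


def call_delta_vega(spot: float, strike: float, vol: float,
                    t_years: float, rate: float = 0.0) -> tuple:
    """Black-Scholes Delta and Vega of a European call (no dividends)."""
    if min(spot, strike, vol, t_years) <= 0:
        raise ValueError("spot, strike, vol and t_years must be positive")
    sqrt_t = math.sqrt(t_years)
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol * vol) * t_years) / (vol * sqrt_t)
    delta = _norm_cdf(d1)
    vega = spot * _norm_pdf(d1) * sqrt_t  # per 1.00 change in volatility
    return delta, vega


# One-day call, strike 100, 80% annualized volatility (illustrative inputs).
stale_delta, stale_vega = call_delta_vega(spot=100.0, strike=100.0, vol=0.8, t_years=1 / 365)
live_delta, live_vega = call_delta_vega(spot=95.0, strike=100.0, vol=0.8, t_years=1 / 365)

print(f"delta with stale spot: {stale_delta:.3f}")
print(f"delta with live spot:  {live_delta:.3f}")
```

With these inputs the stale price makes the option look at-the-money when the market has already moved it well out-of-the-money, so any hedge or margin figure derived from the stale Delta is sized for a position that no longer exists.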

| Oracle Design Principle | Manipulation Resistance | Latency Trade-Off | Best Use Case |
|---|---|---|---|
| Single Source Feed | Low | Very Low | High-frequency, low-value applications; susceptible to exploits. |
| TWAP/VWAP Aggregation | Medium | Medium (Delayed) | Collateral valuation and liquidation triggers; high cost to manipulate. |
| Decentralized Aggregation | High | High (Consensus required) | Core protocol pricing and long-term risk management. |

Approach

Current approaches to ensuring data reliability in crypto options rely heavily on multi-layered security architectures and economic incentives. The core design challenge is creating a data feed that is simultaneously fast enough for accurate options pricing and secure enough to prevent manipulation. This involves a shift from a technical problem to an economic and game-theoretic one.

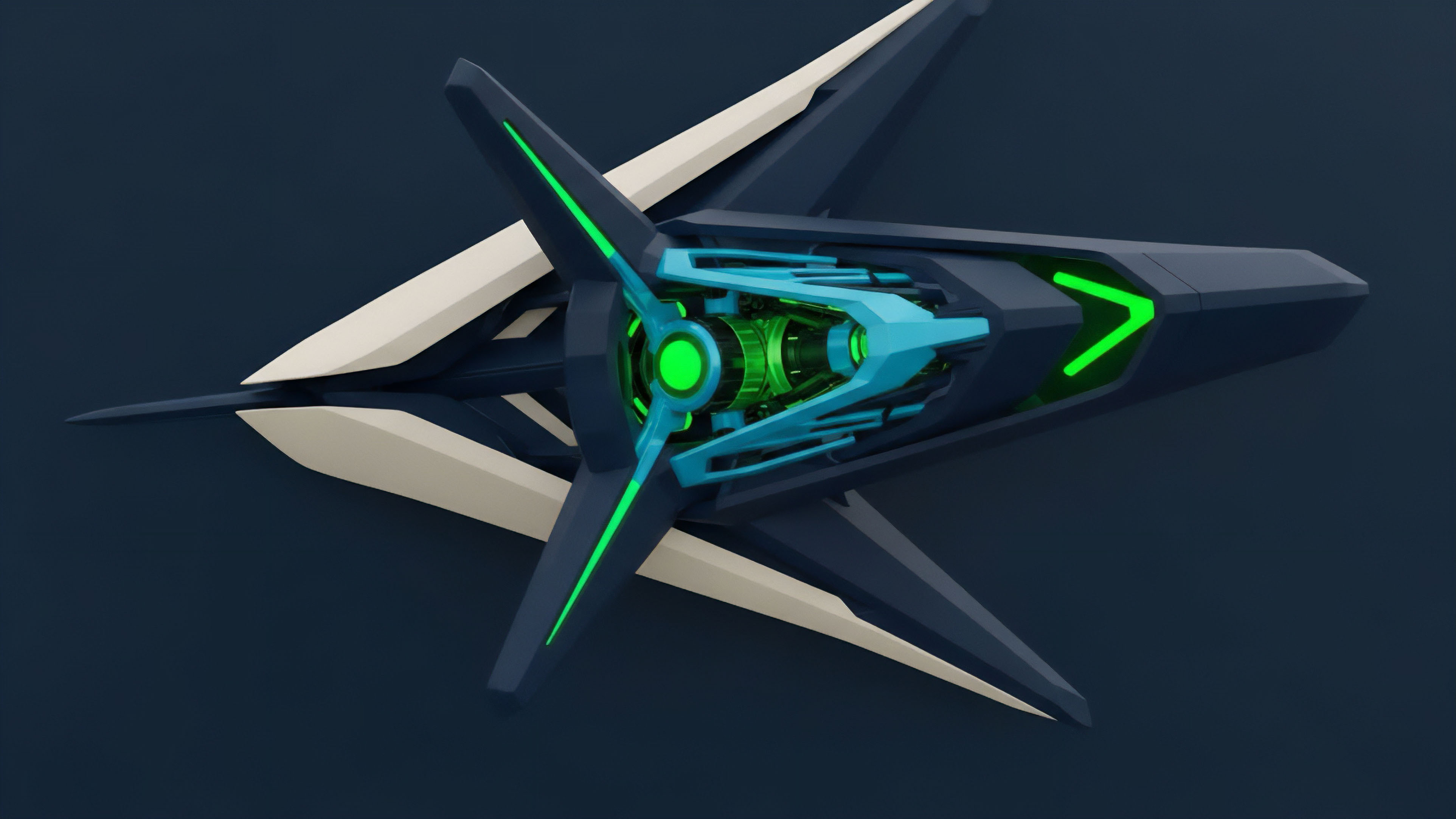

Oracle Network Architecture

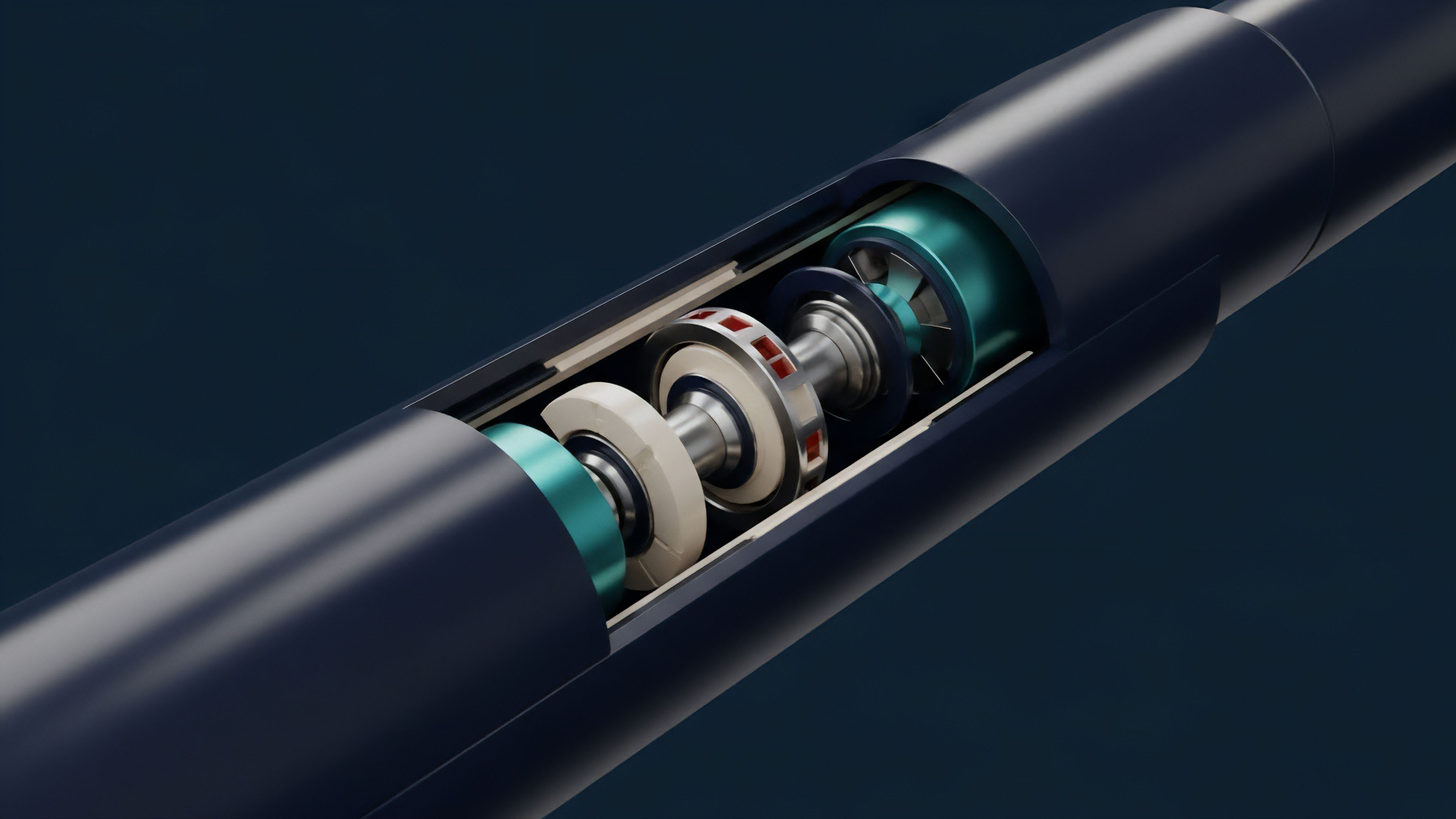

Modern decentralized options protocols utilize oracle networks, which are distinct from simple price feeds. These networks aggregate data from multiple independent sources, or nodes, to create a robust, decentralized price feed. The process involves several steps:

- Data Source Diversification: Nodes pull data from multiple centralized exchanges (CEXs) and high-liquidity DEXs. This prevents a single exchange from manipulating the aggregate price.

- Consensus Mechanism: The oracle network uses a consensus mechanism (often based on a median or average calculation) to determine the “true” price from the various inputs. With a median, a single malicious node cannot poison the feed; moving the result requires a majority of nodes to collude. A plain average offers weaker protection, since one extreme report shifts it.

- Economic Incentives: Nodes are incentivized with rewards for providing accurate data and penalized (slashed) for submitting incorrect or malicious data. The economic design aims to ensure that the cost of collusion among nodes exceeds the potential profit from manipulating the options protocol.
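The aggregation and incentive steps above can be sketched with a median over node reports plus a deviation check that flags candidates for penalties. This is a minimal illustration under assumed parameters: the function names, the three-report quorum, and the 2% tolerance are hypothetical, and real networks add signatures, staking, and dispute processes that are omitted here.

```python
from statistics import median
from typing import Dict, List


def aggregate_price(reports: Dict[str, float], min_reports: int = 3) -> float:
    """Median of the node reports, refusing to answer below a quorum."""
    valid = [price for price in reports.values() if price > 0]
    if len(valid) < min_reports:
        raise ValueError(f"need at least {min_reports} valid reports, got {len(valid)}")
    return median(valid)


def deviating_reporters(reports: Dict[str, float], agreed_price: float,
                        tolerance: float = 0.02) -> List[str]:
    """Names of nodes whose report differs from the agreed price by more than tolerance."""
    return sorted(
        name for name, price in reports.items()
        if abs(price - agreed_price) / agreed_price > tolerance
    )


reports = {
    "node_a": 100.0,
    "node_b": 100.2,
    "node_c": 99.9,
    "node_d": 100.1,
    "node_e": 500.0,  # malicious or faulty report
}
agreed = aggregate_price(reports)
print(agreed)                                # 100.1
print(deviating_reporters(reports, agreed))  # ['node_e']
```

The outlier at 500 has no effect on the median, whereas a simple mean of the same five reports would be pulled far above the market price. That difference is why a median is the more common choice when individual reporters cannot be fully trusted.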

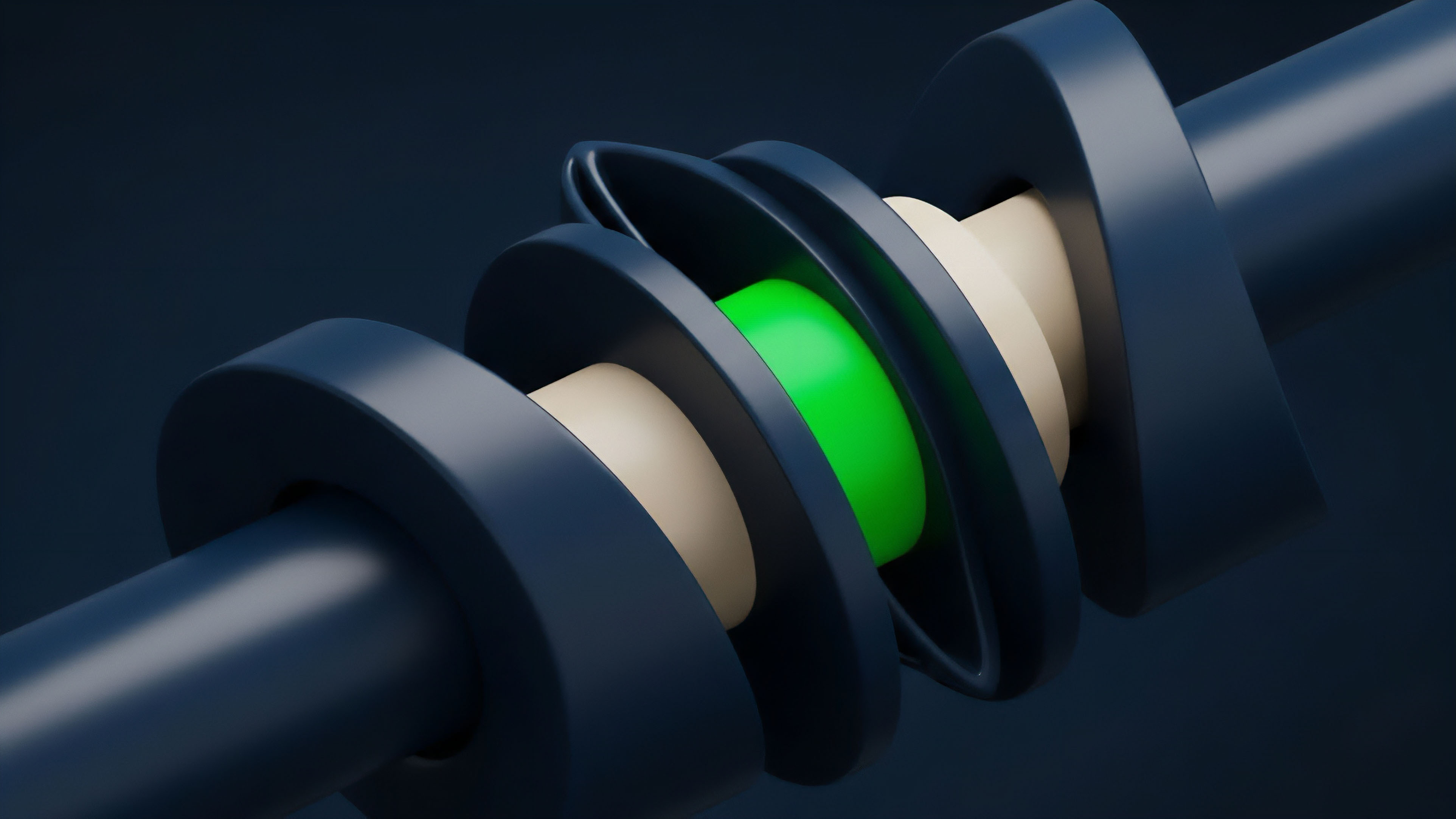

Liquidation Mechanism Design

The design of the liquidation mechanism is directly tied to data reliability. A well-designed protocol uses a time delay between a data update and the actual liquidation execution. This gives market participants time to respond to price changes and provides a window in which data feed anomalies can be corrected before a liquidation occurs.

The liquidation threshold itself must be carefully calibrated based on the underlying asset’s volatility and the oracle’s latency characteristics.
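One way to implement such a delay is to require that a position stay below its threshold for a minimum period before it can be liquidated. The sketch below assumes this interpretation; `LiquidationTimer`, the 30-second delay, and the 1.10 collateral ratio threshold are illustrative choices rather than values from any specific protocol.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class LiquidationTimer:
    """Allows liquidation only after a position has been unhealthy for delay_seconds."""
    delay_seconds: float
    unhealthy_since: Optional[float] = None

    def observe(self, collateral_ratio: float, threshold: float, now: float) -> bool:
        """Record one oracle update and return True if liquidation may proceed."""
        if collateral_ratio >= threshold:
            self.unhealthy_since = None  # position recovered, reset the clock
            return False
        if self.unhealthy_since is None:
            self.unhealthy_since = now  # first unhealthy reading starts the clock
        return now - self.unhealthy_since >= self.delay_seconds


timer = LiquidationTimer(delay_seconds=30.0)
print(timer.observe(collateral_ratio=1.05, threshold=1.10, now=0.0))   # False: clock starts
print(timer.observe(collateral_ratio=1.20, threshold=1.10, now=10.0))  # False: recovered
print(timer.observe(collateral_ratio=1.00, threshold=1.10, now=40.0))  # False: clock restarts
print(timer.observe(collateral_ratio=1.00, threshold=1.10, now=70.0))  # True: 30s unhealthy
```

A single anomalous update that is corrected by the next one never triggers a liquidation under this scheme. The cost is that a genuinely insolvent position stays open for the length of the delay, which is why the delay and the threshold need to be calibrated together.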

The integrity of a decentralized options protocol’s risk engine depends entirely on the economic guarantees of its oracle network.

Evolution

The evolution of data reliability for crypto options has progressed from reactive fixes to proactive architectural design. Early protocols focused on preventing single-point failures; current systems prioritize systemic resilience through redundancy and economic alignment. The focus has expanded beyond just the spot price of the underlying asset to include a wider range of data points required for sophisticated options trading.

Data Types and Complexity

As decentralized options have grown more complex, the required data inputs have expanded significantly. The current generation of protocols requires reliable feeds for:

- Implied Volatility (IV) Surfaces: A complete options market requires accurate data on implied volatility across different strikes and expirations. Providing this data reliably is significantly more complex than providing a single spot price, as it involves aggregating data from multiple options markets.

- Interest Rate Benchmarks: Protocols offering interest rate swaps or options on interest rates require reliable feeds for benchmark rates such as SOFR, or for reference lending rates in DeFi (e.g. Aave or Compound rates).

- Cross-Chain Data: As protocols expand to multiple chains, data reliability must account for cross-chain communication delays and security challenges.

Risk Management Layers

The most significant evolution in data reliability is the implementation of multiple layers of risk management. Protocols now incorporate circuit breakers and price deviation guards that automatically pause liquidations or trading if the price feed deviates significantly from an expected range. This provides a buffer against sudden, malicious price spikes.
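A price deviation guard reduces to a comparison between the incoming price and a trusted reference, such as the last accepted price or a TWAP. The following sketch shows the idea; `should_pause` and the 10% limit are hypothetical, and the right limit depends on the asset's normal volatility.

```python
def should_pause(new_price: float, reference_price: float,
                 max_deviation: float = 0.10) -> bool:
    """Return True when new_price strays too far from the reference price.

    max_deviation is a fraction of the reference price (0.10 means 10%).
    """
    if new_price <= 0 or reference_price <= 0:
        raise ValueError("prices must be positive")
    deviation = abs(new_price - reference_price) / reference_price
    return deviation > max_deviation


print(should_pause(new_price=103.0, reference_price=100.0))  # False: 3% move
print(should_pause(new_price=130.0, reference_price=100.0))  # True: 30% move
```

A guard like this cannot tell a manipulated spike from a real crash, so it is typically used to pause and escalate rather than to discard the price outright.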

| Data Reliability Challenge | Initial Response (2020) | Current Solution (2024) |
|---|---|---|
| Single point of failure price feed | Simple on-chain TWAP from DEX | Decentralized oracle network aggregation |
| Flash loan manipulation risk | Higher collateralization ratios | Price deviation guards and time delays |
| Lack of volatility data | Manual parameter setting | Aggregated volatility surface feeds |

Horizon

The future of data reliability in crypto options involves a deeper integration of zero-knowledge technology and advanced incentive engineering. The current challenge remains the trade-off between speed and security; future systems aim to reduce this friction by leveraging off-chain computation and verifiable proofs.

Zero-Knowledge Oracles

A significant development on the horizon is the use of zero-knowledge proofs (ZKPs) to attest to the integrity of data that was processed off-chain. This approach allows a data provider to prove that they correctly processed and aggregated data from a specific set of sources without revealing the underlying data itself. This enhances privacy and reduces the data payload required on-chain.

ZKPs could allow for more complex calculations, such as verifiable implied volatility surfaces, to be performed off-chain and proven on-chain with a single, compact proof.

Inter-Protocol Risk Sharing

The next iteration of data reliability will likely involve protocols sharing risk and data feeds more effectively. Rather than each options protocol building its own redundant oracle network, a shared infrastructure for risk-managed data feeds could emerge. This would allow protocols to collectively fund and secure high-quality data streams, creating a more efficient and resilient ecosystem.

The governance of these shared data feeds will require new incentive models to ensure fair participation and prevent collusion among protocols.

The future of data reliability in decentralized options will be defined by zero-knowledge proofs and new economic models that ensure data integrity without sacrificing speed.
