Essence

Data Quality Assessment functions as the structural integrity verification layer for decentralized derivative markets. It serves as the diagnostic process determining whether incoming market feeds, historical trade logs, and oracle-reported values meet the rigorous standards required for accurate margin engine execution and risk management. Without this validation, automated systems risk processing corrupted inputs that lead to erroneous liquidation events or mispriced derivative contracts.

Data Quality Assessment represents the foundational verification of input accuracy required for reliable decentralized derivative pricing and risk management.

This assessment operates across several dimensions. It evaluates data for temporal consistency, checking that timestamps align with consensus-layer finality. It also checks for price discrepancies across venues that might indicate manipulation or liquidity fragmentation.

By establishing a threshold for acceptable data deviation, protocols protect themselves from garbage-in, garbage-out failure modes.

Origin

The necessity for Data Quality Assessment emerged from the limitations of early decentralized exchange architectures. Initial protocols relied on singular, often unreliable, price feeds that proved vulnerable to manipulation during periods of high volatility. Developers realized that raw data, while abundant, lacked the contextual validation required for high-stakes financial instruments like options and perpetual futures.

The evolution of this field traces back to the refinement of Decentralized Oracle Networks. As developers sought to build more complex financial primitives, the focus shifted from merely accessing data to ensuring its provenance and reliability. Early practitioners adopted methodologies from traditional high-frequency trading, adapting them to the pseudonymous, permissionless environment of blockchain networks.

- Systemic Fragility: Early reliance on unverified on-chain price points created systemic risks that necessitated robust validation.

- Adversarial Environments: The rise of MEV (Maximal Extractable Value) actors forced protocols to treat every data point as potentially malicious.

- Financial Integrity: The shift toward professionalized derivative markets required auditability standards that matched traditional financial markets.

Theory

Data Quality Assessment rests on the application of statistical filtering and game-theoretic validation. At the core, it involves the calculation of a Confidence Score for every data packet. This score aggregates metrics such as feed latency, node reputation, and historical deviation from median price points.

If the score falls below a pre-determined threshold, the protocol triggers a circuit breaker to prevent automated contract execution.
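The scoring and circuit-breaker logic described above can be sketched as follows. This is a minimal illustration, not any specific protocol's formula: the `FeedSample` fields, the component weights, the latency and deviation limits, and the 0.7 threshold are all assumptions chosen for the example.

```python
from dataclasses import dataclass


@dataclass
class FeedSample:
    latency_ms: float        # age of the value when it reached the protocol
    node_reputation: float   # 0.0 (untrusted) to 1.0 (fully trusted)
    price: float             # price reported by this feed
    median_price: float      # reference median across recent reports


def confidence_score(sample, max_latency_ms=2_000.0, max_deviation=0.05,
                     weights=(0.3, 0.3, 0.4)):
    """Combine latency, reputation, and deviation into a score in [0, 1]."""
    # Each component is clamped to [0, 1], where 1 is best.
    latency_part = max(0.0, 1.0 - sample.latency_ms / max_latency_ms)
    reputation_part = min(1.0, max(0.0, sample.node_reputation))
    deviation = abs(sample.price - sample.median_price) / sample.median_price
    deviation_part = max(0.0, 1.0 - deviation / max_deviation)
    w_lat, w_rep, w_dev = weights  # hypothetical weights that sum to 1
    return w_lat * latency_part + w_rep * reputation_part + w_dev * deviation_part


def circuit_breaker_tripped(sample, threshold=0.7):
    """Return True when contract execution should be halted for this sample."""
    return confidence_score(sample) < threshold


healthy = FeedSample(latency_ms=100, node_reputation=0.9,
                     price=100.5, median_price=100.0)
degraded = FeedSample(latency_ms=1_800, node_reputation=0.4,
                      price=108.0, median_price=100.0)
print(circuit_breaker_tripped(healthy), circuit_breaker_tripped(degraded))
```

A linear weighted sum is only one way to aggregate the metrics. A protocol could just as well require every component to clear its own floor, which prevents a strong reputation from masking a large price deviation.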

| Assessment Metric | Theoretical Purpose |
| --- | --- |
| Latency Variance | Detecting network congestion or feed manipulation |
| Outlier Probability | Identifying flash crashes or erroneous trades |
| Consensus Weighting | Validating feed reliability via decentralized nodes |

Quantitative models utilize Mean Reversion Analysis and Volatility Clustering to distinguish between genuine market moves and data artifacts. When price action deviates significantly from established models without corresponding volume, the assessment layer flags the input as suspect. This prevents the margin engine from initiating liquidations based on synthetic price spikes.
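As a rough illustration of the "price move without corresponding volume" check, the sketch below uses a plain z-score on recent returns as a stand-in for the richer mean-reversion and volatility-clustering models named above. The function name `is_suspect`, the `z_limit` of 4, and the 1.5x volume ratio are assumptions made for the example.

```python
from statistics import mean, stdev


def is_suspect(prices, volumes, new_price, new_volume,
               z_limit=4.0, volume_ratio=1.5):
    """Flag a price that is a statistical outlier without a volume surge.

    `prices` needs at least three entries so that two returns exist.
    """
    # Simple period-over-period returns of the recent price history.
    returns = [b / a - 1.0 for a, b in zip(prices, prices[1:])]
    sigma = stdev(returns)
    if sigma == 0:
        # A perfectly flat history: any change at all is unusual.
        return new_price != prices[-1]
    new_return = new_price / prices[-1] - 1.0
    z = abs(new_return - mean(returns)) / sigma
    # A genuine move is assumed to arrive with above-average volume.
    volume_supported = new_volume >= volume_ratio * mean(volumes)
    return z > z_limit and not volume_supported


history = [100.0, 100.2, 99.9, 100.1, 100.0, 100.3]
volume_history = [10, 12, 9, 11, 10, 12]
print(is_suspect(history, volume_history, new_price=110.0, new_volume=10))
print(is_suspect(history, volume_history, new_price=110.0, new_volume=50))
```

The first call describes a sharp jump on ordinary volume and is flagged. The second describes the same jump accompanied by a volume surge and is allowed through to the margin engine.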

Robust data validation protocols employ statistical filtering and decentralized consensus to ensure that margin engines operate on verified market realities.

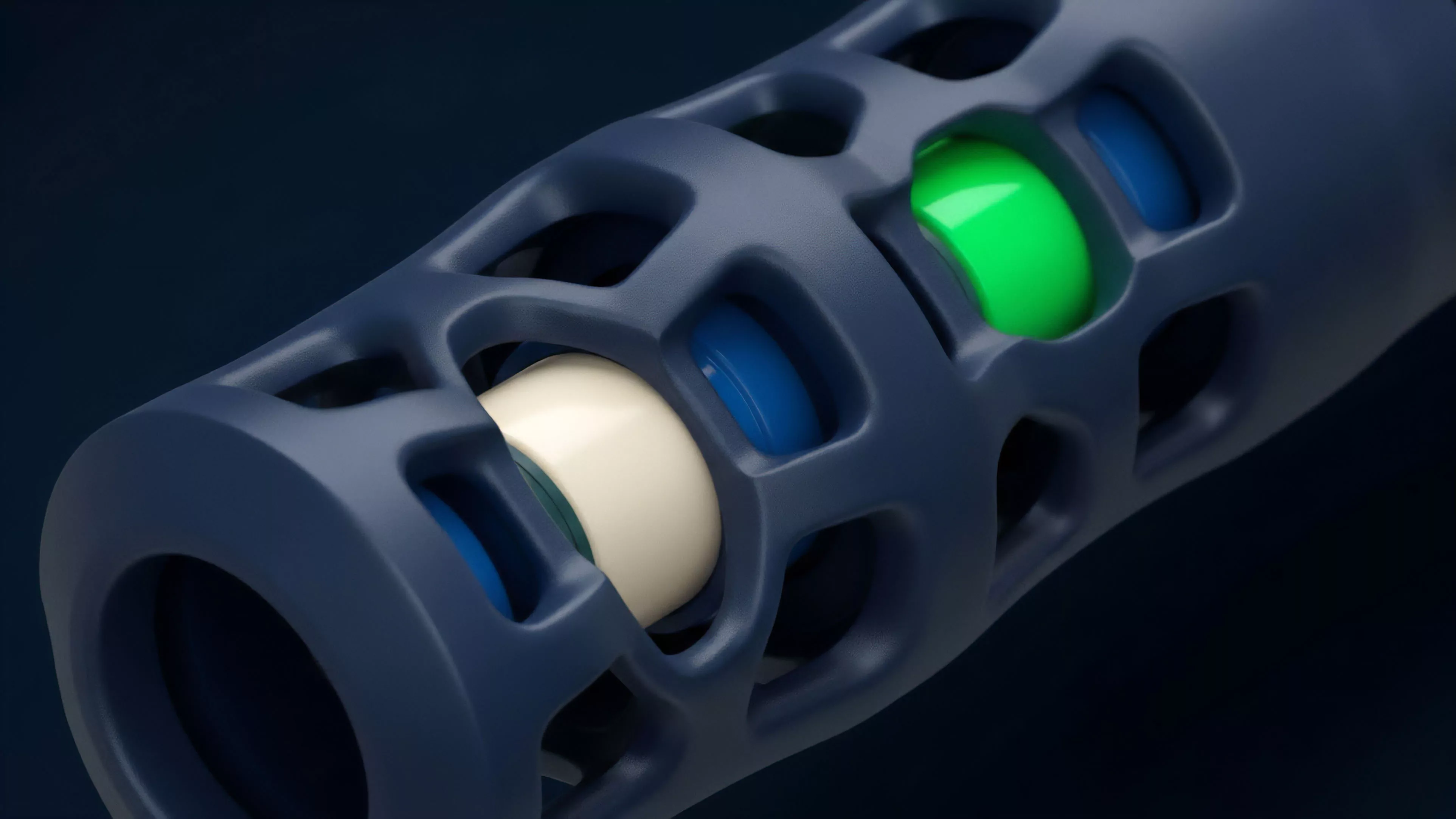

The logic here mirrors the functioning of a digital nervous system. Just as the human brain filters sensory input to ignore noise and focus on critical stimuli, a protocol must discard anomalous data to maintain systemic stability. The complexity of this filtering determines the protocol’s resilience against sophisticated market manipulation strategies.

Approach

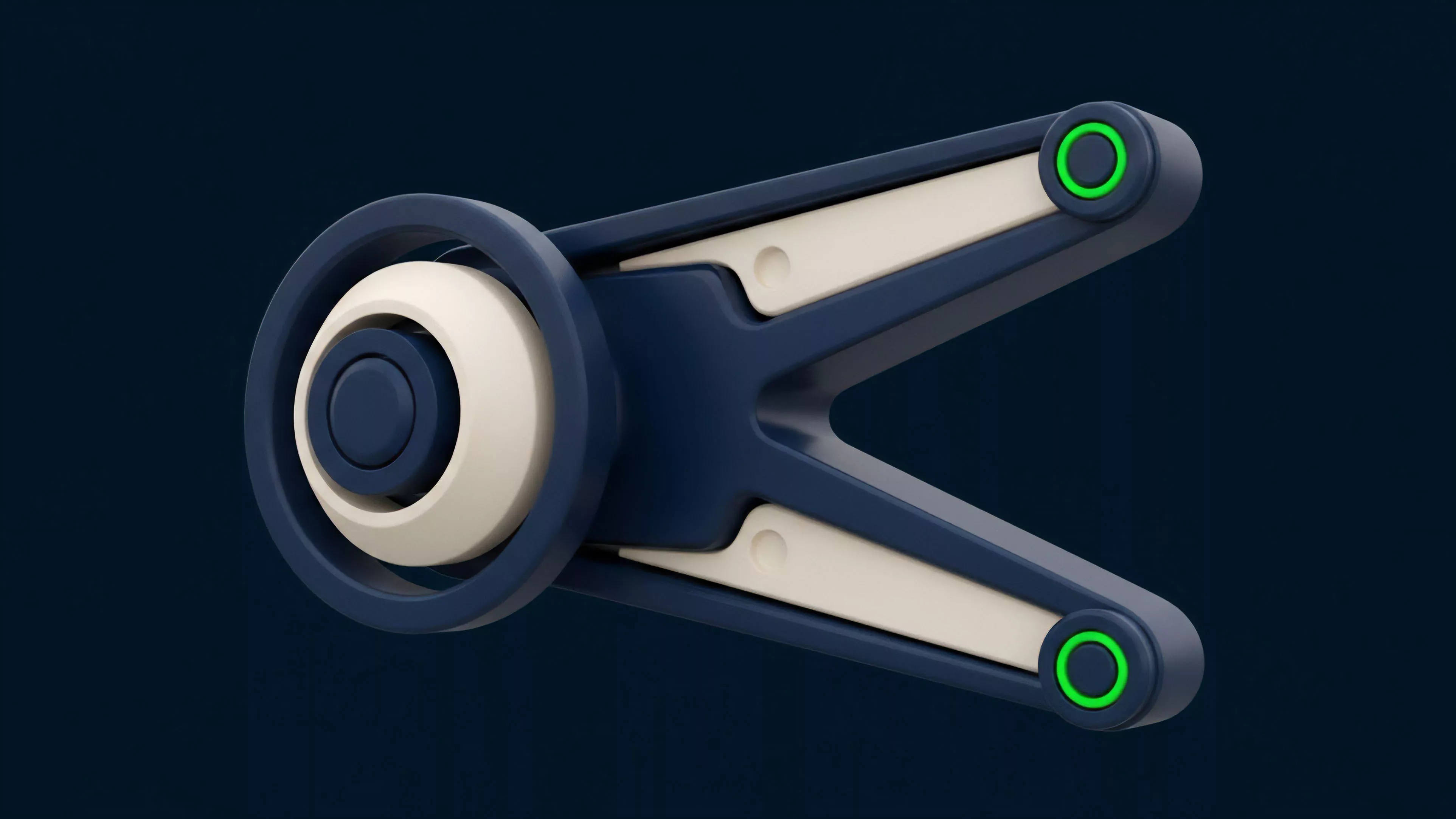

Current practices in Data Quality Assessment rely heavily on Multi-Source Aggregation.

Instead of trusting a single provider, protocols synthesize inputs from numerous independent nodes. This reduces the attack surface by ensuring that a single corrupted feed cannot dictate the outcome of derivative settlements.

- Validation Logic: Protocols utilize weighted medians to calculate the true asset price while discarding extreme outliers.

- Latency Mitigation: Advanced systems incorporate jitter buffers to account for block time fluctuations in decentralized environments.

- Historical Backtesting: Developers subject their assessment algorithms to historical data sets to simulate how they handle past market crises.
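The validation logic in the first bullet can be sketched as below: reports far from the plain median are discarded, and a weighted median is taken over what remains. The `(price, weight)` report shape, the function names, and the 2% cutoff are assumptions for illustration.

```python
from statistics import median


def weighted_median(values, weights):
    """Return the smallest value at which cumulative weight reaches half."""
    pairs = sorted(zip(values, weights))
    half = sum(weights) / 2.0
    running = 0.0
    for value, weight in pairs:
        running += weight
        if running >= half:
            return value
    raise ValueError("no reports supplied")


def aggregate_price(reports, max_deviation=0.02):
    """reports: list of (price, weight). Drop outliers, then weighted median."""
    if not reports:
        raise ValueError("no reports supplied")
    reference = median(price for price, _ in reports)
    kept = [(p, w) for p, w in reports
            if abs(p - reference) / reference <= max_deviation]
    if not kept:
        # Possible when the sources disagree so widely that none is near the median.
        raise ValueError("reports disagree too much to aggregate")
    prices, weights = zip(*kept)
    return weighted_median(prices, weights)


reports = [(100.0, 1.0), (100.2, 2.0), (99.9, 1.0), (150.0, 5.0)]
print(aggregate_price(reports))
```

In this example the report at 150.0 carries the largest weight, yet it is dropped before weighting because it sits far from the unweighted median. Without the outlier filter, that single heavy feed would have set the result.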

This process is continuous and automated. The assessment layer monitors for Arbitrage-Induced Distortion, where the price on one venue diverges from the broader market average. By constantly recalibrating the weighting of different data sources, the protocol maintains a high degree of fidelity even during extreme market stress.
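One simple way to picture the recalibration step is a rule that shrinks the weight of any venue whose price diverges from the cross-venue reference and slowly restores it once the venue falls back in line. The sketch below uses the median as the reference rather than an average, and the `tolerance`, `decay`, and `recovery` values are hypothetical.

```python
from statistics import median


def recalibrate_weights(weights, prices, tolerance=0.01,
                        decay=0.5, recovery=1.1, max_weight=1.0):
    """Return updated per-venue weights based on divergence from the median."""
    reference = median(prices.values())
    updated = {}
    for venue, weight in weights.items():
        divergence = abs(prices[venue] - reference) / reference
        if divergence > tolerance:
            # Diverging venue: cut its influence quickly.
            updated[venue] = weight * decay
        else:
            # Well-behaved venue: recover gradually, up to the cap.
            updated[venue] = min(max_weight, weight * recovery)
    return updated


weights = {"venue_a": 1.0, "venue_b": 1.0, "venue_c": 1.0}
prices = {"venue_a": 100.0, "venue_b": 100.5, "venue_c": 104.0}
print(recalibrate_weights(weights, prices))
```

Cutting weights quickly and restoring them slowly is a deliberate asymmetry in this sketch: a venue that diverged once has to stay in line for several rounds before it regains full influence.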

Evolution

The field has moved from simplistic, static checks to Dynamic Heuristic Models.

Initially, protocols merely verified that a price feed was active. Now, some employ adaptive models, including machine learning algorithms, that adjust to shifting market conditions. This evolution reflects the increasing sophistication of market participants and the corresponding need for higher-order defensive mechanisms.

The transition from static verification to dynamic heuristic models marks the maturation of data integrity standards within decentralized derivative markets.

One might consider how this progression mirrors the development of early telecommunications protocols, which also had to overcome signal noise and interference before they could support reliable global communication. We have seen a shift toward Proof of Validity, where the data itself carries a cryptographic signature proving it originated from a verified source. This minimizes the trust placed in intermediary nodes and enhances the overall security posture of the financial instrument.

Horizon

Future developments in Data Quality Assessment will prioritize Zero-Knowledge Proofs to verify data integrity without revealing the underlying raw inputs.

This will enable privacy-preserving validation, allowing protocols to verify that data meets quality standards without exposing sensitive trading patterns to public mempools.

| Future Development | Impact on Derivatives |
| --- | --- |
| ZK-Proofs | Enhanced privacy for high-volume traders |
| Predictive Filtering | Anticipating data corruption before it occurs |
| Cross-Chain Validation | Unified data standards across fragmented networks |

As decentralized markets grow, the integration of Real-Time Auditing will become standard. Protocols will likely adopt autonomous data monitors that act as independent agents, continuously stress-testing the validity of inputs. This creates a self-healing financial infrastructure that can withstand sophisticated adversarial attacks while maintaining liquidity and capital efficiency.