Essence

Off Chain Data Ingestion connects decentralized financial protocols to the fast-moving information environment of global capital markets. It is the technical apparatus that bridges deterministic blockchain state machines and the probabilistic, rapidly shifting external world of price discovery, volatility metrics, and macroeconomic indicators.

Off Chain Data Ingestion provides the essential bridge between deterministic blockchain state machines and the probabilistic realities of global capital markets.

The primary utility lies in feeding high-fidelity, verified information into smart contract logic without requiring all financial activity to migrate onto a single, bottlenecked ledger. This keeps decentralized derivative engines, such as automated options clearinghouses, synchronized with global spot prices, interest rate curves, and volatility surfaces. Without these ingestion mechanisms, on-chain prices would drift away from global market prices, leaving persistent mispricings open to arbitrage.

Origin

The genesis of Off Chain Data Ingestion stems from two fundamental limitations. The first is the oracle problem: a blockchain cannot natively observe data outside its own state. The second is the scalability constraint of the blockchain trilemma, which makes high-frequency, low-latency computation on a decentralized consensus layer expensive. Early decentralized exchanges that relied exclusively on on-chain order books performed poorly during periods of extreme volatility, suffering heavy slippage and liquidity fragmentation.

The industry recognized that the cost of trustless computation scales poorly when coupled with the need for continuous, real-time data feeds. This realization catalyzed the development of decentralized oracle networks and state relayers. These systems were architected to externalize the heavy lifting of data aggregation and verification, moving the computational burden away from the core settlement layer while maintaining cryptographic integrity.

Decentralized derivative engines rely on externalized data ingestion to prevent systemic arbitrage failure and ensure synchronization with global price discovery.

Theory

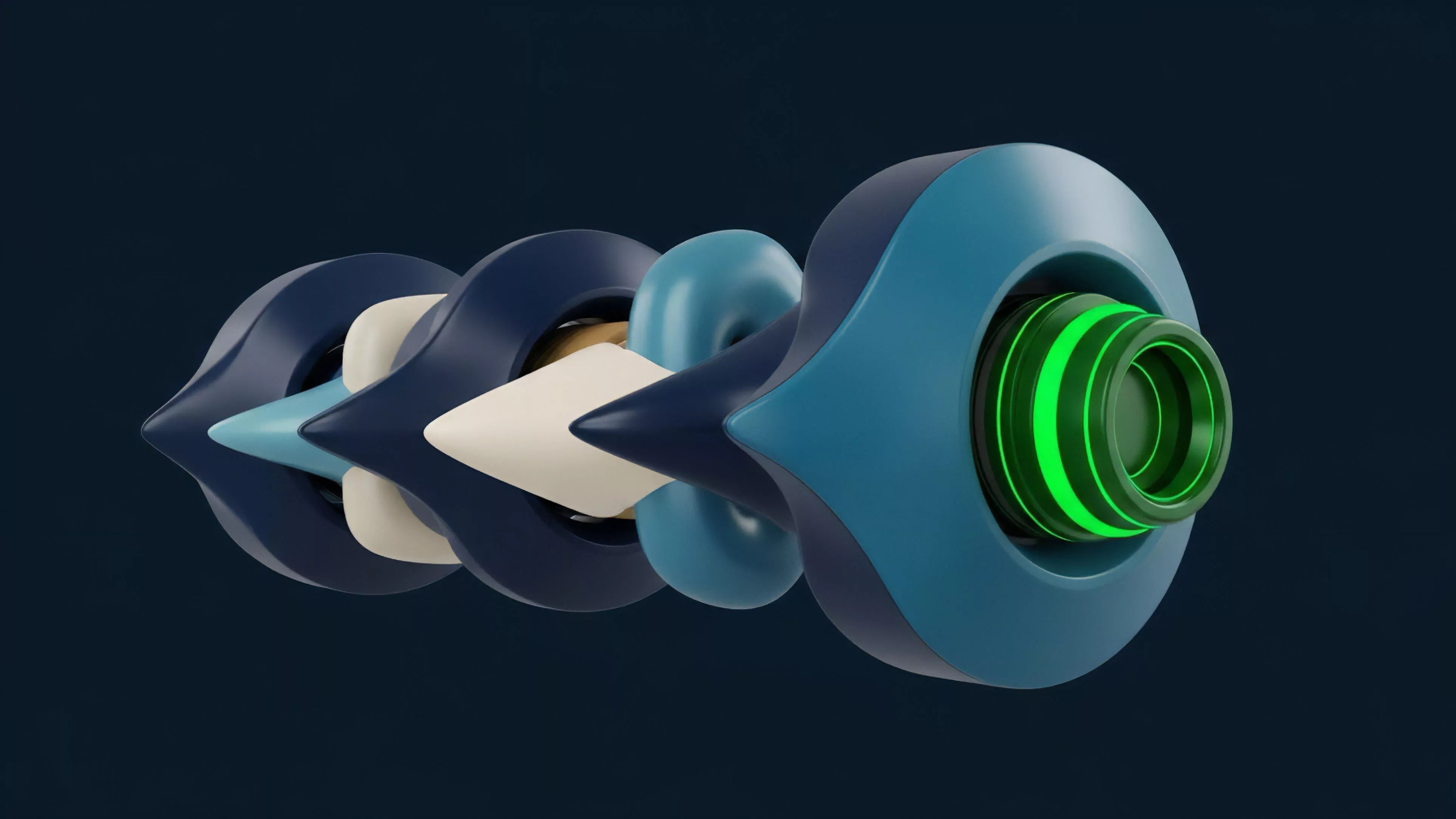

The structural integrity of Off Chain Data Ingestion rests upon the mechanics of proof-of-validity and the mitigation of adversarial interference. Systems are designed to minimize the attack surface by employing multi-node aggregation, cryptographic signatures, and economic slashing conditions. The goal is to ensure that the data injected into the protocol reflects the consensus of a diverse set of sources, rather than the input of a single, potentially compromised node.
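The multi-node aggregation described above can be sketched as a median with a quorum check. This is a minimal illustration, not any particular network's algorithm: the function name `aggregate_reports`, the node identifiers, and the `min_sources` and `max_deviation` parameters are all assumptions made for the example.

```python
from statistics import median

def aggregate_reports(reports, min_sources=3, max_deviation=0.02):
    """Aggregate per-node price reports into one value.

    reports: dict mapping a (hypothetical) node id to its reported price.
    Returns (aggregated_price, rejected_node_ids).
    """
    if len(reports) < min_sources:
        raise ValueError("not enough independent sources")
    # First pass: the median is robust to a minority of bad reports.
    mid = median(reports.values())
    # Drop reports further than max_deviation (as a fraction) from that median.
    kept = {n: p for n, p in reports.items() if abs(p - mid) <= max_deviation * mid}
    if len(kept) < min_sources:
        raise ValueError("too few reports agree with the median")
    rejected = sorted(set(reports) - set(kept))
    return median(kept.values()), rejected

# One node reports a wildly different price; the aggregate ignores it.
reports = {"node-a": 100.0, "node-b": 100.5, "node-c": 99.8, "node-d": 250.0}
price, rejected = aggregate_reports(reports)
print(price, rejected)
```

A single compromised node cannot move the result here, because its report is discarded before the final median is taken. A real system would pair this with signature checks and slashing, which this sketch leaves out.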

Architectural Frameworks

- Decentralized Oracle Networks employ threshold signature schemes to aggregate external data points into a single, verifiable payload before submission.

- State Relayer Protocols utilize light client proofs to import the finalized state of external chains or off-chain order books into the host protocol.

- Zero-Knowledge Proofs allow the verification of complex off-chain computations without revealing the raw data, preserving privacy while ensuring correctness.

| Mechanism | Trust Assumption | Latency |
|---|---|---|
| Centralized API | High Trust | Minimal |
| Oracle Aggregation | Distributed Trust | Moderate |
| ZK Proofs | Cryptographic | High |
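To make the distributed-trust row concrete, the sketch below accepts a payload only when at least `threshold` known nodes have authenticated it. It is a simplification under stated assumptions: HMAC with per-node shared keys stands in for the public-key or threshold signatures a real oracle network would use, and `NODE_KEYS`, `sign`, and `verify_quorum` are hypothetical names.

```python
import hashlib
import hmac

# Hypothetical per-node keys. HMAC is a stand-in here, NOT a threshold signature scheme.
NODE_KEYS = {"node-a": b"key-a", "node-b": b"key-b", "node-c": b"key-c"}

def sign(node_id: str, payload: bytes) -> bytes:
    """Produce a node's authentication tag over the payload."""
    return hmac.new(NODE_KEYS[node_id], payload, hashlib.sha256).digest()

def verify_quorum(payload: bytes, signatures: dict, threshold: int) -> bool:
    """Return True if at least `threshold` known nodes validly signed the payload."""
    valid = 0
    for node_id, sig in signatures.items():
        key = NODE_KEYS.get(node_id)
        if key is None:
            continue  # unknown signer, ignore
        expected = hmac.new(key, payload, hashlib.sha256).digest()
        if hmac.compare_digest(expected, sig):
            valid += 1
    return valid >= threshold

payload = b"ETH-USD|2000.25|1700000000"
sigs = {n: sign(n, payload) for n in ("node-a", "node-b")}
sigs["node-c"] = b"\x00" * 32  # a forged tag that should not count

ok = verify_quorum(payload, sigs, threshold=2)
tampered = verify_quorum(b"ETH-USD|9999.00|1700000000", sigs, threshold=2)
print(ok, tampered)
```

Two honest tags meet the threshold even though the third is forged, while the same tags fail against an altered payload.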

Approach

Current implementation strategies focus on balancing capital efficiency with security guarantees. Protocols increasingly utilize modular data architectures where the ingestion layer is separated from the execution layer. This allows for the selection of data providers based on the specific risk profile of the derivative instrument. High-leverage options contracts demand sub-second, highly redundant data, whereas long-dated volatility swaps might prioritize cost-effectiveness over absolute speed.
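One way to picture this matching of data guarantees to instrument risk is a per-instrument feed policy. The sketch below is illustrative only: the `FeedPolicy` and `PriceUpdate` types, the instrument labels, and the numeric thresholds are assumptions chosen for the example, not values taken from any protocol.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class FeedPolicy:
    max_staleness_s: float  # oldest acceptable update, in seconds
    min_sources: int        # minimum number of independent sources

# Hypothetical policies: strict for leveraged options, relaxed for long-dated swaps.
POLICIES = {
    "high_leverage_option": FeedPolicy(max_staleness_s=1.0, min_sources=7),
    "long_dated_vol_swap": FeedPolicy(max_staleness_s=3600.0, min_sources=3),
}

@dataclass(frozen=True)
class PriceUpdate:
    price: float
    age_s: float
    num_sources: int

def accept_update(instrument_type: str, update: PriceUpdate) -> bool:
    """Accept an update only if it satisfies the instrument's feed policy."""
    policy = POLICIES[instrument_type]
    return (update.age_s <= policy.max_staleness_s
            and update.num_sources >= policy.min_sources)

# The same 30-second-old, 4-source update is fine for one product and not the other.
update = PriceUpdate(price=2000.0, age_s=30.0, num_sources=4)
print(accept_update("high_leverage_option", update))
print(accept_update("long_dated_vol_swap", update))
```

Keeping the policy separate from the execution logic is what lets a protocol swap data providers per instrument without touching settlement code.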

Data ingestion modularity allows protocols to match security guarantees with the specific risk profile of individual derivative instruments.

Market makers and liquidity providers now rely on custom Off Chain Data Ingestion pipelines to manage their hedging strategies. By streaming real-time Greeks and order flow data into automated risk engines, they maintain tighter spreads and mitigate the risk of adverse selection. This sophisticated approach shifts the burden of price discovery away from the base layer, enabling a more responsive and capital-efficient ecosystem.

Evolution

The trajectory of Off Chain Data Ingestion has moved from rudimentary, single-source feeds to complex, multi-layered verification stacks. Initially, simple push-based mechanisms dominated; these were susceptible to rapid price manipulation and single points of failure. The transition toward pull-based, user-initiated ingestion models reduced cost and improved robustness: data is brought on-chain only when the protocol explicitly requires it, which avoids paying for unnecessary updates.
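The cost argument for pull-based ingestion can be sketched with a toy feed that stores an update only when a user submits one and refuses to serve stale prices. The `PullFeed` class and its methods are hypothetical, and verification of the submitted update is deliberately omitted to keep the example short.

```python
class PullFeed:
    """Toy pull-based feed: nothing is written until a caller submits an update."""

    def __init__(self, max_age_s):
        self.max_age_s = max_age_s
        self.price = None
        self.published_at = None
        self.writes = 0  # counts on-chain style writes

    def submit(self, price, published_at):
        # Store the update only if it is newer than what is already held.
        # A real feed would also verify the update's signatures here.
        if self.published_at is None or published_at > self.published_at:
            self.price, self.published_at = price, published_at
            self.writes += 1

    def read(self, now):
        # Refuse to serve a missing or stale price.
        if self.published_at is None or now - self.published_at > self.max_age_s:
            raise LookupError("price is stale; caller must submit a fresh update")
        return self.price

# Ten off-chain ticks are produced, one per second.
ticks = [(t, 100.0 + t) for t in range(10)]
feed = PullFeed(max_age_s=2)

# A single user action at t=9 pulls only the latest tick into the feed.
t, p = ticks[-1]
feed.submit(p, published_at=t)
price = feed.read(now=9)
print(price, feed.writes)
```

A push model would have written all ten ticks; here only one write occurs, and any later read past the freshness window fails until someone submits a newer update.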

The integration of high-frequency data streams has necessitated a shift in how smart contracts handle updates. Older architectures relied on synchronous calls, which caused massive latency issues. Newer designs employ asynchronous messaging, where the protocol requests data and processes the response when it arrives, allowing for a more fluid and scalable user experience.
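The request-then-respond pattern described here can be sketched as simple bookkeeping: the consumer registers a request with a callback, continues working, and the callback runs whenever the response arrives. `OracleClient`, `request`, and `fulfill` are hypothetical names for illustration, not the interface of any specific oracle.

```python
class OracleClient:
    """Toy asynchronous request/response tracker."""

    def __init__(self):
        self._next_id = 0
        self.pending = {}  # request_id -> (symbol, callback)

    def request(self, symbol, callback):
        # Record the request and return immediately; no data is available yet.
        request_id = self._next_id
        self._next_id += 1
        self.pending[request_id] = (symbol, callback)
        return request_id

    def fulfill(self, request_id, value):
        # Called later by the data provider; unknown ids raise KeyError.
        symbol, callback = self.pending.pop(request_id)
        callback(symbol, value)

prices = {}

def on_price(symbol, value):
    prices[symbol] = value

client = OracleClient()
eth_id = client.request("ETH-USD", on_price)
btc_id = client.request("BTC-USD", on_price)

client.fulfill(btc_id, 40000.0)  # responses may arrive out of order
in_flight = len(client.pending)
client.fulfill(eth_id, 2000.0)
print(prices, in_flight)
```

Because each response is matched to its request by id, the consumer never blocks waiting on a slow source and can tolerate out-of-order delivery.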

- First Generation utilized centralized push mechanisms with minimal security overhead.

- Second Generation introduced decentralized node aggregation to mitigate single-source failure.

- Third Generation leverages zero-knowledge cryptography to ensure data validity at the protocol level.

Horizon

The future of Off Chain Data Ingestion lies in the maturation of verifiable computation. As hardware-accelerated zero-knowledge proof generation becomes more accessible, the distinction between on-chain and off-chain data will dissolve. Protocols will treat all external information as inherently untrusted, verifying its origin and correctness through cryptographic proofs rather than relying on reputation-based models.

We are moving toward a reality where entire order books, including the history of limit orders and trade executions, are ingested as single, verifiable blobs. This evolution will allow decentralized protocols to replicate the performance of traditional exchanges while retaining the transparency and censorship resistance of the underlying ledger. The challenge will remain the management of systemic risk as these ingestion pipelines become more complex and interconnected, potentially creating new vectors for contagion across the broader digital asset space.