Essence

Data latency is the primary determinant of execution quality in high-frequency decentralized derivatives markets, and Data Latency Reduction is the practice of shrinking it. Latency here is the interval between the generation of a market event, such as a price update or order book shift, and the successful commitment of a corresponding trade to the blockchain ledger. Because smart contract execution is constrained by block times and consensus finality, minimizing this interval becomes the fundamental lever for capital efficiency.

Data latency reduction minimizes the temporal gap between market event generation and blockchain transaction finality to ensure competitive execution.

The operational significance of Data Latency Reduction resides in its ability to mitigate adverse selection. Market participants capable of processing information and broadcasting transactions faster than their peers capture superior fill prices and reduce their exposure to slippage. This advantage is amplified in crypto-native venues where order flow is transparent and latency-sensitive strategies directly affect the profitability of automated liquidity provision and delta-neutral hedging operations.

Origin

The necessity for Data Latency Reduction emerged alongside the transition from simple on-chain swaps to complex derivative architectures. Early decentralized exchanges relied on rudimentary automated market makers that operated without order books, rendering execution speed secondary to liquidity depth. As sophisticated traders entered the space, the limitation of sequential block processing became apparent, forcing the development of off-chain order books and relayers.

- Transaction propagation delay dictates the speed at which nodes receive and validate new information within the peer-to-peer network.

- Consensus mechanism overhead introduces deterministic waiting periods that define the absolute lower bound of system responsiveness.

- Oracle update frequency limits the precision of collateral valuation, forcing traders to account for stale data risks.
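The three constraints above can be combined into a rough latency budget. The following is a minimal sketch, not a model of any specific network: the class name, field names, and millisecond figures are all hypothetical, and it assumes propagation and consensus occur strictly in sequence.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LatencyBudget:
    """Hypothetical latency components, all in milliseconds."""

    propagation_ms: float      # time for a transaction to reach validating nodes
    consensus_ms: float        # waiting period imposed by the consensus mechanism
    oracle_interval_ms: float  # time between consecutive oracle price updates

    def end_to_end_ms(self) -> float:
        # Assumption: propagation and consensus happen one after the other.
        return self.propagation_ms + self.consensus_ms

    def worst_case_price_age_ms(self) -> float:
        # The price may be almost one full oracle interval old when the order
        # is created, and it keeps aging while the transaction is in flight.
        return self.oracle_interval_ms + self.end_to_end_ms()


# Illustrative numbers only; they do not describe a real chain.
budget = LatencyBudget(propagation_ms=150.0, consensus_ms=2000.0, oracle_interval_ms=1000.0)
print(budget.end_to_end_ms())            # 2150.0
print(budget.worst_case_price_age_ms())  # 3150.0
```

In this toy budget the consensus wait dominates, which is consistent with the point that consensus overhead sets the lower bound on responsiveness.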

Historical market failures in decentralized finance often stem from this latency gap, where sudden volatility causes price divergence between the blockchain state and broader global markets. Participants observed that arbitrageurs exploited this discrepancy, prompting a shift toward vertical integration where protocol designers began prioritizing speed through layer-two scaling solutions and custom sequencing layers.

Theory

Analyzing Data Latency Reduction requires a clear understanding of the timing constraints imposed by the protocol. The relationship between latency and profitability is non-linear: the value of a signal decays steeply, roughly exponentially, with each additional unit of delay. The resulting payoffs are heavily skewed, because the first actor to reach the consensus layer captures the vast majority of the available economic rent.
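One way to make the decay claim concrete is to assume a simple exponential form with a fixed half-life. This is a sketch under that assumption only; the function name, the half-life parameter, and the sample values are hypothetical and not taken from any measured market.

```python
import math


def remaining_value(initial_value: float, latency_ms: float, half_life_ms: float) -> float:
    """Value left in a signal after `latency_ms`, assuming exponential decay."""
    if half_life_ms <= 0:
        raise ValueError("half_life_ms must be positive")
    if latency_ms < 0:
        raise ValueError("latency_ms must be non-negative")
    decay_rate = math.log(2) / half_life_ms  # per-millisecond decay constant
    return initial_value * math.exp(-decay_rate * latency_ms)


# Hypothetical signal worth 100 units with a 50 ms half-life.
for latency in (0, 50, 100, 200):
    print(latency, round(remaining_value(100.0, latency, half_life_ms=50.0), 2))
```

Under this assumption each additional half-life of delay halves what is left, so the same absolute improvement in latency is worth far more to a participant who is already fast.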

| Metric | Impact of Latency |
| --- | --- |
| Slippage | Increases as latency rises |
| Arbitrage Opportunity | Shrinks with faster updates |
| Liquidation Risk | Higher during network congestion |

The mathematical model for optimal execution involves minimizing the variance of the execution price against the expected price at the time of order entry. When latency is high, the uncertainty regarding the state of the order book increases, leading to wider bid-ask spreads. Traders must factor in this volatility premium, which acts as a hidden cost that erodes the net present value of complex derivative strategies.
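As a worked illustration, and assuming (this is not stated in the text above) that the mid-price $P$ follows a driftless Brownian motion with volatility $\sigma$, an order entered at time $t$ and executed after a latency $\tau$ faces

$$
\mathbb{E}\left[P_{t+\tau} - P_t\right] = 0, \qquad \operatorname{Var}\left(P_{t+\tau} - P_t\right) = \sigma^2 \tau .
$$

Under this assumption the standard deviation of the execution price around the expected price grows as $\sigma\sqrt{\tau}$, so halving latency cuts that dispersion by a factor of $1/\sqrt{2}$, or roughly 29 percent. The symbols $P$, $\sigma$, and $\tau$ are introduced here for illustration only.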

Systemic risk increases when network latency exceeds the time available for protocol liquidators to respond to collateral shortfall events.

Entropy in the network is a constant force. Just as a pendulum eventually loses energy to friction, information in a decentralized market loses its predictive power to latency. My work in this field suggests that we are approaching a physical limit where further improvements in hardware will yield diminishing returns, forcing a shift toward algorithmic and architectural changes.

Approach

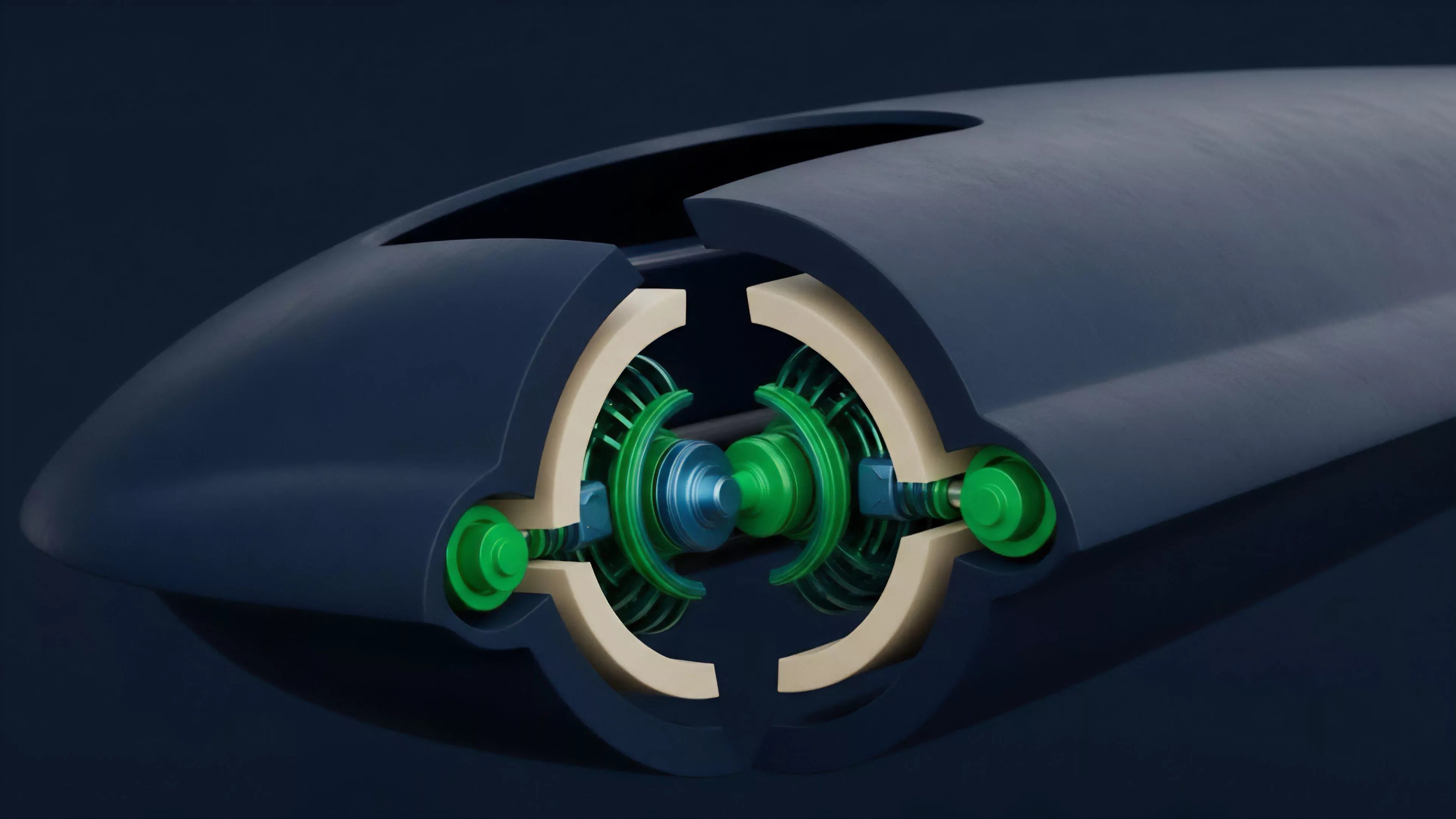

Current strategies for Data Latency Reduction focus on structural modifications to the transaction lifecycle. Market makers use dedicated infrastructure to colocate with sequencing nodes, reducing the physical distance that signals must travel. By optimizing the interaction between the off-chain matching engine and the on-chain settlement layer, these entities achieve a competitive edge that is largely unavailable to retail participants.

- Sequencer decentralization allows for multiple entry points into the transaction pipeline, mitigating the risk of single-point congestion.

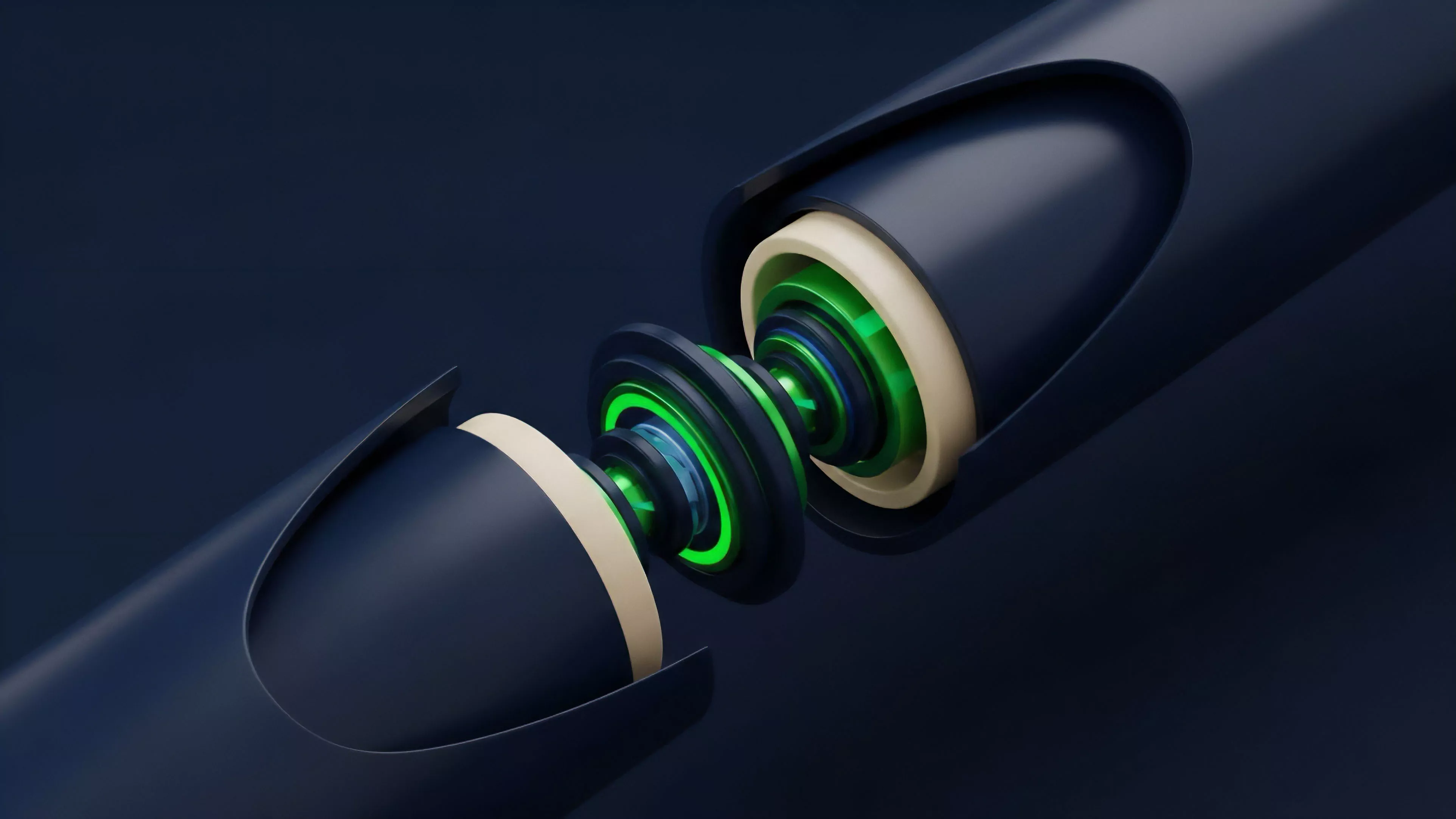

- Batching mechanisms consolidate multiple orders into a single transaction, amortizing the cost of latency across larger volumes.

- Predictive pre-confirmation protocols allow traders to receive guarantees of execution before the transaction reaches finality, providing a buffer against network jitter.
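The batching point in the list above involves a trade-off that a small sketch can show: a larger batch spreads the fixed per-transaction overhead across more orders, but orders also wait longer for the batch to fill. The function below is illustrative only. Its name, parameters, and numbers are hypothetical, and it assumes orders arrive at a constant rate and a batch is submitted as soon as it is full.

```python
def per_order_cost_ms(batch_size: int, fixed_overhead_ms: float, arrivals_per_ms: float) -> float:
    """Average latency cost per order for a given batch size."""
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    if arrivals_per_ms <= 0:
        raise ValueError("arrivals_per_ms must be positive")
    # Fixed submission overhead is shared equally by every order in the batch.
    amortized_overhead = fixed_overhead_ms / batch_size
    # With evenly spaced arrivals, order i waits (batch_size - i) inter-arrival
    # gaps for the batch to fill; the mean over the batch is (n - 1) / (2 * rate).
    average_wait = (batch_size - 1) / (2 * arrivals_per_ms)
    return amortized_overhead + average_wait


# Hypothetical inputs: 200 ms fixed overhead, one order every 2 ms.
best_size = min(range(1, 41), key=lambda n: per_order_cost_ms(n, 200.0, 0.5))
print(best_size, per_order_cost_ms(best_size, 200.0, 0.5))
```

Under these assumptions the per-order cost falls and then rises again as the batch grows, so there is an interior batch size that balances amortization against waiting time.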

The focus has moved toward creating specialized mempools that prioritize high-value derivative orders. This introduces a form of market stratification where the cost of speed is baked into the fee structure. While this ensures stability for institutional participants, it raises questions about the long-term decentralization of the underlying protocol architecture.

Evolution

The evolution of this domain reflects a broader transition from experimental finance to robust institutional infrastructure. We have moved from simple gas-based bidding wars to sophisticated priority fee auctions. These auctions force participants to quantify the exact value of their time, creating a transparent market for latency that was previously hidden within network congestion.
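A priority fee auction of this kind can be sketched as a simple ranking of pending transactions. This is a simplified illustration rather than the ordering rule of any particular protocol: the `PendingTx` type, its fields, the tie-break by arrival order, and the sample bids are all assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PendingTx:
    sender: str
    priority_fee: int  # hypothetical fee units bid for earlier inclusion
    arrival_seq: int   # arrival order, used only to break ties between equal bids


def order_block(pending: list[PendingTx], capacity: int) -> list[PendingTx]:
    """Pick up to `capacity` transactions, highest priority fee first."""
    ranked = sorted(pending, key=lambda tx: (-tx.priority_fee, tx.arrival_seq))
    return ranked[:capacity]


pending = [
    PendingTx("retail", priority_fee=2, arrival_seq=0),
    PendingTx("market_maker", priority_fee=9, arrival_seq=1),
    PendingTx("arbitrageur", priority_fee=9, arrival_seq=2),
    PendingTx("hedger", priority_fee=5, arrival_seq=3),
]
block = order_block(pending, capacity=3)
print([tx.sender for tx in block])  # ['market_maker', 'arbitrageur', 'hedger']
```

In this toy example the earliest transaction is excluded because it bid the least, which illustrates how an explicit fee replaces arrival time as the price of speed.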

Market evolution toward dedicated sequencing layers reflects the transition from unoptimized mempools to high-performance derivative execution environments.

Early systems treated all transactions with equal priority, a design flaw that left the network vulnerable to denial-of-service attacks during periods of extreme market stress. Modern protocols recognize that derivative order flow requires a different treatment than simple token transfers. The integration of zero-knowledge proofs and state-channel technologies has allowed for a significant compression of the data footprint, enabling faster validation cycles without compromising security.

Horizon

The future of Data Latency Reduction lies in the implementation of hardware-level acceleration and the adoption of asynchronous consensus models. As we move toward a world where derivative markets are integrated directly into the consensus layer, the distinction between order matching and settlement will vanish. This will necessitate a new class of risk management tools capable of operating at sub-millisecond speeds.

| Future Development | Systemic Impact |
| --- | --- |
| Hardware Security Modules | Enhanced execution integrity |
| Asynchronous Consensus | Elimination of block-time bottlenecks |
| Atomic Settlement | Total removal of counterparty risk |

My conjecture involves the rise of programmable latency, where protocols dynamically adjust the priority of orders based on the systemic health of the platform. This will move us beyond static fee structures toward an intelligent, self-regulating ecosystem. The ultimate goal is the achievement of near-instantaneous global price discovery, a state that will redefine the boundaries of liquidity and market efficiency.