Essence

Data Integrity represents the core assurance that financial data used by a decentralized protocol is accurate, consistent, and delivered in a timely manner. In traditional finance, this assurance is provided by centralized clearing houses and regulated exchanges. In the decentralized environment, where a single, trusted entity does not exist, Data Integrity becomes a complex, multi-layered problem.

For crypto options and derivatives, the accuracy of the underlying asset price feed determines the fairness of settlement, the correctness of margin calculations, and the very viability of the contract itself. A derivative contract is essentially a bet on a future price; if the price feed (the oracle) is compromised, the contract’s fundamental value proposition collapses.

The challenge extends beyond simple accuracy. It involves liveness, ensuring the data feed is continuously updated, and consistency, ensuring all nodes in the network agree on the same data point at the same time. These properties are critical for options protocols, where an option’s time decay (theta) and its sensitivity to volatility (vega) shift quickly with real-time market movements.

If data updates are delayed or inconsistent, it opens the door for high-frequency arbitrage and, more significantly, allows for malicious manipulation that can trigger unfair liquidations or profitable exploits. This vulnerability is not theoretical; it is a systemic risk that has repeatedly led to catastrophic losses in DeFi protocols. The integrity of the data feed is the ultimate arbiter of a protocol’s solvency and its ability to function as a reliable financial instrument.

Data Integrity in decentralized derivatives is the cryptographic and economic assurance that price feeds accurately reflect real-world markets, enabling fair settlement and preventing systemic exploitation.

Origin

The concept of Data Integrity in finance predates blockchain technology, originating with the need to prevent fraud and ensure accurate record-keeping in traditional banking systems. In TradFi, data integrity is rooted in hierarchical trust models. The integrity of a stock price feed, for instance, is guaranteed by the exchange (e.g., CME or NYSE) and verified by regulatory bodies. This model relies on legal frameworks and centralized oversight to enforce accuracy and prevent manipulation.

When crypto derivatives emerged, initially on centralized exchanges like BitMEX and Deribit, this model was simply ported over. The exchange itself acted as the central authority for price feeds and settlement. The true challenge of Data Integrity began with the rise of decentralized finance (DeFi) and the “oracle problem.” The core paradox of DeFi is that smart contracts, which are deterministic and operate entirely on-chain, often require information about real-world events or off-chain asset prices to function.

The data must be bridged from the real world into the blockchain without reintroducing the need for a central authority. The first generation of oracle solutions simply relayed a price feed from a single source, which quickly proved to be a critical single point of failure: an attacker could manipulate that source and profit from the protocol’s reliance on it.

The need for decentralized data verification arose from the inherent limitations of trustless execution in a world of centralized data sources.

Theory

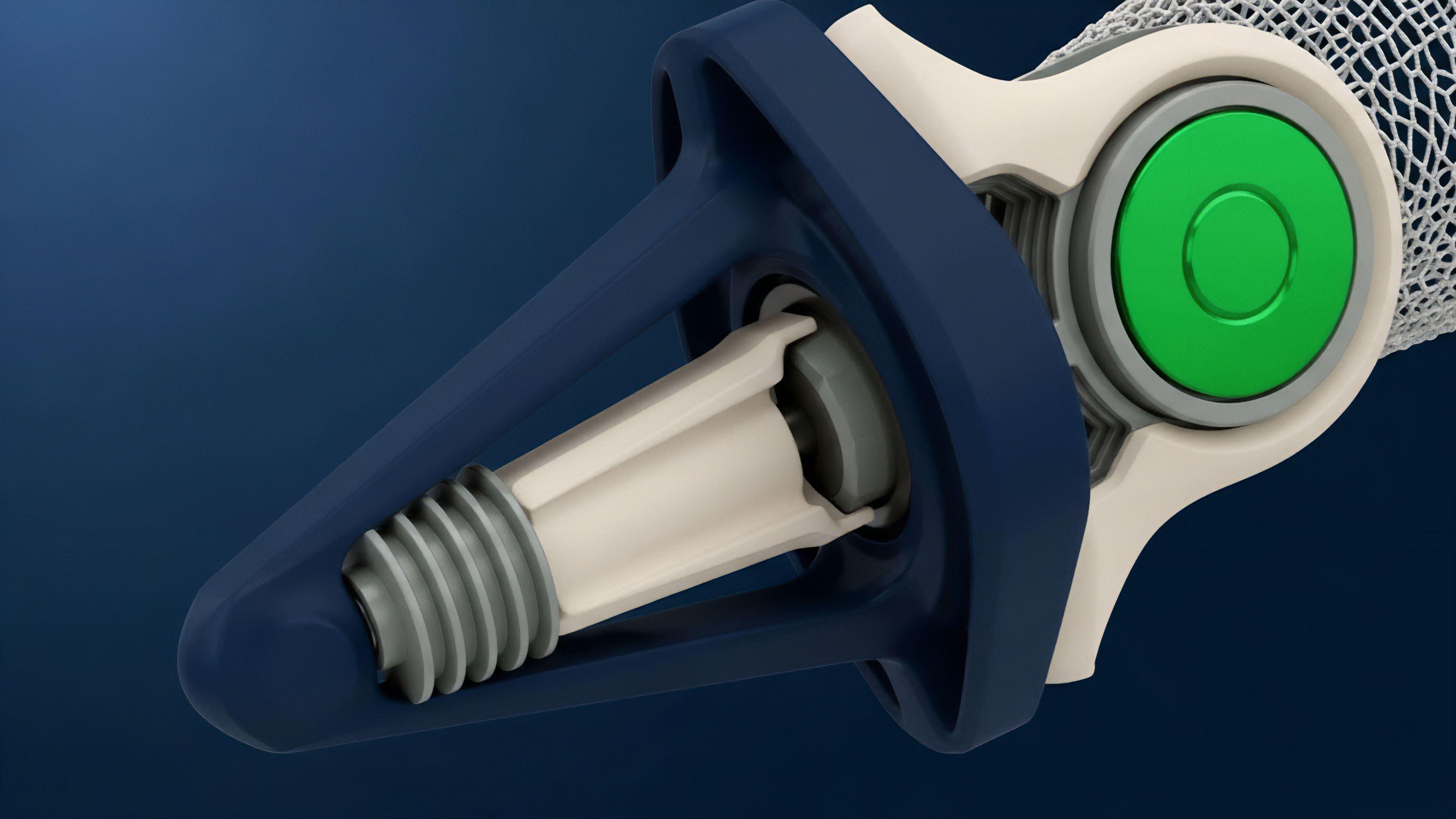

The theoretical foundation of Data Integrity in derivatives relies heavily on quantitative finance and game theory. The pricing of an option contract, particularly in a complex model like Black-Scholes or its variants, is highly sensitive to inputs such as the underlying asset price, volatility, and time to expiration. A data feed that delivers an inaccurate price directly invalidates the theoretical value calculated by the model.

This creates a disconnect between the protocol’s internal state and the external reality of the market.
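To make the sensitivity concrete, the sketch below prices a European call with the textbook Black-Scholes formula (no dividends) at a true spot and at a slightly wrong oracle spot. All numbers, and the names `bs_call_price`, `true_spot`, and `reported_spot`, are hypothetical and chosen only for illustration; a real protocol may use a different pricing model.

```python
import math


def norm_cdf(x: float) -> float:
    """Standard normal CDF, computed from the error function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def bs_call_price(spot: float, strike: float, rate: float,
                  vol: float, t_years: float) -> float:
    """Black-Scholes price of a European call on a non-dividend asset."""
    sigma_sqrt_t = vol * math.sqrt(t_years)
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol ** 2) * t_years) / sigma_sqrt_t
    d2 = d1 - sigma_sqrt_t
    return spot * norm_cdf(d1) - strike * math.exp(-rate * t_years) * norm_cdf(d2)


# Hypothetical inputs: a one-week at-the-money call with 80% annualized volatility.
strike, rate, vol, t_years = 2000.0, 0.0, 0.8, 7 / 365
true_spot = 2000.0
reported_spot = 2040.0  # assumed oracle error of 2% on the underlying

fair = bs_call_price(true_spot, strike, rate, vol, t_years)
quoted = bs_call_price(reported_spot, strike, rate, vol, t_years)
mispricing_pct = (quoted - fair) / fair * 100.0

print(f"fair={fair:.2f} quoted={quoted:.2f} mispricing={mispricing_pct:.1f}%")
```

Because a call behaves like a leveraged position in the underlying, the percentage error in the option value is larger than the percentage error in the spot input.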

From a quantitative perspective, Data Integrity is analyzed through the lens of risk exposure. Inaccurate data feeds introduce basis risk and counterparty risk. If a protocol calculates margin requirements based on a manipulated price, it can trigger liquidations that are unfair to users or allow an attacker to create synthetic leverage that destabilizes the entire system.
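The liquidation risk described above can be sketched with a simple margin check. This assumes a long, linearly margined position and a 5% maintenance threshold; the function names and all figures are hypothetical, and real protocols define margin in their own ways.

```python
def margin_ratio(collateral: float, size: float,
                 entry_price: float, mark_price: float) -> float:
    """Equity divided by notional for a long position marked at mark_price."""
    unrealized_pnl = size * (mark_price - entry_price)
    equity = collateral + unrealized_pnl
    notional = size * mark_price
    return equity / notional


def is_liquidatable(collateral: float, size: float, entry_price: float,
                    mark_price: float, maintenance: float = 0.05) -> bool:
    """True when the margin ratio falls below the maintenance threshold."""
    return margin_ratio(collateral, size, entry_price, mark_price) < maintenance


# Hypothetical position: 1 unit long from 2000 with 200 of collateral.
collateral, size, entry = 200.0, 1.0, 2000.0

honest_mark = 1950.0       # assumed true market price
manipulated_mark = 1880.0  # assumed price pushed down by an attacker

safe_at_honest = not is_liquidatable(collateral, size, entry, honest_mark)
liquidated_at_manipulated = is_liquidatable(collateral, size, entry, manipulated_mark)

print(safe_at_honest, liquidated_at_manipulated)
```

In this example the position is healthy at the honest price (ratio of about 7.7%) but falls below the threshold at the manipulated price (about 4.3%), so a temporary distortion of the feed is enough to force a liquidation.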

The game theory of oracle manipulation focuses on the cost-benefit analysis for an attacker. An attacker will calculate the cost of manipulating the oracle feed versus the potential profit from liquidating positions or executing arbitrage trades. For a protocol to be secure, the economic cost of manipulation must significantly outweigh the potential profit.
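A minimal sketch of this comparison follows. The cost components (capital lost moving the market, fees, slashed stake) and the `safety_factor` parameter are assumptions made for illustration, not a standard model; estimating these quantities in practice is the hard part.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AttackEstimate:
    """Hypothetical inputs to a cost-of-attack comparison, in one currency."""
    market_move_cost: float   # capital lost pushing prices on source venues
    fees: float               # trading and transaction fees paid by the attacker
    slashed_stake: float      # stake the attacker expects to forfeit
    extractable_value: float  # profit available from liquidations or arbitrage

    @property
    def total_cost(self) -> float:
        return self.market_move_cost + self.fees + self.slashed_stake

    def is_secure(self, safety_factor: float = 2.0) -> bool:
        """Secure when attack cost exceeds profit by the chosen safety factor."""
        return self.total_cost >= safety_factor * self.extractable_value


estimate = AttackEstimate(
    market_move_cost=400_000.0,
    fees=20_000.0,
    slashed_stake=100_000.0,
    extractable_value=300_000.0,
)

print(estimate.total_cost, estimate.is_secure(), estimate.is_secure(safety_factor=1.5))
```

With these figures the attack costs 520,000 against 300,000 of extractable value, which passes a 1.5x margin but fails a 2x margin. The safety factor encodes how much "significantly" is required.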

This cost-of-attack analysis is a core component of protocol design. Furthermore, data integrity directly impacts the accuracy of the Greeks, the risk sensitivities of an option contract. If the underlying spot price data is faulty, the calculation of delta (price sensitivity) and gamma (delta sensitivity) becomes unreliable, making proper hedging impossible for market makers and exposing the protocol to unexpected systemic risk.
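The effect on hedging can be illustrated with the standard Black-Scholes delta and gamma of a European call. The inputs and the helper name `call_delta_gamma` are hypothetical; the point is only that a hedge sized from a faulty spot differs from the hedge the true market requires.

```python
import math


def norm_cdf(x: float) -> float:
    """Standard normal CDF."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def norm_pdf(x: float) -> float:
    """Standard normal density."""
    return math.exp(-0.5 * x * x) / math.sqrt(2.0 * math.pi)


def call_delta_gamma(spot: float, strike: float, rate: float,
                     vol: float, t_years: float) -> tuple[float, float]:
    """Black-Scholes delta and gamma of a European call (no dividends)."""
    sigma_sqrt_t = vol * math.sqrt(t_years)
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol ** 2) * t_years) / sigma_sqrt_t
    delta = norm_cdf(d1)
    gamma = norm_pdf(d1) / (spot * sigma_sqrt_t)
    return delta, gamma


# Hypothetical one-week call, strike 2000, 80% annualized volatility.
strike, rate, vol, t_years = 2000.0, 0.0, 0.8, 7 / 365

delta_true, gamma_true = call_delta_gamma(2000.0, strike, rate, vol, t_years)
delta_bad, gamma_bad = call_delta_gamma(2040.0, strike, rate, vol, t_years)  # 2% feed error

# Units of underlying a market maker would over-hedge per option sold.
hedge_error = delta_bad - delta_true

print(f"delta_true={delta_true:.4f} delta_bad={delta_bad:.4f} error={hedge_error:.4f}")
```

A market maker who sizes the hedge from the faulty feed holds more underlying per option than the true delta calls for, and that unintended exposure grows with position size.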

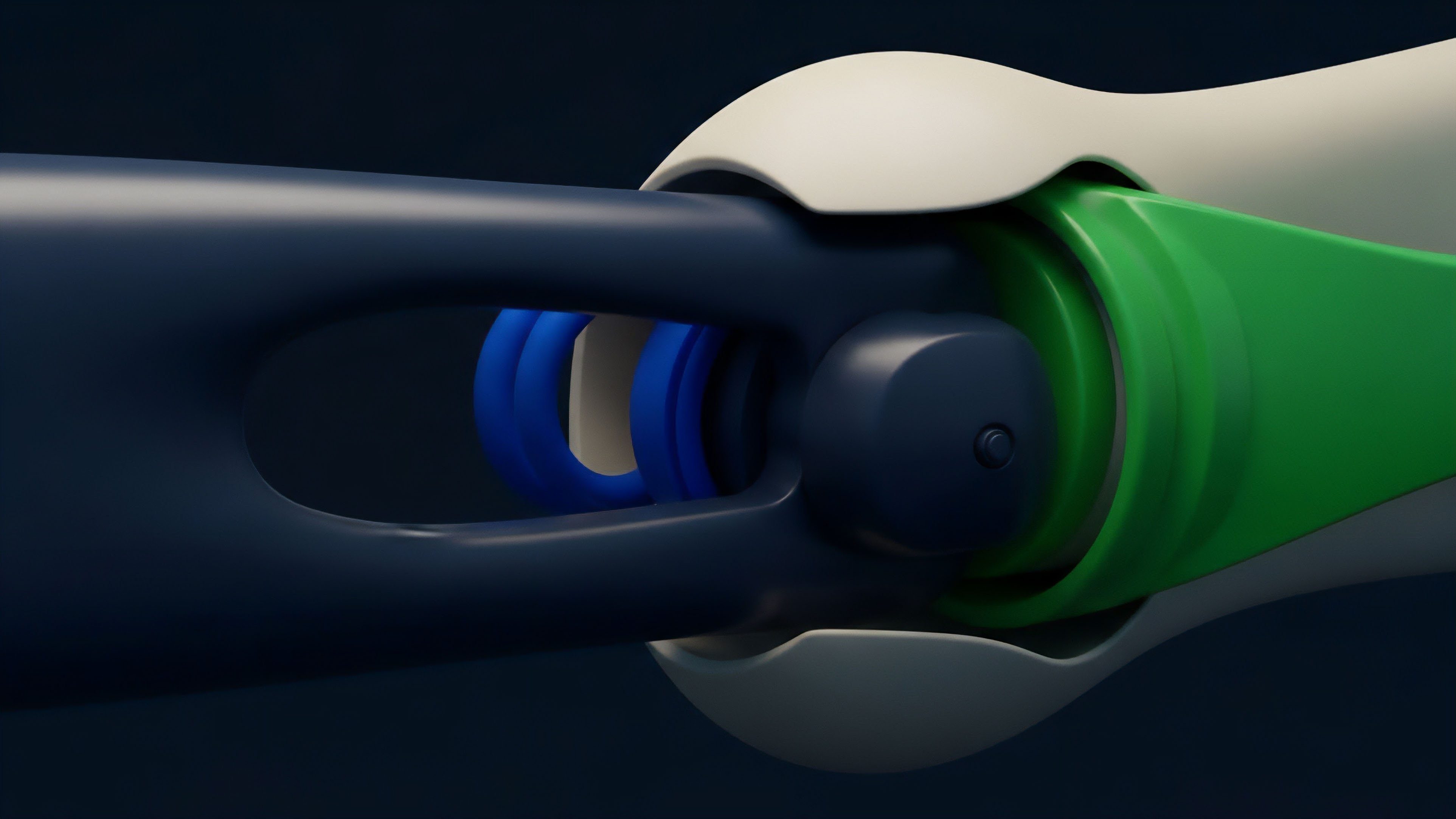

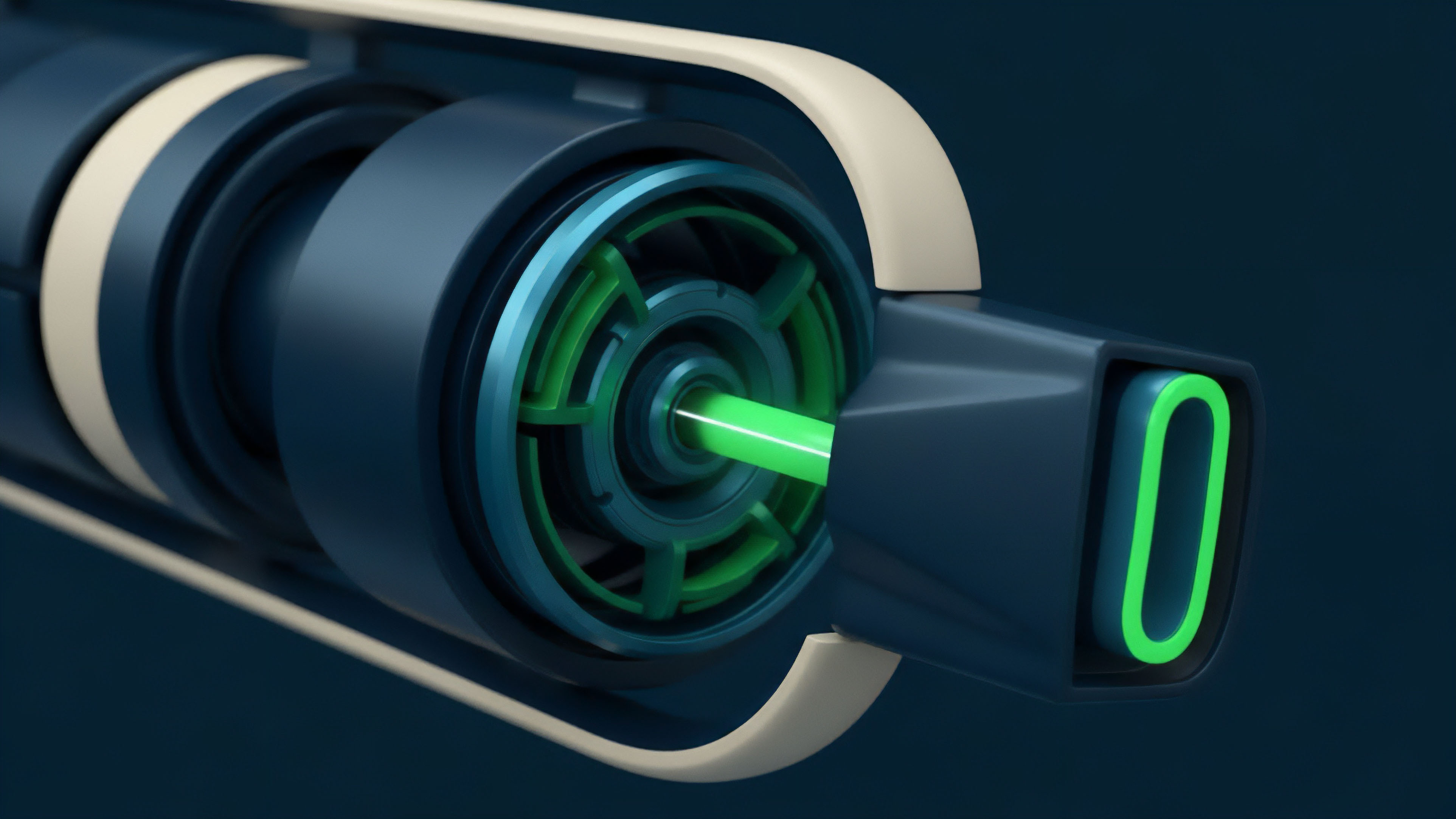

The core theoretical problem with Data Integrity in options protocols is the tension between data speed and data security. A low-latency feed is necessary for options pricing to reflect real-time market movements, but high-speed data feeds are often more susceptible to manipulation, especially during periods of high volatility. The design challenge involves creating mechanisms that can provide timely data while maintaining economic security.

The use of Time-Weighted Average Price (TWAP) mechanisms, for example, mitigates flash loan attacks by averaging prices over time, but introduces latency, making the data less suitable for high-frequency trading strategies. This trade-off between speed and security is a central theoretical constraint in designing robust derivative protocols.
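The dampening effect of a TWAP can be sketched as follows. This is a simplified off-chain model that assumes each observed price holds until the next observation; the function name `twap` and the sample data are hypothetical, and on-chain implementations typically work from cumulative price accumulators instead.

```python
def twap(observations: list[tuple[int, float]],
         window_start: int, window_end: int) -> float:
    """Time-weighted average price over [window_start, window_end].

    observations is a list of (timestamp, price) pairs sorted by timestamp.
    Each price is assumed to hold until the next observation.
    """
    if window_end <= window_start:
        raise ValueError("window_end must be after window_start")

    weighted_sum = 0.0
    covered = 0.0
    for i, (ts, price) in enumerate(observations):
        # The last observation is assumed to hold until the window closes.
        next_ts = observations[i + 1][0] if i + 1 < len(observations) else window_end
        start = max(ts, window_start)
        end = min(next_ts, window_end)
        if end > start:
            weighted_sum += price * (end - start)
            covered += end - start

    if covered == 0:
        raise ValueError("no observations cover the window")
    return weighted_sum / covered


# Hypothetical feed: price sits at 100 except for a 12-second spike to 150.
samples = [(0, 100.0), (60, 100.0), (120, 100.0),
           (180, 150.0), (192, 100.0), (240, 100.0)]

result = twap(samples, window_start=0, window_end=300)
print(result)  # 102.0
```

A 50% spike that lasts 12 seconds moves the five-minute TWAP by only 2%. The same averaging is what makes the value lag the market when the price move is genuine.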

Approach

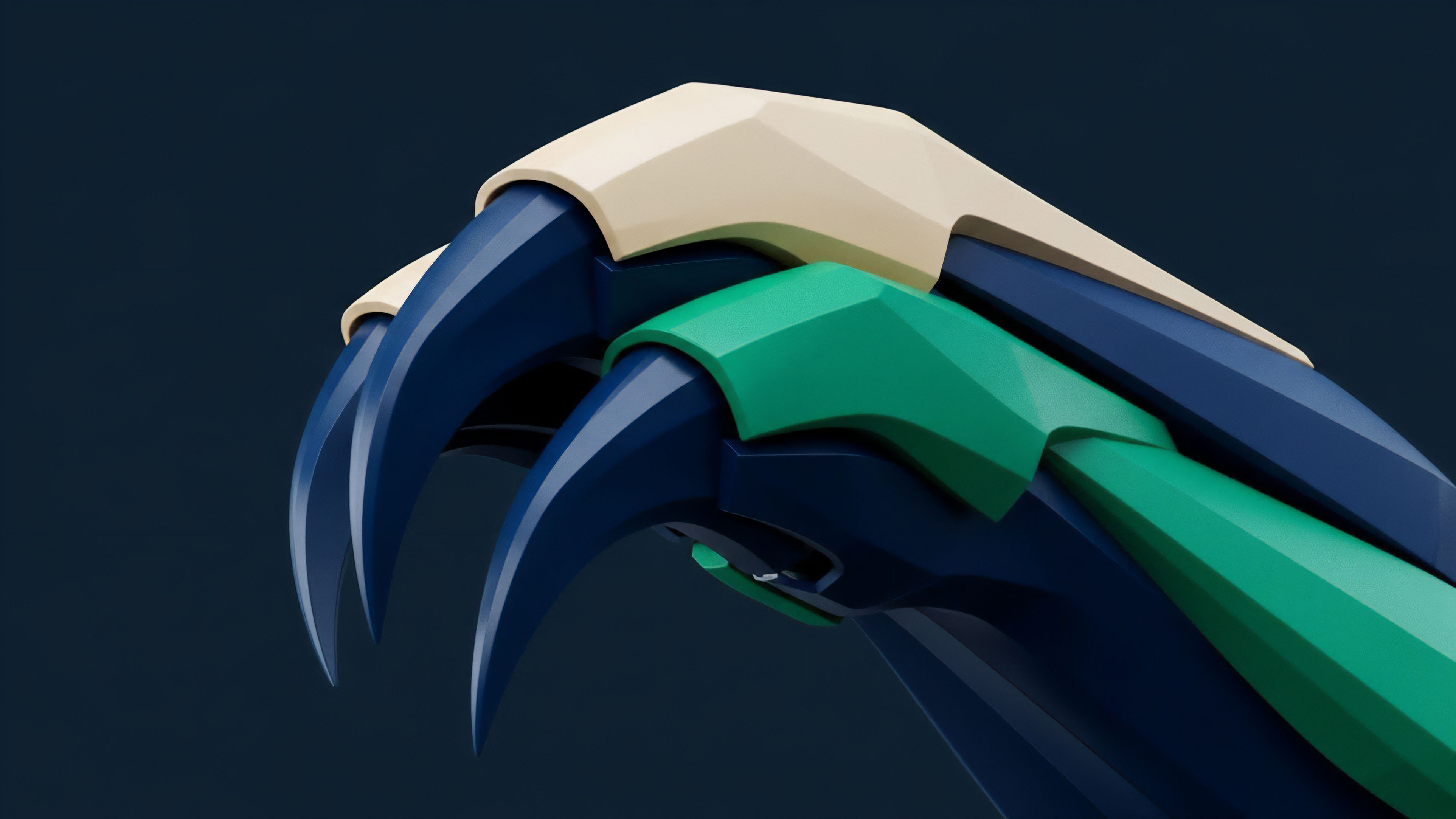

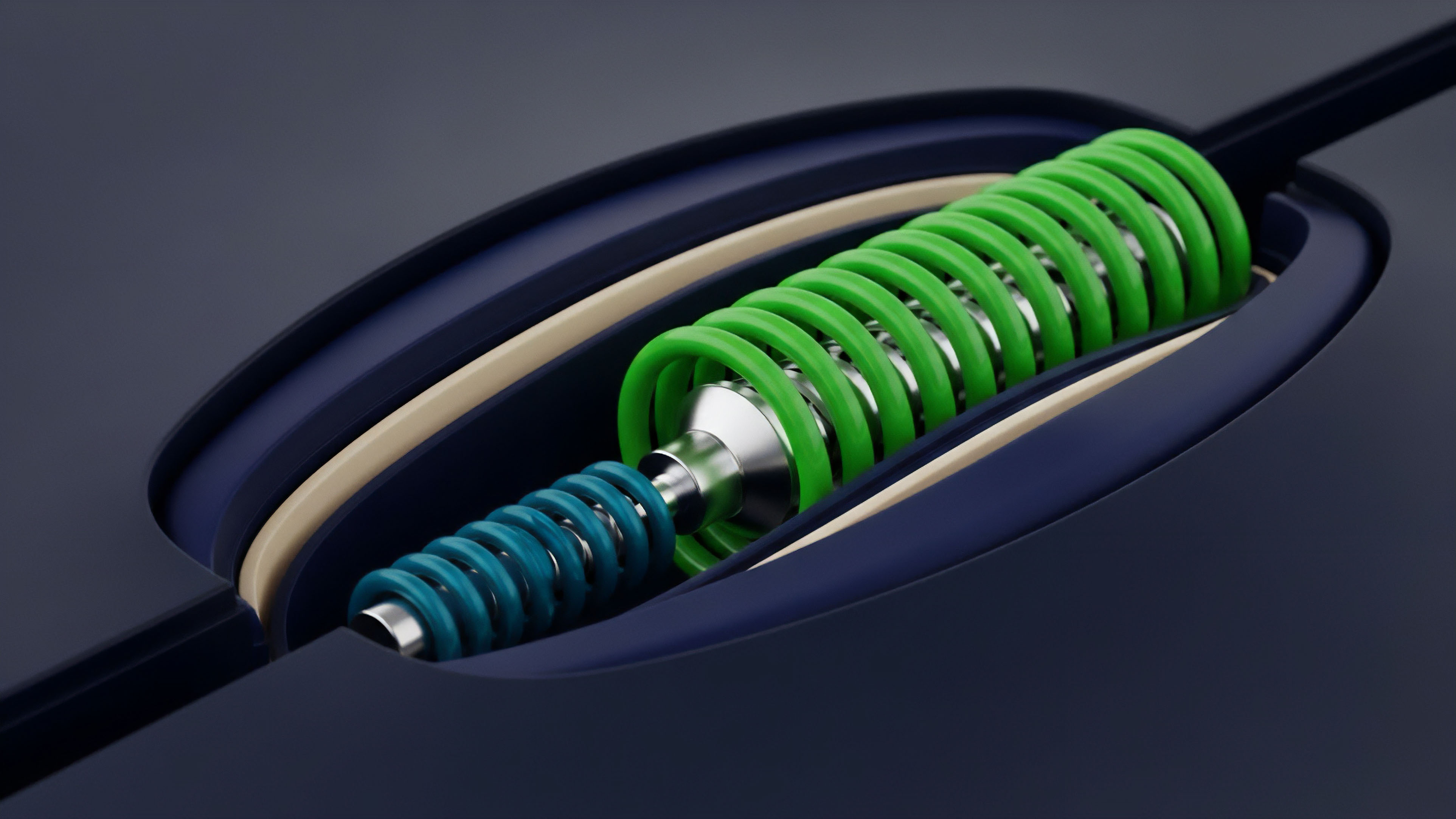

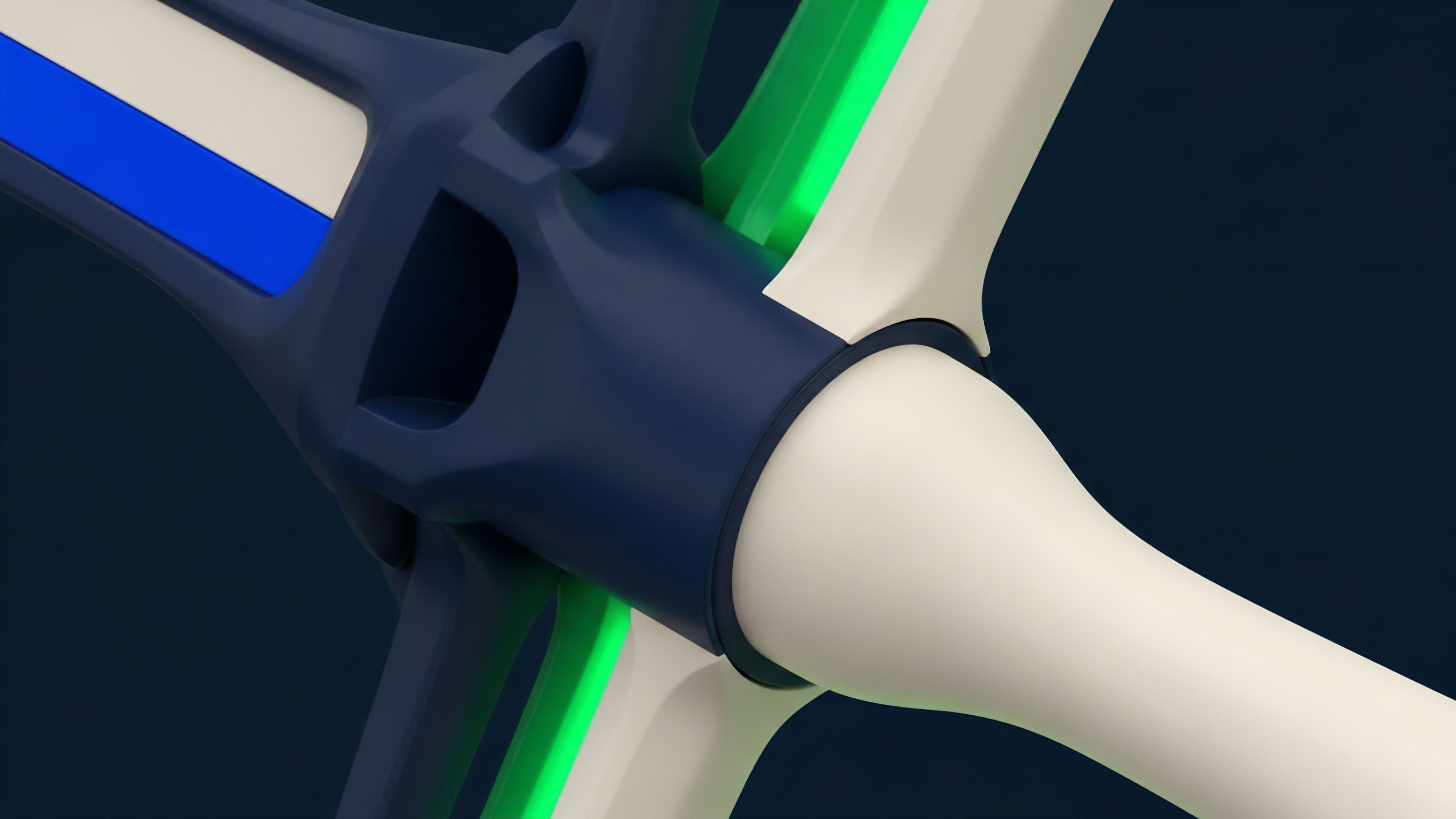

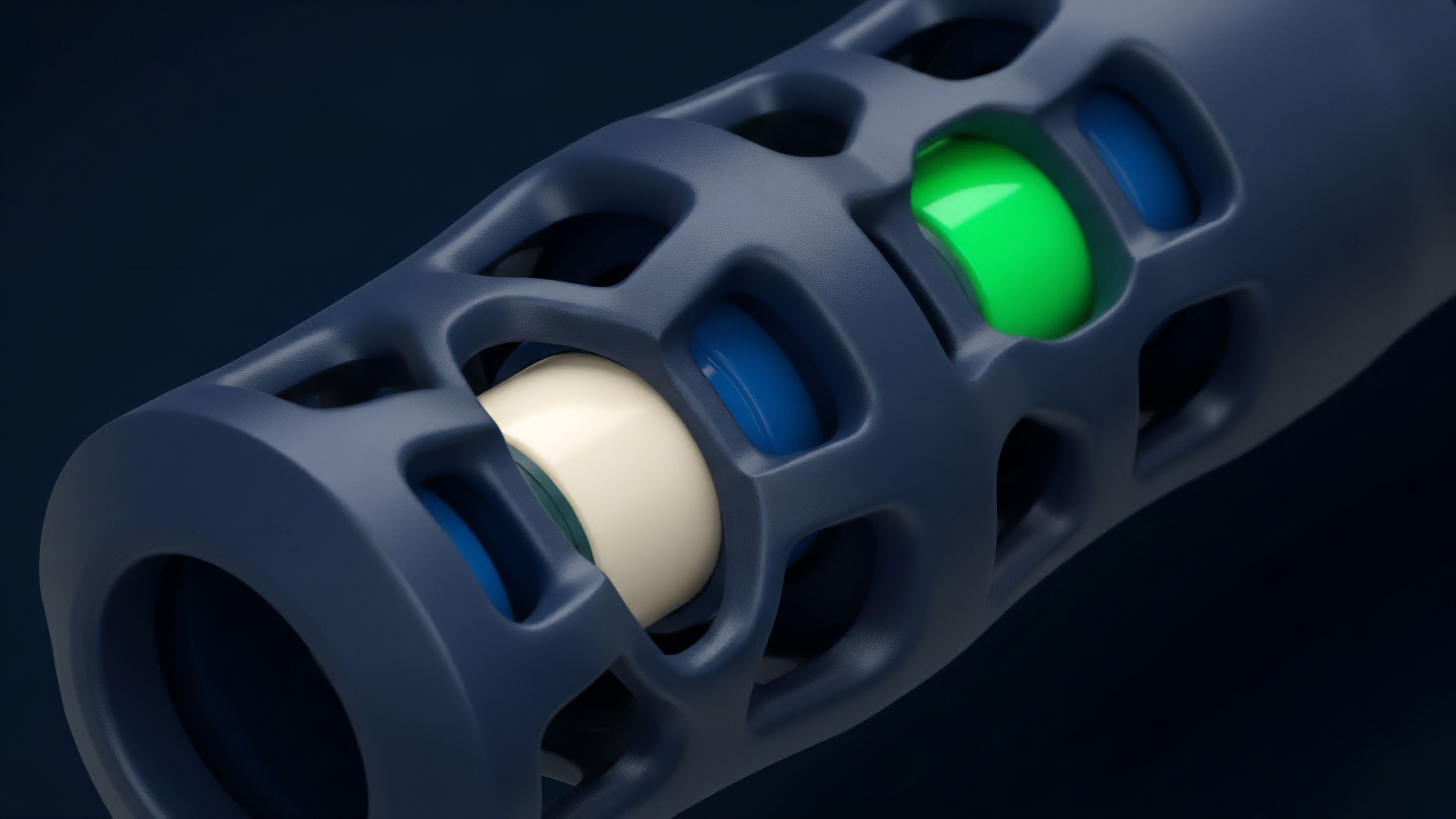

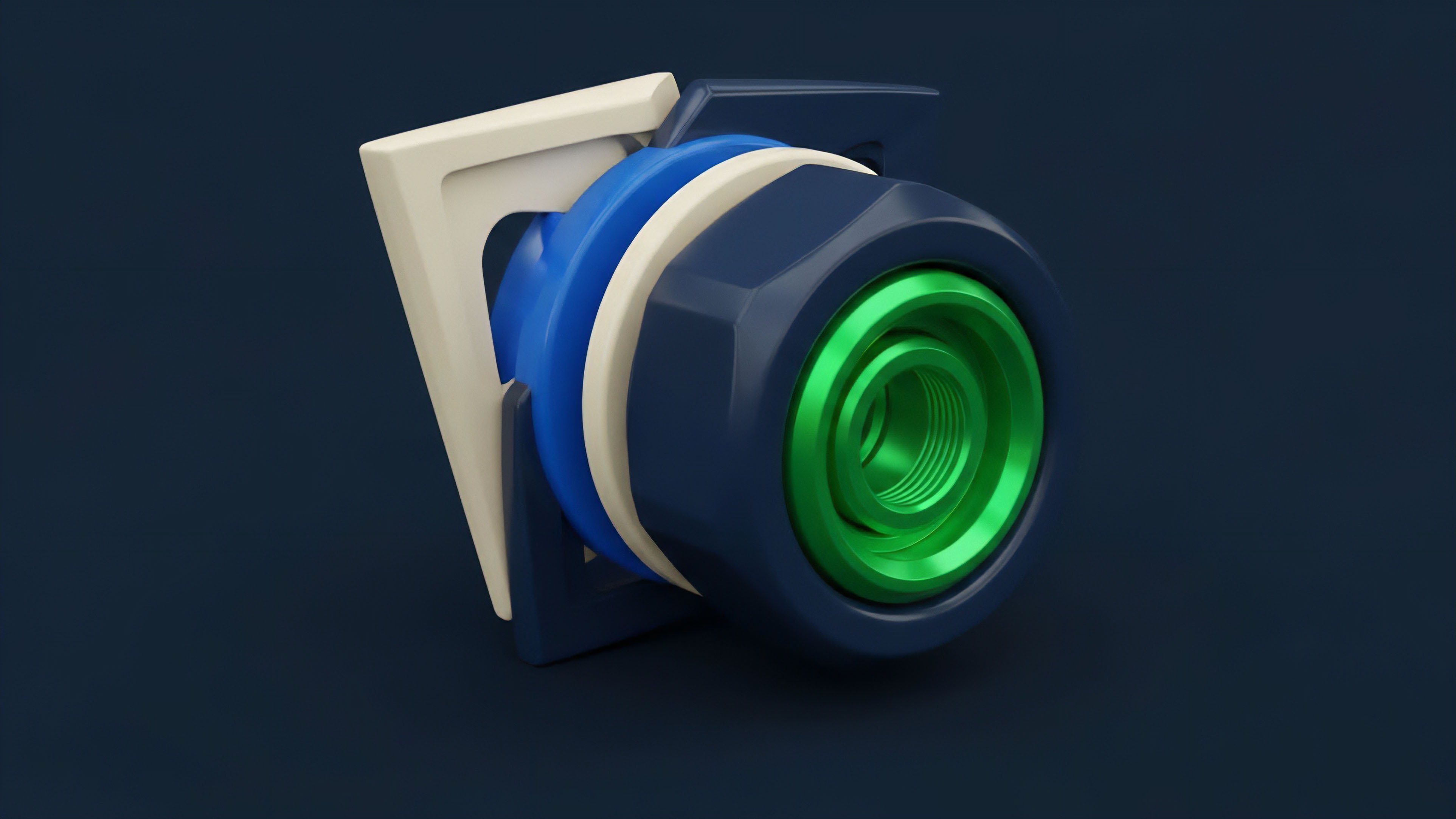

Current approaches to ensuring Data Integrity in decentralized derivatives focus on creating redundant and economically secure oracle systems. The prevailing methodology involves moving away from single-source oracles toward decentralized oracle networks (DONs).

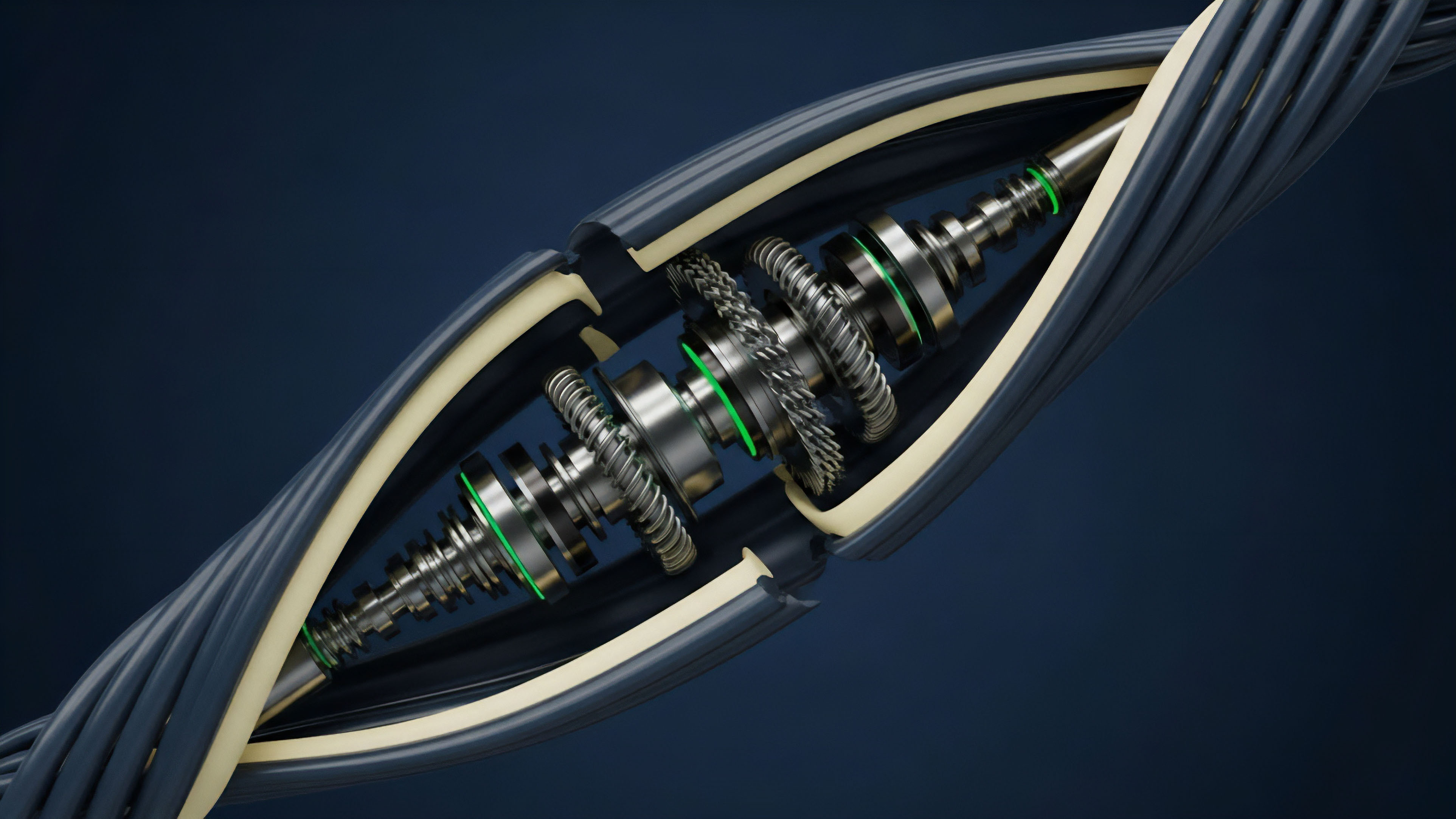

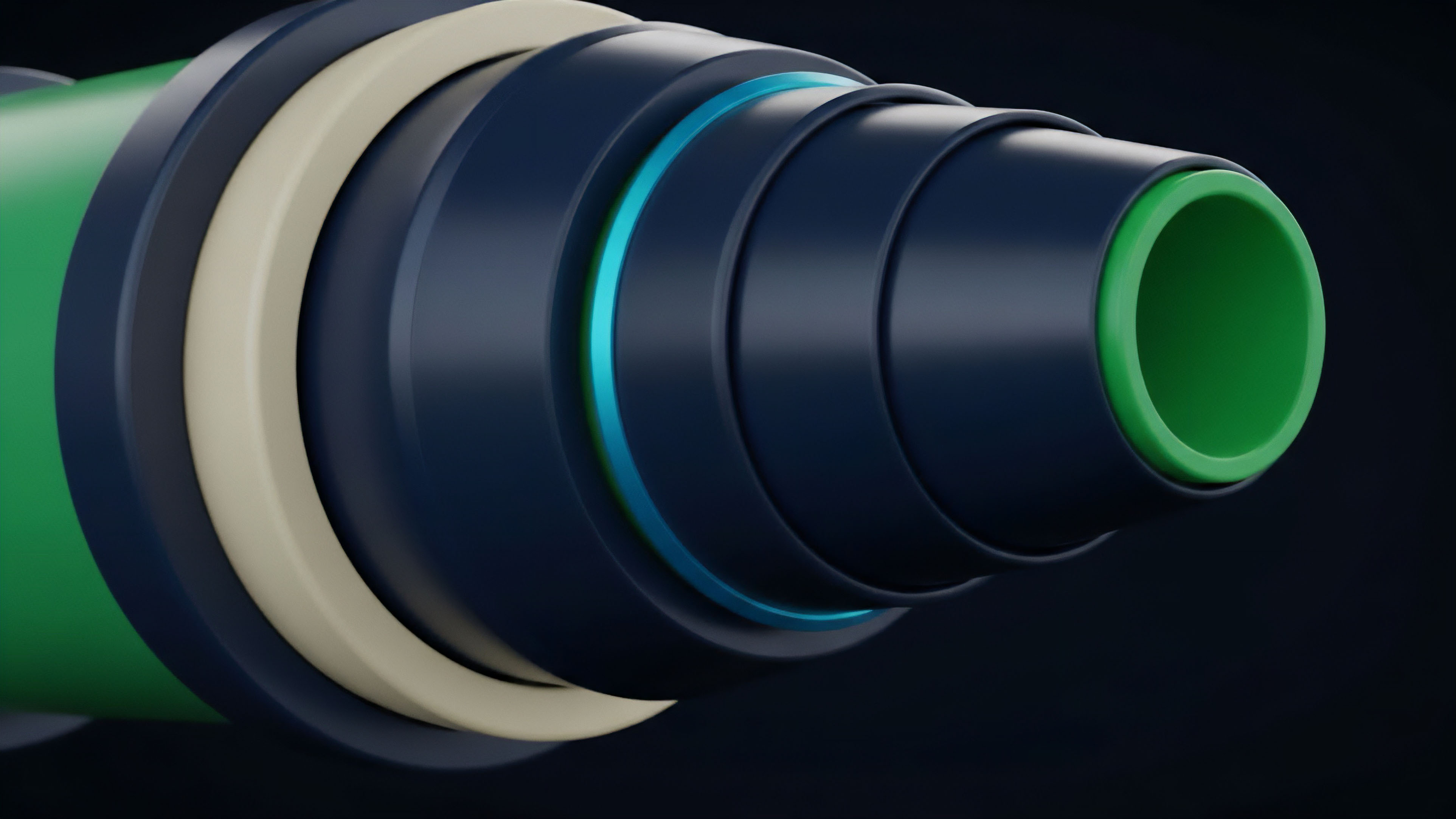

A DON typically operates on a multi-layered security model:

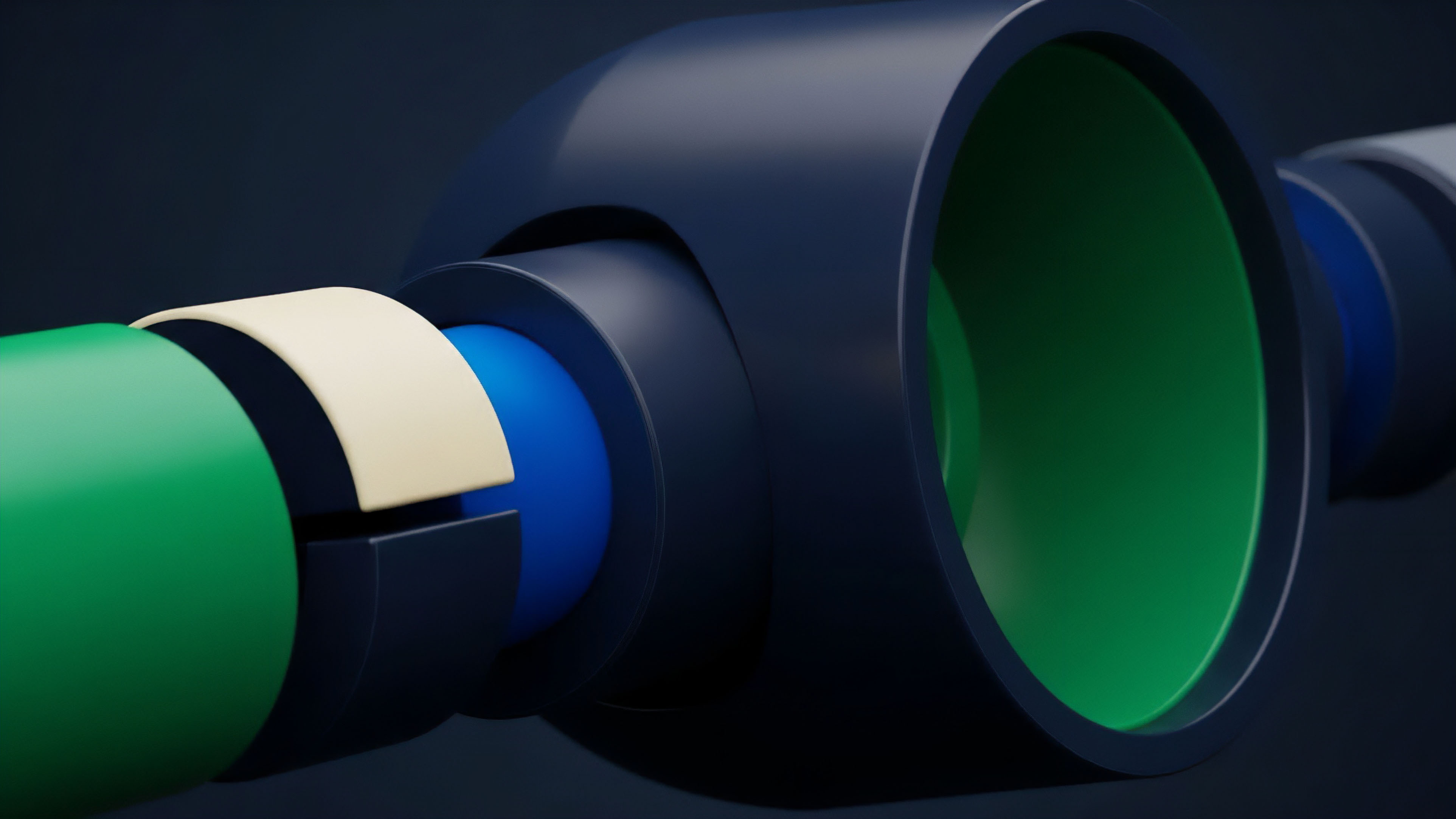

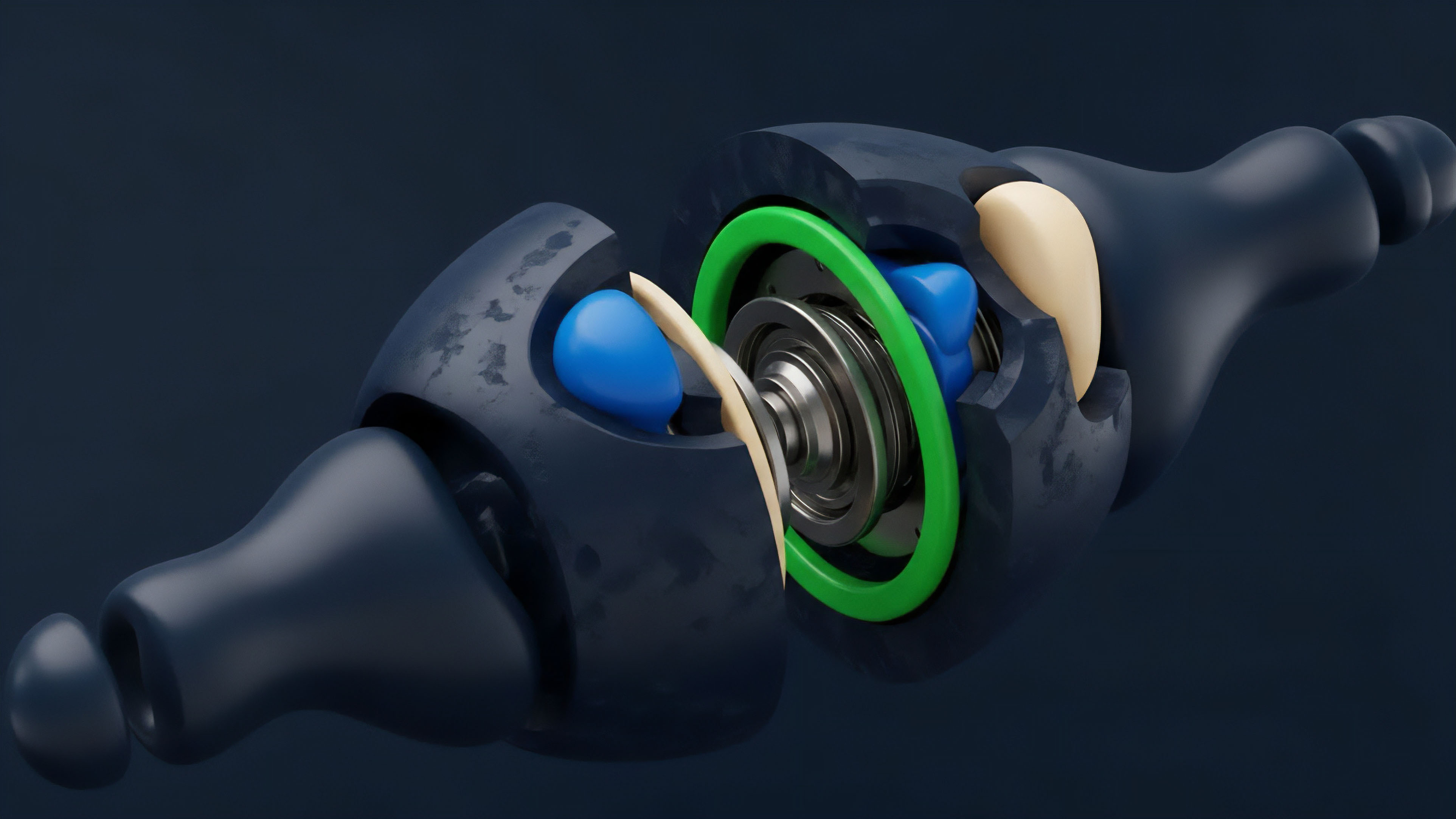

- Data Source Aggregation: Instead of relying on a single exchange price, a DON pulls data from multiple reputable exchanges and data providers. This makes it significantly more expensive for an attacker to manipulate the data feed, as they would need to manipulate prices across multiple venues simultaneously.

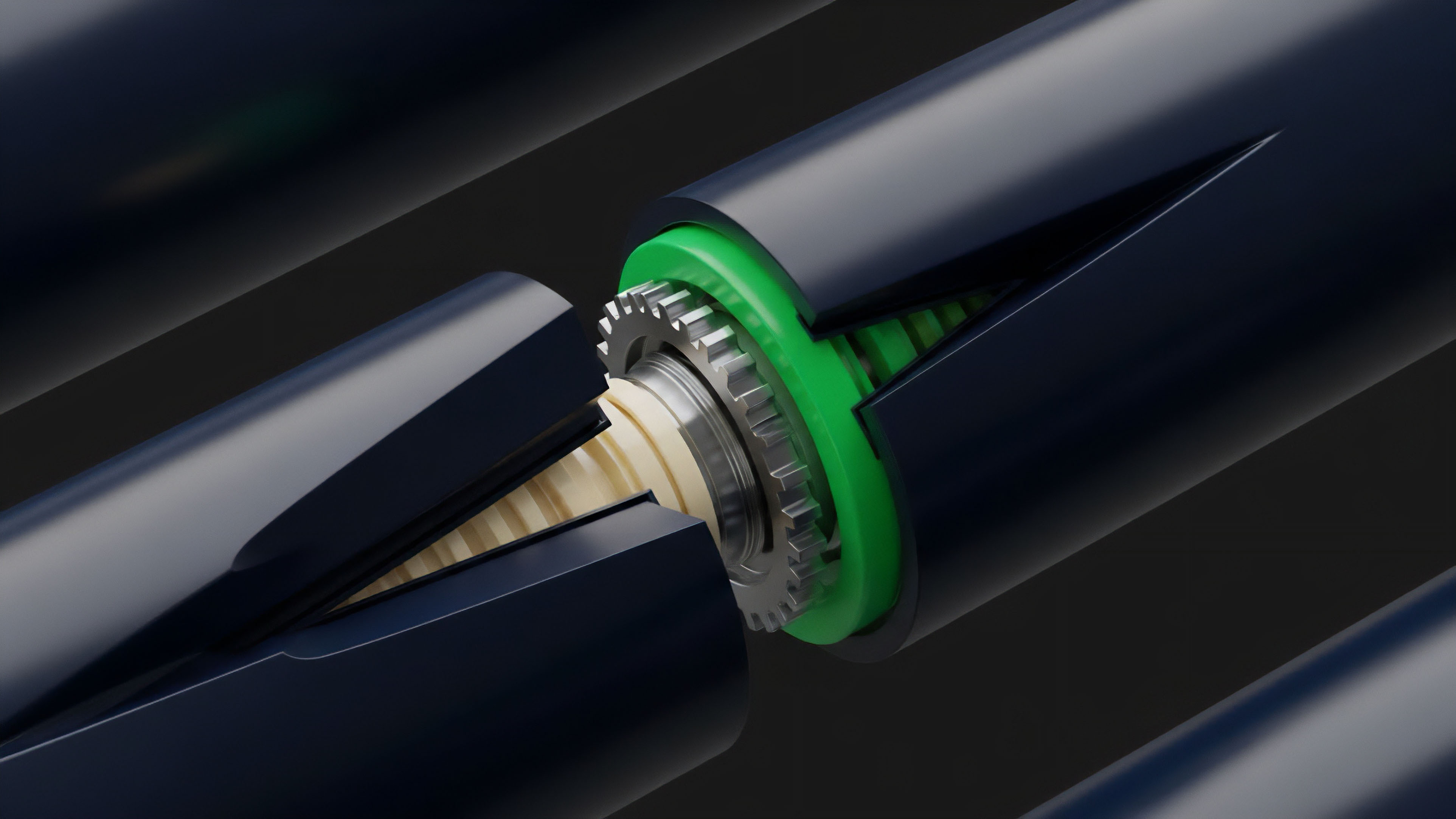

- Decentralized Node Network: The data aggregation process is executed by a network of independent nodes. Each node fetches data from the sources, signs the data cryptographically, and submits it to the network. The final price is determined by aggregating these individual reports, often using a median or a weighted average. This prevents a single malicious node from corrupting the entire feed.

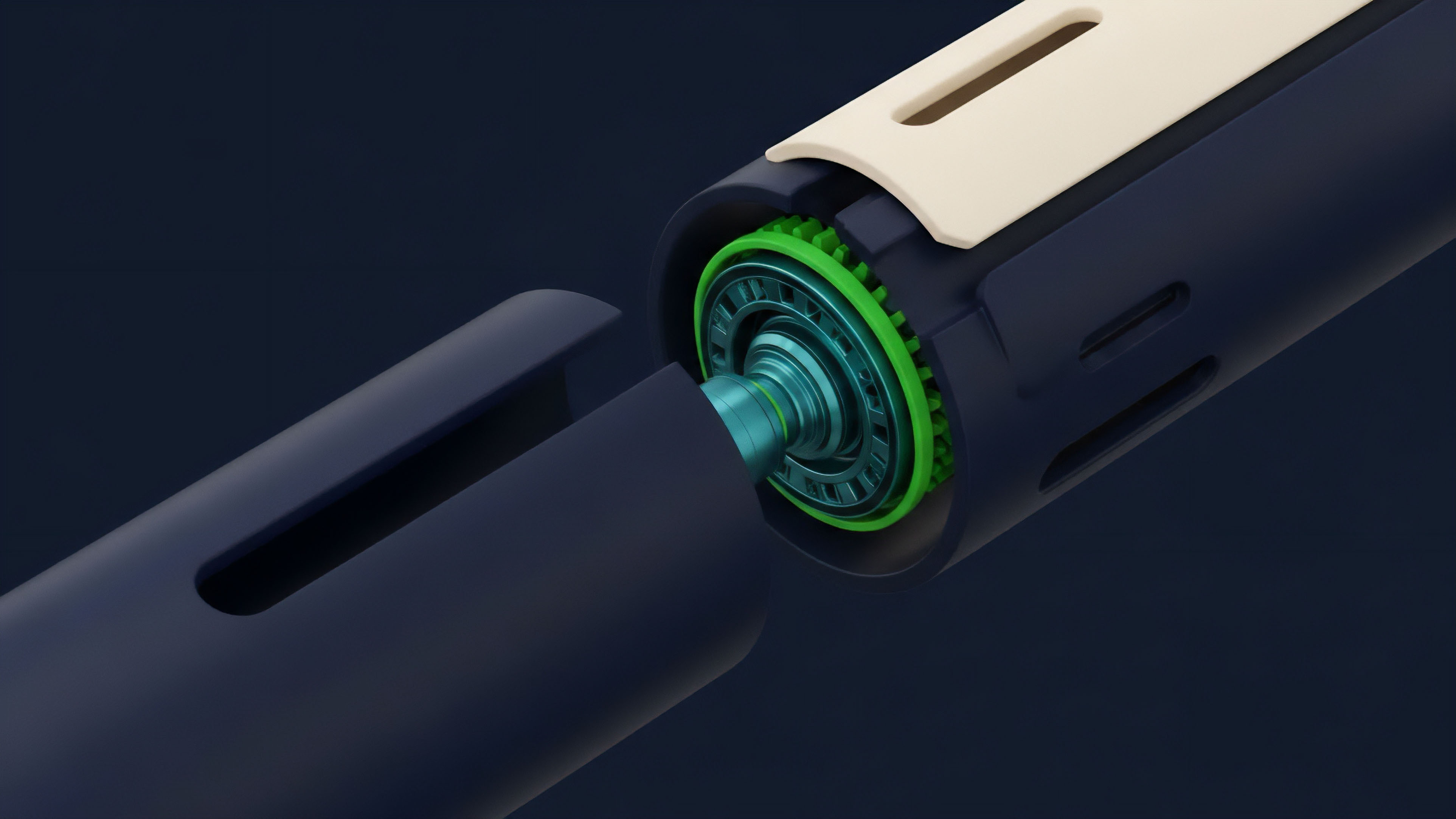

- Economic Incentives and Penalties: Nodes are often required to stake collateral to participate in the network. If a node submits incorrect or malicious data, its stake is slashed, creating a financial disincentive for bad behavior. This economic security model ensures that honest behavior is rewarded and malicious behavior is penalized.
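The aggregation and penalty layers above can be sketched together. This simplified model assumes median aggregation, a fixed deviation tolerance, and a fixed slashing fraction; the function name and parameters are hypothetical, signature verification is omitted, and real networks apply their own rules.

```python
from statistics import median


def aggregate_reports(reports: dict[str, float], stakes: dict[str, float],
                      max_deviation: float = 0.02,
                      slash_fraction: float = 0.5) -> tuple[float, dict[str, float]]:
    """Return the median price and the stakes after slashing outlier nodes.

    reports maps node id to the price that node submitted.
    A node is slashed when its report deviates from the median by more than
    max_deviation (expressed as a fraction of the median).
    """
    agreed_price = median(reports.values())
    new_stakes = dict(stakes)
    for node, price in reports.items():
        if abs(price - agreed_price) / agreed_price > max_deviation:
            new_stakes[node] = stakes[node] * (1.0 - slash_fraction)
    return agreed_price, new_stakes


# Hypothetical round: four honest nodes and one node reporting an inflated price.
reports = {"a": 2000.0, "b": 2001.0, "c": 1999.0, "d": 2002.0, "e": 2600.0}
stakes = {node: 100.0 for node in reports}

agreed_price, stakes_after = aggregate_reports(reports, stakes)
print(agreed_price, stakes_after)
```

The median ignores the single inflated report, so the agreed price stays at 2001.0, and only node `e` loses stake. An attacker would need to control a majority of reports to move the median.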

For options protocols, the specific implementation of Data Integrity also depends on the type of derivative. Perpetual futures, for example, often use TWAP mechanisms to smooth out price volatility and prevent flash loan attacks on liquidations. Options protocols, however, require more precise and timely data for accurate pricing and risk management.

The design choices for a protocol’s Data Integrity framework directly dictate its risk profile. The following table illustrates the trade-offs in different oracle approaches for derivatives:

| Oracle Approach | Data Source Count | Latency | Security Risk Profile |
|---|---|---|---|
| Single-Source Oracle | 1 | Low | High (single point of failure) |
| TWAP Oracle (On-Chain) | 1 (internal) | High | Medium (mitigates flash loans) |
| Decentralized Oracle Network (DON) | Many | Medium | Low (high cost of attack) |

Evolution

The evolution of Data Integrity in crypto derivatives has been driven by a cycle of innovation and exploitation. The initial phase saw protocols relying on simple, often centralized oracles. The vulnerabilities of this model were exposed through numerous high-profile exploits, where attackers manipulated spot prices on decentralized exchanges to trigger liquidations or gain from mispriced derivatives.

These incidents forced protocols to rethink their fundamental data architecture.

The transition to multi-source aggregation marked the second phase of evolution. Protocols began integrating solutions that combined data from multiple exchanges, making manipulation more costly. However, even these solutions proved vulnerable to “data source failure” or “data source manipulation” where a large, single source (like a major centralized exchange) experienced technical issues or manipulation that propagated through the entire oracle network.

The current phase of evolution focuses on creating more resilient, multi-layered systems. This includes the integration of on-chain data verification methods, where data from the oracle is checked against a set of rules before being accepted by the smart contract. Furthermore, protocols are increasingly designing bespoke oracle solutions tailored to their specific risk requirements.
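A rule check of this kind might look like the sketch below, written in Python as an off-chain model of logic that would live in the consuming contract. The specific rules (positive price, maximum age, monotonic timestamps, bounded jump) and their thresholds are assumptions for illustration, not a description of any particular protocol.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PriceUpdate:
    price: float
    timestamp: int  # unix seconds at which the oracle produced the value


def validate_update(update: PriceUpdate, last_accepted: PriceUpdate, now: int,
                    max_age: int = 60, max_jump: float = 0.10) -> list[str]:
    """Return the list of violated rules; an empty list means accept."""
    violations = []
    if update.price <= 0:
        violations.append("non-positive price")
    if now - update.timestamp > max_age:
        violations.append("stale update")
    if update.timestamp <= last_accepted.timestamp:
        violations.append("not newer than last accepted update")
    jump = abs(update.price - last_accepted.price) / last_accepted.price
    if jump > max_jump:
        violations.append("price jump exceeds bound")
    return violations


# Hypothetical state: last accepted price of 2000 at t=1000, current time 1030.
last = PriceUpdate(price=2000.0, timestamp=1000)

ok = validate_update(PriceUpdate(2010.0, 1025), last, now=1030)
suspicious = validate_update(PriceUpdate(2600.0, 1025), last, now=1030)
stale = validate_update(PriceUpdate(2005.0, 900), last, now=1030)

print(ok, suspicious, stale)
```

The jump bound acts as a circuit breaker. It also rejects genuine large moves, so a protocol using such a rule needs a fallback path for volatile markets.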

A protocol dealing with highly volatile, low-liquidity assets requires a different data integrity framework than one dealing with high-liquidity assets like Bitcoin or Ethereum. This specialization reflects a maturation in understanding the nuanced risks associated with different data sources and asset classes.

The history of Data Integrity in DeFi is a history of adapting to adversarial market conditions, where each exploit reveals a flaw in the current model and drives the development of more robust, economically secure solutions.

Horizon

Looking ahead, the future of Data Integrity in crypto derivatives points toward two significant developments: cryptographic verification and a shift toward “first-principles” data sources. The first development involves the integration of Zero-Knowledge Proofs (ZKPs). ZKPs allow one party to prove that a statement about some data is true without revealing the data itself.

In the context of derivatives, this could enable a new class of options contracts where the validity of the data source is cryptographically guaranteed without exposing sensitive market data to all participants. That would make privacy-preserving derivatives far more practical.

The second development extends settlement beyond simple price feeds to other verifiable real-world data. We are beginning to see derivatives that settle based on verifiable information, such as weather data, sports results, or even insurance claims. This requires a new approach to Data Integrity, one built on verifiable computation rather than price aggregation alone.

The challenge here is to create secure and decentralized mechanisms for ingesting complex, non-financial data into a blockchain environment. This will likely involve a combination of decentralized identity (DID) for data sources and a move toward verifiable computation where the data’s integrity can be proven mathematically. The long-term horizon for Data Integrity is a system where data feeds are not just trusted, but are mathematically provable and integrated seamlessly into a protocol’s risk engine, allowing for a new generation of sophisticated financial instruments based on verifiable real-world outcomes.
