Essence

In crypto derivatives, a low latency data feed is a mechanism for delivering price information from a source exchange or oracle network to a trading algorithm or smart contract with minimal delay, typically measured in milliseconds or microseconds. This high-velocity data transmission is a prerequisite for efficient price discovery and accurate risk management in high-frequency trading environments. When data arrives late, derivatives pricing models run on stale inputs, which leads to mispricing, slippage in hedging strategies, and openings for arbitrageurs to trade against outdated quotes.

The speed of data delivery dictates the possible strategies available to market participants. In decentralized finance, this concept extends beyond simple API access. The challenge shifts to verifying data integrity on-chain while minimizing the inherent latency introduced by block propagation and consensus mechanisms.

For options and perpetual contracts, data feeds are not just inputs for trading decisions; they are the mechanism for computing collateral requirements, determining liquidation thresholds, and fixing settlement prices. The speed and reliability of this data directly affect the solvency and stability of the entire protocol. A data feed that lags behind market movements creates a systemic vulnerability, allowing for front-running and manipulation that can destabilize the underlying derivative product.

The speed of data delivery is a critical determinant of market microstructure, dictating the feasibility of arbitrage strategies and the accuracy of derivative pricing models.

Origin

The requirement for low latency data originated in traditional financial markets with the transition from open-outcry trading floors to electronic exchanges in the late 1990s and early 2000s. The shift to digital order books created a new competitive environment where information velocity became paramount. Market makers and high-frequency trading firms invested heavily in co-location facilities, placing their servers physically next to the exchange’s matching engine to minimize the travel time of data packets.

This infrastructure created a clear advantage for those who could process and act on price changes faster than their competitors. When crypto derivatives markets began to mature, they inherited this architecture. Early crypto options platforms were built on centralized exchanges (CEXs) and relied on traditional WebSocket APIs for data feeds.

However, the emergence of decentralized finance (DeFi) introduced a new challenge: how to bring real-time off-chain data onto a blockchain for use in smart contracts. The inherent latency of blockchain consensus (e.g. Ethereum’s 12-second block time) meant that traditional data feeds were unsuitable for real-time risk management on-chain.

This gave rise to decentralized oracle networks. These networks were designed to aggregate data from multiple sources and push updates to the blockchain, creating a new form of data feed specifically tailored for decentralized applications.

Theory

From a quantitative finance perspective, the impact of latency on derivatives pricing can be modeled as a source of information asymmetry and market friction.

The value of a data feed is determined by the speed at which it allows a participant to identify and execute against a pricing discrepancy. In option pricing, models like Black-Scholes rely on continuous-time assumptions. In practice, data feeds are discrete and delayed.

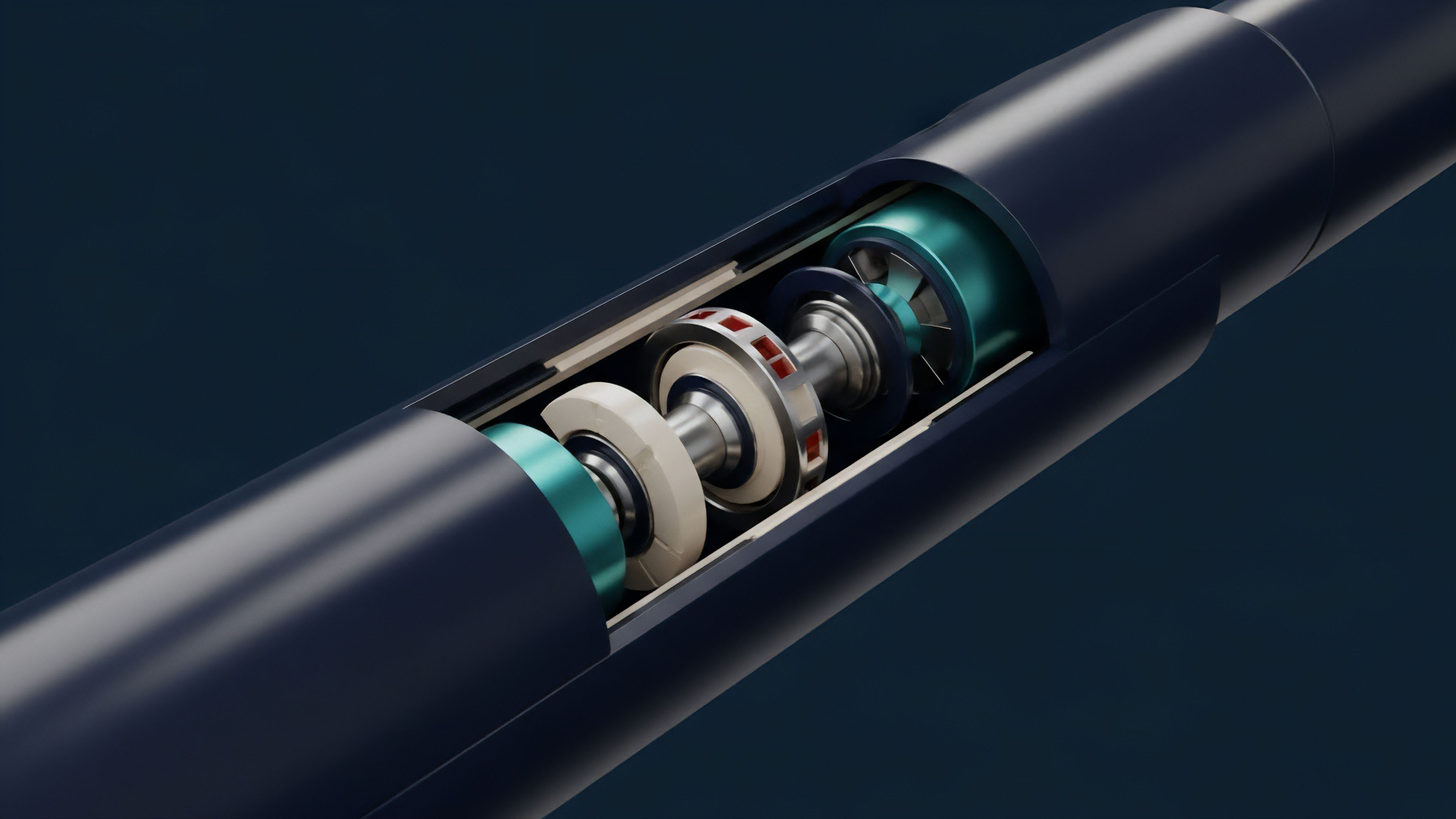

The gap between the true market price and the data feed’s price creates an opportunity for latency arbitrage. A market maker’s primary risk in providing liquidity for options is managing their delta hedge. When a market maker sells an option, they must simultaneously buy or sell the underlying asset to maintain a delta-neutral position.

If the underlying asset’s price changes, the option’s delta changes immediately. A low latency data feed ensures the market maker can execute the required adjustment to their hedge position before the price moves significantly further, thus minimizing slippage and PnL variance. Conversely, a high latency feed means the market maker is constantly hedging based on stale data, incurring losses.
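The cost of hedging on stale data can be made concrete with a minimal sketch. It assumes a European call priced under Black-Scholes with a zero interest rate by default; the function names (`bs_call_delta`, `hedge_error`) and the sample numbers are illustrative assumptions, not part of any specific trading system.

```python
import math


def norm_cdf(x: float) -> float:
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def bs_call_delta(spot: float, strike: float, vol: float,
                  t_years: float, rate: float = 0.0) -> float:
    """Black-Scholes delta of a European call (no dividends)."""
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol ** 2) * t_years) / (
        vol * math.sqrt(t_years)
    )
    return norm_cdf(d1)


def hedge_error(true_spot: float, stale_spot: float, strike: float,
                vol: float, t_years: float, contracts: float = 1.0) -> float:
    """Units of underlying by which a hedge sized from a stale price misses
    the delta-neutral hedge at the true price (short call position)."""
    needed = bs_call_delta(true_spot, strike, vol, t_years)
    held = bs_call_delta(stale_spot, strike, vol, t_years)
    return contracts * (needed - held)


if __name__ == "__main__":
    # Hypothetical scenario: the feed still shows 100 while the market trades at 101.
    miss = hedge_error(true_spot=101.0, stale_spot=100.0, strike=100.0,
                       vol=0.8, t_years=7 / 365, contracts=100.0)
    print(f"Under-hedged by {miss:.3f} units of the underlying")
```

A positive result means the market maker holds too little of the underlying relative to the delta-neutral position; the size of the miss grows with the option's gamma and with the price gap that opens up while the feed is stale.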

The following table compares the latency challenges in centralized and decentralized environments:

| Environment | Latency Source | Impact on Derivatives | Mitigation Strategy |
|---|---|---|---|
| Centralized Exchanges (CEXs) | API response time, network congestion, physical distance from servers. | HFT latency arbitrage, order book front-running, mispricing of short-lived options. | Co-location, dedicated direct feeds, high-throughput network infrastructure. |
| Decentralized Finance (DeFi) | Blockchain block time, oracle update frequency, consensus delay, network propagation. | Oracle manipulation, liquidation cascades, front-running via MEV, inaccurate collateral calculations. | Decentralized oracle networks, layer 2 solutions, off-chain computation, MEV-resistant designs. |

The theoretical challenge in decentralized markets is particularly acute because data integrity must be secured cryptographically, adding computational overhead. The trade-off is between data freshness and data verification cost.

Approach

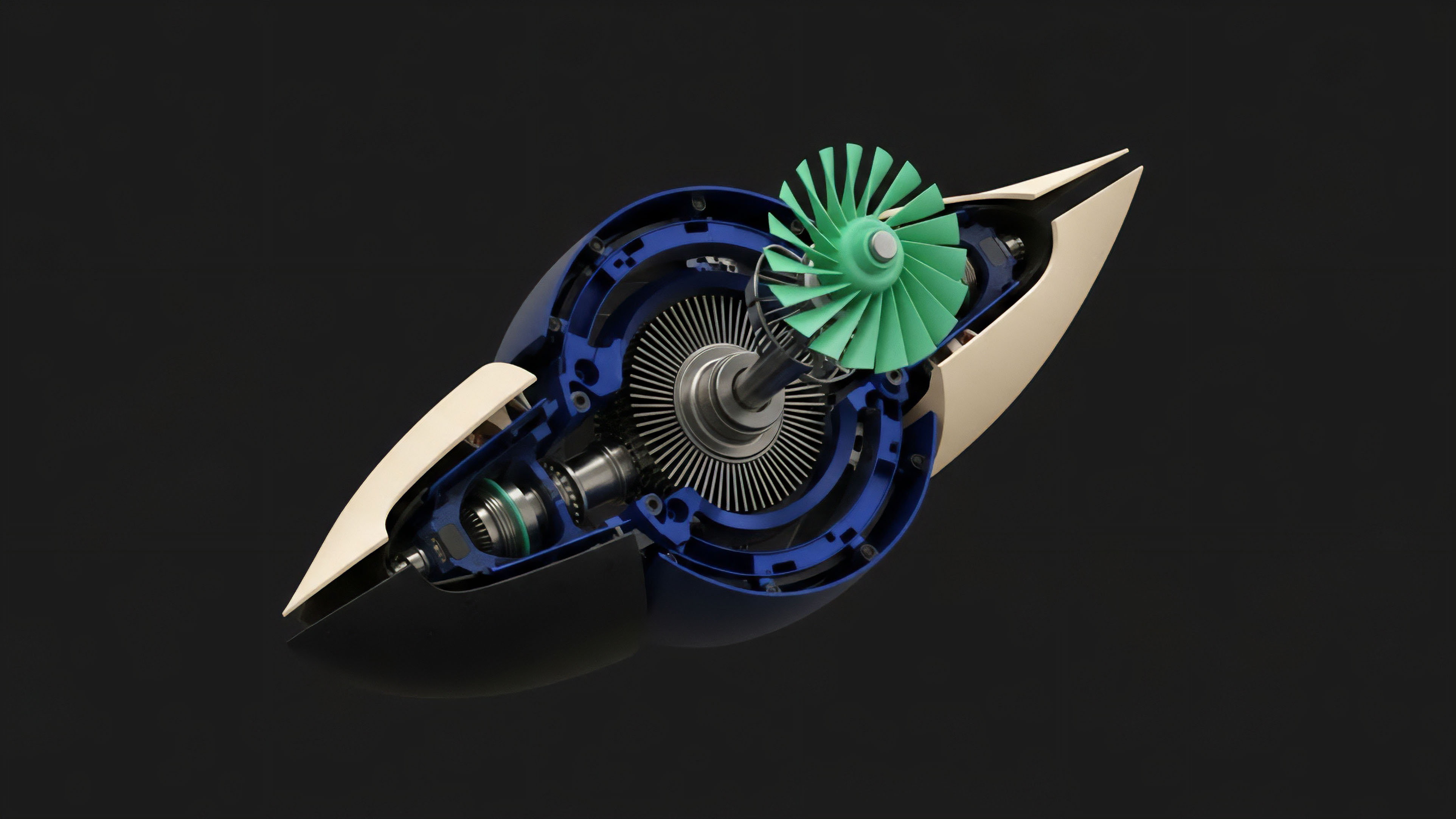

The implementation of low latency data feeds in crypto derivatives protocols follows two distinct approaches, driven by the choice between centralized and decentralized architectures.

The first approach utilizes traditional off-chain data sources, while the second relies on decentralized oracle networks.

- Centralized Data Feeds for CEX Derivatives: These systems closely resemble traditional finance infrastructure. Market makers connect directly to exchange APIs, typically using WebSockets for real-time updates. The data feed delivers a stream of market depth, last trade prices, and option quotes. The primary challenge here is managing network jitter and ensuring the data feed’s reliability during periods of extreme market volatility.

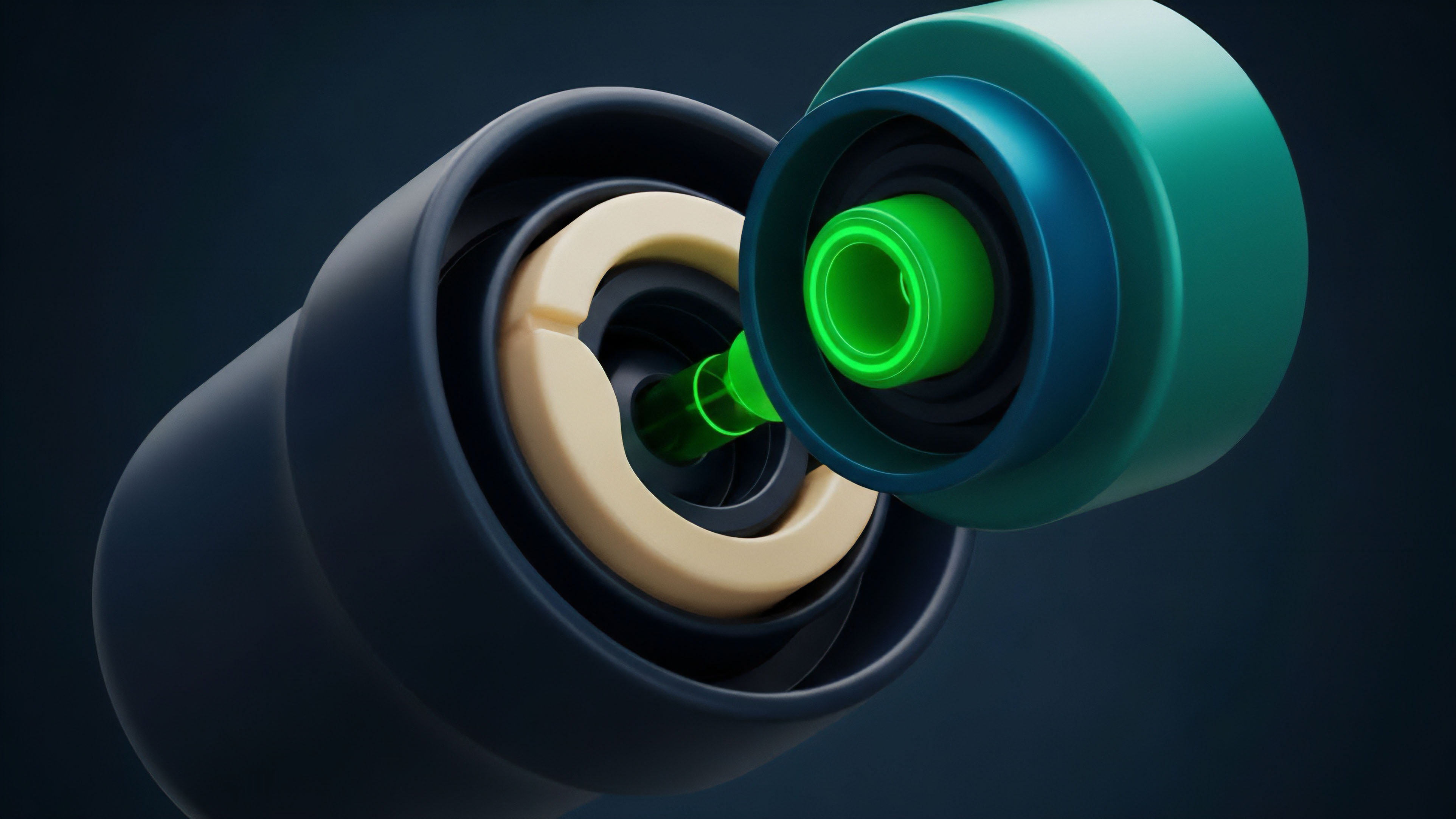

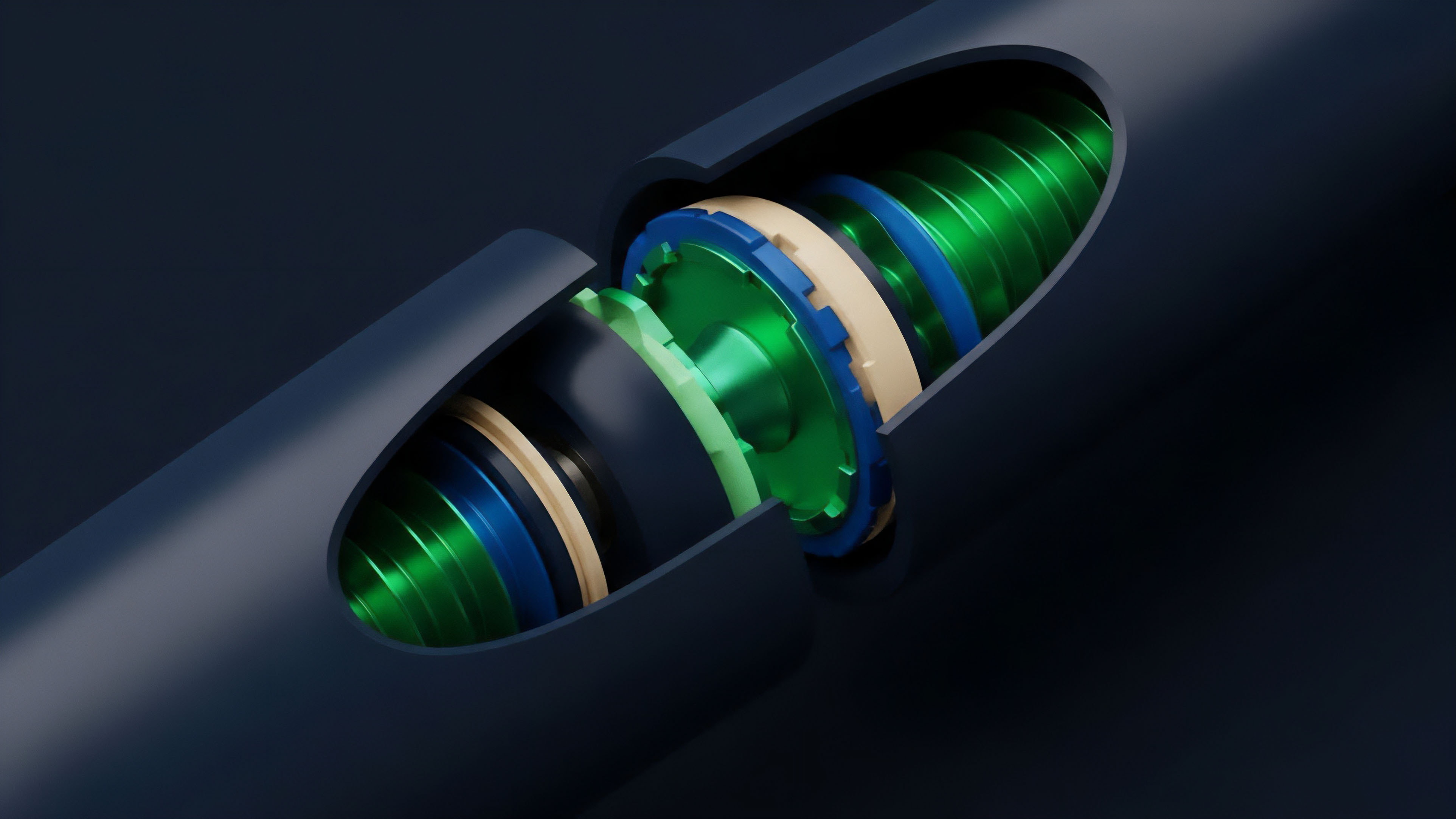

- Decentralized Oracle Networks for On-Chain Derivatives: This approach addresses the “oracle problem,” where smart contracts require external data. These networks aggregate data from multiple independent sources to prevent single points of failure. Two primary models exist:

- Push Model (e.g. Chainlink): Data is pushed onto the blockchain at regular intervals or when a price deviation threshold is met. This provides reliable, verifiable data but introduces inherent latency based on the update frequency. For options, this latency can be significant during volatile market conditions, creating opportunities for on-chain arbitrage.

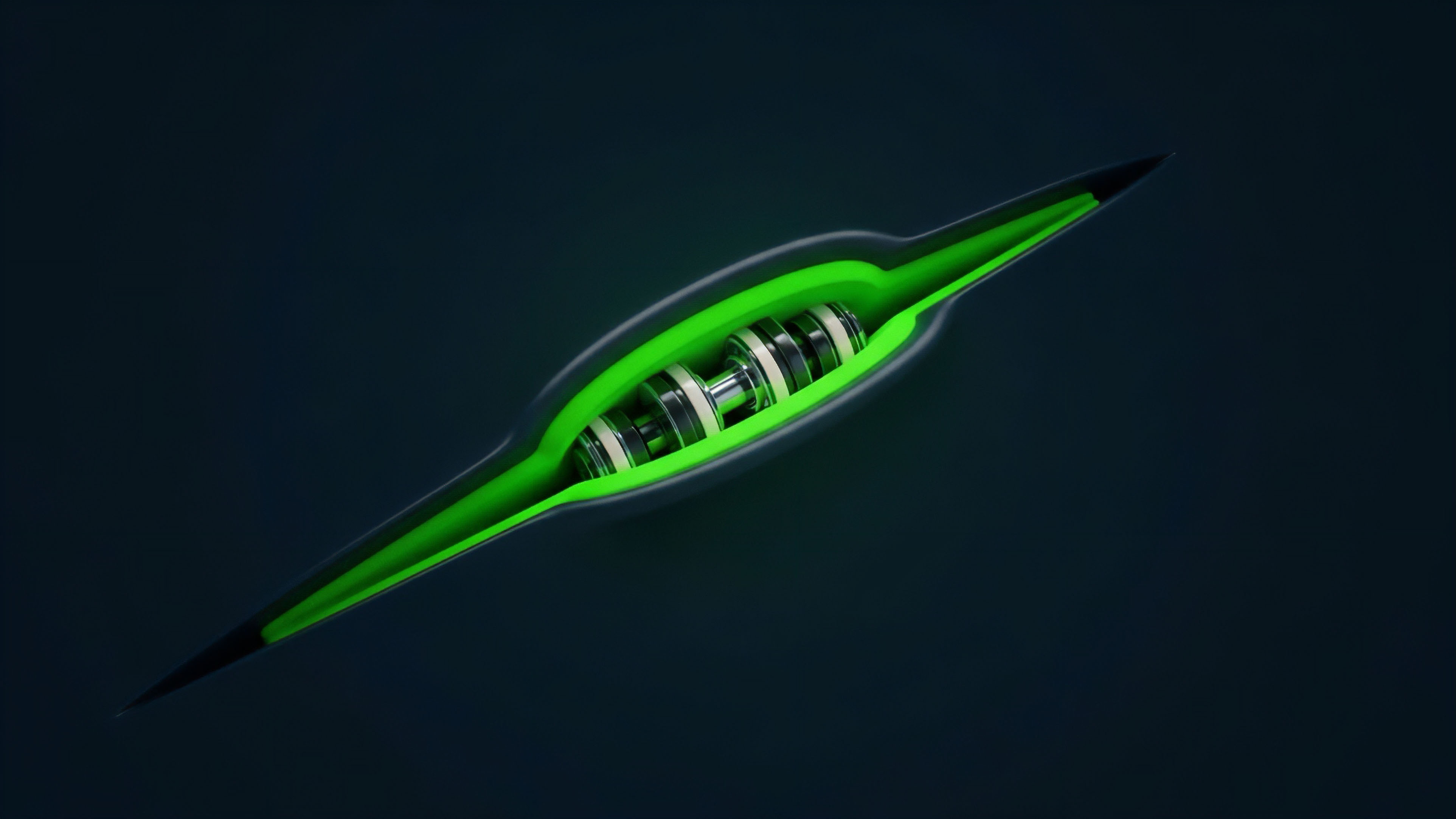

- Pull Model (e.g. Pyth Network): Data is published off-chain in real-time by a network of data providers. Users or protocols request (pull) the data onto the chain when needed. This approach reduces latency by removing the need for data to be pushed at fixed intervals, allowing for data freshness on demand. However, it requires a different incentive structure to ensure data providers are honest and responsive.
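The two oracle models can be sketched in a few lines. This is a simplified illustration, not the actual logic of Chainlink or Pyth: the class `PushFeed`, the function `pull_price`, and parameters such as `deviation_threshold`, `heartbeat_s`, and `max_age_s` are hypothetical names, the median stands in for whatever aggregation a real network uses, and signature verification is omitted entirely.

```python
from dataclasses import dataclass
from statistics import median
from typing import Optional, Sequence


@dataclass
class PushFeed:
    """Push model: write a new on-chain price when the aggregate moves past a
    deviation threshold or when the heartbeat interval has elapsed."""
    deviation_threshold: float      # e.g. 0.005 means a 0.5% move
    heartbeat_s: float              # maximum seconds between updates
    last_price: Optional[float] = None
    last_update_s: float = 0.0

    def maybe_update(self, source_prices: Sequence[float], now_s: float) -> bool:
        price = median(source_prices)   # aggregate independent sources
        if self.last_price is None:
            due = True                  # first observation is always written
        else:
            moved = abs(price - self.last_price) / self.last_price >= self.deviation_threshold
            expired = now_s - self.last_update_s >= self.heartbeat_s
            due = moved or expired
        if due:
            self.last_price, self.last_update_s = price, now_s
        return due


def pull_price(price: float, publish_time_s: float,
               now_s: float, max_age_s: float) -> float:
    """Pull model: the consumer brings a recent off-chain price on-chain and
    rejects it if it is older than the tolerated age."""
    if now_s - publish_time_s > max_age_s:
        raise ValueError("price update is stale")
    return price


if __name__ == "__main__":
    feed = PushFeed(deviation_threshold=0.005, heartbeat_s=3600.0)
    print(feed.maybe_update([100.0, 100.1, 99.9], now_s=0.0))     # first write
    print(feed.maybe_update([100.2, 100.3, 100.1], now_s=10.0))   # 0.2% move, skipped
    print(feed.maybe_update([101.0, 101.0, 100.9], now_s=20.0))   # 1% move, written
```

In the push sketch, any price move smaller than the threshold stays invisible on-chain until the heartbeat fires, which is the latency the text describes. In the pull sketch, freshness is bounded by `max_age_s`, a limit the consuming protocol chooses for itself.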

A protocol’s choice of data feed determines its risk profile. A protocol that relies on a slower, less frequent data feed for liquidations, for instance, must maintain higher collateralization ratios to account for potential price movements between updates. This trade-off between data latency and capital efficiency is a core design decision for decentralized derivatives platforms.
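A rough way to quantify this trade-off is to size the margin buffer to the price move that could plausibly occur between two oracle updates. The sketch below assumes a driftless random walk, so volatility scales with the square root of time; the function names, the confidence multiplier `z`, and the sample inputs are illustrative assumptions rather than any protocol's actual risk parameters.

```python
import math

SECONDS_PER_YEAR = 365 * 24 * 3600


def margin_buffer(annual_vol: float, update_interval_s: float, z: float = 3.0) -> float:
    """Fraction of notional needed to absorb a z-sigma price move that occurs
    between two consecutive feed updates (square-root-of-time scaling)."""
    return z * annual_vol * math.sqrt(update_interval_s / SECONDS_PER_YEAR)


def max_leverage(annual_vol: float, update_interval_s: float, z: float = 3.0) -> float:
    """Highest leverage at which the margin still covers that z-sigma move."""
    return 1.0 / margin_buffer(annual_vol, update_interval_s, z)


if __name__ == "__main__":
    # Hypothetical asset with 80% annualized volatility.
    for interval_s in (0.4, 12.0, 3600.0):
        buf = margin_buffer(0.8, interval_s)
        print(f"update every {interval_s:>7.1f}s -> buffer {buf:.4%}, "
              f"max leverage {max_leverage(0.8, interval_s):.0f}x")
```

Under these assumptions, a 12-second update interval calls for a buffer of roughly 0.15% of notional, while a one-hour interval calls for roughly 2.6%. Real markets have fat tails and jumps, so a production risk model would need to be more conservative than this Gaussian approximation.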

A protocol’s choice of data feed directly impacts its capital efficiency, as slower data necessitates higher collateralization ratios to absorb price changes between updates.

Evolution

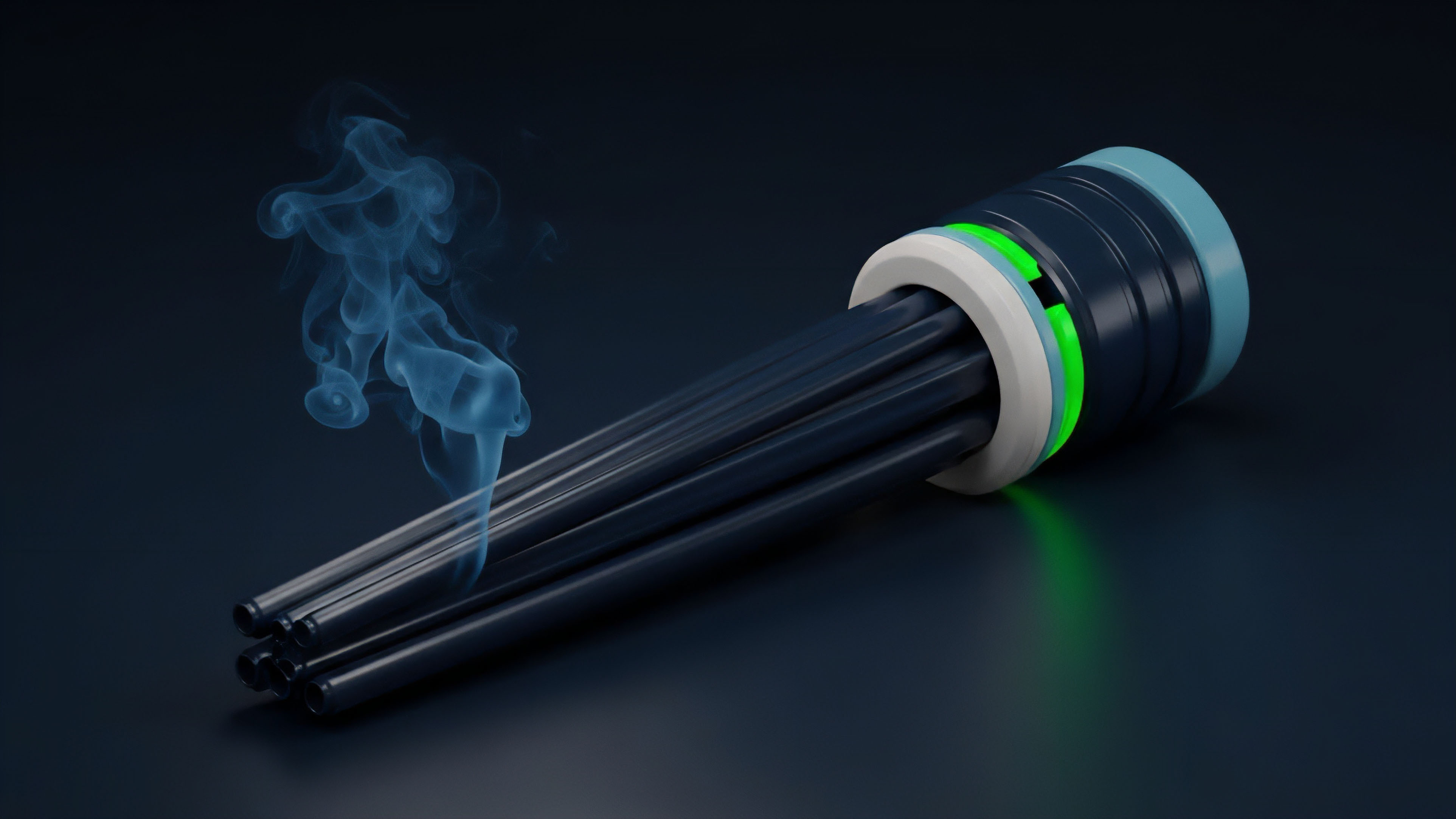

The evolution of low latency data feeds in crypto is intrinsically linked to the development of Maximal Extractable Value (MEV) strategies. Initially, low latency data was seen as a tool for efficient market making. As DeFi matured, participants realized that access to fast data, combined with knowledge of the mempool, allowed for more aggressive strategies.

This led to the rise of front-running and sandwich attacks. A trader with a faster data feed can see an impending large trade in the mempool, calculate the price impact, and execute a trade just before and just after the large trade to profit from the price movement. This adversarial environment has driven significant innovation in data feed architecture.
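The mechanics of a sandwich can be illustrated on a constant-product pool. This sketch assumes an x*y=k automated market maker with a proportional swap fee, which is one common on-chain venue design but is not named in the text above; the function names and pool sizes are hypothetical, and gas costs are ignored.

```python
def swap(reserve_in: float, reserve_out: float, amount_in: float,
         fee: float = 0.003) -> tuple[float, float, float]:
    """Constant-product swap. Returns (amount_out, new_reserve_in, new_reserve_out)."""
    effective_in = amount_in * (1.0 - fee)          # fee is taken from the input
    amount_out = reserve_out * effective_in / (reserve_in + effective_in)
    return amount_out, reserve_in + amount_in, reserve_out - amount_out


def sandwich(x: float, y: float, victim_in: float, attacker_in: float,
             fee: float = 0.003) -> dict[str, float]:
    """Attacker buys Y before the victim's purchase and sells it back afterwards."""
    victim_alone, _, _ = swap(x, y, victim_in, fee)          # victim without interference
    bought, x1, y1 = swap(x, y, attacker_in, fee)            # front-run: X -> Y
    victim_out, x2, y2 = swap(x1, y1, victim_in, fee)        # victim trades at a worse price
    returned, _, _ = swap(y2, x2, bought, fee)               # back-run: Y -> X
    return {
        "attacker_profit_x": returned - attacker_in,
        "victim_shortfall_y": victim_alone - victim_out,
    }


if __name__ == "__main__":
    # Hypothetical pool with 1,000,000 units of each asset.
    result = sandwich(x=1_000_000.0, y=1_000_000.0,
                      victim_in=50_000.0, attacker_in=20_000.0)
    print(result)
```

The attacker's profit comes directly from the victim's worse execution. When the victim's trade is too small to move the price, the attacker's two swap fees exceed the gain and the strategy loses money.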

Protocols have evolved to mitigate MEV through mechanisms such as centralized sequencing and other MEV-resistant designs. For instance, some decentralized exchanges now process order flow off-chain through a centralized sequencer, which bundles the orders and submits them to the blockchain. This reduces the opportunities for front-running because pending orders are visible only to the sequencer, although it concentrates trust in that sequencer.

The current challenge is to balance the need for low latency with the requirement for decentralization. The market structure for data feeds is shifting from a simple data delivery model to a complex system where data integrity and fair execution are prioritized. This evolution has led to the development of specific data feeds for different use cases.

| Data Feed Type | Latency Profile | Primary Use Case | Systemic Risk |
|---|---|---|---|
| Centralized Exchange API | Low (sub-millisecond) | CEX HFT, CEX arbitrage, risk management. | Single point of failure, data manipulation by exchange. |
| On-Chain Oracle (Push) | Medium (seconds) | On-chain collateral calculation, slow settlement. | Stale data, oracle manipulation during high volatility. |
| On-Chain Oracle (Pull) | Low (milliseconds) | Real-time liquidations, high-frequency on-chain trading. | Potential for data provider collusion, cost of on-demand data. |

Horizon

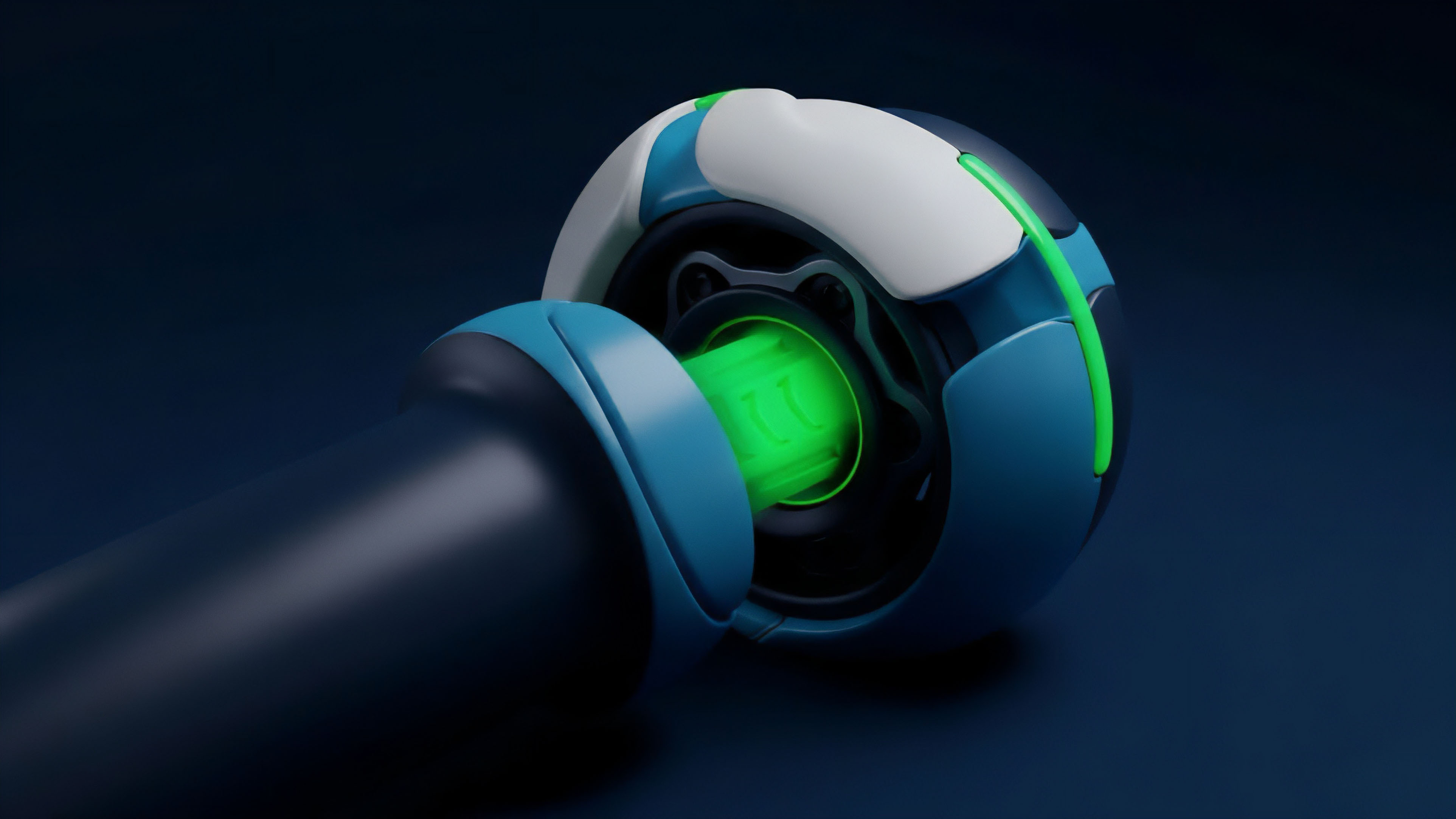

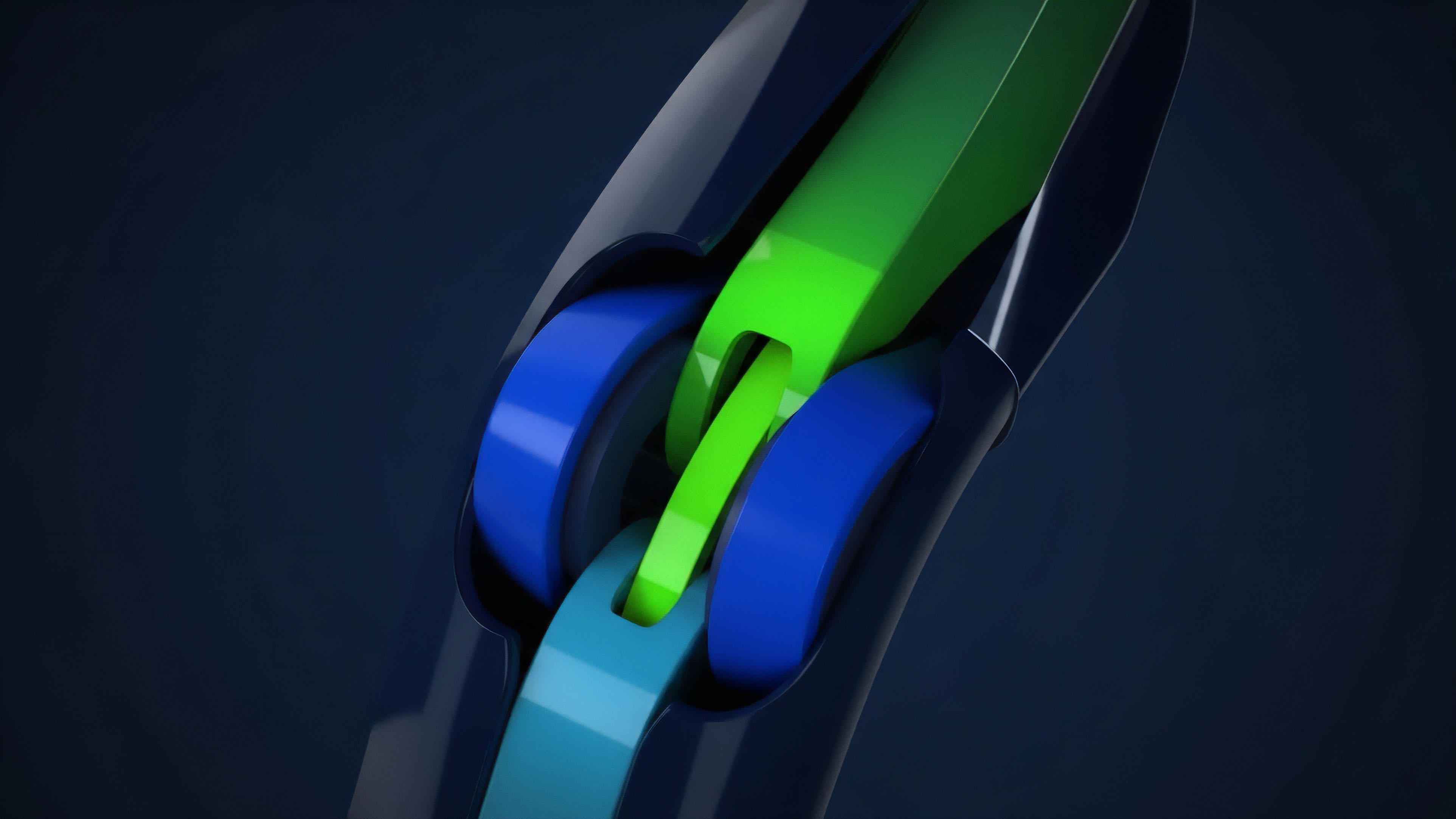

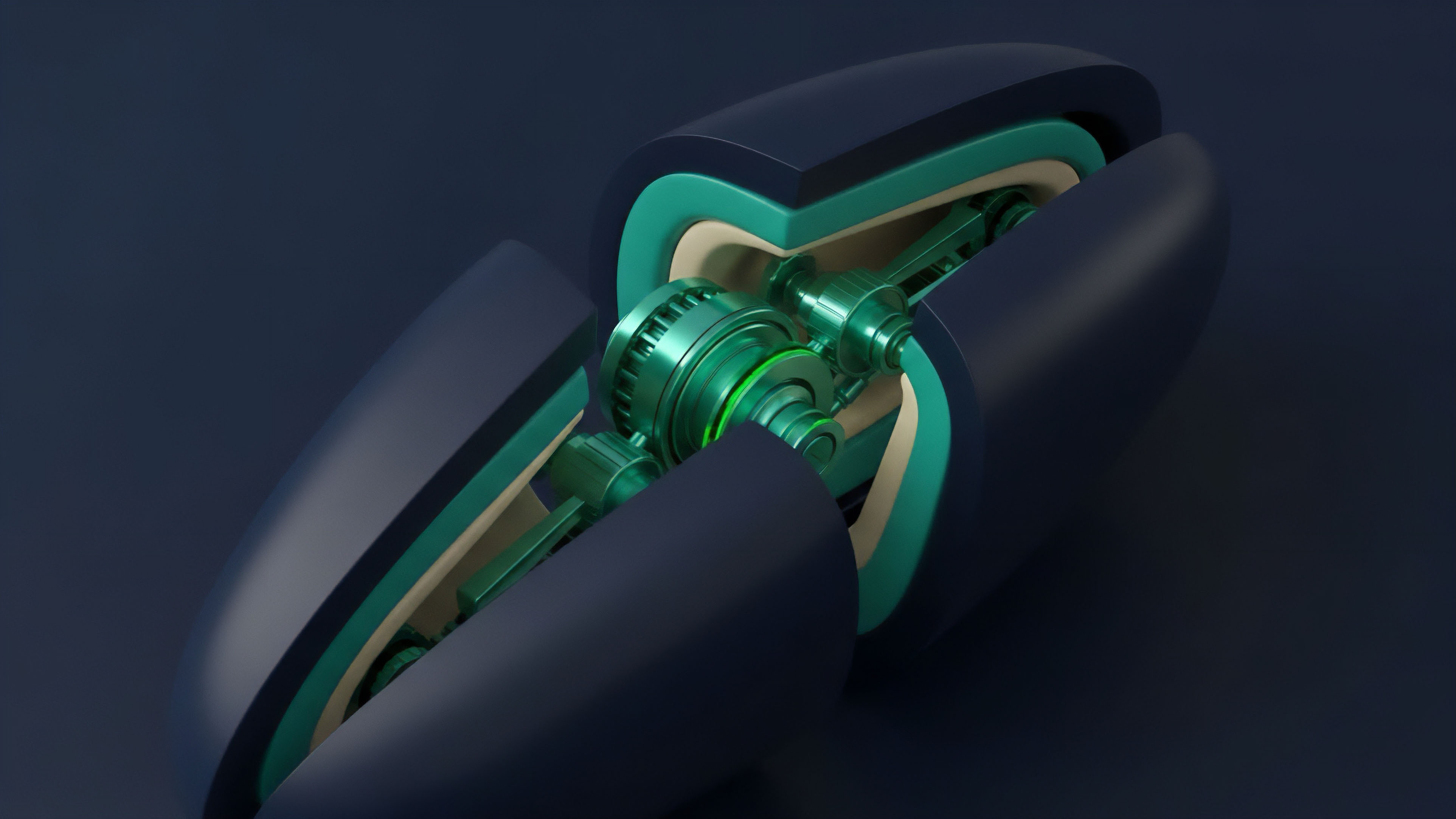

The next phase of low latency data feeds will be defined by the intersection of zero-knowledge proofs and hardware acceleration. The current challenge in decentralized markets is verifying data integrity without incurring high gas costs or sacrificing speed. Zero-knowledge proofs (zk-proofs) offer a solution by allowing data providers to prove the validity of their off-chain data calculations without revealing the underlying data itself.

This enables protocols to verify data quickly and efficiently, moving beyond simple data aggregation to verifiable computation. The development of hardware-accelerated oracle networks represents another significant trend. These networks utilize specialized hardware (e.g. trusted execution environments or FPGAs) to perform data aggregation and verification off-chain with extremely low latency.

The goal is to provide data feeds that are both fast enough for high-frequency trading and cryptographically secure for decentralized applications. This creates a new layer of infrastructure that bridges the gap between the speed of traditional finance and the trustlessness of decentralized systems. The future of low latency data feeds is not simply about reducing time delay; it is about creating a fair execution environment.

The ultimate goal is to remove the informational asymmetry that currently favors sophisticated HFTs. The next generation of protocols will utilize these advancements to build derivatives platforms where data integrity is guaranteed cryptographically, ensuring that all participants operate on the same, verifiable information set.

The future of data feeds lies in cryptographically guaranteeing data integrity through zero-knowledge proofs, rather than relying on trust in centralized providers or simply optimizing network speed.

Glossary

Redundancy in Data Feeds

Block Finality Latency

Low Cost Data Availability

Geodesic Network Latency

Data Latency Arbitrage

Implied Volatility Feeds

Options Trading Latency

Latency-Finality Dilemma

Latency-Alpha Decay