Essence

Data Feed Normalization is the technical reconciliation of disparate price streams into a single, authoritative reference point for derivative settlement. Within decentralized markets, liquidity providers and exchanges broadcast pricing data in heterogeneous formats, at differing latencies and update frequencies. Normalization abstracts away these technical variations so that smart contracts interact with one coherent, unified price representation.

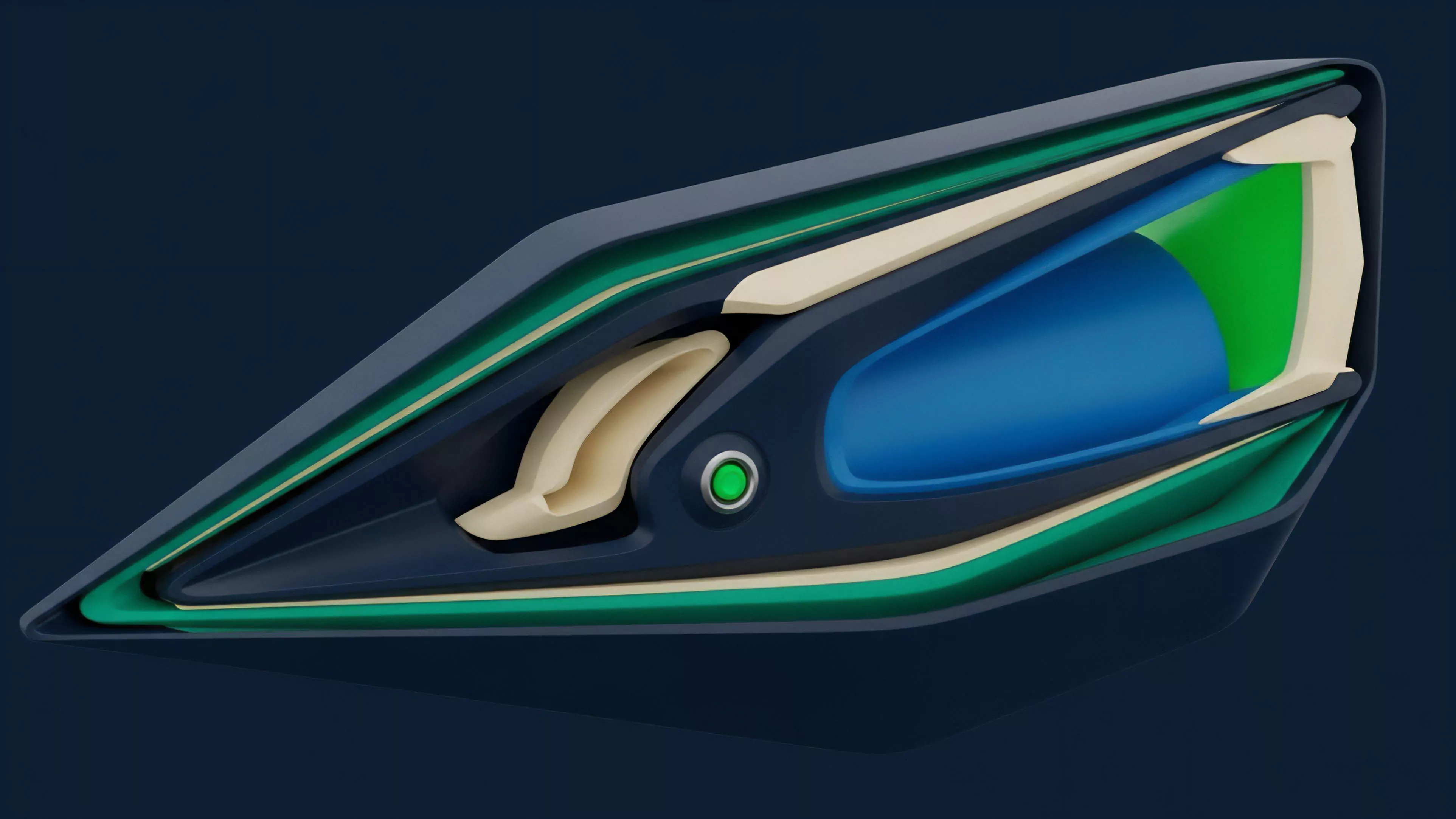

Data Feed Normalization creates a consistent price reference by reconciling heterogeneous data inputs into a unified standard for derivative settlement.

The systemic necessity of this function stems from the fragmentation of crypto liquidity. Without a standardized feed, individual derivative protocols would reference diverging prices for the same asset. This invites arbitrage between them, triggers liquidations unevenly and distorts risk metrics. By enforcing structural uniformity on incoming data, protocols keep their margin engines reliable, which is essential for maintaining market integrity under high volatility.

Origin

The requirement for Data Feed Normalization emerged alongside the transition from simple spot exchanges to complex, leverage-heavy derivative platforms.

Early decentralized finance iterations relied on single-source or simple median-based price feeds, which proved vulnerable to localized manipulation and technical outages. Market participants observed that synthetic assets and perpetual contracts required more robust price discovery mechanisms to prevent systemic insolvency during periods of rapid asset repricing.

- Liquidity fragmentation necessitated mechanisms to aggregate data from multiple exchanges.

- Latency arbitrage drove the need for timestamp synchronization across various data sources.

- Manipulation resistance required weighting algorithms to mitigate the influence of outlier data points.

As derivative volume scaled, the industry moved toward decentralized oracle networks that aggregate and normalize data from many sources and publish the result on-chain. These systems allow off-chain data to be ingested securely, translating raw exchange order books into verifiable inputs for automated market makers and clearinghouses. This evolution reflects the broader shift toward building financial infrastructure capable of surviving adversarial environments without centralized oversight.

Theory

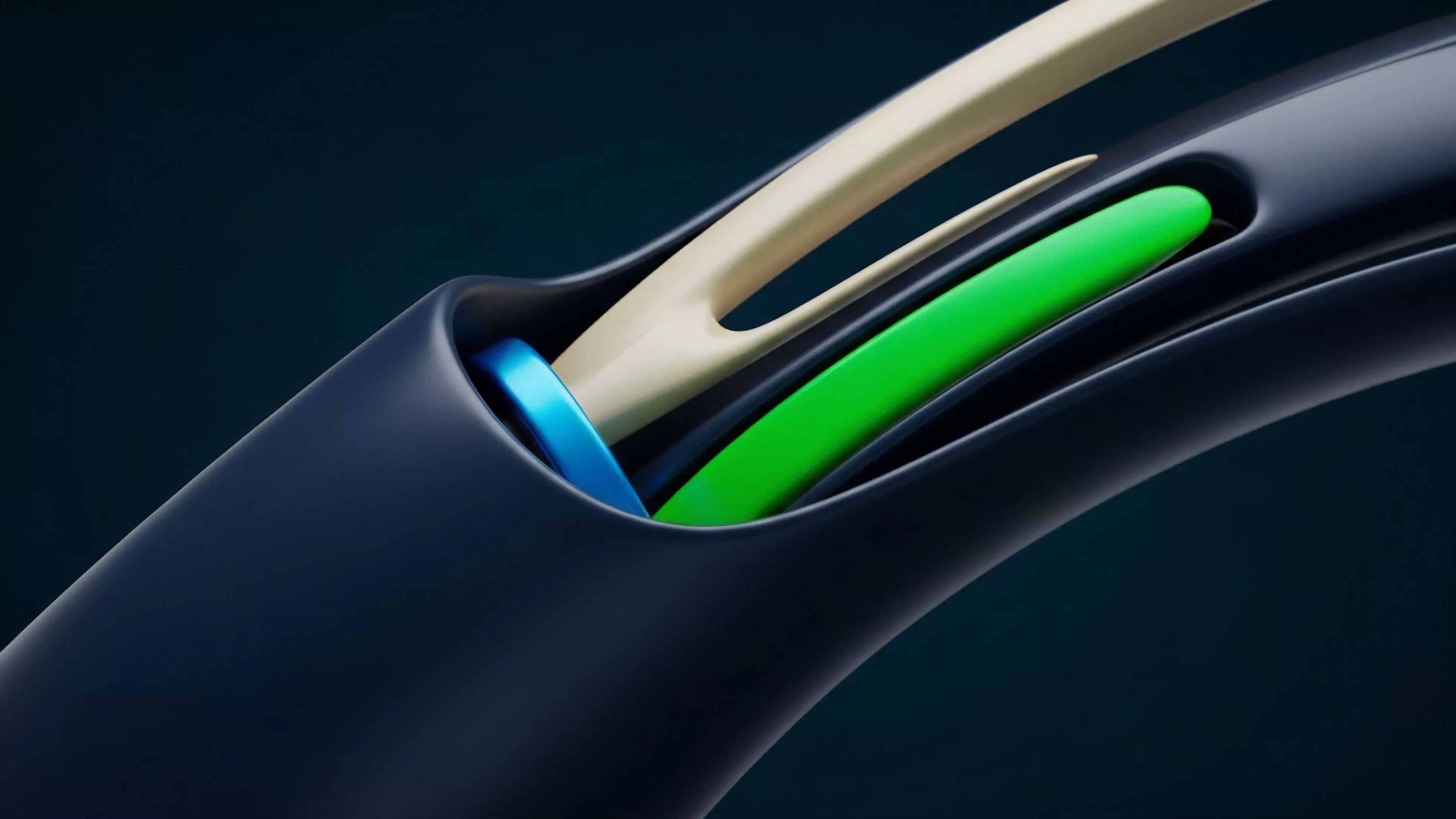

The architecture of Data Feed Normalization rests on the mathematical transformation of raw telemetry into a stable, statistically robust price signal.

Quantitative analysts view this as a signal processing challenge where noise reduction and outlier rejection are the primary objectives. Protocols must filter high-frequency market noise to prevent triggering unnecessary liquidations while maintaining sensitivity to genuine price trends.

Statistical Filtering Mechanisms

The normalization engine typically employs a combination of weighted moving averages and deviation thresholds. By assigning weights based on exchange volume and historical reliability, the system produces a price signal that is less susceptible to thin-market volatility.
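The weighting described above can be sketched as a cross-sectional average in which each source's weight is its traded volume multiplied by a reliability score. This is a minimal sketch under stated assumptions. The `Quote` type, the `reliability` score in [0, 1] and the simple product `volume * reliability` are illustrative choices, not a prescribed formula. The time dimension of a moving average is also left out here.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Quote:
    """One venue's price report (hypothetical structure)."""
    source: str
    price: float
    volume: float       # recent traded volume on the venue
    reliability: float  # hypothetical historical score in [0, 1]


def weighted_price(quotes: list[Quote]) -> float:
    """Cross-sectional average weighting each source by volume * reliability."""
    weights = [q.volume * q.reliability for q in quotes]
    total = sum(weights)
    if total <= 0:
        raise ValueError("no source carries positive weight")
    return sum(w * q.price for w, q in zip(weights, quotes)) / total


quotes = [
    Quote("venue_a", 100.0, 900.0, 1.0),
    Quote("venue_b", 101.0, 100.0, 1.0),
    Quote("venue_c", 80.0, 5.0, 0.5),  # thin, less reliable venue
]
print(round(weighted_price(quotes), 4))  # 100.0499
```

The thin venue quoting 80.0 carries a weight of 2.5 out of 1002.5, so it shifts the result by only a few hundredths. A plain unweighted mean of the same three quotes would be about 93.7.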

| Method | Mechanism | Risk Mitigation |
| --- | --- | --- |
| Volume Weighting | Prioritizes high-liquidity sources | Reduces the impact of flash crashes on low-volume exchanges |
| Deviation Capping | Discards quotes that lie more than a set number of standard deviations from the aggregate | Prevents oracle poisoning and erratic price spikes |
| Time-Weighted Averaging | Smooths rapid price fluctuations | Protects against transient liquidity gaps |
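Deviation capping can be sketched as a filter around a central estimate. One substitution is stated plainly here: the sketch measures spread with the median absolute deviation (MAD), scaled to approximate a standard deviation, rather than with the ordinary standard deviation. With only a handful of sources, one poisoned quote inflates the ordinary standard deviation enough to hide itself. The function name and the cutoff `k = 5` are assumptions.

```python
import statistics


def cap_deviations(prices: list[float], k: float = 5.0) -> list[float]:
    """Keep prices within k robust standard deviations of the median.

    The MAD, scaled by 1.4826, stands in for the standard deviation so that
    a single outlier cannot widen the band enough to mask itself.
    """
    center = statistics.median(prices)
    mad = statistics.median([abs(p - center) for p in prices])
    scale = 1.4826 * mad  # matches the standard deviation for normally distributed data
    if scale == 0:
        # More than half the sources agree exactly; keep only the agreeing ones.
        return [p for p in prices if p == center]
    return [p for p in prices if abs(p - center) <= k * scale]


print(cap_deviations([100.0, 100.2, 99.9, 100.1, 150.0]))
# [100.0, 100.2, 99.9, 100.1]
```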

Effective normalization models apply statistical filters to raw exchange data to produce stable inputs for automated margin and liquidation engines.
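Time-weighted averaging, the third method in the table, weights each observed price by how long it was in effect. As a result, a brief liquidity gap contributes little to the output. This sketch assumes step interpolation, in which each price holds until the next sample. It also assumes timestamps in seconds. The `twap` function is illustrative.

```python
def twap(samples: list[tuple[float, float]], end_time: float) -> float:
    """Time-weighted average price from the first sample to end_time.

    `samples` are (timestamp_seconds, price) pairs sorted by time; each price is
    assumed to hold until the next sample arrives (step interpolation).
    """
    if not samples:
        raise ValueError("need at least one sample")
    boundaries = [t for t, _ in samples[1:]] + [end_time]
    weighted_sum = 0.0
    for (t0, price), t1 in zip(samples, boundaries):
        if t1 < t0:
            raise ValueError("samples must be time-ordered and end before end_time")
        weighted_sum += price * (t1 - t0)
    duration = end_time - samples[0][0]
    return weighted_sum / duration if duration > 0 else samples[-1][1]


# A one-second wick to 70.0 inside a 60-second window.
window = [(0.0, 100.0), (50.0, 100.0), (51.0, 70.0), (52.0, 100.0)]
print(twap(window, end_time=60.0))  # 99.5
```

The 30-point wick lasts one second out of sixty, so it moves the average by only 0.5.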

The interaction between the normalization layer and the margin engine effectively sets the protocol’s risk appetite. If the feed is too slow, positions can fall below their maintenance margin before the feed registers the move, which exposes the protocol to insolvency during rapid drawdowns. If the feed is too reactive, transient spikes trigger frequent, unnecessary liquidations.

This balance requires precise tuning of the update frequency and the sensitivity parameters governing the data aggregation algorithm.
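A single smoothing parameter makes this trade-off concrete. The sketch uses an exponential moving average as a simple stand-in for whatever filter a given protocol actually runs. The liquidation price and both price series are hypothetical.

```python
def ema(prices: list[float], alpha: float) -> list[float]:
    """Exponential moving average; larger alpha reacts faster, smaller alpha smooths more."""
    if not 0 < alpha <= 1:
        raise ValueError("alpha must be in (0, 1]")
    smoothed = [prices[0]]
    for p in prices[1:]:
        smoothed.append(alpha * p + (1 - alpha) * smoothed[-1])
    return smoothed


LIQUIDATION_PRICE = 85.0  # hypothetical trigger for a leveraged long
wick = [100.0, 100.0, 70.0, 100.0, 100.0]  # transient spike down
crash = [100.0, 100.0, 80.0, 80.0, 80.0]   # genuine repricing

for alpha in (0.8, 0.2):
    hit_wick = min(ema(wick, alpha)) <= LIQUIDATION_PRICE
    hit_crash = min(ema(crash, alpha)) <= LIQUIDATION_PRICE
    print(alpha, hit_wick, hit_crash)
# 0.8 True True    (reactive: catches the crash but also liquidates on the wick)
# 0.2 False False  (smooth: ignores the wick but still lags the real drop)
```

Neither setting is correct in isolation. The right `alpha` depends on how the protocol weighs bad debt from a lagging feed against user losses from spurious liquidations.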

Approach

Current implementations of Data Feed Normalization utilize multi-node decentralized oracle networks to achieve censorship resistance and data integrity. These networks operate by polling multiple independent nodes, each fetching data from a diverse set of centralized and decentralized exchanges. The resulting dataset undergoes aggregation via a consensus-based approach, often involving a median-of-medians calculation to ensure that a small subset of compromised sources cannot dictate the final price.
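The median-of-medians aggregation works in two stages. Each node takes the median of the exchange prices it fetched, and the network then takes the median across node results. This is the oracle-aggregation sense of the term, not the linear-time selection algorithm of the same name. Node names and prices below are hypothetical.

```python
import statistics


def median_of_medians(node_reports: dict[str, list[float]]) -> float:
    """Median of each node's own median over the exchanges it polled."""
    node_medians = [statistics.median(p) for p in node_reports.values() if p]
    if not node_medians:
        raise ValueError("no usable node reports")
    return statistics.median(node_medians)


reports = {
    "node1": [100.0, 100.2, 99.8],
    "node2": [100.1, 99.9, 100.3],
    "node3": [99.9, 100.0, 250.0],      # one bad exchange feed
    "node4": [100.2, 100.0, 100.1],
    "node5": [1000.0, 1000.0, 1000.0],  # compromised node
}
print(median_of_medians(reports))  # 100.1
```

A bad exchange is absorbed at the first stage and a compromised node at the second. With five nodes, the output stays within the range of honest values as long as no more than two node medians are corrupted.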

- Data Ingestion involves fetching raw order book data from multiple global exchanges.

- Normalization standardizes timestamps, asset pairs, and units of measure into a common format.

- Consensus Aggregation applies statistical models to determine the final, verifiable price output.
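The normalization step in the list above can be sketched as mapping each venue's raw quote onto one canonical record and then dropping stale data. Several elements are hypothetical: the `RawQuote` fields, the ticker alias table, the integer price-scale convention and the 5-second staleness cutoff. Real venues differ in the details.

```python
from dataclasses import dataclass

ALIASES = {"XBT": "BTC"}  # hypothetical venue-specific ticker aliases


@dataclass(frozen=True)
class RawQuote:
    venue: str
    symbol: str          # venue format, e.g. "XBT/USD" or "btc-usd"
    price: float         # in units of 10**-price_scale of the quote currency
    price_scale: int     # 0 = whole units, 2 = cents
    timestamp: float
    timestamp_unit: str  # "s" or "ms"


@dataclass(frozen=True)
class NormalizedQuote:
    venue: str
    pair: str     # canonical "BASE/QUOTE"
    price: float  # quote currency per unit of base
    ts: float     # seconds since the Unix epoch


def normalize(q: RawQuote) -> NormalizedQuote:
    """Canonicalize pair symbol, price units and timestamp units."""
    base, quote = q.symbol.upper().replace("-", "/").replace("_", "/").split("/")
    pair = f"{ALIASES.get(base, base)}/{ALIASES.get(quote, quote)}"
    if q.timestamp_unit == "ms":
        ts = q.timestamp / 1000
    elif q.timestamp_unit == "s":
        ts = q.timestamp
    else:
        raise ValueError(f"unknown timestamp unit: {q.timestamp_unit}")
    return NormalizedQuote(q.venue, pair, q.price / 10**q.price_scale, ts)


def fresh(quotes: list[NormalizedQuote], now: float, max_age_s: float = 5.0) -> list[NormalizedQuote]:
    """Drop quotes older than max_age_s or timestamped in the future."""
    return [q for q in quotes if 0 <= now - q.ts <= max_age_s]


raw = [
    RawQuote("venue_a", "XBT/USD", 6_500_000, 2, 1_700_000_000_000, "ms"),
    RawQuote("venue_b", "btc-usd", 65_010.5, 0, 1_699_999_998, "s"),
    RawQuote("venue_c", "BTC_USD", 64_990.0, 0, 1_699_999_980, "s"),  # stale
]
usable = fresh([normalize(q) for q in raw], now=1_700_000_001)
print([(q.venue, q.pair, q.price) for q in usable])
# [('venue_a', 'BTC/USD', 65000.0), ('venue_b', 'BTC/USD', 65010.5)]
```

The staleness filter is a minimal form of the timestamp synchronization discussed under Origin. Quotes that cannot be placed in the current time window never reach aggregation.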

The technical challenge lies in the trade-off between update frequency and computational cost. Frequent updates improve accuracy but increase the gas burden on the underlying blockchain. Many protocols address this with off-chain aggregation. A trusted set of validators normalizes and signs the data off-chain, and the result is then pushed to the blockchain as a single transaction whose signatures can be verified on-chain.
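Push-based feeds commonly balance update frequency against gas cost with a two-part rule. They write on-chain when the price moves beyond a deviation threshold, or when a maximum interval (a "heartbeat") has elapsed since the last write. The function name and its default thresholds below are hypothetical. Signing and on-chain verification are omitted.

```python
def should_push(last_price: float, last_push_time: float,
                current_price: float, now: float,
                deviation_bps: float = 50.0, heartbeat_s: float = 3600.0) -> bool:
    """Decide whether a new aggregate is worth an on-chain transaction."""
    # Heartbeat: refresh periodically so the on-chain value never exceeds heartbeat_s in age.
    if now - last_push_time >= heartbeat_s:
        return True
    # Deviation: push early only if the move, in basis points, is large enough to matter.
    move_bps = abs(current_price - last_price) / last_price * 10_000
    return move_bps >= deviation_bps


print(should_push(100.0, 0.0, 100.3, now=60.0))    # False: 30 bps, heartbeat not due
print(should_push(100.0, 0.0, 100.6, now=60.0))    # True: 60 bps move
print(should_push(100.0, 0.0, 100.0, now=3600.0))  # True: heartbeat elapsed
```

Tightening `deviation_bps` buys precision at the price of more transactions. The heartbeat bounds how stale the on-chain value can become in a quiet market.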

This hybrid approach optimizes for both precision and operational efficiency.

Evolution

The progression of Data Feed Normalization mirrors the increasing complexity of derivative instruments. Initially, simple linear averages sufficed for basic spot tracking. However, the introduction of cross-margining and complex option strategies demanded higher-fidelity data.

Modern systems now incorporate volatility-aware weighting, where the feed itself adjusts its sensitivity based on the current market environment.

Adaptive normalization techniques dynamically adjust sensitivity parameters based on real-time market volatility to maintain systemic stability.
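Volatility-aware weighting can be sketched by letting realized volatility set the smoothing weight on new data. The direction is an assumption: this sketch reacts faster when volatility rises, which favors protection against lag. Smoothing more in turbulent markets is an equally defensible policy when noise is the larger risk. The linear ramp and every default parameter are hypothetical.

```python
import math
import statistics


def realized_vol(prices: list[float]) -> float:
    """Population standard deviation of per-sample log returns (not annualized)."""
    returns = [math.log(b / a) for a, b in zip(prices, prices[1:])]
    return statistics.pstdev(returns) if len(returns) > 1 else 0.0


def adaptive_alpha(prices: list[float], base_alpha: float = 0.2,
                   max_alpha: float = 0.9, vol_ref: float = 0.01) -> float:
    """Raise the smoothing weight on new data as realized volatility rises.

    Hypothetical linear ramp: base_alpha at zero volatility, max_alpha once
    per-sample volatility reaches vol_ref.
    """
    ramp = min(realized_vol(prices) / vol_ref, 1.0)
    return base_alpha + (max_alpha - base_alpha) * ramp


calm = [100.0, 100.01, 100.0, 100.02]
turbulent = [100.0, 103.0, 99.0, 104.0, 98.0]
print(round(adaptive_alpha(calm), 3), round(adaptive_alpha(turbulent), 3))  # 0.209 0.9
```

The resulting `alpha` could drive an exponential moving average of the aggregate price, recomputed over a rolling window.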

This shift represents a transition from static to dynamic data governance. Protocol architects are increasingly designing systems that automatically adjust their reliance on specific data sources based on real-time performance metrics. If a specific exchange begins to exhibit anomalous behavior, the normalization engine detects the deviation and automatically down-weights or excludes that source, maintaining the integrity of the aggregate feed without requiring manual intervention.
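The automatic down-weighting described above can be sketched as a per-source reliability score. The score decays when a source disagrees with the aggregate and recovers slowly when it agrees again. Sources below a floor are excluded until they recover. Scores like these could serve as the historical-reliability weights mentioned in the Theory section. The tolerance, decay, recovery and floor values are hypothetical.

```python
def update_reliability(scores: dict[str, float], quotes: dict[str, float],
                       aggregate: float, tolerance: float = 0.005,
                       decay: float = 0.5, recovery: float = 0.1) -> dict[str, float]:
    """One round of reliability updates; every parameter here is hypothetical."""
    updated = {}
    for source, score in scores.items():
        price = quotes.get(source)
        if price is None or abs(price - aggregate) / aggregate > tolerance:
            updated[source] = score * decay               # missing or anomalous: multiply by decay
        else:
            updated[source] = min(1.0, score + recovery)  # in line: recover gradually, capped at 1
    return updated


def active_sources(scores: dict[str, float], floor: float = 0.2) -> list[str]:
    """Sources whose reliability is still high enough to be included."""
    return [s for s, v in scores.items() if v >= floor]


scores = {"venue_a": 1.0, "venue_b": 1.0, "venue_c": 1.0}
round_quotes = {"venue_a": 100.0, "venue_b": 100.1, "venue_c": 103.0}  # venue_c ~3% high
for _ in range(3):
    scores = update_reliability(scores, round_quotes, aggregate=100.1)
print(scores, active_sources(scores))
# {'venue_a': 1.0, 'venue_b': 1.0, 'venue_c': 0.125} ['venue_a', 'venue_b']
```

Because recovery is gradual rather than immediate, a source that misbehaves intermittently cannot regain full weight after a single good round.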

One might observe that this mirrors the transition from rigid mechanical clocks to self-correcting atomic oscillators. There, precision stops being a fixed property of the mechanism and becomes the product of continuous, automated calibration against an external reference. Looking further ahead, the normalization layer may integrate predictive modeling, accounting for anticipated volatility spikes before they fully manifest in order book data.

Horizon

The future of Data Feed Normalization lies in the convergence of high-frequency trading data and decentralized governance. We anticipate the rise of proof-of-stake oracle networks that pair cryptographic proofs of data provenance and delivery latency with staked collateral that can be slashed for inaccurate reports.

These networks will likely integrate directly with order flow information, allowing protocols to anticipate liquidity shocks rather than merely reacting to them.

| Future Development | Impact |
| --- | --- |
| Cryptographic Latency Proofs | Verifies speed and reliability of data delivery |
| Order Flow Integration | Enables predictive margin and risk assessment |
| Cross-Chain Normalization | Unifies pricing across disparate blockchain ecosystems |

The ultimate goal is the creation of a global, permissionless, and tamper-proof price reference for every tradable asset. As decentralized derivatives continue to capture market share, the normalization layer will become the primary determinant of protocol resilience. Success will be defined by the ability to maintain accurate, low-latency price signals across increasingly complex and interconnected financial environments, ensuring that decentralized markets remain robust against both technical failure and adversarial manipulation.