Essence

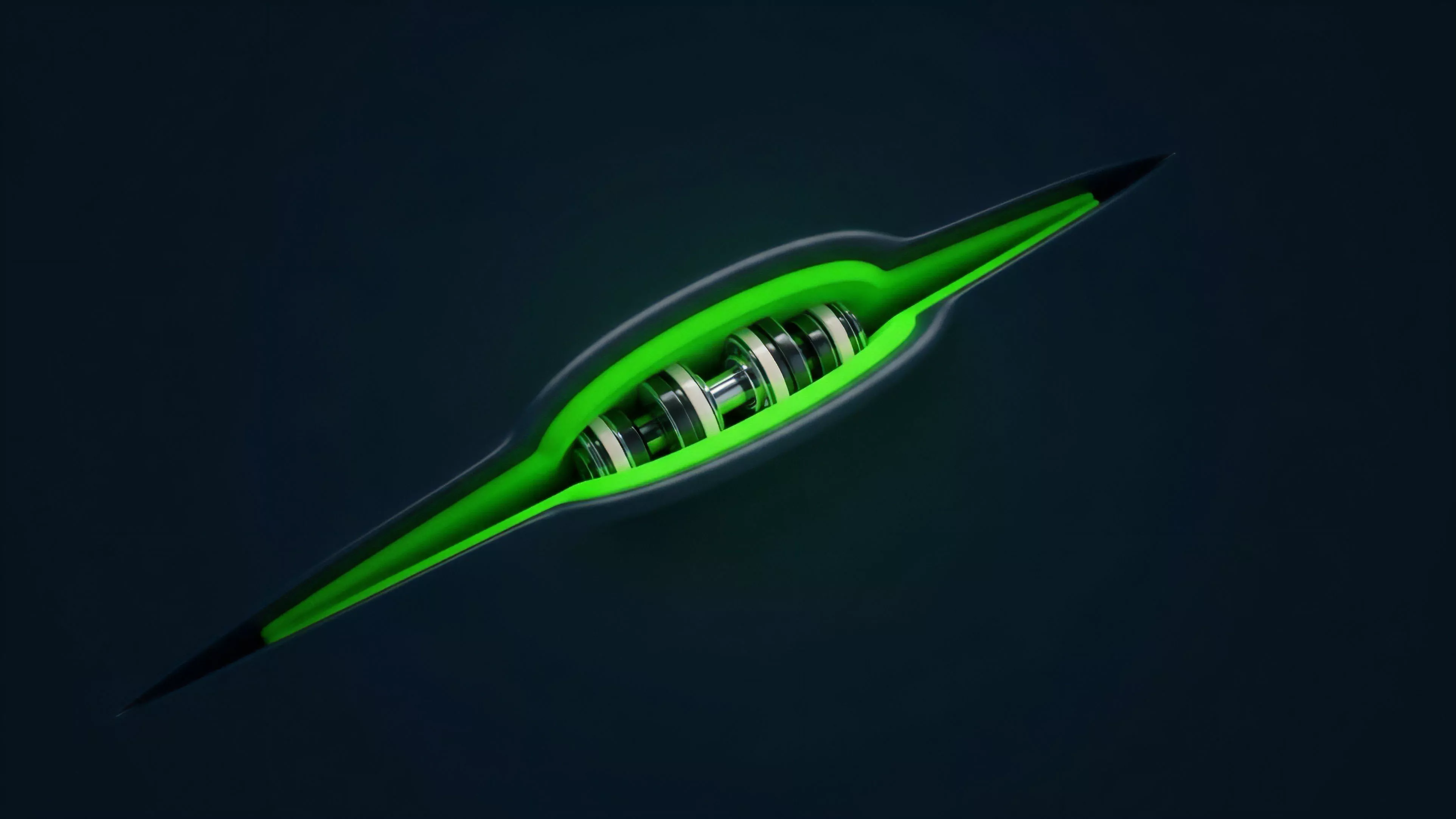

Network Resource Optimization represents the programmatic allocation of computation, network bandwidth, storage capacity, and validator stake to maximize throughput and minimize latency within decentralized derivatives protocols. It functions as the metabolic engine of on-chain finance, ensuring that complex margin calculations, liquidation triggers, and order book updates execute promptly and settle with deterministic finality.

Network Resource Optimization serves as the structural foundation for bringing decentralized venues to performance parity with centralized high-frequency trading.

This concept transcends simple server load balancing. It addresses the inherent scarcity of block space and the economic cost of computation in permissionless ledgers. By abstracting the underlying hardware requirements into tradeable or programmable units, protocols align the incentives of node operators with the performance demands of sophisticated market participants.

Origin

The genesis of Network Resource Optimization resides in the early realization that decentralized settlement layers possess severe throughput limitations compared to centralized matching engines.

Developers observed that standard consensus mechanisms often prioritize security at the expense of the rapid execution required for complex derivative instruments.

- EIP-1559 replaced Ethereum's first-price gas auction with an algorithmically adjusted base fee plus a priority tip, establishing the reference model for dynamic, protocol-level fee markets that price and prioritize resource consumption.

- Off-chain computation models introduced the possibility of decoupling execution from settlement, relieving the base layer without compromising its integrity.

- Layer 2 rollups shifted the focus toward batching transactions, thereby increasing the effective utility of the underlying base layer resources.
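To make the fee-market mechanism concrete, the following Python sketch implements the base-fee update rule from the EIP-1559 specification, using its constants `BASE_FEE_MAX_CHANGE_DENOMINATOR = 8` and `ELASTICITY_MULTIPLIER = 2`. It omits the fork-block special case and the priority tip, and the function name `next_base_fee` is illustrative rather than taken from any client implementation. The 30M gas limit in the usage lines is an example value.

```python
# Constants defined in the EIP-1559 specification.
BASE_FEE_MAX_CHANGE_DENOMINATOR = 8
ELASTICITY_MULTIPLIER = 2
GWEI = 10**9


def next_base_fee(parent_base_fee: int, parent_gas_used: int, parent_gas_limit: int) -> int:
    """Base fee (in wei) for the next block under the EIP-1559 update rule."""
    gas_target = parent_gas_limit // ELASTICITY_MULTIPLIER
    if parent_gas_used == gas_target:
        return parent_base_fee
    if parent_gas_used > gas_target:
        gas_used_delta = parent_gas_used - gas_target
        # Demand above target: raise the fee by at most 1/8, and by at least 1 wei.
        delta = max(parent_base_fee * gas_used_delta // gas_target // BASE_FEE_MAX_CHANGE_DENOMINATOR, 1)
        return parent_base_fee + delta
    gas_used_delta = gas_target - parent_gas_used
    # Demand below target: lower the fee by at most 1/8.
    delta = parent_base_fee * gas_used_delta // gas_target // BASE_FEE_MAX_CHANGE_DENOMINATOR
    return parent_base_fee - delta


print(next_base_fee(100 * GWEI, 30_000_000, 30_000_000))  # full block: 112500000000
print(next_base_fee(100 * GWEI, 0, 30_000_000))           # empty block: 87500000000
```

Because each block can move the fee by at most one eighth, sustained demand raises the price of block space geometrically rather than all at once, which is what makes the fee predictable enough for automated strategies to budget against.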

These historical developments shifted the discourse from decentralization as an end in itself toward functional efficiency. The transition highlights the need to manage state growth and transaction volume if the network is to remain a viable environment for financial applications.

Theory

The architecture of Network Resource Optimization rests on the principle of maximizing capital velocity through reduced computational overhead. It treats block space as a finite, high-value commodity subject to auction-based distribution and algorithmic scheduling.
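As a minimal illustration of auction-based allocation, the sketch below fills a block greedily with the transactions bidding the highest priority fee per unit of gas until the gas limit is reached. The `Tx` type, its fields, and `pack_block` are hypothetical. Real block builders also account for bundles, nonce ordering, and MEV, and greedy packing is a heuristic, not an optimal solution to the underlying knapsack problem.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tx:
    tx_id: str
    gas: int          # gas the transaction consumes
    tip_per_gas: int  # priority fee bid per unit of gas


def pack_block(mempool: list[Tx], gas_limit: int) -> list[Tx]:
    """Greedily select the highest-bidding transactions that fit in the block."""
    selected: list[Tx] = []
    remaining = gas_limit
    # Highest bid first; ties broken by id so the result is deterministic.
    for tx in sorted(mempool, key=lambda t: (-t.tip_per_gas, t.tx_id)):
        if tx.gas <= remaining:
            selected.append(tx)
            remaining -= tx.gas
    return selected


mempool = [Tx("a", 50_000, 3), Tx("b", 120_000, 5), Tx("c", 80_000, 1), Tx("d", 60_000, 4)]
print([tx.tx_id for tx in pack_block(mempool, gas_limit=200_000)])  # ['b', 'd']
```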

Protocol Physics

The interplay between consensus latency and margin engine updates defines the performance ceiling of any derivative protocol. When a system reaches its throughput limit, the resulting congestion induces systemic risk, specifically regarding the timing of liquidations.

| Parameter | Optimized State | Congested State |
|---|---|---|
| Transaction Latency | Deterministic | Stochastic |
| Liquidation Accuracy | Precise | Delayed |
| Systemic Risk | Contained | Propagating |
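The congested column can be illustrated with a simple deterministic queue model. In the sketch below, a hypothetical `inclusion_delay` function counts how many extra blocks a liquidation waits in a first-in, first-out queue when demand exceeds per-block capacity. The arrival counts and capacity are made-up values chosen for illustration, not measurements.

```python
def inclusion_delay(arrivals: list[int], capacity: int, submit_block: int) -> int:
    """Extra blocks a transaction waits in a FIFO queue before inclusion.

    arrivals[i]: competing transactions submitted during block i.
    capacity:    transactions that fit in one block.
    The transaction of interest is submitted during `submit_block` and queued
    behind that block's other arrivals (a pessimistic assumption).
    """
    backlog = 0
    for i in range(submit_block):
        # Demand beyond capacity spills over into the next block.
        backlog = max(backlog + arrivals[i] - capacity, 0)
    position = backlog + arrivals[submit_block] + 1  # 1-based queue position
    return (position - 1) // capacity


print(inclusion_delay([80] * 4, capacity=100, submit_block=3))   # calm: 0
print(inclusion_delay([150] * 4, capacity=100, submit_block=3))  # congested: 3
```

Under sustained demand of 150 transactions per block against a capacity of 100, the backlog grows by 50 each block, so a liquidation submitted later in the episode waits longer than one submitted earlier.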

Effective resource management requires the precise synchronization of state updates with the volatile nature of derivative price feeds.

Adversarial agents constantly probe these systems, seeking to exploit temporary resource exhaustion to prevent timely margin calls. Therefore, robust protocols implement circuit breakers and dynamic priority queues so that critical risk management operations take precedence over routine trading activity. Sometimes the most efficient solution is to move the state entirely off-chain, leaving only the proof of settlement on the primary ledger: a subtle shift in the definition of what constitutes a truly decentralized market.
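A dynamic priority queue with a circuit breaker can be sketched in a few lines. The priority classes in `PRIORITY`, the `RiskAwareQueue` class, and the `trading_halted` flag are hypothetical names for illustration; the sketch only shows the ordering idea, namely that liquidations and margin updates are always served before routine orders, and that routine orders are held back entirely while the breaker is tripped.

```python
import heapq
import itertools

# Hypothetical priority classes: a lower number is served first.
PRIORITY = {"liquidation": 0, "margin_update": 1, "order": 2}


class RiskAwareQueue:
    """Serves risk-management operations before routine trading activity."""

    def __init__(self) -> None:
        self._heap: list[tuple[int, int, str, str]] = []
        self._seq = itertools.count()  # tie-breaker: FIFO order within a class

    def submit(self, kind: str, payload: str) -> None:
        heapq.heappush(self._heap, (PRIORITY[kind], next(self._seq), kind, payload))

    def next_block(self, capacity: int, trading_halted: bool = False) -> list[str]:
        """Pop up to `capacity` operations for the next block."""
        batch: list[str] = []
        while self._heap and len(batch) < capacity:
            # Orders have the lowest priority, so once one reaches the top of the
            # heap everything left is an order; hold them while the breaker is on.
            if trading_halted and self._heap[0][2] == "order":
                break
            _, _, kind, payload = heapq.heappop(self._heap)
            batch.append(f"{kind}:{payload}")
        return batch


q = RiskAwareQueue()
q.submit("order", "buy-1")
q.submit("liquidation", "acct-7")
q.submit("order", "sell-2")
q.submit("margin_update", "acct-3")
print(q.next_block(capacity=10, trading_halted=True))  # ['liquidation:acct-7', 'margin_update:acct-3']
print(q.next_block(capacity=10))                       # ['order:buy-1', 'order:sell-2']
```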

Approach

Current implementation strategies focus on isolating high-intensity processes from the main consensus loop.

Market makers and institutional participants now leverage specialized infrastructure to minimize their distance from the matching engine, reducing the physical distance and computational hops required for order submission.

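- Proposer Builder Separation decouples block proposal from block construction: specialized builders select and order transactions, while validators choose among the finished blocks, concentrating resource management in dedicated infrastructure.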

- State Rent models attempt to align the cost of storage with the actual network burden imposed by long-term data retention.

- Zero Knowledge Proofs enable the verification of complex derivative states without requiring the entire network to recompute every transaction.
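One way a state rent model could price long-term data retention is to charge each stored entry in proportion to its size and the time it occupies state, evicting it when its prepaid balance runs out. The sketch below is a hypothetical model under exactly that assumption; `StorageAccount`, `charge_rent`, and the per-byte-per-epoch rate are illustrative and do not describe any specific network's rent schedule.

```python
from dataclasses import dataclass


@dataclass
class StorageAccount:
    size_bytes: int       # state the entry occupies
    balance: int          # prepaid rent, in the smallest fee unit
    last_paid_epoch: int  # epoch up to which rent has been settled


def charge_rent(account: StorageAccount, current_epoch: int, rate_per_byte_epoch: int) -> bool:
    """Deduct rent owed since the last payment; return False if the entry should be evicted."""
    epochs = current_epoch - account.last_paid_epoch
    owed = account.size_bytes * rate_per_byte_epoch * epochs
    if owed > account.balance:
        return False  # cannot cover its storage burden: candidate for eviction
    account.balance -= owed
    account.last_paid_epoch = current_epoch
    return True


acct = StorageAccount(size_bytes=1_000, balance=50_000, last_paid_epoch=0)
print(charge_rent(acct, current_epoch=10, rate_per_byte_epoch=2), acct.balance)  # True 30000
print(charge_rent(acct, current_epoch=30, rate_per_byte_epoch=2), acct.balance)  # False 30000
```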

This shift toward specialized execution environments mirrors the evolution of traditional high-frequency trading venues. Participants must now account for the cost of computational priority as a distinct component of their overall trading strategy, acknowledging that execution speed is as vital as price discovery.
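Treating computational priority as a cost component can be made explicit with a short calculation. The sketch below applies the EIP-1559 effective gas price rule (base fee plus tip, capped at the sender's maximum fee) and adds the resulting fee to a slippage estimate. The function names, the slippage figure, the gas usage, and the ETH price are hypothetical inputs chosen for illustration.

```python
WEI_PER_ETH = 10**18
GWEI = 10**9


def effective_gas_price(base_fee: int, max_fee: int, max_priority_fee: int) -> int:
    """Per-gas price paid by an EIP-1559 transaction (all values in wei)."""
    if max_fee < base_fee:
        raise ValueError("not includable: max_fee is below the current base fee")
    # The tip is capped so that base fee plus tip never exceeds max_fee.
    return min(max_fee, base_fee + max_priority_fee)


def execution_cost_usd(notional_usd: float, slippage_bps: float,
                       gas_used: int, gas_price_wei: int, eth_usd: float) -> float:
    """All-in cost of a trade: price slippage plus the fee paid for block space."""
    slippage_cost = notional_usd * slippage_bps / 10_000
    gas_cost = gas_used * gas_price_wei / WEI_PER_ETH * eth_usd
    return slippage_cost + gas_cost


price = effective_gas_price(base_fee=25 * GWEI, max_fee=40 * GWEI, max_priority_fee=5 * GWEI)
cost = execution_cost_usd(100_000, slippage_bps=5, gas_used=200_000, gas_price_wei=price, eth_usd=2_000)
print(price // GWEI, round(cost, 2))  # 30 62.0
```

In this framing, raising the tip buys faster inclusion at a known gas cost, which a strategy can weigh against the slippage it expects to incur from a delayed fill.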

Evolution

The path from simple gas-guzzling smart contracts to sophisticated, resource-aware protocols has been marked by a constant struggle against state bloat and throughput bottlenecks. Early iterations relied on brute-force computational scaling, which ultimately proved unsustainable under heavy market stress.

The trajectory of protocol design indicates a move toward modular architectures where resource allocation is handled by specialized layers.

Modern frameworks utilize modularity to distribute the burden of computation. By separating data availability, execution, and settlement, these systems achieve a higher degree of resilience. This evolution demonstrates a maturing understanding of the trade-offs between absolute decentralization and the practical requirements of financial infrastructure.
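When execution moves to a separate layer, the settlement layer verifies batches rather than individual transactions, so the fixed cost of posting and verifying a batch is shared across everything in it. The sketch below shows that amortization under a deliberately simple model: the overhead and per-transaction data costs are hypothetical numbers, and `per_tx_settlement_cost` is an illustrative name rather than any rollup's fee formula.

```python
def per_tx_settlement_cost(batch_overhead_gas: int, per_tx_data_gas: int, batch_size: int) -> float:
    """Amortized base-layer gas per transaction when one batch carries `batch_size` transactions."""
    if batch_size <= 0:
        raise ValueError("batch_size must be positive")
    # The fixed batch cost is shared; each transaction still pays for its own data.
    return batch_overhead_gas / batch_size + per_tx_data_gas


for size in (1, 10, 100, 1_000):
    print(size, per_tx_settlement_cost(batch_overhead_gas=200_000, per_tx_data_gas=300, batch_size=size))
```

The per-transaction cost falls toward the data floor as batches grow, which is why data availability, rather than execution, tends to become the binding resource in modular designs.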

Horizon

Future developments in Network Resource Optimization will likely center on predictive resource scheduling. Protocols will employ machine learning models to anticipate periods of high volatility and preemptively adjust gas prices or batching parameters to prevent system-wide saturation.
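The core loop of predictive scheduling (forecast demand, then adjust parameters before saturation) can be sketched without machine learning. The example below swaps in an exponentially weighted moving average as a simple stand-in for the models described above, and maps forecast utilization to a batch size. `DemandForecaster`, `choose_batch_size`, the smoothing factor, and the linear scaling policy are all hypothetical choices for illustration.

```python
class DemandForecaster:
    """Exponentially weighted moving average (EWMA) of per-block demand."""

    def __init__(self, alpha: float, initial: float) -> None:
        if not 0.0 < alpha <= 1.0:
            raise ValueError("alpha must lie in (0, 1]")
        self.alpha = alpha  # weight given to the newest observation
        self.estimate = initial

    def update(self, observed: float) -> float:
        self.estimate = self.alpha * observed + (1.0 - self.alpha) * self.estimate
        return self.estimate


def choose_batch_size(forecast: float, capacity: float, min_batch: int, max_batch: int) -> int:
    """Hypothetical policy: scale the batch size linearly with forecast utilization."""
    utilization = min(max(forecast / capacity, 0.0), 1.0)
    return min_batch + int((max_batch - min_batch) * utilization)


forecaster = DemandForecaster(alpha=0.5, initial=100.0)
for demand in (200.0, 400.0):  # demand starts to spike
    forecaster.update(demand)
print(forecaster.estimate)  # 275.0
print(choose_batch_size(forecaster.estimate, capacity=400.0, min_batch=10, max_batch=110))  # 78
```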

Systemic Implications

The ability to dynamically allocate resources will determine the long-term survival of decentralized derivative venues. Systems that fail to implement sophisticated optimization will face recurring periods of fragility, leading to liquidity migration toward more efficient architectures.

| Future Development | Impact |
|---|---|
| Predictive Scaling | Lower Latency |
| Autonomous Fee Markets | Stable Throughput |
| Hardware-Level Integration | Performance Parity |

The ultimate goal remains the creation of a financial layer that functions with the speed of traditional markets while retaining the transparency of open protocols. Success depends on the ability to treat network resources as a programmable variable rather than a static constraint.