Essence

Data Availability Sampling (DAS) represents a fundamental shift in how decentralized systems manage information integrity and scale. It addresses the core challenge of ensuring that all data required to reconstruct the blockchain state, specifically for Layer 2 rollups, is accessible to every participant without requiring them to download the entire dataset. The primary purpose of DAS is to decouple the cost of data storage from the cost of transaction execution, allowing Layer 2 solutions to scale while maintaining the security guarantees provided by the underlying Layer 1.

This mechanism transforms the economic and security landscape for decentralized financial applications. The financial significance of DAS stems from its impact on systemic risk and capital efficiency. In a rollup architecture, if the data for a batch of transactions is not available on the Layer 1, users cannot verify the state transition.

This creates a security vulnerability where malicious operators could potentially steal funds or prevent withdrawals. DAS provides a probabilistic guarantee that data is available, enabling light clients to verify data integrity with high confidence by sampling only small portions of the data. This reduces the operational cost for nodes and, critically, ensures the integrity of financial settlement for derivatives and other complex instruments operating on Layer 2s.

Data Availability Sampling lets participants confirm that off-chain transaction data has been published without each of them running a full node, securing the state transitions for Layer 2 protocols.

Origin

The concept of DAS originates from the “scalability trilemma,” which posits that a blockchain can only optimize for two of three properties: decentralization, security, and scalability. Early Layer 1 designs prioritized security and decentralization, leading to high transaction costs and limited throughput. The advent of rollups offered a path to scalability by executing transactions off-chain and posting compressed data back to the Layer 1.

This design introduced a new, critical challenge: the data availability problem. If a rollup operator withholds data, the Layer 1 cannot verify the state, and users cannot exit the system. The initial solutions to this problem involved either a “data committee” (which introduces centralization) or requiring full nodes to download all data (which limits scalability).

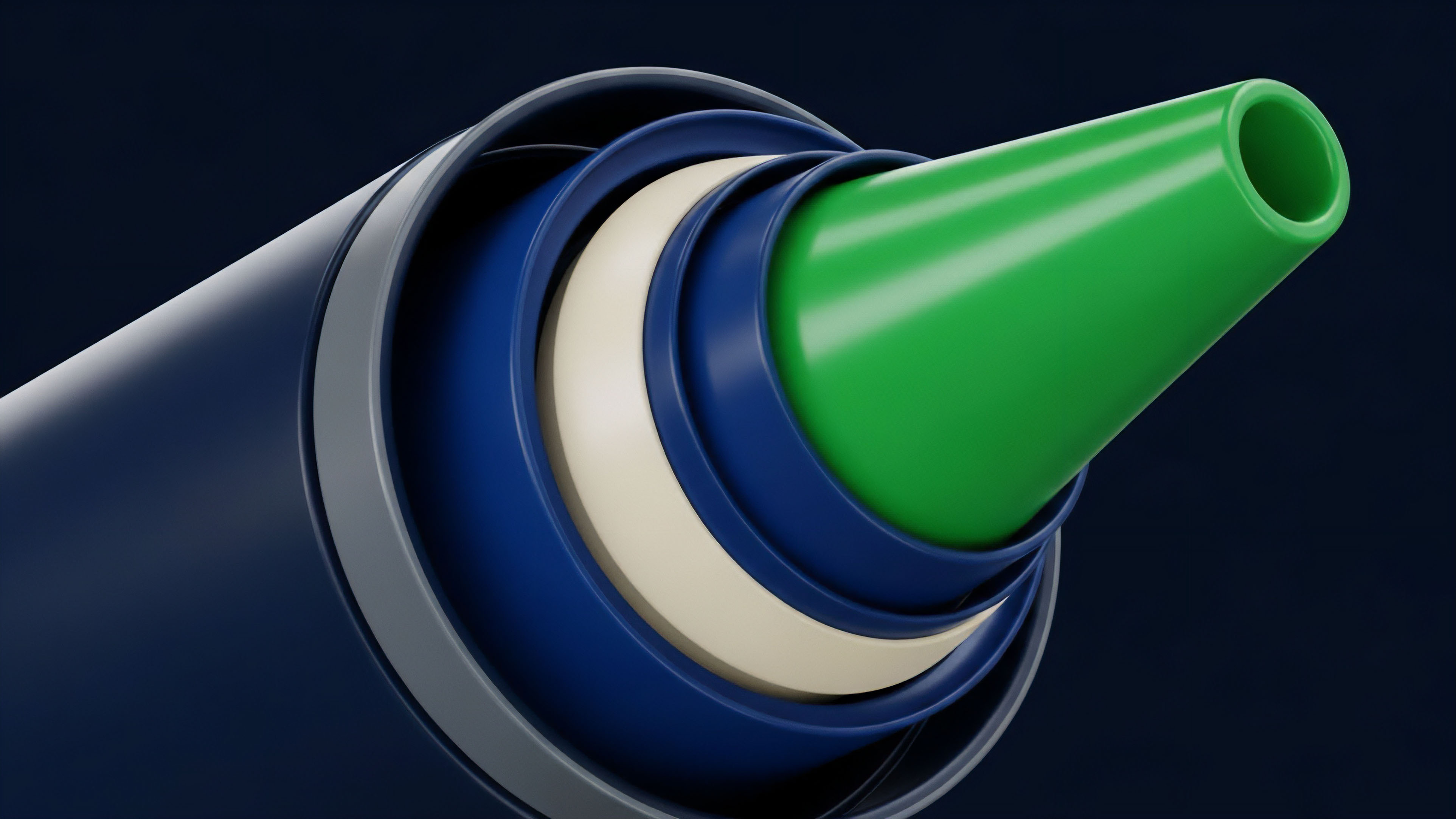

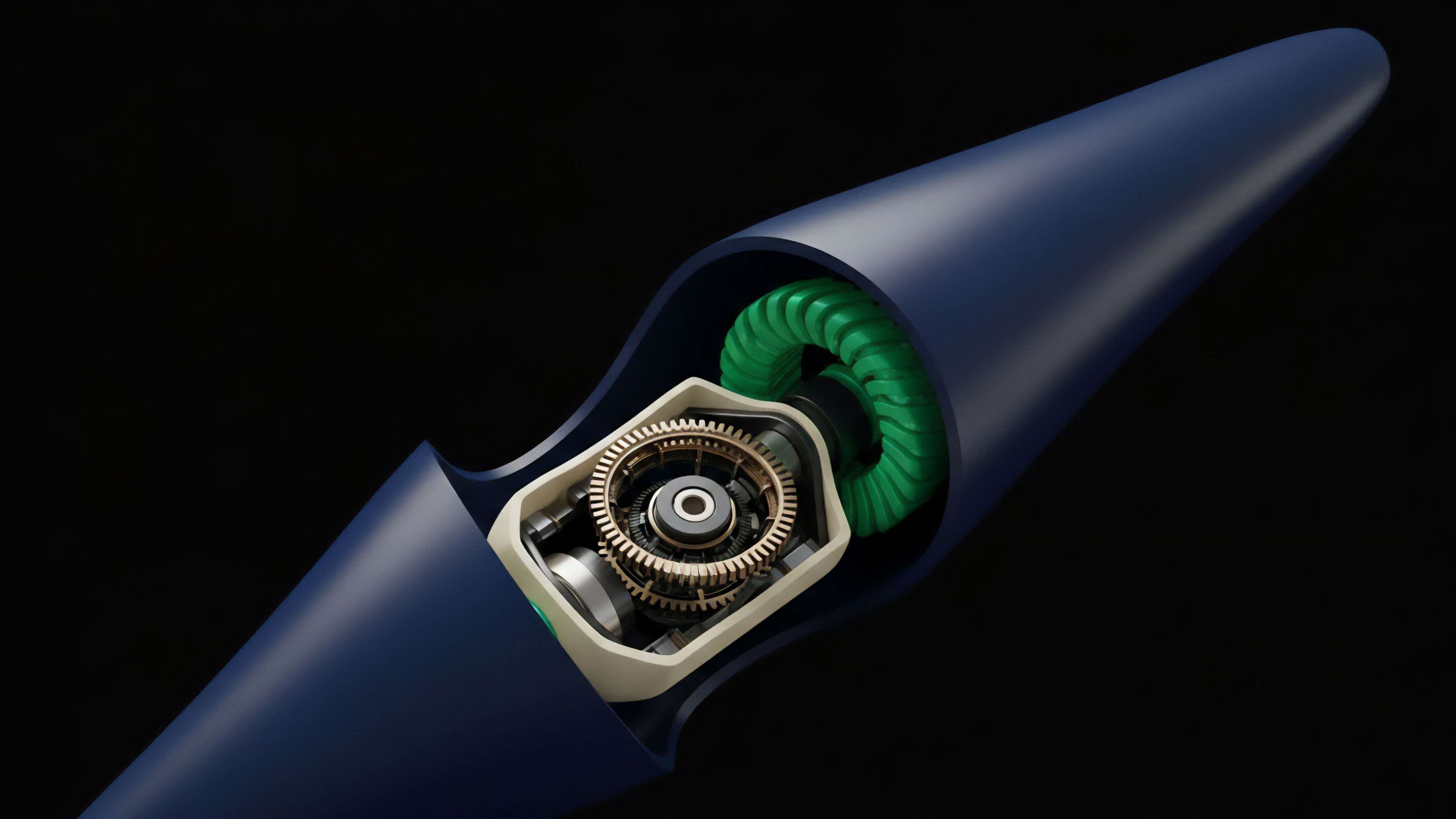

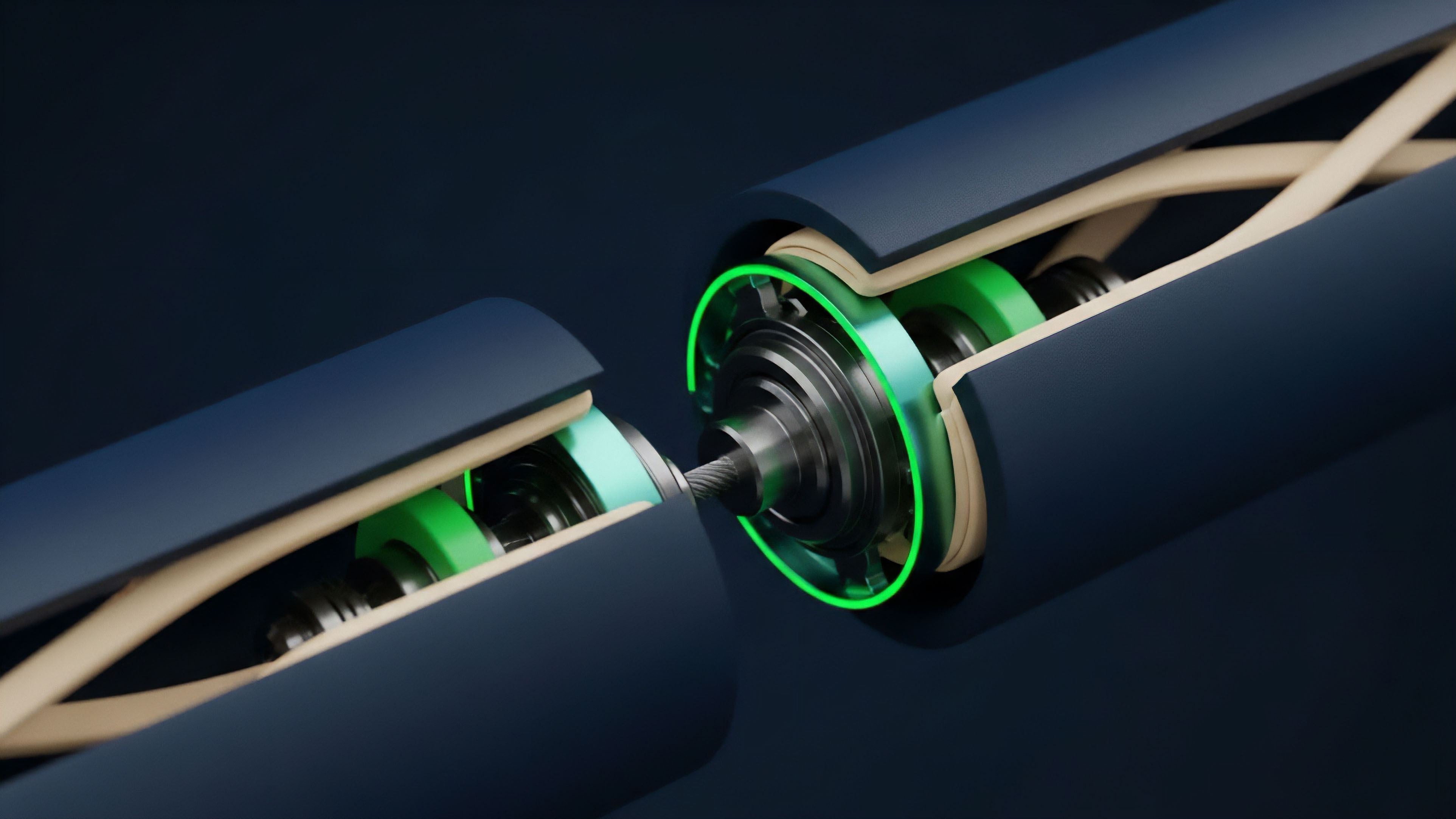

The breakthrough came from applying erasure coding, a concept from information theory. Erasure coding, specifically Reed-Solomon codes, allows for the reconstruction of a dataset from only a fraction of its parts. By encoding data with redundancy, DAS enables light clients to sample a small, random subset of data chunks.

If a sufficient number of samples pass verification, the system can assume with high probability that the entire dataset is available. This mathematical foundation allowed for the creation of modular blockchains where data availability is handled separately from execution.

Theory

The theoretical foundation of DAS rests on probabilistic verification and erasure coding.

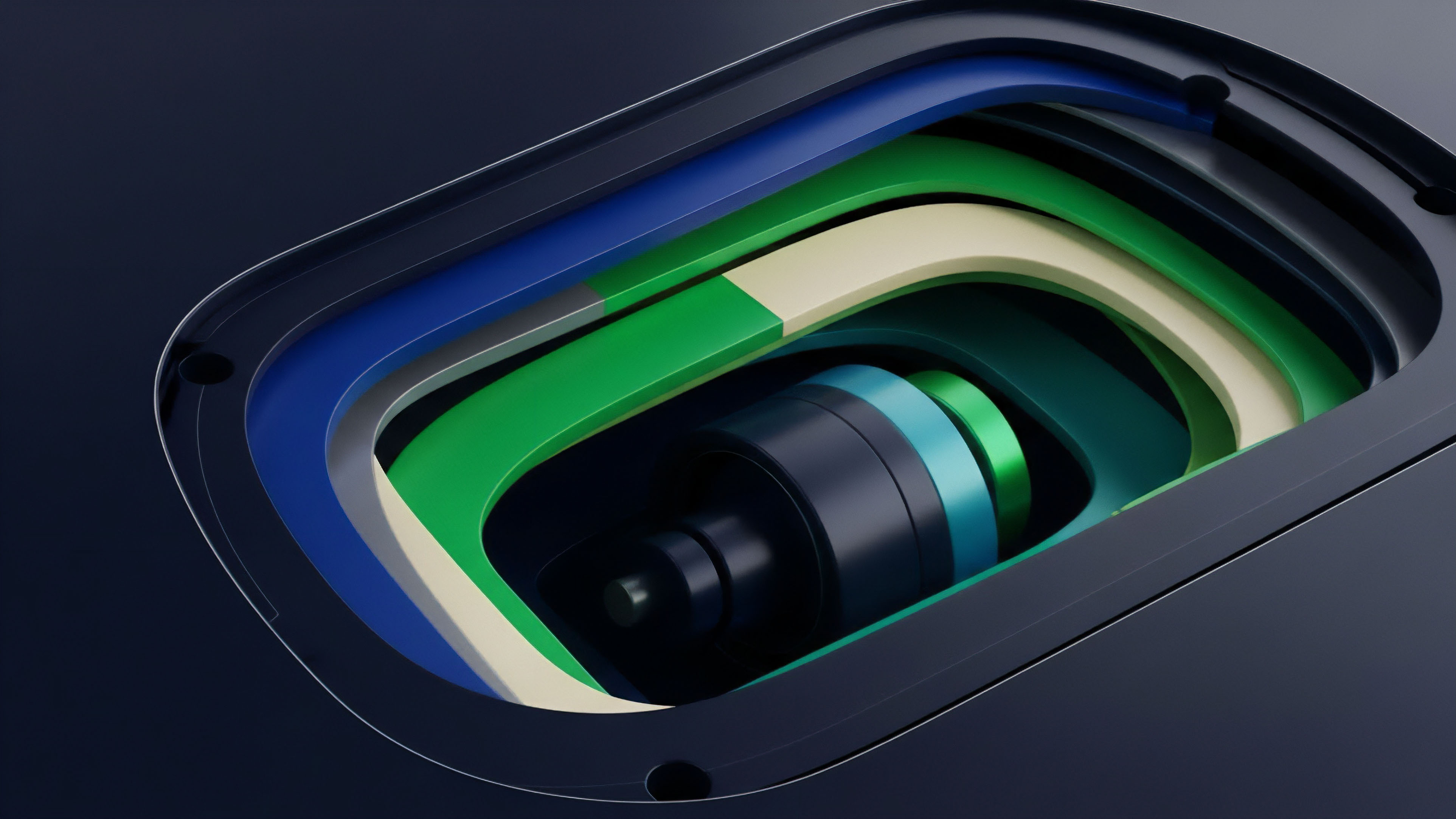

Erasure coding transforms a set of data chunks into a larger set of encoded chunks, where the original data can be recovered from any sufficiently large subset of the encoded chunks. For example, a dataset of 100 chunks might be expanded to 200 chunks such that any 100 of them are enough to rebuild the original. To verify data availability, light nodes randomly select and download a small number of these encoded chunks.
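A minimal sketch of the idea, assuming a toy Reed-Solomon-style code over a prime field: the data symbols are treated as values of a polynomial, extra evaluations of that polynomial are the redundant chunks, and any `k` of the `n` encoded symbols rebuild the original. The modulus `P` and the helper names `encode` and `recover` are illustrative choices, not taken from any production DAS implementation.

```python
# Toy Reed-Solomon-style erasure code over a prime field (illustrative only).
P = 2**31 - 1  # prime modulus chosen for this sketch; symbols must be < P


def lagrange_eval(points, x):
    """Evaluate at x the unique polynomial through `points` [(xi, yi), ...] mod P."""
    total = 0
    for i, (xi, yi) in enumerate(points):
        num, den = 1, 1
        for j, (xj, _) in enumerate(points):
            if i != j:
                num = num * (x - xj) % P
                den = den * (xi - xj) % P
        # pow(den, -1, P) is the modular inverse (Python 3.8+)
        total = (total + yi * num * pow(den, -1, P)) % P
    return total


def encode(data, n):
    """Extend k data symbols to n symbols; the first k are the data itself."""
    k = len(data)
    base = list(enumerate(data))  # data[i] is the polynomial's value at x = i
    return data + [lagrange_eval(base, x) for x in range(k, n)]


def recover(known, k):
    """Rebuild the k original symbols from any k (index, value) pairs."""
    assert len(known) >= k, "need at least k symbols to reconstruct"
    points = known[:k]
    return [lagrange_eval(points, x) for x in range(k)]


data = [104, 101, 108, 108, 111]                      # k = 5 original symbols
coded = encode(data, 10)                              # n = 10, a 2x expansion
survivors = [(i, coded[i]) for i in (1, 4, 6, 7, 9)]  # any 5 of the 10 will do
assert recover(survivors, 5) == data
```

With a 2x expansion, an operator who wants to make the data unrecoverable has to withhold more than half of the encoded symbols, which is what makes random sampling effective.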

The probability that a malicious actor who has withheld data goes undetected decreases exponentially with the number of samples taken, so the probability of detection approaches certainty quickly. The security guarantee provided by DAS is a probabilistic one, not absolute. A light node can still wrongly conclude that withheld data is available (a "false positive" for availability), but the chance of this error shrinks rapidly as more samples are taken.

The number of samples required to achieve a desired security level is determined by the specific erasure coding scheme and the number of total data chunks. This allows a protocol to define a specific security threshold based on the risk tolerance of the applications built upon it.
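As a worked bound, assume a one-dimensional code with $n$ encoded chunks of which any $k$ suffice for reconstruction, and a light node that draws $s$ distinct chunk indices uniformly at random. These symbols and the single-node setting are assumptions for illustration; deployed schemes (for example two-dimensional encodings) have different constants. To prevent reconstruction the adversary must leave at most $k-1$ chunks available, so the chance that every sample lands on an available chunk is:

```latex
P_{\text{miss}}(s)
  \;=\; \prod_{i=0}^{s-1} \frac{k-1-i}{\,n-i\,}
  \;\le\; \left(\frac{k-1}{n}\right)^{s}
  \;<\; 2^{-s}
  \qquad \text{when } n = 2k .
```

Under these assumptions, taking $s \ge \log_2(1/\varepsilon)$ samples is enough to push the miss probability below a target $\varepsilon$; for instance, 30 samples give a bound of $2^{-30}$, roughly one in a billion.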

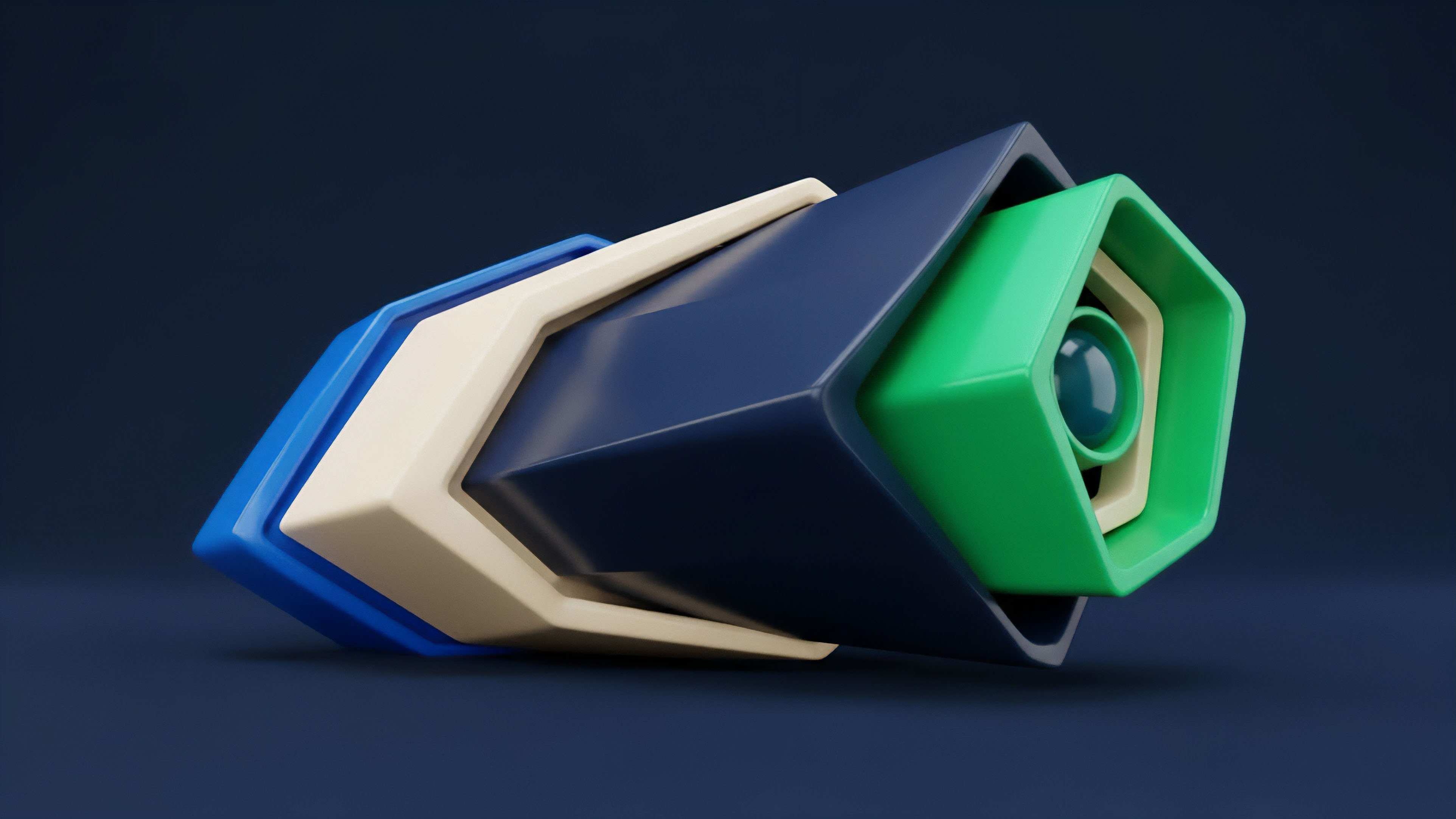

Erasure coding allows for data reconstruction from partial information, providing a mathematical basis for probabilistic data verification in DAS systems.

The core challenge in DAS implementation lies in managing the trade-off between security and efficiency. Increasing the number of samples taken by light nodes enhances security but increases network load and latency. Conversely, reducing the sampling rate improves efficiency but lowers the probability of detecting malicious data withholding.

This dynamic creates a critical risk management calculation for Layer 2s that rely on DAS.
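The trade-off can be made concrete with a short calculation. The sketch below assumes the worst case for a 2x one-dimensional code (the operator publishes only `k - 1` of `n` chunks) and uses hypothetical values for `CHUNK_BYTES` and `PROOF_BYTES`; real networks use their own chunk and proof sizes.

```python
import math

CHUNK_BYTES = 512  # hypothetical size of one sampled chunk
PROOF_BYTES = 320  # hypothetical size of the proof sent with each chunk


def tradeoff(n, k, sample_counts):
    """Return (samples, bytes downloaded, miss probability) rows.

    Miss probability is the chance that s distinct random samples all hit
    available chunks when only k - 1 of the n chunks were published.
    """
    rows = []
    for s in sample_counts:
        miss = math.comb(k - 1, s) / math.comb(n, s)
        rows.append((s, s * (CHUNK_BYTES + PROOF_BYTES), miss))
    return rows


for s, cost, miss in tradeoff(200, 100, [5, 10, 20, 30]):
    print(f"{s:>3} samples  {cost:>6} bytes  miss probability {miss:.2e}")
```

Bandwidth grows linearly with the number of samples while the miss probability falls exponentially, which is why a few dozen samples per light node is often enough in this simplified model.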

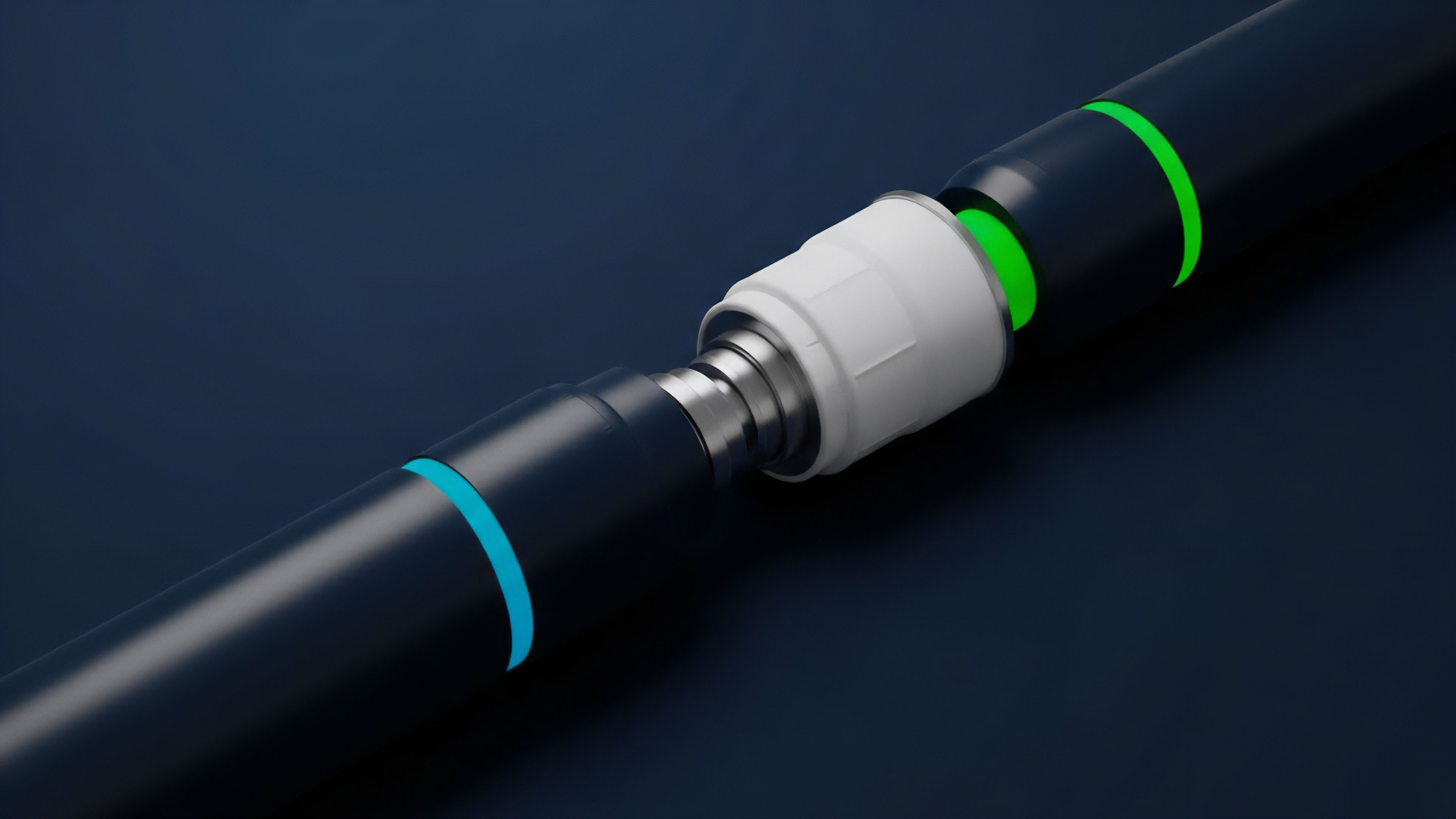

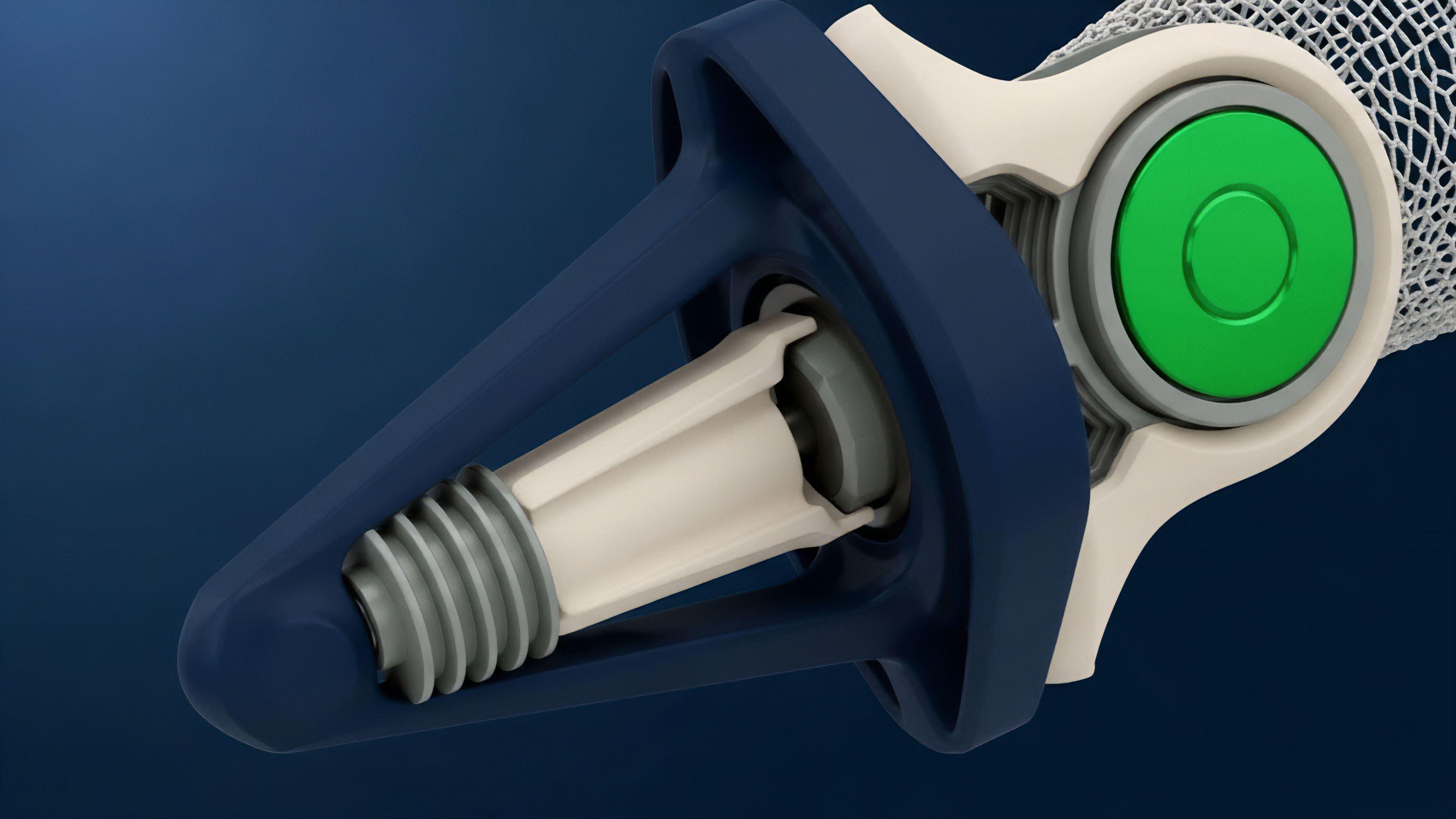

- Data Encoding: The rollup operator applies erasure coding to the transaction data, generating redundant chunks.

- Data Posting: The operator posts these encoded chunks to the Data Availability Layer.

- Random Sampling: Light nodes randomly select and download a small number of these chunks.

- Verification: Light nodes check each sampled chunk against the published commitment to the encoded data. If a sufficient number of samples pass, it provides high confidence that the entire dataset is available.

- Reconstruction: If some chunks are withheld but enough remain available, a full node can reconstruct the data from the available chunks and challenge the rollup operator.
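The sampling and verification steps above can be sketched as follows, assuming the operator commits to the encoded chunks with a SHA-256 Merkle root and answers sample requests with a chunk plus its Merkle proof. The `Operator` class and `light_node_check` function are hypothetical names for this illustration. A Merkle proof only shows that a chunk belongs to the committed set; checking that the set was correctly erasure-coded needs an additional mechanism (such as fraud proofs or polynomial commitments) that this sketch omits.

```python
import hashlib
import random


def h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def merkle_levels(chunks):
    """All tree levels, leaves first. Chunk count must be a power of two."""
    assert len(chunks) & (len(chunks) - 1) == 0, "power-of-two chunk count only"
    level = [h(c) for c in chunks]
    levels = [level]
    while len(level) > 1:
        level = [h(level[i] + level[i + 1]) for i in range(0, len(level), 2)]
        levels.append(level)
    return levels


def merkle_proof(levels, index):
    """Sibling hashes from leaf to root for the chunk at `index`."""
    proof = []
    for level in levels[:-1]:
        proof.append(level[index ^ 1])
        index //= 2
    return proof


def verify(root, chunk, index, proof):
    node = h(chunk)
    for sibling in proof:
        node = h(node + sibling) if index % 2 == 0 else h(sibling + node)
        index //= 2
    return node == root


class Operator:
    """Posts a commitment and serves chunks, optionally withholding some."""

    def __init__(self, chunks, withheld=()):
        self.chunks = chunks
        self.levels = merkle_levels(chunks)
        self.root = self.levels[-1][0]
        self.withheld = set(withheld)

    def serve(self, index):
        if index in self.withheld:
            return None  # models a request that is never answered
        return self.chunks[index], merkle_proof(self.levels, index)


def light_node_check(root, serve, n, samples, rng):
    """Accept only if every sampled chunk is returned and matches the root."""
    for index in rng.sample(range(n), samples):
        reply = serve(index)
        if reply is None or not verify(root, reply[0], index, reply[1]):
            return False
    return True


chunks = [bytes([i]) * 32 for i in range(16)]  # stand-in for encoded chunks
rng = random.Random(7)
honest = Operator(chunks)
cheater = Operator(chunks, withheld=range(9))  # withholds more than half
print(light_node_check(honest.root, honest.serve, 16, 8, rng))    # True
print(light_node_check(cheater.root, cheater.serve, 16, 8, rng))  # False
```

The second check is guaranteed to fail here because only 7 chunks are available and the node asks for 8 distinct ones; with fewer samples the outcome would be probabilistic.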

Approach

The implementation of DAS directly influences the market microstructure of decentralized derivatives platforms. The cost of data availability, which DAS seeks to reduce, is a significant component of Layer 2 transaction fees. Lower data costs enable higher throughput and lower transaction costs, which in turn facilitate more complex financial strategies, such as high-frequency options trading and dynamic hedging.

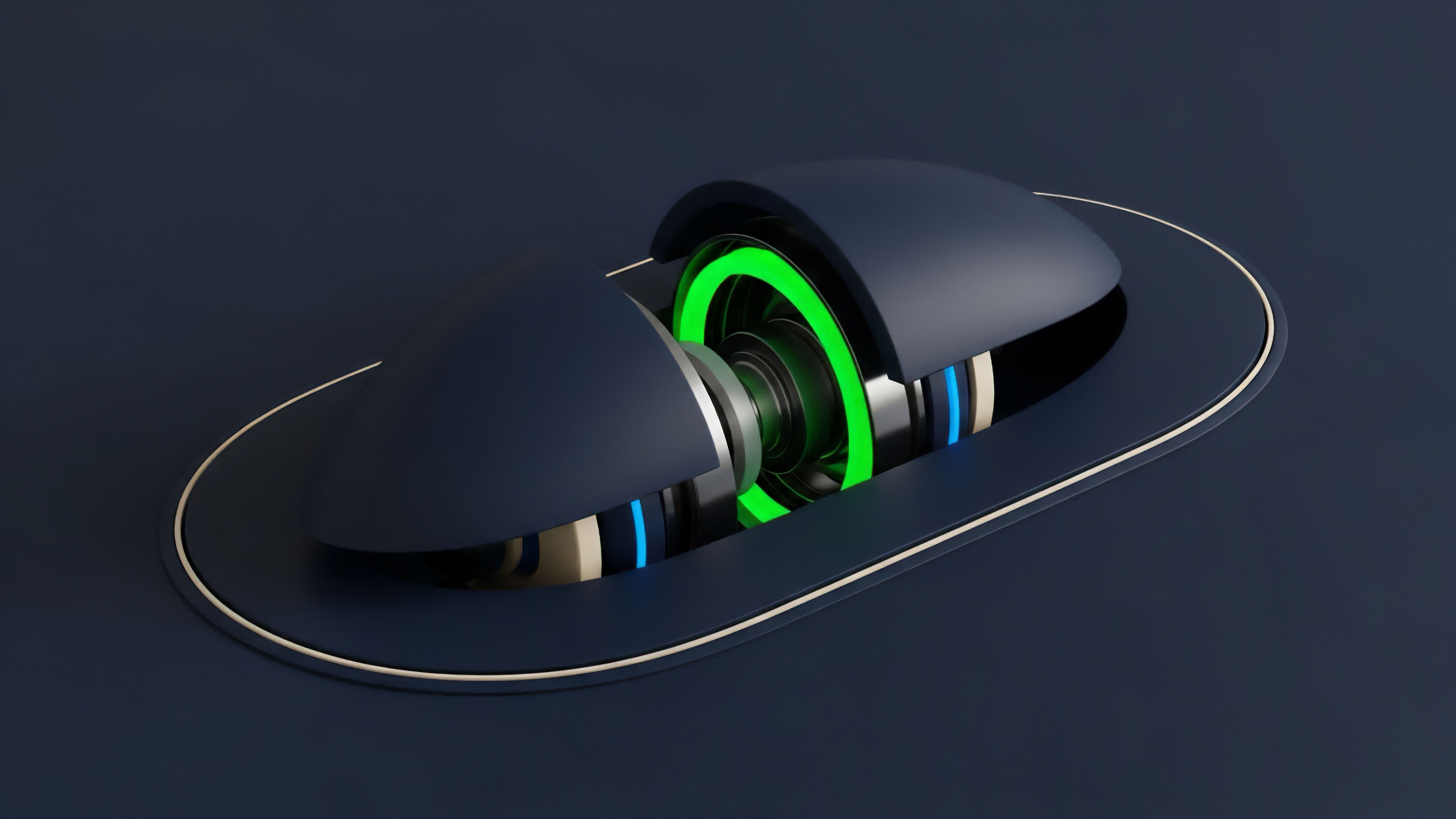

The market approach to DAS involves a shift toward modular architectures. Rather than a monolithic chain handling all functions, a modular system separates execution, settlement, consensus, and data availability into specialized layers. This allows for dedicated data availability layers (like Celestia) to compete on price and performance, driving down costs for Layer 2s.

This competition in the data availability market creates a new set of financial incentives and risks.

| Data Availability Solution | Methodology | Primary Trade-off |
|---|---|---|
| Monolithic Chain (e.g. Ethereum) | Full data storage on Layer 1. | High cost per byte, low scalability. |
| Data Availability Sampling (DAS) | Erasure coding and probabilistic verification by light nodes. | Scalability and decentralization, but probabilistic security guarantee. |
| Data Committee (Sidechains) | Centralized or federated verification. | High scalability, low decentralization. |

From a financial engineering perspective, the cost of data availability directly affects the pricing models for derivatives on Layer 2s. A higher cost for data translates to higher transaction fees for opening, closing, or liquidating positions. This can make certain strategies unprofitable, especially those involving frequent rebalancing or short-term expiration.

By lowering this cost floor, DAS enables the creation of more capital-efficient derivatives protocols that can offer lower spreads and more competitive pricing.
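A back-of-the-envelope sketch of this cost floor, assuming each trade pays a data availability fee proportional to the bytes it posts plus a fixed execution fee. All figures are hypothetical and expressed in micro-dollars; none come from a real fee market, and the function names are illustrative.

```python
def da_fee(tx_bytes, price_per_byte):
    """Data availability fee for one transaction."""
    return tx_bytes * price_per_byte


def strategy_net(gross_edge, trades, tx_bytes, price_per_byte, execution_fee):
    """Net result of a strategy that pays one transaction fee per trade."""
    fee_per_trade = da_fee(tx_bytes, price_per_byte) + execution_fee
    return trades * (gross_edge - fee_per_trade)


# Hypothetical inputs, all in micro-dollars.
REBALANCES = 1_000  # trades over the life of the strategy
EDGE = 50_000       # gross edge captured per rebalance
TX_BYTES = 200      # compressed bytes posted per transaction
EXEC_FEE = 5_000    # execution fee per transaction

expensive = strategy_net(EDGE, REBALANCES, TX_BYTES, 400, EXEC_FEE)
cheap = strategy_net(EDGE, REBALANCES, TX_BYTES, 10, EXEC_FEE)
print(expensive)  # -35000000: the data fee exceeds the edge
print(cheap)      # 43000000: the same strategy becomes viable
```

In this toy model the strategy itself is unchanged; only the per-byte data price decides whether frequent rebalancing is profitable.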

Evolution

The evolution of DAS reflects the transition from a monolithic blockchain design to a modular stack. Initially, the data availability problem was addressed by simply increasing the data capacity of the Layer 1 (e.g. increasing block size).

This approach, however, rapidly led to increased hardware requirements for full nodes, compromising decentralization. The introduction of EIP-4844 (Proto-Danksharding) on Ethereum marked a critical inflection point, introducing dedicated data “blobs” for rollups. These blobs offer cheaper data storage compared to calldata and use a format designed to be forward-compatible with data availability sampling, although Proto-Danksharding itself does not yet implement sampling and nodes still download blobs in full.

The next phase of evolution involves the separation of the data availability layer entirely. Projects like Celestia and EigenDA (built on EigenLayer) have created dedicated data availability networks that function as independent layers. This creates a new economic primitive: a market for data availability.

Rollups can purchase data space from these specialized layers, allowing them to optimize for execution efficiency while relying on an external, specialized network for data integrity.

The transition from monolithic blockchains to modular data availability layers creates new market dynamics and reduces the cost floor for Layer 2 derivatives protocols.

This modular approach has profound implications for risk management. Derivatives protocols built on a modular stack must account for the specific security properties of the DA layer they use. The probabilistic nature of DAS introduces a new vector of systemic risk. While the probability of data withholding going undetected is low, it is non-zero. The financial models for these protocols must therefore account for this residual risk in their liquidation mechanisms and insurance funds.
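One crude way to put a number on this residual risk, assuming each batch has an independent and identical probability `p_miss` of unavailable data going undetected and that a single such event puts a fixed value at risk. This is a deliberate simplification with hypothetical inputs: in practice many light nodes sample independently, which lowers the system-wide probability well below a single node's.

```python
import math


def prob_any_undetected(p_miss, batches):
    """P(at least one undetected withholding event across `batches` batches)."""
    if p_miss >= 1.0:
        return 1.0
    # 1 - (1 - p)^N, computed stably for very small p
    return -math.expm1(batches * math.log1p(-p_miss))


def expected_loss(p_miss, batches, value_at_risk):
    """Crude reserve estimate: event probability times the value at risk."""
    return prob_any_undetected(p_miss, batches) * value_at_risk


# Hypothetical inputs: 30 samples per batch, one million batches, 10M at risk.
p = 2.0 ** -30
print(prob_any_undetected(p, 1_000_000))
print(expected_loss(p, 1_000_000, 10_000_000))
```

A protocol could compare an estimate of this kind against its insurance fund when choosing how many samples, or which DA layer, to rely on.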

Horizon

Looking ahead, DAS enables a future where decentralized finance can support complex, high-frequency financial products previously confined to centralized exchanges. The reduction in data costs facilitates the deployment of derivatives protocols that can handle a larger volume of transactions and support more intricate financial instruments.

The future impact of DAS on derivatives markets will be defined by the rise of data-driven financial products. As data availability becomes cheaper and more reliable, it enables a new generation of smart contracts that rely on verifiable on-chain data streams. This allows for the creation of new financial primitives, such as options contracts based on real-time data feeds or derivatives linked to complex, non-financial data points. The modularity provided by DAS allows these new products to be deployed quickly and securely, creating a competitive environment for financial innovation.

This modularity also leads to a more resilient system architecture. By separating the data layer, a failure in one component (e.g. an execution layer bug) does not necessarily compromise the integrity of the data. This systemic resilience is essential for a mature derivatives market, where cascading failures and contagion risks are primary concerns. The architecture fostered by DAS allows for a more robust and efficient risk management framework, where different layers can specialize in specific risk functions.

The ultimate goal is to create a financial ecosystem where high-frequency trading and complex financial engineering can operate with the same level of security and cost efficiency as traditional finance, but with the added benefits of decentralization and transparency.

Glossary

Data Availability Bandwidth

Data Availability Economics

Consistency and Availability

Data Availability Governance

Cryptographic Proofs of Data Availability

Blockchain Scalability

Cost Reduction Strategies

Data Availability Costs in Blockchain

Transaction Costs