Essence

Data aggregation for crypto options involves synthesizing information from disparate sources to create a coherent view of market dynamics, which is essential for accurate pricing and risk management. The core challenge lies in the fragmented nature of crypto markets, where liquidity for derivatives is spread across centralized exchanges (CEXs), decentralized exchanges (DEXs), and various Layer 2 solutions. A reliable aggregation methodology must reconcile price discrepancies, account for varying levels of liquidity, and filter out noise or manipulation attempts to produce a single, actionable signal.

This signal, typically in the form of an implied volatility surface or a time-weighted average price, serves as the foundation for settlement engines and automated market maker (AMM) algorithms. Without robust aggregation, options cannot be priced accurately, which leads to mispricing, wider arbitrage opportunities, and systemic risk.

Data aggregation in crypto options synthesizes fragmented market data to establish a single source of truth for pricing and risk management.

The process must account for the unique characteristics of decentralized finance, where data provenance and censorship resistance are critical design constraints. Unlike traditional markets, where a few centralized venues provide standardized data feeds, crypto derivatives protocols must either build proprietary aggregation systems or rely on decentralized oracle networks to securely ingest data from a volatile multi-venue landscape. The methodology must specifically address the calculation of implied volatility, which is far harder to derive from fragmented order books than a spot price is.

Origin

The necessity for sophisticated data aggregation methodologies for derivatives originated in traditional finance, where centralized exchanges like the CME or Cboe serve as primary sources for options data. In this environment, aggregation primarily focused on consolidating data from multiple centralized sources to create a composite picture of market depth and pricing. However, the crypto market introduced a new set of challenges, necessitating a re-evaluation of these methods.

The rise of on-chain derivatives protocols and AMM-based options, starting around 2020, created a demand for verifiable, on-chain price feeds. The inherent latency and high gas costs of early blockchains meant that real-time, high-frequency data aggregation was computationally expensive and often impractical. This led to the development of decentralized oracle networks (DONs) specifically tailored to the unique requirements of on-chain settlement.

Early approaches often relied on a simple median or unweighted average of CEX prices. As the market matured, protocols realized that unweighted aggregation failed to account for liquidity differences between venues, making the aggregated price vulnerable to manipulation on less liquid exchanges. The evolution of aggregation methodologies in crypto has therefore been a continuous effort to create a robust and secure source of truth that balances decentralization with data integrity.

Theory

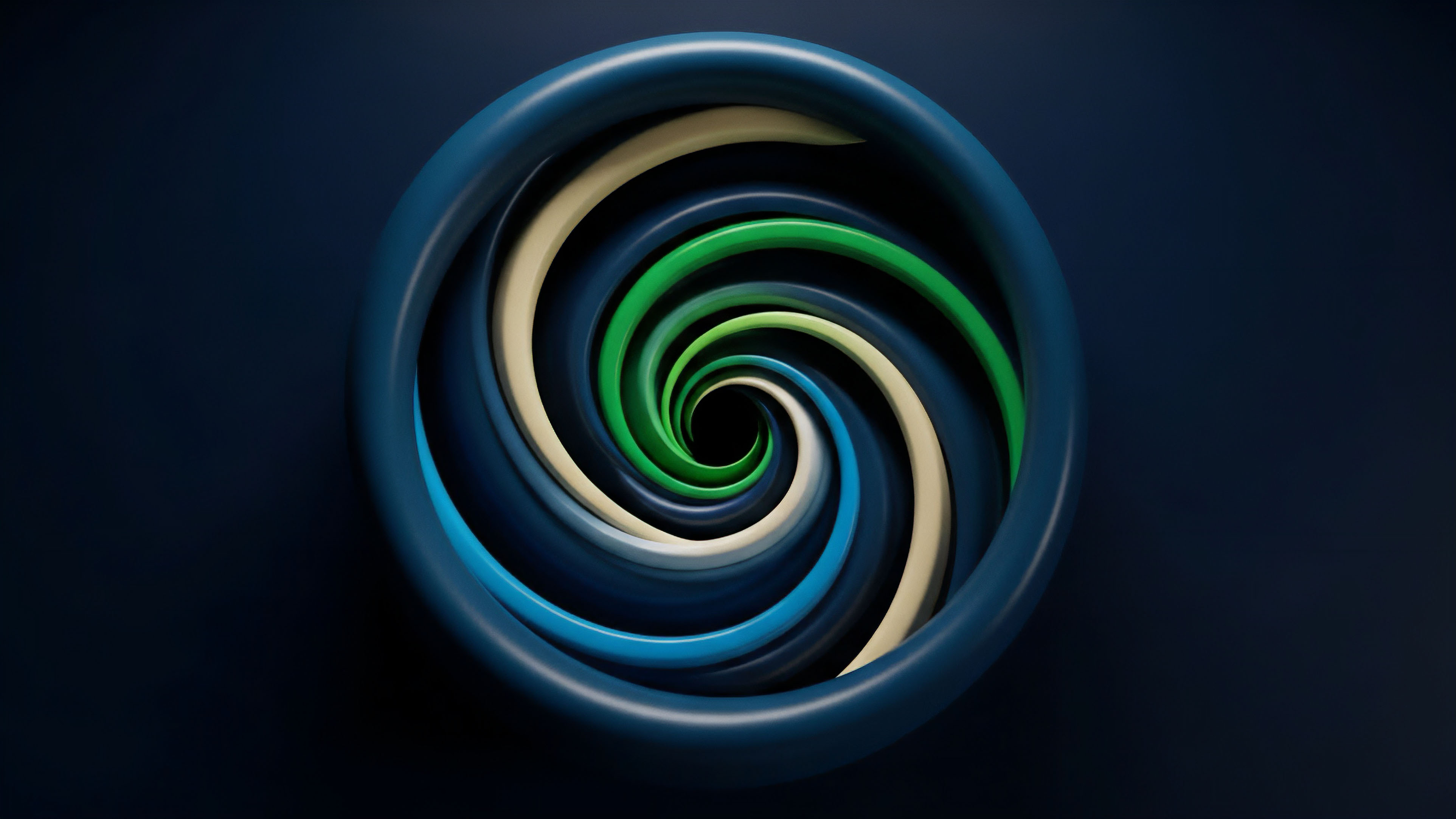

The theoretical underpinnings of crypto options data aggregation center on two core challenges: calculating implied volatility and managing data integrity in a trustless environment. The goal is to produce a reliable volatility surface that accurately reflects market expectations across different strike prices and expiries.

Implied Volatility Surface Construction

The primary theoretical hurdle for options pricing is calculating implied volatility (IV). A simple spot price feed is insufficient for options. Instead, a robust methodology must derive IV from a collection of option premiums across various strikes and expiries.
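
As a minimal sketch of that derivation for a single quote, the snippet below recovers IV from one call premium by numerically inverting a pricing model. The Black-Scholes model, the zero default interest rate, the volatility bounds, and every function name here are assumptions made for illustration; the text does not prescribe a particular model or solver.

```python
import math


def norm_cdf(x: float) -> float:
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def bs_call_price(spot: float, strike: float, t: float, rate: float, vol: float) -> float:
    """Black-Scholes price of a European call (assumed model, t in years)."""
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol ** 2) * t) / (vol * math.sqrt(t))
    d2 = d1 - vol * math.sqrt(t)
    return spot * norm_cdf(d1) - strike * math.exp(-rate * t) * norm_cdf(d2)


def implied_vol_call(premium: float, spot: float, strike: float, t: float,
                     rate: float = 0.0, lo: float = 1e-4, hi: float = 5.0,
                     tol: float = 1e-8, max_iter: int = 200) -> float:
    """Solve bs_call_price(vol) == premium for vol by bisection.

    The call price increases monotonically with volatility, so bisection
    converges whenever the premium lies between the prices at lo and hi.
    """
    if not (bs_call_price(spot, strike, t, rate, lo) <= premium
            <= bs_call_price(spot, strike, t, rate, hi)):
        raise ValueError("premium is not attainable within the volatility bounds")
    for _ in range(max_iter):
        mid = 0.5 * (lo + hi)
        if bs_call_price(spot, strike, t, rate, mid) < premium:
            lo = mid
        else:
            hi = mid
        if hi - lo < tol:
            break
    return 0.5 * (lo + hi)
```

Repeating this for each strike and expiry on each venue yields the raw IV points that the aggregation steps described in this section then combine.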

In fragmented markets, this requires synthesizing data from multiple sources. A common approach involves creating a composite volatility surface by blending data from different venues using liquidity-weighted or volume-weighted averages.

- Liquidity-Weighted Aggregation: This approach prioritizes data from venues with deeper order books, assigning a higher weight to prices from exchanges with greater liquidity at specific strike prices. This reduces the influence of thinly traded markets on the final aggregated price.

- Time-Weighted Averages (TWAs): To mitigate short-term price manipulation, methodologies often apply time-weighted averaging to the aggregated data. For spot prices this is the familiar time-weighted average price (TWAP), in which each observation is weighted by how long it was in effect during the interval. For options, the analogous quantity is a time-weighted implied volatility (TWIV).

- Outlier Filtering: A robust aggregation methodology must include mechanisms to identify and filter out spurious data points, which can arise from data feed errors or malicious manipulation attempts. This often involves calculating the median of a set of data points and discarding values that lie more than a chosen number of standard deviations from it.
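
The time-weighting and outlier-filtering steps in the list above can be sketched as follows. This is an illustrative sketch only: the function names, the two-sigma default threshold, and the convention that each sample holds until the next one arrives are all assumptions, not details taken from any particular protocol.

```python
import statistics


def filter_outliers(values: list[float], max_sigma: float = 2.0) -> list[float]:
    """Drop values more than max_sigma standard deviations from the median."""
    if len(values) < 3:
        return list(values)  # too few points to judge what is an outlier
    med = statistics.median(values)
    sd = statistics.pstdev(values)
    if sd == 0:
        return list(values)
    return [v for v in values if abs(v - med) <= max_sigma * sd]


def time_weighted_iv(samples: list[tuple[float, float]], window_end: float) -> float:
    """Time-weighted implied volatility (TWIV) over one window.

    samples is a list of (timestamp, iv) pairs sorted by timestamp. Each IV
    is assumed to hold until the next sample, and the last one until
    window_end, so its weight is the time it was in effect.
    """
    if not samples:
        raise ValueError("no samples in window")
    weighted_sum = 0.0
    total_time = 0.0
    for i, (ts, iv) in enumerate(samples):
        next_ts = samples[i + 1][0] if i + 1 < len(samples) else window_end
        dt = next_ts - ts
        if dt < 0:
            raise ValueError("samples must be sorted and precede window_end")
        weighted_sum += iv * dt
        total_time += dt
    if total_time == 0:
        raise ValueError("window has zero duration")
    return weighted_sum / total_time
```

One caveat with this filter: a large outlier inflates the standard deviation itself, so with very few data points a spurious value can escape the threshold. A more robust dispersion measure, such as the median absolute deviation, is a common alternative.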

Data Integrity and Oracle Economics

From a systems perspective, the theory of aggregation must address the economic incentives for data providers. A decentralized oracle network relies on a set of independent nodes to provide data. The aggregation methodology must be designed to make collusion between nodes prohibitively expensive.

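The “median-of-medians” approach, in which each provider first aggregates its own set of data points and those per-provider results are then aggregated again, helps to create a robust, manipulation-resistant feed. Because a median is unaffected as long as fewer than half of its inputs are corrupted, an attacker must compromise a majority of providers to move the final value.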
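
A minimal sketch of the two-stage aggregation is shown below. The dictionary layout and the node names in the usage note are hypothetical, chosen only to illustrate the idea.

```python
import statistics


def median_of_medians(provider_reports: dict[str, list[float]]) -> float:
    """Two-stage median: one median per provider, then a median across providers.

    provider_reports maps a (hypothetical) provider id to the raw values
    that provider observed across its own sources.
    """
    provider_medians = [
        statistics.median(observations)
        for observations in provider_reports.values()
        if observations  # skip providers that reported nothing
    ]
    if not provider_medians:
        raise ValueError("no provider reported any data")
    return statistics.median(provider_medians)
```

For example, with three providers where `node_c` reports only a manipulated value of `9.0`, the per-provider medians are `0.61`, `0.61`, and `9.0`, and the final result stays at `0.61`.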

Approach

Current implementations of data aggregation for crypto options fall into two main categories: off-chain proprietary engines and on-chain decentralized oracle networks. The choice between these approaches depends on the specific use case, balancing speed and capital efficiency against decentralization and censorship resistance.

Off-Chain Aggregation Engines

Market makers and institutional trading desks utilize sophisticated off-chain aggregation engines. These systems are designed for high-frequency trading and risk management, prioritizing low latency and deep order book analysis.

- Data Ingestion: These engines connect directly to the APIs of multiple CEXs and DEXs to stream real-time order book data. The data is often normalized to a common format to allow for consistent analysis.

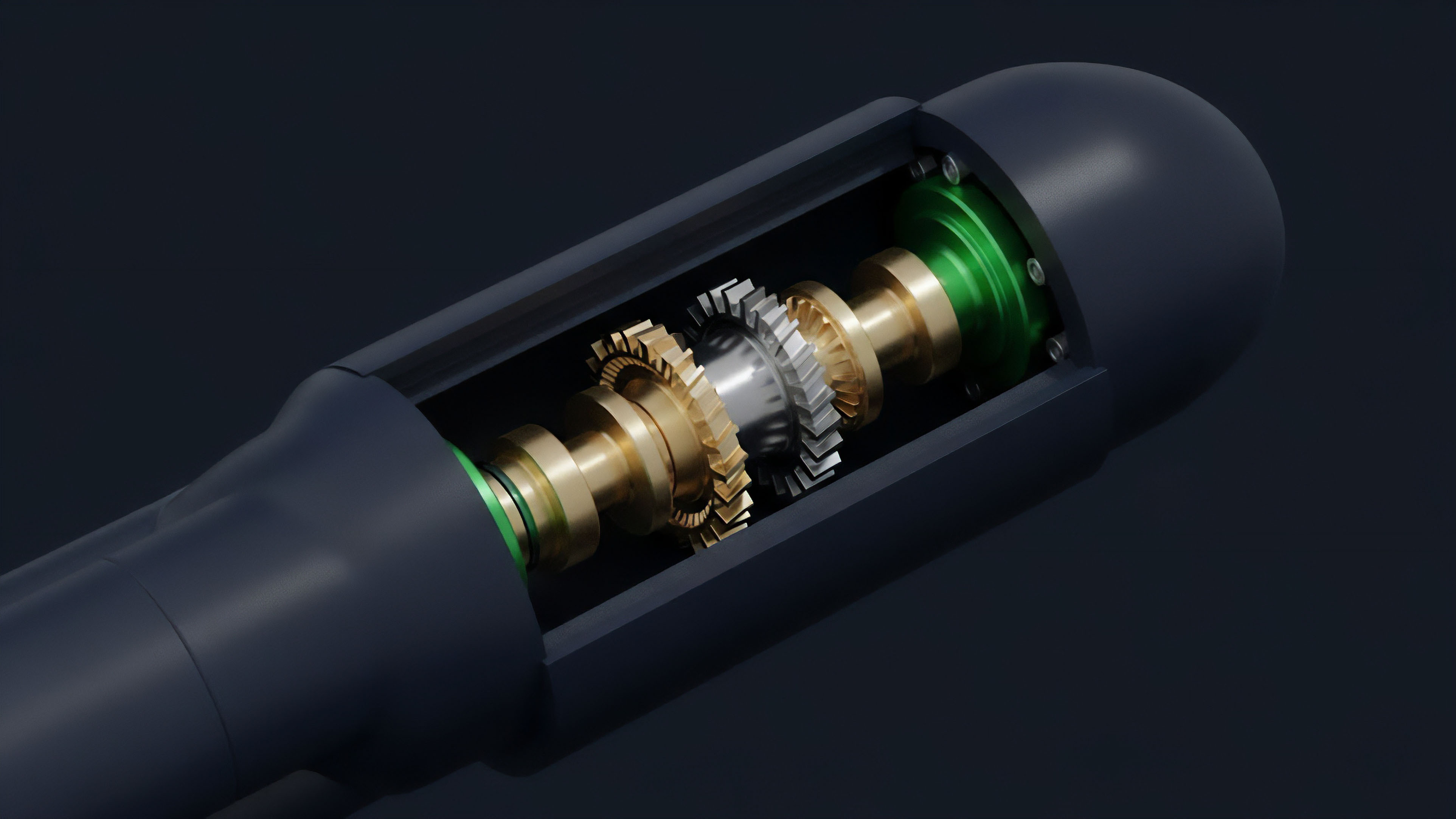

- Implied Volatility Surface Generation: The core function of these engines is to calculate a composite implied volatility surface by synthesizing data from all venues. This allows market makers to identify pricing discrepancies and manage portfolio Greeks.

- Latency Prioritization: The primary goal here is speed. These systems often sacrifice full decentralization for millisecond-level updates, enabling rapid arbitrage and dynamic hedging strategies.
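
The normalization step mentioned under data ingestion can be sketched as below. Both venue payload shapes, their field names, and the `OptionQuote` type are hypothetical; real exchange APIs differ and should be checked against each venue's own documentation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class OptionQuote:
    """Common format shared by all venues (an assumed schema)."""
    venue: str
    underlying: str
    expiry: str        # ISO date, e.g. "2025-06-27"
    strike: float
    option_type: str   # "call" or "put"
    bid: float
    ask: float


def normalize_venue_a(raw: dict) -> OptionQuote:
    """Hypothetical venue A: packed symbol string and prices sent as strings."""
    underlying, expiry, strike, kind = raw["symbol"].split("_")
    if kind not in ("C", "P"):
        raise ValueError(f"unknown option kind: {kind}")
    return OptionQuote(
        venue="venue_a",
        underlying=underlying,
        expiry=expiry,
        strike=float(strike),
        option_type="call" if kind == "C" else "put",
        bid=float(raw["best_bid"]),
        ask=float(raw["best_ask"]),
    )


def normalize_venue_b(raw: dict) -> OptionQuote:
    """Hypothetical venue B: separate fields, numeric prices, mixed case."""
    return OptionQuote(
        venue="venue_b",
        underlying=raw["base"].upper(),
        expiry=raw["expiry"],
        strike=float(raw["strike"]),
        option_type=raw["type"].lower(),
        bid=float(raw["bid"]),
        ask=float(raw["ask"]),
    )
```

Once every venue maps into the same record type, downstream steps such as surface generation can treat quotes uniformly regardless of where they originated.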

On-Chain Decentralized Oracle Networks

For on-chain options protocols that require a verifiable price feed for automated settlement and liquidations, decentralized oracle networks are the standard solution. These networks aggregate data off-chain and then post a validated result on-chain.

| Methodology Feature | Off-Chain Aggregation (Market Maker Proprietary) | On-Chain Aggregation (Decentralized Oracle Networks) |
|---|---|---|
| Primary Goal | Low latency and risk management | Censorship resistance and verifiable settlement |
| Data Source | Direct API feeds from CEXs and DEXs | Data feeds from multiple oracle nodes |
| Data Integrity Model | Proprietary algorithms and backtesting | Economic incentives, staking, and slashing mechanisms |
| Latency | Sub-second (real-time) | Minutes to hours (block-based updates) |

The fundamental trade-off in data aggregation methodologies is between the low latency required for efficient market making and the high integrity required for trustless on-chain settlement.

Liquidity-Weighted Implied Volatility (LWIV)

A key refinement in aggregation methodologies is the use of LWIV. This approach recognizes that not all liquidity is equal. A deep order book on a major CEX should have a greater influence on the final price than a shallow order book on a smaller DEX.

The aggregation algorithm weights the implied volatility from each venue based on its available liquidity at specific strike prices. This creates a more accurate reflection of true market sentiment and reduces the impact of manipulation on less liquid platforms.
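
A minimal sketch of this weighting for a single strike and expiry follows. It assumes that "available liquidity" is summarized as one depth figure per venue; how that depth is measured (for example, size within some band around the mid price) is left open here, and the function name is illustrative.

```python
def liquidity_weighted_iv(quotes: list[tuple[float, float]]) -> float:
    """Liquidity-weighted implied volatility for one strike and expiry.

    quotes is a list of (iv, depth) pairs, one per venue, where depth is
    that venue's available liquidity at the strike (assumed precomputed).
    Venues with no positive depth are ignored.
    """
    usable = [(iv, depth) for iv, depth in quotes if depth > 0]
    total_depth = sum(depth for _, depth in usable)
    if total_depth <= 0:
        raise ValueError("no venue has positive liquidity at this strike")
    return sum(iv * depth for iv, depth in usable) / total_depth
```

For instance, a deep venue quoting an IV of 0.60 with depth 900 and a shallow venue quoting 0.90 with depth 100 combine to 0.63, much closer to the deep venue's quote than an unweighted average of 0.75 would be.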

Evolution

The evolution of data aggregation for crypto options has progressed from rudimentary CEX-based feeds to complex, multi-chain oracle architectures.

Initially, protocols relied on simple price feeds from major exchanges. However, the flash crashes and oracle manipulation incidents of 2020 and 2021 demonstrated the fragility of single-source data feeds. The market quickly shifted toward decentralized oracle networks that aggregate data from multiple sources to prevent single points of failure.

The development of new derivatives products, such as AMM-based options and exotic structures, has driven further innovation in aggregation methodologies. These new products require data beyond simple price feeds; they need real-time implied volatility surfaces. The methodologies have evolved from simple volume-weighted averages to more complex algorithms that account for liquidity depth, time decay, and cross-venue discrepancies.

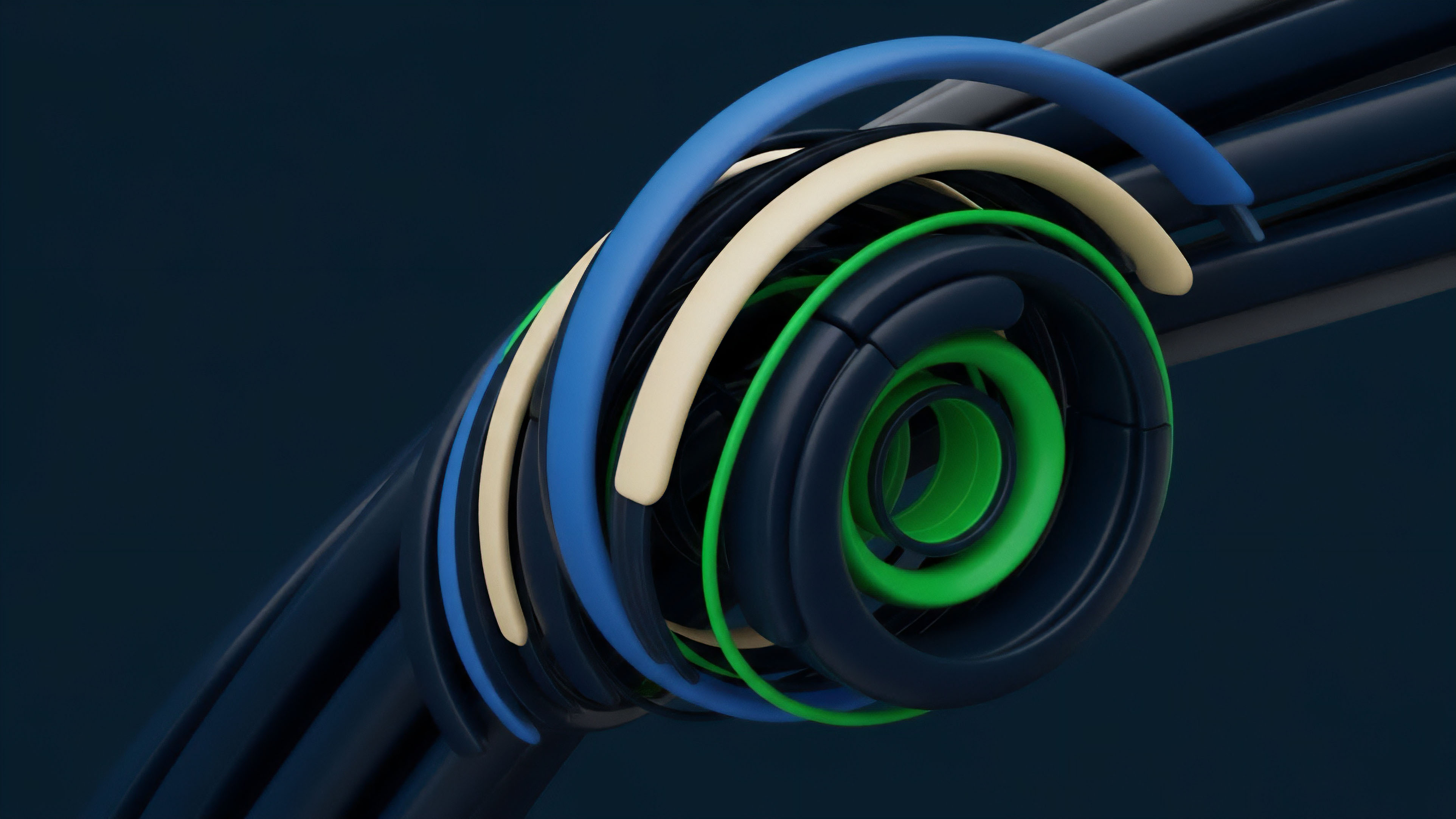

The recent move to Layer 2 solutions and app-specific chains presents a new challenge, as liquidity becomes fragmented across multiple chains. This necessitates aggregation methodologies capable of synthesizing data from different blockchain environments to create a holistic view of market risk.

Horizon

Looking ahead, the next generation of data aggregation methodologies will likely focus on addressing data provenance and integrity through cryptographic proofs.

The current oracle model, while robust, still relies on trust in a set of data providers. The future points toward solutions where data integrity can be proven mathematically.

Zero-Knowledge Proofs for Data Validity

One promising development involves using zero-knowledge (ZK) proofs to verify the accuracy of aggregated data without revealing the raw inputs. A data provider could prove that a price feed was calculated correctly from a set of exchanges without disclosing the exact order book data from each exchange. This would make the computation itself verifiable while keeping market makers' underlying data private.

Cross-Chain Aggregation Frameworks

As liquidity fragments across multiple Layer 1 and Layer 2 ecosystems, a new generation of aggregation frameworks must emerge to provide a unified view of market risk. This involves creating a standard for data exchange between different chains and aggregating data across these disparate environments. This will enable the creation of truly global derivatives markets where risk and liquidity can be managed efficiently across all major ecosystems.

The future of data aggregation aims to create a fully verifiable and transparent data supply chain using cryptographic proofs, ensuring a robust foundation for institutional-grade derivatives.

On-Chain Implied Volatility Calculation

The long-term goal for on-chain protocols is to move beyond simply ingesting aggregated data to performing the calculation of implied volatility directly on-chain. This would eliminate the reliance on off-chain data feeds entirely, allowing protocols to create self-contained risk management systems. This requires significant advances in computational efficiency and Layer 2 scaling solutions to make complex calculations feasible on-chain.

Glossary

Liquidity-Weighted Implied Volatility

Data Validation

AMM Options Pricing

Interchain Liquidity Aggregation

Cross-Chain Asset Aggregation

Financial Data Aggregation

Aggregation Functions

Multi-Source Data Aggregation

Order Book Pattern Detection Software and Methodologies