Essence

Consensus Layer Performance is the temporal and computational efficiency of decentralized validation mechanisms, and it is the primary constraint on throughput and finality for derivative settlement. Its central measure is the latency between transaction broadcast and canonical inclusion, which directly influences the pricing of short-dated options and the effectiveness of automated liquidation engines. When validation delays increase, market makers cannot hedge positions instantly, so they widen bid-ask spreads and charge higher risk premiums to compensate for the added uncertainty.

Consensus layer performance dictates the speed and reliability of transaction finality, which directly determines the capital efficiency and risk management precision of decentralized derivatives markets.

The operational integrity of these systems depends on the deterministic nature of block production. In high-volatility environments, the ability of the Consensus Layer to maintain a steady cadence of state updates acts as the anchor for all derivative pricing models. If validation intervals fluctuate, the underlying model assumptions regarding time-to-settlement break down, creating systemic vulnerabilities that propagate through leveraged portfolios.
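One way to make "steady cadence" concrete is to measure how much block intervals vary. The sketch below is illustrative only: the function name `interval_stats` and the sample timestamps are hypothetical, and it assumes block timestamps are available as plain numbers in seconds.

```python
from statistics import mean, pstdev


def interval_stats(block_timestamps):
    """Return (mean interval, jitter, coefficient of variation).

    Jitter is taken here as the population standard deviation of the
    gaps between consecutive block timestamps (an assumption of this sketch).
    """
    if len(block_timestamps) < 3:
        raise ValueError("need at least three timestamps")
    intervals = [b - a for a, b in zip(block_timestamps, block_timestamps[1:])]
    avg = mean(intervals)
    jitter = pstdev(intervals)
    return avg, jitter, jitter / avg


# Hypothetical timestamps in seconds: a steady chain and one with a skipped slot.
steady = [0, 12, 24, 36, 48]
uneven = [0, 12, 24, 48, 60]

print(interval_stats(steady))
print(interval_stats(uneven))
```

A coefficient of variation near zero indicates the predictable cadence that time-to-settlement assumptions rely on; a rising value signals that those assumptions are weakening.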

Origin

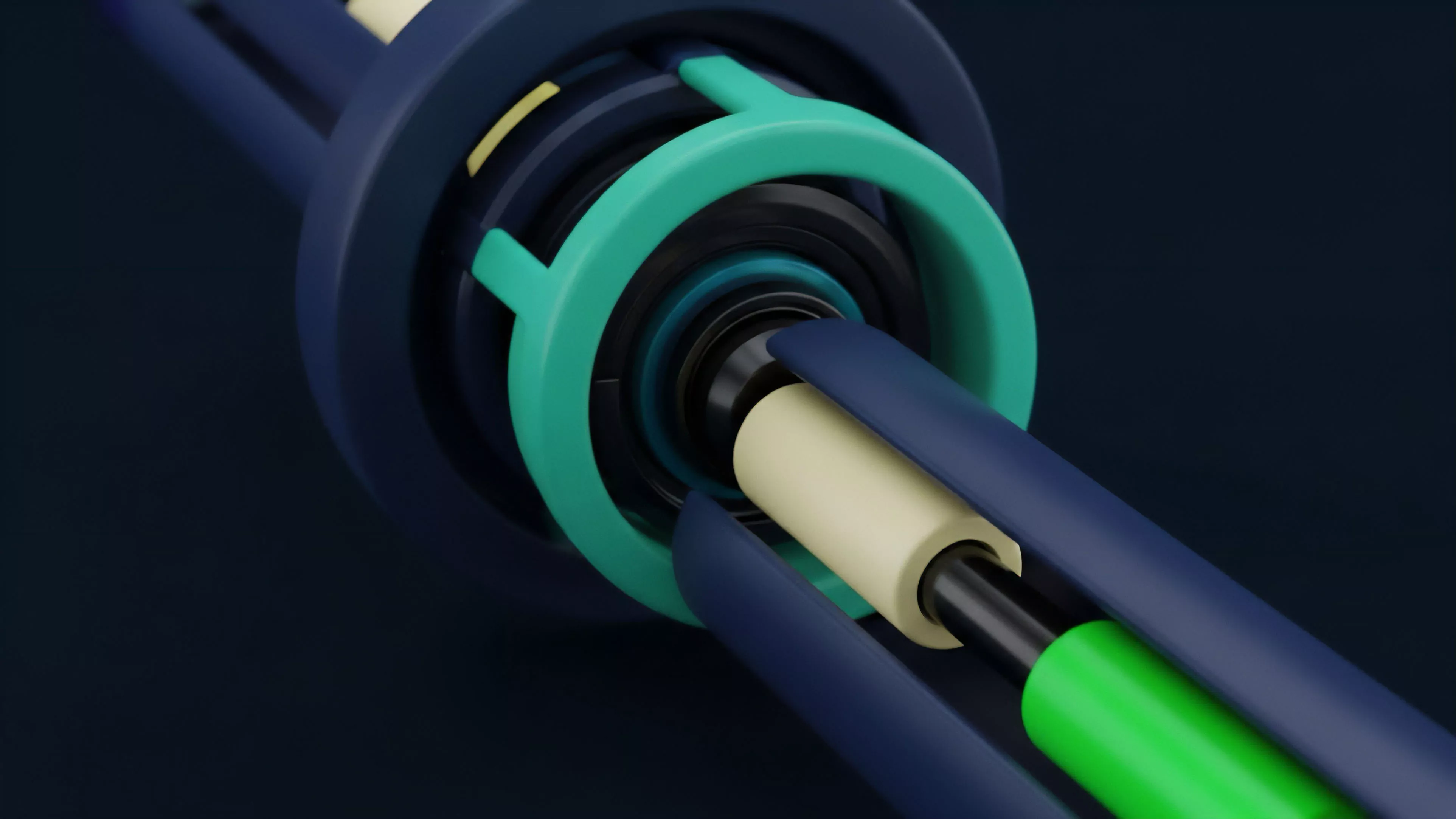

The architectural roots of Consensus Layer Performance trace back to the early trade-offs between decentralization, security, and scalability.

Early designs prioritized broad validator distribution, often at the cost of rapid finality. This tension drove the development of mechanisms that reconcile distributed agreement with the requirements of financial markets, where settlement speed is a defining factor for asset liquidity.

- Proof of Stake protocols replaced energy-intensive mining with validator-driven consensus, altering the economics of block production and latency.

- Finality Gadgets introduced deterministic settlement checkpoints, providing traders with stake-backed guarantees that a finalized transaction will not be reverted.

- MEV Extraction emerged as a secondary consequence of consensus design, where block producers and searchers control transaction ordering within a block to capture value from front-running and arbitrage.

These developments shifted the focus from simple block propagation to the granular management of state transitions. The evolution from probabilistic finality to fast, deterministic settlement made the consensus layer a foundational component of modern financial infrastructure: no longer just a mechanism for peer-to-peer ledger updates, but a settlement engine for high-frequency activity.

Theory

The quantitative framework for Consensus Layer Performance centers on the relationship between network latency, throughput, and the cost of capital. Market participants evaluate the system through the lens of Block Time and Finality Latency, as these variables define the upper bound of trade execution speed.

| Metric | Financial Impact |
| --- | --- |
| Block Interval | Determines maximum frequency of state updates |
| Finality Time | Defines duration of counterparty risk exposure |
| Validator Throughput | Limits capacity for concurrent derivative settlements |

From a quantitative finance perspective, the consensus mechanism acts as the underlying clock for all derivative Greeks. If the clock exhibits jitter, the pricing of Gamma and Theta becomes distorted, as the model assumes a continuous or predictable time-series that the network cannot sustain. Behavioral game theory further complicates this, as validators operate under incentive structures that may prioritize local profit over network-wide latency optimization.

Network latency jitter introduces stochastic noise into derivative pricing models, requiring higher risk premiums to account for delayed hedge execution.
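To give a rough sense of scale for the premium described above, the sketch below estimates the expected cost of leaving a short-gamma position unhedged for the duration of a settlement delay. It assumes Black-Scholes dynamics and uses the standard approximation that the expected gamma loss over a short interval is ½·Γ·S²·σ²·Δt. The function names and the sample inputs are hypothetical and are not taken from any particular protocol.

```python
from math import exp, log, pi, sqrt

SECONDS_PER_YEAR = 365 * 24 * 3600


def bs_gamma(spot, strike, vol, t_years, rate=0.0):
    """Black-Scholes gamma of a European option (same for calls and puts)."""
    d1 = (log(spot / strike) + (rate + 0.5 * vol ** 2) * t_years) / (vol * sqrt(t_years))
    pdf = exp(-0.5 * d1 ** 2) / sqrt(2 * pi)
    return pdf / (spot * vol * sqrt(t_years))


def expected_gamma_slippage(spot, strike, vol, t_years, delay_seconds):
    """Expected loss per option for a short-gamma book unhedged for delay_seconds.

    Uses E[(dS)^2] ~ S^2 * vol^2 * dt, so the cost grows linearly with the delay.
    """
    delay_years = delay_seconds / SECONDS_PER_YEAR
    gamma = bs_gamma(spot, strike, vol, t_years)
    return 0.5 * gamma * spot ** 2 * vol ** 2 * delay_years


# Hypothetical at-the-money option with one day to expiry and 80% volatility.
for delay in (12.0, 24.0, 60.0):
    cost = expected_gamma_slippage(3000.0, 3000.0, 0.8, 1 / 365, delay)
    print(f"delay {delay:>5.0f}s -> expected slippage {cost:.4f}")
```

Under these assumptions the cost is proportional to the delay, which is one way to read the claim that latency translates directly into a higher required premium. Jitter matters because the hedger must price for the delay that might occur, not the average one.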

One might observe that this mirrors the physical constraint of light speed in fiber-optic trading, yet here the dominant constraint is not distance alone but the communication and cryptographic overhead of distributed consensus. This reality necessitates a rigorous approach to margin management, where collateral requirements are scaled based on the current probability of network congestion.
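The idea of scaling collateral with congestion probability can be sketched as follows. This is an illustration under stated assumptions, not a description of any deployed margin engine: congestion probability is estimated naively as the share of recent block intervals that ran well past the target, and the margin rate is interpolated linearly up to a hypothetical cap (`max_multiplier`). All names and default values are invented for the example.

```python
def congestion_probability(intervals, target_interval, tolerance=1.5):
    """Share of recent block intervals longer than tolerance * target_interval."""
    if not intervals:
        raise ValueError("no intervals supplied")
    slow = sum(1 for gap in intervals if gap > tolerance * target_interval)
    return slow / len(intervals)


def scaled_margin(base_margin, congestion_prob, max_multiplier=2.0):
    """Interpolate the margin rate between base and base * max_multiplier."""
    if not 0.0 <= congestion_prob <= 1.0:
        raise ValueError("congestion_prob must be within [0, 1]")
    return base_margin * (1.0 + (max_multiplier - 1.0) * congestion_prob)


# Hypothetical window: one of four recent blocks arrived late.
recent_intervals = [12, 12, 24, 12]
p = congestion_probability(recent_intervals, target_interval=12)
print(p, scaled_margin(0.10, p))
```

A linear rule is the simplest choice; a production system would more plausibly use a convex schedule and a smoothed congestion estimate so that margin requirements do not jump on a single slow block.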

Approach

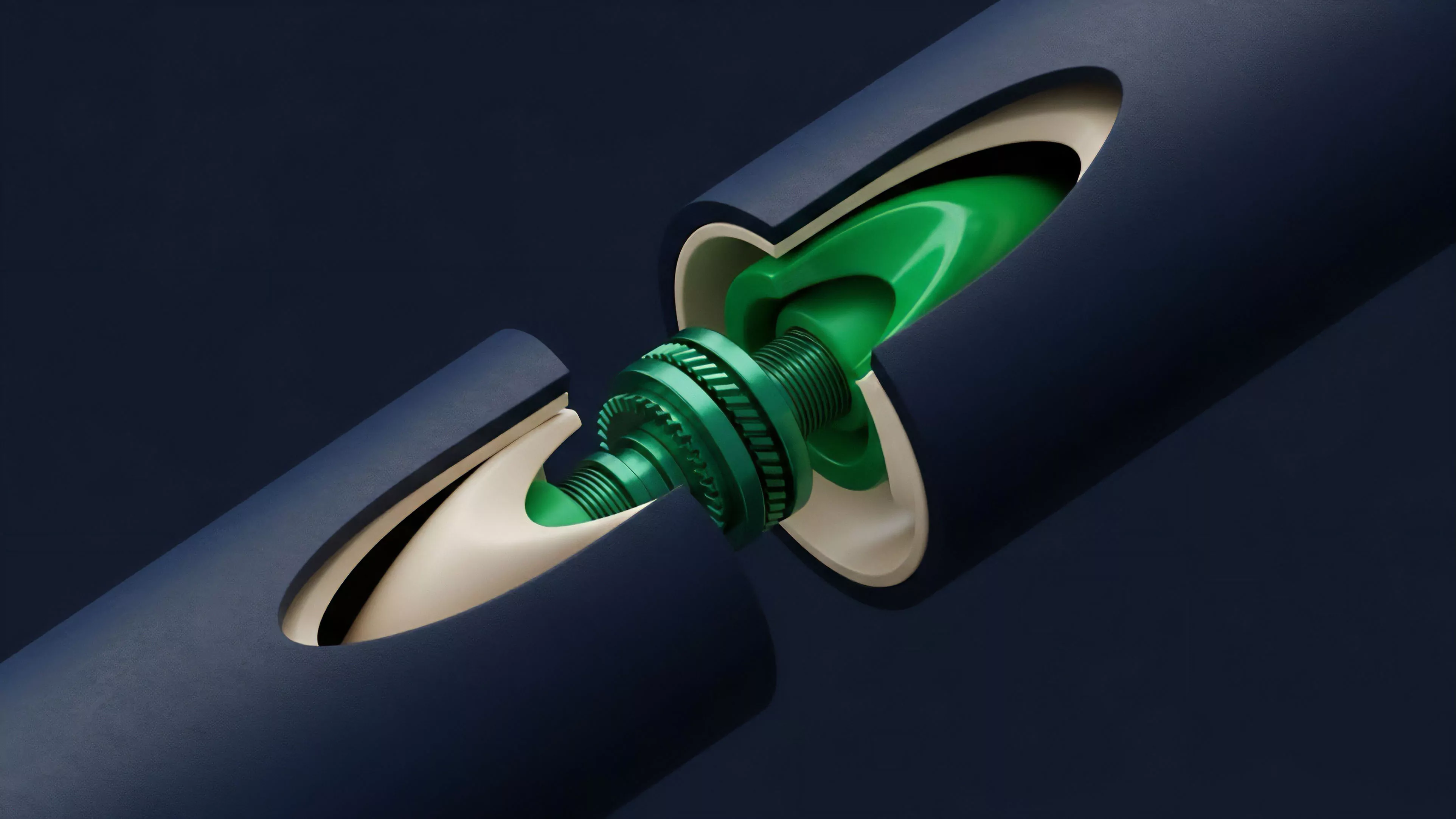

Current strategies for optimizing Consensus Layer Performance focus on reducing the overhead of consensus protocols through sharding and execution-layer separation. By decoupling the ordering of transactions from their execution, networks achieve higher throughput without compromising the security guarantees required for large-scale derivative volumes.

- Modular Architectures allow the consensus layer to focus exclusively on transaction ordering and data availability, delegating execution to specialized rollups.

- Parallel Execution enables the network to process independent derivative settlements simultaneously, reducing bottlenecks during periods of high market activity.

- Validator Tiering categorizes participants by their ability to meet strict performance SLAs, ensuring the network maintains stability even during adversarial conditions.
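The parallel execution item in the list above depends on identifying which settlements are independent. A minimal sketch, assuming each transaction declares the set of accounts it touches and treating every access as a write: transactions are grouped into batches whose members share no accounts, and any two transactions that do conflict keep their original relative order. The function name and the account labels are hypothetical.

```python
def conflict_free_batches(transactions):
    """Group (tx_id, accounts) pairs into ordered batches of non-conflicting txs.

    Each transaction is placed one batch after the latest batch that touched
    any of its accounts, so conflicting transactions never run concurrently
    and never swap order.
    """
    last_batch = {}  # account -> index of the last batch that touched it
    batches = []
    for tx_id, accounts in transactions:
        idx = 1 + max((last_batch.get(acct, -1) for acct in accounts), default=-1)
        if idx == len(batches):
            batches.append([])
        batches[idx].append(tx_id)
        for acct in accounts:
            last_batch[acct] = idx
    return batches


# Hypothetical settlements: A and B are independent, C conflicts with both.
txs = [
    ("A", {"alice_margin", "pool_eth"}),
    ("B", {"bob_margin", "pool_btc"}),
    ("C", {"alice_margin", "pool_btc"}),
]
print(conflict_free_batches(txs))
```

Each inner list could be executed concurrently, while the batches themselves run in sequence. Distinguishing reads from writes would allow more parallelism than this sketch does.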

Market makers now integrate real-time network health monitoring into their algorithmic trading engines, treating Consensus Layer Performance as a dynamic risk factor. When the network experiences congestion, automated strategies shift from aggressive market-making to defensive hedging, adjusting liquidation thresholds to prevent cascading failures in leveraged derivative pools.
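The mode switch described above might look roughly like the following. This is a sketch under assumptions: the `NetworkHealth` fields, the stress threshold of 2.0, and the baseline values are all hypothetical placeholders, not parameters of any real trading system.

```python
from dataclasses import dataclass


@dataclass
class NetworkHealth:
    finality_seconds: float  # observed time to finality
    backlog_ratio: float     # hypothetical: pending transactions / block capacity


def quoting_params(health, base_spread_bps=5.0, baseline_finality_seconds=15.0):
    """Widen spreads and shrink quote size as network stress rises."""
    stress = max(
        1.0,
        health.finality_seconds / baseline_finality_seconds,
        health.backlog_ratio,
    )
    mode = "defensive" if stress >= 2.0 else "market_making"
    return {
        "mode": mode,
        "spread_bps": base_spread_bps * stress,
        "size_fraction": 1.0 / stress,
    }


print(quoting_params(NetworkHealth(finality_seconds=15.0, backlog_ratio=0.5)))
print(quoting_params(NetworkHealth(finality_seconds=45.0, backlog_ratio=1.2)))
```

Taking the maximum of the two indicators means either slow finality or a deep backlog is enough to trigger the defensive posture, which errs on the side of caution.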

Evolution

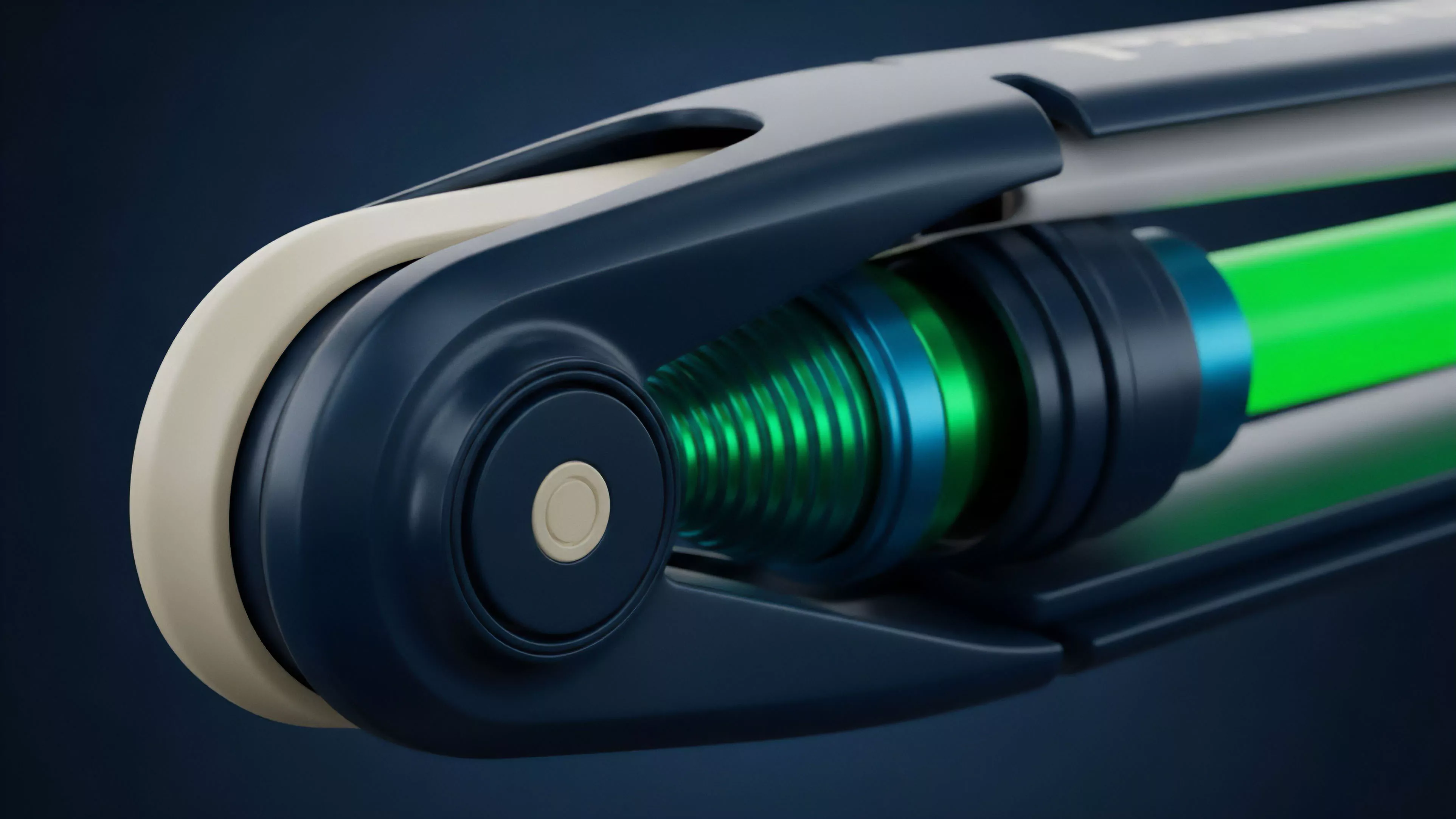

The transition from monolithic to modular consensus structures is one of the most significant shifts in the history of decentralized finance. Early systems required every validator to process every global state update, which capped performance at what a typical validator node could handle.

This bottleneck created significant inefficiencies, particularly for time-sensitive instruments like options and perpetuals. The introduction of Zero-Knowledge Proofs and Data Availability Layers changed this, allowing large batches of transactions to be verified on the base layer at low cost. This evolution shifted the burden of performance from the base layer to secondary, high-speed execution environments, effectively creating a multi-layered financial stack.

Modular consensus designs allow for specialized performance at each layer, enabling high-frequency trading capabilities within a decentralized, trustless environment.

This development reflects a broader trend toward specialization, where the base layer provides the security substrate and higher layers provide the speed required by global capital. These systems are now maturing, and the focus has moved from theoretical throughput to the practical, adversarially tested stability of the settlement layer.

Horizon

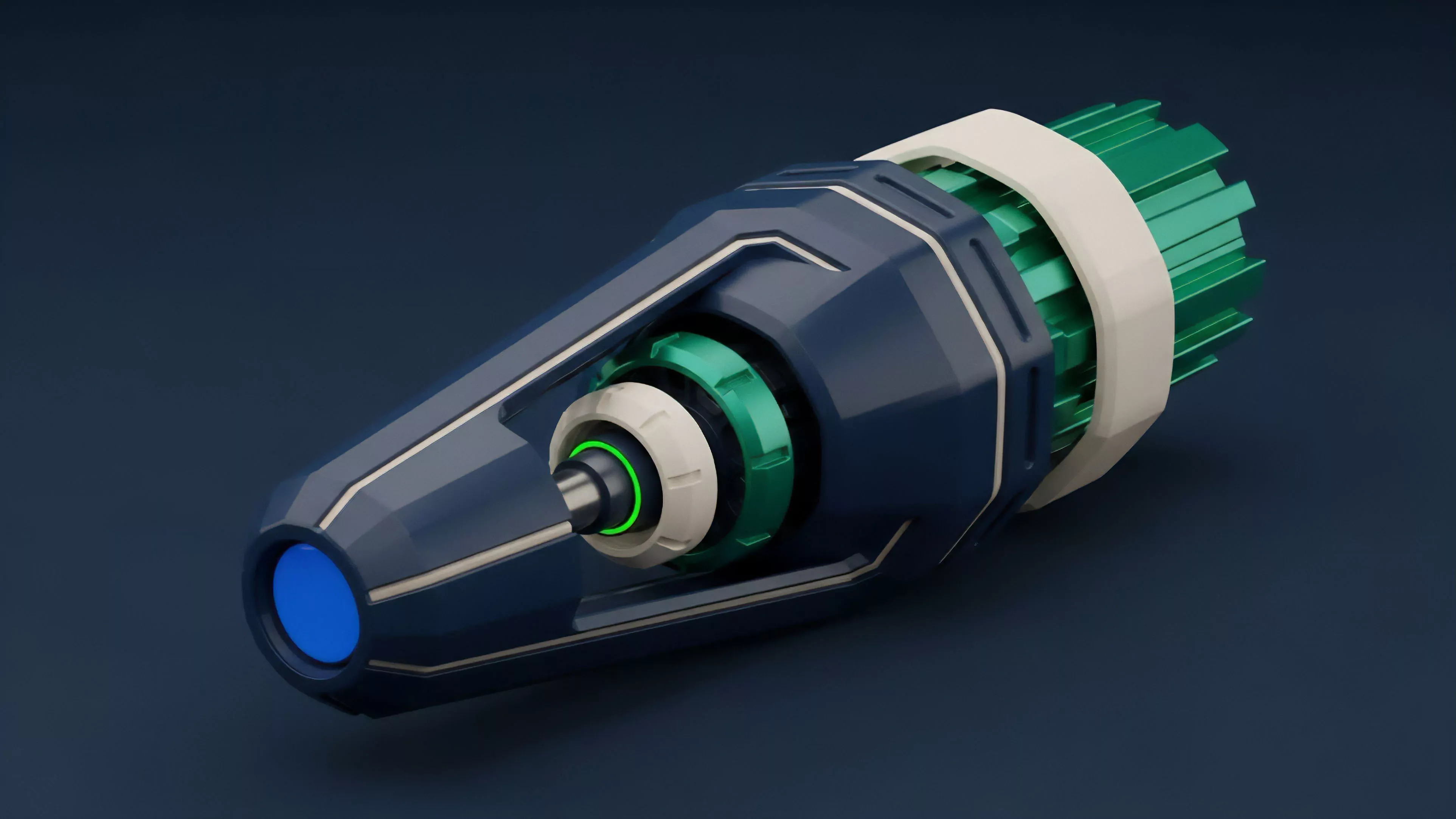

The future of Consensus Layer Performance lies in the integration of AI-driven congestion management and predictive validator scheduling. As networks scale, the ability to dynamically allocate resources based on anticipated market volatility will become the defining competitive advantage for any protocol supporting derivative markets.

| Development | Impact on Derivatives |
| --- | --- |
| AI-Optimized Routing | Reduced latency during peak market volatility |
| Predictive Finality | Lower collateral requirements for instant settlement |
| Adaptive Consensus | Dynamic scaling based on real-time throughput demand |

The next generation of protocols will treat the consensus layer as a fluid resource that expands and contracts with the needs of the financial instruments running on top of it. This creates a more resilient system, capable of absorbing large liquidity shocks without compromising the integrity of derivative contracts. The ultimate goal is a state where the underlying consensus is invisible, providing a frictionless, high-performance foundation for global value transfer.