Essence

Consensus Algorithms represent the mechanical foundations for state synchronization across distributed ledger environments. They dictate how participants reach agreement on the validity of transactions without reliance on centralized intermediaries. These protocols enforce consistency and liveness, ensuring that the ledger remains a single, immutable source of truth despite adversarial conditions or network partitions.

Consensus algorithms function as the distributed rulebooks governing how independent network participants validate data to maintain a unified state.

These systems manage the trade-offs between speed, security, and decentralization. A robust protocol aligns economic incentives with technical performance, compelling actors to act in the interest of the network. Failure to maintain this alignment results in network instability, forks, or susceptibility to various attack vectors, directly impacting the integrity of any derivative instruments settled on the platform.

Origin

The genesis of these systems lies in solving the Byzantine Generals Problem, a classic challenge in distributed computing regarding how to achieve consensus in a system where components may fail or behave maliciously.

Satoshi Nakamoto's Bitcoin design applied Proof of Work, a technique with roots in earlier anti-spam schemes such as Hashcash, as the first practical breakthrough, using computational expenditure to solve the double-spend problem without a central authority.

- Proof of Work established the precedent of linking security to tangible resource expenditure.

- Byzantine Fault Tolerance models provided the academic framework for evaluating network resilience against node failure.

- Sybil Resistance mechanisms emerged as a necessary defense against attackers creating multiple identities to manipulate voting or validation power.

This history reveals a transition from purely cryptographic signatures to game-theoretic incentive structures. The evolution reflects a growing realization that technical protocols alone cannot ensure security; economic stakes must underpin the validator selection process to discourage malicious behavior effectively.
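The link between security and resource expenditure that Proof of Work established can be illustrated with a toy hash puzzle. This is a minimal sketch, not Bitcoin's actual scheme: it assumes a single SHA-256 pass, an 8-byte nonce, and a difficulty expressed as leading zero bits, and the names `meets_target` and `mine` are hypothetical.

```python
import hashlib


def meets_target(block_data: bytes, nonce: int, difficulty_bits: int) -> bool:
    """Check whether SHA-256(block_data || nonce) starts with `difficulty_bits` zero bits."""
    digest = hashlib.sha256(block_data + nonce.to_bytes(8, "big")).digest()
    # A 256-bit digest has the required leading zeros exactly when it is below this target.
    target = 1 << (256 - difficulty_bits)
    return int.from_bytes(digest, "big") < target


def mine(block_data: bytes, difficulty_bits: int) -> int:
    """Try nonces in order until one satisfies the target.

    The expected number of attempts is about 2**difficulty_bits, so the
    cost of producing a valid block grows exponentially with difficulty.
    """
    nonce = 0
    while not meets_target(block_data, nonce, difficulty_bits):
        nonce += 1
    return nonce


# Finding a nonce is expensive; checking one takes a single hash.
nonce = mine(b"example block", difficulty_bits=12)
assert meets_target(b"example block", nonce, 12)
```

The asymmetry is the point of the design: producing a valid nonce takes many hash evaluations, while any participant can verify it with one.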

Theory

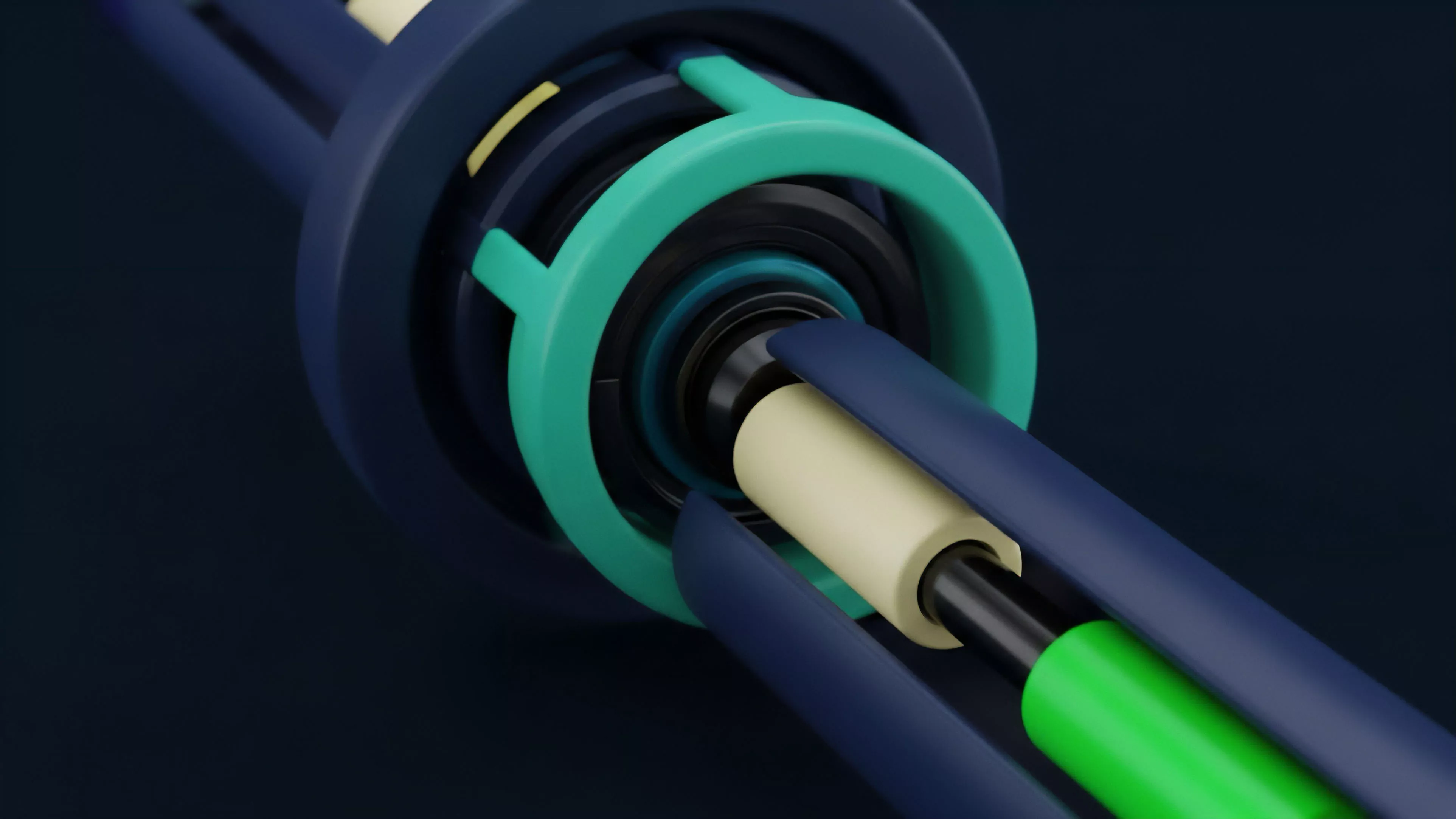

The architectural structure of a Consensus Algorithm determines the settlement finality and throughput capacity of a financial protocol. Systems rely on specific mechanisms to verify transaction ordering and data integrity, directly influencing the margin requirements and liquidation engines of derivative platforms.

| Mechanism | Resource Basis | Finality Type |
| --- | --- | --- |
| Proof of Work | Computational Power | Probabilistic |
| Proof of Stake | Capital Lockup | Deterministic |
| Delegated Proof of Stake | Reputational/Stake Weight | Fast Deterministic |
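To make "probabilistic" finality concrete, the sketch below follows the catch-up calculation from the Bitcoin whitepaper: the chance that an attacker controlling a fraction `q` of the hash power ever overtakes the honest chain after `z` confirmations. It assumes the whitepaper's simplified model (constant hash-power shares, Poisson-distributed attacker progress), and the function name is illustrative.

```python
from math import exp, factorial


def attacker_success_probability(q: float, z: int) -> float:
    """Probability that an attacker with hash-power share q rewrites a
    transaction buried under z confirmations (whitepaper model)."""
    if not 0.0 <= q < 1.0:
        raise ValueError("q must be in [0, 1)")
    if z < 0:
        raise ValueError("z must be non-negative")
    p = 1.0 - q
    if q >= p:
        # With half or more of the hash power the attacker always catches up.
        return 1.0
    lam = z * (q / p)  # expected attacker blocks while honest miners find z blocks
    prob = 1.0
    for k in range(z + 1):
        poisson = exp(-lam) * lam**k / factorial(k)
        prob -= poisson * (1.0 - (q / p) ** (z - k))
    return prob


# The reversal probability falls off quickly as confirmations accumulate.
for confirmations in (0, 1, 3, 6):
    print(confirmations, attacker_success_probability(0.10, confirmations))
```

Under this model, settlement is never absolute. A derivative platform on such a chain effectively chooses a confirmation depth at which the residual reversal risk is acceptable.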

These mechanisms also bear on derivatives risk management through the Greeks, specifically delta and gamma, viewed here as protocol-level risk. A change in the underlying consensus mechanism alters the variance of block production times, which affects how reliably hedges can be rebalanced on-chain and therefore the pricing of short-dated options and the efficiency of automated market makers.
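A rough way to see how the consensus mechanism changes block-time variance is to compare the chance of a long gap between blocks under two stylized models. The sketch assumes exponentially distributed block intervals for a Proof of Work chain (Poisson block arrivals) and fixed slots with independent misses for a slot-based chain. Both functions and the `miss_rate` parameter are hypothetical simplifications.

```python
from math import exp, floor


def prob_gap_exceeds_poisson(gap_seconds: float, mean_block_time: float) -> float:
    """P(next block takes longer than gap_seconds) when block intervals
    are exponentially distributed with the given mean."""
    return exp(-gap_seconds / mean_block_time)


def prob_gap_exceeds_fixed_slots(gap_seconds: float, slot_seconds: float,
                                 miss_rate: float) -> float:
    """P(next block takes longer than gap_seconds) when blocks can only
    appear at fixed slot boundaries and each slot is missed independently."""
    # Every slot falling within the gap must be missed for the gap to be exceeded.
    slots_that_must_miss = floor(gap_seconds / slot_seconds)
    return miss_rate ** slots_that_must_miss


# Compare a wait of three mean intervals under each model.
print(prob_gap_exceeds_poisson(1800, mean_block_time=600))
print(prob_gap_exceeds_fixed_slots(36, slot_seconds=12, miss_rate=0.01))
```

In the exponential model the standard deviation of the block interval equals its mean, so long gaps are routine. In the slot model they require several consecutive misses. A hedger rebalancing delta on-chain faces very different worst-case delays in the two cases.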

The choice of consensus mechanism fundamentally defines the settlement latency and risk profile for all derivatives built upon the ledger.

These protocols function within an adversarial environment where participants optimize for their own utility. Behavioral game theory informs the design of slashing conditions and reward schedules, aiming to create an equilibrium where honest participation yields the highest expected value.
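The equilibrium condition described above can be sketched as a comparison of expected values. This is a deliberately simple one-period model, not any specific protocol's rule set: a validator either follows the protocol and earns `reward`, or deviates, earning `reward + extra_gain` if undetected and losing `slash_fraction * stake` if caught. All parameter names are assumptions made for illustration.

```python
def honest_expected_value(reward: float) -> float:
    """Payoff from following the protocol for one period."""
    return reward


def deviating_expected_value(reward: float, extra_gain: float, stake: float,
                             slash_fraction: float, detection_prob: float) -> float:
    """Expected payoff from misbehaving once under the toy model."""
    payoff_if_caught = -slash_fraction * stake
    payoff_if_missed = reward + extra_gain
    return detection_prob * payoff_if_caught + (1.0 - detection_prob) * payoff_if_missed


def min_slash_fraction(reward: float, extra_gain: float, stake: float,
                       detection_prob: float) -> float:
    """Smallest slash fraction at which honesty is at least as profitable.

    Solves reward >= deviating_expected_value(...) for slash_fraction.
    A result above 1.0 means the stake is too small to deter the deviation.
    """
    if detection_prob <= 0.0 or stake <= 0.0:
        raise ValueError("detection_prob and stake must be positive")
    needed = ((1.0 - detection_prob) * (reward + extra_gain) - reward) / (
        detection_prob * stake
    )
    return max(0.0, needed)


# Example: reward 1, attack gain 10, stake 100, 50% chance of detection.
threshold = min_slash_fraction(reward=1.0, extra_gain=10.0, stake=100.0, detection_prob=0.5)
print(threshold)
```

The model suggests two levers for a designer: raising the probability of detection or raising the stake at risk. Either one lowers the slash fraction needed to make honest participation the best response.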

Approach

Current implementations prioritize scalability through sharding and Layer 2 scaling solutions that inherit security from the primary Consensus Algorithm. Market participants evaluate these protocols based on their capacity to handle high-frequency order flow without sacrificing decentralization.

- Validator Selection now frequently utilizes stake-weighted random sampling to mitigate centralization risks.

- State Finality is achieved through multi-round voting processes that prevent deep reorgs in the chain.

- MEV, originally Miner Extractable Value and now more often called Maximal Extractable Value, has become a central focus, as validators optimize transaction ordering to maximize returns, creating significant implications for market microstructure.
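The first two items in the list above, stake-weighted sampling and vote-based finality, can be sketched in a few lines. This is an illustrative model only. A seeded pseudo-random generator stands in for the verifiable randomness that real protocols derive on-chain, and the two-thirds threshold is a common BFT-style assumption rather than a universal rule. The names `select_proposer` and `has_supermajority` are hypothetical.

```python
import random


def select_proposer(stakes: dict[str, int], seed: int) -> str:
    """Pick one validator with probability proportional to its stake."""
    rng = random.Random(seed)      # stand-in for a shared, verifiable random beacon
    validators = sorted(stakes)    # fixed ordering so every node derives the same result
    weights = [stakes[v] for v in validators]
    return rng.choices(validators, weights=weights, k=1)[0]


def has_supermajority(voting_stake: int, total_stake: int) -> bool:
    """True when the voting stake is at least two-thirds of the total.

    Integer arithmetic avoids floating-point rounding at the boundary.
    """
    return 3 * voting_stake >= 2 * total_stake


stakes = {"alice": 60, "bob": 30, "carol": 10}
proposer = select_proposer(stakes, seed=42)

# A block is treated as final once enough stake has voted for it.
votes_for_block = stakes["alice"] + stakes["carol"]
print(proposer, has_supermajority(votes_for_block, sum(stakes.values())))
```

Because selection is proportional to stake, a validator with 60% of the stake proposes roughly 60% of the time. This is why concentration of stake translates directly into influence over ordering.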

This reality forces architects to consider the systemic risk posed by validator cartels. When a few entities control the majority of the staked assets or computational power, the protocol becomes vulnerable to censorship and price manipulation, threatening the stability of any derivatives market operating on that infrastructure.
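One common way to quantify this concentration risk is a Nakamoto-style coefficient: the smallest number of entities whose combined share exceeds a critical threshold. The sketch below assumes a default threshold of one third, the share that can stall finality in many BFT-style designs. A threshold of one half is the usual choice for hash-power majorities. The function name and defaults are illustrative.

```python
def nakamoto_coefficient(stakes: list[float], threshold: float = 1 / 3) -> int:
    """Smallest number of entities whose combined stake exceeds
    `threshold` of the total stake."""
    total = sum(stakes)
    if total <= 0:
        raise ValueError("total stake must be positive")
    if not 0.0 < threshold < 1.0:
        raise ValueError("threshold must be between 0 and 1")
    running = 0.0
    # Accumulate from the largest holder downward until the threshold is crossed.
    for count, stake in enumerate(sorted(stakes, reverse=True), start=1):
        running += stake
        if running > threshold * total:
            return count
    return len(stakes)


# A lower coefficient means fewer entities need to collude.
print(nakamoto_coefficient([40, 30, 20, 10]))
print(nakamoto_coefficient([40, 30, 20, 10], threshold=0.5))
```

A low value indicates that few colluding entities are needed, which is the cartel scenario the paragraph above describes.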

Evolution

The transition from resource-intensive mining to capital-efficient staking marked a shift toward institutional-grade infrastructure. Earlier iterations focused on maximizing censorship resistance at the cost of throughput.

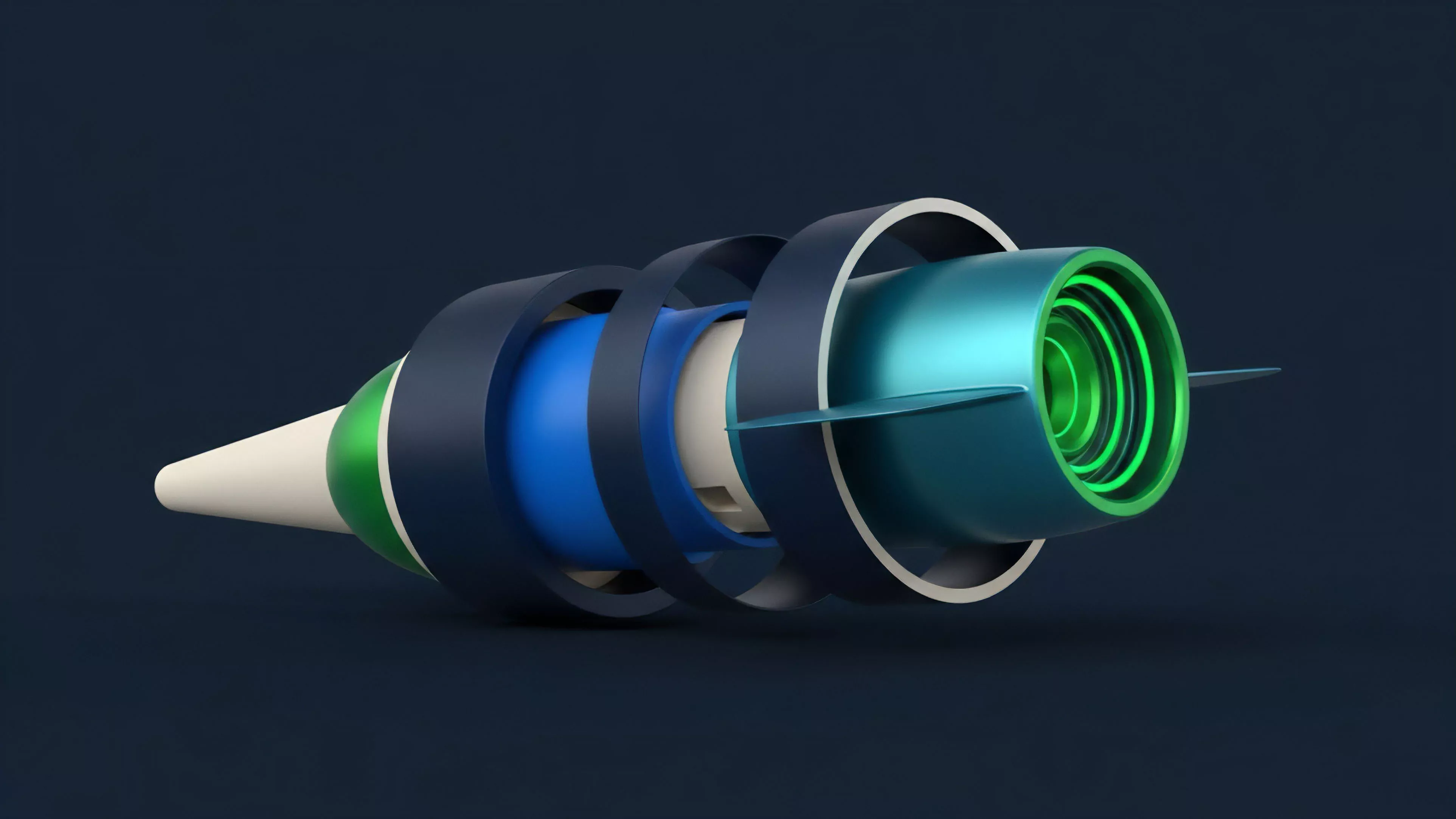

Modern designs incorporate cryptographic primitives such as zero-knowledge proofs, which let a chain verify execution without re-running it and so help decouple execution from data availability.

Modern protocol design increasingly emphasizes decoupling execution from settlement to maximize network efficiency and throughput.

One might consider how the evolution of these protocols mirrors the history of clearinghouses in traditional finance, where the need for rapid, reliable settlement drove the creation of centralized hubs. The current movement toward Liquid Staking derivatives adds a layer of complexity, introducing systemic leverage that could propagate failure if the underlying protocol faces a consensus-level challenge.
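The leverage concern can be made concrete with simple arithmetic. The sketch assumes a position funded with one unit of equity and borrowed funds at a given leverage, collateralized entirely by a liquid staking token, with debt fixed in value. It ignores liquidation thresholds, fees, and interest. Both function names are hypothetical.

```python
def equity_after_shock(leverage: float, collateral_drop: float) -> float:
    """Remaining equity per unit of initial equity after the collateral
    loses `collateral_drop` of its value (for example through slashing or a depeg).

    Assets start at `leverage`, debt at `leverage - 1`, so
    equity = leverage * (1 - drop) - (leverage - 1) = 1 - leverage * drop.
    """
    if leverage < 1.0:
        raise ValueError("leverage must be at least 1")
    return max(0.0, 1.0 - leverage * collateral_drop)


def wipeout_drop(leverage: float) -> float:
    """Collateral decline at which equity reaches zero."""
    if leverage < 1.0:
        raise ValueError("leverage must be at least 1")
    return 1.0 / leverage


# A 10% collateral loss at 3x leverage removes about 30% of the equity.
print(equity_after_shock(leverage=3.0, collateral_drop=0.10))
print(wipeout_drop(4.0))
```

Even in this stripped-down model, a consensus-level event that marks staked assets down by a modest percentage is multiplied by the leverage layered on top of them.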

Horizon

Future developments will likely focus on Modular Consensus, where protocols separate data availability, execution, and settlement into distinct layers. This approach allows for tailored security models based on the specific requirements of the application, such as high-frequency options trading versus low-frequency settlement.

| Future Trend | Implication |
| --- | --- |
| Modular Execution | Increased throughput for derivatives |
| Cross-Chain Consensus | Unified liquidity across ecosystems |
| Zero-Knowledge Settlement | Enhanced privacy and finality |

The ultimate goal remains the creation of a global, permissionless settlement layer that can support the complexity of traditional finance while retaining the transparency of decentralized ledgers. The primary hurdle is not technical but systemic: designing protocols that remain resilient under extreme market stress while maintaining the incentive structures necessary for decentralized operation.