Essence

Computational Finance within digital asset markets functions as the mathematical engine for price discovery and risk management. It transforms raw blockchain data into actionable models, enabling the systematic valuation of derivative instruments. By applying rigorous quantitative techniques to decentralized order books, participants quantify uncertainty and manage exposure in highly volatile environments.

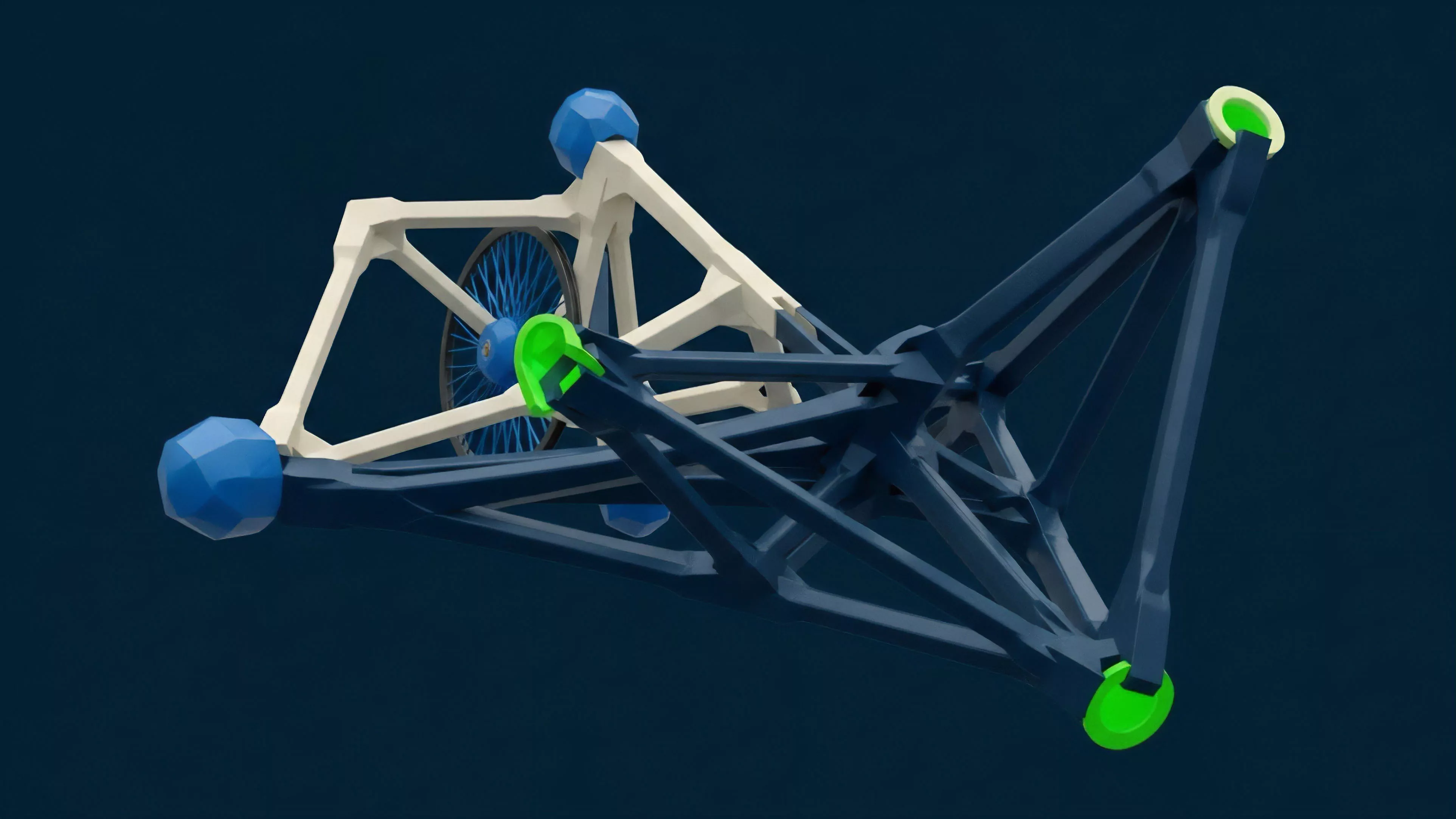

Computational Finance provides the quantitative infrastructure necessary to value risk and price derivatives within decentralized markets.

This domain relies on the intersection of stochastic calculus, numerical methods, and high-performance computing to solve complex valuation problems. It addresses the unique challenges of crypto markets, such as fragmented liquidity and the absence of traditional centralized clearing. Through these methods, market participants move beyond speculative trading, adopting structured strategies that account for systemic feedback loops and protocol-specific constraints.

Origin

The genesis of this field traces back to the adaptation of classical quantitative models from traditional equity and commodity markets to the nascent infrastructure of decentralized finance.

Early practitioners sought to apply the Black-Scholes-Merton framework to options on volatile assets like Bitcoin. Doing so required adjusting the model's standard assumptions to accommodate features such as 24/7 trading, frequent volatility spikes, and the absence of traditional margin requirements.
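As an illustration of that adaptation, the following is a minimal sketch of Black-Scholes-Merton pricing with one crypto-specific adjustment: time to expiry is measured on a 365-day year, since the market trades 24/7. The function names, the zero default interest rate, and the sample inputs are assumptions chosen for the example, not values taken from any particular venue.

```python
import math


def norm_cdf(x: float) -> float:
    """Standard normal CDF via the error function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def bsm_price(spot, strike, vol, days_to_expiry, rate=0.0, is_call=True):
    """European option price under Black-Scholes-Merton (no dividends).

    Assumption: a 365-day year, because the market never closes;
    `vol` must be annualized on the same basis.
    """
    t = days_to_expiry / 365.0
    if t <= 0.0 or vol <= 0.0:
        raise ValueError("days_to_expiry and vol must be positive")
    sqrt_t = math.sqrt(t)
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol ** 2) * t) / (vol * sqrt_t)
    d2 = d1 - vol * sqrt_t
    discounted_strike = strike * math.exp(-rate * t)
    if is_call:
        return spot * norm_cdf(d1) - discounted_strike * norm_cdf(d2)
    return discounted_strike * norm_cdf(-d2) - spot * norm_cdf(-d1)


# Hypothetical inputs: a 30-day option with 65% annualized volatility.
call = bsm_price(spot=30_000, strike=32_000, vol=0.65, days_to_expiry=30)
put = bsm_price(spot=30_000, strike=32_000, vol=0.65, days_to_expiry=30, is_call=False)
print(f"call={call:.2f} put={put:.2f}")
```

The model still assumes lognormal returns with constant volatility, which is exactly the assumption that heavy-tailed crypto returns strain.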

- Stochastic Volatility Models were adapted to account for the heavy-tailed distributions observed in digital asset price movements.

- Automated Market Maker Mechanisms introduced new challenges for modeling impermanent loss and liquidity provider risk.

- Smart Contract Oracles emerged as the technical bridge, feeding real-time price data into on-chain pricing engines.
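The impermanent loss mentioned above has a simple closed form in one common special case. The sketch below assumes a two-asset constant-product pool (x * y = k) with equal value weights and ignores trading fees. Other pool designs behave differently, and the function name is hypothetical.

```python
import math


def impermanent_loss(price_ratio: float) -> float:
    """Value of an LP position relative to simply holding, minus 1.

    `price_ratio` is the new price divided by the price at deposit.
    Assumes a fee-less, 50/50 constant-product pool.
    """
    if price_ratio <= 0.0:
        raise ValueError("price_ratio must be positive")
    return 2.0 * math.sqrt(price_ratio) / (1.0 + price_ratio) - 1.0


for ratio in (0.5, 1.0, 2.0, 4.0):
    print(f"price x{ratio}: {impermanent_loss(ratio):.4f}")
```

The result is zero when the price is unchanged and negative otherwise, and it is symmetric in the sense that a doubling and a halving of the price produce the same loss.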

As these markets matured, the focus shifted from simple replication to the creation of native derivative structures. Developers began designing protocols that internalized risk management through code rather than intermediaries. This evolution replaced legacy settlement systems with autonomous, collateralized mechanisms, fundamentally altering how market participants interact with leverage and counterparty risk.

Theory

The theoretical framework governing these systems rests on the assumption that market behavior can be described through probabilistic models, despite the adversarial nature of decentralized protocols.

Quantitative analysts utilize specific metrics, known as Greeks, to measure sensitivity to price, time, and volatility changes. These metrics allow for the dynamic hedging of portfolios, minimizing exposure to adverse market movements.

Greeks serve as the fundamental diagnostic tools for managing directional and volatility risk in complex derivative portfolios.

| Metric | Financial Function | Systemic Relevance |
|---|---|---|
| Delta | Price sensitivity | Determines hedging requirements |
| Gamma | Rate of delta change | Indicates risk of rapid liquidation |
| Vega | Volatility sensitivity | Quantifies exposure to market stress |
| Theta | Time decay | Measures option premium erosion |
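The four metrics in the table have closed-form expressions under Black-Scholes-Merton. The sketch below computes them for a European call with no dividends. The function name and sample inputs are illustrative assumptions, and a production system would typically use a richer volatility model.

```python
import math


def norm_pdf(x: float) -> float:
    return math.exp(-0.5 * x * x) / math.sqrt(2.0 * math.pi)


def norm_cdf(x: float) -> float:
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def call_greeks(spot, strike, vol, t_years, rate=0.0):
    """Black-Scholes-Merton Greeks for a European call (no dividends)."""
    sqrt_t = math.sqrt(t_years)
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol ** 2) * t_years) / (vol * sqrt_t)
    d2 = d1 - vol * sqrt_t
    return {
        # Change in option value per unit change in spot.
        "delta": norm_cdf(d1),
        # Change in delta per unit change in spot.
        "gamma": norm_pdf(d1) / (spot * vol * sqrt_t),
        # Change in value per 1.00 (100 percentage points) change in vol.
        "vega": spot * norm_pdf(d1) * sqrt_t,
        # Change in value per year of elapsed time (negative for a long call).
        "theta": -spot * norm_pdf(d1) * vol / (2.0 * sqrt_t)
        - rate * strike * math.exp(-rate * t_years) * norm_cdf(d2),
    }


greeks = call_greeks(spot=100.0, strike=100.0, vol=0.2, t_years=1.0, rate=0.05)
for name, value in greeks.items():
    print(f"{name}: {value:.4f}")
```

Vega and theta are expressed here per unit of volatility and per year. Dividing by 100 and by 365 respectively gives the per-point and per-day figures that are often quoted.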

Adversarial environments make Smart Contract Security a primary variable in valuation. Unlike in traditional finance, a code exploit can act as a systemic shock that instantly nullifies the value of underlying collateral. Consequently, the pricing of derivatives must incorporate a risk premium that accounts for the probability of protocol failure or governance manipulation.
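One simple way to express such a risk premium is to borrow the reduced-form approach used for credit risk. The sketch below is an assumption made for illustration, not a method described in the text. It treats protocol failure as an event arriving at a constant annualized hazard rate, independent of the underlying price, with a fixed recovery fraction. All names and parameters are hypothetical.

```python
import math


def failure_adjusted_value(model_value, hazard_rate, t_years, recovery=0.0):
    """Discount a model value for the chance the protocol fails before expiry.

    Assumptions: constant hazard rate, failure independent of the
    underlying price, and a fixed recovery fraction on failure.
    """
    if hazard_rate < 0.0 or not 0.0 <= recovery <= 1.0:
        raise ValueError("hazard_rate must be >= 0 and recovery in [0, 1]")
    survival = math.exp(-hazard_rate * t_years)
    return model_value * (survival + (1.0 - survival) * recovery)


# Hypothetical: a 10% annualized failure intensity over a one-year horizon.
print(f"{failure_adjusted_value(100.0, hazard_rate=0.10, t_years=1.0):.4f}")
```

The difference between the unadjusted and adjusted values is the implied premium. In practice the hazard rate is hard to estimate and is unlikely to be independent of market stress.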

Approach

Modern practitioners deploy sophisticated algorithmic strategies to navigate liquidity fragmentation across multiple decentralized exchanges.

This involves building custom infrastructure to capture high-fidelity data, which is then processed through proprietary models to identify arbitrage opportunities or mispriced volatility. Execution occurs via automated agents that minimize slippage and optimize trade routing.
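One common way to look for mispriced volatility is to back out the implied volatility from a quoted option price and compare it with a model forecast. The sketch below does this by bisection under Black-Scholes-Merton. The function names, search bounds, and sample numbers are assumptions for illustration rather than part of any specific trading system.

```python
import math


def norm_cdf(x: float) -> float:
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def call_price(spot, strike, vol, t_years, rate=0.0):
    """European call price under Black-Scholes-Merton (no dividends)."""
    sqrt_t = math.sqrt(t_years)
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol ** 2) * t_years) / (vol * sqrt_t)
    d2 = d1 - vol * sqrt_t
    return spot * norm_cdf(d1) - strike * math.exp(-rate * t_years) * norm_cdf(d2)


def implied_vol(market_price, spot, strike, t_years, rate=0.0,
                lo=1e-6, hi=5.0, iterations=100):
    """Solve call_price(vol) == market_price by bisection on [lo, hi].

    Works because the call price increases monotonically with volatility.
    """
    low_price = call_price(spot, strike, lo, t_years, rate)
    high_price = call_price(spot, strike, hi, t_years, rate)
    if not low_price <= market_price <= high_price:
        raise ValueError("price is not attainable for any vol in [lo, hi]")
    for _ in range(iterations):
        mid = 0.5 * (lo + hi)
        if call_price(spot, strike, mid, t_years, rate) < market_price:
            lo = mid
        else:
            hi = mid
    return 0.5 * (lo + hi)


# Hypothetical quote: generated at 70% vol, compared with a 60% forecast.
quote = call_price(30_000, 32_000, 0.70, 30 / 365)
iv = implied_vol(quote, 30_000, 32_000, 30 / 365)
print(f"implied vol={iv:.4f}, spread vs 0.60 forecast={iv - 0.60:+.4f}")
```

A positive spread suggests the option is priced above the forecast. Whether that is a tradable edge depends on fees, slippage, and the quality of the forecast.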

Algorithmic execution strategies prioritize liquidity capture and risk-adjusted returns within fragmented decentralized trading venues.

The strategic landscape is defined by the following core operational components:

- Latency Management involves minimizing the time between signal generation and on-chain execution to avoid adverse selection.

- Capital Efficiency requires the continuous optimization of collateral ratios to maximize exposure while maintaining liquidation buffers.

- Cross-Protocol Arbitrage exploits price discrepancies between decentralized and centralized venues, reinforcing market integration.
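The trade-off between exposure and liquidation buffers in the capital efficiency item can be made concrete with a small calculation. The sketch below assumes a simple overcollateralized position with a single collateral asset, debt denominated in a stable unit, and one minimum collateral ratio. The class and field names are hypothetical, and real protocols add fees, interest, and multiple thresholds.

```python
from dataclasses import dataclass


@dataclass
class Position:
    collateral_units: float   # amount of the collateral asset posted
    debt: float               # borrowed amount, in the quote currency
    min_ratio: float          # ratio below which liquidation is allowed

    def collateral_ratio(self, price: float) -> float:
        """Collateral value divided by debt at the given price."""
        return self.collateral_units * price / self.debt

    def liquidation_price(self) -> float:
        """Price at which the ratio falls exactly to min_ratio."""
        return self.min_ratio * self.debt / self.collateral_units

    def liquidation_buffer(self, price: float) -> float:
        """Fractional price drop that can be absorbed before liquidation."""
        return 1.0 - self.liquidation_price() / price


# Hypothetical position: 10 units at a price of 2,000 backing 10,000 of debt.
pos = Position(collateral_units=10.0, debt=10_000.0, min_ratio=1.5)
print(pos.collateral_ratio(2_000.0))    # 2.0
print(pos.liquidation_price())          # 1500.0
print(pos.liquidation_buffer(2_000.0))  # 0.25
```

Borrowing more against the same collateral raises exposure but shrinks the buffer, which is the optimization the list item describes.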

Market makers utilize these approaches to provide depth to order books, often employing dynamic hedging to remain delta-neutral. This requires constant interaction with on-chain data streams, ensuring that pricing models remain calibrated to current network activity and broader macro-crypto correlations. The effectiveness of these strategies hinges on the robustness of the underlying infrastructure, particularly the speed and reliability of data feeds.
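The delta-neutral hedging described above can be sketched as a rebalancing rule. The example assumes that each option position is summarized by a quantity and a per-unit delta, that the hedge instrument (spot or a perpetual) has a delta of 1, and that trades are only sent when net delta exceeds a tolerance band. The function name and the band are hypothetical.

```python
def hedge_order(option_positions, current_hedge, threshold=0.0):
    """Return the hedge-instrument quantity to trade to flatten net delta.

    option_positions: iterable of (quantity, delta_per_unit) pairs.
    current_hedge:    signed quantity already held in the hedge instrument.
    threshold:        net delta tolerated before rebalancing (to limit costs).
    """
    net_delta = sum(qty * delta for qty, delta in option_positions) + current_hedge
    if abs(net_delta) <= threshold:
        return 0.0
    return -net_delta


# Hypothetical book: long 10 calls (delta 0.6), short 5 calls (delta 0.4),
# already short 3 units of the underlying as a hedge.
book = [(10, 0.6), (-5, 0.4)]
print(hedge_order(book, current_hedge=-3.0))                 # sell 1 more unit
print(hedge_order(book, current_hedge=-3.0, threshold=1.5))  # within band: no trade
```

Because deltas move with price (gamma), this calculation has to be repeated as new data arrives. The threshold trades hedge accuracy against transaction and gas costs.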

Evolution

The field has transitioned from basic, off-chain replicated products to complex, on-chain native instruments.

Initial efforts were limited to centralized venues offering linear products, but the current landscape features decentralized perpetuals, options vaults, and structured products that utilize smart contracts for automated settlement. This shift reflects a broader trend toward trust-minimized financial architecture, where the protocol itself enforces margin requirements and liquidations.

Native on-chain derivatives represent the transition from trust-based intermediaries to protocol-enforced financial settlement systems.

The trajectory of this development is marked by several distinct phases:

- Replication Phase focused on porting traditional financial models directly to digital asset platforms.

- Innovation Phase saw the development of automated margin and liquidation engines specific to blockchain constraints.

- Systemic Integration Phase emphasizes the interconnection of derivatives with lending protocols and yield-bearing assets.

This development trajectory is not linear. Technical limitations, such as gas costs and oracle latency, have forced designers to innovate within constrained environments, leading to the adoption of layer-two scaling solutions and off-chain order books with on-chain settlement. These architectural choices reflect a pragmatic recognition of the trade-offs between decentralization, security, and performance.

Horizon

The next stage of development centers on the convergence of institutional-grade quantitative modeling with permissionless infrastructure.

This will involve the deployment of advanced machine learning models for predictive volatility analysis, integrated directly into on-chain protocols. Furthermore, the rise of privacy-preserving computation will allow for more complex derivative structures without sacrificing the confidentiality of trading strategies.

Future derivative protocols will utilize privacy-preserving computation and machine learning to achieve institutional-grade market efficiency.

The evolution of regulatory frameworks will likely influence the design of future protocols, pushing architecture toward hybrid models that satisfy compliance requirements while maintaining decentralization. Market participants will increasingly rely on sophisticated, autonomous systems that can adapt to rapid shifts in liquidity and systemic risk. The ultimate goal is a global, interoperable financial layer where derivative instruments are as accessible and secure as the underlying assets themselves. An open question remains: how can automated risk management be reconciled with the inherent unpredictability of decentralized governance?