Essence

Collateralized Data Feeds represent a fundamental shift in oracle design, moving beyond a simple data relay service to a mechanism for economic security. The core challenge for decentralized derivatives protocols is the “oracle problem,” where a smart contract must rely on external data, such as asset prices, to execute critical functions such as liquidations and settlements. If this data source is compromised or provides inaccurate information, the protocol’s entire financial state can be destabilized, leading to cascading failures.

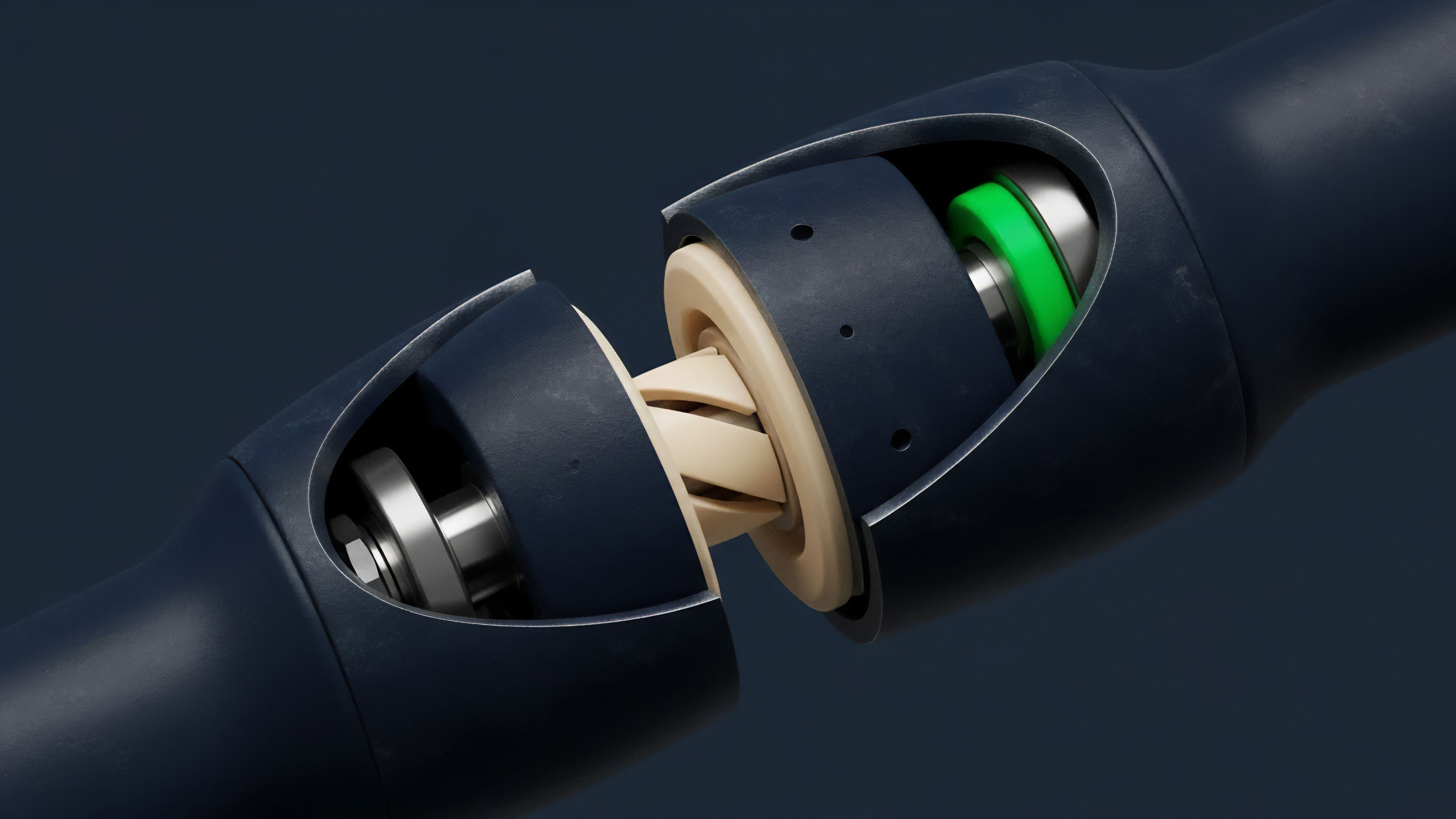

A Collateralized Data Feed mitigates this systemic risk by requiring the data provider to stake a significant amount of capital as collateral. This collateral acts as a financial guarantee of data integrity.

The Collateralized Data Feed transforms the data provider from a simple information source into an economically aligned counterparty, making the cost of providing malicious data prohibitively high.

The data feed itself becomes a financial instrument where the value of the underlying collateral directly backs the veracity of the information being supplied. This design aligns incentives, ensuring that the oracle provider has more to lose from misbehavior than they stand to gain from a potential exploit. This architecture is particularly critical for options and derivatives markets, where small price discrepancies can trigger massive, asymmetric losses for users and the protocol’s liquidity pool.

The mechanism ensures that the integrity of the data stream is secured by capital at risk, rather than simply by reputation or trust in a centralized entity.

Origin

The necessity for Collateralized Data Feeds emerged directly from early failures in decentralized finance, where the “oracle problem” was exposed as the primary vector for systemic risk. In the initial phases of DeFi development, protocols relied on simplistic oracles, often a single data source or a small, permissioned committee.

These early models operated on the assumption that data providers would act honestly. However, market events and flash loan exploits demonstrated that a lack of economic incentive alignment made these systems vulnerable. Attackers quickly learned to manipulate spot markets on decentralized exchanges, which were often used as oracle sources, to trigger liquidations or profit from arbitrage against the derivatives protocols.

The need for a robust solution became apparent when protocols faced significant losses due to oracle manipulation during periods of high market volatility. The core issue was that the cost of manipulating the oracle was often lower than the potential profit from the exploit. The evolution toward collateralization was a direct response to this cost-benefit imbalance.

Early designs experimented with simple collateral requirements, but these evolved into more sophisticated systems with integrated dispute resolution mechanisms. The goal was to create a system where the data provider’s financial stake was sufficient to deter attacks, moving the system from a trust-based model to a verifiable, economically secured model.

Theory

The theoretical foundation of Collateralized Data Feeds rests on principles of game theory and economic security.

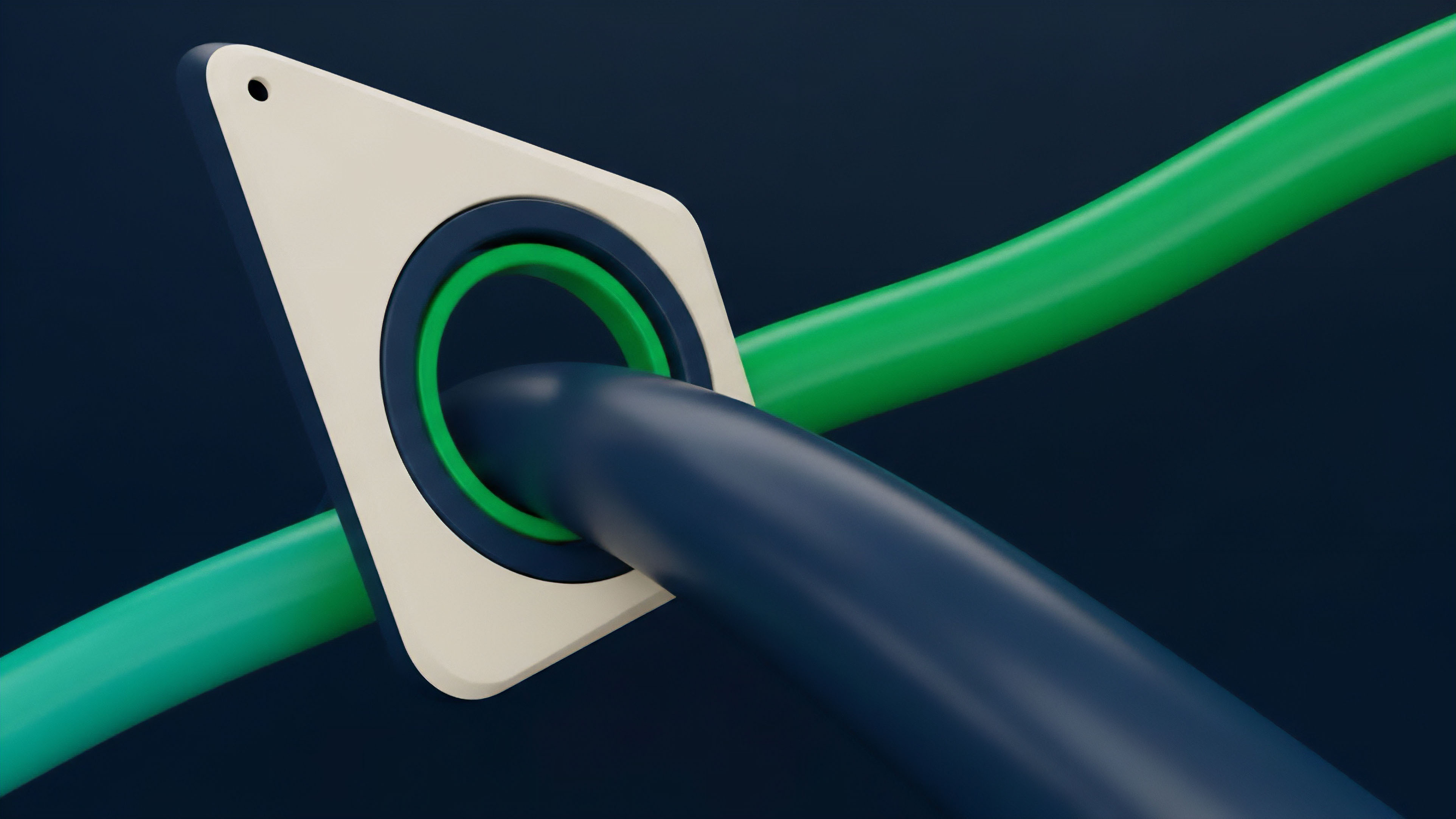

The design goal is a Nash equilibrium in which honest behavior is each participant’s best response: no data provider, user, or governance participant can profit by deviating unilaterally. This equilibrium is achieved by ensuring the cost of providing incorrect data (the slashing penalty) significantly outweighs the potential profit derived from the exploit. The core parameters that define this equilibrium are:

- Collateralization Ratio: The amount of collateral required relative to the value the feed secures, meaning the funds whose liquidation or settlement depends on the data. A higher ratio increases security but reduces capital efficiency.

- Slashing Mechanism: The process by which collateral is destroyed or redistributed when a data feed is proven to be malicious. The penalty must be severe enough to deter bad actors.

- Dispute Resolution System: A mechanism for challenging data feeds and verifying accuracy. This often involves a decentralized network of validators who vote on the validity of a data point.
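The deterrence condition behind these three parameters can be sketched in a few lines. This is a minimal illustration, not a protocol specification: `FeedParameters`, `slash_fraction`, and `detection_probability` are hypothetical names, and the model assumes a risk-neutral provider whose only cost of misreporting is the slashed stake.

```python
from dataclasses import dataclass


@dataclass
class FeedParameters:
    """Hypothetical parameters for one collateralized feed (illustrative names)."""
    stake: float                  # collateral posted by the data provider
    slash_fraction: float         # share of the stake lost on a proven fault (0..1)
    detection_probability: float  # assumed chance a bad report is successfully disputed


def expected_penalty(p: FeedParameters) -> float:
    """Expected loss to a provider who submits a malicious report."""
    return p.stake * p.slash_fraction * p.detection_probability


def is_deterred(p: FeedParameters, exploit_profit: float) -> bool:
    """True when misreporting has negative expected value for the provider."""
    return expected_penalty(p) > exploit_profit


def minimum_stake(p: FeedParameters, exploit_profit: float) -> float:
    """Stake at which the expected penalty equals the exploit profit."""
    return exploit_profit / (p.slash_fraction * p.detection_probability)
```

With a stake of 1,000,000, a slash fraction of 0.5, and a detection probability of 0.8, the expected penalty is about 400,000. Under these assumptions an exploit worth 250,000 is deterred, while one worth 500,000 is not unless the stake rises to roughly 1,250,000. The same arithmetic shows why a weak dispute system (low detection probability) has to be compensated with a higher collateralization ratio.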

This system functions as a decentralized insurance mechanism for data integrity. The collateral acts as a bond, providing financial recourse to users if the oracle fails. The security model must also account for Liveness versus Safety trade-offs.

Liveness ensures the data feed updates frequently, while Safety ensures the data is accurate. In options protocols, high volatility demands high liveness, but this increased speed can introduce new attack vectors if not properly secured by sufficient collateral and robust dispute mechanisms. The system design must balance these competing priorities to ensure both timely execution and financial integrity.
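One way a consuming protocol might express this trade-off is a guard that enforces a liveness bound (maximum age) and a safety bound (maximum jump) on every update. The sketch below is an assumption-laden illustration: `PriceUpdate`, `accept_update`, and both default thresholds are hypothetical, and a real protocol would tune or replace them.

```python
from dataclasses import dataclass


@dataclass
class PriceUpdate:
    price: float
    timestamp: float  # seconds since some epoch


def accept_update(
    last: PriceUpdate,
    new: PriceUpdate,
    now: float,
    max_age_s: float = 60.0,      # liveness bound (assumed value)
    max_deviation: float = 0.10,  # safety bound as a fraction (assumed value)
) -> str:
    """Classify an incoming update as 'stale', 'hold_for_dispute', or 'accepted'."""
    # Liveness: data older than the bound is useless for fast-moving markets.
    if now - new.timestamp > max_age_s:
        return "stale"
    # Safety: a large jump is not necessarily wrong, but it is held back
    # so that challengers have a chance to dispute it before it is used.
    change = abs(new.price - last.price) / last.price
    if change > max_deviation:
        return "hold_for_dispute"
    return "accepted"
```

Tightening `max_age_s` favors liveness, while tightening `max_deviation` favors safety at the cost of delaying legitimate updates during volatile periods.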

Approach

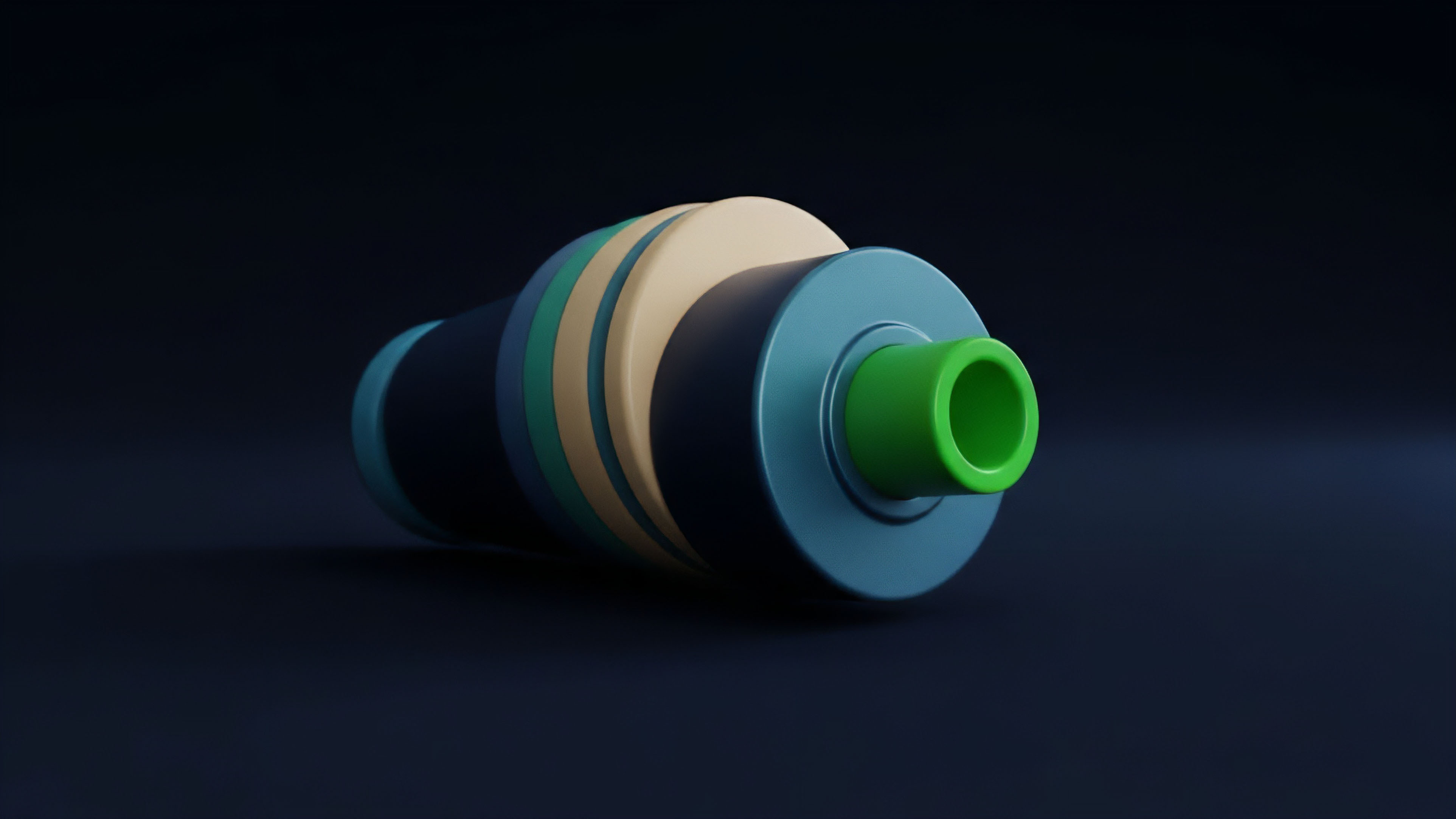

Implementing a Collateralized Data Feed (CDF) in a derivatives protocol requires careful architectural decisions, particularly regarding data aggregation and latency management. The choice of data source is critical. For options protocols, a Time-Weighted Average Price (TWAP) or Volume-Weighted Average Price (VWAP) from a decentralized exchange is often used instead of a single spot price.
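The two averages can be sketched as follows. This is a simplified off-chain illustration rather than any exchange's actual oracle implementation: the function names and input shapes are assumptions, and the TWAP assumes each observed price holds until the next observation.

```python
from typing import Sequence, Tuple


def twap(observations: Sequence[Tuple[float, float]], window_end: float) -> float:
    """Time-weighted average price.

    observations: (timestamp, price) pairs sorted by timestamp. Each price is
    assumed to hold until the next observation, or until window_end for the last.
    """
    if not observations:
        raise ValueError("need at least one observation")
    weighted_sum = 0.0
    total_time = 0.0
    for i, (t, price) in enumerate(observations):
        next_t = observations[i + 1][0] if i + 1 < len(observations) else window_end
        dt = next_t - t
        if dt < 0:
            raise ValueError("observations must be sorted and end before window_end")
        weighted_sum += price * dt
        total_time += dt
    if total_time == 0:
        raise ValueError("window has zero duration")
    return weighted_sum / total_time


def vwap(trades: Sequence[Tuple[float, float]]) -> float:
    """Volume-weighted average price over (price, volume) pairs."""
    total_volume = sum(volume for _, volume in trades)
    if total_volume == 0:
        raise ValueError("total volume is zero")
    return sum(price * volume for price, volume in trades) / total_volume
```

For example, if the price sits at 100 for a 1,200-second window except for a 12-second spike to 150, `twap([(0, 100), (588, 150), (600, 100)], 1200)` gives 100.5. The spot price moved by 50%, but the averaged price moved by only 0.5%.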

Averaging over a time or volume window reduces the impact of flash loan attacks, which can temporarily manipulate the price within a single block but are far more expensive to sustain across a whole window. The collateralization requirement must also be adjusted dynamically based on market volatility; higher volatility necessitates greater collateral to cover potential losses from rapid price changes between updates. A robust approach to CDFs involves several layers of defense:

- Primary Data Feed: The initial, collateralized data stream providing real-time pricing.

- Dispute Mechanism: A secondary layer where users or other validators can challenge the primary feed by staking their own collateral.

- Slashing and Resolution: If a dispute is successful, the primary provider’s collateral is slashed, and the challenger may receive a reward.
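The three layers can be sketched as a single settlement function. This is an illustrative model only: `Report`, `Challenge`, `resolve_dispute`, and all default parameters are hypothetical, `verified_price` stands in for whatever the resolution layer decides, and the forfeiture of a failed challenger's bond to the provider is an assumption that the text above does not specify.

```python
from dataclasses import dataclass


@dataclass
class Report:
    provider: str
    price: float
    provider_stake: float  # collateral backing the primary feed


@dataclass
class Challenge:
    challenger: str
    challenger_bond: float  # collateral the challenger stakes to open a dispute


def resolve_dispute(
    report: Report,
    challenge: Challenge,
    verified_price: float,        # outcome of the resolution layer (assumed input)
    tolerance: float = 0.01,      # allowed relative error (assumed value)
    slash_fraction: float = 1.0,  # share of the stake slashed on a fault (assumed)
    reward_share: float = 0.5,    # share of slashed funds paid to the challenger (assumed)
) -> dict:
    """Return the payouts that follow from a resolved dispute."""
    error = abs(report.price - verified_price) / verified_price
    if error > tolerance:
        # Dispute succeeds: the provider is slashed and the challenger is rewarded.
        slashed = report.provider_stake * slash_fraction
        reward = slashed * reward_share
        return {
            "outcome": "challenge_upheld",
            "provider_keeps": report.provider_stake - slashed,
            "challenger_receives": challenge.challenger_bond + reward,
            "remainder": slashed - reward,  # burned or redistributed
        }
    # Dispute fails: assumed here that the bond is forfeited to the provider,
    # which discourages frivolous challenges.
    return {
        "outcome": "challenge_rejected",
        "provider_keeps": report.provider_stake + challenge.challenger_bond,
        "challenger_receives": 0.0,
        "remainder": 0.0,
    }
```

Requiring the challenger to post a bond makes disputes costly to spam, while the reward share gives honest watchers a reason to monitor the feed.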

The protocol’s margin engine must be tightly integrated with the CDF to ensure liquidations are triggered based on verified, collateralized data. The challenge here is balancing the capital cost of collateralization with the necessary security level. If collateral requirements are too high, data providers may be deterred, leading to a lack of data availability.

If requirements are too low, the system remains vulnerable to economic attack.
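The volatility-dependent collateral requirement mentioned earlier in this section can be sketched as a simple scaling rule. The linear scaling, the cap, and every name below (`realized_volatility`, `required_collateral`, `reference_volatility`, `max_ratio`) are assumptions chosen for illustration; a production system would calibrate its own rule.

```python
import math
from typing import Sequence


def realized_volatility(prices: Sequence[float]) -> float:
    """Sample standard deviation of log returns between consecutive updates."""
    if len(prices) < 3:
        raise ValueError("need at least three prices")
    returns = [math.log(b / a) for a, b in zip(prices, prices[1:])]
    mean = sum(returns) / len(returns)
    variance = sum((r - mean) ** 2 for r in returns) / (len(returns) - 1)
    return math.sqrt(variance)


def required_collateral(
    secured_value: float,         # value whose settlement depends on the feed
    base_ratio: float,            # collateralization ratio in calm markets
    volatility: float,            # current per-update volatility
    reference_volatility: float,  # volatility at which base_ratio applies
    max_ratio: float = 5.0,       # cap so providers are not priced out (assumed)
) -> float:
    """Scale the base ratio linearly with volatility above the reference level."""
    scale = max(1.0, volatility / reference_volatility)
    ratio = min(base_ratio * scale, max_ratio)
    return secured_value * ratio
```

With a base ratio of 1.5 on 1,000,000 of secured value, doubling volatility from the reference level raises the requirement from 1,500,000 to 3,000,000, and the cap limits it to 5,000,000 however violent the market becomes. The cap is one way to express the trade-off above: beyond it, the protocol accepts more risk rather than deterring providers entirely.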

| Oracle Model | Security Mechanism | Risk Profile | Capital Efficiency |
|---|---|---|---|
| Centralized Feed | Reputation and trust | High single-point-of-failure risk; vulnerable to censorship | High; no collateral required |
| Uncollateralized Aggregator | Decentralized data sources | Vulnerable to manipulation of underlying sources; no recourse | High; no collateral required |
| Collateralized Data Feed | Economic incentives via collateral and slashing | Lower risk; security scales with collateral value | Lower; capital locked in collateral |

Evolution

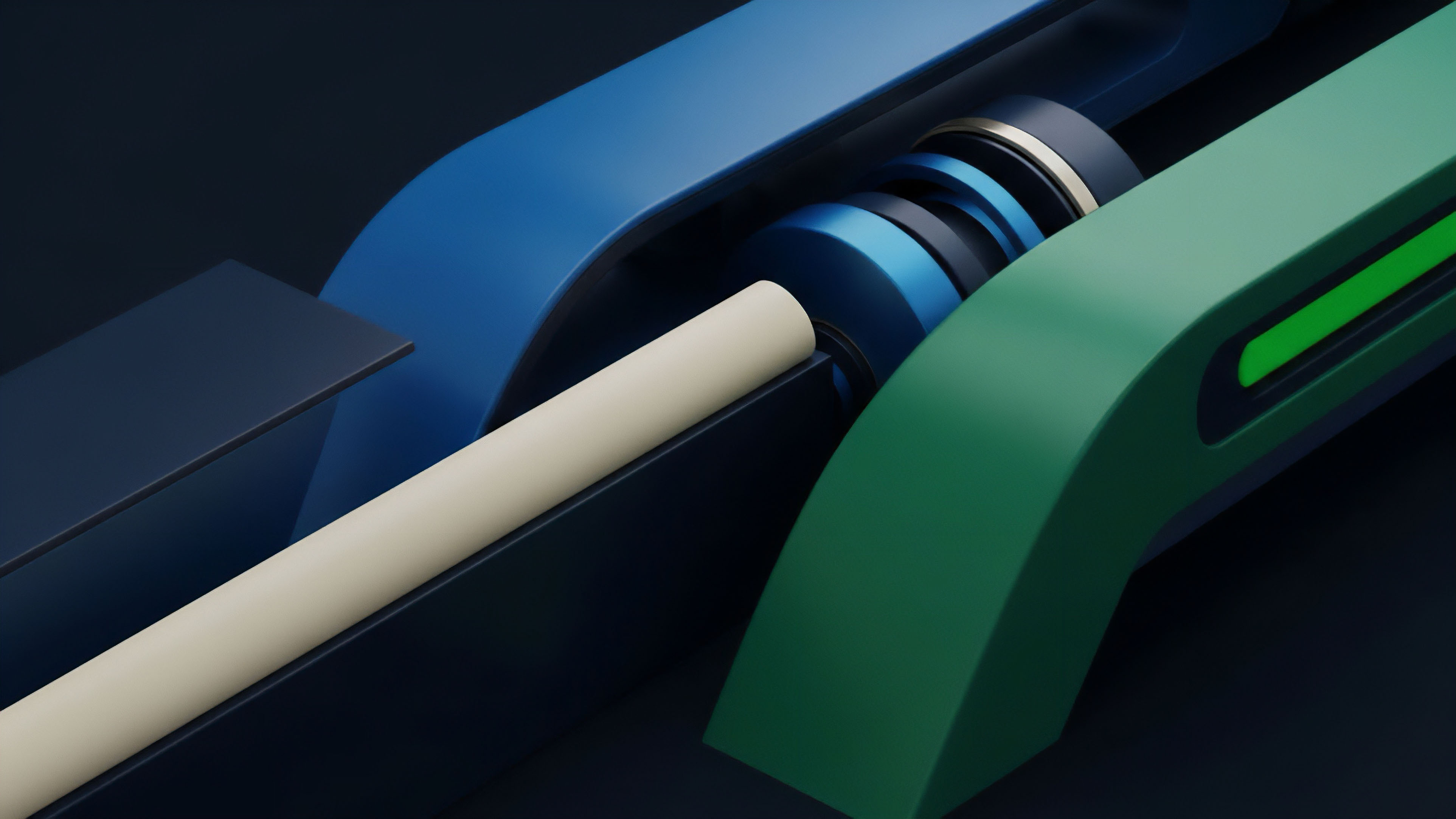

The evolution of Collateralized Data Feeds reflects a broader trend toward layered security and decentralization in financial systems. Initially, collateralization was a binary concept: either the oracle provider staked collateral or they did not. Modern CDFs have moved toward a more nuanced, multi-layered approach.

This includes the implementation of Decentralized Oracle Networks (DONs) where data providers form a collective and collateralize their collective data output. This structure distributes risk across multiple entities, making collusion more expensive.
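A rough sketch of why a collective raises the cost of collusion, assuming the network publishes the median of its members' reports and that each member's own stake is what it puts at risk. Both assumptions, and the function names, are illustrative rather than a description of any particular network.

```python
from statistics import median
from typing import Dict


def aggregate_reports(reports: Dict[str, float]) -> float:
    """Published value: the median of all providers' reported prices."""
    return median(reports.values())


def colluders_needed(n_providers: int) -> int:
    """Smallest group that can push the median outside the honest range."""
    return (n_providers + 1) // 2


def cost_of_collusion(stakes: Dict[str, float]) -> float:
    """Cheapest total stake an attacking coalition must put at risk.

    Assumes the attacker corrupts the providers with the smallest stakes.
    """
    k = colluders_needed(len(stakes))
    return sum(sorted(stakes.values())[:k])
```

With five providers staking 100, 200, 300, 400, and 500, a single dishonest report of 250 against honest reports of 100 and 101 leaves the median at 101, and controlling the output requires three colluders with at least 600 of combined stake at risk.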

The next generation of CDFs integrates governance directly into the data provision process, allowing the community to dynamically adjust parameters based on market conditions and perceived risk.

Governance has also become central to dispute resolution. When a dispute arises, a decentralized autonomous organization (DAO) or a specific governance body often determines the validity of the challenge. This adds a human layer of judgment to the purely mathematical slashing mechanism, allowing for more flexible responses to complex market scenarios that fall outside simple price deviations. This evolution reflects a recognition that purely code-based solutions for data integrity are insufficient when dealing with adversarial human behavior and complex market dynamics. The system must adapt to both technical and behavioral risks.

Horizon

Looking ahead, the development of Collateralized Data Feeds will be driven by two primary forces: the need for more complex data and the demand for enhanced security through cryptographic primitives.

The next phase of derivatives protocols will move beyond simple price feeds to require data on volatility, implied volatility skew, and other complex financial metrics. CDFs will need to adapt to secure these multi-dimensional data streams. This presents significant challenges, as validating complex financial models is far more difficult than verifying a simple spot price.

The integration of zero-knowledge proofs (ZKPs) into CDFs represents a significant leap forward. ZKPs allow a data provider to prove that their data calculation follows a specific methodology without revealing the underlying data itself. This enhances privacy while maintaining verifiability. This capability is crucial for bringing sensitive financial data on-chain without exposing proprietary trading strategies or compromising market integrity.

The future of CDFs lies in their ability to bridge the gap between traditional finance (TradFi) and DeFi by providing secure, verifiable data feeds for a wider range of financial products, ultimately enabling the creation of synthetic assets and exotic options with institutional-grade security.
