Capital Efficiency Loss Fundamentals

Capital Efficiency Loss, within the architecture of decentralized options and derivatives, is the opportunity cost incurred by locking up collateral that significantly exceeds the necessary risk capital. This excess collateral remains static, generating no yield and taking no part in other market activity, which reduces the systemic velocity of value transfer. The friction is a direct consequence of the Protocol Physics of decentralized finance: specifically, the latency and cost inherent in on-chain settlement and liquidation mechanisms.

The issue is not one of simple over-collateralization; rather, it is the systemic premium required to offset the asynchronous nature of risk management in an adversarial, transparent environment. A smart contract cannot react to sudden volatility spikes with the instantaneous speed of a centralized exchange’s internal ledger, forcing protocols to mandate larger collateral buffers. These buffers serve as a latency hedge against the time required for an oracle update to finalize, a transaction to be mined, and a liquidation to be processed.
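The latency-hedge argument can be made concrete with a minimal sketch. It assumes, purely for illustration, that returns are normally distributed with a standard deviation that scales with the square root of elapsed time. Under that assumption, the buffer needed to cover a one-sided adverse move at a chosen confidence level is z·σ·√Δt. The function `latency_buffer`, the 80% annualized volatility, and the latency budget (oracle update, block inclusion, liquidation execution) are hypothetical values, not measurements of any specific chain.

```python
from math import sqrt
from statistics import NormalDist

SECONDS_PER_YEAR = 365 * 24 * 60 * 60

def latency_buffer(annual_vol: float, latency_seconds: float,
                   confidence: float = 0.99) -> float:
    """Fraction of position value that covers a one-sided adverse move over the
    latency window, assuming normal returns whose standard deviation scales with
    the square root of elapsed time (a hypothetical sizing rule)."""
    z = NormalDist().inv_cdf(confidence)
    return z * annual_vol * sqrt(latency_seconds / SECONDS_PER_YEAR)

# Hypothetical latency budget in seconds: oracle update + block inclusion + liquidation
oracle_s, inclusion_s, liquidation_s = 60.0, 12.0, 24.0
total_s = oracle_s + inclusion_s + liquidation_s
buffer = latency_buffer(annual_vol=0.8, latency_seconds=total_s)
print(f"buffer for {total_s:.0f}s of latency: {buffer:.3%}")
```

Because the buffer grows with √Δt, halving the latency cuts it by roughly 29% rather than 50%. The Gaussian assumption also understates fat-tailed jumps, so this figure is likely too small to serve as a complete sizing rule.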

Capital Efficiency Loss is the systemic premium paid by DeFi protocols to compensate for the latency and determinism constraints of on-chain risk management.

The architect must acknowledge that every basis point of capital loss represents a drag on the network’s overall utility. It means less capital is available for market making, for yield generation, or for providing the foundational liquidity that stabilizes the entire derivative stack. The ultimate goal is to design a system where the Risk-to-Collateral Ratio approaches unity, without compromising the solvency of the protocol.

Origin of Capital Friction

The roots of this capital friction lie in the initial, pragmatic designs of early DeFi options platforms. These protocols, built on the principle of auditable transparency, had to prioritize solvency above all else, often defaulting to a model of full collateralization for every short option position. This conservative approach was a necessary reaction to the novelty of the technology and the high volatility of the underlying crypto assets.

Early decentralized options platforms effectively replicated the structure of a covered call strategy, where the entire notional value of the underlying asset was locked to write a single call option. This Atomic Collateral Model proved secure, yet inherently inefficient. It treated every position as an isolated silo of risk, failing to recognize the offsetting or diversifying effects of a broader portfolio, a fundamental flaw when measured against the portfolio margining standards of traditional finance.
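The cost of treating positions as isolated silos can be sketched for a call spread: short one call and long one call at a higher strike. For illustration, the sketch assumes an atomic model that locks one unit of underlying, valued at spot, for every short call and grants no credit for long calls. It compares that requirement with the spread's worst-case loss at expiry. The strikes, spot, grid, and function names are hypothetical, and premiums are ignored.

```python
# Each position is (strike, quantity) for a European call; quantity < 0 means short.

def atomic_collateral(calls: list[tuple[float, float]], spot: float) -> float:
    """Hypothetical atomic model: each short call locks one unit of the
    underlying, valued here at spot; long calls earn no margin credit."""
    return sum(-qty * spot for _strike, qty in calls if qty < 0)

def max_loss_at_expiry(calls: list[tuple[float, float]],
                       settlement_prices: list[float]) -> float:
    """Largest payoff shortfall across a grid of settlement prices (premiums ignored)."""
    def payoff(s: float) -> float:
        return sum(qty * max(s - strike, 0.0) for strike, qty in calls)
    return max(0.0, -min(payoff(s) for s in settlement_prices))

spread = [(2000.0, -1.0), (2200.0, 1.0)]  # short 2000 call, long 2200 call
grid = [float(s) for s in range(0, 10_001, 10)]
atomic = atomic_collateral(spread, spot=2000.0)
portfolio = max_loss_at_expiry(spread, grid)
print(f"atomic={atomic:.0f} portfolio={portfolio:.0f}")  # prints "atomic=2000 portfolio=200"
```

For a one-lot vertical call spread, the maximum loss at expiry is exactly the strike width. The tenfold gap is therefore structural, not an artifact of the price grid.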

The initial absence of robust, low-latency, decentralized volatility feeds and liquidator networks further cemented the reliance on excess collateral. Without a trustworthy, real-time mechanism to calculate the true risk of a portfolio, the only viable defense against a “Black Swan” event, or even a localized flash crash, was to demand a very large collateral cushion. The trade-off was deliberate: capital velocity was sacrificed for security.

Quantitative Theory and Loss Mechanics

A rigorous quantitative analysis shows Capital Efficiency Loss to be a direct function of three primary systemic variables. Failing to model how these variables interact is the critical flaw in current margin models.

Quantifying Capital Drag

The core theoretical framework quantifies Capital Efficiency Loss (CEL) as the difference between the Protocol Required Margin (PRM) and the Theoretical Minimum Margin (TMM), which is derived from the portfolio's aggregated Greeks and stress testing: CEL = PRM - TMM.

| CEL Variable | Definition | Impact on Capital |
|---|---|---|
| Liquidation Lag | Time between margin breach and collateral seizure. | Increases PRM as a buffer against adverse price movement during the lag. |
| Volatility Drag | Excess capital held to cover unexpected jumps (fat tails). | Increases with the skew and kurtosis of the implied volatility surface. |
| Oracle Latency | Delay before a price feed update is published and finalized. | Mandates a larger haircut on collateral value, increasing PRM. |
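The definition can be written as a worked equation. Splitting PRM additively into one buffer per table row is an illustrative assumption, since in practice the terms interact. Reading the Risk-to-Collateral Ratio as TMM/PRM is likewise an interpretation, not a definition given in the source.

$$
\mathrm{CEL} = \mathrm{PRM} - \mathrm{TMM},
\qquad
\mathrm{PRM} \approx \mathrm{TMM} + B_{\text{lag}} + B_{\text{tail}} + B_{\text{oracle}}
$$

$$
\Rightarrow\;
\mathrm{CEL} \approx B_{\text{lag}} + B_{\text{tail}} + B_{\text{oracle}},
\qquad
\frac{\mathrm{TMM}}{\mathrm{PRM}} = 1 - \frac{\mathrm{CEL}}{\mathrm{PRM}}
$$

Here B_lag, B_tail, and B_oracle are hypothetical buffers attributable to Liquidation Lag, Volatility Drag, and Oracle Latency. The ratio TMM/PRM reaches unity exactly when CEL falls to zero.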

Protocol Physics and Liquidation

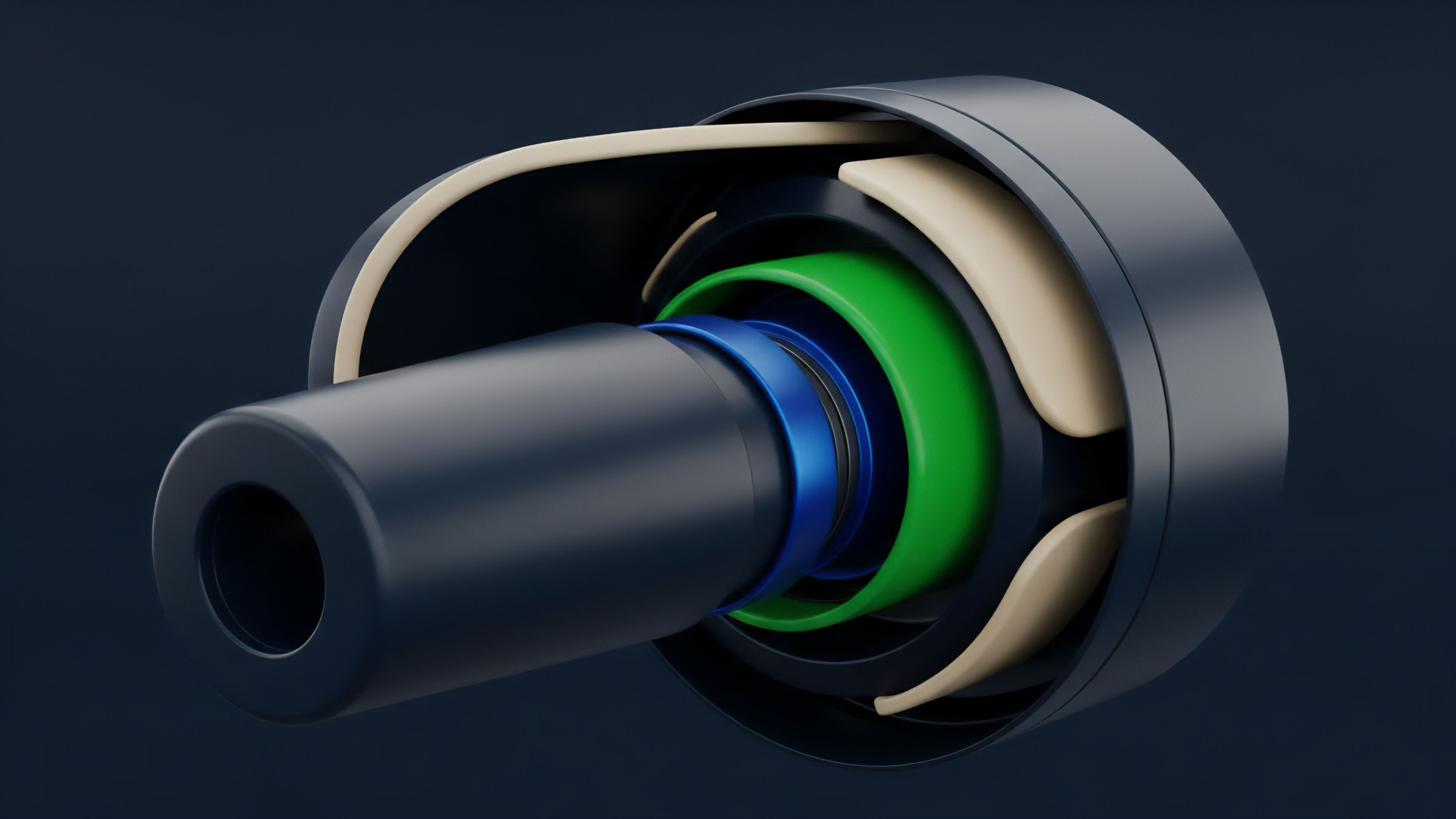

The physics of a decentralized options protocol are governed by the liquidation engine. In a centralized system, liquidation is a singular, instantaneous ledger adjustment. On-chain, it is a multi-step process susceptible to Maximum Extractable Value (MEV) and gas price spikes.

The cost of a failed or delayed liquidation is borne by the protocol’s solvency pool, which in turn demands higher collateral from all participants.

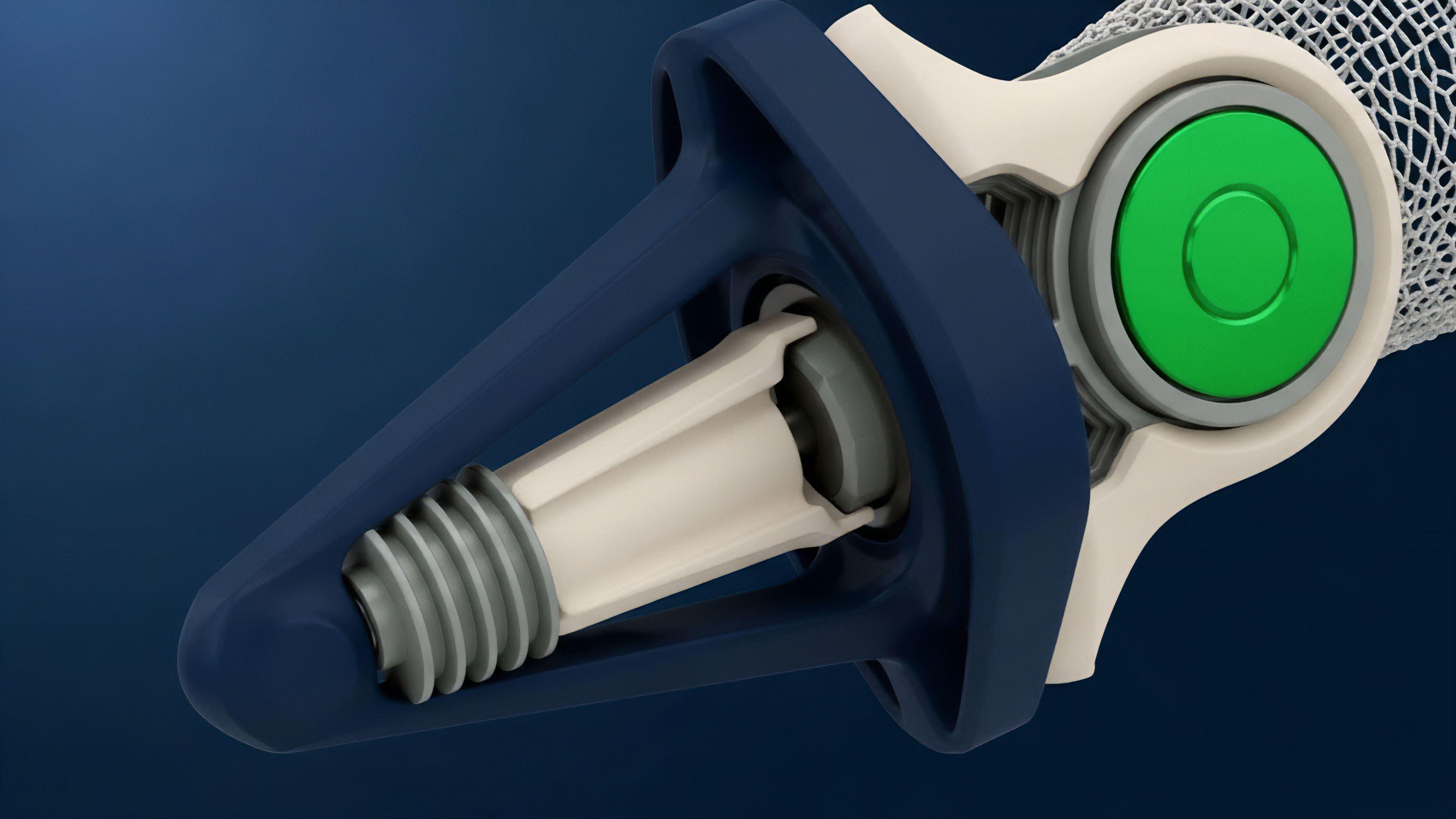

- Margin Threshold Breach: The portfolio value falls below the PRM.

- Transaction Competition: Liquidators compete in a gas auction to execute the liquidation transaction.

- Price Slippage: The forced sale of collateral causes market slippage, consuming more of the remaining collateral than anticipated.

- Protocol Solvency Drain: Any residual deficit is absorbed by the protocol's insurance fund, leading to an increase in future margin requirements or fees: a systemic cost passed to the user.
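The four stages can be traced numerically with a minimal sketch under the following hypothetical waterfall:

- All collateral is sold at the oracle price less a slippage fraction.
- A fixed liquidator bonus is paid out of the proceeds, standing in for what gas auctions and MEV competition extract.
- Any remaining shortfall falls on the insurance fund.

The dataclass, function names, and parameter values are illustrative only.

```python
from dataclasses import dataclass

@dataclass
class LiquidationOutcome:
    proceeds: float          # collateral sale value after slippage
    liquidator_bonus: float  # incentive paid to the winning liquidator
    deficit: float           # shortfall absorbed by the insurance fund

def breaches_margin(collateral_value: float, prm: float) -> bool:
    """Stage 1: the position becomes liquidatable once its value falls below PRM."""
    return collateral_value < prm

def liquidate(debt: float, collateral_units: float, oracle_price: float,
              slippage: float, bonus_rate: float) -> LiquidationOutcome:
    """Stages 2-4 (hypothetical): sell all collateral with slippage, pay the
    liquidator bonus, repay debt, and report any residual deficit.
    A surplus would be returned to the account owner (not modeled here)."""
    proceeds = collateral_units * oracle_price * (1.0 - slippage)
    bonus = proceeds * bonus_rate
    deficit = max(debt - (proceeds - bonus), 0.0)
    return LiquidationOutcome(proceeds, bonus, deficit)

# Hypothetical account: 9,000 of debt backed by 5 units of collateral at 2,000.
collateral_value = 5 * 2000.0
if breaches_margin(collateral_value, prm=9000.0 * 1.15):
    outcome = liquidate(9000.0, 5, 2000.0, slippage=0.08, bonus_rate=0.05)
    print(outcome)
```

With these numbers, the sale yields 9,200, the bonus takes 460, and a 260 shortfall lands on the insurance fund. This happens even though the collateral was nominally worth 10,000 against 9,000 of debt.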

The Capital Efficiency Loss metric is an inverse measure of a protocol’s ability to manage its systemic tail risk and liquidate positions at speed and scale.

The system is adversarial by design; participants constantly probe the liquidation threshold. This continuous stress test, where quantitative finance meets behavioral game theory, forces architects to over-engineer the margin engine. We must design for the worst case, not the average case.

The very possibility of a coordinated attack on the liquidation mechanism requires the system to hold more capital than any pure Black-Scholes model would suggest.
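The gap between a Gaussian, Black-Scholes-style margin and a fat-tailed one can be illustrated with the Cornish-Fisher expansion. This standard technique adjusts a normal quantile for skewness and excess kurtosis. It is a swapped-in method, not the source's model. The horizon volatility, confidence level, and moment values below are hypothetical, and the expansion is only reliable for moderate departures from normality.

```python
from statistics import NormalDist

def cornish_fisher_z(confidence: float, skew: float, excess_kurtosis: float) -> float:
    """Cornish-Fisher adjusted quantile of a standardized loss distribution."""
    z = NormalDist().inv_cdf(confidence)
    return (z
            + (z**2 - 1) * skew / 6
            + (z**3 - 3 * z) * excess_kurtosis / 24
            - (2 * z**3 - 5 * z) * skew**2 / 36)

def margin_fraction(horizon_vol: float, confidence: float,
                    skew: float = 0.0, excess_kurtosis: float = 0.0) -> float:
    """Margin as a fraction of notional: adjusted quantile times horizon volatility."""
    return cornish_fisher_z(confidence, skew, excess_kurtosis) * horizon_vol

# Hypothetical 5% horizon volatility at 99% confidence
gaussian = margin_fraction(0.05, 0.99)
fat_tailed = margin_fraction(0.05, 0.99, skew=0.5, excess_kurtosis=6.0)
print(f"gaussian={gaussian:.2%} fat_tailed={fat_tailed:.2%}")
```

With zero skew and zero excess kurtosis, the adjustment vanishes and the Gaussian quantile is recovered. Positive excess kurtosis pushes the 99% quantile, and therefore the margin, outward.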

Current Risk Management Approaches

The current approaches to mitigating Capital Efficiency Loss revolve around moving away from the isolated collateral model toward more holistic, portfolio-level risk assessment. This requires a significant increase in computational complexity, moving the margin calculation closer to the sophistication of a prime brokerage.

Unified Margin Accounts

The most significant architectural shift is the implementation of Unified Margin Accounts. Instead of collateralizing each option position individually, all of a user's positions are aggregated into one account. The margin requirement is then calculated based on the net risk of the entire portfolio, factoring in hedges and offsetting exposures.

- Delta Hedging Credit: A short call option and a long call option on the same underlying (for example, a vertical spread), whose Delta exposures largely offset, receive a reduction in the overall margin requirement.

- Cross-Collateral Utilization: Allows the use of multiple asset types (e.g. ETH, USDC, BTC) as collateral, dynamically applying haircuts based on their historical volatility and correlation to the underlying options.

- Risk Parameter Set: The calculation must account for the five key Greeks (Delta, Gamma, Theta, Vega, and Rho) to determine the portfolio's sensitivity across various market conditions.
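The netting behind a Unified Margin Account can be sketched as scenario-based, risk-array-style portfolio margining. Every position is revalued under a grid of spot and volatility shocks, and the account is charged the worst combined loss. This minimal sketch assumes Black-Scholes pricing with zero rates. The shock grid, the `Option` class, and the position sizes are hypothetical. A production engine would also handle Theta, Rho, and cross-asset haircuts.

```python
from dataclasses import dataclass
from itertools import product
from math import exp, log, sqrt
from statistics import NormalDist

N = NormalDist().cdf

def bs_call(spot: float, strike: float, vol: float, t: float, r: float = 0.0) -> float:
    """Black-Scholes price of a European call."""
    d1 = (log(spot / strike) + (r + 0.5 * vol * vol) * t) / (vol * sqrt(t))
    d2 = d1 - vol * sqrt(t)
    return spot * N(d1) - strike * exp(-r * t) * N(d2)

def bs_put(spot: float, strike: float, vol: float, t: float, r: float = 0.0) -> float:
    """European put via put-call parity."""
    return bs_call(spot, strike, vol, t, r) - spot + strike * exp(-r * t)

@dataclass
class Option:
    kind: str      # "call" or "put"
    strike: float
    qty: float     # positive = long, negative = short
    expiry: float  # in years

    def value(self, spot: float, vol: float) -> float:
        pricer = bs_call if self.kind == "call" else bs_put
        return self.qty * pricer(spot, self.strike, vol, self.expiry)

# Hypothetical stress grid: relative spot shocks and relative vol shocks
SPOT_SHOCKS = [-0.15, -0.075, 0.0, 0.075, 0.15]
VOL_SHOCKS = [-0.3, 0.0, 0.3]

def scenario_margin(positions: list[Option], spot: float, vol: float) -> float:
    """Worst-case loss of the combined positions across the stress grid."""
    base = sum(p.value(spot, vol) for p in positions)
    worst = 0.0
    for ds, dv in product(SPOT_SHOCKS, VOL_SHOCKS):
        shocked = sum(p.value(spot * (1 + ds), vol * (1 + dv)) for p in positions)
        worst = max(worst, base - shocked)
    return worst

# Short 100 call, long 110 call (a vertical spread), 30 days, 80% vol
book = [Option("call", 100.0, -1.0, 30 / 365), Option("call", 110.0, 1.0, 30 / 365)]
isolated = sum(scenario_margin([p], 100.0, 0.8) for p in book)
portfolio = scenario_margin(book, 100.0, 0.8)
print(f"isolated={isolated:.2f} portfolio={portfolio:.2f}")
```

The worst combined loss can never exceed the sum of each position's own worst loss, so the portfolio figure is always at most the isolated one. For this spread, it is also capped by the 10-point strike width.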

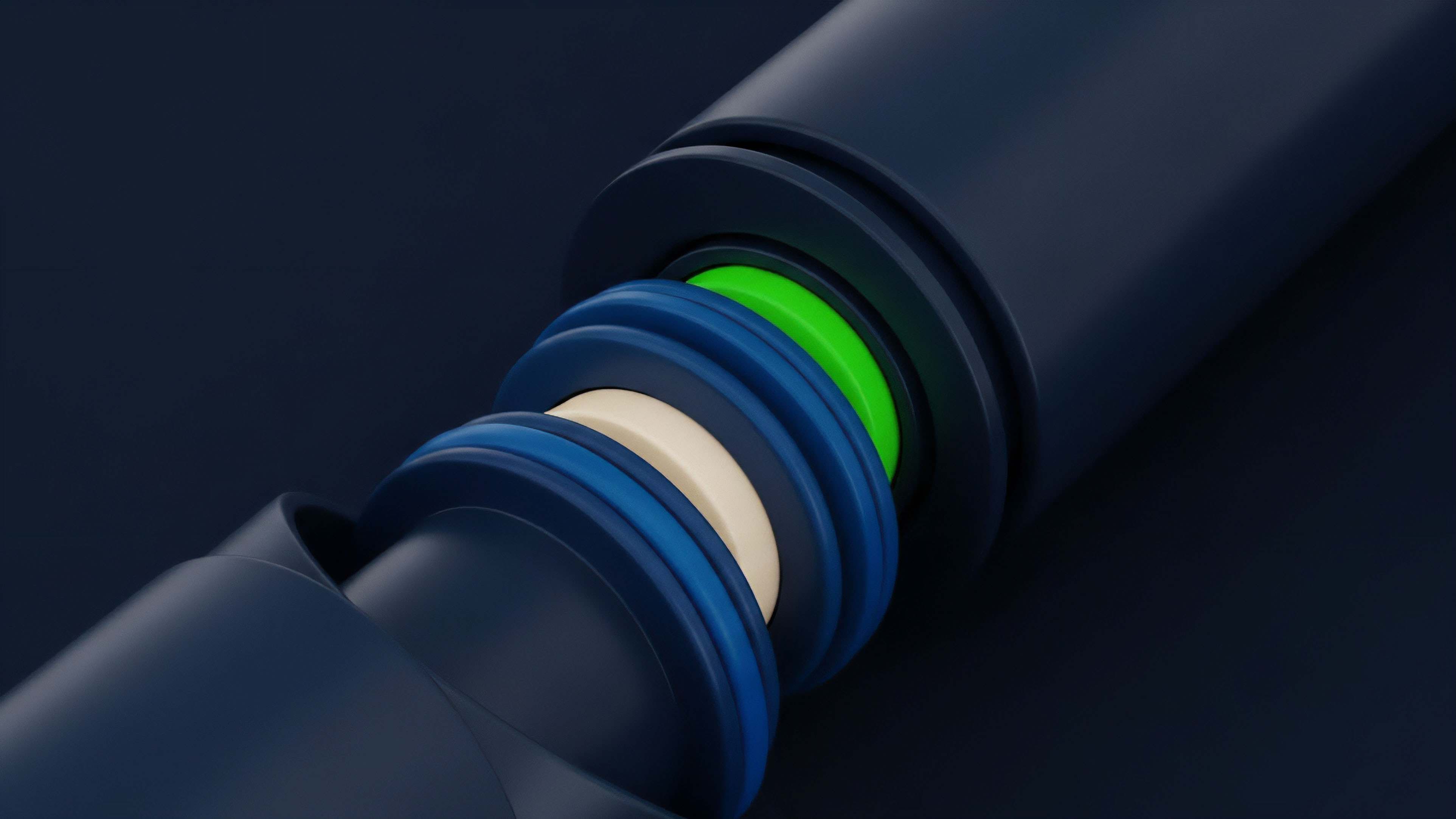

Dynamic Collateral Haircuts

Protocols are beginning to implement dynamic haircuts on collateral assets. A stablecoin might have a 0% haircut, while a volatile, low-liquidity asset might have a 25% haircut. This haircut is not static; it adjusts based on real-time factors like:

| Haircut Factor | Data Source | Efficiency Implication |
|---|---|---|
| On-Chain Liquidity Depth | DEX Order Book/AMM Pool Size | Lower depth necessitates a higher haircut to cover slippage. |
| Implied Volatility Skew | Options Market Data | Higher skew suggests greater tail risk, demanding a higher haircut. |
| Protocol Utilization Rate | Total Value Locked vs. Total Borrowed | High utilization suggests stress, leading to temporarily increased haircuts. |
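A dynamic haircut that combines the three factors in the table might be sketched as follows. Only the slippage term follows a standard formula: the price impact of selling into a constant-product x·y=k pool, ignoring fees. The skew weight, utilization threshold, add-on size, and cap are hypothetical tuning parameters, not values from any live protocol.

```python
def amm_slippage(sell_amount: float, pool_reserve: float) -> float:
    """Fractional price impact of selling `sell_amount` of an asset into a
    constant-product pool holding `pool_reserve` of it (fees ignored)."""
    return sell_amount / (pool_reserve + sell_amount)

def dynamic_haircut(base: float, sell_amount: float, pool_reserve: float,
                    skew: float, utilization: float,
                    skew_weight: float = 0.5, util_threshold: float = 0.8,
                    util_addon: float = 0.10, cap: float = 0.9) -> float:
    """Hypothetical haircut: base + liquidity term + skew term + stress term, capped."""
    haircut = base + amm_slippage(sell_amount, pool_reserve)  # on-chain liquidity depth
    haircut += skew_weight * max(skew, 0.0)                   # implied volatility skew
    if utilization > util_threshold:                          # protocol utilization stress
        haircut += util_addon * (utilization - util_threshold) / (1.0 - util_threshold)
    return min(haircut, cap)

# Hypothetical inputs for a volatile collateral asset
h = dynamic_haircut(base=0.10, sell_amount=50_000, pool_reserve=1_000_000,
                    skew=0.05, utilization=0.9)
print(f"haircut={h:.2%}")
```

A deep pool, zero skew, and low utilization drive every add-on to zero, which reproduces the near-zero stablecoin case. A thin pool or a stressed protocol pushes the haircut toward its cap.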

The transition to portfolio margining is a shift from a simplistic, position-based security model to a complex, systems-based solvency model.

This is a pragmatic trade-off. By accepting the computational overhead and the added oracle dependency, we gain the ability to unlock capital, moving the system closer to the theoretical ideal of efficient risk transfer.

Architectural Evolution and Stress Testing

The evolution of derivative protocols is defined by the constant struggle to minimize Capital Efficiency Loss without sacrificing the core tenets of decentralization.

We have witnessed a progression from fully isolated, static collateral models to pooled and dynamic models and, eventually, to under-collateralized ones.

The Shift to Pooled Liquidity

The major structural leap was the move to a pooled-liquidity model, where a single pool of capital acts as the counterparty for all option writers. This is where the power of diversification is finally leveraged on-chain. By aggregating all risk, the pool can benefit from the statistical improbability of all positions moving against it simultaneously.
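The diversification benefit of a pooled counterparty can be sketched with the standard variance-covariance (parametric) approach. Capital is sized at a z-multiple of the pooled P&L standard deviation rather than at the sum of each writer's standalone requirement. The per-position dollar volatilities, the correlation matrices, and the confidence level are hypothetical. The normality assumption also understates tail co-movement.

```python
from math import sqrt
from statistics import NormalDist

def siloed_requirement(sigmas: list[float], confidence: float = 0.99) -> float:
    """Each position capitalized on its own: z times the sum of standalone volatilities."""
    return NormalDist().inv_cdf(confidence) * sum(sigmas)

def pooled_requirement(sigmas: list[float], corr: list[list[float]],
                       confidence: float = 0.99) -> float:
    """Pool capitalized on net risk: z times sqrt(sigma^T * C * sigma)."""
    n = len(sigmas)
    variance = sum(corr[i][j] * sigmas[i] * sigmas[j] for i in range(n) for j in range(n))
    return NormalDist().inv_cdf(confidence) * sqrt(variance)

sigmas = [100.0, 100.0, 100.0]  # hypothetical 1-day P&L std dev per position
moderate = [[1.0 if i == j else 0.3 for j in range(3)] for i in range(3)]
crisis = [[1.0] * 3 for _ in range(3)]

print(siloed_requirement(sigmas),
      pooled_requirement(sigmas, moderate),
      pooled_requirement(sigmas, crisis))
```

Setting every correlation to 1 makes the pooled and siloed figures equal. This shows why a market-wide crash, when correlations often rise sharply, erodes the pool's cushion just when it is needed.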

This is a crucial step, but it introduces the systemic risk of contagion: the failure of one large, under-margined position can drain the shared pool, causing a cascade.

Adversarial Design and Game Theory

The true test of any capital efficiency improvement lies in the adversarial environment of the market. Sophisticated market makers do not treat margin requirements as a fixed constraint; they treat them as a boundary to be tested. The incentive is clear: exploit minor inefficiencies, such as the momentary lag between a price movement and a margin call, to extract value.

The architect’s job is to close these windows of opportunity. This is why we must continually audit the models against real-world market stress. We are not just building financial software; we are constructing an automated, adversarial financial environment where code is the final arbiter.

The question is not if the system will be stressed, but how quickly it will fail when it is.

Zero Loss Systems and Protocol Futures

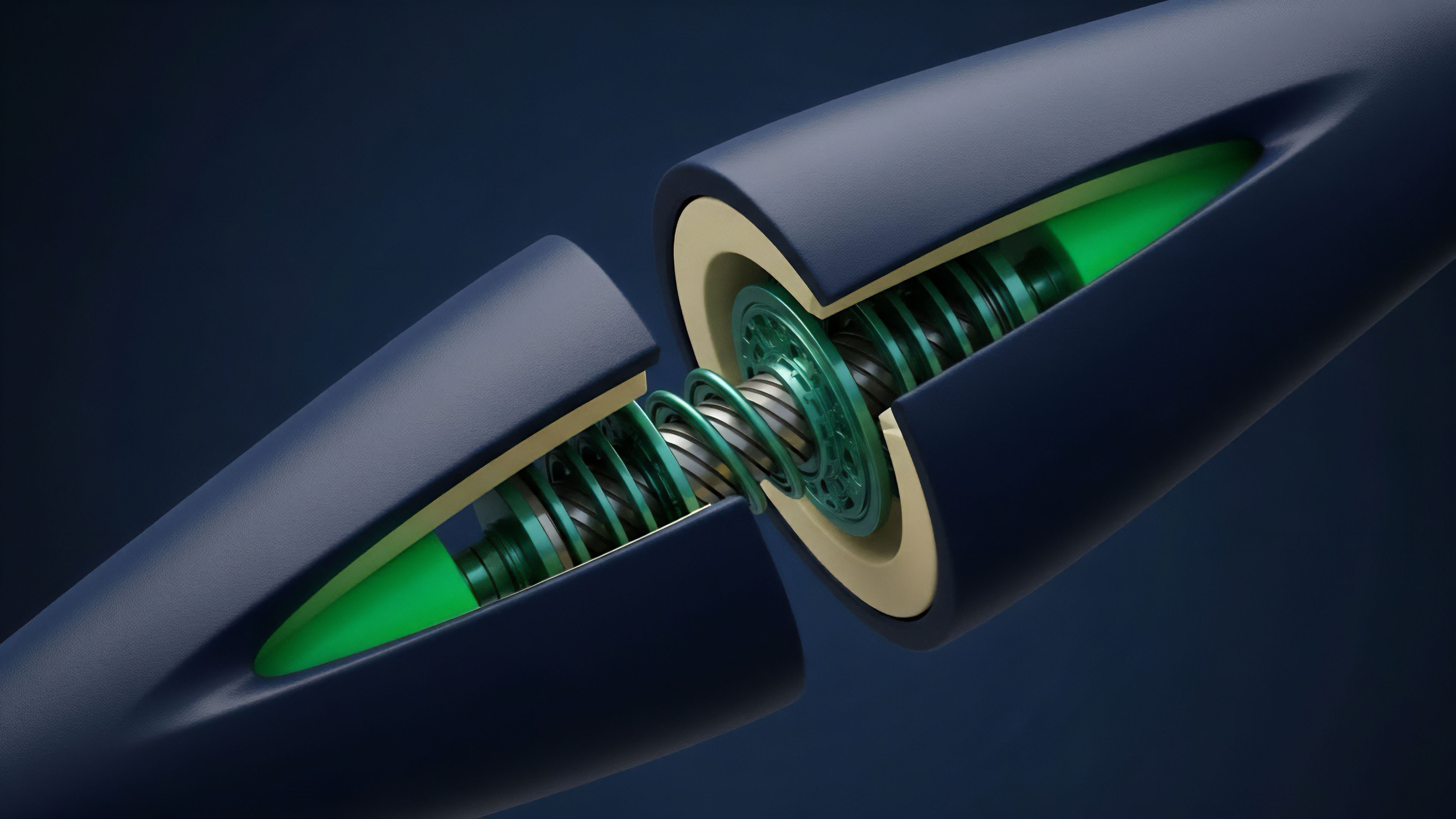

The horizon for crypto options is a near Zero-Loss System, where the theoretical margin required is virtually indistinguishable from the collateral locked. Reaching it depends on advances in two distinct but interconnected domains: Layer 2 scaling and the emergence of Protocol-Native Volatility Oracles.

Layer 2 and Instant Finality

The latency that drives Capital Efficiency Loss today is primarily a Layer 1 constraint. Moving margin engines and liquidation processes to a high-throughput, low-latency Layer 2 or app-chain environment is non-negotiable. Near-instant finality largely removes the liquidation lag and sharply reduces the need for the large collateral buffers currently held as a latency hedge.

The risk window shrinks from minutes to seconds or less.

Protocol-Native Volatility Oracles

A significant drag on capital is the reliance on external, off-chain data for implied volatility. Future protocols will compute the implied volatility surface and Greeks directly within the protocol’s state machine, providing a Protocol-Native Volatility Oracle. This internal computation allows for immediate, high-fidelity margin adjustments, moving beyond the simplistic risk parameters currently dictated by slow, external price feeds.
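One building block of a Protocol-Native Volatility Oracle is inverting an observed option price into an implied volatility. This minimal sketch assumes Black-Scholes with zero rates and a bisection solver. Bisection is chosen because its iteration count is bounded and deterministic, a useful property inside a state machine. The function names, volatility bracket, and tolerance are hypothetical, and an on-chain version would use fixed-point rather than floating-point arithmetic. Repeating the inversion across strikes and expiries yields points on the surface.

```python
from math import exp, log, sqrt
from statistics import NormalDist

N = NormalDist().cdf

def bs_call(spot: float, strike: float, vol: float, t: float, r: float = 0.0) -> float:
    """Black-Scholes price of a European call."""
    d1 = (log(spot / strike) + (r + 0.5 * vol * vol) * t) / (vol * sqrt(t))
    d2 = d1 - vol * sqrt(t)
    return spot * N(d1) - strike * exp(-r * t) * N(d2)

def implied_vol(price: float, spot: float, strike: float, t: float, r: float = 0.0,
                lo: float = 1e-4, hi: float = 5.0, tol: float = 1e-8,
                max_iter: int = 200) -> float:
    """Invert Black-Scholes by bisection; the call price increases monotonically in vol."""
    if not (bs_call(spot, strike, lo, t, r) <= price <= bs_call(spot, strike, hi, t, r)):
        raise ValueError("price outside the bracketed volatility range")
    for _ in range(max_iter):
        mid = 0.5 * (lo + hi)
        if bs_call(spot, strike, mid, t, r) < price:
            lo = mid  # model price too low: true vol is higher
        else:
            hi = mid  # model price too high (or equal): true vol is lower
        if hi - lo < tol:
            break
    return 0.5 * (lo + hi)

# Round trip: price an option at 80% vol, then recover the vol from that price
observed = bs_call(100.0, 110.0, 0.8, 30 / 365)
print(implied_vol(observed, 100.0, 110.0, 30 / 365))
```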

| Feature | Impact on Capital Efficiency | Systemic Implication |
|---|---|---|
| Instant Settlement | Eliminates Liquidation Lag | Reduces PRM to near-TMM levels |
| Native Volatility Surface | Dynamic, real-time Greek calculation | Enables true portfolio margining for exotic options |
| Decentralized Liquidation Pool | Incentivizes immediate liquidations | Reduces reliance on insurance funds |

The final destination is a system that achieves capital efficiency by being radically transparent and fast: a system where the solvency of the protocol is mathematically proven with every block, and the need for static, locked capital is rendered obsolete.
