Essence

Cross-Instrument Parity Arbitrage Efficiency measures the friction and delay in price convergence across related financial instruments ⎊ specifically, the spot asset, its perpetual swap, and its European-style options. It is not a static number; it is a dynamic scalar that quantifies how effectively automated and human agents enforce the foundational no-arbitrage relationship of derivatives pricing, Put-Call Parity. In decentralized finance (DeFi), where capital is pseudo-permissionless but execution is constrained by block latency and gas costs, this efficiency serves as a direct proxy for the health and sophistication of the market microstructure.

A low efficiency implies wide, persistent arbitrage windows, which are symptomatic of either significant protocol design flaws or prohibitive transaction costs that prevent the marginal trader from correcting mispricing.

The systemic story of decentralized options begins with the simple, elegant framework of no-arbitrage pricing. In a frictionless market, the cost of a call option plus the present value of the strike price must equal the cost of a put option plus the price of the underlying asset. When this relationship breaks, an arbitrage opportunity is born.
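In symbols, as a sketch under the usual textbook assumptions (European exercise, a common strike $K$ and time to expiry $T$, a non-dividend-paying underlying, and a continuously compounded risk-free rate $r$, none of which the text above specifies):

$$
C + K e^{-rT} = P + S
$$

Here $C$ and $P$ are the call and put prices and $S$ is the spot price. The signed parity deviation can then be written as

$$
\varepsilon = \left(C + K e^{-rT}\right) - \left(P + S\right),
$$

which is zero in the frictionless case. A nonzero $\varepsilon$ is the gross arbitrage opportunity before any execution costs.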

In the crypto context, this theoretical deviation is amplified by unique technical factors, transforming a classical finance problem into a systems engineering challenge. The core origin of the concept stems from the failure of the first wave of DeFi options protocols to account for the true cost of on-chain hedging. Arbitrageurs, often automated bots, found that the execution risk ⎊ the possibility of a transaction failing or being front-run ⎊ consumed the entire theoretical profit margin, leading to wide, persistent pricing discrepancies.

Cross-Instrument Parity Arbitrage Efficiency is the real-time scalar quantifying the market’s ability to enforce the no-arbitrage condition across spot, perpetual, and options instruments, reflecting the true cost of on-chain friction.

This efficiency is a critical determinant of capital allocation. If the arbitrage is inefficient, capital remains fragmented. A market that cannot quickly and reliably close its own structural gaps signals a high risk premium to professional market makers, who then either demand wider spreads or withdraw liquidity entirely.

The true vision of decentralized derivatives requires this efficiency to approach the performance of centralized venues, moving the constraint from execution latency to genuine price discovery based on information asymmetry.

Origin

The concept’s theoretical origin is Put-Call Parity, a relationship known to option practitioners by the early 1900s and formalized academically by Hans Stoll in 1969 to describe the link between European-style options and their underlying asset. This classical framework was a mental tool for identifying risk-free profit. The modern crypto derivative application, however, is a direct reaction to the structural challenges presented by the Ethereum Virtual Machine (EVM) and EVM-compatible chains.

The immediate predecessor to the efficiency concept was the observed, systematic divergence between implied volatility (IV) surfaces on centralized exchanges (CEXs) and decentralized options protocols. CEXs, with their high-throughput order books, exhibited a relatively smooth IV surface, while DeFi protocols showed a “potholed” surface ⎊ massive, localized spikes and dips in IV for specific strikes and expiries. This was not a reflection of unique information; it was a symptom of a technical bottleneck.

The DeFi Arbitrage Genesis

First-generation decentralized options protocols were essentially liquidity pools for options, using automated market makers (AMMs) to price contracts. These AMMs, often relying on simplified Black-Scholes approximations, were inherently exploitable. The true origin of the “efficiency” concept is rooted in the early losses sustained by these pools.

The losses were a direct consequence of arbitrageurs exploiting the AMM’s static, deterministic pricing function, particularly when a large, informed order was executed.

- Static Pricing Models: Initial protocols used models that were too slow to react to rapid changes in the underlying spot price, creating a lag that was instantly exploitable by high-frequency trading bots.

- High Gas Costs: The cost of transaction execution often exceeded the theoretical profit of the arbitrage, creating a “dead zone” where small mispricings were uneconomical to correct, allowing the deviation to persist and grow.

- Execution Uncertainty: The possibility of front-running or transaction failure (reverts) introduced a hard-to-quantify arbitrage execution risk with no close equivalent in traditional markets, forcing arbitrageurs to demand a much larger spread.
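The gas “dead zone” and the execution-risk premium in the list above can be made concrete with a break-even calculation. The following is a minimal sketch, not any protocol’s actual logic: the function name, the `failed_gas_fraction` parameter, and the assumption that a failed attempt still pays some share of the gas cost are all illustrative.

```python
def min_profitable_mispricing(
    gas_cost: float,
    success_prob: float,
    failed_gas_fraction: float = 1.0,
) -> float:
    """Smallest gross mispricing (same currency as gas_cost) worth attempting.

    Assumed expected profit of one attempt:
        p * (edge - gas) - (1 - p) * failed_gas_fraction * gas
    Setting this to zero and solving for edge gives the break-even value.
    """
    if not 0.0 < success_prob <= 1.0:
        raise ValueError("success_prob must be in (0, 1]")
    if gas_cost < 0.0 or failed_gas_fraction < 0.0:
        raise ValueError("gas_cost and failed_gas_fraction must be non-negative")
    failure_odds = (1.0 - success_prob) / success_prob
    return gas_cost * (1.0 + failed_gas_fraction * failure_odds)


# With certain execution the dead zone is just the gas cost;
# at a 50% success rate it doubles under these assumptions.
certain = min_profitable_mispricing(gas_cost=10.0, success_prob=1.0)
coin_flip = min_profitable_mispricing(gas_cost=10.0, success_prob=0.5)
```

Any mispricing below the returned value is left uncorrected by a rational arbitrageur, which is why both higher gas and lower inclusion probability widen the persistent deviation.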

This historical failure led to a realization: the efficiency of a DeFi derivatives market is not measured by the sophistication of its pricing model alone, but by the efficiency of its underlying settlement and order-flow mechanism. The problem was one of “protocol physics,” not financial theory.

Theory

The theoretical foundation of Cross-Instrument Parity Arbitrage Efficiency is built upon the superposition of classical quantitative finance models and adversarial behavioral game theory within a state-machine environment. The key deviation from traditional theory lies in the non-zero cost and time required for state transition (a transaction).

The Financial-Technical Synthesis

Arbitrage efficiency is modeled as the ratio of realized profit, net of all execution costs and slippage, to theoretical profit, measured across a statistically significant sample of opportunities.
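One way to read this definition is as an aggregate ratio over a sample of observed opportunities. The sketch below assumes that reading; the `Opportunity` record and its field names are hypothetical, and a per-opportunity average would be an equally defensible alternative.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass(frozen=True)
class Opportunity:
    theoretical_profit: float  # parity deviation times size, before any costs
    realized_pnl: float        # gross PnL captured (0.0 if missed or reverted)
    execution_costs: float     # gas, fees, and slippage actually paid


def arbitrage_efficiency(sample: Sequence[Opportunity]) -> float:
    """Net realized profit divided by theoretical profit over the sample."""
    total_theoretical = sum(o.theoretical_profit for o in sample)
    if total_theoretical <= 0.0:
        raise ValueError("sample must contain positive theoretical profit")
    net_realized = sum(o.realized_pnl - o.execution_costs for o in sample)
    return net_realized / total_theoretical


sample = [
    Opportunity(theoretical_profit=100.0, realized_pnl=80.0, execution_costs=20.0),
    Opportunity(theoretical_profit=50.0, realized_pnl=0.0, execution_costs=5.0),  # reverted
]
efficiency = arbitrage_efficiency(sample)
```

Under this reading the value can be negative when failed attempts cost more than successful ones earn, which matches the early DeFi experience described in the Origin section.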

| Cost Component | Traditional Market Equivalent | DeFi Amplification Factor |
|---|---|---|
| Transaction Fee (Gas) | Brokerage Commission | Non-linear, volatile, and block-dependent. |
| Execution Latency | Market Order Slippage | Deterministic by block time, leading to predictable front-running risk. |
| Liquidation Risk | Counterparty Credit Risk | Automated, instantaneous, and prone to cascade effects during stress. |

The core theoretical challenge is the proper calculation of the arbitrage execution delta, meaning the systemic risk that the adversarial environment adds to each trade (distinct from the option Greek of the same name). This delta is not part of the Black-Scholes-Merton (BSM) framework; it is an emergent property of the blockchain’s consensus mechanism. Failing to account for the true cost of execution is the critical flaw in current modeling.

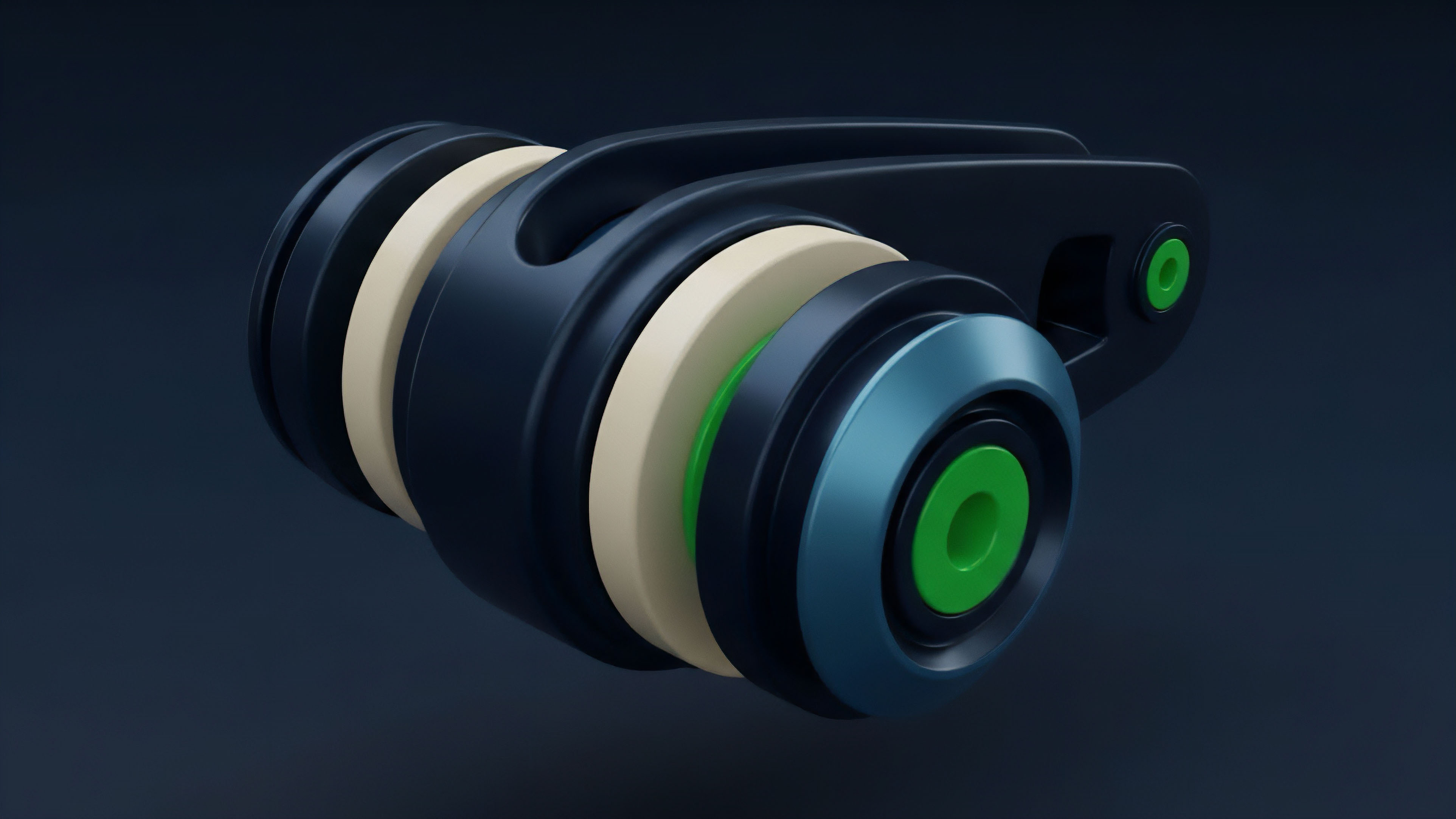

Systemic Risk and Liquidation Engines

Arbitrageurs are often required to hold leveraged positions, and the efficiency of the market is deeply intertwined with the robustness of the protocol’s liquidation engine. Inefficient arbitrage can be a precursor to systemic risk. When mispricing persists, arbitrageurs may deploy greater leverage to capitalize on the wider profit margin.

If the underlying spot price moves against their leveraged hedge before the slow on-chain transaction can settle, the resulting liquidation event can be a large, sudden market order that further destabilizes the options AMM and creates a cascade.

The Parity Arbitrage Efficiency is a boundary condition on protocol solvency; its failure to hold tight suggests a structural weakness that will be exploited by systemic liquidation events.

The most sophisticated agents view the options AMM as a strategic opponent in a repeated game. They do not seek to eliminate all arbitrage; they seek to manage the rate at which they close it, maximizing their expected value per block. This is where the pricing model becomes truly elegant ⎊ and dangerous if ignored.

The arbitrage window becomes a strategic resource, not a simple inefficiency.

Approach

The modern approach to maximizing Cross-Instrument Parity Arbitrage Efficiency is a multi-dimensional strategy that combines off-chain computation with highly optimized on-chain settlement logic. It is a departure from purely on-chain pricing.

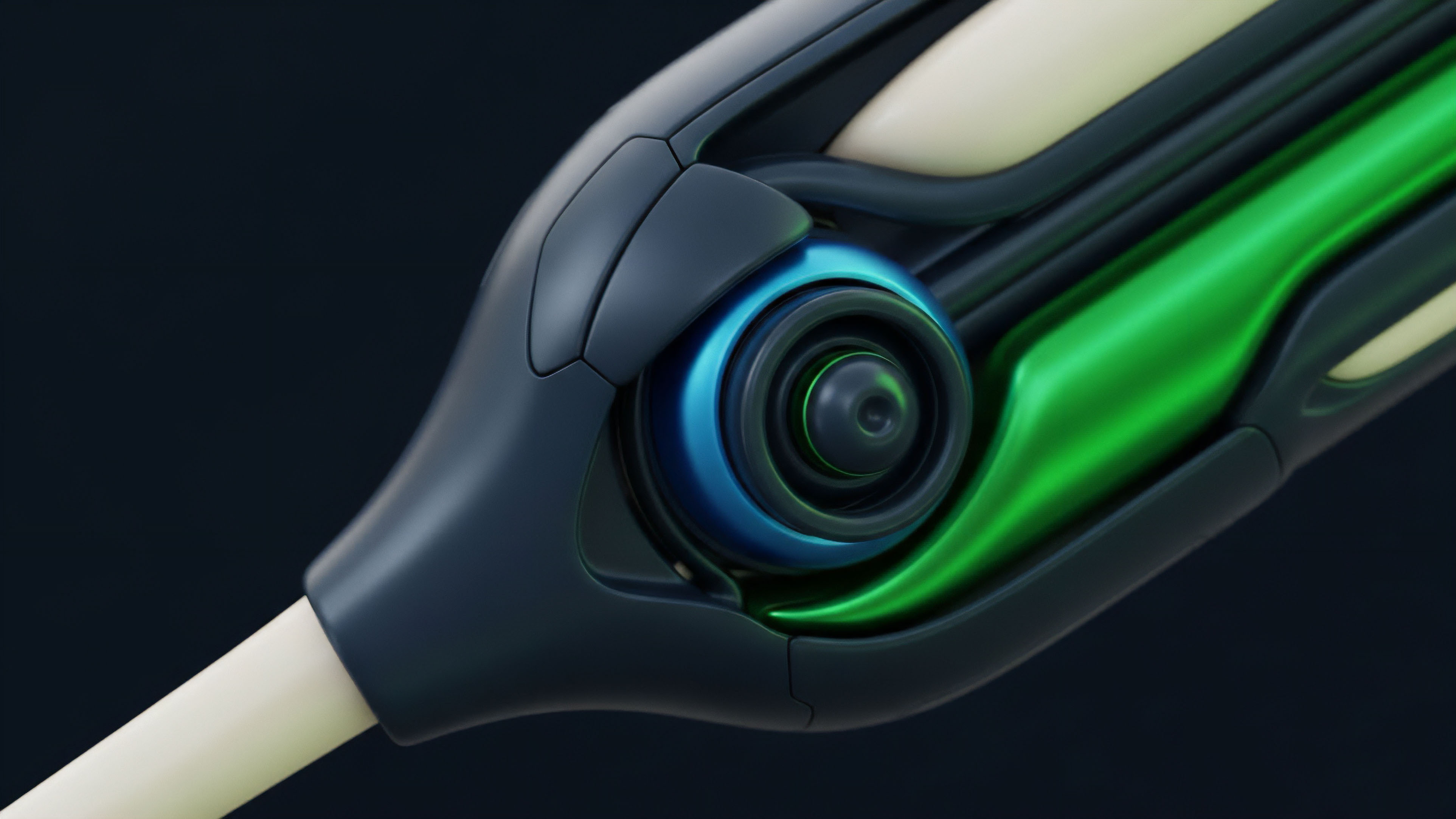

Off-Chain Pricing and Order Flow

The current state-of-the-art involves moving the computationally expensive task of option pricing and risk management off-chain. This allows for continuous, high-fidelity calculation of the implied volatility surface and the resulting arbitrage opportunities, free from the constraints of block time.

- Optimized Greek Calculation: Using Monte Carlo simulation or fast numerical methods to recompute the principal Options Greeks (Delta, Gamma, Vega, Theta) multiple times per second, so that the pricing function keeps pace with the market instead of lagging it.

- Predictive Gas Modeling: Arbitrage bots now incorporate sophisticated models to predict future gas prices and block inclusion times, adjusting their bid-ask spread on the arbitrage trade to account for the stochastic cost of execution.

- Request-for-Quote (RFQ) Systems: Replacing public AMMs with private, off-chain RFQ systems allows professional market makers to quote tighter spreads, knowing their quotes are protected from front-running and can be settled on-chain via a single, gas-efficient transaction.
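As an illustration of the off-chain Greek calculation in the list above, the sketch below swaps in the closed-form Black-Scholes-Merton formulas for a European call instead of the Monte Carlo or numerical methods the text mentions. It assumes a non-dividend-paying underlying and annualized inputs; the function name and return layout are illustrative.

```python
import math


def _norm_pdf(x: float) -> float:
    return math.exp(-0.5 * x * x) / math.sqrt(2.0 * math.pi)


def _norm_cdf(x: float) -> float:
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def bsm_call_greeks(spot: float, strike: float, rate: float,
                    vol: float, tau: float) -> dict:
    """Closed-form BSM Greeks for a European call (tau in years)."""
    if min(spot, strike, vol, tau) <= 0.0:
        raise ValueError("spot, strike, vol and tau must be positive")
    sqrt_tau = math.sqrt(tau)
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol * vol) * tau) / (vol * sqrt_tau)
    d2 = d1 - vol * sqrt_tau
    pdf_d1 = _norm_pdf(d1)
    return {
        "delta": _norm_cdf(d1),
        "gamma": pdf_d1 / (spot * vol * sqrt_tau),
        "vega": spot * pdf_d1 * sqrt_tau,  # per 1.00 change in vol
        "theta": (-spot * pdf_d1 * vol / (2.0 * sqrt_tau)
                  - rate * strike * math.exp(-rate * tau) * _norm_cdf(d2)),  # per year
    }


greeks = bsm_call_greeks(spot=100.0, strike=100.0, rate=0.0, vol=0.2, tau=1.0)
```

A closed form like this is cheap enough to re-evaluate on every spot tick. Path-dependent or non-European payoffs would need the heavier numerical methods the list refers to.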

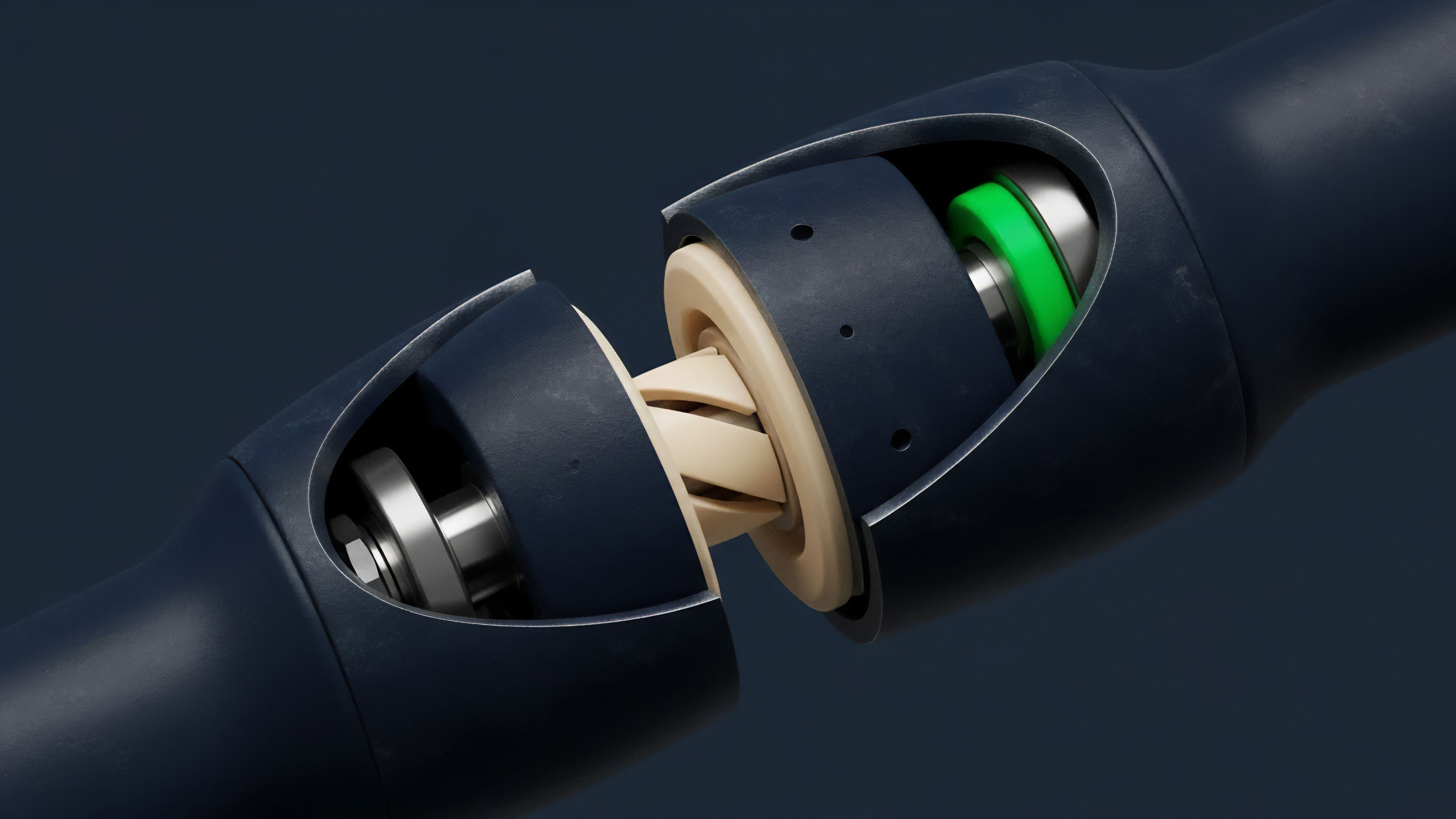

Technical Execution Optimization

On the technical side, the focus is on minimizing the execution friction that prevents the theoretical arbitrage from being realized. This is an architectural problem that involves protocol design trade-offs.

| Strategy | Protocol Design Implication | Impact on Arbitrage Delta |
|---|---|---|
| Batching Settlements | Requires a multi-asset margin system and a designated settlement window. | Reduces gas cost per trade, lowering the floor for profitable arbitrage. |
| Layer 2 Deployment | Mandates a complex cross-chain bridging and state synchronization mechanism. | Dramatically reduces execution latency, shrinking the arbitrage window time. |
| Optimized Rebalancing Logic | Uses a Dutch Auction or Time-Weighted Average Price (TWAP) for hedge execution. | Minimizes slippage and market impact for the arbitrageur’s hedge leg. |

The ultimate goal is to reduce the arbitrage window to a size that can only be captured by the most technically sophisticated participants, effectively transforming the inefficiency into a small, predictable cost of doing business that subsidizes the liquidity providers.

Evolution

The evolution of Cross-Instrument Parity Arbitrage Efficiency mirrors the maturation of the entire crypto derivatives complex. It began as a battle against structural latency and has progressed into a competition of capital efficiency and risk modeling.

From Static Pools to Dynamic Surfaces

Early iterations of options protocols treated liquidity as a passive resource, which arbitrageurs could drain by repeatedly taking the ‘good side’ of the trade based on external market data. The response was the development of dynamic liquidity models. These models actively adjust the implied volatility surface based on the pool’s current risk exposure (its aggregate Greeks), effectively internalizing the arbitrageur’s expected hedge cost into the option price.

This was a critical shift; the protocol itself became a dynamic participant in the arbitrage game, rather than a static oracle.
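A dynamic liquidity model of this kind can be sketched as a quote that shifts implied volatility according to the pool’s exposure after the trade. The linear rule, the `sensitivity` parameter, and the use of net Vega as the only risk measure are assumptions made for illustration; real protocols may use different functional forms and more Greeks.

```python
def quote_iv(
    base_iv: float,
    pool_net_vega: float,
    trade_vega: float,
    vega_limit: float,
    sensitivity: float = 0.5,
) -> float:
    """Implied volatility quoted to a trader, skewed by pool exposure.

    trade_vega > 0 means the trader buys Vega, so the pool becomes shorter.
    The shorter the pool ends up, the higher the IV it quotes.
    """
    if vega_limit <= 0.0:
        raise ValueError("vega_limit must be positive")
    exposure_after = pool_net_vega - trade_vega
    # Clamp utilization to [-1, 1] so the skew stays bounded.
    utilization = max(-1.0, min(1.0, exposure_after / vega_limit))
    return base_iv * (1.0 - sensitivity * utilization)


flat = quote_iv(base_iv=0.60, pool_net_vega=0.0, trade_vega=0.0, vega_limit=1000.0)
buy = quote_iv(base_iv=0.60, pool_net_vega=-500.0, trade_vega=100.0, vega_limit=1000.0)
```

Because the quote worsens as the pool’s exposure grows, repeatedly taking the same side becomes progressively more expensive, which is the mechanism that stops a passive pool from being drained.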

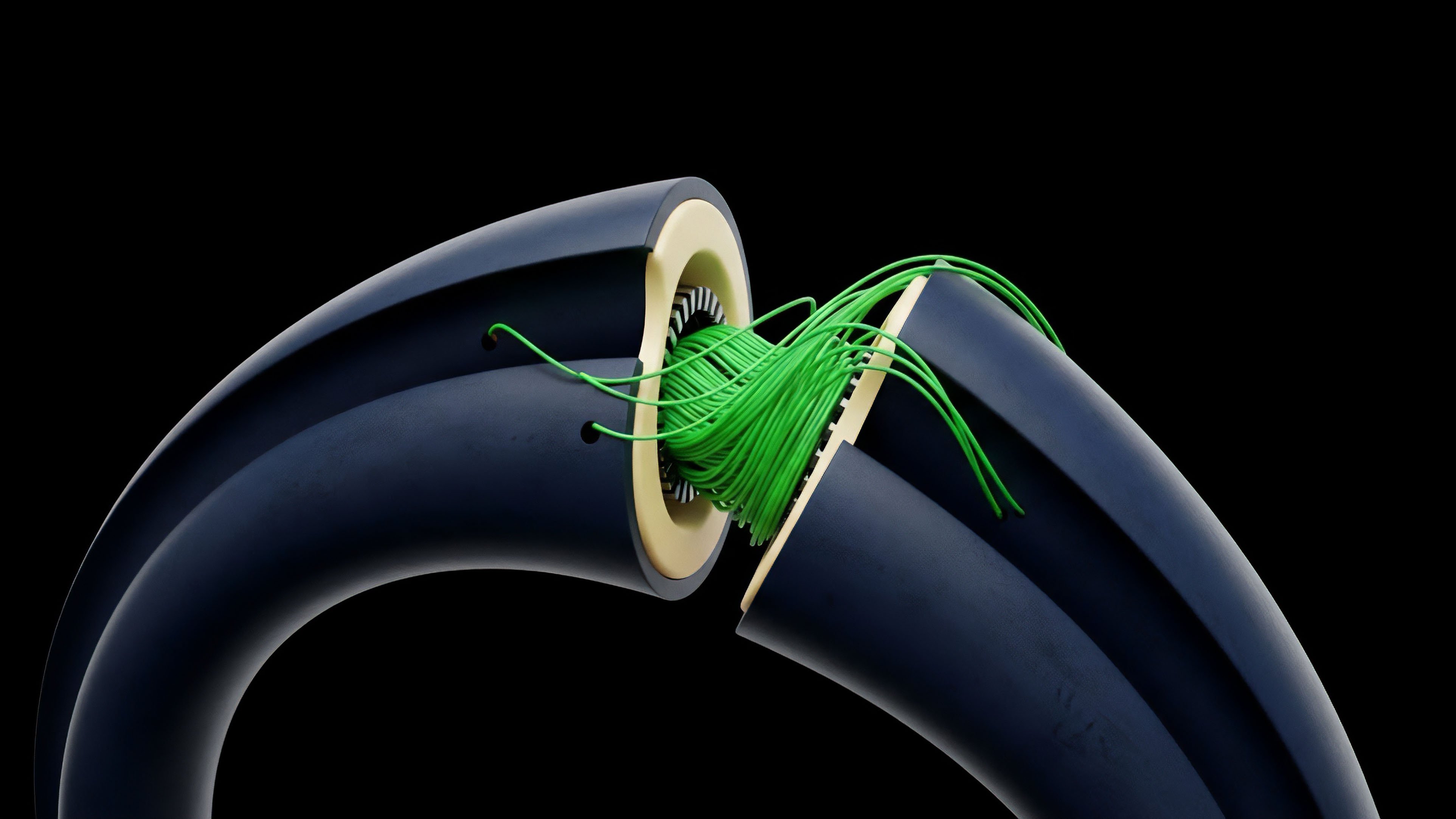

The transition to a multi-instrument approach marked the second phase. Arbitrage is no longer just a comparison between a call and a put; it is a three-way comparison involving the option, the spot market, and the perpetual futures market. This complexity is necessary because the perpetual swap’s funding rate often acts as a proxy for the cost of carry, which is a critical input for option pricing models.
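The three-way comparison can be sketched by using the perpetual’s funding rate to build a synthetic forward and then checking the forward form of parity, C − P = e^(−rT)(F − K). The simple (non-compounded) annualization, the default of three funding intervals per day, and the zero default discount rate are assumptions of this sketch, not properties stated in the text.

```python
import math


def annualize_funding(rate_per_interval: float,
                      intervals_per_year: int = 3 * 365) -> float:
    """Simple annualization of a per-interval funding rate (8-hour intervals assumed)."""
    return rate_per_interval * intervals_per_year


def parity_deviation(call: float, put: float, spot: float, strike: float,
                     tau: float, funding_annual: float,
                     discount_rate: float = 0.0) -> float:
    """Signed deviation from forward-form put-call parity.

    The forward is approximated as spot * exp(funding_annual * tau),
    treating perpetual funding as a proxy for the cost of carry.
    """
    forward = spot * math.exp(funding_annual * tau)
    return (call - put) - math.exp(-discount_rate * tau) * (forward - strike)


carry = annualize_funding(0.0001)
deviation = parity_deviation(call=6.0, put=5.0, spot=100.0, strike=100.0,
                             tau=0.25, funding_annual=0.0)
```

A positive deviation suggests the call is rich relative to the put and the funding-implied forward, and a negative one the reverse. Whether either is tradable still depends on the execution costs discussed earlier.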

The evolution demands a unified risk engine that models the entire derivative stack ⎊ spot, perpetuals, and options ⎊ as a single, interconnected system, eliminating localized inefficiencies.

The Layer 2 Scaling Impact

The deployment of options protocols onto Layer 2 (L2) scaling solutions represents the most significant leap in efficiency. By drastically reducing gas costs and increasing transaction throughput, L2s have shrunk the “dead zone” of unprofitable arbitrage. This means smaller mispricings are now economical to correct, leading to tighter spreads and a surface that more closely tracks the theoretical ideal.

However, this has also introduced a new form of systemic risk: cross-chain settlement risk. The arbitrage trade now has an added, non-zero risk that the L2 state will not be finalized or synchronized correctly with the Layer 1 (L1) state, a new form of execution uncertainty that the strategist must account for.

The future of this evolution is the integration of these systems into a unified, cross-protocol margin framework. This will allow an arbitrageur to post collateral on one protocol and execute the hedge on another, maximizing capital efficiency and further tightening the price relationship across the decentralized finance landscape.

Horizon

The future of Cross-Instrument Parity Arbitrage Efficiency is not the elimination of arbitrage, but its institutionalization and commodification. The next generation of protocols will treat the closing of arbitrage windows as a utility, a subsidized service provided to the market to guarantee price integrity.

The Rise of Volatility Tokens

We will see the rise of tokenized volatility products ⎊ structured products that are essentially perpetual bets on the shape and movement of the implied volatility surface. These tokens will become the most liquid instruments for hedging Vega and Gamma exposure, and their pricing will act as a real-time, collective market opinion on the efficiency of the options market. Arbitrageurs will shift from directly trading options to trading these volatility tokens against the options’ theoretical Greeks, creating a second-order arbitrage loop that further stabilizes the market.

Decentralized Autonomous Organizations and Risk Management

The final architectural shift will involve decentralized autonomous organizations (DAOs) actively managing the risk parameters of options AMMs. Governance will not merely vote on fees; it will vote on Dynamic Risk Adjustment Factors (DRAF) ⎊ parameters that dictate how quickly the AMM adjusts its pricing surface in response to external spot movements, thereby controlling the width of the arbitrage window. This is the ultimate expression of the systems architect’s vision: embedding risk management into the protocol’s governance layer.
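How such a factor might control the width of the arbitrage window can be sketched with a simple smoothing rule, in which the AMM’s reference price closes a fixed fraction of its gap to the external spot on each update. This interpretation of a DRAF as a single number between 0 and 1, and both function names, are hypothetical; the text does not specify the mechanism.

```python
def update_reference_price(reference: float, external_spot: float,
                           draf: float) -> float:
    """Move the AMM reference price a fraction `draf` of the way to spot."""
    if not 0.0 <= draf <= 1.0:
        raise ValueError("draf must be in [0, 1]")
    return reference + draf * (external_spot - reference)


def residual_gap(initial_gap: float, draf: float, updates: int) -> float:
    """Gap remaining after `updates` steps if spot then stays fixed."""
    if not 0.0 <= draf <= 1.0:
        raise ValueError("draf must be in [0, 1]")
    if updates < 0:
        raise ValueError("updates must be non-negative")
    return initial_gap * (1.0 - draf) ** updates


new_reference = update_reference_price(reference=100.0, external_spot=110.0, draf=0.5)
gap_after_three = residual_gap(initial_gap=10.0, draf=0.5, updates=3)
```

Under this rule a higher factor shrinks the window faster but makes the pool more sensitive to noisy or manipulated spot inputs, which is the trade-off governance would be voting on.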

This new environment will present new challenges for the quantitative analyst. The traditional BSM framework will be insufficient. The market will demand a pricing model that is:

- State-Dependent: Prices must be a function of the protocol’s current capital utilization and aggregate risk exposure.

- Game-Theoretic: The model must incorporate the expected behavior of the largest, most sophisticated arbitrage bots and market makers.

- Consensus-Aware: The cost of carry must include the predicted future cost of block space, which is a function of network activity and consensus mechanism design.

The most significant intellectual challenge on the horizon is the development of a unified theory of value for digital assets that successfully marries the continuous-time mathematics of finance with the discrete-time, adversarial reality of blockchain settlement. This is the only path to a truly resilient and efficient decentralized financial operating system.

Glossary

Arbitrage Gas Competition

Arbitrage Exploit

Liquidation Bonus Arbitrage

Execution Optimization

Arbitrage Friction Barriers

Capital Efficiency Dynamics

Arbitrage Opportunity Window

Market Microstructure

Capital Allocation