Essence

Network security constitutes the mathematical boundary between sovereign capital and systemic theft. In a decentralized environment, security is the objective measure of a system’s resistance to unauthorized state changes, and therefore the ultimate arbiter of whether a financial transaction remains final or becomes subject to adversarial reversal. This resistance is not a static property but a continuous equilibrium maintained through cryptographic proofs and economic game theory.

When this equilibrium falters, vulnerabilities manifest as structural gaps where the cost of an attack falls below the expected utility of the exploit. The integrity of a blockchain network relies on the verifiable scarcity of the resources required to participate in consensus. Security vulnerabilities represent instances where this scarcity is bypassed or where the consensus logic contains flaws that allow participants to act against the collective interest without suffering proportional economic loss.

Within the crypto options sector, these vulnerabilities introduce non-linear risks that standard pricing models such as Black-Scholes often fail to incorporate.

- Consensus Failure: A state where the agreement mechanism between nodes is compromised, allowing for double-spending or chain reorganization.

- Cryptographic Weakness: Flaws in the underlying mathematical primitives or their implementation that permit the forging of digital signatures.

- Economic Attack Vector: Strategies that use market liquidity or incentive imbalances to manipulate network behavior for profit.

- Network Topology Risk: Vulnerabilities arising from the physical or logical distribution of nodes, such as eclipse attacks or routing manipulation.

Security is the foundational layer upon which all derivative liquidity is constructed. Without the assurance of network-level finality, the entire stack of smart contracts and financial instruments collapses into a state of permanent uncertainty. The architect must view every protocol as a system under constant siege, where the only durable defense is a design that is mathematically sound and that makes attacks economically irrational.

Origin

The genesis of network security concerns traces back to the Byzantine Generals Problem, a classic dilemma in distributed computing.

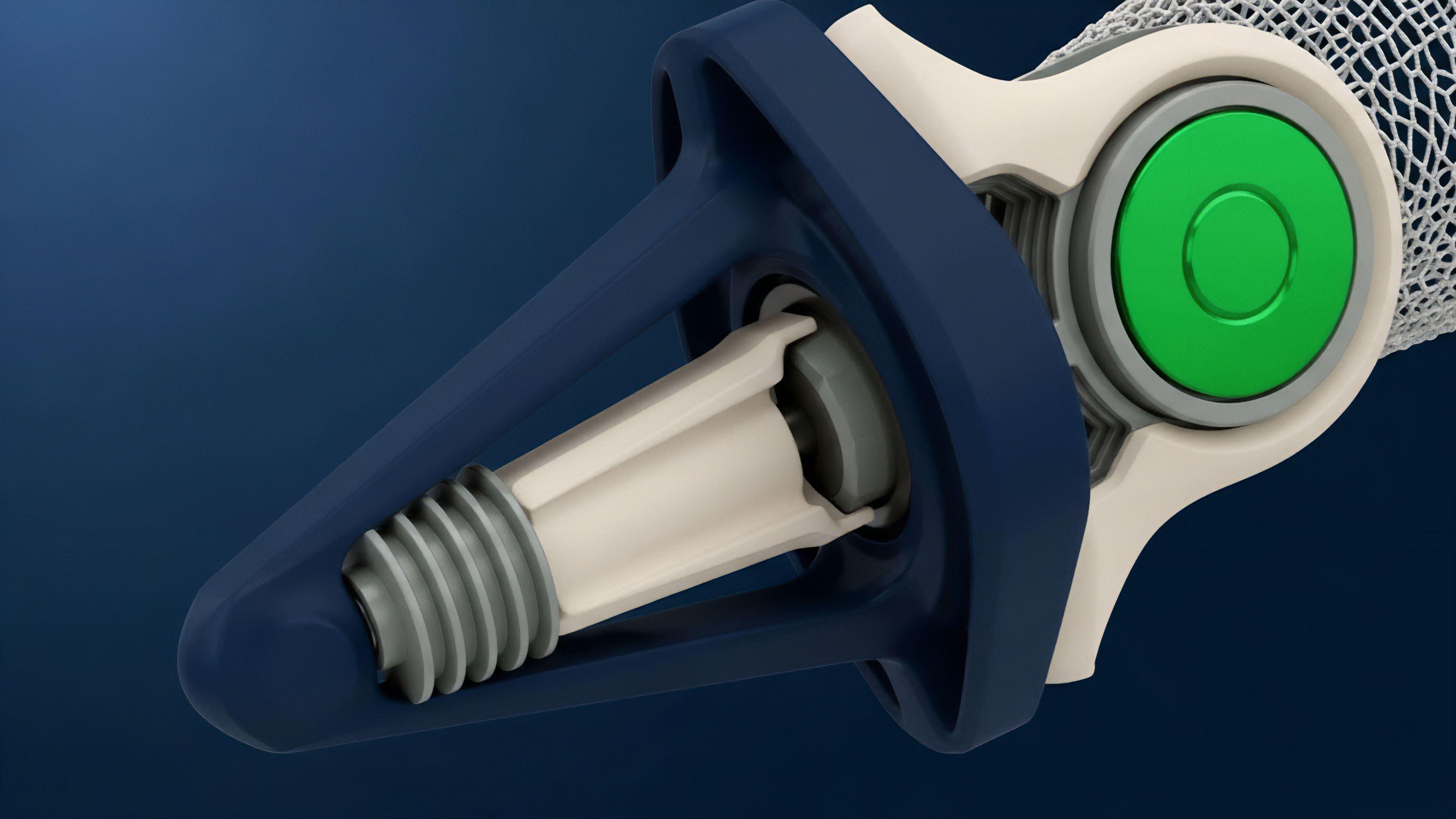

Early attempts at digital cash failed because they could not solve the double-spend problem without a central authority. The introduction of Proof of Work provided the first viable solution by tying the ability to update the ledger to the expenditure of physical energy, creating a direct link between thermodynamic cost and network security. As the technology moved from simple value transfer to programmable state machines, the surface area for vulnerabilities expanded.

The shift from Bitcoin’s Script to Ethereum’s Turing-complete Virtual Machine introduced a new class of risks: logic-based vulnerabilities that exist at the intersection of network consensus and smart contract execution. This transition marked the move from securing a ledger to securing a global, decentralized computer.

| Historical Era | Primary Security Focus | Dominant Vulnerability Class |
|---|---|---|
| Protocol Genesis | Double-Spending Prevention | 51% Hashrate Attacks |
| Programmable Era | State Machine Integrity | Reentrancy and Logic Errors |
| DeFi Expansion | Economic Incentive Alignment | Oracle Manipulation and MEV |
| Interoperability Era | Cross-Chain Finality | Bridge Proof Validation Failures |

The evolution of these vulnerabilities reflects the increasing sophistication of the participants. Early attacks were often blunt force attempts to overwhelm the network’s hash power. Modern exploits are surgical, leveraging the internal logic of the protocol or the external market forces that govern its value.

This historical trajectory demonstrates that as systems become more expressive, they become more fragile, requiring ever-greater levels of formal verification and economic modeling to remain secure.

Theory

Mathematical modeling of network security centers on the Cost of Attack (CoA) relative to the Profit from Attack (PfA). A system is considered secure if CoA > PfA for all rational actors. This relationship is often expressed through the lens of Sybil resistance, where the cost of acquiring the necessary influence to subvert consensus is made prohibitively expensive.

In Proof of Work, this cost is tied to hardware and electricity; in Proof of Stake, it is tied to the market value of the native token.
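The CoA > PfA condition can be made concrete with a small sketch. The code below is illustrative only: the `PosNetwork` fields, the one-third control fraction, and all numbers are assumptions chosen for the example, and the cost estimate ignores slippage, slashing, and the price collapse that an attack would likely trigger.

```python
from dataclasses import dataclass


@dataclass
class PosNetwork:
    """Hypothetical snapshot of a Proof of Stake network (illustrative fields)."""
    staked_tokens: float     # total tokens locked in consensus
    token_price: float       # market price per token, in USD
    control_fraction: float  # assumed share of stake needed to subvert consensus


def cost_of_attack(net: PosNetwork) -> float:
    """Naive CoA: market value of the stake an attacker must control."""
    return net.staked_tokens * net.token_price * net.control_fraction


def is_secure(net: PosNetwork, profit_from_attack: float) -> bool:
    """The condition from the text: secure only if CoA > PfA."""
    return cost_of_attack(net) > profit_from_attack


# Assumed example values, not data from any real network.
net = PosNetwork(staked_tokens=10_000_000, token_price=2.0, control_fraction=1 / 3)
print(round(cost_of_attack(net)))   # roughly 6.67 million USD
print(is_secure(net, 5_000_000))    # exploit worth less than the stake required
print(is_secure(net, 50_000_000))   # exploit worth more than the stake required
```

The same comparison applies to Proof of Work if `cost_of_attack` is replaced by an estimate of hardware and electricity costs.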

Network security is the equilibrium state where the mathematical cost of subverting consensus exceeds the economic utility derived from the exploit.

Quantitative analysis of these vulnerabilities involves calculating the probability of a successful attack based on the distribution of network resources. For instance, in a double-spend attempt against a Proof of Work chain, the probability of success is a function of the attacker’s share of the total hashrate and the number of confirmations required by the recipient; once the attacker controls a majority of the hashrate (the 51% case), success is eventually certain. The risk to derivative markets is particularly acute, as a single reorganization can invalidate the collateral backing thousands of open positions, leading to a cascade of liquidations and systemic contagion.
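One widely cited way to compute this probability is the catch-up calculation from the Bitcoin whitepaper, sketched below. It assumes a simplified model (a Poisson-distributed attacker lead and a fixed hashrate share), so the output is a rough guide rather than a precise risk figure. The function name is chosen here for illustration.

```python
from math import exp, factorial


def attacker_success_probability(q: float, z: int) -> float:
    """Probability that an attacker with hashrate share q overtakes the
    honest chain after the recipient has waited for z confirmations.

    Follows the calculation in the Bitcoin whitepaper (section 11).
    """
    if not 0.0 <= q <= 1.0 or z < 0:
        raise ValueError("q must be in [0, 1] and z must be non-negative")
    if q >= 0.5:
        return 1.0  # a majority attacker eventually catches up
    p = 1.0 - q
    lam = z * (q / p)  # expected attacker progress while z blocks are mined
    total = 1.0
    for k in range(z + 1):
        poisson = exp(-lam) * lam ** k / factorial(k)
        total -= poisson * (1.0 - (q / p) ** (z - k))
    return total


# Probability falls off quickly with confirmations for a 10% attacker,
# and much more slowly for a 30% attacker.
for share in (0.10, 0.30):
    for confirmations in (1, 6):
        prob = attacker_success_probability(share, confirmations)
        print(f"q={share:.2f} z={confirmations} P={prob:.7f}")
```

A recipient handling high-value transfers could invert this relationship and choose the smallest `z` that pushes the probability below an acceptable threshold.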

- Byzantine Fault Tolerance: The ability of a network to reach valid consensus despite malicious participants; classical protocols tolerate fewer than one third of nodes being faulty.

- Sybil Resistance Mechanisms: The methods used to ensure that a single entity cannot gain disproportionate control by creating multiple identities.

- Finality Thresholds: The mathematical point at which a transaction is considered irreversible within a specific consensus model.

- Liveness vs Safety: The trade-off between a network’s ability to continue processing transactions and its ability to ensure those transactions are correct.
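The fault-tolerance and finality concepts in the list above can be sketched numerically. The code assumes the classical bound for Byzantine agreement with equally weighted validators (n ≥ 3f + 1); stake-weighted or probabilistic-finality systems use different thresholds, and the function names are illustrative.

```python
def max_byzantine_faults(n: int) -> int:
    """Largest f such that n >= 3f + 1 (classical BFT bound)."""
    if n < 1:
        raise ValueError("a network needs at least one node")
    return (n - 1) // 3


def quorum_size(n: int) -> int:
    """Smallest vote count q such that any two quorums share at least
    f + 1 nodes, so they always overlap in an honest node.

    Two quorums of size q overlap in at least 2q - n nodes, which
    requires q >= (n + f + 1) / 2, rounded up.
    """
    f = max_byzantine_faults(n)
    return (n + f + 2) // 2


for nodes in (4, 7, 100):
    print(nodes, max_byzantine_faults(nodes), quorum_size(nodes))
```

The overlap requirement is what ties safety to finality: two conflicting blocks cannot both collect a quorum unless more than `f` nodes vote for both.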

Adversarial game theory provides the structure for understanding how rational participants might deviate from the protocol. If the rewards for honesty are lower than the potential gains from collusion or sabotage, the network will eventually succumb to internal decay. The architect must therefore design systems where the Nash Equilibrium is the honest participation of all nodes, ensuring that the network remains robust even in the presence of highly motivated and well-capitalized adversaries.

Approach

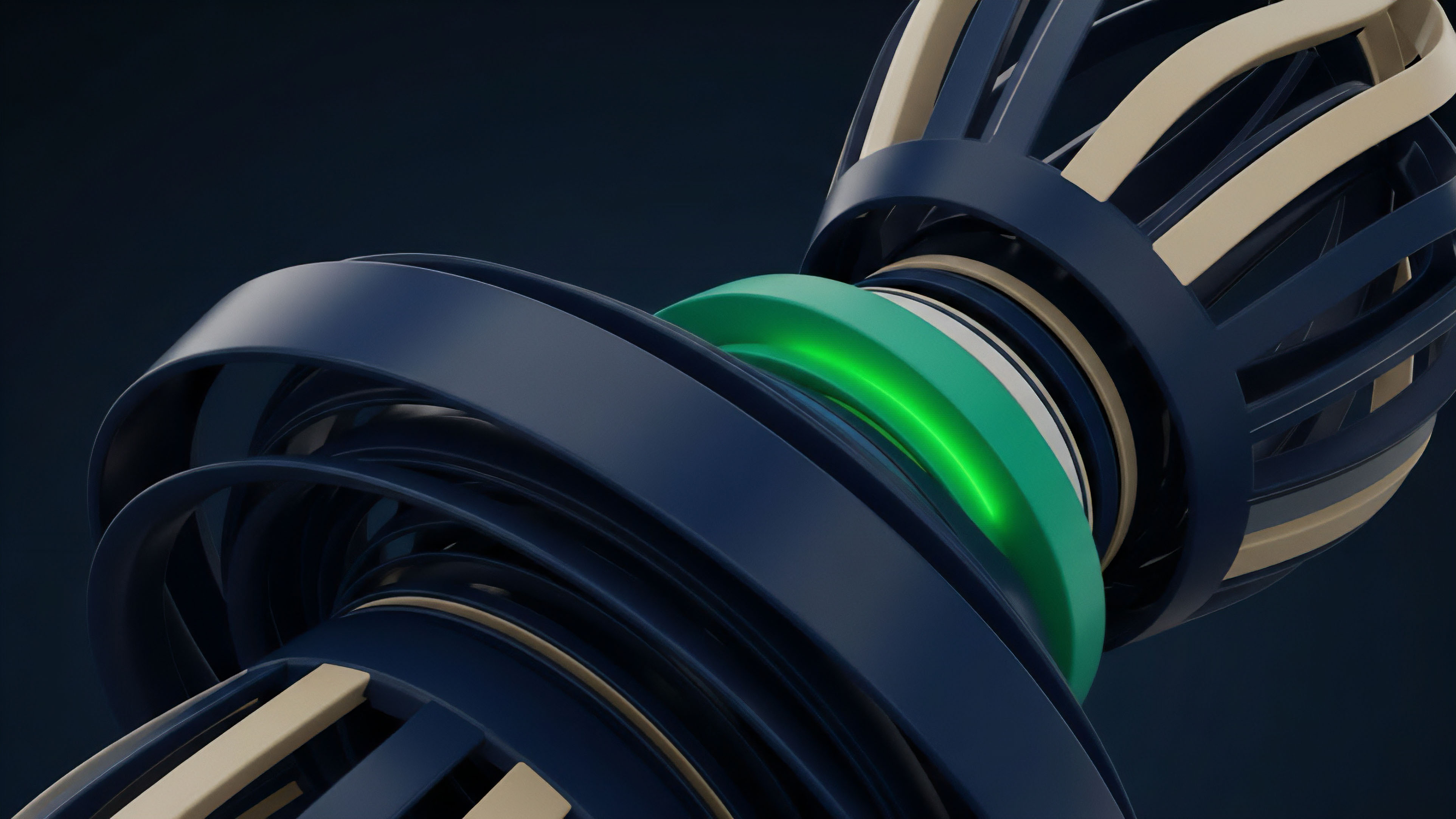

Current methodologies for mitigating network security vulnerabilities involve a multi-layered defense strategy.

This begins with formal verification: using mathematical proofs to show that the protocol’s code conforms to its specification under all modeled conditions. Unlike traditional testing, which only checks for known failure modes, formal verification attempts to prove the absence of entire classes of vulnerabilities.

On-chain monitoring and automated circuit breakers represent the second layer of defense. These systems track network health in real time, looking for anomalies such as sudden shifts in hashrate, unusual patterns of large transactions, or deviations from expected consensus behavior. If a threat is detected, these mechanisms can trigger defensive actions, such as pausing certain protocol functions or increasing the required confirmation times for high-value transfers.
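A monitoring rule of this kind might look like the following minimal sketch. The class name, the rolling window, and the z-score threshold are all assumptions made for illustration; a production monitor would combine many signals and would need a policy for resetting the breaker.

```python
from collections import deque
from statistics import mean, pstdev


class HashrateCircuitBreaker:
    """Toy monitor that trips when a sample deviates sharply from
    its recent history (hypothetical design, not a real protocol API)."""

    def __init__(self, window: int = 10, z_threshold: float = 3.0):
        self.samples = deque(maxlen=window)
        self.z_threshold = z_threshold
        self.tripped = False

    def observe(self, hashrate: float) -> bool:
        """Record a sample and return True if the breaker is tripped."""
        # Only evaluate once a full window of history is available.
        if len(self.samples) == self.samples.maxlen:
            mu = mean(self.samples)
            sigma = pstdev(self.samples)
            # A perfectly flat history (sigma == 0) is skipped in this sketch.
            if sigma > 0 and abs(hashrate - mu) / sigma > self.z_threshold:
                self.tripped = True
        self.samples.append(hashrate)
        return self.tripped


breaker = HashrateCircuitBreaker()
for sample in [100, 101, 99, 100, 102, 98, 100, 101, 99, 100]:
    breaker.observe(sample)
print(breaker.tripped)       # normal variation only
print(breaker.observe(60))   # sudden 40% drop in observed hashrate
```

When `observe` returns `True`, the surrounding system could respond as described above, for example by pausing a function or raising the confirmation requirement for large transfers.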

| Defensive Layer | Methodology | Primary Objective |
|---|---|---|
| Static Analysis | Automated Code Scanning | Identify Common Syntax and Logic Flaws |
| Formal Verification | Mathematical Proofs | Ensure Absolute Adherence to Specifications |
| Economic Auditing | Game Theory Simulation | Verify Incentive Alignment and Resistance |
| Real-Time Monitoring | On-Chain Analytics | Detect and Respond to Active Exploits |

The efficacy of a security strategy is measured by its ability to maintain system integrity during periods of extreme market volatility and adversarial pressure.

Bug bounties and decentralized security audits provide a human-centric layer of protection. By incentivizing independent researchers to find and report vulnerabilities, protocols can tap into a global pool of expertise that far exceeds the capabilities of any single internal team. This adversarial approach to security, where the system is constantly being probed for weaknesses by friendly actors, is vital for identifying the creative and non-obvious exploits that automated tools often miss.

Evolution

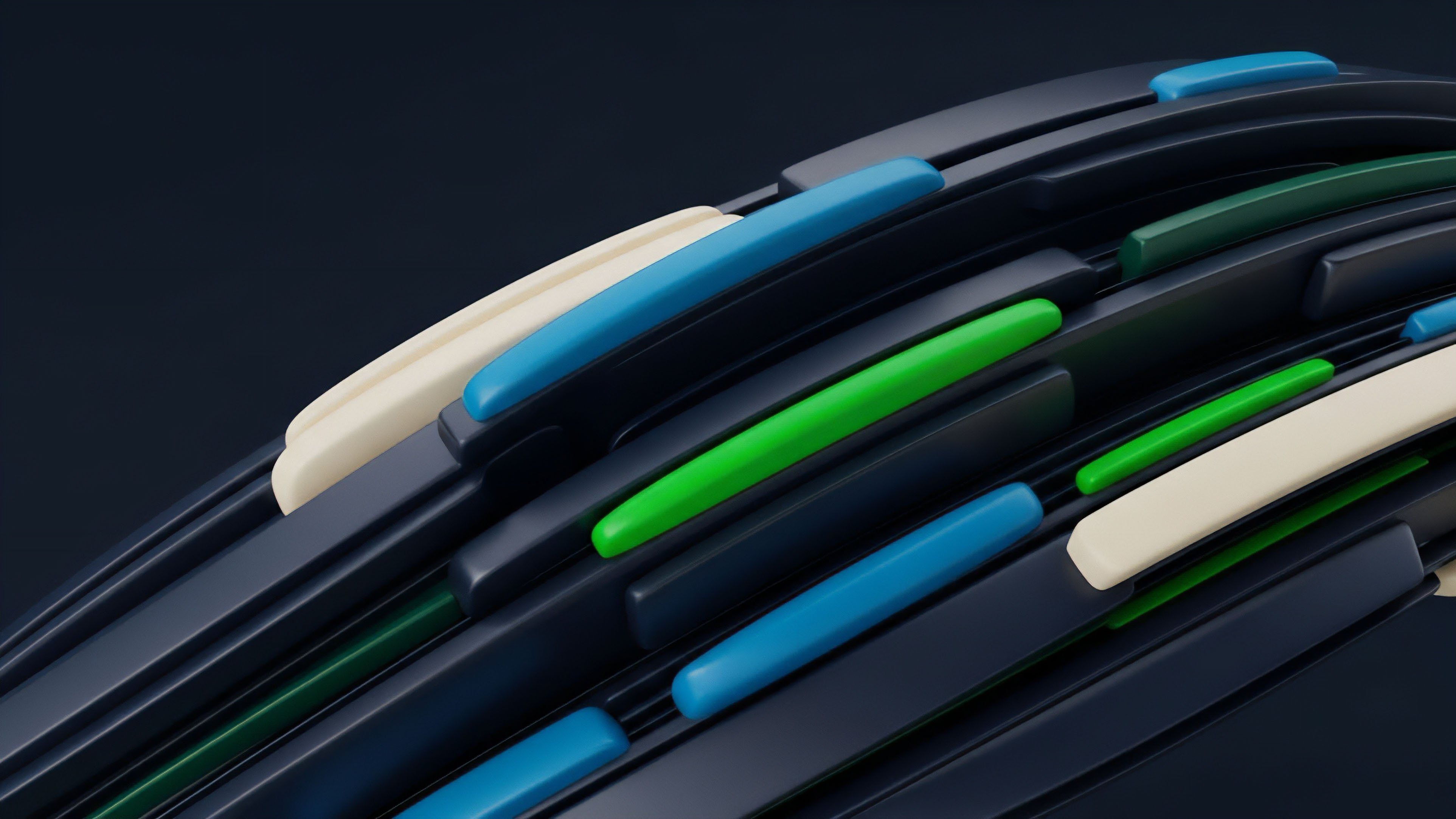

The nature of network security has shifted from the physical to the economic.

In the early days, the primary threat was the accumulation of specialized hardware. Today, the most significant vulnerabilities exist in the complex interactions between protocols. The rise of decentralized finance has introduced flash loans, which allow an attacker to borrow massive amounts of capital without collateral, provided it is repaid within the same atomic transaction. That window is long enough to manipulate prices or a protocol’s incentive structure.
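To illustrate why market depth matters for this kind of manipulation, the sketch below estimates how much borrowed capital is needed to move the spot price of a constant-product pool (x · y = k). The pool model, the absence of fees, and the reserve figures are all simplifying assumptions made for this example.

```python
def price_after_buy(base_reserve: float, quote_reserve: float, quote_in: float) -> float:
    """Spot price (quote per base) after swapping quote_in into a
    fee-free constant-product pool."""
    k = base_reserve * quote_reserve
    new_quote = quote_reserve + quote_in
    new_base = k / new_quote  # invariant x * y = k is preserved
    return new_quote / new_base


def capital_to_move_price(quote_reserve: float, target_ratio: float) -> float:
    """Quote capital needed to multiply the spot price by target_ratio.

    Price scales with the square of the quote reserve, so the required
    input is quote_reserve * (sqrt(target_ratio) - 1).
    """
    if target_ratio < 1.0:
        raise ValueError("this sketch only models pushing the price up")
    return quote_reserve * (target_ratio ** 0.5 - 1.0)


# Assumed pools with the same starting price (1,000) but different depth.
shallow = capital_to_move_price(quote_reserve=1_000_000, target_ratio=2.0)
deep = capital_to_move_price(quote_reserve=100_000_000, target_ratio=2.0)
print(round(shallow))  # capital to double the price in the shallow pool
print(round(deep))     # 100x more capital for the 100x deeper pool
print(round(price_after_buy(1_000, 1_000_000, shallow)))
```

Because a flash loan removes the attacker's capital constraint for the duration of one transaction, the depth of the pool (and any fees) is the main remaining cost in this simplified picture.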

Flash loans have turned security into a liquidity-based challenge: the network is only as secure as the depth of its markets. The transition to Proof of Stake has also altered the security landscape. While it reduces the physical energy requirements, it introduces new risks such as long-range attacks and stake centralization.

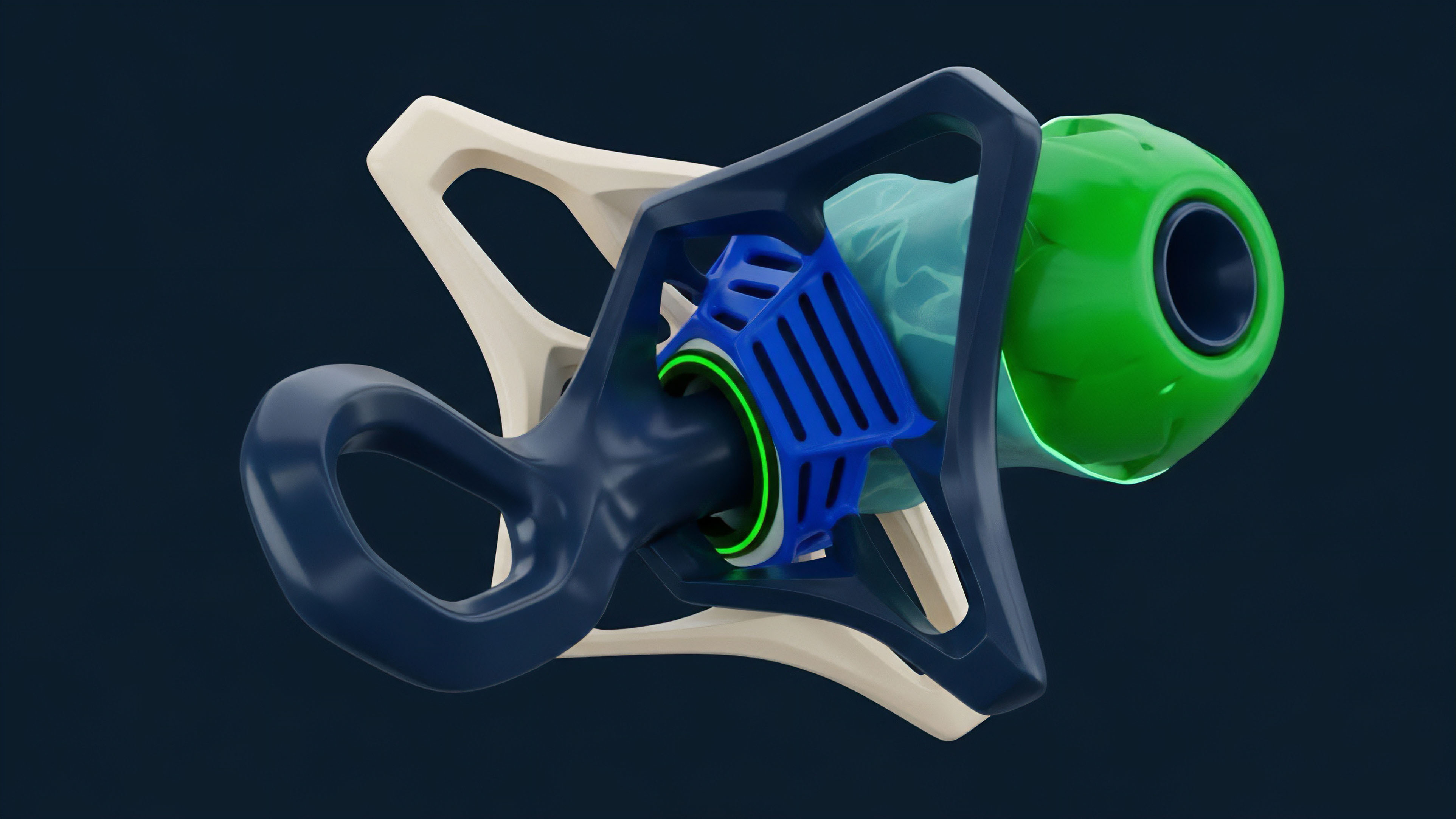

If a small number of entities control a majority of the staked assets, they can effectively dictate the state of the network, undermining the very decentralization that security is meant to protect. This has led to the development of liquid staking and restaking protocols, which further complicate the security model by creating layers of derivative claims on the underlying network collateral.
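The concentration concern described above is often summarized with a Nakamoto coefficient: the smallest number of entities whose combined stake crosses a chosen control threshold. The sketch below is a minimal version; the stake distribution is invented, and the appropriate threshold (one third, one half, two thirds) depends on the consensus model, so both are assumptions.

```python
def nakamoto_coefficient(stakes: list[float], threshold: float = 0.5) -> int:
    """Smallest number of entities whose combined stake exceeds
    `threshold` of the total stake."""
    total = sum(stakes)
    if total <= 0:
        raise ValueError("total stake must be positive")
    running = 0.0
    # Count the largest holders first, since they reach control fastest.
    for count, stake in enumerate(sorted(stakes, reverse=True), start=1):
        running += stake
        if running > threshold * total:
            return count
    return len(stakes)  # only reached when threshold >= 1


# Hypothetical stake distribution across six entities (percent of total).
stakes = [40, 25, 15, 10, 5, 5]
print(nakamoto_coefficient(stakes, threshold=0.5))    # entities needed for a majority
print(nakamoto_coefficient(stakes, threshold=1 / 3))  # entities needed to exceed one third
```

Liquid staking and restaking make the input harder to measure, because several on-chain validators may ultimately be controlled by, or answerable to, a single entity.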

| Vulnerability Shift | Old Model | New Model |
|---|---|---|
| Attack Resource | ASIC Hardware / Electricity | Staked Capital / Governance Tokens |
| Exploit Velocity | Days (Hashrate Accumulation) | Seconds (Atomic Transactions) |
| Target Surface | Base Layer Consensus | Cross-Protocol Interdependencies |
| Mitigation Focus | Hashrate Diversification | Incentive Design and MEV Smoothing |

Security is no longer a binary state but a spectrum of economic resistance. The architect must traverse this terrain with the understanding that optimizations for speed or scalability often come at the cost of security. The drive toward modular blockchains, where consensus, data availability, and execution are handled by different layers, represents the latest attempt to manage this trade-off.

However, this modularity also introduces new points of failure at the interfaces between layers, requiring a unified perspective on the entire stack to ensure systemic stability.

Horizon

The future of network security will be defined by the emergence of quantum computing and the increasing role of artificial intelligence in both attack and defense. Quantum computers pose a fundamental threat to the elliptic curve cryptography that currently secures almost all blockchain networks. A sufficiently powerful quantum machine could derive private keys from exposed public keys, allowing an attacker to forge signatures and spend funds they do not own.

Preparing for this “Q-Day” requires the development and implementation of post-quantum cryptographic algorithms, a massive undertaking that will require coordinated upgrades across the entire industry.

Future security architectures must integrate quantum-resistant cryptography and AI-driven defensive agents to survive in an increasingly automated adversarial environment.

Artificial intelligence will act as a force multiplier for both sides of the security equation. Attackers will use AI to scan for vulnerabilities with unprecedented speed and precision, while defenders will deploy AI agents to monitor networks and respond to threats in real-time. This will lead to an automated arms race where the speed of response is measured in milliseconds.

The architect must design systems that are not only secure by design but also capable of autonomous adaptation to new and unforeseen threats. The ultimate goal is the creation of a “self-healing” network: a system that can detect its own vulnerabilities and automatically deploy patches or adjust its incentive structures to mitigate them. This would represent the final realization of the decentralized vision: a financial operating system that is truly sovereign, resilient, and immune to human error or malice.

The path to this future is fraught with technical and philosophical challenges, but it is the only way to ensure that the decentralized markets of tomorrow are built on a foundation of absolute security. The convergence of zero-knowledge proofs and secure multi-party computation will likely provide the mathematical tools necessary for this transition, allowing for private yet verifiable transactions that do not compromise the network’s integrity.

As we move toward this state, the distinction between code and law will become even more blurred, with the mathematical properties of the network serving as the ultimate and final authority on all matters of value and ownership. This shift will require a fundamental rethinking of how we perceive risk and trust in a world where the human element is increasingly removed from the core processes of financial settlement and network governance.
