Essence

Automated verification tools function as the computational gatekeepers for derivative protocols, systematically auditing the logic that governs asset pricing, collateralization, and execution. These systems go beyond manual code review by using formal methods and symbolic execution to reason about the states a smart contract can reach. In the volatile theater of decentralized finance, they provide the mathematical assurance needed to maintain systemic integrity where human oversight falls short.

Automated verification tools serve as mathematical proofs of contract safety against a stated specification, replacing subjective human trust with deterministic logic.

The primary utility of these systems lies in their ability to detect edge cases (liquidation race conditions, oracle manipulation vectors, or arithmetic overflows) before capital is committed to a live environment. By modeling the protocol as a state machine, developers can verify that invariant properties, such as the solvency of a margin engine, remain intact across all possible input sequences. This shifts the security paradigm from reactive patching to proactive, logic-based immunity.

Origin

The lineage of these tools traces back to formal methods in computer science, specifically the application of Hoare Logic and model checking to ensure software reliability in critical infrastructure.

Initially developed for aerospace and banking systems, these techniques found a natural home within the constraints of immutable, permissionless ledgers. The transition occurred when the frequency of high-value exploits demonstrated that traditional testing methods could not keep pace with the composability of DeFi protocols.

- Formal Verification emerged as the standard for proving that code satisfies its formal specification, rather than merely passing a finite set of tests.

- Symbolic Execution allows engines to explore code paths by treating variables as symbols rather than concrete values, uncovering hidden execution branches.

- Static Analysis provides the initial layer of defense, scanning source code for known anti-patterns and vulnerabilities without executing the logic.
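To make the static-analysis layer concrete, here is a deliberately naive Python sketch that flags two well-known Solidity anti-patterns (authorization via `tx.origin` and raw `delegatecall`) by pattern-matching source text without executing it. The rule names, regexes, and sample contract are illustrative assumptions; production analyzers such as Slither work on a compiled intermediate representation rather than raw text, so treat this only as an illustration of the idea.

```python
import re

# Hypothetical rule set: each rule maps a name to a regex for a known anti-pattern.
RULES = {
    "tx-origin": re.compile(r"\btx\.origin\b"),
    "delegatecall": re.compile(r"\.delegatecall\s*\("),
}

def scan(source: str) -> list[tuple[int, str]]:
    """Return (line number, rule name) for every rule match in the source."""
    findings = []
    for lineno, line in enumerate(source.splitlines(), start=1):
        code = line.split("//", 1)[0]  # ignore single-line comments
        for name, pattern in RULES.items():
            if pattern.search(code):
                findings.append((lineno, name))
    return findings

SAMPLE = """\
function withdraw(uint256 amount) external {
    require(tx.origin == owner); // authorizes the wrong account
    payable(msg.sender).transfer(amount);
}
function upgrade(address impl, bytes calldata data) external {
    impl.delegatecall(data);
}
"""

if __name__ == "__main__":
    for lineno, rule in scan(SAMPLE):
        print(f"line {lineno}: {rule}")
```

Because it never executes or models the code, a check like this is cheap but shallow: it cannot tell whether a flagged `delegatecall` targets a trusted address.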

Early implementations focused on simple token contracts, but the rise of complex options platforms necessitated more robust frameworks. Developers began integrating these verification layers directly into their development lifecycles, recognizing that in a decentralized setting, where deployed code is often immutable, a single logic error can cause permanent, irreversible losses.

Theory

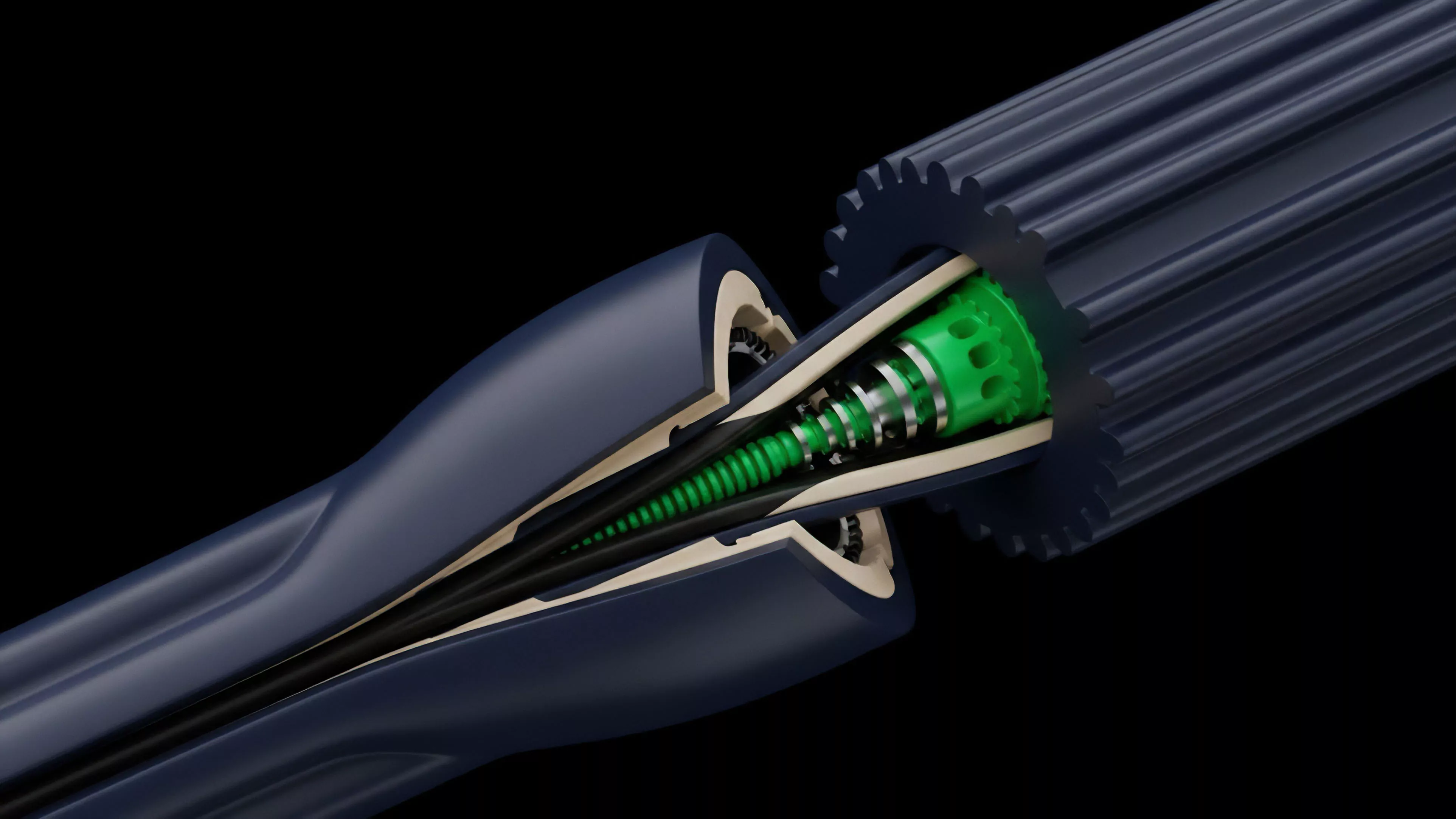

The architecture of a verification engine relies on Invariants: conditions that must hold true at every stage of a protocol's operation. If a protocol defines the invariant that posted collateral must always cover the maximum payout owed on issued options, the verification tool attempts to prove that no sequence of user transactions or external price updates can violate it; when the proof fails, it reports a concrete counterexample.

The proof must account for both the contract's own storage and the blockchain context it depends on, such as block timestamps, caller addresses, and state held in other contracts.
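The invariant above can be explored mechanically. The sketch below assumes a hypothetical cash-secured put vault with fixed-size deposits and a fixed strike, and performs bounded, explicit-state search (a brute-force relative of model checking) over every action sequence up to `max_depth`. With the obligation check in `withdraw` disabled, it returns the shortest sequence that breaks the invariant; with the check enabled, it finds none. A clean result only covers sequences up to that depth and is not a full proof.

```python
from collections import deque

# Hypothetical parameters for a toy cash-secured put vault.
STRIKE = 100    # maximum payout owed per written put, in quote units
DEPOSIT = 100   # fixed size of every deposit and withdrawal
ACTIONS = ("deposit", "write_put", "withdraw")

def invariant(state):
    collateral, open_puts = state
    # collateral must cover the worst-case payout on every open put
    return collateral >= open_puts * STRIKE

def step(state, action, check_obligations):
    collateral, open_puts = state
    if action == "deposit":
        return (collateral + DEPOSIT, open_puts)
    if action == "write_put":
        # writing is allowed only if the new obligation is fully collateralized
        if collateral >= (open_puts + 1) * STRIKE:
            return (collateral, open_puts + 1)
        return state  # rejected: models a reverted transaction
    if action == "withdraw":
        # the buggy variant ignores collateral locked by open puts
        locked = open_puts * STRIKE if check_obligations else 0
        if collateral - locked < DEPOSIT:
            return state
        return (collateral - DEPOSIT, open_puts)
    raise ValueError(f"unknown action {action!r}")

def find_violation(max_depth, check_obligations=True):
    """Breadth-first search from an empty vault; returns the shortest action
    sequence reaching a state that violates the invariant, or None."""
    start = (0, 0)
    queue = deque([(start, ())])
    seen = {start}
    while queue:
        state, trace = queue.popleft()
        if not invariant(state):
            return trace
        if len(trace) == max_depth:
            continue
        for action in ACTIONS:
            nxt = step(state, action, check_obligations)
            if nxt not in seen:
                seen.add(nxt)
                queue.append((nxt, trace + (action,)))
    return None

if __name__ == "__main__":
    print(find_violation(5, check_obligations=False))
    print(find_violation(6, check_obligations=True))
```

The buggy variant fails on the sequence `('deposit', 'write_put', 'withdraw')`: the withdrawal drains collateral that was backing the open put.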

A proven invariant holds in every reachable state, regardless of market conditions or adversarial intervention.

When analyzing crypto derivatives, these tools specifically target the Margin Engine and Settlement Logic. By encoding the protocol's rules as constraints for an SMT solver, the engine can search for scenarios in which a sudden drop in asset price moves the system into a state where positions cannot be liquidated fast enough to maintain solvency. This is where the theory intersects with quantitative finance: the tools do not merely check code; they check the economic viability of the financial model implemented in code.
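Production tools hand such questions to an SMT solver such as Z3, which reasons over the constraints symbolically. The sketch below swaps that for plain exhaustive search over a small, discrete domain, which is enough to show the shape of the query: find a position that is not yet liquidatable before a single oracle update and already insolvent after it, so no liquidator ever gets a chance to act. The 110% maintenance ratio, the debt size, and the price grid are all hypothetical.

```python
from fractions import Fraction

# Hypothetical margin parameter: positions at or above this ratio cannot be liquidated.
MAINTENANCE = Fraction(110, 100)

def collateral_ratio(units, price, debt):
    # value of collateral divided by debt, computed exactly
    return Fraction(units * price, debt)

def find_gap_counterexample(debt=1_000, max_units=20, prices=range(50, 151)):
    """Return (drop_pct, units, price) for the smallest whole-percent price drop
    that takes a non-liquidatable position straight to insolvency in one update."""
    for drop_pct in range(100):
        for units in range(1, max_units + 1):
            for price in prices:
                before = collateral_ratio(units, price, debt)
                after = before * Fraction(100 - drop_pct, 100)
                if before >= MAINTENANCE and after < 1:
                    return drop_pct, units, price
    return None

if __name__ == "__main__":
    print(find_gap_counterexample())
```

The search reports a 10% drop (8 units priced at 138 against a debt of 1,000, a ratio of 1.104), matching the analytical bound: any single update that cuts the price by more than 1 - 1/1.1, about 9.1%, can skip the liquidation window entirely.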

| Methodology | Primary Focus | Computational Cost |
| --- | --- | --- |
| Model Checking | State Space Exploration | High |
| Symbolic Execution | Path Coverage | Moderate |
| Static Analysis | Pattern Recognition | Low |

In practice, these systems trade the depth of the proof against the time required to compute it. A truly exhaustive verification can take days, whereas a targeted check completes in seconds, forcing architects to decide which invariants are truly foundational to the system's survival.
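The cost side of this trade-off is easy to quantify under a simplifying assumption: if each step lets an attacker pick one of k actions and no two sequences are ever merged, the number of sequences to examine up to depth n is k^0 + k^1 + ... + k^n, which grows exponentially in n. The helper below simply evaluates that sum; real checkers fight this growth with state deduplication, abstraction, and symbolic reasoning, so the figures are an upper bound for naive enumeration rather than a prediction for any particular tool.

```python
def sequences_up_to_depth(num_actions: int, depth: int) -> int:
    """Count action sequences of length 0..depth when every step chooses one of
    num_actions actions and no two sequences are merged."""
    return sum(num_actions ** d for d in range(depth + 1))

if __name__ == "__main__":
    for depth in (2, 4, 6, 8):
        print(depth, sequences_up_to_depth(10, depth))
```

With ten possible actions, depth 6 already means 1,111,111 sequences and depth 8 means 111,111,111, which is why bounding the depth is usually the first lever architects reach for.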

Approach

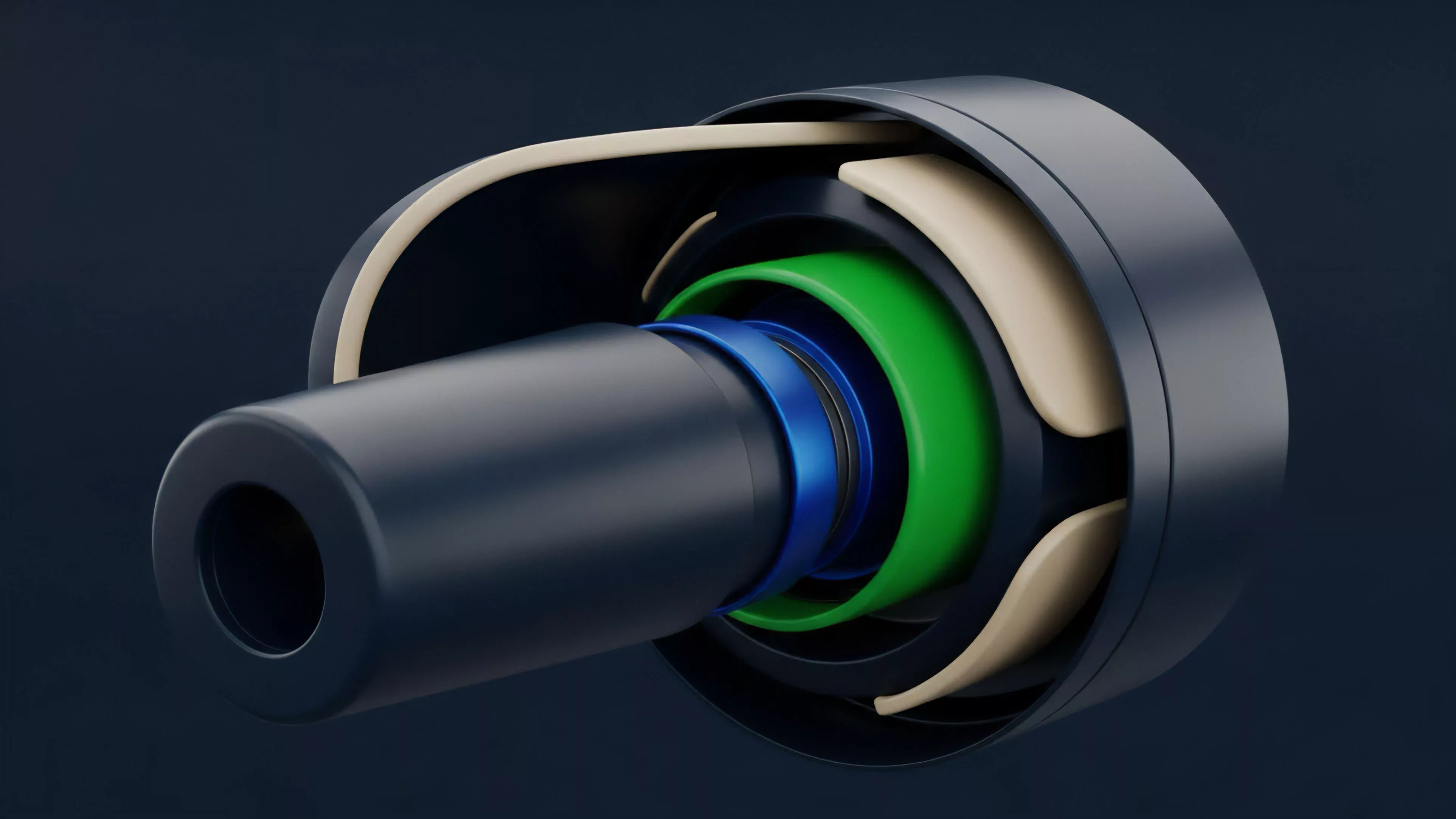

Current implementation strategies emphasize a multi-layered defense. Developers now utilize Continuous Integration pipelines where verification occurs automatically upon every commit.

This ensures that any modification to the pricing oracle or the collateralization ratio is immediately subjected to the suite of formal checks. It is an adversarial approach, where the verification tool acts as an automated hacker, constantly searching for inputs that break the financial model.
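A CI gate usually reduces to one rule: the verification step exits with a nonzero status when any check fails, and the pipeline rejects the commit. The Python sketch below shows that shape with two property checks against a hypothetical fee function; the function, the check names, and the 30 bps rate are illustrative assumptions, and a real suite would call into the protocol under test or a dedicated verification tool.

```python
import sys

def fee(notional: int, bps: int) -> int:
    # hypothetical protocol function under test: fee in basis points, rounded down
    return notional * bps // 10_000

def check_fee_bounded() -> bool:
    # property: a fee is never negative and never exceeds the notional
    return all(0 <= fee(n, b) <= n
               for n in range(0, 10_001, 97)
               for b in range(0, 10_001, 250))

def check_fee_monotonic() -> bool:
    # property: a larger notional never produces a smaller fee at a fixed rate
    return all(fee(n, 30) <= fee(n + 1, 30) for n in range(10_000))

CHECKS = {
    "fee_bounded": check_fee_bounded,
    "fee_monotonic": check_fee_monotonic,
}

def run_checks(checks) -> int:
    """Run every check, report failures, and return a process exit status."""
    failures = [name for name, check in checks.items() if not check()]
    for name in failures:
        print(f"FAIL {name}")
    return 1 if failures else 0

if __name__ == "__main__":
    sys.exit(run_checks(CHECKS))
```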

Continuous automated verification transforms security from a final audit into an ongoing, rigorous development standard.

The process involves mapping the protocol's Financial Greeks (Delta, Gamma, Vega) into code constraints. If the protocol is designed to be delta-neutral, the verification tool checks that no combination of user actions can inadvertently create an unhedged exposure that threatens the protocol's treasury. This level of rigor is required because decentralized markets operate under constant, automated stress.
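A delta-neutrality property can be checked like any other invariant. The sketch below assumes a hypothetical protocol that is short every option it sells, uses fixed per-contract deltas of ±0.50 (stored in hundredths), and rebalances with a spot hedge capped at `MAX_HEDGE_PER_TX` per transaction. It searches trade sequences in order of increasing length for the first one that leaves net delta outside the tolerance band. Real Greeks move with spot, volatility, and time, so constant deltas are a deliberate simplification.

```python
from itertools import product

# Hypothetical parameters; deltas are from the protocol's (short) side, in hundredths.
DELTA = {"buy_call": -50, "buy_put": +50}
MAX_HEDGE_PER_TX = 100  # most spot delta the hedger can trade in one transaction
TOLERANCE = 50          # largest net delta still treated as "neutral"

def apply_trade(net_delta, action, size):
    net_delta += DELTA[action] * size
    # the hedger offsets the new exposure, but only up to its per-transaction cap
    hedge = max(-MAX_HEDGE_PER_TX, min(MAX_HEDGE_PER_TX, -net_delta))
    return net_delta + hedge

def find_unhedged(depth=3, sizes=(1, 2, 3, 4)):
    """Return the shortest (trades, net_delta) that breaks the tolerance band,
    searching every sequence of at most `depth` user trades, or None."""
    moves = [(action, size) for action in DELTA for size in sizes]
    for length in range(1, depth + 1):
        for trades in product(moves, repeat=length):
            net = 0
            for i, (action, size) in enumerate(trades):
                net = apply_trade(net, action, size)
                if abs(net) > TOLERANCE:
                    return trades[: i + 1], net
    return None
```

With trade sizes up to 4, a single oversized `buy_call` breaks the band. Capping sizes at 3 is not enough either: `find_unhedged(depth=2, sizes=(1, 2, 3))` finds two consecutive size-3 calls whose residual exposure accumulates to -1.00.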

- Invariant Testing establishes the core rules that the protocol must never violate under any circumstances.

- Property-Based Testing generates thousands of random inputs to stress-test the protocol’s responses to abnormal market volatility.

- Differential Testing compares the output of the protocol against a trusted reference model to identify discrepancies in pricing.
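Property-based and differential testing combine naturally. The sketch below assumes a hypothetical on-chain call payoff computed in 18-decimal fixed point (`WAD`, a common on-chain convention) and compares it, over seeded random inputs, against an exact rational reference model built with Python's `fractions` module. The property is that the protocol rounds down by less than one unit and never pays more than the reference. A deliberately buggy variant that divides before multiplying shows the kind of pricing discrepancy the test is meant to catch. Libraries such as Hypothesis add input shrinking and smarter generation; the standard-library `random` module is used here only to keep the example self-contained.

```python
import random
from fractions import Fraction

WAD = 10**18  # 18-decimal fixed point

def mul_wad(a: int, b: int) -> int:
    # multiply first, then divide: the result is rounded down by less than one unit
    return a * b // WAD

def mul_wad_divide_first(a: int, b: int) -> int:
    # buggy variant: dividing first discards the fractional part of `a`
    return a // WAD * b

def call_payoff(spot: int, strike: int, size: int, mul=mul_wad) -> int:
    # hypothetical protocol payoff for a long call, all values in WAD units
    return mul(size, max(spot - strike, 0))

def reference_payoff(spot: int, strike: int, size: int) -> Fraction:
    # exact rational model used as the trusted reference
    return Fraction(size * max(spot - strike, 0), WAD)

def differential_test(trials=10_000, seed=0, mul=mul_wad):
    """Return the first input where the protocol disagrees with the reference
    by more than the allowed rounding, or None if all trials pass."""
    rng = random.Random(seed)
    for _ in range(trials):
        spot = rng.randrange(10_000 * WAD)
        strike = rng.randrange(10_000 * WAD)
        size = rng.randrange(1_000 * WAD)
        got = call_payoff(spot, strike, size, mul)
        error = reference_payoff(spot, strike, size) - got
        if not (0 <= error < 1):  # may round down by under one unit, never up
            return spot, strike, size
    return None
```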

My own experience suggests that the most effective teams treat their verification suite as a competitive advantage. It allows for faster iteration cycles, as the team knows that their core financial invariants are protected by a mathematical firewall, even when introducing complex new features.

Evolution

The trajectory of these tools is moving toward Real-Time Monitoring and on-chain verification. Early versions were limited to pre-deployment audits, but the future lies in systems that can pause a contract or adjust risk parameters as soon as the verification engine detects a deviation from safe operating bounds.
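A minimal sketch of that idea, assuming a hypothetical off-chain watcher that receives total collateral and total obligations for each new block: it re-checks the solvency invariant on every snapshot and trips a circuit breaker on the first violation. In a real deployment the trip would submit a transaction to a pause function, which is an assumed capability of the monitored contract and is not modeled here. The breaker deliberately stays tripped after the numbers recover, on the assumption that resuming should require an explicit decision.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class CircuitBreaker:
    """Hypothetical watcher that pauses on the first solvency violation."""
    paused: bool = False
    tripped_at: Optional[int] = None

    def observe(self, block: int, collateral: int, obligations: int) -> bool:
        # check the invariant on every new snapshot until the breaker trips
        if not self.paused and collateral < obligations:
            self.paused = True  # a real system would send a pause transaction here
            self.tripped_at = block
        return self.paused

def replay(snapshots):
    breaker = CircuitBreaker()
    for block, collateral, obligations in snapshots:
        breaker.observe(block, collateral, obligations)
    return breaker

if __name__ == "__main__":
    # (block number, total collateral, total obligations); illustrative values only
    history = [(100, 1_500, 1_200), (101, 1_300, 1_250),
               (102, 1_100, 1_240), (103, 1_600, 1_200)]
    result = replay(history)
    print(result.paused, result.tripped_at)
```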

This is a shift from static security to dynamic, autonomous risk management. Sometimes I think about the sheer audacity of replacing legal contracts with mathematical proofs, and it becomes clear that we are essentially building a new form of digital law.

As protocols become more modular, the need for Cross-Contract Verification has grown. A single derivative platform might interact with five different oracles, three lending pools, and two governance modules. Verification tools are now evolving to model the entire interconnected system, ensuring that a vulnerability in a peripheral dependency does not cascade into the core derivative engine.
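One way to reason about a dependency is to enumerate its failure modes and check the core invariant against each. The sketch below assumes a hypothetical engine that prices positions from the median of three oracle feeds, that honest feeds report between 95 and 105, and that a compromised feed can report a few extreme values; it checks the property that the aggregated price always lies between the lowest and highest honest readings. Enumerating a handful of attack values is far weaker than a symbolic proof, but it shows the cross-contract question being asked.

```python
from itertools import product
from statistics import median

# Hypothetical assumptions about the feeds.
HONEST_READINGS = range(95, 106)   # values an honest feed may report
ATTACK_READINGS = (0, 1, 10_000)   # extreme values a compromised feed may report

def find_bad_aggregate(num_oracles=3, max_compromised=1):
    """Search every assignment with at most `max_compromised` compromised feeds
    (which must be fewer than `num_oracles`) for a median outside the honest range."""
    choices = ([("honest", v) for v in HONEST_READINGS]
               + [("attacker", v) for v in ATTACK_READINGS])
    for readings in product(choices, repeat=num_oracles):
        if sum(kind == "attacker" for kind, _ in readings) > max_compromised:
            continue
        honest = [v for kind, v in readings if kind == "honest"]
        price = median(v for _, v in readings)
        if not min(honest) <= price <= max(honest):
            return readings, price
    return None
```

With one compromised feed out of three the search finds nothing; allow two and it returns a case where both attackers report 0 and the median follows them.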

| Era | Primary Focus | Technology |
| --- | --- | --- |
| Foundational | Syntax Errors | Linters |
| Growth | Logic Vulnerabilities | Symbolic Execution |
| Advanced | Systemic Risk | Formal Verification |

Horizon

The next stage involves the integration of Artificial Intelligence to assist in the generation of properties for verification. Currently, developers must manually define the invariants they wish to protect. Future systems will automatically infer these invariants from the code’s intent, creating a self-verifying architecture that adapts to the evolving complexity of decentralized finance.

This will reduce the human error inherent in defining the security scope of a protocol.
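AI-assisted property generation is still ahead, but a much older, non-AI technique, dynamic invariant detection in the style of the Daikon tool, already shows what inferring invariants means mechanically. The sketch below checks a hypothetical list of candidate properties against recorded protocol states and keeps those that held in every state; the candidates and the trace are illustrative. The output also shows the catch: `fee_bps <= 100` survives only because no recorded state had a higher fee, so inferred properties remain likely invariants that a human or a prover still has to confirm.

```python
# Hypothetical candidate properties over a snapshot of protocol state.
CANDIDATES = {
    "collateral >= obligations": lambda s: s["collateral"] >= s["obligations"],
    "open_interest >= 0": lambda s: s["open_interest"] >= 0,
    "fee_bps <= 100": lambda s: s["fee_bps"] <= 100,
    "obligations == 0": lambda s: s["obligations"] == 0,
}

def infer_invariants(trace):
    """Keep only the candidates that held in every observed state."""
    return sorted(name for name, holds in CANDIDATES.items()
                  if all(holds(state) for state in trace))

# Illustrative recorded states, for example from a test run.
TRACE = [
    {"collateral": 1_000, "obligations": 0, "open_interest": 0, "fee_bps": 30},
    {"collateral": 1_000, "obligations": 800, "open_interest": 8, "fee_bps": 30},
    {"collateral": 1_500, "obligations": 1_200, "open_interest": 12, "fee_bps": 50},
]

if __name__ == "__main__":
    print(infer_invariants(TRACE))
```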

Autonomous property generation will eventually allow protocols to define and enforce their own security boundaries without manual intervention.

We are approaching a period where the barrier between financial engineering and software engineering will vanish. Automated verification will be the standard for any protocol managing significant capital, and those that fail to implement these systems will be excluded from the institutional-grade liquidity pools of the future. The survival of decentralized derivatives depends on this shift toward rigorous, machine-verified financial integrity.