Essence

Automated Liquidity Adjustment represents the dynamic recalibration of capital deployment within decentralized derivative protocols to maintain market efficiency and solvency. These systems operate as autonomous agents that monitor order book depth, volatility regimes, and collateralization ratios to rebalance liquidity pools without manual intervention.

Automated liquidity adjustment functions as a programmatic risk management mechanism that dynamically shifts capital to maintain market stability.

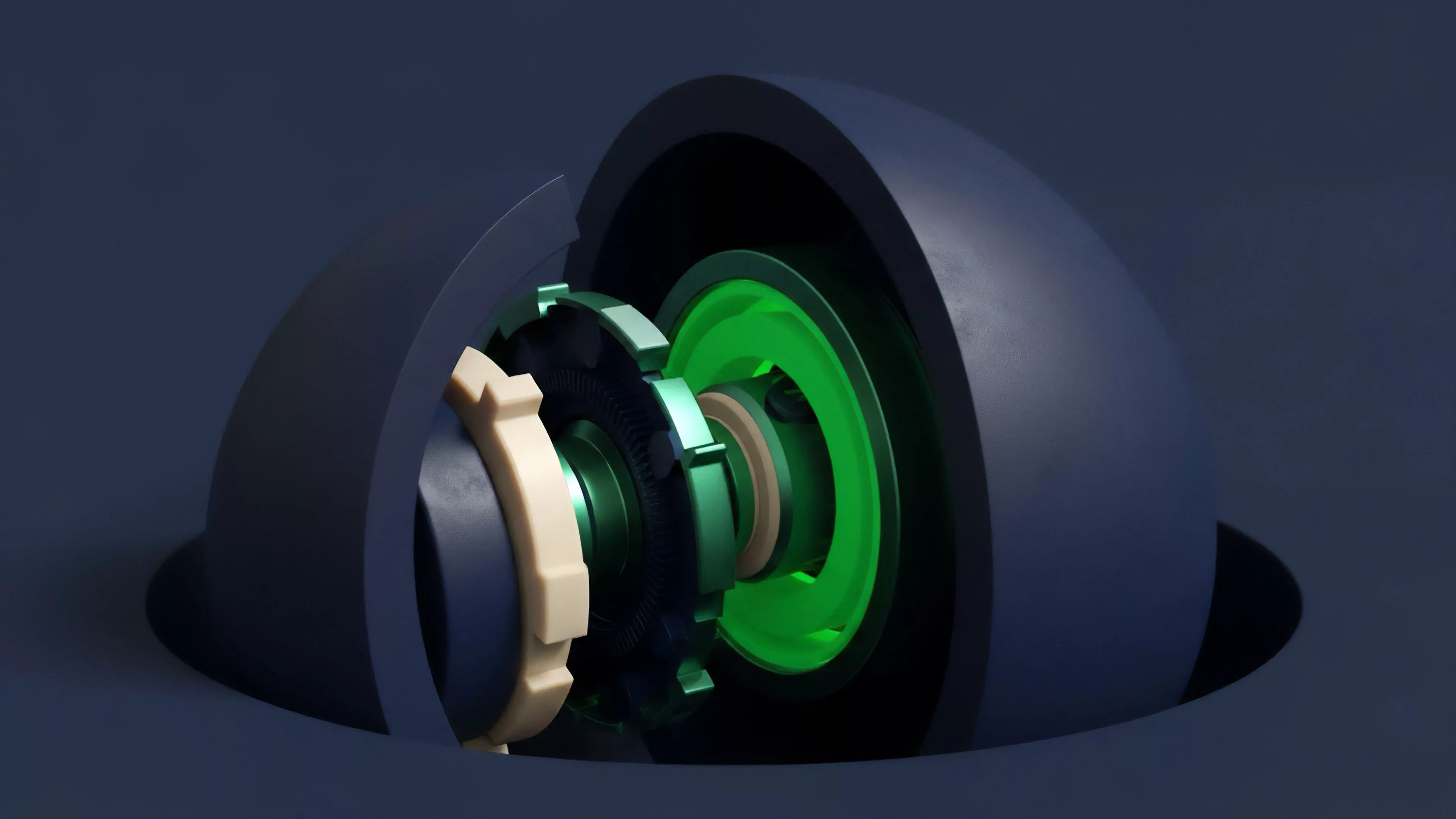

This architecture replaces static, capital-inefficient liquidity provisioning with elastic models. By continuously modulating the width and depth of quote ranges or collateral requirements, these protocols optimize for both capital utilization and protection against adverse selection.

- Liquidity Elasticity: The capacity of a protocol to expand or contract its active order book based on real-time volatility signals.

- Dynamic Range Management: The autonomous shifting of price bands where liquidity is concentrated to align with shifting market fair values.

- Solvency Preservation: The automatic adjustment of margin requirements or collateral weights to insulate the protocol from systemic liquidation cascades.
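Dynamic range management can be made concrete with a minimal sketch. It assumes a lognormal approximation for price moves. Every name here (`Range`, `target_range`, `needs_recentering`, the multiplier `k`, the `buffer` fraction) is hypothetical and not taken from any specific protocol.

```python
import math
from dataclasses import dataclass


@dataclass
class Range:
    lower: float
    upper: float


def target_range(fair_value, vol, horizon_years, k=2.0):
    """Price band of +/- k standard deviations around fair value.

    Assumes lognormal price moves; `vol` is annualized volatility.
    """
    sigma = vol * math.sqrt(horizon_years)
    return Range(fair_value * math.exp(-k * sigma),
                 fair_value * math.exp(k * sigma))


def needs_recentering(current, fair_value, buffer=0.1):
    """True when fair value sits within `buffer` of a band edge, or outside it."""
    width = current.upper - current.lower
    return (fair_value < current.lower + buffer * width
            or fair_value > current.upper - buffer * width)
```

Higher volatility or a longer horizon widens the band, which is the elasticity described above. The buffer triggers a shift before the price actually leaves the active range.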

Origin

The genesis of Automated Liquidity Adjustment lies in the limitations of constant product market makers when applied to derivatives. Early decentralized exchanges faced extreme impermanent loss and capital inefficiency, particularly during high volatility events. Developers recognized that fixed-range liquidity models failed to account for the non-linear risk profiles inherent in options and perpetual futures.

Initial liquidity models failed to adapt to the non-linear risk profiles of crypto derivatives, necessitating the shift toward autonomous adjustment mechanisms.

The evolution followed a transition from centralized, human-managed order books toward automated market maker (AMM) designs. Early experiments with concentrated liquidity proved that capital efficiency improves when providers can select specific price ranges. Automated Liquidity Adjustment evolved as the next logical step, moving this selection process from the user to the protocol itself, governed by data-driven feedback loops.

| Generation | Liquidity Model | Adjustment Mechanism |
| --- | --- | --- |
| First | Constant Product | None (Static) |
| Second | Concentrated | Manual Range Setting |
| Third | Automated | Algorithmic Rebalancing |

Theory

The mechanics of Automated Liquidity Adjustment rely on quantitative feedback loops that translate market data into protocol state changes. These systems typically employ volatility estimators, such as GARCH or realized variance models, to determine the optimal breadth of liquidity provision. When realized volatility exceeds a predetermined threshold, the protocol widens its quoted spread, thereby compensating for the increased risk of adverse selection.
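A minimal sketch of this feedback loop follows. It assumes a plain realized-volatility estimator over log returns (not GARCH) and a linear widening rule above the threshold. The function names and the default values (`base_bps`, `vol_threshold`, `slope_bps`) are illustrative assumptions, not parameters from any real protocol.

```python
import math


def realized_volatility(prices, periods_per_year=365):
    """Annualized realized volatility from a price series, using log returns."""
    if len(prices) < 3:
        raise ValueError("need at least three prices")
    returns = [math.log(b / a) for a, b in zip(prices, prices[1:])]
    mean = sum(returns) / len(returns)
    # Sample variance of the log returns.
    var = sum((r - mean) ** 2 for r in returns) / (len(returns) - 1)
    return math.sqrt(var * periods_per_year)


def quote_half_spread_bps(vol, base_bps=5.0, vol_threshold=0.6, slope_bps=50.0):
    """Base spread below the threshold; widen linearly with excess volatility.

    All defaults are hypothetical and chosen only for illustration.
    """
    excess = max(0.0, vol - vol_threshold)
    return base_bps + slope_bps * excess
```

The length of the `prices` window controls how quickly the estimate reacts. A short window responds fast but is noisy, while a long window is smoother but lags.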

The structural integrity of these systems depends on the tight coupling between Greeks, specifically Delta and Gamma, and the liquidity deployment strategy. By programmatically adjusting the liquidity position to neutralize or hedge directional exposure, the protocol reduces the probability of insolvency. This is a departure from traditional market making, where the human element often fails to react at machine speed.

Automated liquidity adjustment aligns protocol risk parameters with real-time market variance to optimize capital efficiency and systemic stability.

This is where the model becomes elegant, and dangerous if ignored. Because these adjustments are triggered by oracle feeds, the system inherits a dependence on data integrity. If the underlying oracle is manipulated, the automated system might misinterpret a momentary price spike as a shift in fundamental volatility, leading to incorrect rebalancing that drains protocol reserves.
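The failure mode can be illustrated with a toy price series in which a single manipulated print inflates a naive volatility estimate. The three-point median filter shown alongside it is a hypothetical guard added here for illustration only; the source does not prescribe a mitigation, and the prices are made up.

```python
import math
from statistics import median, pstdev


def log_returns(prices):
    return [math.log(b / a) for a, b in zip(prices, prices[1:])]


def median_filter(prices):
    """Replace each interior price with the median of itself and its neighbours."""
    out = list(prices)
    for i in range(1, len(prices) - 1):
        out[i] = median(prices[i - 1:i + 2])
    return out


# Made-up feed with one flash print at index 3.
prices = [100.0, 100.2, 100.1, 130.0, 100.3, 100.2, 100.4]

raw_vol = pstdev(log_returns(prices))                      # dominated by the spike
filtered_vol = pstdev(log_returns(median_filter(prices)))  # spike removed
```

In this example the raw estimate is far larger than the filtered one, so a threshold rule fed the raw series would rebalance on a move that never reflected real volatility.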

- Volatility Surface Mapping: The continuous tracking of implied volatility across various strikes to adjust liquidity depth.

- Gamma Hedging Automation: The programmatic rebalancing of liquidity to offset the convexity risk inherent in short option positions.

- Adverse Selection Mitigation: The use of speed-bumps or latency-sensitive liquidity withdrawal to prevent toxic flow from exploiting the protocol.
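Gamma hedging automation can be sketched for a single European call under Black-Scholes assumptions. A real protocol would aggregate Greeks across its whole book and may use a different pricing model. The function names and the sign convention (negative `option_qty` means short) are assumptions for illustration.

```python
import math


def _norm_cdf(x):
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def _norm_pdf(x):
    return math.exp(-0.5 * x * x) / math.sqrt(2.0 * math.pi)


def call_delta_gamma(spot, strike, vol, t, rate=0.0):
    """Black-Scholes delta and gamma of a European call; t is in years."""
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol * vol) * t) / (vol * math.sqrt(t))
    delta = _norm_cdf(d1)
    gamma = _norm_pdf(d1) / (spot * vol * math.sqrt(t))
    return delta, gamma


def hedge_trade(option_qty, spot, strike, vol, t, current_hedge):
    """Units of underlying to buy (+) or sell (-) so that net delta becomes zero.

    option_qty < 0 means the protocol is short the call.
    """
    delta, _ = call_delta_gamma(spot, strike, vol, t)
    target_hedge = -option_qty * delta
    return target_hedge - current_hedge
```

Because gamma is positive, a short call position needs more underlying as the spot rises and less as it falls. This is the convexity risk that forces repeated rebalancing.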

Approach

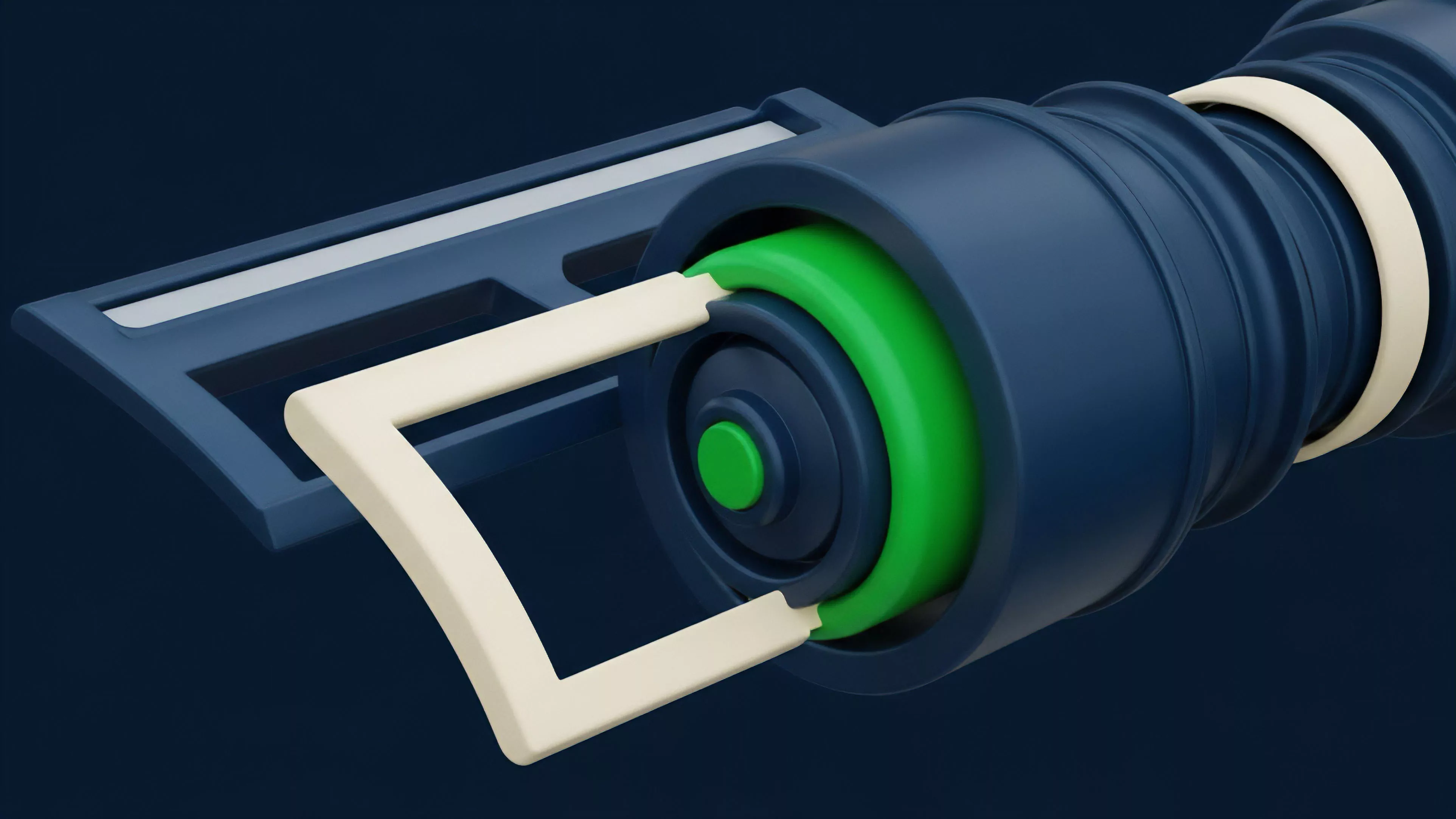

Current implementations of Automated Liquidity Adjustment utilize a combination of on-chain data and off-chain computation to drive protocol state. Protocols frequently deploy “keepers” or decentralized actor networks that execute the rebalancing transactions when the protocol’s internal state deviates from its target risk profile. This separation of concerns allows for complex computation while maintaining the security guarantees of the underlying smart contract.

Modern protocols utilize decentralized keeper networks to execute rebalancing transactions, bridging the gap between off-chain computation and on-chain security.

Strategic participants now focus on optimizing the parameters that govern these adjustments. This involves selecting the right sensitivity to volatility, the frequency of rebalancing, and the cost of the rebalancing transactions relative to the capital efficiency gained. The competition is moving toward minimizing the “slippage” of the adjustment itself, ensuring that the act of rebalancing does not move the market against the protocol.

| Parameter | Primary Function | Risk Impact |
| --- | --- | --- |
| Volatility Window | Defines Lookback Period | Lag in Response |
| Rebalance Threshold | Trigger Sensitivity | Transaction Cost vs Efficiency |
| Spread Width | Compensation for Risk | Order Fill Rate |
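The trade-off between trigger sensitivity and transaction cost can be sketched as the check a keeper might run before submitting a rebalance. `RebalanceParams` and `should_rebalance` are hypothetical names, and the caller is assumed to supply the expected gain and cost estimates in the same units.

```python
from dataclasses import dataclass


@dataclass
class RebalanceParams:
    rebalance_threshold: float  # relative deviation from target that triggers a check
    tx_cost: float              # estimated cost of one rebalancing transaction


def should_rebalance(current, target, expected_gain, params):
    """True when deviation exceeds the threshold and the gain covers the cost."""
    if target == 0:
        raise ValueError("target must be non-zero")
    deviation = abs(current - target) / abs(target)
    return deviation > params.rebalance_threshold and expected_gain > params.tx_cost


# Illustrative values only.
params = RebalanceParams(rebalance_threshold=0.05, tx_cost=12.0)
decision = should_rebalance(current=108.0, target=100.0, expected_gain=30.0, params=params)
```

A lower threshold keeps the position closer to target but fires more often, so the cost check prevents rebalances whose expected benefit would not cover the fee.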

Evolution

The trajectory of these systems shows a clear progression from simple reactive models to proactive, predictive architectures. Initially, protocols merely adjusted to past volatility. Now, they incorporate predictive models that anticipate liquidity needs based on macro-crypto correlations and historical trading patterns.

This is akin to the shift in high-frequency trading from reactive market making to anticipatory order flow management. We have moved past the era of static liquidity pools. The current horizon involves integrating cross-chain liquidity and shared risk frameworks.

These systems are becoming increasingly aware of the global state of the crypto market, not just the local pool depth. This interconnectedness is both a strength and a potential failure vector, as systemic contagion could propagate across these automated bridges.

Horizon

Future development will center on the integration of machine learning agents to replace static rule-based rebalancing. These agents will be capable of learning from diverse market conditions, effectively optimizing liquidity deployment in real-time without predefined thresholds.

The challenge lies in the verification of these models on-chain, as the complexity of neural networks often exceeds current gas limits and transparency requirements.

Future iterations will likely replace static threshold-based rebalancing with autonomous machine learning agents capable of predictive liquidity deployment.

The ultimate goal is the creation of self-healing derivative protocols that maintain market depth through extreme stress scenarios. This requires a deeper understanding of how these automated agents interact with one another. If multiple protocols use similar logic, the risk of synchronized rebalancing events becomes a significant concern, potentially exacerbating volatility rather than dampening it.