Staking Reward Equilibrium

Meaning ⎊ The optimal balance point where staking rewards attract sufficient capital without causing excessive token inflation.

Liquidity Provider Reward Models

Meaning ⎊ Frameworks for compensating capital providers, balancing incentive costs with the need for stable and deep market liquidity.

Staking Reward Recognition

Meaning ⎊ The identification and recording of income generated from locking assets to secure a blockchain network.

Protocol Reward Distribution

Meaning ⎊ Protocol Reward Distribution functions as the core incentive engine that aligns participant capital with the long-term security of decentralized systems.

Reward Receipt Timing

Meaning ⎊ Identifying the exact moment when staking rewards are legally recognized as taxable income based on asset control.

Tokenomic Reward Structures

Meaning ⎊ Mechanisms that distribute digital assets to participants to align individual behavior with protocol health and security.

Blockchain Reward Systems

Meaning ⎊ Blockchain reward systems function as programmable incentive layers that align participant behavior with network security and economic sustainability.

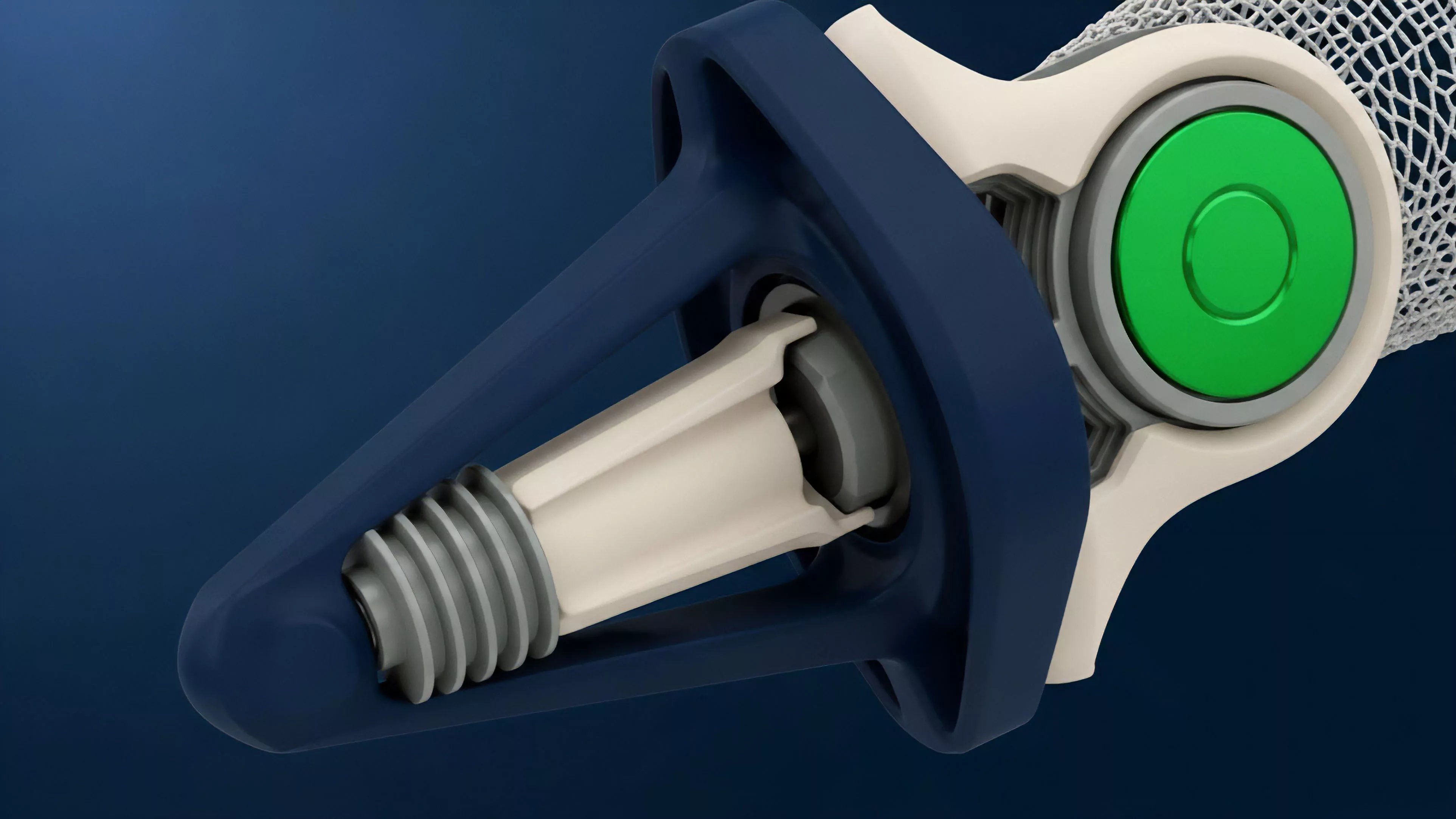

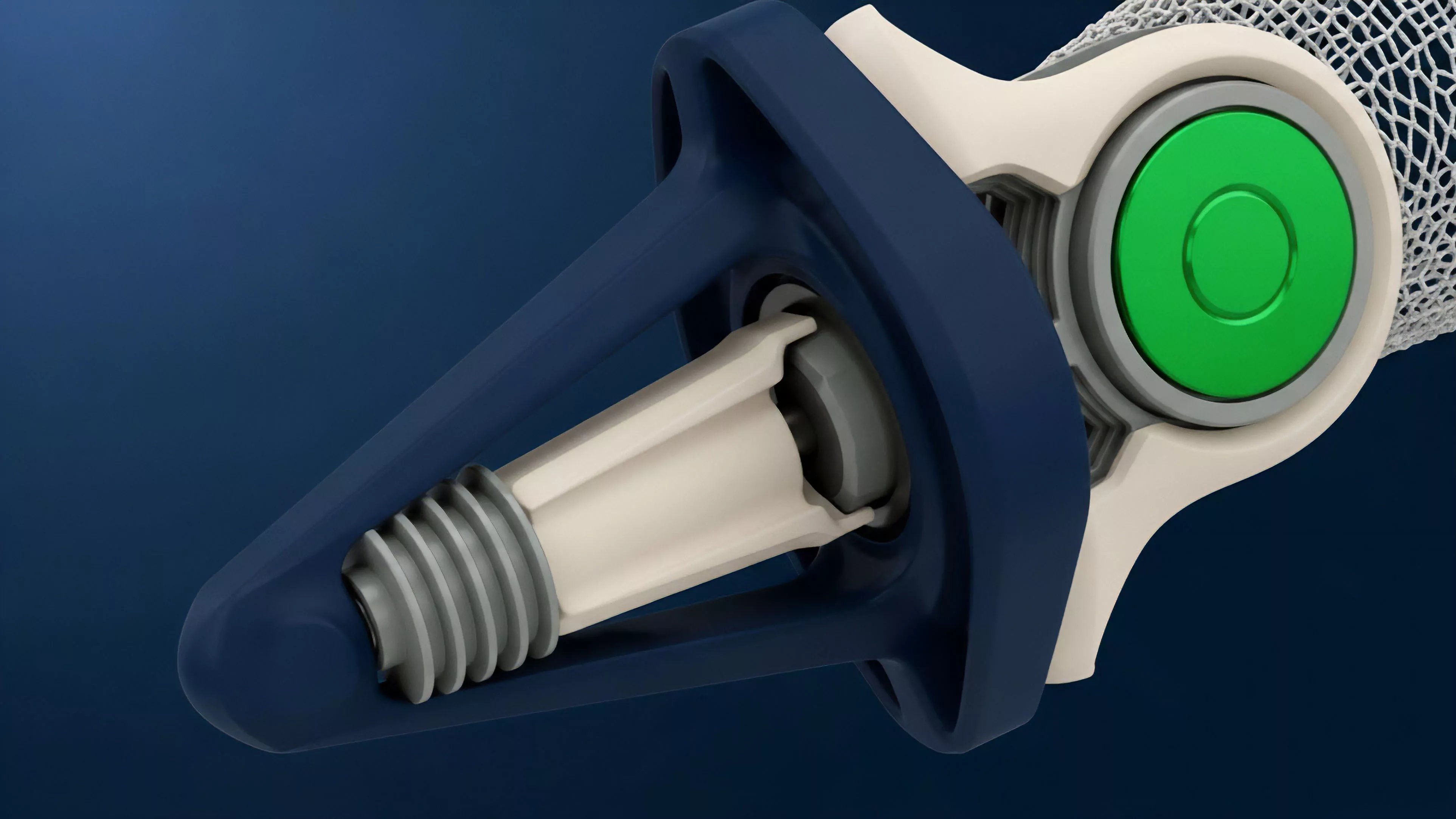

Validator Reward Mechanisms

Meaning ⎊ Validator reward mechanisms provide the economic security framework that incentivizes network participants to maintain ledger integrity and consensus.

Vested Reward Structures

Meaning ⎊ Delayed distribution of assets over time to align stakeholder incentives and prevent sudden market sell-offs.
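
One common way to implement delayed distribution is linear vesting with a cliff. The sketch below assumes that design; the function name `vested_amount` and its parameters are hypothetical and not drawn from any specific protocol.

```python
def vested_amount(total: float, start: int, cliff: int, duration: int, now: int) -> float:
    """Tokens unlocked at time `now` under linear vesting with a cliff.

    All times share one unit (e.g. months). `cliff` and `duration` are
    measured from `start`. This is an illustrative model only.
    """
    if duration <= 0:
        raise ValueError("duration must be positive")
    elapsed = now - start
    if elapsed < cliff:
        return 0.0           # nothing unlocks before the cliff
    if elapsed >= duration:
        return float(total)  # fully vested
    return total * elapsed / duration  # linear release in between


# 1,200 tokens over 12 months with a 3-month cliff
print(vested_amount(1200, start=0, cliff=3, duration=12, now=6))
```

Releasing tokens gradually limits how much a recipient can sell at any one time, which is the mechanism by which vesting dampens sudden sell-offs.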

Inflationary Reward Mechanisms

Meaning ⎊ Algorithmic minting of new tokens to reward participants, which expands supply and can dilute existing holder value.

Block Reward Scheduling

Meaning ⎊ The deterministic timeline defining how and when network participants are compensated with new tokens for securing the chain.
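
A halving schedule is one well-known example of a deterministic reward timeline. The sketch below assumes a Bitcoin-style design in which the reward halves every fixed number of blocks; the defaults mirror Bitcoin's parameters (50 BTC expressed in satoshis, 210,000-block interval), and the function name `block_reward` is hypothetical.

```python
def block_reward(height: int,
                 initial_reward: int = 5_000_000_000,
                 halving_interval: int = 210_000) -> int:
    """Reward (in the smallest unit) for the block at `height`.

    Assumes a halving schedule: the reward is cut in half every
    `halving_interval` blocks using integer division.
    """
    if height < 0:
        raise ValueError("height must be non-negative")
    halvings = height // halving_interval
    if halvings >= 64:
        return 0  # reward has been shifted away entirely
    return initial_reward >> halvings


for h in (0, 210_000, 420_000):
    print(h, block_reward(h))
```

Because the reward depends only on block height, any participant can compute the full issuance timeline in advance.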

Staking Reward Emission Rates

Meaning ⎊ The algorithmic schedule of token rewards for network stakers, balancing security incentives with inflationary pressures.

Reward Dilution

Meaning ⎊ Reduction in individual validator rewards caused by an increase in the total amount of assets staked in a network.
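
The effect can be illustrated with a simple model, assuming a fixed emission per period that is split pro rata by stake. Real networks often adjust emission as total stake changes, so this is a sketch rather than any protocol's actual formula; `staker_reward` is a hypothetical name.

```python
def staker_reward(emission: float, own_stake: float, total_stake: float) -> float:
    """Pro-rata share of a fixed per-period emission (illustrative model)."""
    if total_stake <= 0 or own_stake < 0 or own_stake > total_stake:
        raise ValueError("stakes must satisfy 0 <= own_stake <= total_stake, total_stake > 0")
    return emission * own_stake / total_stake


# Same validator stake, but total stake doubles: the reward halves.
print(staker_reward(1000, own_stake=100, total_stake=1000))
print(staker_reward(1000, own_stake=100, total_stake=2000))
```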

Staking Reward Volatility

Meaning ⎊ Staking reward volatility quantifies the stochastic yield variance in proof-of-stake networks, essential for pricing derivatives and hedging risk.

Staking Reward Structures

Meaning ⎊ Staking reward structures align participant incentives with network security while managing inflationary supply and capital efficiency.

Validator Reward Optimization

Meaning ⎊ Validator reward optimization systematically enhances staking yields through active management of block production and capital efficiency protocols.

Staking Reward Compounding

Meaning ⎊ The practice of reinvesting earned staking rewards to increase the principal and accelerate future yield growth.
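
The growth effect follows the standard compound-interest formula. The sketch below assumes a constant nominal annual rate and rewards that are restaked at regular intervals; it ignores fees, slashing, and rate changes, and the name `compounded_balance` is hypothetical.

```python
def compounded_balance(principal: float, apr: float,
                       periods_per_year: int, years: float) -> float:
    """Balance after restaking rewards every period at a constant nominal APR."""
    if periods_per_year <= 0:
        raise ValueError("periods_per_year must be positive")
    rate_per_period = apr / periods_per_year
    n_periods = round(periods_per_year * years)
    return principal * (1 + rate_per_period) ** n_periods


# 12% nominal APR: monthly restaking versus a single payout at year end
monthly = compounded_balance(1000, 0.12, 12, 1)
yearly = compounded_balance(1000, 0.12, 1, 1)
print(round(monthly, 2), round(yearly, 2))
```

More frequent restaking raises the effective yield, although in practice each restake may incur a transaction cost that this model leaves out.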

Staking Reward Systems

Meaning ⎊ Staking reward systems provide the foundational yield mechanism that aligns capital allocation with network security in decentralized protocols.

Risk-Adjusted Reward Modeling

Meaning ⎊ Evaluating trading performance by normalizing returns against the volatility and leverage risk incurred by the strategy.
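
A Sharpe-style ratio is one widely used way to normalize returns by volatility. The sketch below assumes per-period returns and a constant per-period risk-free rate; it covers volatility only, not leverage, and `sharpe_ratio` is a hypothetical helper name.

```python
from statistics import mean, stdev


def sharpe_ratio(returns: list[float], risk_free: float = 0.0) -> float:
    """Mean excess return divided by its sample standard deviation."""
    if len(returns) < 2:
        raise ValueError("need at least two return observations")
    excess = [r - risk_free for r in returns]
    volatility = stdev(excess)
    if volatility == 0:
        raise ValueError("returns have zero volatility; ratio is undefined")
    return mean(excess) / volatility


print(sharpe_ratio([0.01, 0.03, 0.02]))
```

The result is expressed per period; comparing strategies requires using the same period length for each.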

Staking Reward Rate

Meaning ⎊ The annualized return generated by locking crypto assets in a proof-of-stake network to support consensus operations.

Mining Reward Variance

Meaning ⎊ The statistical unpredictability of miner income caused by the random nature of successful block discovery.

Asymmetric Risk Reward

Meaning ⎊ An investment profile where potential upside gains significantly outweigh the potential downside risks.

Block Reward Distribution

Meaning ⎊ Block Reward Distribution governs the economic incentive layer of decentralized networks, balancing security costs against long-term asset scarcity.

Validator Reward Distribution

Meaning ⎊ Validator Reward Distribution programs the economic incentives necessary to maintain decentralized consensus integrity and network security.

Validator Reward Systems

Meaning ⎊ Validator reward systems are the programmatic economic foundations that secure decentralized networks by aligning capital incentives with consensus.

Trend Smoothing

Meaning ⎊ Mathematical filtering of price data to isolate underlying directional movement by reducing high-frequency market noise.
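
An exponential moving average (EMA) is one common filter of this kind. The sketch below assumes a fixed smoothing factor `alpha` and seeds the average with the first price; the function name `ema` is hypothetical.

```python
def ema(prices: list[float], alpha: float) -> list[float]:
    """Exponential moving average; smaller `alpha` smooths more heavily."""
    if not 0 < alpha <= 1:
        raise ValueError("alpha must be in (0, 1]")
    smoothed: list[float] = []
    for price in prices:
        if not smoothed:
            smoothed.append(float(price))  # seed with the first observation
        else:
            smoothed.append(alpha * price + (1 - alpha) * smoothed[-1])
    return smoothed


print(ema([10, 20, 30], alpha=0.5))
```

Each output value blends the newest price with the previous smoothed value, so short-lived spikes are damped while sustained moves still come through.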

Block Reward Mechanisms

Meaning ⎊ Block reward mechanisms provide the critical economic foundation for decentralized security by programmatically incentivizing network validation.

Risk-Reward Reassessment

Meaning ⎊ The systematic review of trade viability based on evolving market data to optimize potential gains against active risk exposure.