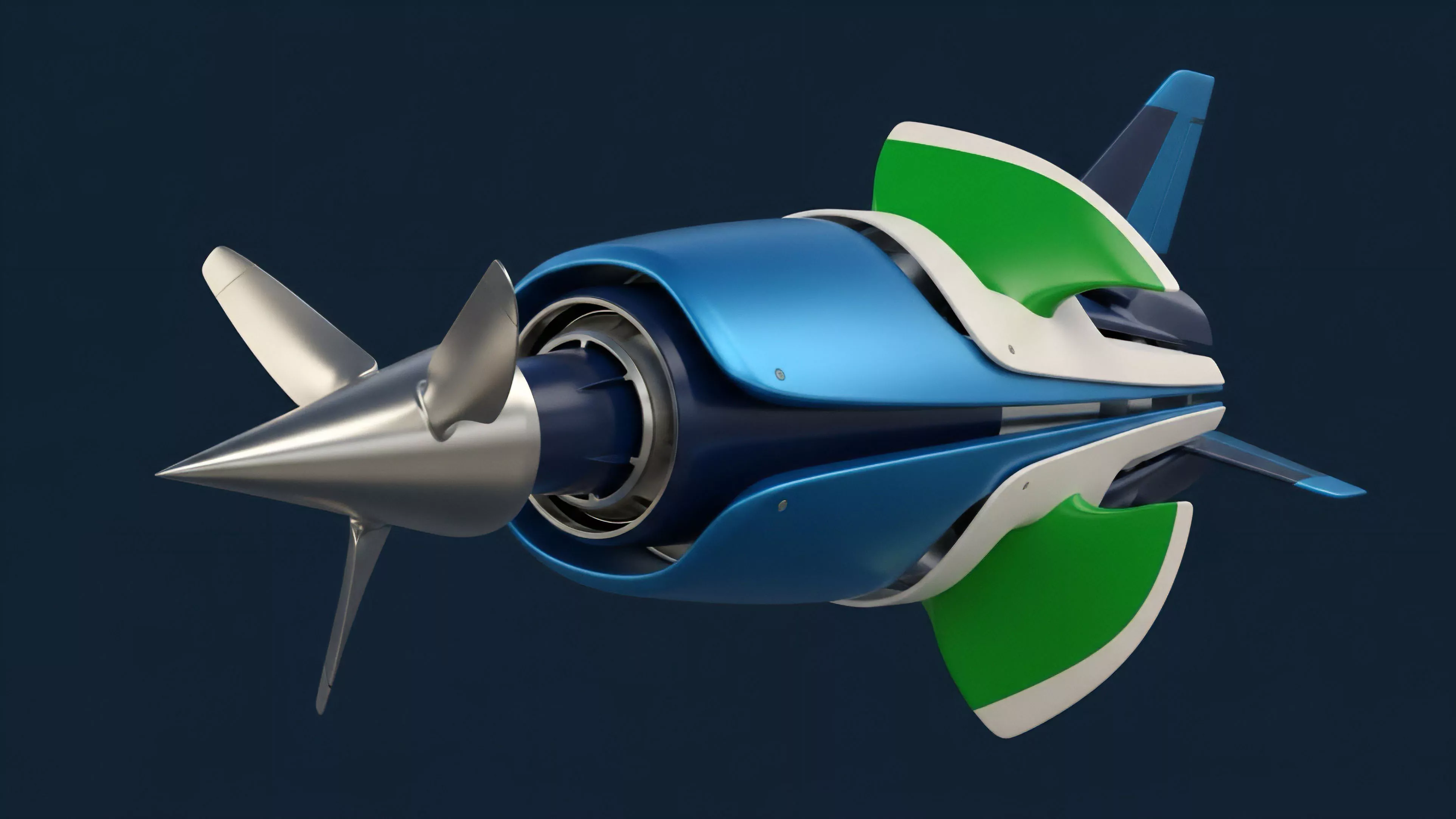

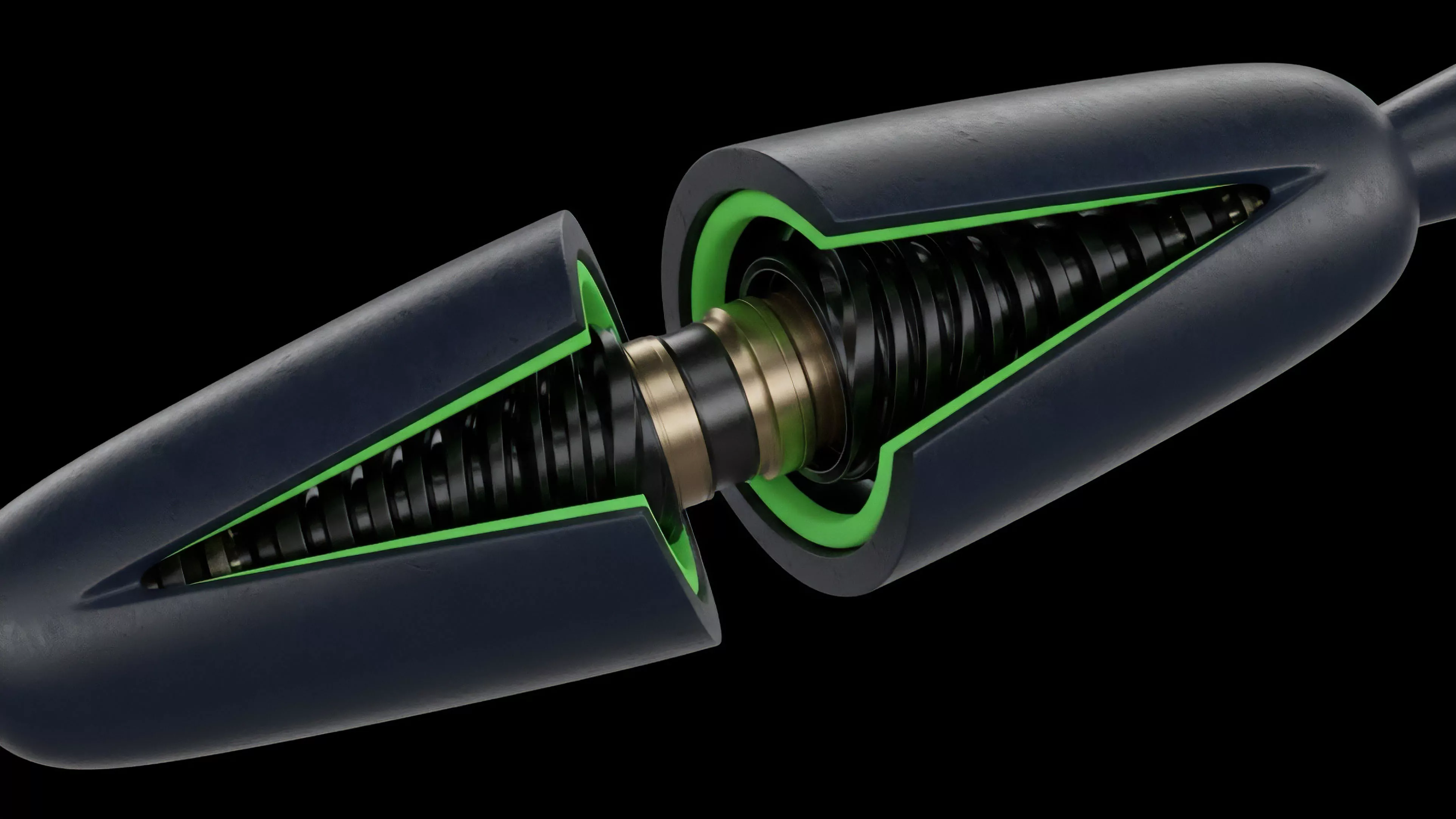

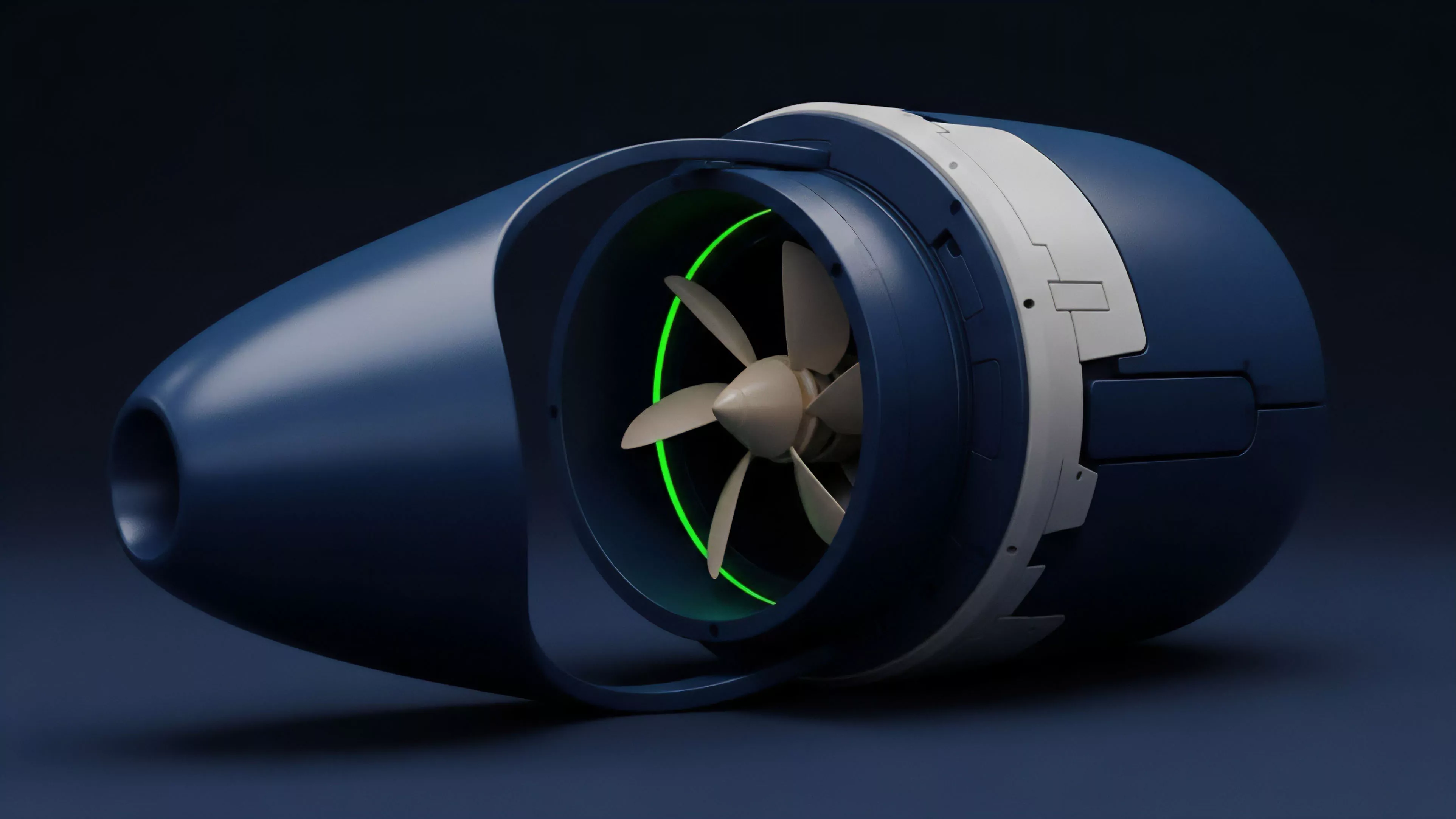

Blockchain Architecture

Meaning ⎊ Decentralized options architecture automates non-linear risk transfer on-chain, shifting from counterparty risk to smart contract risk and enabling capital-efficient risk management through liquidity pools.

Blockchain Technology

Meaning ⎊ Blockchain technology provides the foundational state machine for decentralized derivatives, enabling trustless settlement through code-enforced financial logic.

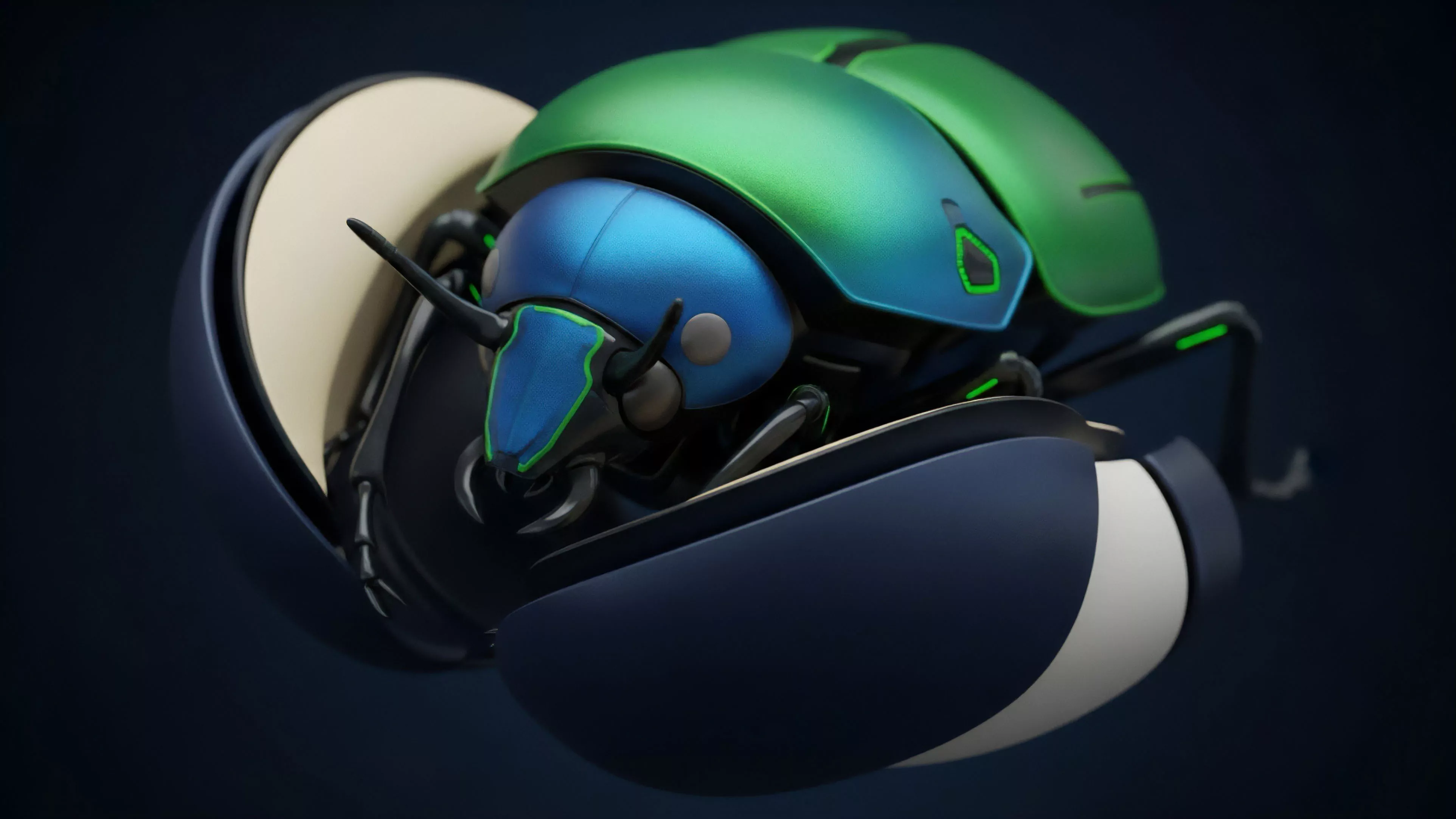

Blockchain Physics

Meaning ⎊ Blockchain Physics is a framework for analyzing how a decentralized protocol's design and incentive structures create emergent financial outcomes and systemic risk.

Blockchain Game Theory

Meaning ⎊ Blockchain game theory analyzes how decentralized options protocols design incentive structures to manage non-linear risk and ensure market stability through strategic participant interaction.

Blockchain Economics

Meaning ⎊ Decentralized Volatility Regimes define how blockchain architecture and smart contract execution alter risk pricing and systemic stability for crypto options.

Blockchain Constraints

Meaning ⎊ Blockchain constraints are the architectural limitations of distributed ledgers that dictate the cost, latency, and capital efficiency of decentralized options protocols.

Blockchain Data Feeds

Meaning ⎊ Blockchain data feeds are essential for decentralized options and derivatives, providing secure and accurate pricing data for collateral valuation and liquidation triggers.
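
As a rough illustration of how a feed price can drive collateral valuation and a liquidation trigger, the sketch below checks a position against a minimum collateral ratio. The `Position` type, the `is_liquidatable` helper, and the 1.5 threshold are hypothetical and do not come from any specific protocol.

```python
from dataclasses import dataclass


@dataclass
class Position:
    collateral_units: float  # amount of the collateral asset posted
    debt_value: float        # obligation, denominated in the quote currency


def is_liquidatable(position: Position, feed_price: float,
                    min_collateral_ratio: float = 1.5) -> bool:
    """Return True when collateral value falls below ratio * debt.

    The 1.5 default is an assumed threshold, used only for illustration.
    """
    collateral_value = position.collateral_units * feed_price
    return collateral_value < min_collateral_ratio * position.debt_value


position = Position(collateral_units=10.0, debt_value=10_000.0)
print(is_liquidatable(position, feed_price=2_000.0))  # collateral ratio 2.0
print(is_liquidatable(position, feed_price=1_400.0))  # collateral ratio 1.4
```

The same position flips from safe to liquidatable purely because the reported price moved, which is why the accuracy and integrity of the feed matter so much.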

Blockchain Finality Constraints

Meaning ⎊ The inherent delay in network confirmation required to ensure a transaction cannot be reversed or altered.

Blockchain Oracles

Meaning ⎊ Blockchain Oracles bridge off-chain data to smart contracts, enabling decentralized derivatives by providing critical pricing and settlement data.

Blockchain Consensus Mechanisms

Meaning ⎊ Consensus mechanisms establish the core security and finality properties of a decentralized network, directly influencing the design and risk profile of crypto derivative products.

Blockchain Trilemma

Meaning ⎊ The trade-off among decentralization, security, and scalability that constrains blockchain architectures.

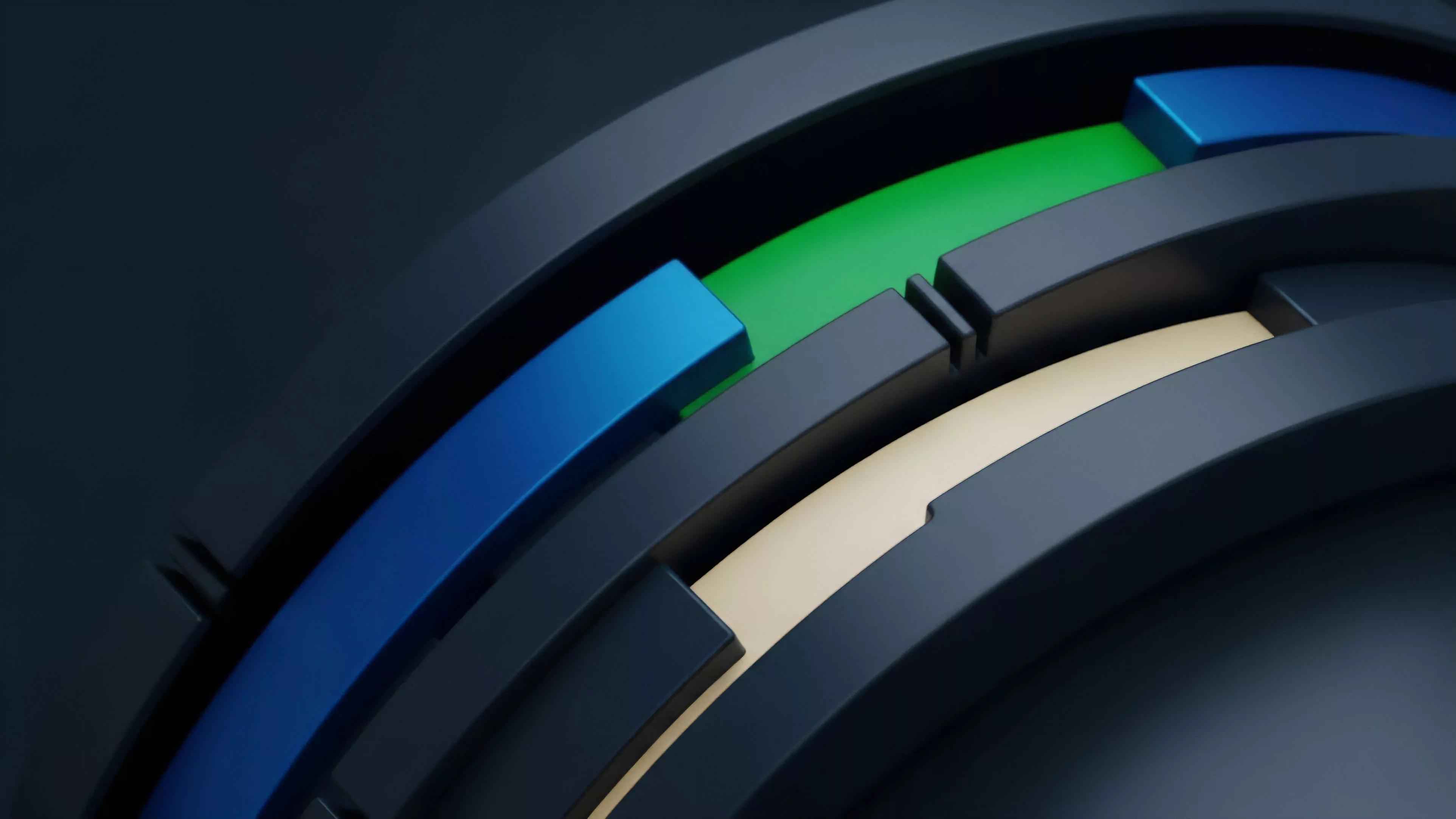

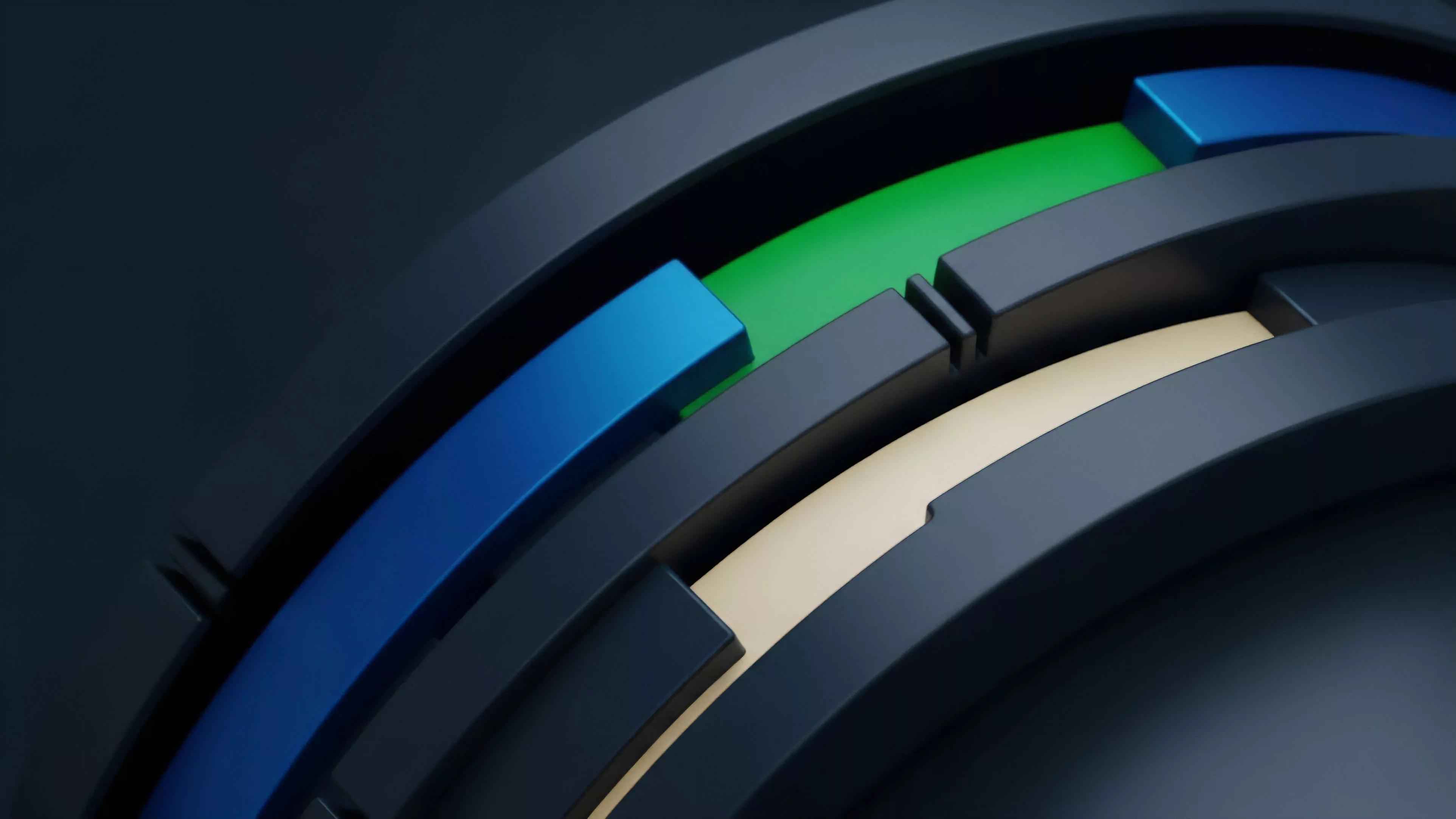

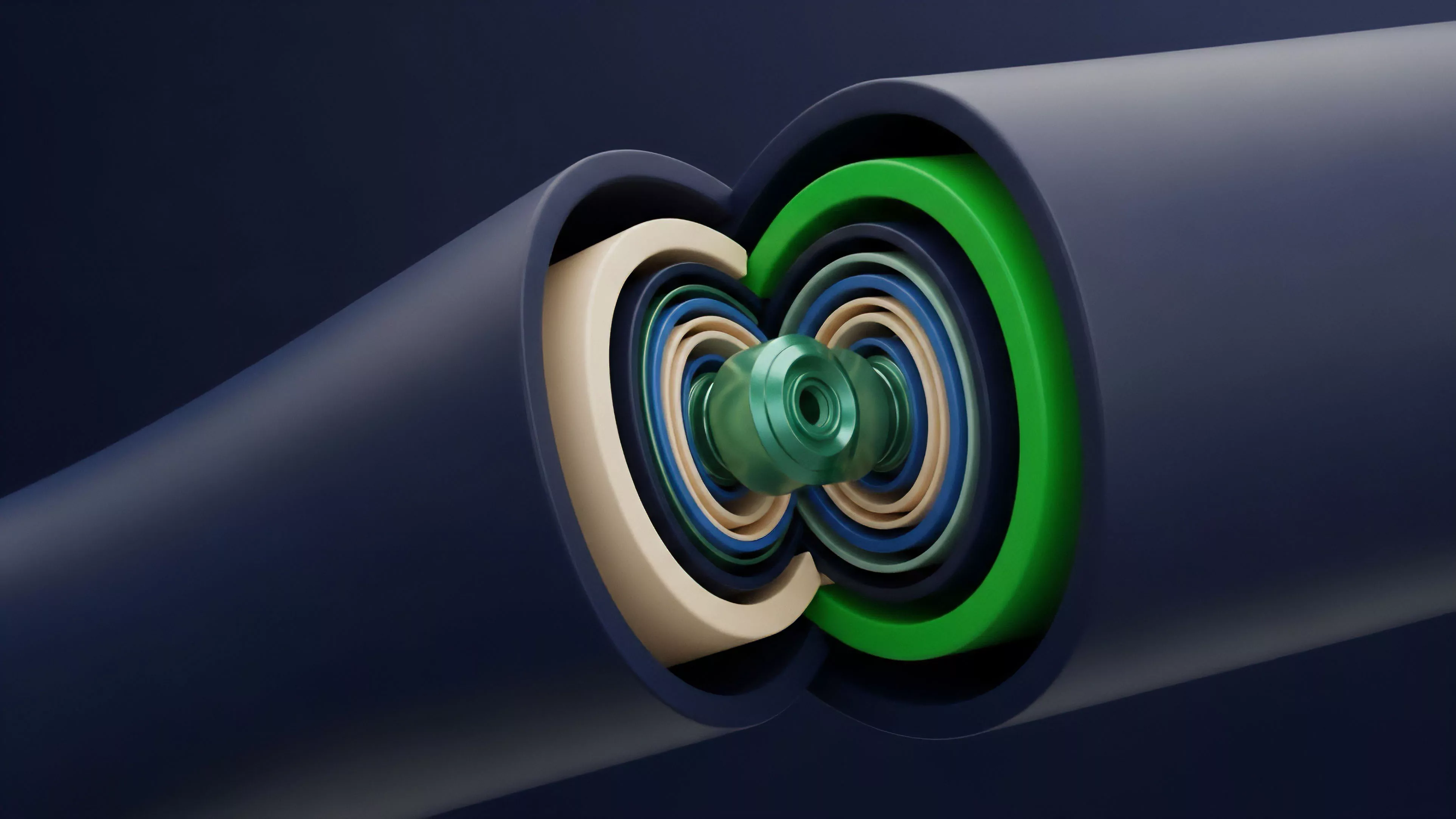

Modular Blockchain Architecture

Meaning ⎊ A design strategy that separates blockchain functions into specialized, distinct layers to improve scalability and performance.

Blockchain Transaction Costs

Meaning ⎊ Blockchain transaction costs define the economic viability and structural constraints of decentralized options markets, influencing pricing, hedging strategies, and liquidity distribution across layers.

Modular Blockchain Design

Meaning ⎊ Modular blockchain design separates core functions to create specialized execution environments, enabling high-throughput and capital-efficient crypto options protocols.

Data Feed Real-Time Data

Meaning ⎊ Real-time data feeds are the critical infrastructure for crypto options markets, providing the dynamic pricing and risk management inputs necessary for efficient settlement.

Blockchain Transparency

Meaning ⎊ Blockchain transparency shifts market dynamics by enabling real-time, public verification of collateral and positions, fundamentally altering risk management and market behavior.

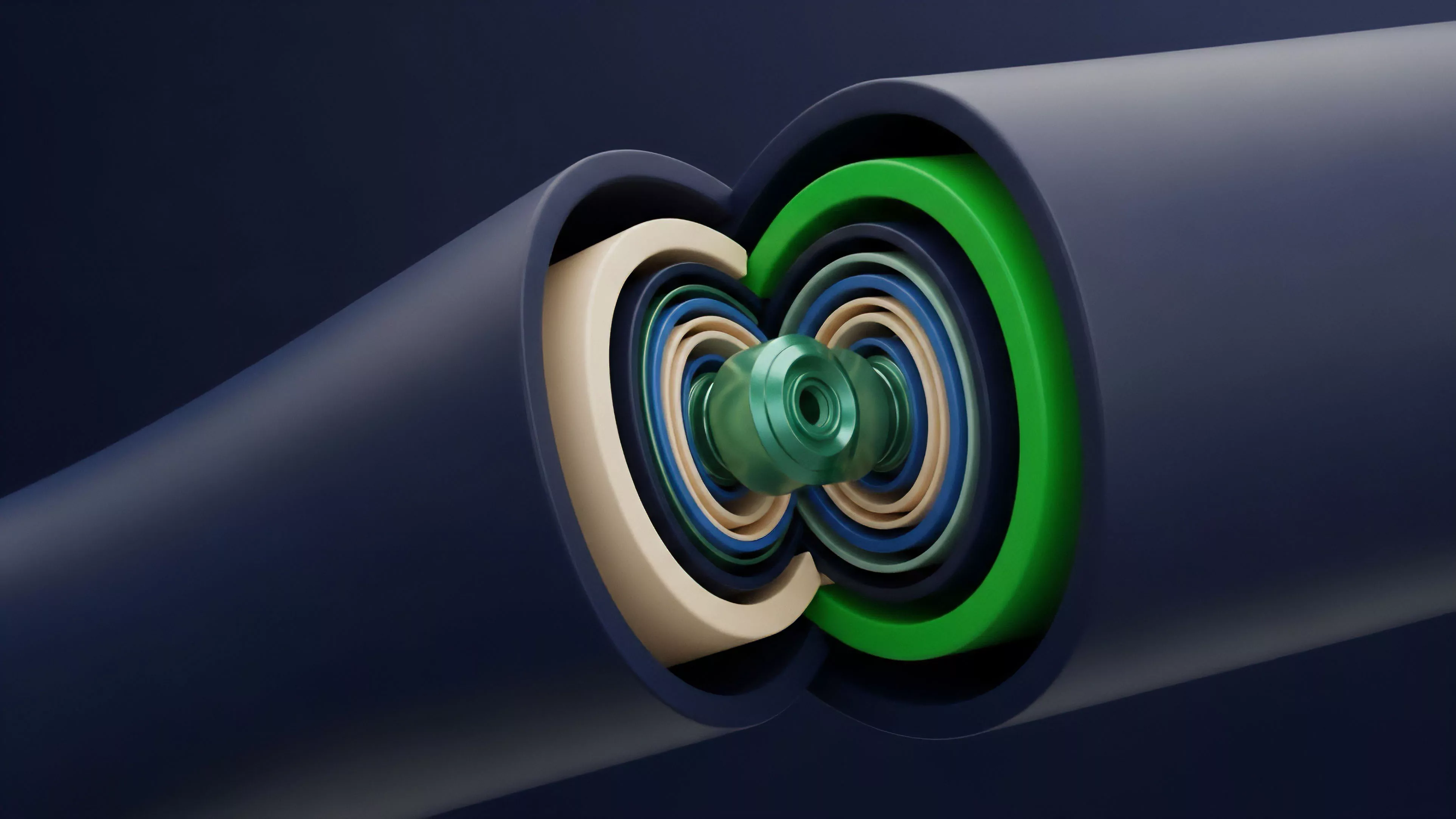

Blockchain State Machine

Meaning ⎊ Decentralized options protocols are smart contract state machines that enable non-custodial risk transfer through transparent collateralization and algorithmic pricing.

Blockchain Consensus Costs

Meaning ⎊ Blockchain Consensus Costs are the fundamental economic friction required to secure a decentralized network, directly impacting derivatives pricing and capital efficiency through finality latency and collateral risk.

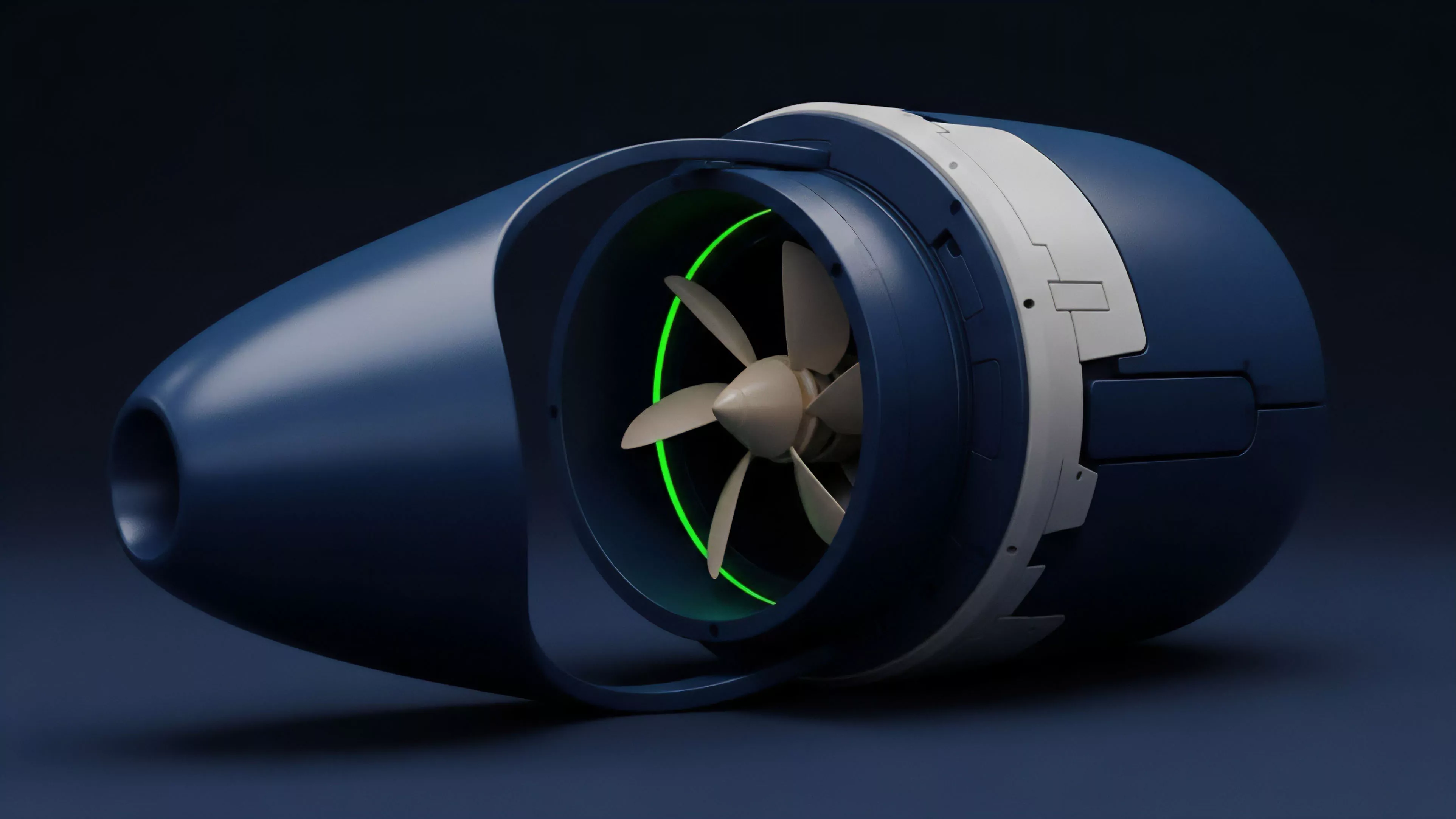

Blockchain Throughput

Meaning ⎊ The capacity of a distributed ledger to process and validate a specific volume of transactions per unit of time.
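
Throughput in this sense is often approximated as transactions per block divided by block time. The sketch below computes that ratio; the function name and the sample figures are assumptions chosen for illustration, not measurements of any real network.

```python
def transactions_per_second(txs_per_block: float,
                            block_time_seconds: float) -> float:
    """Approximate throughput as transactions per block over block time."""
    if block_time_seconds <= 0:
        raise ValueError("block_time_seconds must be positive")
    return txs_per_block / block_time_seconds


# Hypothetical chain: 150 transactions per block, one block every 12 seconds.
print(transactions_per_second(150, 12))  # 12.5
```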

Blockchain Scalability Solutions

Meaning ⎊ Blockchain scalability solutions address the fundamental constraint of network throughput, enabling high-volume financial applications through modular architectures and off-chain execution environments.

Blockchain Mempool Dynamics

Meaning ⎊ Blockchain Mempool Dynamics govern the prioritization and ordering of unconfirmed transactions, creating an adversarial environment that introduces significant execution risk for decentralized derivatives.
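
To make the ordering risk concrete, here is a deliberately simplified sketch in which pending transactions are ranked by priority fee, with arrival order as the tie-breaker. Real block builders apply more complex rules; `PendingTx` and `order_for_block` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PendingTx:
    sender: str
    priority_fee: int  # fee bid, in arbitrary units
    arrival: int       # arrival sequence number in the mempool


def order_for_block(pending: list[PendingTx], max_txs: int) -> list[PendingTx]:
    """Pick up to max_txs transactions: highest fee first, then earliest."""
    ranked = sorted(pending, key=lambda tx: (-tx.priority_fee, tx.arrival))
    return ranked[:max_txs]


pending = [
    PendingTx("hedger", priority_fee=2, arrival=1),
    PendingTx("competitor", priority_fee=5, arrival=2),
    PendingTx("late_bidder", priority_fee=2, arrival=3),
]
for tx in order_for_block(pending, max_txs=2):
    print(tx.sender)
# competitor
# hedger
```

The hedger arrived first but executes second, and the late bidder is left out of the block entirely. Under this assumed ordering rule, arrival time alone does not secure execution.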

Modular Blockchain

Meaning ⎊ Modular blockchain architecture decouples execution from data availability, enabling specialized rollups that optimize cost and risk for specific derivative applications.

Blockchain Gas Fees

Meaning ⎊ The Contingent Settlement Risk Premium is the embedded volatility of transaction costs that fundamentally distorts derivative pricing and threatens systemic liquidation stability.

Data Feed Order Book Data

Meaning ⎊ The Decentralized Options Liquidity Depth Stream is the real-time, aggregated data structure detailing open options limit orders, essential for calculating risk and execution costs.

High Gas Costs Blockchain Trading

Meaning ⎊ Priority fee execution architecture dictates the feasibility of on-chain derivative settlement by transforming network congestion into a direct tax.
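
The "direct tax" can be sketched numerically, assuming an EIP-1559-style fee market such as Ethereum's, where the price paid per unit of gas is the base fee plus a priority fee, capped by the sender's maximum fee. The function name, gas amount, and fee levels below are hypothetical.

```python
def settlement_fee_gwei(gas_used: int, base_fee: int,
                        max_priority_fee: int, max_fee: int) -> int:
    """Total fee in gwei under an assumed EIP-1559-style fee market.

    All per-gas prices are integers in gwei.
    """
    if max_fee < base_fee:
        raise ValueError("max_fee is below the base fee; "
                         "the transaction cannot be included")
    effective_price = min(max_fee, base_fee + max_priority_fee)
    return gas_used * effective_price


# Same hypothetical 200,000-gas settlement in calm and congested conditions.
print(settlement_fee_gwei(200_000, base_fee=30, max_priority_fee=2, max_fee=100))   # 6400000
print(settlement_fee_gwei(200_000, base_fee=300, max_priority_fee=2, max_fee=400))  # 60400000
```

In this example the settlement logic is unchanged, yet its cost rises roughly ninefold when the base fee climbs from 30 to 300 gwei.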

Blockchain Network Security for Compliance

Meaning ⎊ ZK-Compliance enables decentralized financial systems to cryptographically prove solvency and regulatory adherence without revealing proprietary trading data.

Blockchain Network Security for Legal Compliance

Meaning ⎊ The Lex Cryptographica Attestation Layer is a specialized cryptographic architecture that uses zero-knowledge proofs to enforce legal compliance and counterparty attestation for institutional crypto options trading.

Blockchain Network Resilience Testing

Meaning ⎊ Blockchain Network Resilience Testing evaluates the structural integrity and economic finality of decentralized ledgers under extreme adversarial stress.

Blockchain Security Model

Meaning ⎊ The Blockchain Security Model aligns economic incentives with cryptographic proof to ensure the immutable integrity of decentralized financial states.