Essence

A Zero-Knowledge Limit Order Book functions as a decentralized trading venue where order matching occurs without revealing sensitive participant data. By leveraging Zero-Knowledge Proofs, specifically zk-SNARKs or zk-STARKs, the protocol validates that a trader possesses sufficient balance and valid signature authorization for an order without exposing the underlying account state or order price to the public ledger.

A Zero-Knowledge Limit Order Book enables private, verifiable price discovery by decoupling order validation from information disclosure.

This architecture addresses the inherent trade-off between transparency and privacy in decentralized finance. Traditional automated market makers suffer from front-running and toxic flow extraction; in contrast, this model enforces order execution integrity through cryptographic proofs, ensuring that state transitions remain valid while keeping specific order details shielded from adversarial observers until settlement.

Origin

The genesis of this concept lies in the intersection of cryptographic primitives and the persistent inefficiency of decentralized exchange mechanisms. Early decentralized exchanges relied on public order books, which inherently leaked information, allowing MEV (Maximal Extractable Value) bots to prey on retail flow.

- Cryptographic foundations: The evolution of zk-SNARKs allowed for the succinct verification of complex computations.

- Market microstructure demands: Traders sought the familiarity of order books rather than constant-product market makers.

- Privacy requirements: Institutional participants required confidentiality to prevent signal leakage during large order execution.

Developers synthesized these elements to build systems where the order book state exists as a private commitment, with updates verified on-chain. This shift marks the departure from fully transparent, easily exploitable public state machines toward privacy-preserving, high-integrity financial venues.

Theory

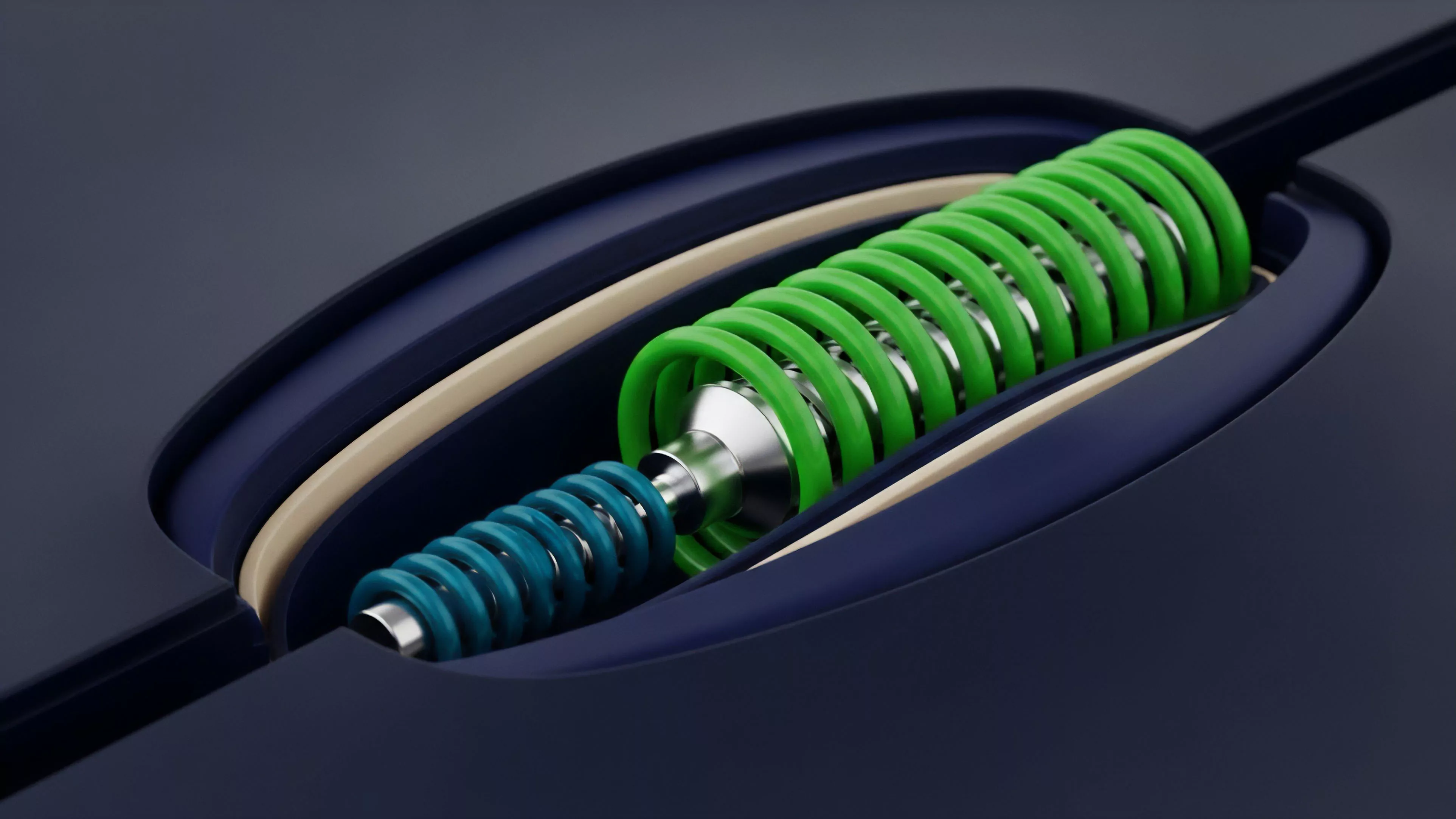

The mechanical structure relies on a private state commitment, typically the root of a Merkle tree built with a circuit-friendly hash function such as Poseidon, which represents the entire order book. When a trader submits an order, they generate a Zero-Knowledge Proof demonstrating that the order satisfies protocol rules, such as sufficient margin or balance, without revealing the specific price or quantity until the moment of matching.
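To make the idea of a state commitment concrete, here is a minimal sketch of a Merkle tree over salted order leaves. It is an illustration under stated assumptions, not any protocol's actual layout: SHA-256 stands in for a circuit-friendly hash such as Poseidon, and the `Order` fields, `merkle_root`, `merkle_path`, and `verify_path` are hypothetical names chosen for this example.

```python
import hashlib
from dataclasses import dataclass


def h(*parts: bytes) -> bytes:
    """SHA-256 used as a stand-in for a circuit-friendly hash (assumption)."""
    return hashlib.sha256(b"".join(parts)).digest()


@dataclass(frozen=True)
class Order:
    trader: str
    side: str       # "buy" or "sell"
    price: int      # in ticks
    quantity: int
    salt: bytes     # random blinding value so identical orders hash differently

    def leaf(self) -> bytes:
        payload = f"{self.trader}|{self.side}|{self.price}|{self.quantity}".encode()
        return h(b"\x00", self.salt, payload)


def merkle_root(leaves):
    """Fold leaf hashes pairwise up to a single root; only the root is published."""
    if not leaves:
        return h(b"empty")
    level = list(leaves)
    while len(level) > 1:
        if len(level) % 2:                 # duplicate the last node on odd levels
            level.append(level[-1])
        level = [h(b"\x01", level[i], level[i + 1]) for i in range(0, len(level), 2)]
    return level[0]


def merkle_path(leaves, index):
    """Return [(sibling_hash, node_is_right), ...] from the leaf up to the root."""
    path = []
    level = list(leaves)
    while len(level) > 1:
        if len(level) % 2:
            level.append(level[-1])
        path.append((level[index ^ 1], index % 2 == 1))
        level = [h(b"\x01", level[i], level[i + 1]) for i in range(0, len(level), 2)]
        index //= 2
    return path


def verify_path(leaf, path, root):
    """Recompute the root from one leaf and its sibling path."""
    node = leaf
    for sibling, node_is_right in path:
        node = h(b"\x01", sibling, node) if node_is_right else h(b"\x01", node, sibling)
    return node == root


orders = [
    Order("alice", "buy", 100, 5, b"salt-a"),
    Order("bob", "sell", 101, 3, b"salt-b"),
    Order("carol", "buy", 99, 7, b"salt-c"),
]
leaves = [o.leaf() for o in orders]
root = merkle_root(leaves)
```

In a real deployment the membership check would run inside a proof circuit, so the leaf and its path stay private; this sketch only shows the data structure being committed to.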

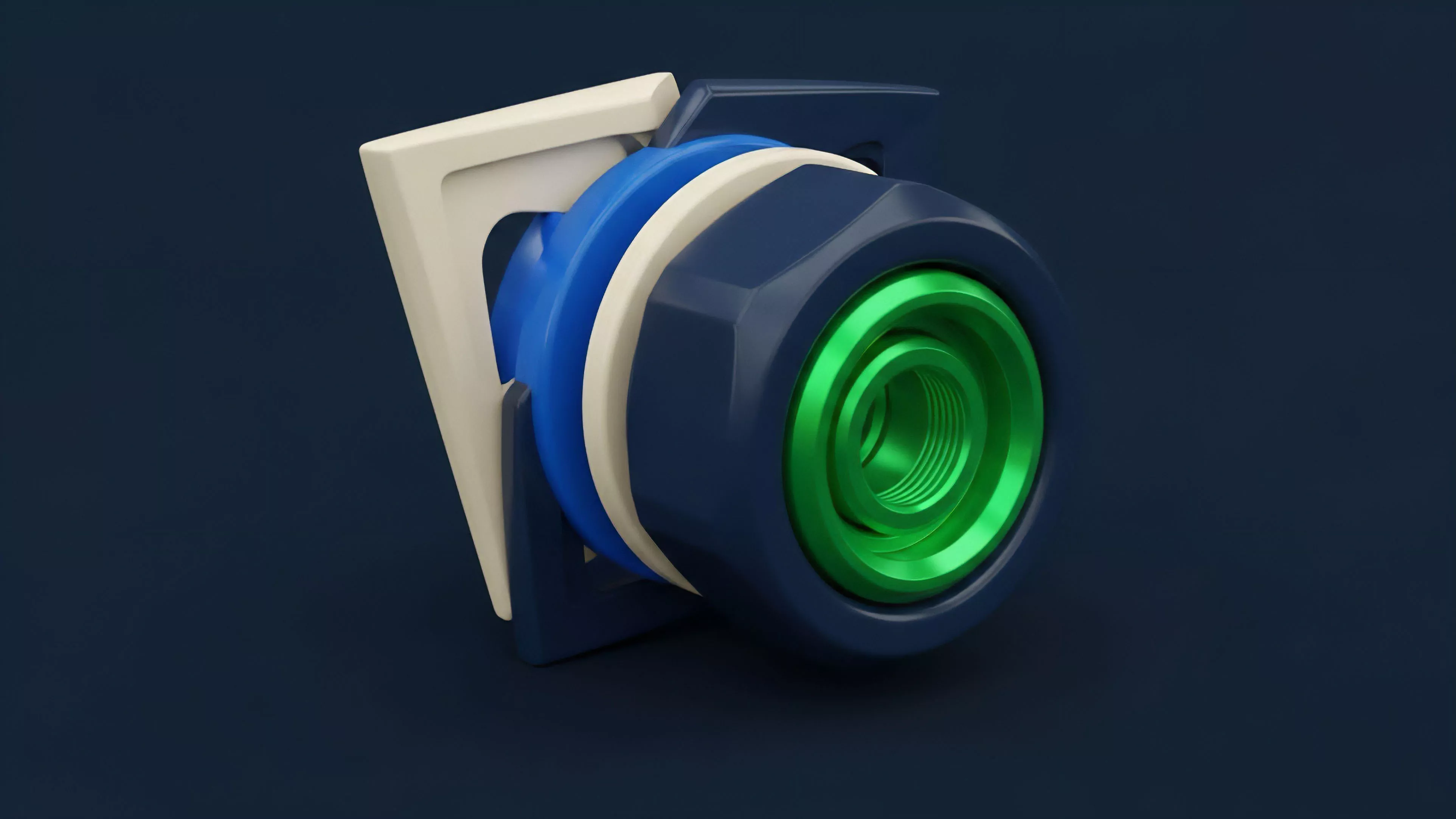

| Component | Functional Role |
| --- | --- |
| State Commitment | Maintains private order book integrity |
| Prover | Generates validity proof for orders |
| Verifier | Ensures proof compliance on-chain |
| Matcher | Executes trades against private state |

The integrity of the order book is maintained by proving the validity of state transitions rather than by making the state itself public.

The system operates as a constrained state machine. Because participants must prove adherence to the rules, such as preventing double-spending or unauthorized trades, the protocol achieves a level of security equivalent to public systems while maintaining the confidentiality required for institutional-grade strategy execution. Sometimes I think the true innovation is not the privacy itself, but the way we force the blockchain to perform complex validation without needing to see the raw data: it is a complete reversal of the traditional auditing process.

The math handles the trust, which is a significant departure from relying on centralized matching engines or transparent public mempools.
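The rules a trader proves can be written down as a relation between public inputs and a private witness. The sketch below checks that relation in the clear, which is what a proof system would attest to without revealing the witness; it is not a zero-knowledge proof itself. All names (`Witness`, `PublicInputs`, `relation_holds`, `Verifier`) and the specific constraints are assumptions for illustration, and SHA-256 again stands in for a circuit-friendly hash.

```python
import hashlib
from dataclasses import dataclass


def h(*parts: bytes) -> bytes:
    return hashlib.sha256(b"".join(parts)).digest()


@dataclass(frozen=True)
class Witness:            # private: known only to the trader
    secret_key: bytes
    balance: int
    price: int
    quantity: int
    salt: bytes


@dataclass(frozen=True)
class PublicInputs:       # public: all the on-chain verifier ever sees
    balance_commitment: bytes
    order_commitment: bytes
    nullifier: bytes


def commit_balance(w: Witness) -> bytes:
    return h(b"bal", w.secret_key, w.balance.to_bytes(16, "big"))


def commit_order(w: Witness) -> bytes:
    return h(b"ord", w.salt, w.price.to_bytes(16, "big"), w.quantity.to_bytes(16, "big"))


def derive_nullifier(w: Witness) -> bytes:
    # Deterministic per (key, salt): resubmitting the same order repeats the nullifier.
    return h(b"nul", w.secret_key, w.salt)


def public_inputs(w: Witness) -> PublicInputs:
    return PublicInputs(commit_balance(w), commit_order(w), derive_nullifier(w))


def relation_holds(pub: PublicInputs, w: Witness) -> bool:
    """The statement a proof would attest to, evaluated here without any privacy."""
    return (
        w.price > 0
        and w.quantity > 0
        and w.price * w.quantity <= w.balance          # sufficient balance
        and commit_balance(w) == pub.balance_commitment
        and commit_order(w) == pub.order_commitment
        and derive_nullifier(w) == pub.nullifier
    )


class Verifier:
    """Hypothetical on-chain side: accepts a valid proof once per nullifier."""

    def __init__(self) -> None:
        self.spent = set()

    def accept(self, pub: PublicInputs, proof_ok: bool) -> bool:
        if not proof_ok or pub.nullifier in self.spent:
            return False                               # invalid proof or replayed order
        self.spent.add(pub.nullifier)
        return True
```

Here `proof_ok` is a placeholder for the result of verifying a real SNARK or STARK; the point of the sketch is which values are public and which never leave the trader's machine.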

Approach

Current implementations prioritize off-chain computation with on-chain verification to maximize throughput. Traders interact with a sequencer or a relayer that collects encrypted orders, batches them, and generates a recursive proof to update the global state.

- Sequencer models: Relayers collect orders and produce batches for proof generation.

- Recursive proofs: Protocols aggregate multiple proofs to reduce gas costs per transaction.

- State channels: Some implementations use channels for low-latency updates before final settlement.
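The sequencer model above can be sketched as a small batching loop. This is a simplified illustration, assuming fixed-size batches and a state root that is updated by hashing in each batch digest; a real system would attach a (possibly recursive) validity proof to every batch, which is omitted here. `Sequencer`, `Batch`, and `batch_size` are hypothetical names.

```python
import hashlib
from dataclasses import dataclass, field


def h(*parts: bytes) -> bytes:
    return hashlib.sha256(b"".join(parts)).digest()


@dataclass
class Batch:
    prev_root: bytes
    new_root: bytes
    order_commitments: list


@dataclass
class Sequencer:
    batch_size: int
    state_root: bytes = h(b"genesis")
    pending: list = field(default_factory=list)
    sealed: list = field(default_factory=list)

    def submit(self, order_commitment: bytes) -> None:
        """Queue an opaque order commitment; seal once the batch is full."""
        self.pending.append(order_commitment)
        if len(self.pending) >= self.batch_size:
            self.seal()

    def seal(self):
        """Close the current batch and advance the state root."""
        if not self.pending:
            return None
        digest = h(*self.pending)
        new_root = h(self.state_root, digest)   # placeholder for a proven transition
        batch = Batch(self.state_root, new_root, list(self.pending))
        self.sealed.append(batch)
        self.state_root = new_root
        self.pending.clear()
        return batch


seq = Sequencer(batch_size=2)
for i in range(3):
    seq.submit(h(b"order", bytes([i])))
```

Because each batch records the root it started from, the settlement layer only needs to check that consecutive batches chain together and that each transition carries a valid proof.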

Off-chain proof generation minimizes the computational burden on the settlement layer while preserving the soundness guarantees of the underlying proof system.

Market participants must account for the latency of proof generation, which introduces a different set of risks compared to traditional high-frequency trading. Traders optimize their strategies by timing their submissions to align with batch windows, effectively trading raw speed for privacy and reduced slippage.
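A rough way to reason about this latency is to add the wait for the batch to close to the proving and settlement times. The sketch below assumes fixed batch windows that close at multiples of the window length and orders that arrive uniformly at random within a window, so the average wait is half a window; the function names and the example numbers in the usage note are hypothetical.

```python
def expected_latency(batch_window_s, proving_time_s, settlement_time_s=0.0):
    """Average submit-to-settle time for an order arriving at a uniformly random moment."""
    if min(batch_window_s, proving_time_s, settlement_time_s) < 0:
        raise ValueError("durations must be non-negative")
    return batch_window_s / 2 + proving_time_s + settlement_time_s


def worst_case_latency(batch_window_s, proving_time_s, settlement_time_s=0.0):
    """An order arriving just after a window opens waits the full window."""
    if min(batch_window_s, proving_time_s, settlement_time_s) < 0:
        raise ValueError("durations must be non-negative")
    return batch_window_s + proving_time_s + settlement_time_s


def seconds_until_next_batch(now_s, batch_window_s):
    """Time left before the current window closes (0 exactly on a boundary)."""
    if batch_window_s <= 0:
        raise ValueError("batch window must be positive")
    return (-now_s) % batch_window_s
```

For example, with an assumed 2-second window, 3 seconds of proving, and 1 second of settlement, `expected_latency(2.0, 3.0, 1.0)` gives 5.0 seconds; a trader who submits just before the window closes cuts the waiting component toward zero but cannot avoid the proving and settlement terms.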

Evolution

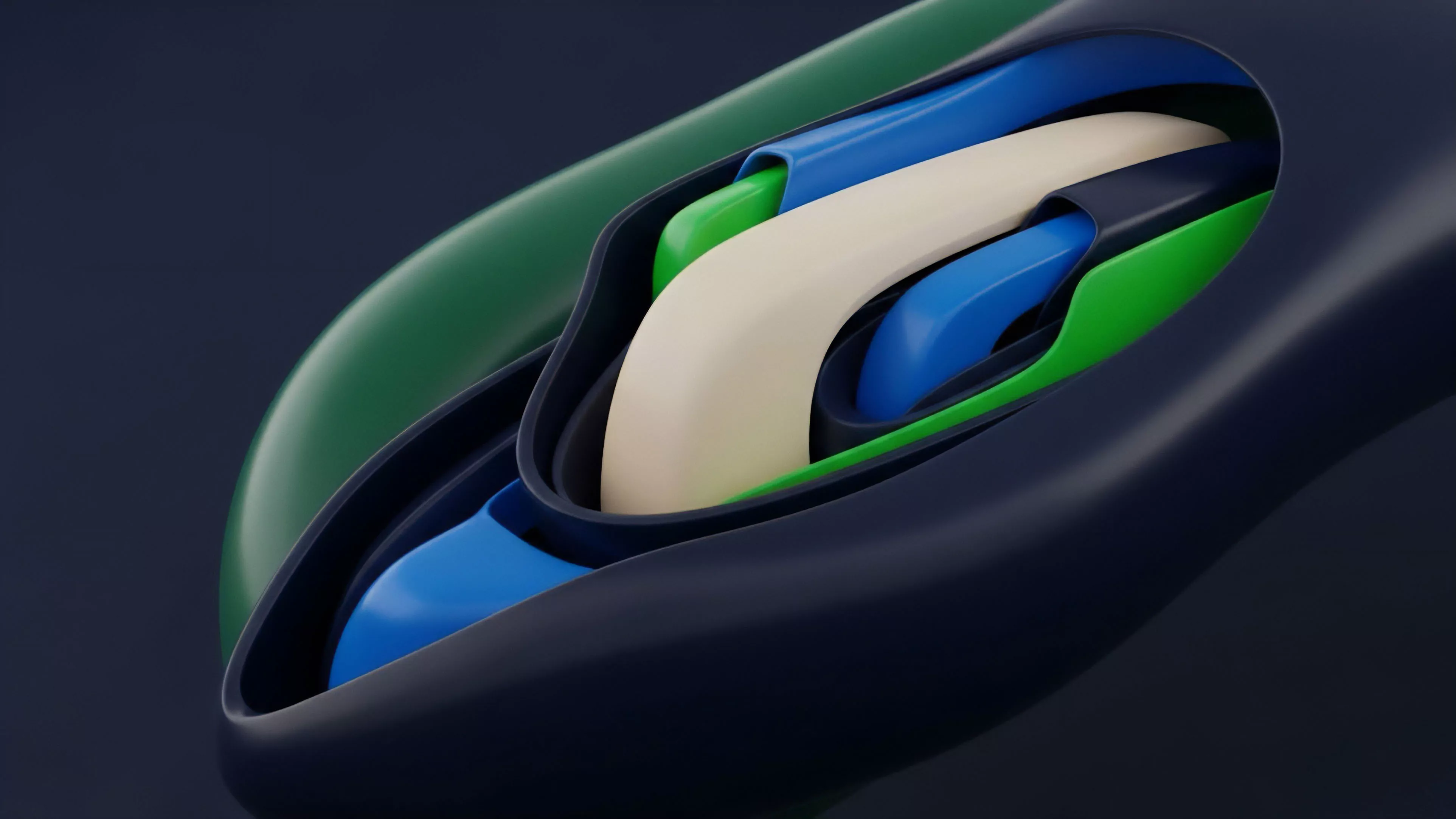

The transition from early prototypes to current deployments shows a shift toward modular architecture. Initially, these systems were monolithic, bundling the order book, the matching engine, and the proof generation into a single stack.

Now, we see a separation of concerns, where specialized prover networks handle the heavy computation, and the blockchain serves solely as a settlement and data availability layer.

| Stage | Key Characteristic |
| --- | --- |
| Experimental | Monolithic, slow proof generation |
| Iterative | Introduction of recursive proofs |
| Advanced | Decentralized prover networks and modularity |

This evolution is driven by the necessity to scale to millions of orders per day. As the underlying cryptographic primitives become more efficient, the overhead of generating proofs has decreased, allowing these protocols to approach the performance of centralized matching engines while retaining the non-custodial, permissionless nature of decentralized systems.

Horizon

The next phase involves the integration of cross-rollup liquidity and atomic composability. We are moving toward a future where a Zero-Knowledge Limit Order Book can interact with lending protocols and derivatives engines on different chains without requiring the order details to ever exist in plaintext.

The future of decentralized finance depends on the ability to maintain privacy during price discovery while ensuring total systemic auditability.

Future iterations will likely incorporate multi-party computation to further obscure order matching, preventing even the sequencer from knowing the specific trade details. This would create a truly blind matching engine, where liquidity providers and takers interact solely through verifiable, encrypted channels, setting a new standard for market fairness and participant protection in digital asset finance.
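One building block such a design could draw on is additive secret sharing, sketched below. Each order quantity is split into random shares held by different parties, so no single party sees any individual order, yet the parties can jointly compute an aggregate. This is only an illustration of the principle: actual blind matching would also need secure comparison of prices, which is well beyond this sketch, and the modulus, function names, and three-party setup are assumptions.

```python
import secrets

P = 2**61 - 1  # prime modulus for share arithmetic (illustrative choice)


def share(value, n_parties):
    """Split value into n additive shares that sum to value modulo P."""
    if n_parties < 2:
        raise ValueError("need at least two parties")
    if not 0 <= value < P:
        raise ValueError("value out of range")
    shares = [secrets.randbelow(P) for _ in range(n_parties - 1)]
    shares.append((value - sum(shares)) % P)
    return shares


def reconstruct(shares):
    """Combine all shares; any strict subset reveals nothing about the value."""
    return sum(shares) % P


def aggregate(per_order_shares):
    """Each party locally adds up the shares it holds, one per order."""
    n_parties = len(per_order_shares[0])
    return [sum(s[i] for s in per_order_shares) % P for i in range(n_parties)]


quantities = [5, 7, 11]                       # private order sizes
shared = [share(q, 3) for q in quantities]    # one share list per order
party_totals = aggregate(shared)              # what each party computes locally
total_depth = reconstruct(party_totals)       # only the aggregate is revealed
```

Only `total_depth` is ever reconstructed; the individual quantities stay hidden as long as the parties do not pool their shares.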