Essence

Zero-Knowledge Acceleration represents the specialized computational infrastructure required to reduce the latency of cryptographic proof generation within decentralized financial systems. This field focuses on optimizing the hardware and software pathways that enable Zero-Knowledge Proofs, specifically zk-SNARKs and zk-STARKs, to function at speeds compatible with high-frequency trading and institutional settlement cycles.

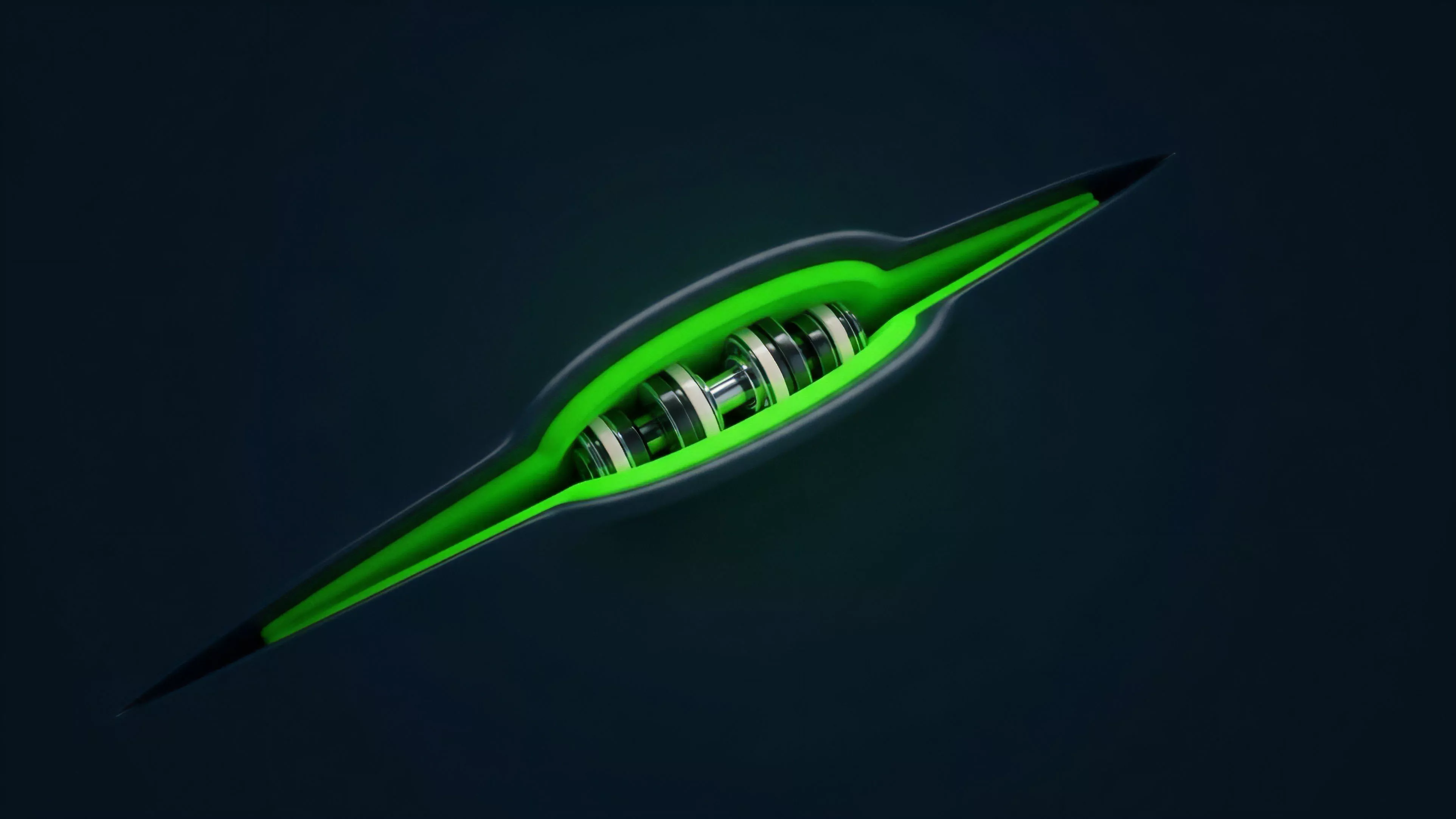

Zero-Knowledge Acceleration functions as the computational engine reducing the temporal overhead inherent in generating complex cryptographic validity proofs.

The primary objective is to overcome the heavy CPU and memory demands of witness generation and multiscalar multiplication (MSM). By moving these operations from general-purpose processors to ASICs, FPGAs, or highly optimized GPU kernels, protocols achieve the throughput necessary for scaling Layer 2 networks and maintaining privacy-preserving order books without sacrificing security.

Origin

The requirement for Zero-Knowledge Acceleration surfaced as developers realized that cryptographic proof generation, while mathematically elegant, imposed severe performance penalties on throughput-sensitive applications. Initial implementations of zk-Rollups relied on standard cloud compute, which created significant bottlenecks during periods of high market volatility, as proof generation times frequently exceeded block production intervals.

- Computational Asymmetry: Generating a proof takes far longer than verifying it, and this disparity necessitates dedicated hardware resources on the proving side.

- Latency Sensitivity: Market makers and arbitrageurs require millisecond-level execution, making slow proof generation a fatal flaw for on-chain derivatives.

- Hardware Specialization: Early attempts to utilize standard CPU architectures failed to meet the demands of large-scale circuit processing, leading to the development of custom acceleration layers.

This transition mirrors the historical shift in traditional finance where high-frequency trading firms moved from software-based order matching to hardware-accelerated FPGA implementations to gain a competitive advantage in execution speed.

Theory

The mathematical core of Zero-Knowledge Acceleration revolves around two kernels: multiscalar multiplication (MSM) over elliptic curves and the Number Theoretic Transform (NTT), the finite-field counterpart of the Fast Fourier Transform. These operations are the primary drivers of the computational burden in modern proof systems.

| Technique | Function | Hardware Target |
| --- | --- | --- |
| Multiscalar Multiplication | Point aggregation | FPGA/ASIC |
| Number Theoretic Transform | Polynomial evaluation | GPU/Parallel Processing |
| Witness Generation | Circuit computation | High-memory CPU/ASIC |
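To make the first two rows of the table concrete, the following is a minimal sketch of both kernels. It rests on loudly stated assumptions: the additive group of integers modulo `Q` stands in for elliptic-curve points (the bucket method only needs a group addition, so the structure carries over, but this toy group offers no security), and the NTT uses the prime 998244353 with primitive root 3, a common choice for demonstrations rather than the field of any particular proof system. All function names are illustrative.

```python
import random

# --- Multiscalar multiplication (MSM): compute sum(k_i * P_i) ---
# ASSUMPTION: integers mod Q under addition stand in for curve points.
Q = 2**61 - 1

def group_add(a, b):
    return (a + b) % Q

def msm_naive(scalars, points):
    """Baseline: one double-and-add scalar multiplication per term."""
    total = 0
    for k, p in zip(scalars, points):
        acc = 0
        while k:
            if k & 1:
                acc = group_add(acc, p)
            p = group_add(p, p)
            k >>= 1
        total = group_add(total, acc)
    return total

def msm_bucket(scalars, points, window=4, scalar_bits=32):
    """Pippenger-style bucket method; scalars must fit in scalar_bits."""
    result = 0
    n_windows = (scalar_bits + window - 1) // window
    for w in reversed(range(n_windows)):
        for _ in range(window):          # shift the running result by one window
            result = group_add(result, result)
        buckets = [0] * (1 << window)    # buckets[d] collects points whose digit is d
        for k, p in zip(scalars, points):
            digit = (k >> (w * window)) & ((1 << window) - 1)
            if digit:
                buckets[digit] = group_add(buckets[digit], p)
        running, window_sum = 0, 0       # running sums yield sum(d * buckets[d])
        for d in range(len(buckets) - 1, 0, -1):
            running = group_add(running, buckets[d])
            window_sum = group_add(window_sum, running)
        result = group_add(result, window_sum)
    return result

# --- Number Theoretic Transform (NTT): evaluate a polynomial at roots of unity ---
P = 998244353   # 119 * 2**23 + 1
G = 3           # primitive root modulo P

def ntt(coeffs, root):
    """Recursive radix-2 NTT; len(coeffs) must be a power of two and
    root a primitive len(coeffs)-th root of unity modulo P."""
    n = len(coeffs)
    if n == 1:
        return coeffs[:]
    even = ntt(coeffs[0::2], root * root % P)
    odd = ntt(coeffs[1::2], root * root % P)
    out = [0] * n
    w = 1
    for i in range(n // 2):
        t = w * odd[i] % P
        out[i] = (even[i] + t) % P
        out[i + n // 2] = (even[i] - t) % P
        w = w * root % P
    return out

if __name__ == "__main__":
    rng = random.Random(0)
    scalars = [rng.randrange(2**32) for _ in range(64)]
    points = [rng.randrange(Q) for _ in range(64)]
    assert msm_bucket(scalars, points) == msm_naive(scalars, points)

    coeffs = [rng.randrange(P) for _ in range(8)]
    root = pow(G, (P - 1) // 8, P)
    direct = [sum(c * pow(root, i * j, P) for j, c in enumerate(coeffs)) % P
              for i in range(8)]
    assert ntt(coeffs, root) == direct
```

Both kernels suit specialized hardware because their inner loops are regular: the bucket accumulation and the butterfly step in `ntt` apply the same operation across many independent elements.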

The architectural integrity of a proof system depends on minimizing the computational path length between transaction submission and finalized state updates.

When analyzing the performance of these systems, one must consider the amortization of proof costs. By batching thousands of individual transactions into a single proof, the per-transaction cost drops, but the complexity of the underlying circuit grows. This creates a feedback loop where the demand for faster hardware forces advancements in circuit design, which in turn necessitates more specialized silicon.
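The amortization trade-off can be illustrated with a toy cost model. The model below is an assumption, not a measurement: it treats verification as a fixed cost per proof and proving as quasi-linear in batch size (`n * log2(n + 1)`), and the constants in the usage example are hypothetical units chosen only to show the shape of the curve.

```python
import math

def per_tx_costs(batch_size, fixed_cost, prove_coeff):
    """Return (amortized fixed cost, proving cost) per transaction.

    ASSUMPTION: total proving cost = prove_coeff * n * log2(n + 1),
    a stand-in for the quasi-linear growth of circuit work with batch size.
    """
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    amortized_fixed = fixed_cost / batch_size
    proving = prove_coeff * math.log2(batch_size + 1)
    return amortized_fixed, proving

def per_tx_total(batch_size, fixed_cost, prove_coeff):
    return sum(per_tx_costs(batch_size, fixed_cost, prove_coeff))

if __name__ == "__main__":
    # Hypothetical units: fixed_cost=500_000, prove_coeff=1.0
    for n in (10, 100, 1_000, 10_000):
        fixed, proving = per_tx_costs(n, 500_000, 1.0)
        print(f"batch={n:>6}  fixed/tx={fixed:>10.2f}  proving/tx={proving:>6.2f}")
```

Under these assumptions the fixed share falls quickly as the batch grows while the proving share rises slowly, which is the pressure that pushes larger batches toward faster proving hardware.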

Market microstructure suffers when state updates lag behind price movements in the underlying assets, because quotes go stale before they can be revised. My professional experience suggests that the current obsession with throughput often ignores the jitter introduced by variable proof generation times, which creates systemic risks for liquidity providers.
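One way to make the jitter concern measurable is to compare tail latency against the median rather than looking only at the mean. The sketch below uses Python's standard `statistics` module; the two sample sets are invented for illustration and do not describe any real prover.

```python
import statistics

def latency_profile(samples_ms):
    """Summarize proof-generation latencies (milliseconds).

    'jitter' is defined here as p99 - p50, one of several reasonable choices.
    """
    cuts = statistics.quantiles(samples_ms, n=100, method="inclusive")
    p50, p99 = cuts[49], cuts[98]
    return {
        "mean": statistics.fmean(samples_ms),
        "p50": p50,
        "p99": p99,
        "jitter": p99 - p50,
    }

if __name__ == "__main__":
    # HYPOTHETICAL data: both provers average 100 ms per proof.
    steady = [100] * 100
    bursty = [50] * 90 + [550] * 10
    print("steady:", latency_profile(steady))
    print("bursty:", latency_profile(bursty))
```

The two provers are indistinguishable by mean latency or throughput, yet a liquidity provider quoting against the bursty one faces occasional state updates that arrive far later than usual.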

Approach

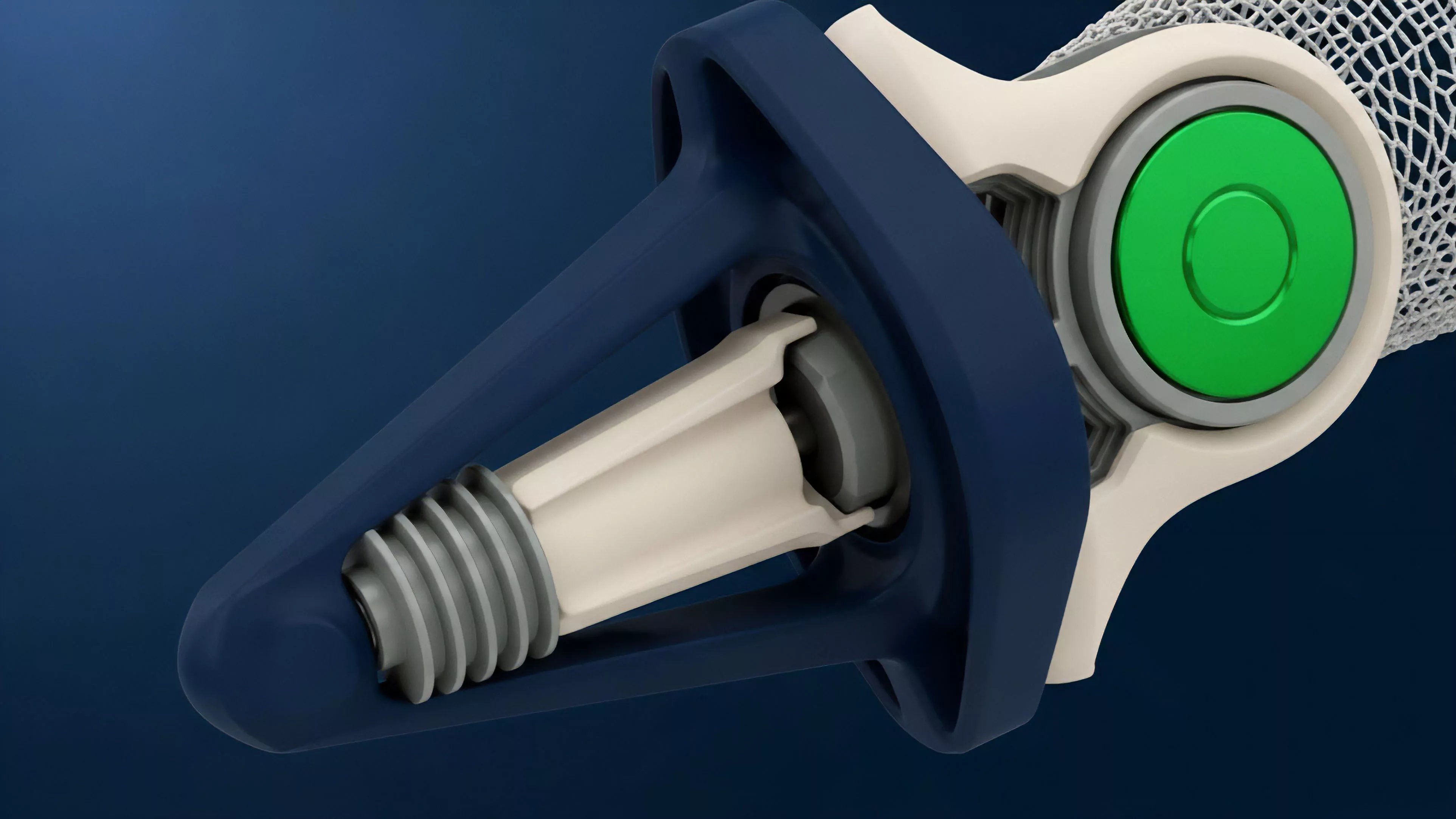

Current strategies prioritize the development of zk-friendly hardware specifically tuned for modular blockchain architectures. This approach shifts away from monolithic design, allowing for specialized prover networks that operate independently of the main chain consensus.

- Decentralized Prover Markets: Incentivizing a distributed set of actors to perform proof generation, thereby mitigating the risks associated with centralized infrastructure.

- Hardware-Agnostic Software Layers: Developing compilation tools that translate high-level cryptographic circuits into machine code optimized for various hardware targets.

- Circuit Parallelization: Breaking down monolithic circuits into smaller, independent segments that can be processed simultaneously across massive compute clusters.
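The circuit parallelization item can be sketched as a split, map, and aggregate pipeline. This is a scheduling illustration only: `prove_segment` is a placeholder that hashes its input instead of producing a real proof, the aggregation step is likewise a hash rather than a recursive or folding proof, and all names are hypothetical.

```python
import hashlib
from concurrent.futures import ThreadPoolExecutor

def split_circuit(gates, segment_size):
    """Cut a flat gate list into independent, fixed-size segments."""
    return [gates[i:i + segment_size] for i in range(0, len(gates), segment_size)]

def prove_segment(segment):
    # PLACEHOLDER: a hash stands in for an expensive per-segment proof.
    return hashlib.sha256(repr(segment).encode()).hexdigest()

def prove_parallel(gates, segment_size, workers=4):
    """Prove segments concurrently, then combine the partial results."""
    segments = split_circuit(gates, segment_size)
    with ThreadPoolExecutor(max_workers=workers) as pool:
        partials = list(pool.map(prove_segment, segments))  # map preserves order
    # PLACEHOLDER aggregation: real systems would combine proofs, not hashes.
    return hashlib.sha256("".join(partials).encode()).hexdigest()

if __name__ == "__main__":
    gates = list(range(1_000))
    assert prove_parallel(gates, 100, workers=8) == prove_parallel(gates, 100, workers=1)
```

Threads keep the sketch simple; a CPU-bound prover in Python would more likely dispatch segments to separate processes or devices. The property worth noting is that the aggregated result does not depend on the number of workers, because segment order is preserved.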

This shift in approach recognizes that proof generation is not a uniform task. Some proofs are latency-sensitive and call for FPGA setups, while others prioritize cost-efficiency and use GPU-based batching strategies.
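The distinction between latency-sensitive and cost-sensitive proofs can be expressed as a simple routing rule: pick the cheapest backend that still meets the job's deadline. The backend names, latencies, and costs below are hypothetical placeholders, not benchmarks.

```python
from dataclasses import dataclass
from typing import Optional, Sequence

@dataclass(frozen=True)
class Backend:
    name: str
    latency_ms: float      # assumed time to produce one proof
    cost_per_proof: float  # assumed cost in arbitrary units

def choose_backend(deadline_ms: float, backends: Sequence[Backend]) -> Optional[Backend]:
    """Return the cheapest backend whose latency fits the deadline, or None."""
    feasible = [b for b in backends if b.latency_ms <= deadline_ms]
    if not feasible:
        return None
    return min(feasible, key=lambda b: b.cost_per_proof)

if __name__ == "__main__":
    # HYPOTHETICAL figures for illustration only.
    backends = [
        Backend("fpga", latency_ms=20, cost_per_proof=5.0),
        Backend("gpu-batch", latency_ms=2_000, cost_per_proof=0.5),
    ]
    for deadline in (50, 5_000, 1):
        chosen = choose_backend(deadline, backends)
        print(deadline, "->", chosen.name if chosen else "no backend meets deadline")
```

A production scheduler would also account for queue depth and the latency variance of each backend, which this sketch deliberately leaves out.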

Evolution

The trajectory of this technology has moved from software-based research prototypes to production-ready hardware acceleration modules. Initially, the community viewed Zero-Knowledge Proofs as a theoretical mechanism for privacy, largely ignoring the performance implications of mass adoption.

The market eventually forced a reckoning. As Layer 2 protocols gained traction, the inability to generate proofs in real time caused significant transaction delays and liquidity fragmentation, which drove a transition toward hardware-level optimization.

Systemic resilience requires that the latency of cryptographic proof generation remains significantly shorter than the market-clearing interval of the derivative instruments.

The industry now acknowledges that the bottleneck is not the blockchain itself, but the computational overhead of the proof system. Consequently, we see a rise in specialized entities dedicated to silicon-level optimization for cryptographic primitives. The focus has moved from general scaling to protocol-specific acceleration, where the hardware is designed to handle the unique arithmetic circuits of a specific proof system.

Horizon

The next phase involves the integration of Zero-Knowledge Acceleration directly into the consensus layer of decentralized exchanges. This will enable the deployment of fully on-chain order books that maintain complete privacy without sacrificing the performance of traditional central limit order books.

The ultimate goal remains the total elimination of latency-based arbitrage opportunities that currently plague decentralized markets. By creating a standardized, high-speed proof generation layer, we can move toward a financial architecture where the speed of settlement is governed by physics rather than protocol inefficiencies.

The primary challenge remains the verification of hardware. If we outsource proof generation to specialized hardware, we must ensure that the hardware itself does not introduce new attack vectors or biases into the system. This leads us to the next critical question: How can we achieve hardware-level trust in a decentralized environment without re-introducing the very central points of failure we are attempting to remove?