Essence

Zero-Cost Computation describes a state in which the overhead of the computations behind complex financial derivatives, such as options pricing models or margin calculations, becomes negligible relative to the transaction value. The concept shifts the cost burden from the user to the protocol architecture: optimized smart contract design and off-chain execution environments allow high-frequency updates without prohibitive gas fees or latency.

Zero-Cost Computation represents the architectural threshold where financial execution efficiency renders the cost of derivative validation irrelevant to strategy viability.

The core utility resides in the democratization of sophisticated hedging tools. By removing the friction associated with iterative re-balancing of delta-neutral portfolios, market participants can maintain tighter risk tolerances. This creates a more responsive liquidity landscape, as market makers adjust quotes with minimal computational resistance.

Origin

The genesis of this mechanism lies in the inherent constraints of early Ethereum-based decentralized finance, where high gas prices acted as a tax on complexity.

Developers observed that strategies involving frequent option rolling or dynamic hedging were systematically excluded due to the cumulative expense of on-chain state updates.

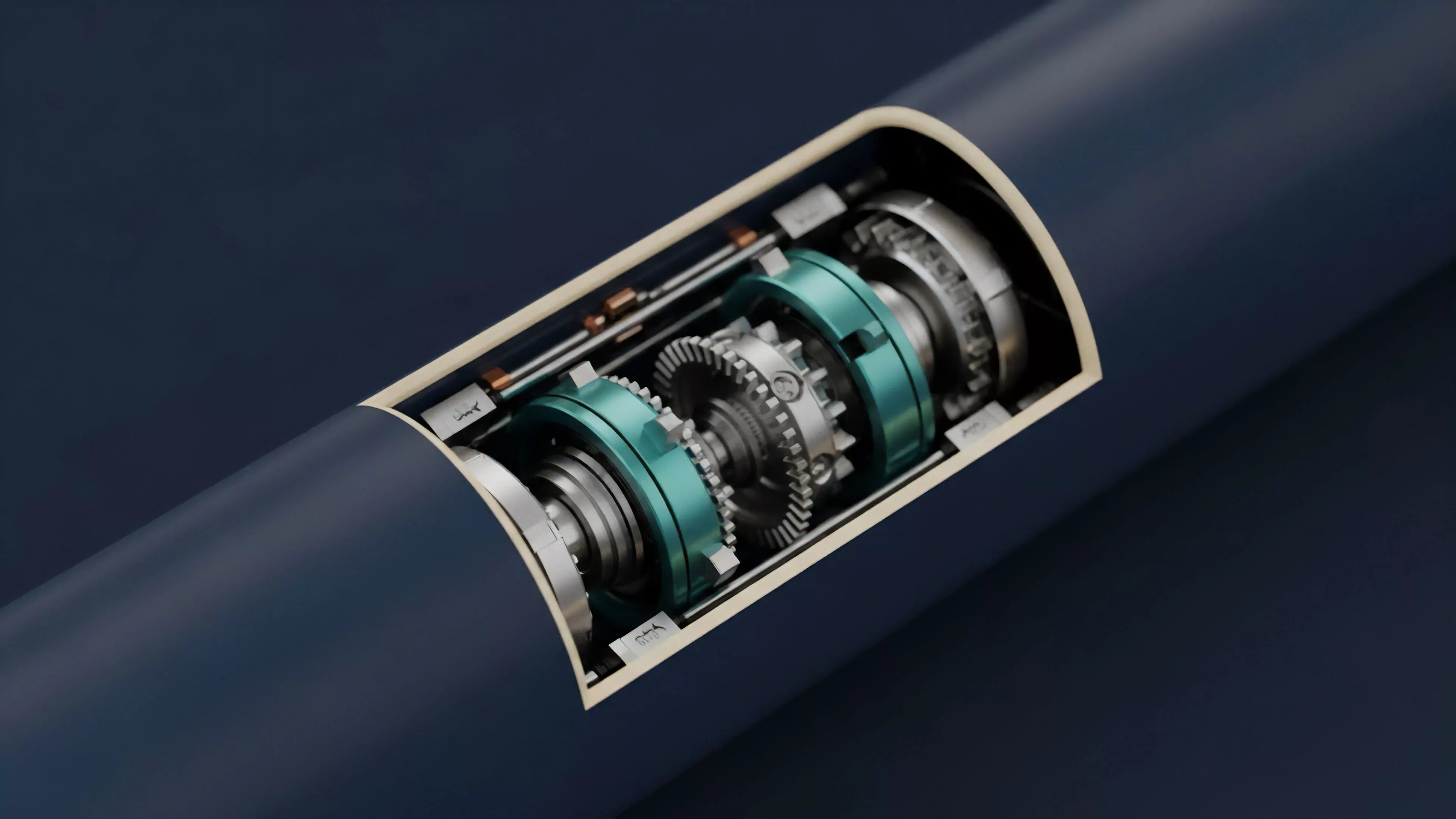

- Layer Two Rollups provided the initial substrate by aggregating transactions, effectively amortizing the fixed costs of computation across a larger set of users.

- Off-chain Order Books emerged as a secondary solution, moving the heavy lifting of matching and pricing outside the consensus layer to preserve capital efficiency.

- Zero-Knowledge Proofs introduced a method for verifying computational integrity without requiring every node to execute the underlying logic, drastically reducing the verification cost.
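The amortization effect described for rollups can be sketched with simple arithmetic. The function name and the gas figures below are illustrative assumptions, not measurements from any particular network.

```python
def amortized_cost_per_tx(fixed_batch_cost: float,
                          marginal_cost_per_tx: float,
                          batch_size: int) -> float:
    """Per-transaction cost when one fixed batch cost is shared by all transactions.

    All inputs are hypothetical gas units chosen for illustration.
    """
    if batch_size <= 0:
        raise ValueError("batch_size must be positive")
    return fixed_batch_cost / batch_size + marginal_cost_per_tx


if __name__ == "__main__":
    # Assumed figures: 200,000 gas fixed per batch, 500 gas per included transaction.
    for n in (1, 10, 100, 1000):
        print(n, amortized_cost_per_tx(200_000, 500, n))
```

As the batch grows, the fixed share per transaction shrinks toward zero and the marginal cost dominates. In this simple model the per-trade cost falls with batch size but never drops below the marginal cost.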

These developments collectively fostered an environment where complex financial instruments could function with the same agility as centralized counterparts. The transition from monolithic, expensive validation to modular, scalable execution marks the shift toward the current infrastructure.

Theory

The mathematical modeling of Zero-Cost Computation relies on the optimization of the state machine. In traditional DeFi, every calculation consumes gas; here, the objective is to move the computation to a transient state where only the final settlement or the delta of the change is recorded on the main ledger.
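A minimal sketch of recording only the net change, assuming off-chain fills are available as `(account, instrument, signed_quantity)` tuples. The data layout and the `net_position_deltas` helper are hypothetical and not taken from any specific protocol.

```python
from collections import defaultdict
from decimal import Decimal


def net_position_deltas(fills):
    """Collapse many off-chain fills into one net delta per (account, instrument).

    `fills` is an iterable of (account, instrument, signed_quantity) tuples,
    with quantities given as strings so Decimal parses them exactly.
    Entries that net to zero are dropped, since nothing needs to be settled.
    """
    net = defaultdict(Decimal)  # Decimal() is zero, so unseen keys start at 0
    for account, instrument, qty in fills:
        net[(account, instrument)] += Decimal(qty)
    return {key: delta for key, delta in net.items() if delta != 0}


if __name__ == "__main__":
    fills = [
        ("alice", "ETH-CALL", "2"),
        ("alice", "ETH-CALL", "-1.5"),
        ("bob", "ETH-CALL", "-2"),
        ("bob", "ETH-CALL", "2"),
    ]
    print(net_position_deltas(fills))
```

In this example four fills reduce to a single record for the main ledger: the second account's trades cancel out and generate no on-chain write at all.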

Computational Efficiency Parameters

| Parameter | Mechanism | Impact |
| --- | --- | --- |
| State Bloat | Pruning | Reduces storage costs |
| Latency | Batching | Increases throughput |
| Gas Consumption | Proof Compression | Lowers per-trade overhead |

The theory assumes an adversarial environment where participants continuously probe for price inefficiencies. By reducing the computational cost to near-zero, the protocol forces the competition to shift from fee-arbitrage to model-precision. If the cost of updating a hedge is zero, the limiting factor becomes the accuracy of the volatility surface estimation and the speed of the underlying oracle feed.
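To illustrate how update cost constrains hedging frequency, the toy model below rehedges only when a crude estimate of the cost of staying unhedged exceeds the cost of one update. The decision rule, the `risk_penalty` parameter, and all numbers are assumptions made for illustration; this is not a pricing or risk model from the source.

```python
def count_rehedges(deltas, spot, update_cost, risk_penalty):
    """Count hedge updates along a path of target deltas.

    Toy rule (an assumption): update when
    |target delta - current hedge| * spot * risk_penalty > update_cost.
    """
    hedge = deltas[0]  # start fully hedged at the first target delta
    updates = 0
    for target in deltas[1:]:
        exposure_cost = abs(target - hedge) * spot * risk_penalty
        if exposure_cost > update_cost:
            hedge = target
            updates += 1
    return updates


if __name__ == "__main__":
    path = [0.50, 0.52, 0.55, 0.53, 0.60, 0.58]  # hypothetical option deltas
    print(count_rehedges(path, spot=2000.0, update_cost=1.5, risk_penalty=0.01))
    print(count_rehedges(path, spot=2000.0, update_cost=0.0, risk_penalty=0.01))
```

With a positive update cost the hedge moves only on the largest delta change. With zero cost it follows every change in the target delta, so the quality of the hedge depends entirely on how accurate that target is.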

Computational optimization in derivatives protocols transforms the barrier to entry from capital requirements to algorithmic sophistication.

Consider an analogy from the thermodynamics of information. Just as heat dissipation limits hardware performance, gas consumption limits protocol performance. As the cost of information processing approaches zero, that limit recedes, and prices can incorporate available data with less delay, bounded by oracle speed and model accuracy rather than by gas.

Approach

Current implementation strategies focus on the separation of concerns between settlement and execution.

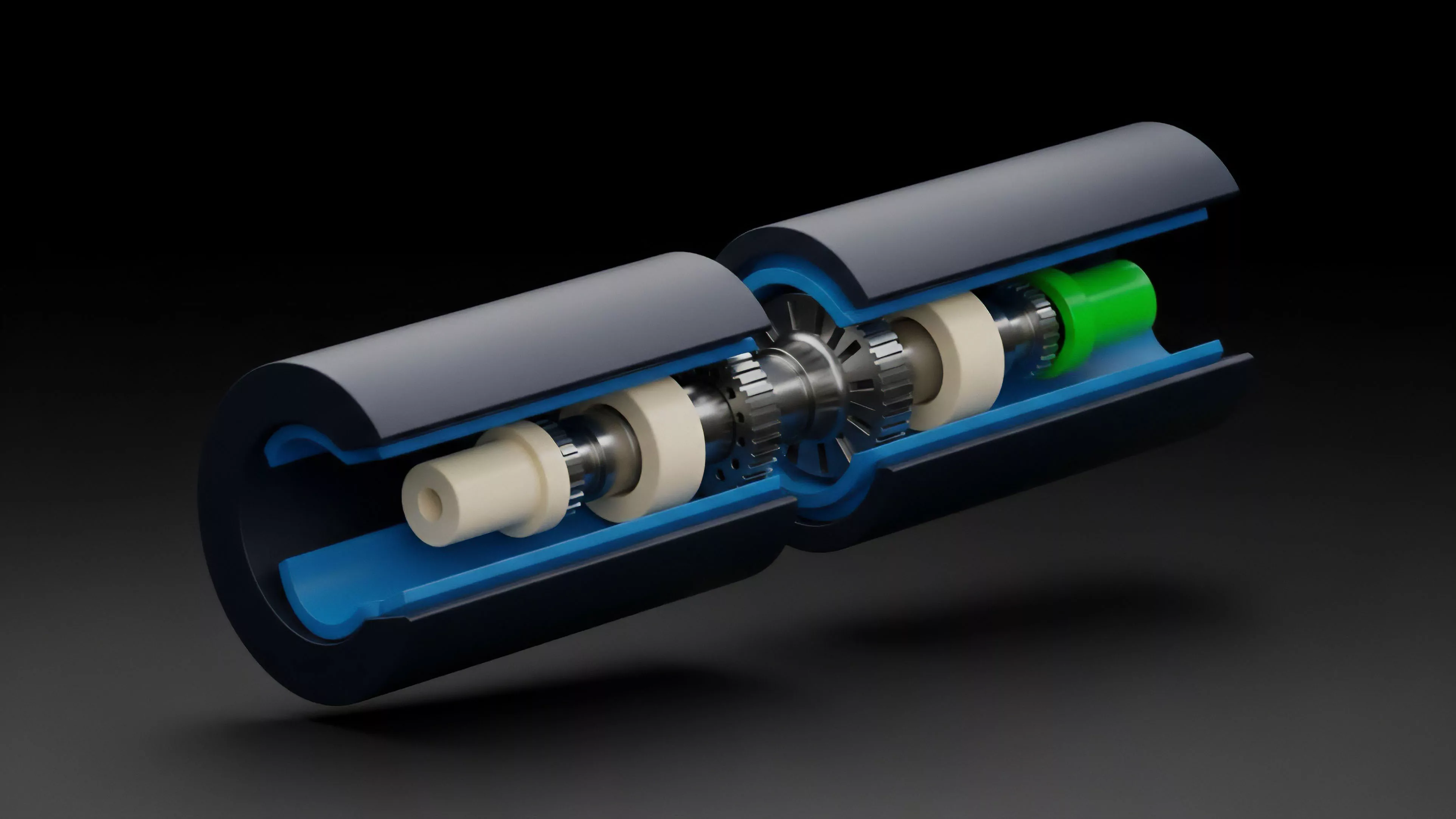

Modern derivative protocols utilize a tiered architecture where the heavy lifting occurs within a trusted execution environment or a specialized rollup, while the main chain serves only as a court of last resort.

- Asynchronous Settlement allows trades to clear immediately off-chain, with on-chain finality following after a later window intended to preserve systemic solvency.

- Incremental State Updates ensure that only the net change in a user’s position is broadcast to the network, preventing redundant data propagation.

- Proactive Liquidity Management leverages automated agents that operate within the zero-cost environment to rebalance positions before liquidation thresholds are reached.
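The proactive rebalancing idea can be sketched as a check that tops up collateral before the margin ratio reaches the liquidation threshold. The `Position` type, the safety buffer, and the ratios are hypothetical; real protocols define margin in their own terms.

```python
from dataclasses import dataclass


@dataclass
class Position:
    collateral: float  # posted collateral, in quote currency (assumed unit)
    notional: float    # position notional, in quote currency (assumed unit)

    @property
    def margin_ratio(self) -> float:
        if self.notional <= 0:
            raise ValueError("notional must be positive")
        return self.collateral / self.notional


def proactive_top_up(pos: Position, liquidation_ratio: float, buffer: float) -> float:
    """Collateral to add so the margin ratio reaches liquidation_ratio + buffer.

    Returns 0.0 when the position already sits at or above that target.
    """
    target = liquidation_ratio + buffer
    if pos.margin_ratio >= target:
        return 0.0
    return target * pos.notional - pos.collateral


if __name__ == "__main__":
    at_risk = Position(collateral=150.0, notional=1000.0)
    print(proactive_top_up(at_risk, liquidation_ratio=0.125, buffer=0.125))
```

An agent running this check on every price update is only practical when each check and adjustment is nearly free, which is the premise of the zero-cost environment.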

This approach minimizes the systemic footprint of individual trades. By treating the main chain as a high-value settlement layer rather than an execution engine, protocols can support thousands of concurrent derivative positions without compromising the security of the underlying collateral.

Evolution

The transition from early, monolithic protocols to current modular designs highlights a maturing understanding of financial system physics. Early systems struggled with the “gas-tax” on volatility, where the cost of managing an option portfolio often exceeded the potential profit from the strategy.

The evolution of derivative infrastructure demonstrates a clear trajectory from high-friction, monolithic validation toward low-cost, modular execution.

As these systems evolved, the focus shifted toward composability. Developers realized that isolated, zero-cost environments were insufficient if they could not interact with the broader liquidity pool. The current iteration involves bridging these high-performance islands, allowing collateral to flow seamlessly between disparate protocols while maintaining the efficiency gains of the zero-cost execution layer.

Horizon

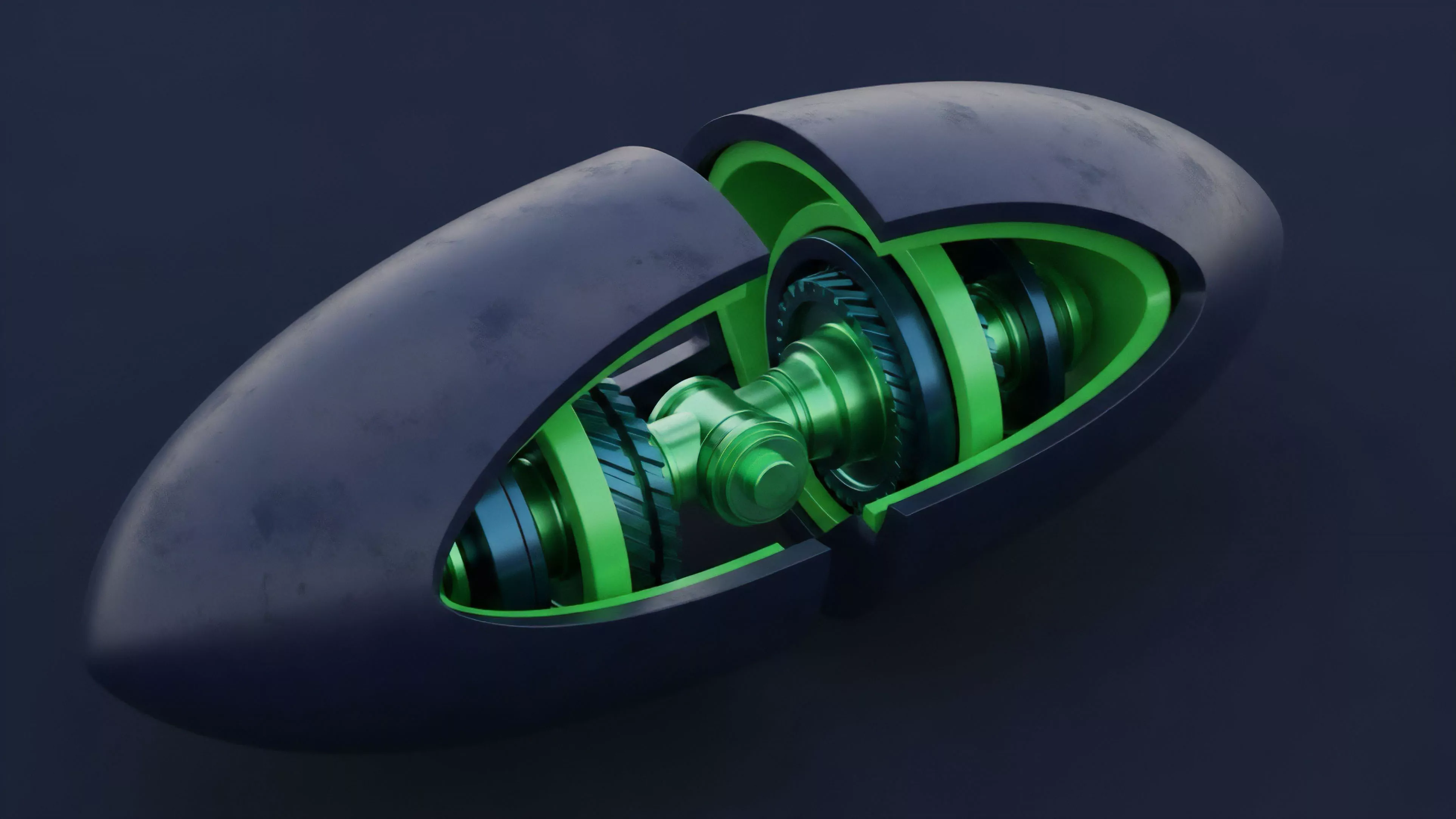

Future developments will likely focus on the integration of hardware-level acceleration and more advanced cryptographic primitives.

As zero-knowledge technology matures, the ability to perform private, zero-cost computations on sensitive financial data will redefine the boundaries of competitive advantage.

- Hardware Acceleration will enable the processing of complex exotic option pricing models in real time.

- Autonomous Market Making will see agents managing portfolios with zero human intervention, driven by decentralized oracle networks.

- Cross-Protocol Liquidity will become the primary metric of success, as zero-cost environments compete for the largest share of collateral.

The trajectory leads toward a fully autonomous financial system where the cost of complex risk management is no longer a factor in portfolio construction. The remaining challenge involves managing the systemic risks that emerge when automated agents operate at these speeds without human oversight.