Essence

Volatility Signal Processing is the extraction of latent information from derivative pricing data in order to anticipate future market regimes. It transforms raw, noisy option chain data into actionable indicators, providing a structural view of market expectations regarding future price dispersion.

Volatility Signal Processing converts disorganized option market data into structured indicators of expected future price dispersion.

This practice identifies shifts in risk sentiment by decomposing the term structure and the skew of implied volatility. It moves beyond standard historical measures, treating the surface of option prices as a real-time laboratory for gauging institutional positioning and systemic stress. The objective remains the isolation of genuine directional signals from liquidity-driven noise within decentralized exchanges.

Origin

The lineage of Volatility Signal Processing traces back to the development of the Black-Scholes-Merton model, which formalized the relationship between asset price variance and option value.

Early practitioners in traditional finance utilized these foundations to arbitrage discrepancies in implied versus realized volatility.

- Black-Scholes: Provided the mathematical bedrock for pricing options under a constant-volatility assumption, from which implied volatility is backed out.

- Volatility Smile: Revealed the market tendency to price out-of-the-money options at higher implied volatilities than the model's constant-volatility assumption predicted.

- Decentralized Liquidity: Enabled the transition of these quantitative methods into automated, on-chain execution environments.

Digital asset markets adopted these frameworks to manage the extreme variance inherent in crypto-native assets. The shift from centralized order books to automated market makers forced a refinement of signal extraction, focusing on the specific mechanics of liquidity provision and margin maintenance.

Theory

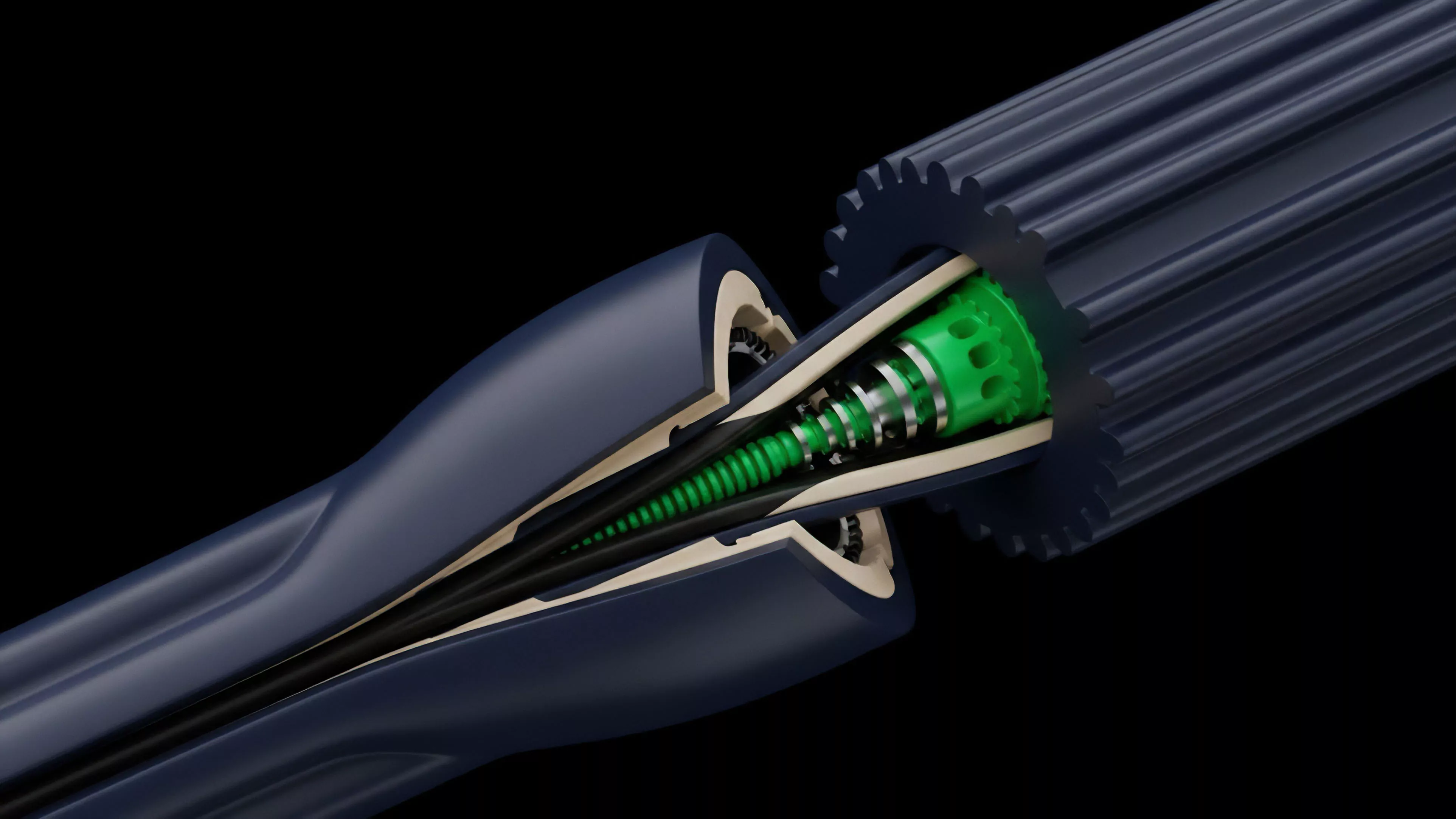

The theoretical framework rests on the interpretation of the Volatility Surface. By mapping implied volatility across different strikes and maturities, analysts construct a three-dimensional representation of market fear and greed.

This surface acts as a predictive mechanism for price behavior, where changes in the curvature signal shifts in underlying participant conviction.

The volatility surface serves as a three-dimensional map of market sentiment, where structural changes reveal shifts in participant conviction.
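
As a minimal sketch of how such a surface can be assembled, the snippet below inverts the Black-Scholes call formula by bisection for each quoted strike and maturity. It assumes European calls, a flat interest rate, and no dividends or funding costs; the function names (`bs_call`, `implied_vol`, `build_surface`) and the quote layout are illustrative choices, not part of any particular protocol or library.

```python
import math

def norm_cdf(x: float) -> float:
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))

def bs_call(spot, strike, t, rate, vol):
    """Black-Scholes price of a European call (no dividends, t in years)."""
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol * vol) * t) / (vol * math.sqrt(t))
    d2 = d1 - vol * math.sqrt(t)
    return spot * norm_cdf(d1) - strike * math.exp(-rate * t) * norm_cdf(d2)

def implied_vol(price, spot, strike, t, rate, lo=1e-4, hi=5.0, tol=1e-8):
    """Invert bs_call by bisection; returns None if the price is out of range."""
    # The call price is increasing in vol, so the root is bracketed or absent.
    if not (bs_call(spot, strike, t, rate, lo) <= price <= bs_call(spot, strike, t, rate, hi)):
        return None
    for _ in range(200):
        mid = 0.5 * (lo + hi)
        if bs_call(spot, strike, t, rate, mid) < price:
            lo = mid
        else:
            hi = mid
        if hi - lo < tol:
            break
    return 0.5 * (lo + hi)

def build_surface(quotes, spot, rate):
    """Map (strike, maturity) -> implied vol for (strike, maturity, call_price) quotes."""
    surface = {}
    for strike, t, price in quotes:
        iv = implied_vol(price, spot, strike, t, rate)
        if iv is not None:  # skip quotes that violate no-arbitrage bounds
            surface[(strike, t)] = iv
    return surface
```

Quotes priced below intrinsic value, or above what the upper volatility bound can reach, are dropped rather than forced onto the surface, which keeps obviously broken prices from distorting its shape.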

The mathematics of the Greeks, specifically Vega and Vanna, governs the sensitivity of these signals. Traders analyze the rate of change in option premiums relative to shifts in volatility to gauge the stability of market liquidity.
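
For concreteness, here is a small sketch of the two sensitivities under the standard Black-Scholes assumptions (European call, flat rate, no dividends). Vega is the derivative of the option price with respect to volatility, and Vanna is the derivative of Delta with respect to volatility. The helper names are illustrative and not drawn from any specific library.

```python
import math

def norm_pdf(x: float) -> float:
    """Standard normal density."""
    return math.exp(-0.5 * x * x) / math.sqrt(2.0 * math.pi)

def norm_cdf(x: float) -> float:
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))

def d1_d2(spot, strike, t, rate, vol):
    """Black-Scholes d1 and d2 terms (t in years, vol annualized)."""
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol * vol) * t) / (vol * math.sqrt(t))
    return d1, d1 - vol * math.sqrt(t)

def call_price(spot, strike, t, rate, vol):
    d1, d2 = d1_d2(spot, strike, t, rate, vol)
    return spot * norm_cdf(d1) - strike * math.exp(-rate * t) * norm_cdf(d2)

def call_delta(spot, strike, t, rate, vol):
    d1, _ = d1_d2(spot, strike, t, rate, vol)
    return norm_cdf(d1)

def vega(spot, strike, t, rate, vol):
    """Change in option price per unit change in volatility."""
    d1, _ = d1_d2(spot, strike, t, rate, vol)
    return spot * norm_pdf(d1) * math.sqrt(t)

def vanna(spot, strike, t, rate, vol):
    """Change in Delta per unit change in volatility."""
    d1, d2 = d1_d2(spot, strike, t, rate, vol)
    return -norm_pdf(d1) * d2 / vol
```

A finite-difference comparison against `call_price` and `call_delta` is an easy way to sanity-check closed-form Greeks like these before relying on them.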

| Metric | Functional Utility |
| --- | --- |
| Implied Skew | Quantifies the market premium for tail-risk protection |
| Term Structure | Signals expected variance across different time horizons |
| Vanna | Measures sensitivity of Delta to volatility changes |
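
The first two metrics in the table can be illustrated with a rough sketch. It assumes a smile given as a strike-to-implied-volatility mapping and measures skew as the gap between the 90% and 110% moneyness points; that convention is an assumption made here for simplicity (desks often quote a 25-delta risk reversal instead). All function names are hypothetical.

```python
def interp_iv(smile, strike):
    """Linearly interpolate implied vol at `strike`; clamps outside the quoted range."""
    pts = sorted(smile.items())
    if strike <= pts[0][0]:
        return pts[0][1]
    if strike >= pts[-1][0]:
        return pts[-1][1]
    for (k0, v0), (k1, v1) in zip(pts, pts[1:]):
        if k0 <= strike <= k1:
            w = (strike - k0) / (k1 - k0)
            return v0 + w * (v1 - v0)

def moneyness_skew(smile, spot, width=0.10):
    """Downside vol minus upside vol; positive values mean puts carry a premium."""
    return interp_iv(smile, spot * (1 - width)) - interp_iv(smile, spot * (1 + width))

def term_slope(atm_vol_by_maturity):
    """Vol change per year between the shortest and longest quoted maturities."""
    pts = sorted(atm_vol_by_maturity.items())
    (t0, v0), (t1, v1) = pts[0], pts[-1]
    return (v1 - v0) / (t1 - t0)
```

Under these conventions, a rising skew suggests growing demand for downside protection, and a negative term slope (short-dated volatility above long-dated) is commonly read as a sign of near-term stress.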

The adversarial nature of these markets means that any detectable signal tends to be arbitraged away quickly. Consequently, signal processing must account for the feedback loop created by automated delta-hedging strategies, which can amplify existing trends during periods of high market stress.

Approach

Modern practitioners utilize high-frequency data feeds from decentralized exchanges to construct real-time Volatility Surface models. This involves filtering out stale quotes and accounting for the specific impact of protocol-level liquidation mechanics on option pricing.

- Data Aggregation: Collecting granular order flow from decentralized option vaults and order-book protocols.

- Model Calibration: Adjusting standard pricing models to reflect the non-normal distribution of digital asset returns.

- Signal Extraction: Applying quantitative filters to isolate structural volatility changes from transient liquidity fluctuations.

Modern signal extraction relies on filtering noisy on-chain order flow to isolate genuine structural volatility shifts.
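
One simple way to picture the filtering steps is sketched below: quotes are discarded when they are too old or too wide, and a rolling median suppresses isolated spikes in an implied volatility series so that only persistent level changes are flagged. The quote fields (`ts`, `bid`, `ask`), the thresholds, and the choice of a median filter are all assumptions for illustration; production systems may use quite different filters.

```python
from statistics import median

def drop_stale(quotes, now, max_age_s=30.0, max_rel_spread=0.25):
    """Keep quotes that are recent and have a reasonably tight bid-ask spread."""
    fresh = []
    for q in quotes:
        mid = 0.5 * (q["bid"] + q["ask"])
        if mid <= 0:
            continue  # unusable price
        if now - q["ts"] > max_age_s:
            continue  # stale quote
        if (q["ask"] - q["bid"]) / mid > max_rel_spread:
            continue  # spread too wide to trust the mid
        fresh.append(q)
    return fresh

def rolling_median(series, window=5):
    """Trailing median; a single outlier in a full window does not move the output."""
    out = []
    for i in range(len(series)):
        start = max(0, i - window + 1)
        out.append(median(series[start:i + 1]))
    return out

def structural_shifts(series, window=5, threshold=0.05):
    """Indices where the median-filtered level jumps by more than `threshold`."""
    filtered = rolling_median(series, window)
    return [i for i in range(1, len(filtered))
            if abs(filtered[i] - filtered[i - 1]) > threshold]
```

In this sketch a one-tick spike in implied volatility leaves the filtered series unchanged, while a sustained move is flagged once it occupies the majority of the window, which trades a few observations of delay for robustness to transient noise.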

The process demands a rigorous approach to Systems Risk. By monitoring the concentration of open interest and the proximity of liquidation thresholds, the signal processor assesses the likelihood of a cascade. This is not merely about predicting price; it is about quantifying the fragility of the underlying liquidity structure.
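
The two quantities mentioned here can be approximated in a few lines. The sketch below uses a Herfindahl-Hirschman index to measure how concentrated open interest is across strikes, and the share of notional whose liquidation price sits within a chosen distance of spot as a proxy for cascade risk. The position fields (`notional`, `liq_price`), the 10% shock band, and the function names are hypothetical choices, not a standard.

```python
def oi_concentration(open_interest_by_strike):
    """Herfindahl-Hirschman index of open interest shares.

    Ranges from 1/n (evenly spread over n strikes) to 1.0 (all in one strike).
    """
    total = sum(open_interest_by_strike.values())
    if total <= 0:
        return 0.0
    return sum((oi / total) ** 2 for oi in open_interest_by_strike.values())

def notional_near_liquidation(positions, spot, shock=0.10):
    """Fraction of notional whose liquidation price is within `shock` of spot."""
    total = sum(p["notional"] for p in positions)
    if total <= 0:
        return 0.0
    near = sum(p["notional"] for p in positions
               if abs(p["liq_price"] - spot) / spot <= shock)
    return near / total
```

High values on both measures at once would indicate that a modest price move could trigger liquidations concentrated in a narrow band, which is the fragility the paragraph above describes.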

Evolution

The transition from static, model-based pricing to dynamic, protocol-integrated systems marks the current state of the domain.

Earlier cycles relied on off-chain calculations applied to centralized exchanges, often resulting in significant latency between signal generation and execution. Current architectures integrate Volatility Signal Processing directly into the smart contract logic of derivatives protocols. This allows for automated adjustments to margin requirements and collateralization ratios based on real-time volatility signals.
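
To make the idea of volatility-driven margin adjustment concrete, here is a hedged sketch of the kind of rule a protocol might encode. It scales a base margin requirement linearly with the ratio of current to reference implied volatility and clamps the result between a floor and a cap. Values are expressed in integer basis points because on-chain code typically avoids floating point; every parameter value and the linear scaling rule itself are illustrative assumptions, not taken from any specific protocol.

```python
def margin_bps(vol_bps, base_margin_bps=1_000, reference_vol_bps=6_000,
               floor_bps=500, cap_bps=5_000):
    """Margin requirement in basis points, scaled by current implied volatility.

    vol_bps: current annualized implied volatility in basis points (6_000 = 60%).
    Uses integer arithmetic only, rounding down as integer division does.
    """
    scaled = base_margin_bps * vol_bps // reference_vol_bps
    # Clamp so that margin never vanishes in calm markets or explodes in stress.
    return max(floor_bps, min(cap_bps, scaled))
```

With these defaults, volatility at the 60% reference level yields a 10% margin requirement, and a doubling of volatility doubles the requirement until the cap binds.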

| Development Stage | Primary Characteristic |
| --- | --- |
| Foundational | Manual analysis of centralized exchange data |
| Intermediate | Automated models using off-chain computation |
| Advanced | On-chain, protocol-native volatility risk management |

The evolution continues toward decentralized oracles that provide tamper-resistant volatility data. This technical shift reduces reliance on centralized entities and makes derivative markets more robust against manipulation.

Horizon

The future of Volatility Signal Processing lies in the integration of machine learning agents capable of identifying complex, non-linear patterns in order flow. These agents will operate within decentralized autonomous organizations, dynamically adjusting risk parameters to optimize for capital efficiency and market stability.

Future systems will employ autonomous agents to process complex volatility signals and adjust protocol risk parameters in real-time.

The convergence of Protocol Physics and Quantitative Finance will lead to the creation of self-healing derivative markets. These systems will autonomously rebalance liquidity pools in response to volatility spikes, effectively dampening the impact of exogenous shocks. The ultimate goal is the construction of a financial infrastructure that is inherently resilient to the volatility it seeks to trade.