Essence

Volatility measurement techniques are the primary diagnostic tools for quantifying the dispersion of returns in digital asset markets. They convert raw price action into risk parameters that market participants use to calibrate exposure to market uncertainty. Implied Volatility and Realized Volatility form the foundational pair of this domain: the first is forward-looking and inferred from option prices, the second is backward-looking and computed from observed returns.

Volatility measurement techniques translate raw market uncertainty into precise risk metrics essential for derivative pricing and portfolio hedging.

The systemic relevance of these metrics extends to margin engine stability and liquidity provision. Protocols rely on accurate volatility inputs to set liquidation thresholds, which protects the solvency of decentralized lending and trading venues under extreme market stress. Without robust measurement, automated systems cannot adjust risk parameters quickly enough during rapid regime shifts, and the resulting under-collateralized positions can trigger cascading liquidations.

Origin

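The genesis of modern volatility analysis traces back to the Black-Scholes-Merton framework, in which volatility is the one pricing input that cannot be observed directly. Implied Volatility is the value of that input at which the model price matches the observed market price of an option.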

Early crypto derivatives markets inherited these classical models, initially treating digital assets as high-beta equities. However, the unique market microstructure of decentralized exchanges necessitated an evolution beyond traditional Gaussian assumptions.

- Black-Scholes Foundation provided the initial mathematical scaffolding for treating volatility as a forward-looking variable.

- GARCH Models emerged to address the observed clustering of volatility in financial time series data.

- Decentralized Liquidity Pools moved price formation from centralized order books to automated market maker mechanics.

Early participants quickly realized that crypto asset returns exhibit fat-tailed distributions, which makes Gaussian models insufficient for tail-risk management. This forced the industry to adapt quantitative techniques to account for protocol-specific risks, such as governance attacks or smart contract exploits, which manifest as sudden, non-linear volatility spikes.

Theory

The theoretical structure of volatility measurement hinges on the distinction between forward-looking expectations and historical performance. Realized Volatility is the standard deviation of price returns over a fixed interval, usually annualized, and provides a retrospective view of market behavior.
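As a minimal sketch of the realized measure, the function below computes the annualized sample standard deviation of log returns. The function name, the made-up prices, and the choice of 365 periods per year (crypto markets trade every day) are assumptions for illustration, not conventions stated in this article.

```python
import math

def realized_volatility(prices, periods_per_year=365):
    """Annualized realized volatility from evenly spaced prices."""
    if len(prices) < 3:
        raise ValueError("need at least three prices (two returns)")
    # Log returns between consecutive observations.
    returns = [math.log(b / a) for a, b in zip(prices, prices[1:])]
    mean = sum(returns) / len(returns)
    # Sample variance of returns (n - 1 in the denominator).
    variance = sum((r - mean) ** 2 for r in returns) / (len(returns) - 1)
    # Variance scales linearly with time, so volatility scales with its square root.
    return math.sqrt(variance * periods_per_year)

# Hypothetical daily closes, for illustration only.
daily_closes = [100.0, 102.0, 99.5, 101.0, 104.0, 103.0]
rv = realized_volatility(daily_closes)
```

The result depends heavily on the sampling interval and window length, so two desks quoting "realized volatility" for the same asset can legitimately report different numbers.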

Implied Volatility, conversely, represents the market consensus on future price dispersion, derived from the pricing of tradable derivatives.
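One way to make "derived from the pricing of tradable derivatives" concrete is to invert a pricing model numerically. The sketch below assumes a European call priced with Black-Scholes, no dividends or funding adjustments, and a simple bisection search; the function names and search bounds are illustrative choices rather than a reference implementation.

```python
import math

def norm_cdf(x):
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))

def bs_call_price(spot, strike, t, rate, vol):
    """Black-Scholes price of a European call (t in years)."""
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol * vol) * t) / (vol * math.sqrt(t))
    d2 = d1 - vol * math.sqrt(t)
    return spot * norm_cdf(d1) - strike * math.exp(-rate * t) * norm_cdf(d2)

def implied_vol(price, spot, strike, t, rate, lo=1e-4, hi=10.0, tol=1e-8):
    """Volatility at which the model price matches the observed price."""
    low_price = bs_call_price(spot, strike, t, rate, lo)
    high_price = bs_call_price(spot, strike, t, rate, hi)
    if not (low_price <= price <= high_price):
        raise ValueError("price is outside the range the model can produce")
    # The call price increases with volatility, so bisection converges.
    while hi - lo > tol:
        mid = 0.5 * (lo + hi)
        if bs_call_price(spot, strike, t, rate, mid) < price:
            lo = mid
        else:
            hi = mid
    return 0.5 * (lo + hi)
```

A round trip is a quick sanity check: pricing a call at 80% volatility and feeding that price back into `implied_vol` should recover a value very close to 0.8.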

Implied volatility reflects the market's expectation of future price movement, while realized volatility measures the dispersion actually observed in historical returns.

The Volatility Surface serves as the primary visual and mathematical representation of this theory. It maps implied volatility across strikes and maturities, revealing the Volatility Skew and Smile. A smile indicates that the market prices in fatter tails than the lognormal distribution assumed by classical models. A skew indicates that one tail, often the downside, is priced more richly than the other.
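Skew and smile are often summarized with two numbers per maturity: the risk reversal (call wing minus put wing) and the butterfly (average of the wings minus at-the-money). The sketch below uses these common market conventions with hypothetical 25-delta quotes; the numbers are made up for illustration.

```python
def risk_reversal(call_iv, put_iv):
    """Skew measure: negative when downside puts are priced more richly."""
    return call_iv - put_iv

def butterfly(call_iv, put_iv, atm_iv):
    """Smile measure: positive when both wings trade above at-the-money."""
    return 0.5 * (call_iv + put_iv) - atm_iv

# Hypothetical 25-delta and at-the-money implied volatilities for one expiry.
call_25d, put_25d, atm = 0.70, 0.78, 0.65
skew = risk_reversal(call_25d, put_25d)
smile = butterfly(call_25d, put_25d, atm)
```

With these inputs the risk reversal is negative and the butterfly is positive, which corresponds to a downside skew sitting on top of a smile.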

| Metric | Theoretical Basis | Application |
| --- | --- | --- |
| Realized Volatility | Standard Deviation of Returns | Risk Assessment |
| Implied Volatility | Option Pricing Models | Market Sentiment |
| GARCH | Generalized Autoregressive Conditional Heteroskedasticity | Volatility Forecasting |
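To illustrate the GARCH entry in the table, the sketch below runs the GARCH(1,1) variance recursion, in which today's conditional variance depends on yesterday's squared return and yesterday's variance. The parameter values would normally be fitted by maximum likelihood; here they are passed in by the caller, and the function name is a hypothetical one chosen for this example.

```python
def garch_variance_path(returns, omega, alpha, beta, initial_variance):
    """Conditional variances under GARCH(1,1).

    Returns len(returns) + 1 values: the initial variance followed by the
    variance implied after each return. The last value is the one-step-ahead
    forecast.
    """
    if omega <= 0 or alpha < 0 or beta < 0 or alpha + beta >= 1:
        raise ValueError("need omega > 0, alpha, beta >= 0 and alpha + beta < 1")
    variances = [initial_variance]
    for r in returns:
        # sigma^2_t = omega + alpha * r_{t-1}^2 + beta * sigma^2_{t-1}
        variances.append(omega + alpha * r * r + beta * variances[-1])
    return variances
```

Because a large squared return feeds into the next period's variance and then decays at rate `beta`, the recursion reproduces volatility clustering: turbulent periods tend to follow turbulent periods.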

The intersection of these theories with Behavioral Game Theory suggests that volatility is not merely a statistical property but an emergent outcome of strategic interaction. Participants anticipate liquidation events and position ahead of potential cascades, and that positioning in turn compresses or expands the observed volatility surface.

Approach

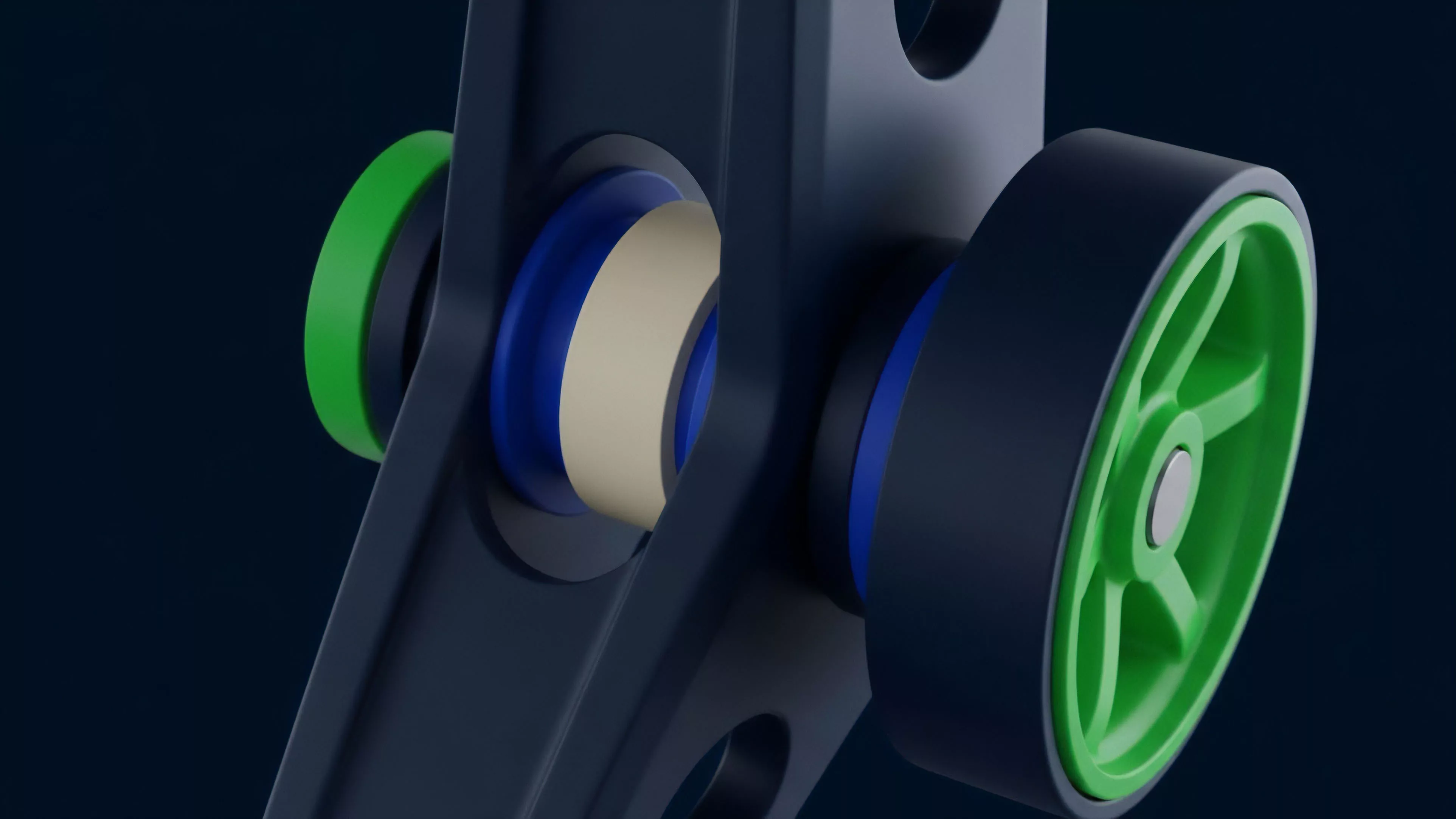

Current practitioners utilize high-frequency data feeds and sophisticated Greeks management to navigate decentralized markets. The approach focuses on Delta Neutral strategies, where volatility is traded as an independent asset class rather than a byproduct of directional speculation.

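Traders monitor Vanna and Volga, two second-order sensitivities. Vanna measures how an option's delta changes as implied volatility moves (equivalently, how vega changes as the underlying price moves). Volga, also called vomma, measures how vega itself changes as implied volatility moves. Together they drive the cost of hedging skew and smile exposure.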
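For reference, both sensitivities have closed forms under Black-Scholes. The sketch below assumes a European option with no dividends; the function names are illustrative, and `bs_call_price` is included only so the formulas can be checked against finite differences.

```python
import math

def norm_pdf(x):
    return math.exp(-0.5 * x * x) / math.sqrt(2.0 * math.pi)

def norm_cdf(x):
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))

def d1_d2(spot, strike, t, rate, vol):
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol * vol) * t) / (vol * math.sqrt(t))
    return d1, d1 - vol * math.sqrt(t)

def bs_call_price(spot, strike, t, rate, vol):
    d1, d2 = d1_d2(spot, strike, t, rate, vol)
    return spot * norm_cdf(d1) - strike * math.exp(-rate * t) * norm_cdf(d2)

def vega(spot, strike, t, rate, vol):
    """First derivative of price with respect to volatility."""
    d1, _ = d1_d2(spot, strike, t, rate, vol)
    return spot * norm_pdf(d1) * math.sqrt(t)

def vanna(spot, strike, t, rate, vol):
    """Mixed derivative of price with respect to spot and volatility."""
    d1, d2 = d1_d2(spot, strike, t, rate, vol)
    return -norm_pdf(d1) * d2 / vol

def volga(spot, strike, t, rate, vol):
    """Second derivative of price with respect to volatility."""
    d1, d2 = d1_d2(spot, strike, t, rate, vol)
    return vega(spot, strike, t, rate, vol) * d1 * d2 / vol
```

Vega, vanna, and volga are the same for calls and puts with identical strike and expiry, so the call price is sufficient for a numerical cross-check.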

Trading volatility requires managing sensitivity parameters known as the Greeks to keep a portfolio stable across varying market regimes.

Advanced protocols now incorporate On-Chain Volatility Oracles, which aggregate decentralized exchange data to produce tamper-resistant volatility inputs. Aggregating across venues reduces the risk of oracle manipulation, a critical vulnerability in earlier derivative architectures.

- Delta Hedging requires continuous adjustment of underlying asset positions to maintain neutral exposure.

- Skew Trading involves betting on the relative pricing differences between out-of-the-money puts and calls.

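- Variance Swaps allow direct exposure to the difference between the variance realized over the life of the contract and a strike fixed at inception, which is typically set from implied volatility.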
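The variance swap in the last item has a simple payoff that can be sketched directly. The example below assumes a common convention in which realized variance is the annualized average of squared log returns with the mean taken as zero; the function names, the 365-day annualization, and the notional are hypothetical.

```python
import math

def realized_variance(prices, periods_per_year=365):
    """Annualized realized variance using zero-mean squared log returns."""
    returns = [math.log(b / a) for a, b in zip(prices, prices[1:])]
    return periods_per_year * sum(r * r for r in returns) / len(returns)

def variance_swap_payoff(prices, strike_vol, variance_notional, periods_per_year=365):
    """Payoff to the long side: notional * (realized variance - strike variance)."""
    realized = realized_variance(prices, periods_per_year)
    return variance_notional * (realized - strike_vol ** 2)
```

The long side profits when the market turns out more volatile than the strike implied, and loses the full strike variance times the notional if prices do not move at all.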

This technical rigor is essential because crypto markets operate in an adversarial environment. Automated agents constantly probe liquidation engines for weaknesses. The current approach demands that developers and traders view the protocol as a living system under perpetual stress.

Evolution

Volatility measurement has shifted from static, model-based calculations to dynamic, adaptive frameworks.

The early reliance on simple historical averages proved inadequate during liquidity crises, where correlation breakdown became the norm. Consequently, the industry adopted Stochastic Volatility models that treat volatility itself as a random variable, better capturing the rapid transitions between regimes. The evolution of these techniques mirrors the maturation of the underlying infrastructure.

As cross-margin capabilities and sophisticated vault strategies increased in prevalence, the demand for more granular volatility data accelerated. We now see the integration of Machine Learning models designed to predict volatility regimes based on network activity, mempool congestion, and social sentiment indicators.

Modern volatility frameworks treat volatility as a dynamic stochastic process rather than a static parameter to improve risk mitigation.

This progress has not been linear. Every cycle brings new forms of systemic risk, forcing a redesign of how volatility is perceived. The shift from centralized exchange reliance to DeFi protocols has necessitated the creation of decentralized, trustless measurement standards that operate independently of any single entity.

Horizon

The future of volatility measurement lies in the convergence of Protocol Physics and Quantitative Finance.

We anticipate the rise of Algorithmic Volatility Control, where smart contracts autonomously adjust collateral requirements based on real-time volatility surface analysis. This could create self-stabilizing financial systems capable of enduring shocks that would currently force mass liquidations on existing platforms.
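A rough sketch of the idea, written off-chain in Python for readability: a volatility estimate is updated with each new return, and the required collateral ratio is widened so that it can absorb a multi-sigma move over the liquidation horizon. Every name, the EWMA decay of 0.94, the 3-sigma buffer, and the floor and cap are hypothetical choices for illustration and do not describe any existing protocol.

```python
import math

def ewma_variance(prev_variance, ret, decay=0.94):
    """Exponentially weighted update of a per-period variance estimate."""
    return decay * prev_variance + (1.0 - decay) * ret * ret

def collateral_ratio(annual_vol, horizon_days=1.0, z=3.0, floor=1.1, cap=3.0):
    """Required collateral as a multiple of debt, scaled by volatility."""
    # Scale annual volatility down to the assumed liquidation horizon.
    horizon_vol = annual_vol * math.sqrt(horizon_days / 365.0)
    # Buffer sized for a z-sigma adverse move, clamped to the configured range.
    ratio = 1.0 + z * horizon_vol
    return max(floor, min(cap, ratio))
```

The floor keeps requirements from collapsing in calm markets, and the cap prevents a single noisy estimate from pushing requirements to unusable levels. A production design would also need to handle oracle latency and the feedback loop between higher collateral demands and forced selling.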

Future derivative protocols will utilize autonomous volatility control systems to dynamically adjust risk parameters without human intervention.

Increased focus on Macro-Crypto Correlation will lead to the development of cross-asset volatility indices, linking digital asset risk to traditional financial market indicators. The next generation of tools will prioritize Composability, allowing volatility metrics to be plugged into any DeFi protocol to enhance capital efficiency. Ultimately, the goal is to build a robust financial operating system where risk is transparently priced, efficiently distributed, and algorithmically managed.