Essence

A Value at Risk Security transforms probabilistic financial loss into a tradeable, liquid instrument. This mechanism permits market participants to isolate and transfer the specific tail risk associated with a portfolio or asset without liquidating the underlying positions. Using on-chain primitives, the instrument quantifies the loss that is not expected to be exceeded over a defined time interval at a specific confidence level (typically 95% or 99%) and encapsulates this metric within a smart contract.

The primary function of Value at Risk Security lies in its ability to provide a deterministic price for uncertainty. In decentralized finance, where volatility remains a constant, the capacity to hedge against extreme market movements through a standardized security enables more sophisticated capital allocation. This shifts the burden of risk from those seeking stability to those with the mathematical capacity and capital depth to absorb it.

Risk quantification enables the separation of asset exposure from systemic volatility.

Unlike traditional insurance, which often relies on discretionary claims processes, Value at Risk Security operates via automated execution. When the calculated risk metrics exceed predefined thresholds, the security triggers a rebalancing or a payout, ensuring that the solvency of the interconnected protocols remains intact. This transparency is vital for maintaining trust in permissionless environments where counterparty creditworthiness cannot be assessed through conventional means.

Origin

The lineage of Value at Risk Security traces back to the 1994 RiskMetrics whitepaper by J.P. Morgan, which sought to standardize risk reporting across global trading desks.

This methodology provided a single, aggregate number that summarized the total risk of a firm. As digital asset markets matured, the limitations of static risk models became apparent, leading to the integration of these quantitative frameworks into the Ethereum Virtual Machine and other programmable blockchains. The necessity for such instruments arose during the early liquidity crises in decentralized lending protocols.

Market participants realized that liquidation engines alone were insufficient to prevent bad debt during “black swan” events. The development of Value at Risk Security provided a proactive layer of defense, allowing protocols to price the probability of failure into their interest rate models and collateral requirements.

Deterministic code replaces discretionary oversight in the calculation of liquidation thresholds.

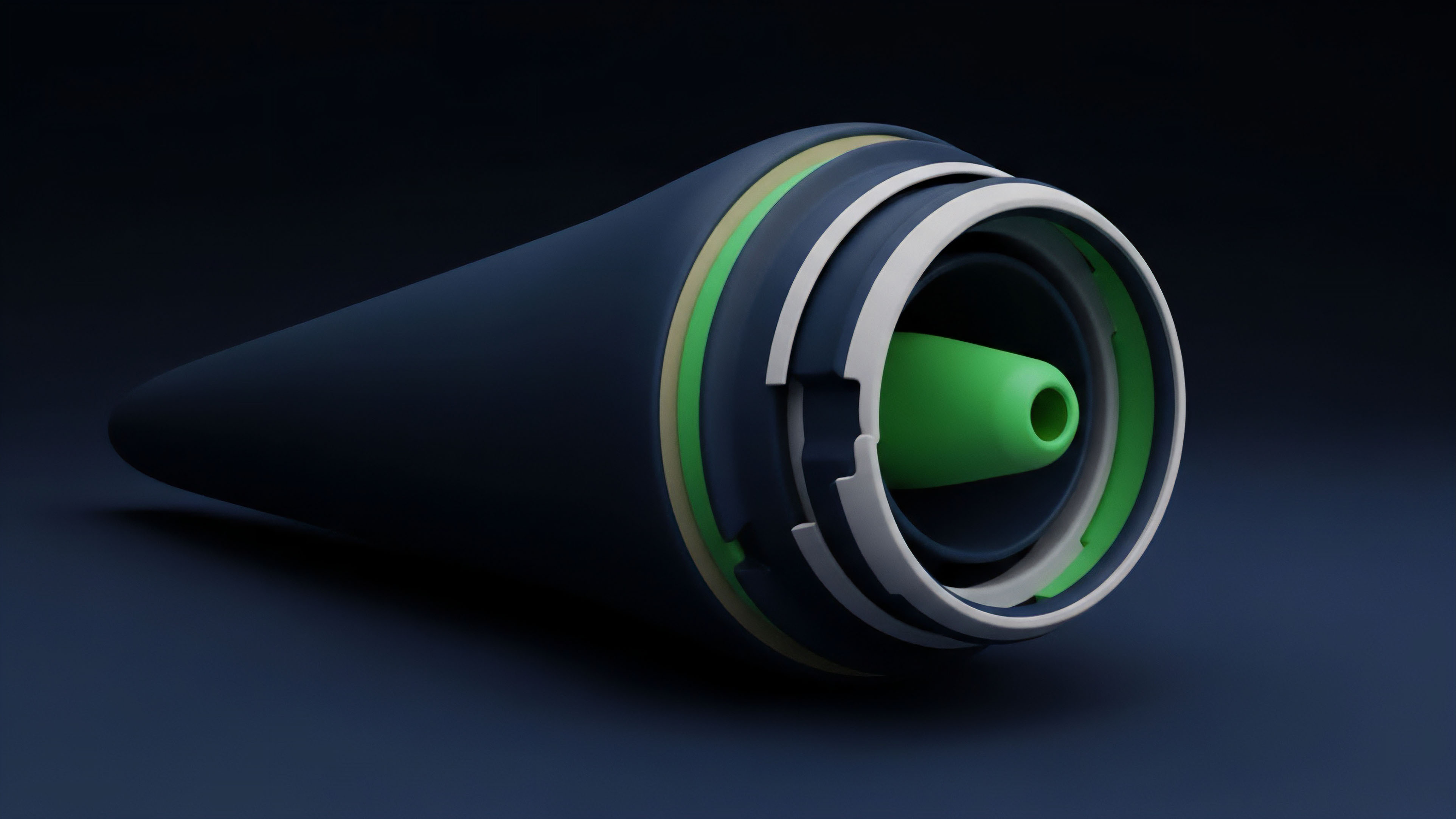

By porting the variance-covariance and Monte Carlo methodologies into smart contracts, developers created a new class of “risk-aware” assets. These securities do not rely on centralized reporting; instead, they ingest real-time price feeds from decentralized oracles to continuously recalculate the risk profile of the network. This evolution represents a shift from reactive risk management to an anticipatory, algorithmic architecture.

Theory

The mathematical architecture of Value at Risk Security is built upon the distribution of returns and the identification of the “left tail” where extreme losses reside.

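The model assumes that asset returns follow a specific probability distribution, often a Student's t or a jump-diffusion model, to better account for the high kurtosis observed in crypto markets. The security calculates the potential loss as a quantile of that distribution: the probability density function is integrated from the left tail until the cumulative probability reaches one minus the confidence level (for example, 1% for a 99% VaR), and the return at that point marks the loss threshold.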
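The quantile definition can be written compactly. The notation below is the standard textbook form rather than anything specified by the article: $L$ is the loss over the horizon (the negative of the return), $\alpha$ is the confidence level, and $F_L$ and $f_L$ are the assumed cumulative distribution and density of $L$.

```latex
\mathrm{VaR}_{\alpha}(L) \;=\; \inf\{\, \ell \in \mathbb{R} : F_L(\ell) \ge \alpha \,\},
\qquad F_L(\ell) = \Pr(L \le \ell)

% For a continuous loss distribution this is equivalent to
\int_{-\infty}^{\mathrm{VaR}_{\alpha}(L)} f_L(x)\,\mathrm{d}x \;=\; \alpha
```

With $\alpha = 0.99$, losses larger than $\mathrm{VaR}_{0.99}$ are expected in roughly 1% of periods.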

Methodological Frameworks

Three primary methodologies govern the valuation of Value at Risk Security, each offering distinct trade-offs in computational intensity and accuracy.

| Methodology | Mathematical Basis | Computational Cost | Tail Sensitivity |
|---|---|---|---|
| Parametric | Variance-Covariance matrix | Low | Limited |
| Historical | Past price distributions | Medium | Moderate |
| Monte Carlo | Stochastic simulations | High | High |

The Monte Carlo method is particularly favored for Value at Risk Security because it can simulate thousands of potential market paths, including path-dependent risks like flash loan attacks or oracle manipulation. This stochastic approach allows the security to reflect the sensitivities known as the “Greeks” (Delta, Gamma, and Vega), so that the instrument remains responsive to changes in both price and volatility.
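To make the comparison concrete, here is a minimal single-asset sketch of the three methodologies in Python. It is illustrative only: the function names, the normal-distribution assumption in the parametric and Monte Carlo variants, and the sample parameters are assumptions, not part of any specific protocol.

```python
import random
from statistics import NormalDist, mean, stdev


def historical_var(returns, confidence=0.99):
    """Loss at the empirical (1 - confidence) quantile of observed returns."""
    ordered = sorted(returns)                      # worst return first
    index = int((1.0 - confidence) * len(ordered))
    return -ordered[index]                         # report loss as a positive number


def parametric_var(returns, confidence=0.99):
    """Variance-covariance VaR for one asset, assuming normally distributed returns."""
    mu, sigma = mean(returns), stdev(returns)
    z = NormalDist().inv_cdf(1.0 - confidence)     # negative for confidence > 0.5
    return -(mu + z * sigma)


def monte_carlo_var(mu, sigma, confidence=0.99, n_paths=20_000, seed=0):
    """Simulate one-period returns and read VaR off the simulated distribution."""
    rng = random.Random(seed)
    simulated = [rng.gauss(mu, sigma) for _ in range(n_paths)]
    return historical_var(simulated, confidence)


if __name__ == "__main__":
    rng = random.Random(42)
    sample = [rng.gauss(0.0, 0.04) for _ in range(5000)]  # synthetic daily returns
    print("historical :", historical_var(sample))
    print("parametric :", parametric_var(sample))
    print("monte carlo:", monte_carlo_var(mean(sample), stdev(sample)))
```

On normally distributed synthetic data the three estimates should roughly agree; they diverge on fat-tailed data, which is where the table's "Tail Sensitivity" column matters.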

Mathematical rigor serves as the primary defense against the cascading failures of interconnected liquidity pools.

Capital Efficiency and Solvency

The theoretical goal of Value at Risk Security is to optimize the margin requirements for leveraged positions. By accurately predicting the probability of a liquidation event, the protocol can lower the collateralization ratio for low-risk portfolios while increasing it for those with high VaR. This creates a more fluid market where capital is directed toward its most efficient use without compromising the safety of the lender.
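One way to picture the link between VaR and margin is a rule that requires collateral to still cover the debt after a VaR-sized loss. The formula and the names `min_collateral_ratio` and `safety_buffer` below are hypothetical, chosen for illustration; real protocols set these parameters through their own risk frameworks.

```python
def min_collateral_ratio(var_fraction, safety_buffer=0.05):
    """Smallest collateral/debt ratio that survives a VaR-sized drop.

    Assumed rule: collateral * (1 - VaR) >= debt * (1 + safety_buffer).
    var_fraction is the VaR expressed as a fraction of collateral value.
    """
    if not 0.0 <= var_fraction < 1.0:
        raise ValueError("var_fraction must be in [0, 1)")
    return (1.0 + safety_buffer) / (1.0 - var_fraction)


if __name__ == "__main__":
    for label, var in [("low-risk portfolio", 0.10), ("high-risk portfolio", 0.30)]:
        print(f"{label}: VaR={var:.0%} -> ratio {min_collateral_ratio(var):.2f}")
```

Under this rule a lower VaR translates directly into a lower required ratio, which is the capital-efficiency effect described above.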

Approach

Current implementation of Value at Risk Security relies on a robust stack of decentralized infrastructure.

Oracles supply high-frequency price data, which is then processed by off-chain keepers or specialized ZK-circuits to generate the risk proofs. These proofs are submitted to the smart contract, which updates the trading price of the Value at Risk Security or adjusts the parameters of the associated lending pool.

- Stochastic Modeling: Utilizing Brownian motion and Poisson processes to simulate asset price trajectories.

- Oracle Integration: Fetching real-time volatility data to ensure the risk metric reflects current market conditions.

- Automated Rebalancing: Triggering asset swaps when the VaR threshold is breached to maintain a delta-neutral profile.

- Liquidity Provisioning: Incentivizing market makers to provide depth for the risk-transfer market.
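The stochastic modeling step can be sketched as a Merton-style jump-diffusion, where Brownian motion drives ordinary price moves and a Poisson process adds occasional jumps. This is a simplified sketch, not a description of any deployed keeper: all parameter values (volatility, jump rate, jump size) are made-up assumptions, and only the standard library is used.

```python
import math
import random


def poisson_draw(rng, rate):
    """Sample a Poisson(rate) count by counting unit-rate exponential arrivals."""
    count, elapsed = 0, rng.expovariate(1.0)
    while elapsed < rate:
        count += 1
        elapsed += rng.expovariate(1.0)
    return count


def simulate_log_returns(n_paths, horizon=1 / 365, drift=0.0, vol=0.8,
                         jump_rate=20.0, jump_mean=-0.05, jump_std=0.05, seed=1):
    """Log returns over `horizon` years: diffusion term plus summed jumps."""
    rng = random.Random(seed)
    results = []
    for _ in range(n_paths):
        diffusion = ((drift - 0.5 * vol ** 2) * horizon
                     + vol * math.sqrt(horizon) * rng.gauss(0.0, 1.0))
        n_jumps = poisson_draw(rng, jump_rate * horizon)
        jumps = sum(rng.gauss(jump_mean, jump_std) for _ in range(n_jumps))
        results.append(diffusion + jumps)
    return results


def left_quantile(values, tail_probability=0.01):
    """Return at the given left-tail probability of the simulated sample."""
    ordered = sorted(values)
    return ordered[int(tail_probability * len(ordered))]


if __name__ == "__main__":
    with_jumps = simulate_log_returns(20_000)
    no_jumps = simulate_log_returns(20_000, jump_rate=0.0)
    print("1% quantile with jumps   :", left_quantile(with_jumps))
    print("1% quantile without jumps:", left_quantile(no_jumps))
```

Comparing the two quantiles shows how the jump component fattens the left tail relative to pure Brownian motion.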

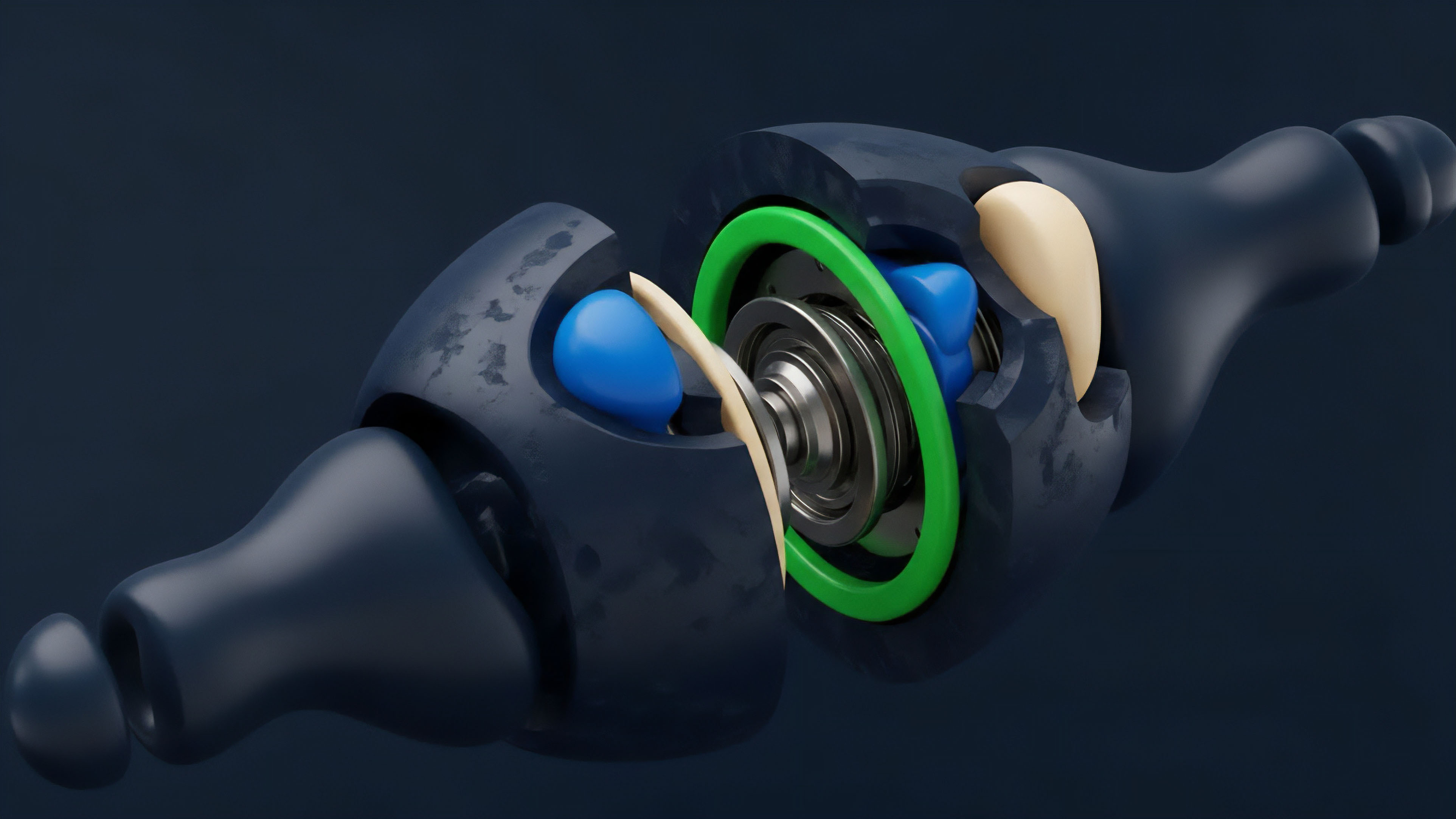

Biological systems often manage risk through redundancy (having multiple pathways to achieve the same metabolic result), and Value at Risk Security mirrors this by utilizing multi-oracle consensus to prevent single points of failure. This redundancy ensures that the risk calculation remains accurate even if a specific data source is compromised. The operational terrain requires constant calibration.
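A minimal sketch of multi-oracle consensus is a median with outlier rejection. The function name, the 2% deviation band, and the three-source minimum are assumptions for illustration; production oracle networks use their own aggregation rules.

```python
from statistics import median


def aggregate_price(quotes, max_deviation=0.02, min_sources=3):
    """Median of oracle quotes after dropping sources far from the raw median.

    quotes: dict mapping a (hypothetical) source name to its reported price.
    Returns (consensus_price, rejected_source_names).
    """
    if len(quotes) < min_sources:
        raise ValueError("not enough oracle sources")
    raw_median = median(quotes.values())
    accepted = {name: price for name, price in quotes.items()
                if abs(price - raw_median) / raw_median <= max_deviation}
    if len(accepted) < min_sources:
        raise ValueError("too few sources agree with the median")
    rejected = sorted(set(quotes) - set(accepted))
    return median(accepted.values()), rejected


if __name__ == "__main__":
    feeds = {"oracle_a": 100.0, "oracle_b": 100.5, "oracle_c": 99.8, "oracle_d": 150.0}
    price, rejected = aggregate_price(feeds)
    print("consensus price:", price, "rejected:", rejected)
```

Here the manipulated `oracle_d` quote is discarded before the consensus price is taken, so a single compromised feed does not move the risk calculation.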

Strategists use backtesting engines to compare the predicted VaR against actual market outcomes. If the model consistently underestimates the loss, the parameters are adjusted to increase the safety margin. This iterative process ensures that the Value at Risk Security evolves alongside the market it intends to protect.
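The backtesting loop reduces to counting how often realized losses exceeded the forecast VaR and comparing that rate with what the confidence level implies. The sketch below is deliberately simple; the `tolerance` multiplier is an assumed rule of thumb, not a formal statistical test.

```python
def exceedance_rate(realized_returns, var_forecasts):
    """Fraction of periods in which the realized loss exceeded the forecast VaR."""
    if not realized_returns or len(realized_returns) != len(var_forecasts):
        raise ValueError("inputs must be non-empty and of equal length")
    breaches = sum(1 for r, v in zip(realized_returns, var_forecasts) if -r > v)
    return breaches / len(realized_returns)


def needs_recalibration(rate, confidence=0.99, tolerance=2.0):
    """Flag the model when breaches occur far more often than 1 - confidence."""
    return rate > tolerance * (1.0 - confidence)


if __name__ == "__main__":
    realized = [-0.10, 0.01, -0.02, 0.03]   # toy data
    forecasts = [0.05] * 4                  # VaR forecast for each period
    rate = exceedance_rate(realized, forecasts)
    print("exceedance rate:", rate, "recalibrate:", needs_recalibration(rate))
```

A model flagged this way is underestimating losses, which is the signal to widen the safety margin.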

Evolution

The transition from static VaR to Conditional Value at Risk (CVaR), also known as Expected Shortfall, represents a significant leap in the sophistication of Value at Risk Security.

While standard VaR only identifies the threshold of loss, CVaR calculates the average loss that occurs beyond that threshold. This distinction is vital in crypto finance, where “fat tails” mean that once a threshold is breached, the magnitude of the loss can be catastrophic. The psychological impact of borrowed capital often leads to irrational market behavior during periods of high stress.
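The difference between the two measures is easy to see on a small sample. This sketch uses a plain historical estimate; the tail-size convention (rounding the number of tail observations, with the threshold included in the tail) is one of several in use and is an assumption here.

```python
def var_and_cvar(returns, confidence=0.95):
    """Historical VaR and CVaR (Expected Shortfall), both as positive losses."""
    losses = sorted((-r for r in returns), reverse=True)   # largest loss first
    n_tail = max(1, round((1.0 - confidence) * len(losses)))
    tail = losses[:n_tail]
    value_at_risk = tail[-1]                 # smallest loss inside the tail
    expected_shortfall = sum(tail) / len(tail)
    return value_at_risk, expected_shortfall


if __name__ == "__main__":
    # Toy sample: one catastrophic day, one bad day, many quiet days.
    sample = [-0.50, -0.10, -0.05] + [0.01] * 17
    var90, cvar90 = var_and_cvar(sample, confidence=0.90)
    print("VaR :", var90)
    print("CVaR:", cvar90)
```

In this toy sample the VaR threshold sits at the merely bad day, while CVaR is pulled up by the catastrophic one, which is exactly the fat-tail information that VaR alone discards.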

When a Value at Risk Security indicates a rising probability of loss, it can trigger a feedback loop where automated selling leads to further price declines, which in turn increases the VaR. This reflexivity is the greatest challenge for risk architects. We have seen this play out in numerous de-pegging events where the very mechanisms designed to protect the system contributed to its temporary instability.

The evolution of these instruments now includes “circuit breakers” and “smoothing functions” that attempt to dampen these feedback loops without sacrificing the accuracy of the risk signal. The goal is to create a system that is resilient, not just reactive, acknowledging that human participants will often act in ways that defy pure mathematical logic when their solvency is threatened.
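As a rough illustration of how a smoothing function and a circuit breaker might combine, the sketch below applies an exponentially weighted moving average to the raw VaR signal and halts automated actions with hysteresis. The class name, thresholds, and smoothing factor are hypothetical values picked for the example.

```python
class SmoothedRiskSignal:
    """EWMA-smoothed VaR with a trip/reset circuit breaker (hysteresis)."""

    def __init__(self, alpha=0.2, trip_level=0.15, reset_level=0.10):
        if not 0.0 < alpha <= 1.0 or reset_level >= trip_level:
            raise ValueError("need 0 < alpha <= 1 and reset_level < trip_level")
        self.alpha = alpha
        self.trip_level = trip_level
        self.reset_level = reset_level
        self.smoothed = None
        self.halted = False

    def update(self, raw_var):
        """Feed one raw VaR reading; return (smoothed_var, halted)."""
        if self.smoothed is None:
            self.smoothed = raw_var
        else:
            self.smoothed = self.alpha * raw_var + (1.0 - self.alpha) * self.smoothed
        if self.smoothed >= self.trip_level:
            self.halted = True            # pause automated selling
        elif self.smoothed <= self.reset_level:
            self.halted = False           # resume only once risk has clearly eased
        return self.smoothed, self.halted


if __name__ == "__main__":
    signal = SmoothedRiskSignal(alpha=0.5)
    for reading in [0.08, 0.30, 0.08, 0.05]:
        print(signal.update(reading))
```

The gap between the trip and reset levels keeps the breaker from flapping on and off when the signal hovers near a single threshold, which is one way to dampen the reflexive loop without discarding the underlying risk reading.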

| Feature | Traditional VaR | On-Chain VaR Security |
|---|---|---|
| Transparency | Opaque reporting | Real-time on-chain data |
| Settlement | T+2 or longer | Atomic execution |
| Counterparty Risk | Institutional credit | Smart contract collateral |
| Accessibility | Qualified investors | Permissionless access |

As the network matures, Value at Risk Security has moved from being a niche tool for hedge funds to a foundational component of decentralized treasury management. DAOs now use these securities to hedge their native token exposure, ensuring they have sufficient runway to continue operations even during prolonged bear markets.

Horizon

The future of Value at Risk Security lies in the integration of artificial intelligence and zero-knowledge proofs. AI models can identify non-linear correlations between disparate assets that traditional linear models might miss, providing a more comprehensive view of systemic risk. Meanwhile, ZK-proofs allow institutions to prove they are managing risk according to specific VaR mandates without revealing their underlying positions or strategies.

Traversing the path toward a fully automated financial system requires the development of cross-chain risk instruments. As liquidity becomes increasingly fragmented across various Layer 2 solutions and sovereign blockchains, a Value at Risk Security must be able to aggregate risk across multiple environments simultaneously. This will likely involve the use of generalized message-passing protocols to synchronize risk metrics in real-time.

The ultimate objective is the creation of a “Global Risk Map”: a transparent, real-time visualization of the Value at Risk Security metrics across the entire decentralized landscape. This would allow regulators and participants to identify pockets of excessive gearing before they lead to systemic failure. By commoditizing risk and making it tradeable, we are not eliminating volatility; we are building the infrastructure to survive it.
