Essence

Value-at-Risk Proofs Generation functions as the cryptographic assurance layer for market risk exposure within decentralized finance. It transforms opaque margin requirements into verifiable, on-chain commitments. This mechanism enables protocols to cryptographically bind a participant to a specific risk ceiling, ensuring that solvency is not assumed but mathematically demonstrated at every block interval.

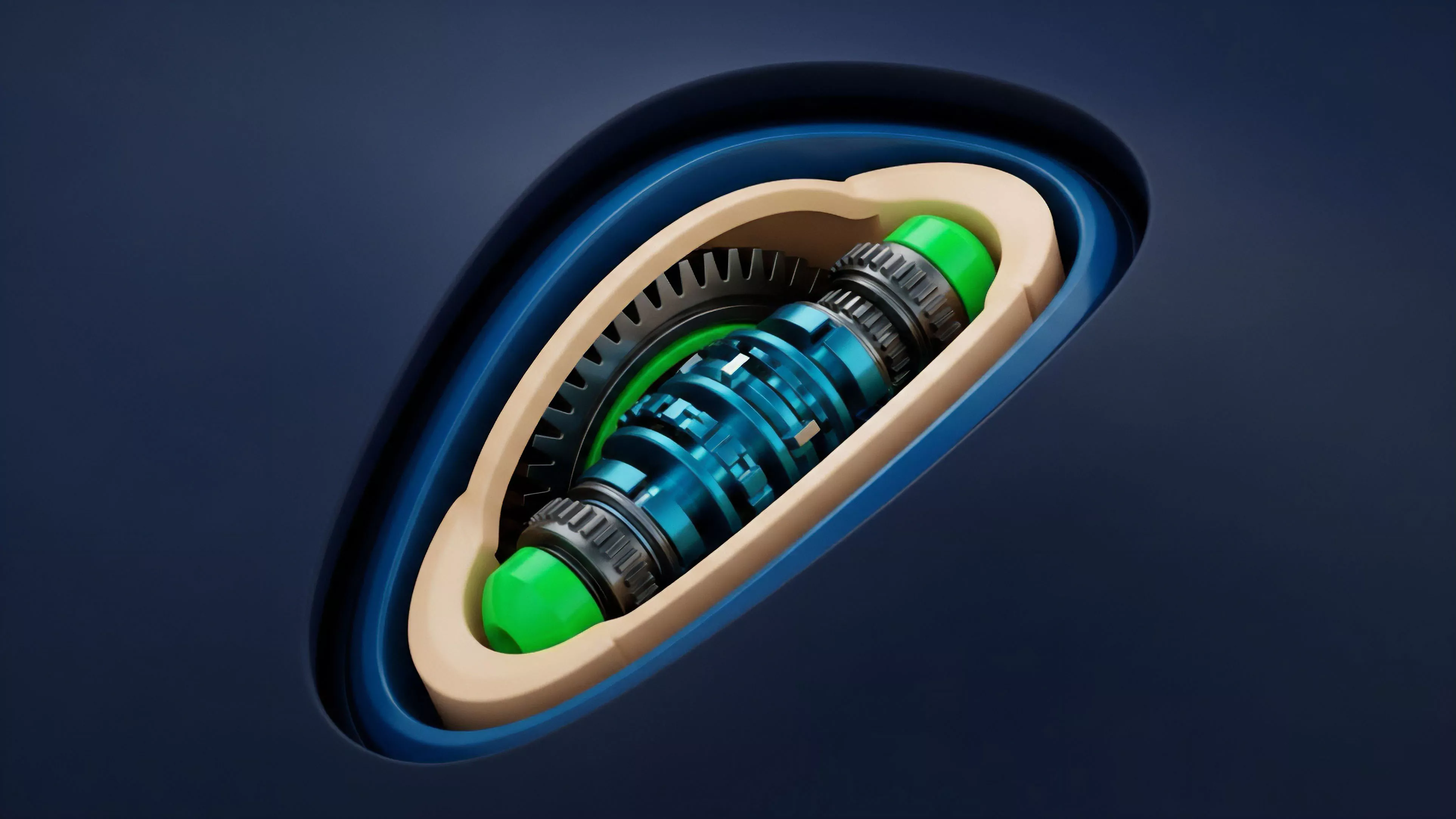

Value-at-Risk Proofs Generation establishes a verifiable cryptographic link between a participant’s collateral position and their maximum potential loss over a defined timeframe.

At its core, this architecture replaces centralized oversight with algorithmic verification. Using zero-knowledge proofs or state-root commitments, a trading venue checks that a user’s portfolio volatility, delta, and gamma exposure remain within strictly defined liquidation thresholds. Because the protocol itself enforces risk compliance, leverage that would otherwise stay hidden has to surface before it can accumulate systemically.

Origin

The genesis of Value-at-Risk Proofs Generation lies in the convergence of traditional quantitative risk management and the trustless requirements of decentralized settlement engines.

Early iterations of on-chain margin systems relied on reactive, state-heavy checks that suffered from high latency and gas inefficiency. The shift toward proof-based systems emerged from the necessity to scale complex derivative positions without sacrificing the integrity of the collateral pool.

- Foundational Quant Models provided the mathematical basis for calculating potential loss distributions using historical volatility and correlation matrices.

- Zero-Knowledge Cryptography introduced the mechanism for compressing these massive, multi-variable calculations into succinct, verifiable statements.

- Protocol Engineering catalyzed the transition from centralized risk engines to decentralized, automated systems capable of enforcing margin compliance autonomously.

This evolution represents a fundamental change in how financial systems approach risk. By shifting the burden of proof to the user or the clearing agent, protocols achieve a higher degree of transparency, effectively neutralizing the information asymmetry that historically plagued opaque, off-chain derivative markets.

Theory

The mathematical structure of Value-at-Risk Proofs Generation relies on calculating a portfolio’s tail risk at a specified confidence level. This requires continuous re-evaluation of the Greeks (delta, gamma, vega, and theta) to ensure the total risk footprint stays below the protocol-defined insolvency threshold.
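One way to picture the Greeks-based check is a scenario revaluation: each market shock is turned into an estimated profit or loss through a second-order Taylor expansion, and the loss at the chosen confidence level is compared with a threshold. The sketch below is illustrative only. The function names, the shock grid, the quantile convention, and the threshold are assumptions made for this example and are not taken from any specific protocol.

```python
def taylor_pnl(greeks, shock):
    """Second-order Taylor estimate of P&L for a single market shock."""
    return (
        greeks["delta"] * shock["d_spot"]
        + 0.5 * greeks["gamma"] * shock["d_spot"] ** 2
        + greeks["vega"] * shock["d_vol"]
        + greeks["theta"] * shock["d_time"]
    )


def scenario_var(greeks, shocks, confidence):
    """Loss at the given confidence level across the supplied shocks.

    Uses a simple empirical quantile (one of several conventions).
    """
    losses = sorted(-taylor_pnl(greeks, s) for s in shocks)
    index = min(len(losses) - 1, int(confidence * len(losses)))
    return max(0.0, losses[index])


def within_threshold(greeks, shocks, confidence, threshold):
    """True when the estimated tail loss stays at or below the threshold."""
    return scenario_var(greeks, shocks, confidence) <= threshold


# Hypothetical short-gamma portfolio and a coarse grid of spot shocks.
greeks = {"delta": 2.0, "gamma": -0.5, "vega": 4.0, "theta": -1.0}
shocks = [
    {"d_spot": ds, "d_vol": 0.25, "d_time": 1.0}
    for ds in (-10.0, -5.0, 0.0, 5.0, 10.0)
]

tail_loss = scenario_var(greeks, shocks, confidence=0.99)
print(tail_loss, within_threshold(greeks, shocks, 0.99, threshold=50.0))
```

A production risk engine would use far more scenarios and full revaluation for large moves, since the Taylor approximation degrades as shocks grow.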

The proof generation process compresses these multi-dimensional inputs into a single, compact proof that the blockchain can verify at low cost.

| Metric | Function in Proof Generation |
|---|---|
| Confidence Level | Defines the probability threshold for solvency. |
| Time Horizon | Determines the duration over which risk is measured. |
| Volatility Surface | Provides the input data for pricing tail risk. |
| Succinct Proof | Validates compliance without revealing raw position data. |
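To make the confidence and time-horizon rows concrete, here is a minimal parametric (normal) VaR sketch. It assumes normally distributed returns and collapses the volatility surface into a single daily volatility figure, both of which are simplifications chosen for illustration. The function names and numbers are hypothetical.

```python
import math
from statistics import NormalDist


def parametric_var(position_value, daily_vol, confidence, horizon_days):
    """Normal VaR: value * volatility * z-score * sqrt(horizon)."""
    z = NormalDist().inv_cdf(confidence)
    return position_value * daily_vol * z * math.sqrt(horizon_days)


def is_solvent(collateral, position_value, daily_vol, confidence, horizon_days):
    """Collateral must cover the VaR at the chosen confidence and horizon."""
    var = parametric_var(position_value, daily_vol, confidence, horizon_days)
    return collateral >= var


one_day = parametric_var(1_000_000.0, 0.02, 0.99, 1)
four_day = parametric_var(1_000_000.0, 0.02, 0.99, 4)
print(round(one_day, 2), round(four_day, 2))
```

Under these assumptions, quadrupling the horizon doubles the VaR, which is why the horizon parameter has such a direct effect on required collateral.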

The complexity arises when market regimes shift. As liquidity dries up or volatility spikes, the underlying model must dynamically adjust its correlation assumptions. If the proof generation does not account for these non-linearities, the system risks cascading liquidations.
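The effect of a correlation shift can be sketched with two positions whose stand-alone VaR figures are combined under a normal-returns assumption. The example below is a simplified illustration, not a prescribed model: the function names and the calm and stressed correlation values are hypothetical, and the combination formula holds only under the stated distributional assumption.

```python
import math


def portfolio_var(var_a, var_b, correlation):
    """Combine two stand-alone VaR figures under a normal-returns assumption."""
    if not -1.0 <= correlation <= 1.0:
        raise ValueError("correlation must lie in [-1, 1]")
    return math.sqrt(var_a**2 + var_b**2 + 2.0 * correlation * var_a * var_b)


def stressed_var(var_a, var_b, calm_corr, stress_corr):
    """Take the worse of the calm and stressed correlation regimes."""
    return max(
        portfolio_var(var_a, var_b, calm_corr),
        portfolio_var(var_a, var_b, stress_corr),
    )


calm = portfolio_var(3.0, 4.0, 0.0)      # uncorrelated regime
stressed = portfolio_var(3.0, 4.0, 1.0)  # correlations converge toward 1
print(calm, stressed, stressed_var(3.0, 4.0, 0.0, 1.0))
```

With these inputs the required coverage rises from 5 to 7 units purely because diversification disappears, which is the kind of jump a proof system has to anticipate when regimes change.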

The mathematical rigor here is not merely an academic exercise; it is the structural integrity of the entire decentralized market.

The efficacy of Value-at-Risk Proofs Generation depends on the robustness of the underlying pricing model and its ability to incorporate real-time volatility surface adjustments.

One might consider the parallel with thermodynamics in closed systems: where conservation of energy dictates physical limits, conservation of collateral dictates the financial limits of the protocol. This bridge between abstract math and tangible capital constraints is where the real work happens.

Approach

Current implementations of Value-at-Risk Proofs Generation utilize advanced off-chain computation to derive risk parameters, which are then submitted to the protocol for verification. This hybrid approach optimizes for gas costs while maintaining on-chain transparency.

Market participants generate their proofs using localized, high-performance engines that ingest real-time order flow data to calculate their specific risk contribution to the pool.

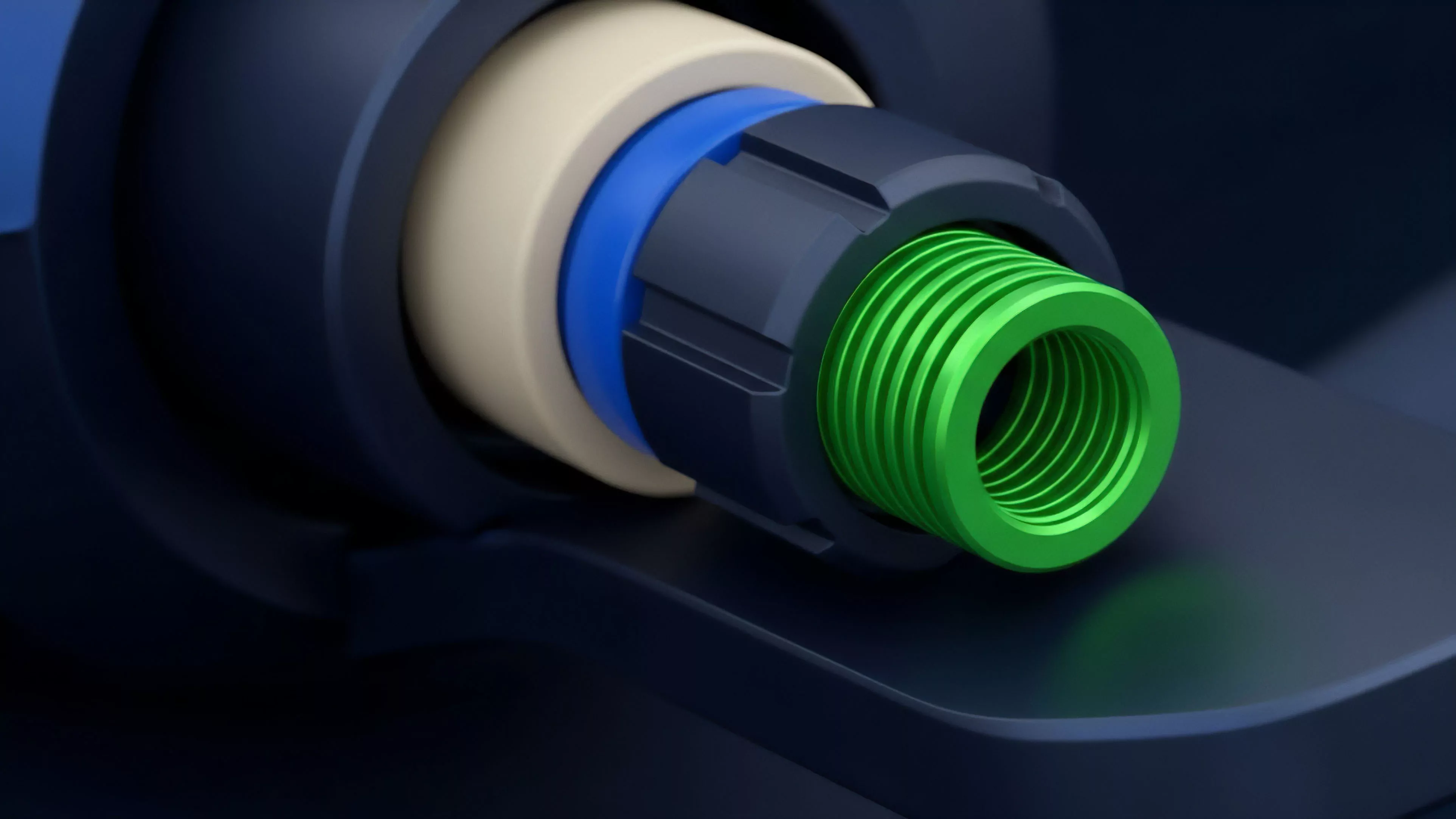

- Data Ingestion involves pulling granular order book and index price data to establish current market state.

- Risk Calculation executes the quantitative models to derive the required margin based on the user’s current derivative exposure.

- Proof Creation compresses the result into a cryptographic statement that verifies the calculation followed the protocol’s mandated risk parameters.

- On-chain Verification confirms the validity of the proof, triggering state updates or potential liquidation actions based on the output.
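The four steps above can be sketched end to end. Note the loud assumption: a salted SHA-256 commitment stands in for the cryptographic proof, and verification here works by re-opening the commitment, which reveals the positions. A real zero-knowledge system would verify the calculation without that disclosure. All names, the flat-rate risk model, and the sample data are hypothetical.

```python
import hashlib
import json
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class RiskStatement:
    commitment: str         # binds the prover to a specific set of positions
    required_margin: float  # output of the risk calculation
    collateral: float       # collateral posted by the participant


def ingest(index_prices):
    """Step 1: placeholder for pulling order book and index price data."""
    return dict(index_prices)


def required_margin(positions, prices, margin_rate):
    """Step 2: toy risk model, a flat rate applied to gross notional."""
    return sum(abs(qty) * prices[sym] * margin_rate for sym, qty in positions.items())


def commit(positions, salt):
    """Salted hash commitment (a stand-in, NOT a zero-knowledge proof)."""
    payload = json.dumps(positions, sort_keys=True).encode()
    return hashlib.sha256(salt + payload).hexdigest()


def create_statement(positions, prices, margin_rate, collateral, salt):
    """Step 3: package the commitment with the computed margin."""
    margin = required_margin(positions, prices, margin_rate)
    return RiskStatement(commit(positions, salt), margin, collateral)


def verify(statement, positions, prices, margin_rate, salt):
    """Step 4: returns 'ok', 'liquidate', or 'invalid'."""
    if commit(positions, salt) != statement.commitment:
        return "invalid"
    recomputed = required_margin(positions, prices, margin_rate)
    if not math.isclose(recomputed, statement.required_margin):
        return "invalid"
    return "ok" if statement.collateral >= statement.required_margin else "liquidate"


prices = ingest({"ETH-PERP": 2000.0, "BTC-PERP": 40000.0})
positions = {"ETH-PERP": 2.0, "BTC-PERP": -0.5}
salt = b"demo-salt"
statement = create_statement(positions, prices, 0.1, collateral=3000.0, salt=salt)
print(verify(statement, positions, prices, 0.1, salt))
```

The verifier's three outcomes mirror the last bullet: a valid, sufficiently collateralized statement passes, an undercollateralized one triggers liquidation, and a mismatched commitment is rejected outright.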

Evolution

The trajectory of Value-at-Risk Proofs Generation moves from static, parameter-heavy systems toward adaptive, agent-based risk modeling. Initially, protocols utilized fixed, conservative margin requirements that penalized capital efficiency. The current state represents a more granular, dynamic system where risk is calculated based on individual portfolio composition rather than generic, asset-wide risk tiers.

| Generation | Mechanism | Limitation |
|---|---|---|
| First | Static margin ratios | Inefficient capital usage |
| Second | Dynamic volatility bands | Latency in state updates |
| Third | Cryptographic proof-based risk | High computational complexity |
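The capital-efficiency gap between static ratios and portfolio-based risk can be illustrated with a hedged position. The sketch below is a toy comparison under stated assumptions: a flat ratio on gross notional for the static case, and a single price shock applied to net exposure per underlying for the portfolio case. It ignores basis risk between instruments, and all names and figures are hypothetical.

```python
import math


def static_margin(positions, prices, ratio):
    """Static style: flat ratio on gross notional, with no netting."""
    gross = sum(abs(qty) * prices[sym] for sym, qty in positions.items())
    return ratio * gross


def portfolio_margin(positions, prices, underlying_of, shock):
    """Portfolio style: net exposure per underlying, then apply a price shock."""
    net = {}
    for sym, qty in positions.items():
        underlying = underlying_of[sym]
        net[underlying] = net.get(underlying, 0.0) + qty * prices[sym]
    return shock * sum(abs(exposure) for exposure in net.values())


# Hypothetical fully hedged book: long spot, short perpetual on the same asset.
positions = {"ETH-SPOT": 10.0, "ETH-PERP": -10.0}
prices = {"ETH-SPOT": 2000.0, "ETH-PERP": 2000.0}
underlying_of = {"ETH-SPOT": "ETH", "ETH-PERP": "ETH"}

static_req = static_margin(positions, prices, ratio=0.1)
portfolio_req = portfolio_margin(positions, prices, underlying_of, shock=0.1)
print(static_req, portfolio_req)
```

The static rule charges margin on both legs even though they offset, while the portfolio rule recognizes the hedge. A realistic model would still charge something for basis and liquidation risk.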

This evolution is driven by the demand for higher leverage and more sophisticated hedging strategies within decentralized venues. The challenge is maintaining performance while ensuring that the proof generation does not become a bottleneck for market liquidity. Future iterations will likely incorporate decentralized oracles directly into the proof generation loop, reducing the reliance on centralized off-chain engines.

Horizon

The next stage for Value-at-Risk Proofs Generation involves the integration of cross-protocol risk aggregation.

Currently, most risk proofs are isolated to single venues. A truly resilient system will allow for the verification of risk across multiple, interconnected protocols, providing a holistic view of a participant’s systemic footprint. This will be the defining factor in preventing contagion across decentralized markets.

True systemic stability in decentralized markets will arrive when Value-at-Risk Proofs Generation can aggregate exposure across heterogeneous protocol architectures.

This development path requires solving the interoperability problem, specifically how to pass risk-related state roots between chains without introducing new trust assumptions. If successful, this will enable a new class of cross-margin accounts that are both capital-efficient and cryptographically secure. The final hurdle remains the psychological transition from trusting human-led clearinghouses to trusting mathematical proofs of solvency.
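One possible shape for a risk-related state root is a Merkle tree whose leaves are per-venue risk figures, so that another chain can check a single venue's figure against one root hash. The sketch below is an assumption-heavy illustration: the leaf encoding, venue names, and figures are hypothetical, the duplicate-last-node padding is a simplification, and the simple sum is only a placeholder aggregate because VaR does not add across venues in general.

```python
import hashlib


def _h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def leaf(venue: str, var_usd: int) -> bytes:
    """Hash one venue's reported risk figure into a leaf."""
    return _h(b"leaf:" + f"{venue}:{var_usd}".encode())


def _pad(level):
    """Duplicate the last node when a level has odd length."""
    return level + [level[-1]] if len(level) % 2 else level


def _parents(level):
    return [_h(b"node:" + level[i] + level[i + 1]) for i in range(0, len(level), 2)]


def merkle_root(leaves):
    if not leaves:
        raise ValueError("at least one leaf is required")
    level = list(leaves)
    while len(level) > 1:
        level = _parents(_pad(level))
    return level[0]


def merkle_proof(leaves, index):
    """Sibling hashes from leaf to root, each flagged if it sits on the left."""
    proof, level = [], list(leaves)
    while len(level) > 1:
        level = _pad(level)
        sibling = index ^ 1
        proof.append((level[sibling], sibling < index))
        level = _parents(level)
        index //= 2
    return proof


def verify_inclusion(leaf_hash, proof, root):
    node = leaf_hash
    for sibling, sibling_is_left in proof:
        pair = sibling + node if sibling_is_left else node + sibling
        node = _h(b"node:" + pair)
    return node == root


venues = [("venue-a", 1200), ("venue-b", 800), ("venue-c", 450)]
leaves = [leaf(name, var) for name, var in venues]
root = merkle_root(leaves)
total_var_usd = sum(var for _, var in venues)  # placeholder aggregate only
print(root.hex(), total_var_usd, verify_inclusion(leaves[2], merkle_proof(leaves, 2), root))
```

The trust question raised above is untouched by this sketch: the root proves that a figure was included, not that the figure was computed honestly or that the root itself was relayed faithfully between chains.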