Essence

Validator Selection is the mechanism through which decentralized protocols designate participants to propose, verify, and commit transactions to the ledger. This process dictates how consensus power is distributed, directly influencing the security, decentralization, and performance of the underlying network. Participants acting as validators stake assets to align their economic incentives with the protocol’s health: because misbehavior puts that stake at risk, capital allocation becomes tied to network integrity.

Validator Selection represents the critical intersection of cryptographic consensus and economic stake, defining the operational security of decentralized ledgers.

The architecture of this selection process varies significantly across protocols. Some use proof of stake, where selection probability increases with the quantity of tokens held or delegated (in many designs, it is proportional to that quantity). Others incorporate reputation-based metrics or hardware-specific requirements intended to resist centralization.

The primary objective remains minimizing adversarial control while maximizing throughput and achieving fast finality.
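Here is a minimal sketch of stake-weighted selection. It assumes a validator's weight is its own stake plus everything delegated to it, and that selection probability is exactly proportional to that weight. The validator names, amounts, and helper functions are hypothetical illustrations, not any specific protocol's rules.

```python
# Minimal sketch, assuming selection probability is exactly proportional to
# effective stake (self-stake plus delegations). All names are hypothetical.


def effective_stake(
    self_stake: dict[str, float],
    delegations: list[tuple[str, str, float]],
) -> dict[str, float]:
    """Return self-stake plus delegated stake for each validator.

    Each delegation is a (delegator, validator, amount) tuple.
    """
    totals = dict(self_stake)
    for _delegator, validator, amount in delegations:
        totals[validator] = totals.get(validator, 0.0) + amount
    return totals


def selection_probabilities(stakes: dict[str, float]) -> dict[str, float]:
    """Map each validator to its share of total stake."""
    total = sum(stakes.values())
    if total <= 0:
        raise ValueError("total stake must be positive")
    return {name: stake / total for name, stake in stakes.items()}


stakes = effective_stake(
    {"val-a": 100.0, "val-b": 50.0, "val-c": 50.0},
    [("alice", "val-b", 150.0), ("bob", "val-c", 50.0)],
)
print(selection_probabilities(stakes))  # {'val-a': 0.25, 'val-b': 0.5, 'val-c': 0.25}
```

In this example, delegation lifts val-b from a quarter of the self-staked total to half of all selection weight. This is how delegated stake shifts consensus power.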

Origin

Validator Selection traces back to the fundamental requirement of solving the Byzantine Generals Problem in a permissionless environment. Early iterations relied on proof of work, where computational effort served as the primary filter for block production.

Because this approach tied block production to raw energy expenditure, it limited scalability and raised environmental sustainability concerns. Proof of stake models emerged to address these inefficiencies, replacing expensive hardware competition with capital-based signaling.

This shift fundamentally altered the role of the participant from a miner to a staker, necessitating new frameworks for selecting who maintains the network state.

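- Deterministic Selection mechanisms use pseudorandom functions, seeded from randomness shared by all nodes, to choose validators based on stake weight. Because every node uses the same seed, each can compute the same result independently.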

- Delegated Mechanisms allow token holders to signal support for specific validators, creating a competitive market for trust.

- Slashing Conditions serve as the economic deterrent against malicious behavior such as double signing. Many protocols pair them with smaller penalties for downtime to encourage operational uptime.
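To show how a selection can be both pseudorandom and deterministic, the sketch below hashes a shared seed together with a slot number. It then maps the result onto the validators' cumulative stake. The seed source, the use of SHA-256, and the function name `select_proposer` are assumptions for illustration. Production protocols use more elaborate constructions, such as verifiable random functions or on-chain randomness beacons.

```python
import hashlib
from bisect import bisect_right
from itertools import accumulate


def select_proposer(seed: bytes, slot: int, validators: list[tuple[str, int]]) -> str:
    """Pick one validator for `slot`, weighted by non-negative integer stake.

    Any node holding the same seed, slot, and ordered validator list
    computes the same answer, so no extra coordination is required.
    """
    cumulative = list(accumulate(stake for _, stake in validators))
    if not cumulative or cumulative[-1] <= 0:
        raise ValueError("validator set must have positive total stake")
    total = cumulative[-1]
    digest = hashlib.sha256(seed + slot.to_bytes(8, "big")).digest()
    # Reduce the 256-bit digest to a point in [0, total). The modulo bias is
    # negligible because total is far smaller than 2**256.
    point = int.from_bytes(digest, "big") % total
    # Validator i owns the interval [cumulative[i-1], cumulative[i]);
    # bisect_right returns the first index whose cumulative stake exceeds point,
    # so zero-stake validators (empty intervals) are never chosen.
    return validators[bisect_right(cumulative, point)][0]


validators = [("val-a", 100), ("val-b", 200), ("val-c", 100)]
counts = {name: 0 for name, _ in validators}
for slot in range(10_000):
    counts[select_proposer(b"shared-epoch-seed", slot, validators)] += 1
print(counts)
```

Each individual draw is unpredictable without the seed. Over many slots, however, the counts approach the 1:2:1 stake ratio, and any node can recompute them.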

Theory

Validator Selection operates as a game-theoretic construct in which participants maximize rewards under strict protocol constraints. Its mathematical foundation rests on the probability distributions that determine selection frequency. In stake-weighted designs, a validator's expected share of proposals tracks its share of total stake. If a validator controls a significant portion of the total stake, its influence over the chain state grows, potentially introducing risks of censorship or chain reorganization (reorg) attacks.
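Concentration risk can be made concrete under one simplifying assumption: each slot's proposer is drawn independently, with probability equal to stake share. A validator holding a fraction f of stake then expects about f times N of N slots. However, its chance of proposing k specific consecutive slots falls off as f^k. Such runs of consecutive proposals can make some censorship and reorg strategies easier. The function names and the 7,200-slot horizon below are hypothetical.

```python
def expected_slots(stake_fraction: float, n_slots: int) -> float:
    """Expected number of slots proposed out of n_slots."""
    return stake_fraction * n_slots


def prob_consecutive(stake_fraction: float, k: int) -> float:
    """Probability of proposing k given consecutive slots, assuming each
    slot's proposer is sampled independently in proportion to stake."""
    if not 0.0 <= stake_fraction <= 1.0:
        raise ValueError("stake_fraction must lie in [0, 1]")
    return stake_fraction ** k


for f in (0.01, 0.10, 0.33):
    print(f"stake {f:.0%}: ~{expected_slots(f, 7200):.0f} of 7200 slots, "
          f"P(4 in a row) = {prob_consecutive(f, 4):.2e}")
```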

The pricing of validator services involves evaluating the cost of capital, operational expenses, and the risk of penalties. Sophisticated actors model these variables using quantitative sensitivity analysis to determine the optimal delegation strategy.
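A simple one-year version of this evaluation is sketched below. It nets the reward after commission against operating cost, expected slashing loss, and the cost of capital, then varies the assumed slashing probability. The model ignores compounding. All parameter names and values are hypothetical, and real penalty schedules differ by protocol.

```python
from dataclasses import dataclass


@dataclass
class DelegationTerms:
    gross_apr: float        # protocol reward rate before fees, e.g. 0.05 = 5%
    commission: float       # validator's cut of rewards, e.g. 0.10 = 10%
    slash_prob: float       # assumed annual probability of a slashing event
    slash_fraction: float   # fraction of stake lost if a slash occurs
    opex_rate: float = 0.0  # operating cost as a fraction of stake (0 for pure delegators)


def expected_excess_return(t: DelegationTerms, cost_of_capital: float) -> float:
    """One-year expected return above the cost of capital (no compounding)."""
    net_reward = t.gross_apr * (1.0 - t.commission)
    expected_penalty = t.slash_prob * t.slash_fraction
    return net_reward - t.opex_rate - expected_penalty - cost_of_capital


# Sensitivity of the result to the assumed slashing probability.
for p in (0.0, 0.01, 0.05):
    terms = DelegationTerms(gross_apr=0.05, commission=0.10,
                            slash_prob=p, slash_fraction=0.5)
    print(f"slash_prob={p:.2f}: {expected_excess_return(terms, 0.03):+.4f}")
```

With these illustrative numbers, the position stops beating the cost of capital once the expected penalty exceeds the 1.5% margin left after commission.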

| Metric | Description |
| --- | --- |
| Stake Weight | The validator's share of total stake, which determines its probability of selection for block production. |
| Uptime Requirement | The technical threshold for continuous availability. |
| Slashing Risk | Exposure to financial penalties for protocol violations. |

The efficiency of validator selection models dictates the equilibrium between network decentralization and operational latency.

Consider the implications of information asymmetry in delegation markets. When participants select validators based on yield alone, they overlook the systemic risks posed by centralized infrastructure providers. This behavioral tendency concentrates consensus power, which contradicts the original goals of distributed ledger technology.
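The Nakamoto coefficient is one common way to quantify this concentration. It is the smallest number of entities whose combined stake crosses a critical threshold. The sketch assumes a threshold of one third, beyond which many BFT-style protocols can no longer guarantee finality. Stakes can be grouped by operator or hosting provider, rather than by validator, to capture infrastructure concentration. The example distributions are hypothetical.

```python
def nakamoto_coefficient(stakes: list[float], threshold: float = 1 / 3) -> int:
    """Smallest number of entities whose combined stake exceeds `threshold`
    of the total. Larger values indicate more decentralized control."""
    total = sum(stakes)
    if total <= 0:
        raise ValueError("total stake must be positive")
    running = 0.0
    # Greedily take the largest holders first; this yields the minimum count.
    for count, stake in enumerate(sorted(stakes, reverse=True), start=1):
        running += stake
        if running > threshold * total:
            return count
    return len(stakes)  # only reached when threshold >= 1


even = [10.0] * 10                        # stake spread across ten operators
yield_chasing = [40.0, 30.0, 20.0, 10.0]  # delegations piled onto top-yield operators
print(nakamoto_coefficient(even), nakamoto_coefficient(yield_chasing))  # 4 1
```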

Approach

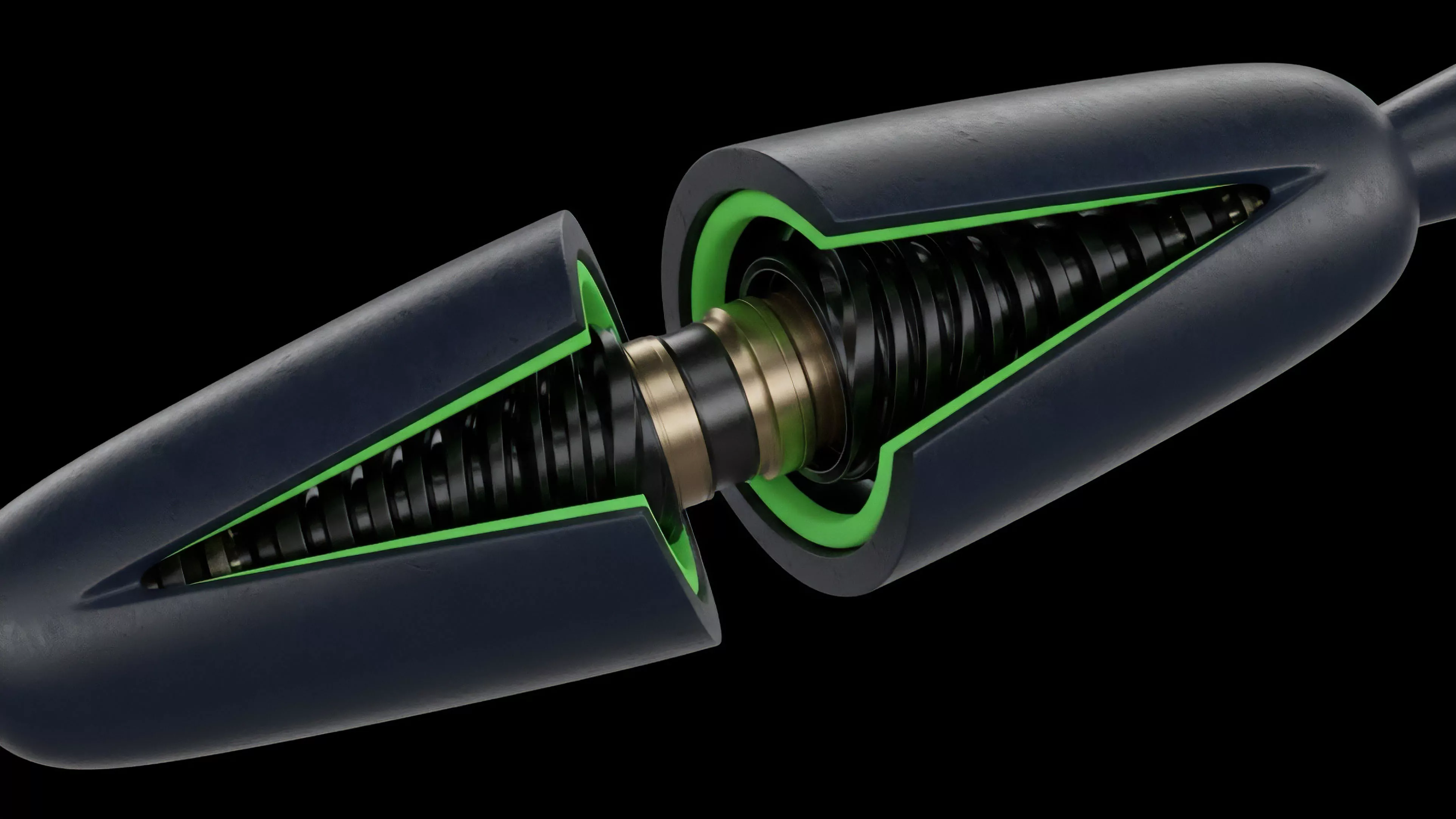

Current methodologies for Validator Selection focus on capital efficiency and risk mitigation. Many protocols implement dynamic validator sets that rotate participants to maintain high security standards. This requires continuous monitoring of validator performance, including latency, hardware reliability, and geographic distribution.
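A single rotation step might look like the sketch below. It drops candidates below an uptime floor, then keeps the largest stakes up to a fixed set size. Both thresholds are hypothetical parameters. The sketch is loosely in the spirit of top-N-by-stake designs and does not reproduce any particular protocol's rules; latency and geography are not modeled.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    name: str
    stake: float
    uptime: float  # fraction of assigned duties performed last epoch, 0.0 to 1.0


def next_active_set(candidates: list[Candidate], max_size: int,
                    min_uptime: float = 0.95) -> list[str]:
    """Choose next epoch's validators: drop under-performers, then keep the
    largest stakes. Ties break on name so every node derives the same set."""
    eligible = [c for c in candidates if c.uptime >= min_uptime]
    eligible.sort(key=lambda c: (-c.stake, c.name))
    return [c.name for c in eligible[:max_size]]


roster = [Candidate("a", 100, 0.99), Candidate("b", 300, 0.80),
          Candidate("c", 200, 0.97), Candidate("d", 200, 0.96)]
print(next_active_set(roster, max_size=2))  # ['c', 'd']
```

Candidate "b" holds the most stake but is rotated out for low uptime. Here, performance filtering overrides raw stake.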

Risk management strategies involve diversifying delegations across multiple validators to reduce exposure to individual failure points. Market participants evaluate validators using quantitative metrics such as reward consistency, historical uptime, and transparency in fee structures.
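The sketch below scores validators on uptime, reward consistency, and fee. It then splits a position equally across the top k, which caps exposure to any single failure at 1/k. The scoring formula is a hypothetical weighting chosen for illustration, with consistency measured by the coefficient of variation of past rewards. It is not an industry standard.

```python
from statistics import mean, pstdev


def validator_score(rewards: list[float], uptime: float, fee: float) -> float:
    """Hypothetical score: higher uptime, steadier rewards, lower fee rank higher.

    Reward consistency uses the coefficient of variation (stdev / mean).
    """
    avg = mean(rewards)
    if avg <= 0:
        return 0.0
    consistency = 1.0 / (1.0 + pstdev(rewards) / avg)
    return uptime * consistency * (1.0 - fee)


def diversified_allocation(amount: float,
                           validators: dict[str, tuple[list[float], float, float]],
                           k: int) -> dict[str, float]:
    """Split `amount` equally across the k best-scoring validators.

    Each value in `validators` is (reward history, uptime, fee).
    """
    ranked = sorted(validators, key=lambda v: validator_score(*validators[v]),
                    reverse=True)
    chosen = ranked[:k]
    if not chosen:
        raise ValueError("need at least one validator and k >= 1")
    return {v: amount / len(chosen) for v in chosen}


metrics = {
    "v1": ([1.0, 1.0, 1.0], 0.99, 0.05),
    "v2": ([0.5, 1.5], 0.999, 0.00),
    "v3": ([1.0, 1.0], 0.90, 0.10),
}
print(diversified_allocation(1000.0, metrics, k=2))  # {'v1': 500.0, 'v3': 500.0}
```

Validator v2 has the best uptime and no fee, but its erratic rewards rank it last under this scoring.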

- Liquid Staking protocols introduce abstraction layers that allow users to maintain liquidity while participating in validator selection.

- Restaking frameworks expand the security scope, enabling validators to secure multiple protocols simultaneously while exposing their stake to each protocol's slashing conditions.

- MEV (maximal extractable value) extraction significantly influences validator competitiveness and the overall fee landscape for users.
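The liquidity abstraction of liquid staking can be illustrated with share accounting. The sketch below assumes a hypothetical non-rebasing pool. Deposits mint shares at the current exchange rate. Rewards raise pooled assets without minting shares, so each share appreciates, while slashing lowers the value of every share. Real liquid staking protocols add withdrawal queues, fees, and validator management that this sketch omits.

```python
class LiquidStakingPool:
    """Hypothetical non-rebasing liquid staking pool with share accounting."""

    def __init__(self) -> None:
        self.total_pooled = 0.0  # underlying tokens staked through the pool
        self.total_shares = 0.0  # liquid tokens in circulation

    def exchange_rate(self) -> float:
        """Underlying tokens redeemable per share (1.0 before any deposit)."""
        if self.total_shares == 0:
            return 1.0
        return self.total_pooled / self.total_shares

    def deposit(self, amount: float) -> float:
        """Stake `amount` and return the number of shares minted."""
        rate = self.exchange_rate()
        if rate <= 0:
            raise RuntimeError("pool has no backing assets")
        shares = amount / rate
        self.total_pooled += amount
        self.total_shares += shares
        return shares

    def accrue_rewards(self, amount: float) -> None:
        # Rewards add assets but no shares, so the exchange rate rises.
        self.total_pooled += amount

    def apply_slashing(self, amount: float) -> None:
        # Losses are socialized across all share holders.
        self.total_pooled -= min(amount, self.total_pooled)

    def value_of(self, shares: float) -> float:
        return shares * self.exchange_rate()


lst_pool = LiquidStakingPool()
alice = lst_pool.deposit(100.0)   # 100 shares at rate 1.0
lst_pool.accrue_rewards(25.0)     # rate rises to 1.25
bob = lst_pool.deposit(50.0)      # 40 shares at rate 1.25
print(alice, bob, lst_pool.value_of(alice))  # 100.0 40.0 125.0
```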

Evolution

Validator Selection has progressed from simple, static pools toward complex, multi-layered incentive structures. Early designs treated all validators as equals, failing to account for differences in technical expertise or infrastructure resilience. Modern protocols increasingly integrate reputation systems and cryptographic proofs to filter participants.

The rise of modular blockchain architectures necessitated more flexible selection processes. Validators must now demonstrate competence across different execution environments, shifting the focus from simple block proposal to complex data availability verification. This evolution mirrors the maturation of financial markets, where specialized intermediaries now provide services previously handled by generalist participants.

The transition from monolithic to modular consensus structures forces validator selection to prioritize verifiable technical performance over simple stake accumulation.

This structural shift requires participants to engage with more advanced financial instruments to hedge against validator-specific risks. The market for staking derivatives serves as a testament to this, providing tools for price discovery regarding the cost of network security.
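The sketch below shows one way to read that price discovery from a hypothetical non-rebasing liquid staking token. It extracts two signals: the staking yield implied by growth in the token's exchange rate, and the discount at which the market prices the token relative to its redemption value. Both functions and all figures are assumptions. A persistent discount can reflect redemption delays, liquidity, or perceived validator risk, so it is a signal to interpret rather than a direct quote of security cost.

```python
def implied_annual_yield(rate_start: float, rate_end: float, days: float) -> float:
    """Annualized, compounded growth of a liquid staking token's exchange rate
    (underlying tokens per share) observed over `days` days."""
    if rate_start <= 0 or rate_end <= 0 or days <= 0:
        raise ValueError("rates and period must be positive")
    return (rate_end / rate_start) ** (365.0 / days) - 1.0


def market_discount(market_price: float, redemption_rate: float) -> float:
    """Fraction by which the token trades below its redeemable value,
    with market_price quoted in units of the underlying token."""
    return 1.0 - market_price / redemption_rate


# Hypothetical observations: exchange rate 1.000 -> 1.004 over 30 days,
# token trading at 0.995 underlying while redeemable for 1.004.
print(f"implied yield {implied_annual_yield(1.000, 1.004, 30):.2%}")
print(f"market discount {market_discount(0.995, 1.004):.2%}")
```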

Horizon

Future developments in Validator Selection will likely emphasize automated governance and machine-learning-driven optimization.

As protocols scale, manual validator selection becomes impractical for institutional participants. Automated agents are likely to manage capital allocation based on real-time performance data and risk scoring, further abstracting the underlying complexity. Regulatory frameworks will also likely play a decisive role in shaping the selection landscape.

Jurisdictional requirements regarding validator identity and liability may force a bifurcation between permissioned and permissionless consensus layers. The ultimate goal remains a resilient, self-correcting system that minimizes the need for human intervention in maintaining network integrity.
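At its simplest, the automated allocation described above could resemble the sketch below. A hypothetical monitor keeps an exponential moving average (EMA) of each validator's observed uptime and flags any validator whose smoothed score falls below a threshold, marking it as a candidate for reallocation. The smoothing factor, the threshold, and the class name are assumptions. A production agent would combine many more risk signals.

```python
class PerformanceMonitor:
    """Hypothetical automated-delegation helper: keeps an exponential moving
    average (EMA) of each validator's uptime and flags laggards."""

    def __init__(self, alpha: float = 0.2, threshold: float = 0.97) -> None:
        if not 0.0 < alpha <= 1.0:
            raise ValueError("alpha must lie in (0, 1]")
        self.alpha = alpha          # weight on the newest observation
        self.threshold = threshold  # minimum acceptable smoothed uptime
        self.scores: dict[str, float] = {}

    def observe(self, validator: str, uptime_sample: float) -> None:
        # The first sample seeds the average; later ones blend in by alpha.
        prev = self.scores.get(validator, uptime_sample)
        self.scores[validator] = self.alpha * uptime_sample + (1 - self.alpha) * prev

    def to_rebalance(self) -> list[str]:
        """Validators whose smoothed uptime is below the threshold."""
        return sorted(v for v, s in self.scores.items() if s < self.threshold)


monitor = PerformanceMonitor(alpha=0.5, threshold=0.9)
for sample_a, sample_b in [(1.0, 1.0), (0.99, 0.6)]:
    monitor.observe("val-a", sample_a)
    monitor.observe("val-b", sample_b)
print(monitor.to_rebalance())  # ['val-b']
```

The EMA is a design choice. A single poor epoch moves the score only partway, which avoids churning delegations on transient noise, while sustained degradation still crosses the threshold.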

| Trend | Implication |
| --- | --- |
| Automated Delegation | Reduction in manual overhead for capital allocators. |
| Zero Knowledge Proofs | Enhanced privacy for validator operations. |
| Institutional Adoption | Increased focus on compliance and regulatory standards. |