Essence

Validator Security Audits function as the rigorous verification protocols for the entities tasked with maintaining consensus integrity within decentralized networks. These assessments move beyond basic code reviews to analyze the operational resilience, cryptographic key management, and slashing risk exposure of specific validator nodes. By evaluating the probability of liveness failures or malicious behavior, these audits provide a necessary quantitative foundation for institutional capital allocators.

Validator security audits provide the quantitative risk baseline for evaluating the operational integrity of consensus participants in decentralized networks.

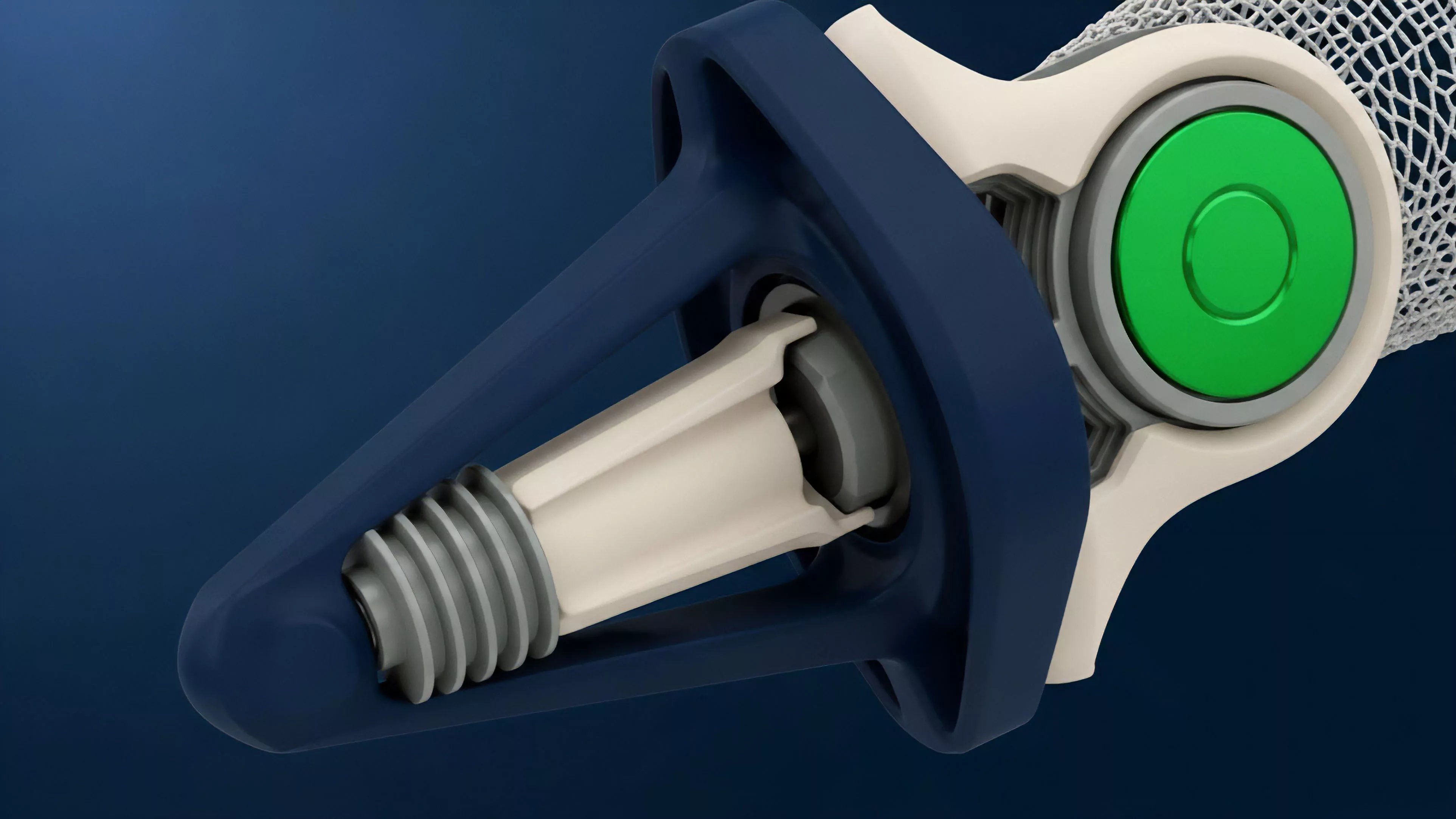

The core objective involves mapping the technical and economic vulnerabilities inherent in the validator lifecycle. This includes scrutinizing infrastructure configurations, such as hardware security modules and distributed validator technology implementations, alongside the economic incentives governing collateral at risk. In a market where capital flows toward the most resilient consensus providers, these audits determine the viability of staking-as-a-service offerings.

Origin

The genesis of Validator Security Audits tracks the transition from simple proof-of-work mining to complex proof-of-stake architectures. Early iterations focused exclusively on smart contract bugs within delegation pools. As consensus mechanisms evolved to include sophisticated slashing conditions and multi-party computation requirements, the industry identified a systemic need for auditing the human-machine hybrid that constitutes a validator.

Foundational research into Byzantine fault tolerance and distributed systems provided the initial parameters for these audits. Market participants recognized that a validator node is not merely a passive server but a high-stakes financial entity requiring active risk management. This realization drove the development of specialized security firms that treat consensus nodes as critical financial infrastructure rather than standard cloud deployments.

Theory

Validator Security Audits rely on a multi-dimensional risk framework that balances technical uptime with economic exposure. The mathematical modeling of these audits focuses on the slashing threshold and the probability of Byzantine failure across diverse client implementations. Analysts evaluate the following components:

- Key Management Infrastructure: The technical architecture governing the custody and usage of signing keys, prioritizing hardware-level isolation.

- Infrastructure Redundancy: The quantitative measurement of failover mechanisms and the geographic dispersion of node clusters to mitigate systemic downtime.

- Slashing Risk Exposure: The calculation of potential principal loss resulting from protocol-level penalties due to double-signing or prolonged unavailability.
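As a rough illustration of the slashing risk exposure item, the expected principal loss can be sketched as stake multiplied by the sum of probability-weighted penalties. The scenario names, probabilities, and penalty fractions below are hypothetical placeholders, not parameters of any real protocol, and the additive treatment assumes the scenarios are rare and independent.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SlashingScenario:
    name: str
    annual_probability: float  # assumed chance of the event within one year
    penalty_fraction: float    # assumed share of stake forfeited if it occurs


def expected_annual_loss(stake: float, scenarios: list[SlashingScenario]) -> float:
    """Expected principal loss per year, treating scenarios as additive."""
    total = 0.0
    for s in scenarios:
        if not 0.0 <= s.annual_probability <= 1.0:
            raise ValueError(f"{s.name}: probability must be in [0, 1]")
        if not 0.0 <= s.penalty_fraction <= 1.0:
            raise ValueError(f"{s.name}: penalty must be in [0, 1]")
        total += s.annual_probability * s.penalty_fraction
    return stake * total


# Illustrative inputs only; these are not measured protocol parameters.
scenarios = [
    SlashingScenario("double_sign", annual_probability=0.01, penalty_fraction=0.5),
    SlashingScenario("prolonged_downtime", annual_probability=0.10, penalty_fraction=0.01),
]
loss = expected_annual_loss(1_000.0, scenarios)
```

An auditor would replace the placeholder probabilities with estimates drawn from the operator's incident history and the penalty fractions with the rules of the specific network under review.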

Auditing consensus participants requires a synthesis of distributed systems engineering and game-theoretic risk assessment to quantify node reliability.

The audit process also incorporates behavioral game theory to assess how a validator might respond to adverse market conditions. If the cost of maintaining a node exceeds the yield generated through consensus participation, the incentive to prioritize security diminishes. This tension between operational cost and yield efficiency represents the primary risk factor for delegators.
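The cost-versus-yield tension can be expressed as a simple ratio. The sketch below is a hypothetical helper, and the `warn_ratio` threshold is an assumed value chosen for illustration rather than an industry standard.

```python
def cost_to_yield_ratio(annual_cost: float, annual_yield: float) -> float:
    """Share of staking yield consumed by operating costs."""
    if annual_yield <= 0:
        raise ValueError("annual_yield must be positive")
    return annual_cost / annual_yield


def is_under_margin_pressure(
    annual_cost: float, annual_yield: float, warn_ratio: float = 0.8
) -> bool:
    """True when costs consume at least `warn_ratio` of yield (assumed cutoff)."""
    return cost_to_yield_ratio(annual_cost, annual_yield) >= warn_ratio
```

A ratio at or above 1.0 means the node operates at a loss, which is the point where the incentive to keep spending on security is weakest.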

| Metric | Risk Implication |
| --- | --- |
| Key Latency | Potential for missed block production |
| Slashing History | Indicator of operational negligence |
| Hardware Entropy | Susceptibility to side-channel attacks |

Approach

Modern practitioners employ a hybrid approach that combines automated static analysis with manual red-teaming of the node architecture. The focus has shifted toward continuous monitoring rather than point-in-time assessments. This is where the pricing of validator risk becomes most informative, and most dangerous if ignored.

By integrating real-time telemetry, auditors gain visibility into the validator’s performance during high-volatility events, where consensus failures often manifest.
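One way to picture continuous monitoring is a rolling window over recent duty outcomes that raises a flag when the miss rate crosses a threshold. This is a minimal sketch: the `MissRateMonitor` class, its window size, and its threshold are assumptions for illustration, and a real telemetry pipeline would ingest far richer signals.

```python
from collections import deque


class MissRateMonitor:
    """Tracks the share of missed duties over the most recent `window` events."""

    def __init__(self, window: int, threshold: float) -> None:
        if window <= 0:
            raise ValueError("window must be positive")
        self.events: deque[bool] = deque(maxlen=window)  # oldest entries drop off
        self.threshold = threshold

    def miss_rate(self) -> float:
        # True counts as 1, so the sum is the number of misses in the window.
        return sum(self.events) / len(self.events) if self.events else 0.0

    def record(self, missed: bool) -> bool:
        """Record one duty outcome; return True if the alert threshold is exceeded."""
        self.events.append(missed)
        return self.miss_rate() > self.threshold
```

Because the window is bounded, a burst of misses during a volatile period raises the alert quickly and clears once performance recovers.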

Techniques include:

- Stress testing consensus clients against malicious network partitions to observe recovery behaviors.

- Reviewing multi-party computation protocols to ensure that key shares remain secure during rotation.

- Analyzing the validator’s capital structure to determine if sufficient liquidity exists to cover potential slashing penalties without triggering fire sales.
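The capital-structure check in the last item can be sketched as a coverage ratio: liquid reserves divided by the worst-case slashing penalty. The function names and the idea of a single `max_penalty_fraction` are simplifying assumptions; actual penalty schedules depend on the protocol and on how many validators are penalized together.

```python
def worst_case_penalty(stake: float, max_penalty_fraction: float) -> float:
    """Largest assumed principal loss for the staked amount."""
    if not 0.0 <= max_penalty_fraction <= 1.0:
        raise ValueError("max_penalty_fraction must be in [0, 1]")
    return stake * max_penalty_fraction


def slashing_coverage_ratio(liquid_reserves: float, penalty: float) -> float:
    """Reserves available per unit of worst-case penalty."""
    if penalty <= 0:
        raise ValueError("penalty must be positive")
    return liquid_reserves / penalty
```

A ratio below 1.0 suggests the operator would need to liquidate other assets to absorb a worst-case penalty, which is the fire-sale scenario the analysis is meant to detect.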

Continuous monitoring protocols transform validator audits from static reports into dynamic risk management systems for institutional staking.

Market makers often use these audit results to adjust the risk premium applied to liquid staking tokens. A validator with a high-security rating commands a lower cost of capital, whereas poor audit performance leads to a widening of the spread in derivative markets. This feedback loop ensures that security is directly priced into the decentralized financial stack.
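To make the feedback loop concrete, the sketch below maps an audit score to a risk premium with a linear rule. The linear form, the basis-point defaults, and the function name are all hypothetical; real desks would calibrate any such mapping against market data.

```python
def risk_premium_bps(
    audit_score: float, base_bps: float = 10.0, max_extra_bps: float = 90.0
) -> float:
    """Premium in basis points: lowest for a perfect score, highest for a zero score.

    `audit_score` is assumed to be normalized to [0, 1], with 1 the best rating.
    """
    if not 0.0 <= audit_score <= 1.0:
        raise ValueError("audit_score must be in [0, 1]")
    return base_bps + (1.0 - audit_score) * max_extra_bps
```

Under these assumed defaults, a validator that drops from a score of 1.0 to 0.5 sees its premium rise from 10 to 55 basis points.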

Evolution

The trajectory of Validator Security Audits reflects the increasing sophistication of institutional demand. Initially, audits were superficial, focusing on basic server uptime. Current standards mandate deep dives into Distributed Validator Technology and sophisticated MEV extraction behaviors.

This shift is analogous to the maturation of prime brokerage services in traditional finance, where counterparty risk assessment became the primary driver of market stability.

Regulatory pressures have further accelerated this evolution. Jurisdictional requirements for operational transparency are forcing validators to adopt standardized reporting frameworks. The move toward automated, on-chain attestation of security posture is the next phase.

Instead of relying on periodic PDF reports, protocols are integrating cryptographic proofs that verify the security configuration of a validator in real-time, reducing the latency between a potential vulnerability and its remediation.
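A heavily simplified sketch of the idea: the validator commits to a canonical hash of its security configuration and authenticates that hash, and a verifier checks it against the configuration it expects. HMAC with a shared key is used here only as a stand-in; a production design would more plausibly rely on asymmetric signatures or hardware-backed remote attestation, and the configuration fields shown in the usage are hypothetical.

```python
import hashlib
import hmac
import json


def config_digest(config: dict) -> str:
    """SHA-256 over a canonical JSON encoding, so key order does not matter."""
    canonical = json.dumps(config, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def attest(config: dict, key: bytes) -> dict:
    """Produce a digest plus an HMAC tag (stand-in for a real signature)."""
    digest = config_digest(config)
    tag = hmac.new(key, digest.encode("utf-8"), hashlib.sha256).hexdigest()
    return {"digest": digest, "tag": tag}


def verify(attestation: dict, expected_config: dict, key: bytes) -> bool:
    """Check that the attestation matches the expected configuration."""
    expected = attest(expected_config, key)
    # compare_digest avoids leaking information through comparison timing.
    return hmac.compare_digest(
        attestation["digest"], expected["digest"]
    ) and hmac.compare_digest(attestation["tag"], expected["tag"])
```

For example, `verify(attest({"hsm_enabled": True}, key), {"hsm_enabled": True}, key)` succeeds, while a verifier expecting `{"hsm_enabled": False}` rejects the same attestation.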

| Audit Era | Primary Focus |
| --- | --- |
| Legacy | Server uptime and basic connectivity |
| Current | Key management and slashing mitigation |
| Future | Automated on-chain security attestations |

Horizon

The future of Validator Security Audits lies in the convergence of formal verification and autonomous risk agents. As networks grow in complexity, manual audits will struggle to keep pace with protocol upgrades. Automated agents will perform constant, high-frequency security scanning, triggering instantaneous adjustments to delegation weightings based on detected risk profiles.

This transition will likely lead to the commoditization of security, where nodes that fail to maintain a baseline of automated attestation are automatically excluded from institutional-grade staking pools.
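The automated reweighting and exclusion described here might look like the following sketch, which drops validators above a risk cutoff and splits delegation among the rest in proportion to one minus their risk score. The scoring scale, the `max_risk` cutoff, and the proportional rule are assumptions made for illustration.

```python
def delegation_weights(
    risk_scores: dict[str, float], max_risk: float = 0.5
) -> dict[str, float]:
    """Normalized delegation weights for validators at or below `max_risk`.

    Risk scores are assumed to lie in [0, 1], where higher means riskier.
    """
    eligible = {
        name: 1.0 - risk for name, risk in risk_scores.items() if risk <= max_risk
    }
    total = sum(eligible.values())
    if total == 0:
        return {}  # no validator qualifies, or all carry zero weight
    return {name: weight / total for name, weight in eligible.items()}
```

Rerunning the function whenever scores change yields the kind of continuous rebalancing the text anticipates, with excluded validators simply absent from the result.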

We are witnessing the emergence of a self-correcting financial system where validator performance is not merely tracked but algorithmically enforced by the market. The ultimate goal is to remove human error from the validator lifecycle entirely, relying on cryptographic proofs to guarantee operational safety. The critical pivot point remains the standardization of these proofs across heterogeneous chains, a challenge that will define the next cycle of infrastructure development.

What fundamental limit exists in creating a truly trustless, self-auditing validator network that does not rely on external human oversight?