Essence

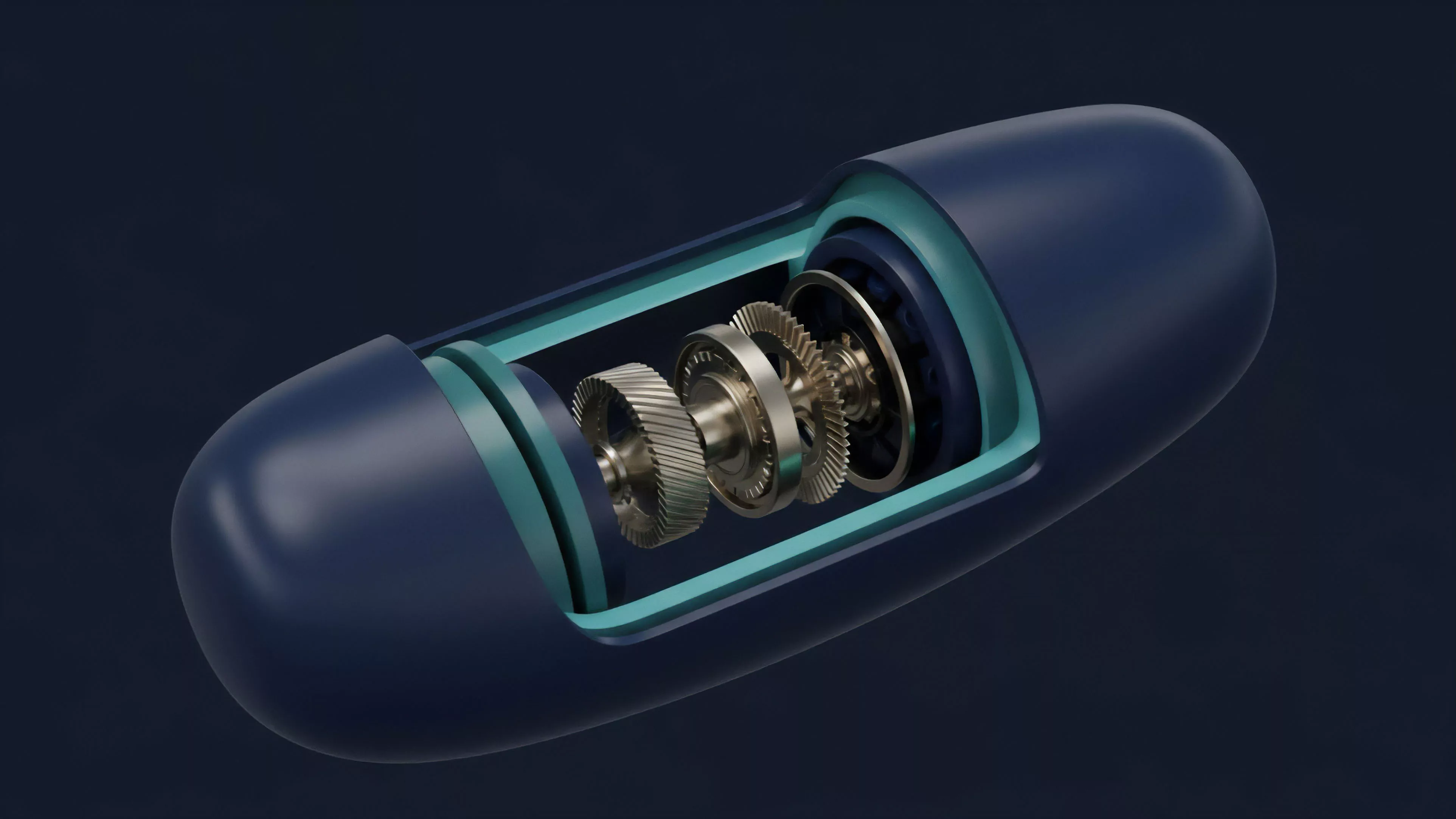

Trustless Data Verification functions as the cryptographic bridge ensuring that external information, the inputs required for derivative settlement, remains tamper-proof and authentic without relying on centralized intermediaries. This mechanism secures the integrity of decentralized finance by enforcing algorithmic truth, allowing smart contracts to ingest real-world data points while maintaining the non-custodial and permissionless properties of the underlying blockchain protocol.

Trustless data verification replaces human-centric reporting with cryptographic proofs to ensure derivative settlement accuracy.

At the mechanical level, this process requires decentralized oracle networks to aggregate, validate, and deliver information to execution engines. When a derivative contract triggers, the system demands a verified data state to determine the payout or liquidation event. By distributing this validation across independent nodes, the protocol eliminates the single point of failure inherent in traditional financial data providers.
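The aggregation step described above can be sketched in a few lines. This is a minimal illustration, not any specific oracle network's implementation: the function name `aggregate_reports`, the node identifiers, and the `min_reports` quorum parameter are all hypothetical. It shows why a median over independent reports tolerates a single faulty or dishonest node.

```python
from statistics import median


def aggregate_reports(reports: dict[str, float], min_reports: int = 3) -> float:
    """Return the median of node-reported prices.

    Raises ValueError when fewer than `min_reports` nodes answered, so a
    settlement is never computed from too small a sample (assumed policy).
    """
    if len(reports) < min_reports:
        raise ValueError(f"need at least {min_reports} reports, got {len(reports)}")
    return median(reports.values())


# Hypothetical reports: four honest nodes and one outlier ("node-d").
reports = {
    "node-a": 2001.5,
    "node-b": 2000.0,
    "node-c": 1999.0,
    "node-d": 2500.0,
    "node-e": 2000.5,
}
settlement_price = aggregate_reports(reports)
print(settlement_price)  # 2000.5
```

The outlier at 2500.0 does not move the result, which is the property that lets the contract settle on the aggregate rather than on any one provider's number.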

Origin

The necessity for Trustless Data Verification emerged from the fundamental architectural constraint of blockchains: the inability to natively access off-chain data.

Early decentralized applications faced a binary choice between isolation and dependence on centralized data feeds. This dependency reintroduced counterparty risk, contradicting the goal of building autonomous financial systems.

- Deterministic Execution necessitated that all nodes reach consensus on the same data input.

- Oracle Problem identified the technical hurdle of securely importing external variables like interest rates or asset prices.

- Cryptographic Proofs provided the solution to verify data authenticity without needing to trust the source entity.

Developers recognized that for decentralized options to mirror the sophistication of traditional markets, they required high-frequency, verifiable price feeds. The subsequent development of proof-of-authority and decentralized oracle consensus models enabled the transition from siloed experiments to integrated, multi-asset financial protocols.

Theory

The theoretical framework rests on adversarial game theory and cryptographic commitment schemes. In a decentralized environment, participants are incentivized to provide accurate data to maintain the value of the network, while penalties for malicious behavior ensure data integrity.
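A commitment scheme of the kind mentioned above can be illustrated with a hash-based commit-reveal round. This is a sketch under stated assumptions: the helper names `commit` and `verify_reveal` are hypothetical, and SHA-256 over a random salt plus the value is one common construction, not necessarily the one a given protocol uses. The point is that a node fixes its answer before seeing anyone else's, and cannot change it afterwards.

```python
import hashlib
import hmac
import secrets


def commit(value: str, salt: bytes) -> str:
    """Commitment = SHA-256(salt || value); published before the reveal phase."""
    return hashlib.sha256(salt + value.encode("utf-8")).hexdigest()


def verify_reveal(commitment: str, value: str, salt: bytes) -> bool:
    """Check that the revealed (value, salt) pair matches the earlier commitment."""
    return hmac.compare_digest(commitment, commit(value, salt))


# Commit phase: the node publishes only the digest.
salt = secrets.token_bytes(16)
commitment = commit("2000.50", salt)

# Reveal phase: the node discloses value and salt; anyone can verify.
print(verify_reveal(commitment, "2000.50", salt))  # True
print(verify_reveal(commitment, "2100.00", salt))  # False
```

The random salt keeps observers from guessing the committed price by hashing plausible values during the commit phase.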

The protocol treats data feeds as high-stakes inputs where any deviation results in immediate economic forfeiture.

| Component | Functional Role |
| --- | --- |
| Data Aggregator | Collates inputs from multiple independent sources |
| Consensus Mechanism | Validates the median or weighted average of inputs |
| Cryptographic Proof | Attests to the authenticity of the ingested data |

The integrity of decentralized derivatives depends on the economic cost of subverting the consensus mechanism exceeding the potential gain from data manipulation.
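The security condition stated above reduces to a single comparison. The sketch below makes it concrete under simplifying assumptions that are not from the source: an attacker must control a fixed fraction of total stake (`corruption_threshold`), and that stake is fully forfeited if the attack is detected. All names and figures are hypothetical.

```python
def is_economically_secure(
    total_stake: float,
    corruption_threshold: float,
    extractable_value: float,
) -> bool:
    """True when the stake an attacker must put at risk exceeds what they can gain.

    total_stake          -- collateral locked by all oracle nodes
    corruption_threshold -- fraction of stake needed to control the output (assumed)
    extractable_value    -- profit available from manipulating the feed
    """
    cost_of_corruption = total_stake * corruption_threshold
    return cost_of_corruption > extractable_value


# 10M staked, majority control needed: attacking costs 5M of slashable stake.
print(is_economically_secure(10_000_000, 0.5, 3_000_000))  # True
print(is_economically_secure(10_000_000, 0.5, 6_000_000))  # False
```

In the second case the derivative positions that depend on the feed are worth more than the stake securing it, which is exactly the condition the text warns against.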

From a Quantitative Finance perspective, the verification process must operate within the latency constraints of the protocol. If the verification step is too slow, the price data is stale by the time it is used, creating arbitrage opportunities or triggering liquidations at inaccurate prices. The system must strike a balance in which the computational overhead of verification does not degrade the responsiveness of the derivative engine.
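A staleness guard is the simplest expression of this latency constraint. The following sketch assumes a hypothetical `PriceUpdate` record and a `max_age_seconds` tolerance chosen by the protocol; neither comes from the source. The consumer refuses any update older than the tolerance instead of settling on it.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PriceUpdate:
    price: float
    timestamp: float  # seconds since epoch, as attested by the oracle


def is_fresh(update: PriceUpdate, now: float, max_age_seconds: float = 60.0) -> bool:
    """Accept an update only if it is not from the future and not older than the tolerance."""
    age = now - update.timestamp
    return 0 <= age <= max_age_seconds


now = 1_700_000_100.0  # fixed "current time" for the example
recent = PriceUpdate(price=2000.5, timestamp=1_700_000_070.0)  # 30 s old
stale = PriceUpdate(price=1990.0, timestamp=1_700_000_000.0)   # 100 s old

print(is_fresh(recent, now))  # True
print(is_fresh(stale, now))   # False
```

Tightening `max_age_seconds` reduces the window for stale-price arbitrage but increases the chance that a liquidation is blocked while waiting for a new update, which is the trade-off the paragraph describes.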

Approach

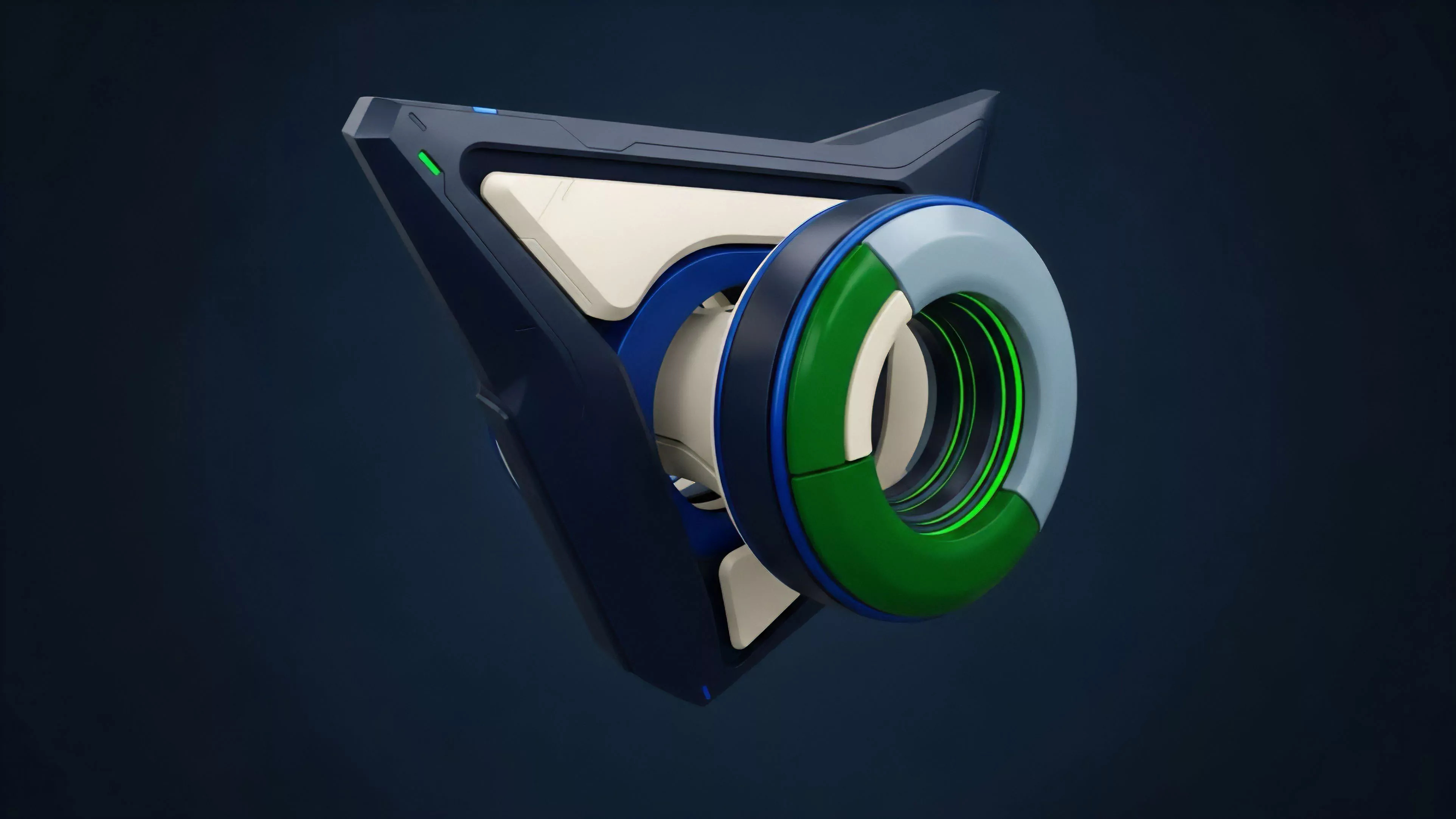

Current implementations prioritize decentralized node selection and stake-based reputation systems to ensure reliability.

Protocols now utilize threshold signature schemes to sign data packets, ensuring that the information received by the smart contract is a consensus output from a verified set of participants.
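The acceptance rule behind such schemes, "accept only if at least k of n known participants vouch for this exact message", can be sketched as follows. This is deliberately not a threshold signature scheme: a real one produces a single aggregate signature checked against one group public key. Here per-node HMAC tags with hypothetical pre-shared keys stand in for signatures, purely to illustrate the quorum logic. The names `sign`, `quorum_reached`, and the message format are assumptions.

```python
import hashlib
import hmac


def sign(key: bytes, message: bytes) -> bytes:
    """Stand-in for a node signature: an HMAC-SHA256 tag over the message."""
    return hmac.new(key, message, hashlib.sha256).digest()


def quorum_reached(
    message: bytes,
    tags: dict[str, bytes],
    node_keys: dict[str, bytes],
    threshold: int,
) -> bool:
    """Count valid tags from known nodes and compare against the k-of-n threshold."""
    valid = sum(
        1
        for node_id, tag in tags.items()
        if node_id in node_keys
        and hmac.compare_digest(tag, sign(node_keys[node_id], message))
    )
    return valid >= threshold


# Hypothetical registered node set and data packet.
node_keys = {"a": b"key-a", "b": b"key-b", "c": b"key-c"}
message = b"ETH-USD:2000.50:1700000000"
tags = {
    "a": sign(node_keys["a"], message),
    "b": sign(node_keys["b"], message),
    "c": b"forged",  # invalid tag from a faulty or malicious node
}

print(quorum_reached(message, tags, node_keys, threshold=2))  # True
print(quorum_reached(message, tags, node_keys, threshold=3))  # False
```

An on-chain verifier would check a compact aggregate signature rather than individual tags, which is what keeps verification cost independent of the number of nodes.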

- Node Staking requires participants to lock collateral, creating a direct financial disincentive for malicious reporting.

- Latency Mitigation employs off-chain aggregation to reduce the load on the main consensus layer.

- Multi-Source Ingestion prevents localized data failure by pulling from diverse, independent exchange APIs.

These systems operate as automated auditors, continuously comparing each node's reported price against the aggregate of all reports. When a node submits data that deviates from the aggregate by more than a protocol-defined tolerance, the protocol triggers an automatic slashing event. This keeps the derivative pricing model anchored to the consensus view of the market, regardless of individual node behavior.
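The deviation check and slashing step can be sketched together. This is a minimal illustration under assumptions not stated in the source: the reference price is the median of the submitted reports, the tolerance is a fixed relative band (`max_deviation`), and a slash removes a fixed fraction of stake. The function names and all figures are hypothetical.

```python
from statistics import median


def find_slashable(reports: dict[str, float], max_deviation: float = 0.02) -> list[str]:
    """Return nodes whose report deviates from the median by more than the tolerance.

    Assumes all prices are positive, so the median is a safe divisor.
    """
    reference = median(reports.values())
    return sorted(
        node
        for node, price in reports.items()
        if abs(price - reference) / reference > max_deviation
    )


def slash(
    stakes: dict[str, float],
    offenders: list[str],
    penalty_fraction: float,
) -> dict[str, float]:
    """Return new stake balances with the penalty applied to offending nodes only."""
    return {
        node: stake * (1 - penalty_fraction) if node in offenders else stake
        for node, stake in stakes.items()
    }


reports = {"a": 2000.0, "b": 2001.0, "c": 1999.0, "d": 2300.0}
stakes = {"a": 1000.0, "b": 1000.0, "c": 1000.0, "d": 1000.0}

offenders = find_slashable(reports)           # median is 2000.5; "d" is ~15% away
new_stakes = slash(stakes, offenders, 0.25)

print(offenders)        # ['d']
print(new_stakes["d"])  # 750.0
```

Because the reference is derived from the reports themselves, this check only works while a majority of nodes are honest; it detects outliers, not a coordinated shift of the whole set.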

Evolution

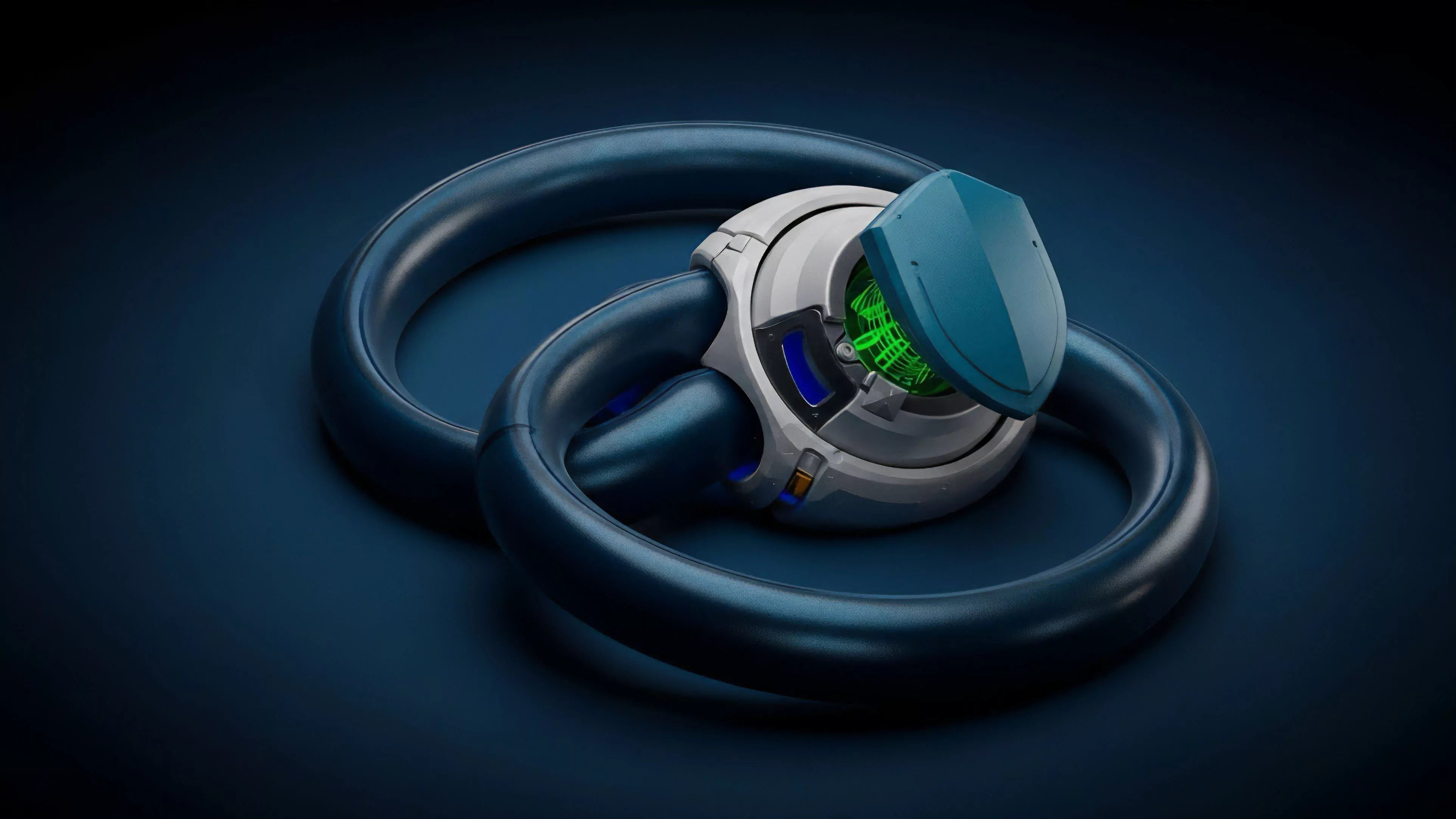

The transition from early, monolithic oracle designs to modular data infrastructure reflects the maturing requirements of decentralized markets.

Initial models suffered from fragility and limited scalability, often requiring manual intervention or centralized oversight. Some current designs use Zero-Knowledge Proofs to verify data validity without exposing the underlying source, improving both privacy and efficiency.

Modern oracle architectures move toward modularity to support the high-frequency requirements of complex crypto derivatives.

We have moved beyond simple price feeds into complex data availability layers that provide verifiable computation for arbitrary functions. This evolution allows for the creation of exotic options that require complex volatility calculations or multi-asset correlations. The shift acknowledges that market participants demand not only accuracy but also the ability to audit the verification process in real time.

Horizon

Future developments will focus on cryptographic hardware integration and decentralized machine learning to automate data verification.

As derivative complexity increases, the ability to verify large-scale datasets will become the primary competitive advantage for protocols. The goal is to move toward fully autonomous data ecosystems that require zero human oversight.

| Innovation | Anticipated Impact |
| --- | --- |
| Trusted Execution Environments | Hardware-level verification of data origin |
| Predictive Oracle Models | Reduced latency in price discovery |
| Cross-Chain Interoperability | Unified data state across fragmented liquidity pools |

This path leads to a financial architecture where the full derivative lifecycle, from quote to settlement, exists on-chain, secured by verifiable math rather than legal contracts. The convergence of programmable money and verifiable data will redefine the boundaries of systemic risk, moving the industry toward a state of complete, algorithmic transparency.