State Transition Finality

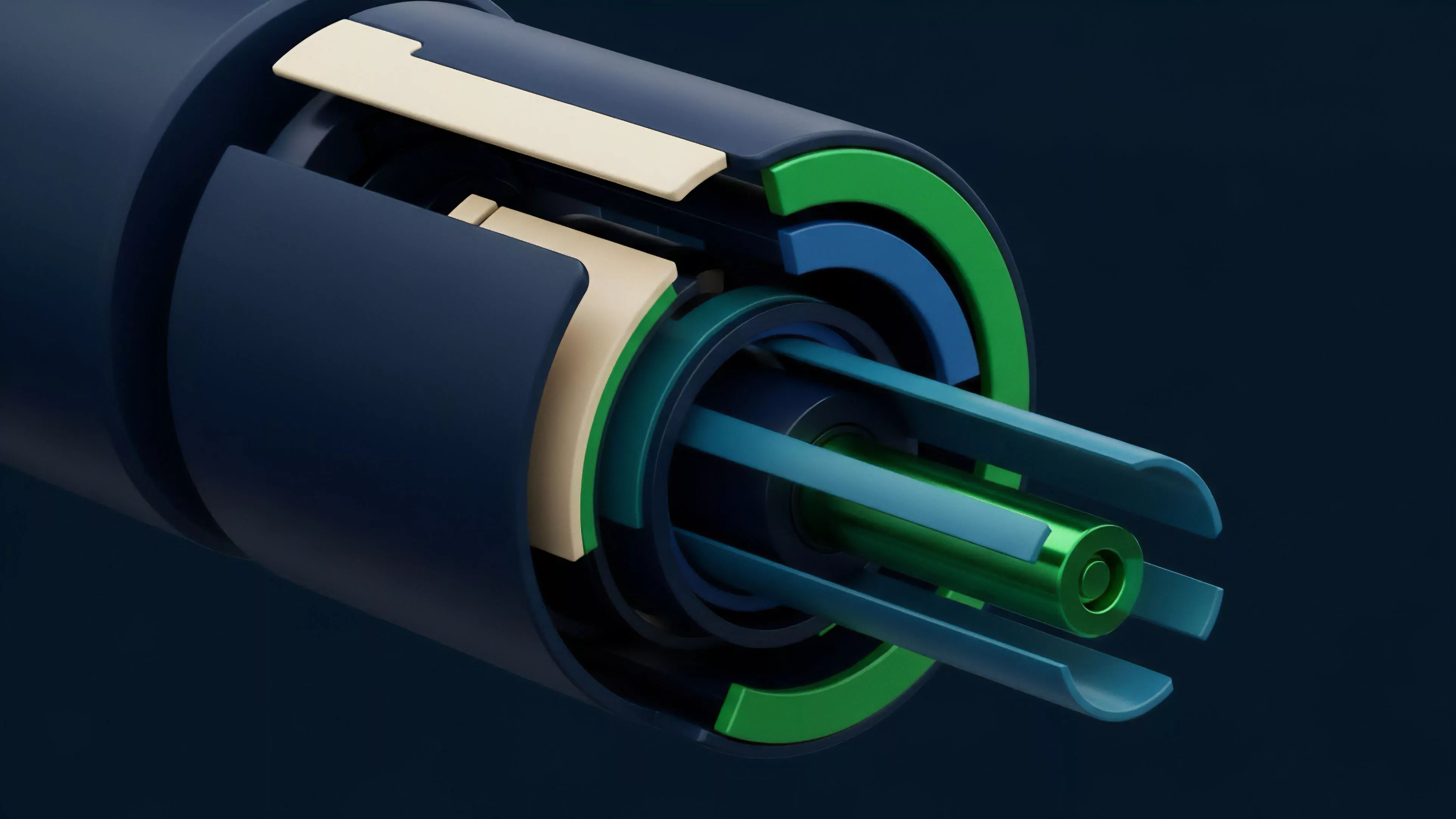

Transaction Verification constitutes the definitive cryptographic confirmation that a state change within a distributed ledger adheres to the consensus rules of the protocol. In the domain of decentralized derivatives, this process serves as the synthetic clearinghouse, replacing the centralized counterparty with a deterministic execution environment. The integrity of an entire options market rests upon the ability of the network to validate that the underlying collateral exists, the signature is authentic, and the execution logic of the smart contract remains uncompromised.

Transaction Verification establishes the cryptographic truth necessary for trustless settlement in decentralized derivative markets.

The systemic importance of this mechanism resides in its role as the arbiter of solvency. Without a rigorous Transaction Verification protocol, the risk of double-spending or unauthorized state mutation would render complex financial instruments like perpetual swaps or exotic options untenable. The process transforms a broadcast intent into an immutable historical record, ensuring that every participant operates within a shared, verifiable reality where the laws of the code dictate the movement of capital.

Architectural Integrity

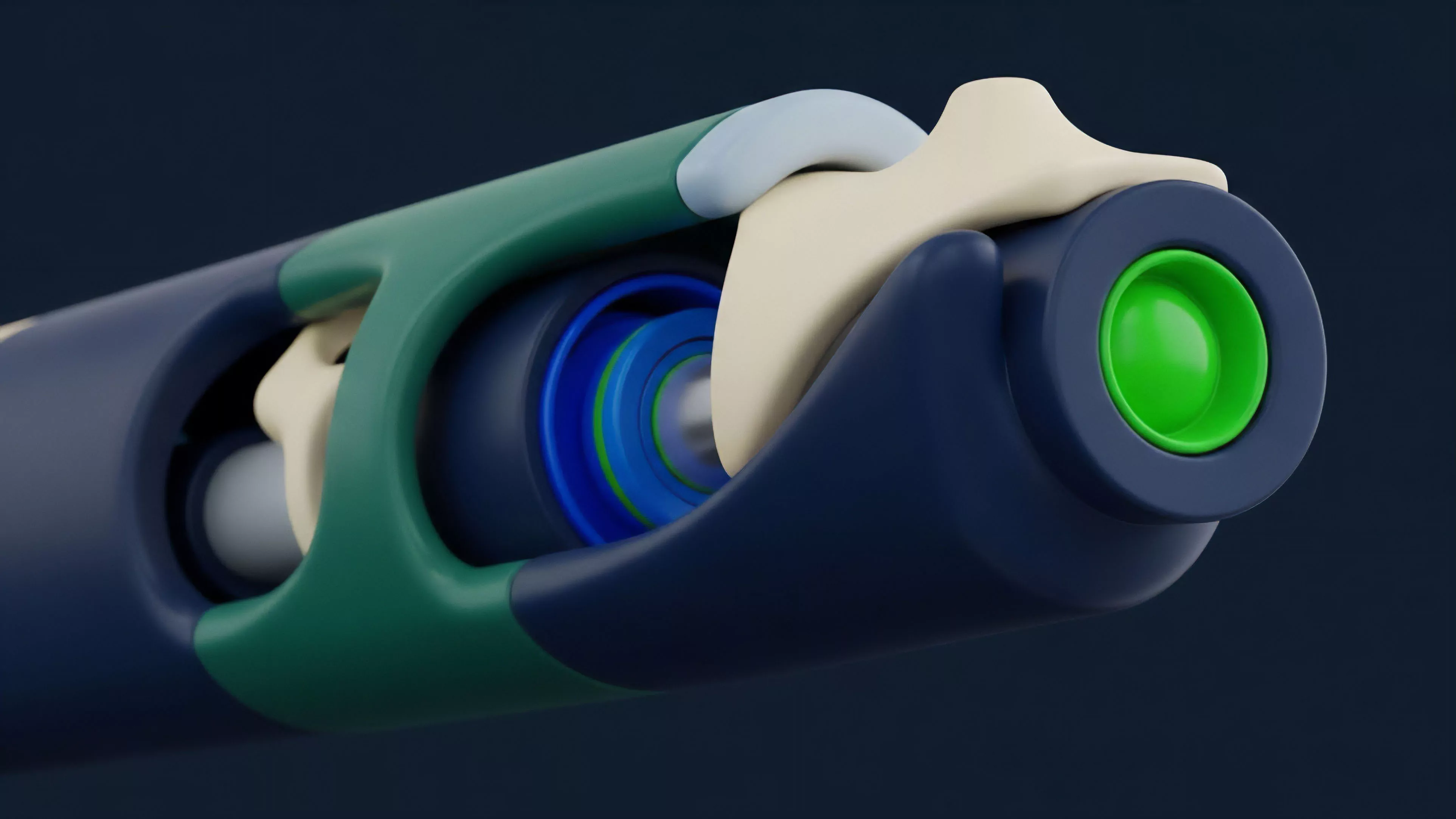

The architecture of Transaction Verification demands a balance between security and throughput. High-frequency trading environments require low-latency validation to minimize slippage and execution risk. Conversely, the security of the underlying assets necessitates a high degree of decentralization to prevent malicious actors from subverting the verification process.

This tension defines the technical boundaries of modern decentralized finance, forcing a choice between the probabilistic finality of Nakamoto-style consensus and the deterministic finality of vote-based consensus protocols.

| Verification Metric | Centralized Clearing | Cryptographic Verification |
|---|---|---|
| Trust Assumption | Institutional Reputation | Mathematical Proof |
| Settlement Speed | T+1 to T+2 Days (market-dependent) | Seconds to Minutes |
| Transparency | Opaque Internal Ledgers | Publicly Auditable Code |
| Counterparty Risk | Systemic Institution Failure | Smart Contract Vulnerability |

Triple-Entry Accounting Foundations

The genesis of Transaction Verification lies in the transition from double-entry bookkeeping to triple-entry systems. While traditional finance relies on two parties maintaining independent ledgers, the cryptographic model introduces a third, public ledger that serves as an objective witness. This innovation, first realized at scale through Nakamoto consensus, solved the double-spend problem without a central authority.

The early focus remained on simple value transfers, yet the logic laid the groundwork for the complex state transitions required by modern derivative protocols.
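To make the double-spend rule concrete, here is a minimal Python sketch of an unspent-transaction-output (UTXO) set, the bookkeeping model Bitcoin uses. It assumes away signatures, scripts, fees, and the consensus step that gives every node the same transaction order; `OutPoint` and `UtxoSet` are hypothetical names chosen for illustration. The point it shows is narrow: once an output is consumed, any later transaction that references it is rejected.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class OutPoint:
    """Reference to one output of an earlier transaction (hypothetical type)."""
    tx_id: str
    index: int


class UtxoSet:
    """Toy set of unspent outputs: each output can be consumed exactly once."""

    def __init__(self) -> None:
        self._unspent: dict[OutPoint, int] = {}

    def add_output(self, outpoint: OutPoint, amount: int) -> None:
        self._unspent[outpoint] = amount

    def is_unspent(self, outpoint: OutPoint) -> bool:
        return outpoint in self._unspent

    def apply(self, inputs: list[OutPoint], outputs: dict[OutPoint, int]) -> bool:
        """Validate a transaction and apply it atomically; on failure nothing changes."""
        if not inputs or len(set(inputs)) != len(inputs):
            return False  # empty, or the same output referenced twice in one transaction
        if any(op not in self._unspent for op in inputs):
            return False  # unknown or already spent: the double-spend case
        if any(op in self._unspent for op in outputs) or any(v < 0 for v in outputs.values()):
            return False  # no overwriting existing outputs, no negative amounts
        if sum(outputs.values()) > sum(self._unspent[op] for op in inputs):
            return False  # outputs may not exceed the value being spent
        for op in inputs:
            del self._unspent[op]
        self._unspent.update(outputs)
        return True


# Usage: the second attempt to spend the same coin is rejected.
utxos = UtxoSet()
coin = OutPoint("genesis", 0)
utxos.add_output(coin, 50)
first = utxos.apply([coin], {OutPoint("tx1", 0): 30, OutPoint("tx1", 1): 20})
second = utxos.apply([coin], {OutPoint("tx2", 0): 50})
print(first, second)  # True False
```

In a real network the hard part is not this check but agreeing on which of two conflicting transactions came first, which is what the consensus layer supplies.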

The mathematical certainty of state transitions determines the risk premium associated with settlement latency in high-frequency derivative trading.

The shift toward smart contract platforms expanded the scope of Transaction Verification from simple balance checks to the validation of complex computational outputs. This evolution allowed for the creation of automated market makers and decentralized option vaults, where the verification process confirms that the pricing algorithms and collateralization ratios are maintained in real time. The transition from proof-of-work to proof-of-stake further refined the economic incentives surrounding verification: validators lock up stake that can be slashed for provable misbehavior, aligning their interests with the long-term stability of the financial ecosystem.
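For a constant-product automated market maker (the `x * y = k` design), verifying the pricing logic can reduce to an integer invariant check. The sketch below is loosely modeled on the fee-adjusted check used by Uniswap v2 with its 0.30% fee; `max_output`, `verify_swap`, and the basis-point `fee_bps` parameter are illustrative assumptions, and a vault's collateralization checks would follow the same accept-or-reject pattern with different arithmetic.

```python
def max_output(reserve_in: int, reserve_out: int, amount_in: int, fee_bps: int = 30) -> int:
    """Largest output a constant-product pool can pay for amount_in (integer floor)."""
    amount_in_with_fee = amount_in * (10_000 - fee_bps)
    return (amount_in_with_fee * reserve_out) // (reserve_in * 10_000 + amount_in_with_fee)


def verify_swap(reserve_in: int, reserve_out: int, amount_in: int, amount_out: int,
                fee_bps: int = 30) -> bool:
    """Accept a proposed swap only if the fee-adjusted reserve product does not decrease."""
    if amount_in <= 0 or amount_out <= 0 or amount_out >= reserve_out:
        return False
    new_in = reserve_in + amount_in
    new_out = reserve_out - amount_out
    # Only (10_000 - fee_bps) / 10_000 of the input counts toward the invariant; both
    # sides are scaled by 10_000 so the comparison stays in exact integer arithmetic.
    adjusted_in = new_in * 10_000 - amount_in * fee_bps
    return adjusted_in * new_out >= reserve_in * reserve_out * 10_000


quote = max_output(1_000_000, 1_000_000, 10_000)
print(quote, verify_swap(1_000_000, 1_000_000, 10_000, quote))
print(verify_swap(1_000_000, 1_000_000, 10_000, quote + 1))  # False: pays out too much
```

Because `max_output` floors the exact maximum, a request for even one more unit breaks the invariant and is rejected, so the verifier never has to trust the pricing code that produced the quote.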

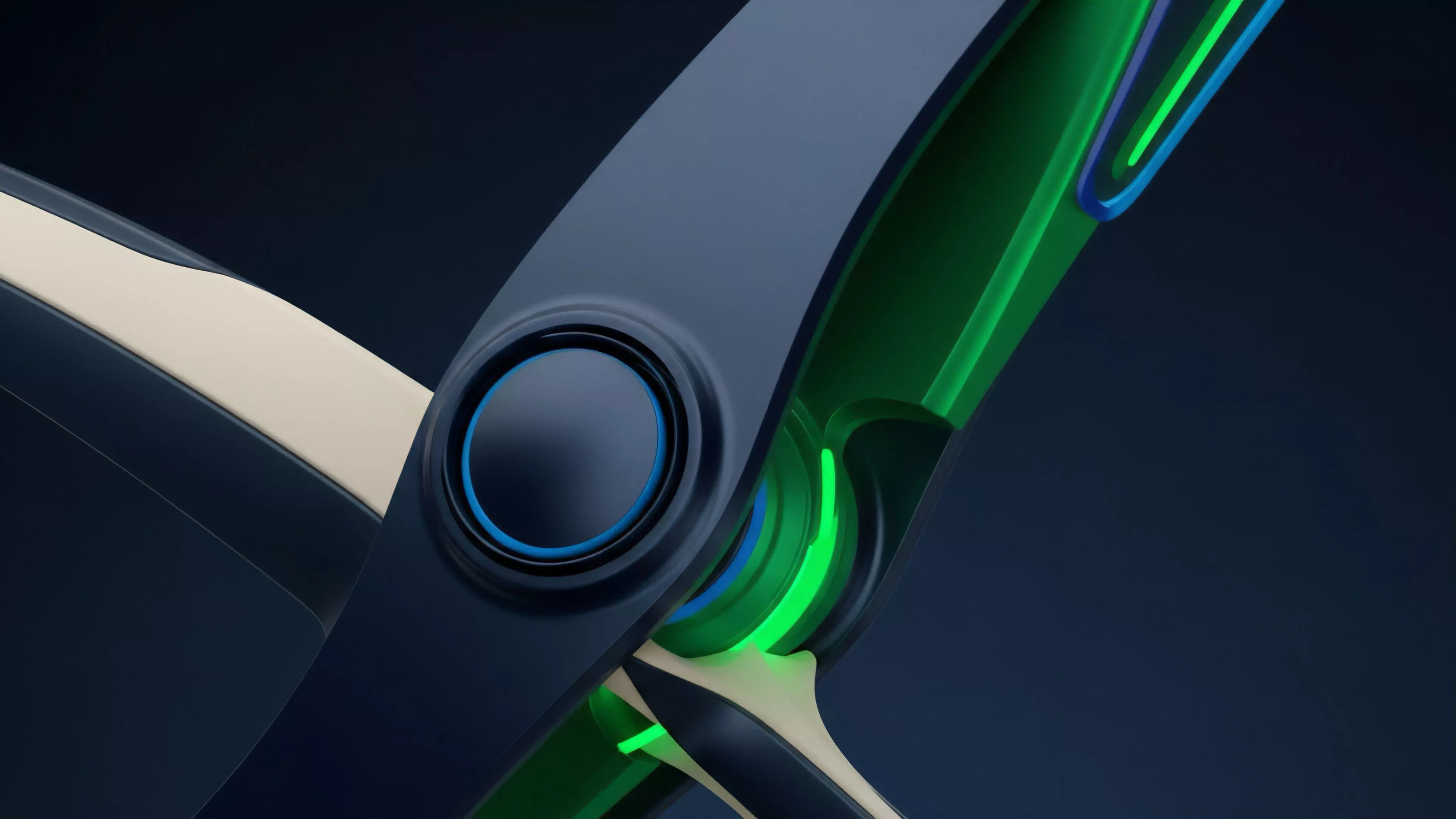

Consensus Mechanics and Determinism

The theoretical framework of Transaction Verification draws on the CAP theorem, which states that when a network partition occurs, a distributed system must choose between consistency and availability; it cannot guarantee both alongside partition tolerance. In the context of financial settlement, consistency is non-negotiable. The verification engine must ensure that all honest nodes agree on the finalized state of the market, even if that means pausing progress during a partition.

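This requirement leads to the implementation of Byzantine Fault Tolerant (BFT) algorithms, which allow the network to reach consensus even if a bounded subset of participants acts maliciously or fails. Classical BFT protocols tolerate up to f faulty validators out of n = 3f + 1, that is, fewer than one third.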
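The one-third bound follows from quorum arithmetic. The Python sketch below, with hypothetical function names, computes the fault tolerance and commit quorum for n validators and checks the property that makes BFT finality safe: any two commit quorums intersect in at least one honest validator, so two conflicting blocks cannot both gather a quorum. It assumes a fixed, equally weighted validator set; stake-weighted protocols apply the same bound to voting power rather than head count.

```python
def max_faulty(n: int) -> int:
    """Largest f such that n >= 3f + 1, i.e. the Byzantine validators n can tolerate."""
    if n < 1:
        raise ValueError("need at least one validator")
    return (n - 1) // 3


def commit_quorum(n: int) -> int:
    """Votes required to commit a block: n - f (equal to 2f + 1 when n = 3f + 1)."""
    return n - max_faulty(n)


def quorums_share_honest_validator(n: int) -> bool:
    """Two quorums overlap in at least 2q - n validators; if that overlap exceeds f,
    it must contain an honest validator, who will not vote for two conflicting blocks."""
    q, f = commit_quorum(n), max_faulty(n)
    return 2 * q - n > f


for n in (4, 7, 100):
    print(n, max_faulty(n), commit_quorum(n))
# prints: 4 1 3 / 7 2 5 / 100 33 67
```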

Probabilistic vs. Deterministic Finality

The distinction between probabilistic and deterministic finality is a primary concern for derivative architects. Protocols like Bitcoin offer probabilistic finality, where the likelihood of a transaction being reversed decreases as more blocks are added to the chain. For derivative markets, where large liquidations can occur in seconds, this uncertainty introduces systemic risk.

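Modern platforms often favor BFT-based consensus, which provides deterministic finality: once Transaction Verification is complete and a block is committed, the trade is irreversible and the collateral is secure, provided the fault threshold holds. Layer 2 solutions can offer faster confirmations, but their ultimate finality depends on settlement to the base layer.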
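The rate at which probabilistic finality hardens can be quantified. Section 11 of the Bitcoin whitepaper models an attacker controlling a share `q` of total hash power trying to overtake an honest chain that is `z` blocks ahead; the Python function below reproduces that calculation. It inherits the whitepaper's simplifying assumptions (constant hash rates, Poisson-distributed attacker progress) and ignores network latency and strategies such as selfish mining.

```python
import math


def attacker_success_probability(q: float, z: int) -> float:
    """Probability that an attacker with hash-power share q ever overtakes an
    honest chain that is z blocks ahead (Bitcoin whitepaper, section 11)."""
    if not 0.0 <= q <= 1.0 or z < 0:
        raise ValueError("q must be in [0, 1] and z must be non-negative")
    p = 1.0 - q
    if q >= p:
        return 1.0  # an attacker with at least half the hash power catches up eventually
    ratio = q / p
    lam = z * ratio  # expected attacker progress while the honest chain mines z blocks
    poisson = math.exp(-lam)  # Poisson probability of k = 0 attacker blocks
    never_catches_up = 0.0
    for k in range(z + 1):
        if k > 0:
            poisson *= lam / k  # Poisson probability of exactly k attacker blocks
        # Having mined k blocks, the attacker is z - k behind and never closes the gap
        # with probability 1 - ratio ** (z - k) (the gambler's-ruin result).
        never_catches_up += poisson * (1.0 - ratio ** (z - k))
    return 1.0 - never_catches_up


for z in (0, 1, 2, 5, 10):
    print(z, f"{attacker_success_probability(0.1, z):.7f}")
```

For `q = 0.1` the output should match the whitepaper's own table, falling to roughly 0.0009 at `z = 5`. If `q` is one half or more, the function returns 1, which is why waiting for more confirmations offers no protection against a majority attacker.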

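- Validation begins with verifying the transaction's digital signature (for example, ECDSA over secp256k1 on Bitcoin and Ethereum) to confirm that the initiator controls the private key of the sending account.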

- Network nodes must reach consensus on the inclusion of the transaction within a specific block based on the protocol rules.

- State root updates reflect the new balance or contract status after the execution of the derivative logic.

- In zero-knowledge systems, cryptographic proofs are generated to provide evidence of correct execution without revealing the underlying data.
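The state-root step can be illustrated with a toy commitment scheme. This Python sketch assumes an account-to-balance map committed through a binary SHA-256 Merkle tree over sorted entries; production chains use richer structures (Ethereum, for instance, uses a Merkle Patricia trie). Signature checks are assumed to have passed already, account names are assumed not to contain `=`, and all function names are hypothetical.

```python
from __future__ import annotations

import hashlib


def _hash(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def merkle_root(leaves: list[bytes]) -> bytes:
    """Binary SHA-256 Merkle root; an odd node at any level is paired with itself."""
    if not leaves:
        return _hash(b"")
    level = [_hash(b"\x00" + leaf) for leaf in leaves]  # 0x00 prefix marks leaf hashes
    while len(level) > 1:
        if len(level) % 2 == 1:
            level.append(level[-1])
        # 0x01 prefix marks interior hashes, so a leaf can never pose as an inner node
        level = [_hash(b"\x01" + level[i] + level[i + 1]) for i in range(0, len(level), 2)]
    return level[0]


def state_root(balances: dict[str, int]) -> bytes:
    """Commit to an account -> balance map; sorting makes the root order-independent."""
    leaves = [f"{account}={balance}".encode() for account, balance in sorted(balances.items())]
    return merkle_root(leaves)


def apply_transfer(balances: dict[str, int], sender: str, receiver: str,
                   amount: int) -> tuple[dict[str, int], bytes]:
    """Validate and apply one transfer, returning the new balances and new state root.

    Signature verification is assumed to have happened before this call.
    """
    if amount <= 0:
        raise ValueError("amount must be positive")
    if balances.get(sender, 0) < amount:
        raise ValueError("insufficient balance")
    new_balances = dict(balances)  # the prior state is left untouched
    new_balances[sender] -= amount
    new_balances[receiver] = new_balances.get(receiver, 0) + amount
    return new_balances, state_root(new_balances)


before = {"alice": 100, "bob": 20}
after, root = apply_transfer(before, "alice", "bob", 30)
print(after, root.hex()[:16])
```

Every node that applies the same transfer to the same prior state arrives at the same 32-byte root, so comparing roots is enough to confirm agreement on the entire ledger.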

Execution Methodologies and Scaling

Current methodologies for Transaction Verification are increasingly focused on off-chain computation with on-chain verification. This shift addresses the scalability bottlenecks that plagued early blockchain designs. Zero-knowledge proofs (ZKPs) represent the frontier of this methodology, allowing complex trades to be verified without requiring every node to re-execute them.

This approach significantly reduces the computational burden on the main network while maintaining the security guarantees of the underlying layer.

Verification Scaling Strategies

The industry has converged on several distinct strategies for handling high volumes of Transaction Verification. These strategies differ in their trust assumptions and technical complexity.

| Strategy | Verification Mechanism | Trust Assumption |
|---|---|---|
| Optimistic Rollups | Fraud Proofs | One Honest Observer |
| ZK-Rollups | Validity Proofs | Cryptographic Soundness |
| Sidechains | Independent Consensus | Validator Set Integrity |
| State Channels | Multi-sig Settlement | Participant Cooperation |

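The implementation of Transaction Verification within rollup systems involves a multi-stage process. First, the transaction is bundled with others and ordered by a sequencer. Then, a commitment to the batch and its resulting state is posted to the main chain, accompanied by a validity proof in a ZK-rollup or left open to fraud proofs during a challenge window in an optimistic rollup.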

The main chain’s role is reduced to verifying the proof or adjudicating disputes, rather than processing each individual trade. This separation of execution and verification allows for the throughput necessary to support global derivative markets.
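This division of labor can be sketched for the optimistic case from the table, where the main chain accepts a sequencer's claimed post-state unless a challenger shows, by re-executing the batch, that it is wrong. This is a deliberately simplified Python model under stated assumptions: a SHA-256 hash of canonical JSON stands in for a Merkle state root, the whole batch is re-executed rather than narrowed down to a single disputed step through an interactive game (as many deployed fraud-proof systems do), and validity-proof (ZK) verification is not modeled. `BatchCommitment`, `sequencer_commit`, and `fraud_proof_succeeds` are hypothetical names.

```python
from __future__ import annotations

import hashlib
import json
from dataclasses import dataclass


def digest(obj) -> str:
    """SHA-256 over canonical JSON; a stand-in for a real Merkle commitment."""
    return hashlib.sha256(json.dumps(obj, sort_keys=True).encode()).hexdigest()


def execute_batch(balances: dict[str, int], batch: list[tuple[str, str, int]]) -> dict[str, int]:
    """Deterministic state transition: apply transfers in order, skipping invalid ones."""
    state = dict(balances)
    for sender, receiver, amount in batch:
        if amount > 0 and state.get(sender, 0) >= amount:
            state[sender] -= amount
            state[receiver] = state.get(receiver, 0) + amount
    return state


@dataclass(frozen=True)
class BatchCommitment:
    """What a sequencer posts to the main chain instead of individual trades."""
    pre_root: str
    batch_hash: str
    post_root: str


def sequencer_commit(balances: dict[str, int], batch: list[tuple[str, str, int]]) -> BatchCommitment:
    post_state = execute_batch(balances, batch)
    return BatchCommitment(digest(balances), digest(batch), digest(post_state))


def fraud_proof_succeeds(commitment: BatchCommitment, balances: dict[str, int],
                         batch: list[tuple[str, str, int]]) -> bool:
    """A challenger re-executes the batch and disputes a mismatching post-state root."""
    if digest(balances) != commitment.pre_root or digest(batch) != commitment.batch_hash:
        raise ValueError("challenge data does not match the posted commitment")
    return digest(execute_batch(balances, batch)) != commitment.post_root


state = {"alice": 100, "bob": 0}
batch = [("alice", "bob", 40), ("bob", "carol", 10)]
honest = sequencer_commit(state, batch)
forged = BatchCommitment(honest.pre_root, honest.batch_hash, digest({"alice": 0, "mallory": 100}))
print(fraud_proof_succeeds(honest, state, batch), fraud_proof_succeeds(forged, state, batch))  # False True
```

The main chain only stores three hashes per batch; it does real work only when someone disputes a commitment, which is the "one honest observer" assumption in practice.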

State Validation Transitions

The evolution of Transaction Verification has moved from a monolithic process to a modular one.

In the early stages of decentralized finance, every node on the network was responsible for verifying every aspect of every transaction. This led to extreme congestion and high fees during periods of market volatility. The transition to modular architectures allows for specialized layers to handle different parts of the verification process, such as data availability, execution, and settlement.

Future verification architectures must balance the trade-off between absolute privacy and the systemic need for regulatory transparency.

The rise of Maximal Extractable Value (MEV) has introduced new complexities into the verification landscape. Validators now have an incentive to reorder, insert, or exclude transactions in a way that maximizes their own profit. This dynamic has prompted redesigns of Transaction Verification protocols to include MEV-resistance mechanisms, such as commit-reveal schemes or encrypted mempools.

These innovations aim to ensure that the verification process remains fair and transparent, preventing sophisticated actors from front-running retail traders.
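A commit-reveal scheme hides an order's contents while transactions are being ordered. In the minimal Python sketch below (function names and the order string are hypothetical), a trader first publishes only a salted SHA-256 hash of the order and reveals the order and salt after the ordering is fixed; anyone can then check the reveal against the commitment. It assumes a 32-byte random salt and hides only the contents, not the fact or timing of the commitment; a real protocol also needs a penalty for traders who commit but never reveal.

```python
from __future__ import annotations

import hashlib
import hmac
import secrets


def commit(order: bytes) -> tuple[bytes, bytes]:
    """Return (commitment, salt); only the commitment is published before ordering."""
    salt = secrets.token_bytes(32)  # random salt stops brute-forcing a small order space
    return hashlib.sha256(salt + order).digest(), salt


def reveal_is_valid(commitment: bytes, order: bytes, salt: bytes) -> bool:
    """Check a revealed order and salt against the earlier commitment."""
    return hmac.compare_digest(hashlib.sha256(salt + order).digest(), commitment)


order = b"BUY 10 ETH-CALL-3000 @ 125.50"  # hypothetical order encoding
commitment, salt = commit(order)
# The commitment is included on-chain; once ordering is final, the trader reveals.
print(reveal_is_valid(commitment, order, salt))  # True
```

Because the salt has a fixed length, `salt + order` is unambiguous, and a validator who sees only the commitment learns nothing useful for front-running the order.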

Cross Chain Verification and Regulatory Integration

The future of Transaction Verification lies in the seamless movement of state across disparate networks. Cross-chain verification protocols are being developed to allow for the validation of transactions that span multiple blockchains.

This capability is essential for the growth of a unified liquidity pool in the decentralized derivative market. The use of light clients and zero-knowledge bridges will enable one chain to verify the state of another without relying on centralized intermediaries, creating a truly global and interconnected financial system.
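Light clients typically verify another chain's state with Merkle inclusion proofs: they hold a trusted state root from a block header and check a short path of sibling hashes instead of downloading the full state. This Python sketch assumes a plain binary SHA-256 tree with 0x00/0x01 prefixes separating leaf and interior hashes; real bridges must verify chain-specific structures and must first establish that the header itself is canonical (via consensus signatures or a zero-knowledge proof of them), which is out of scope here. Function names are hypothetical.

```python
from __future__ import annotations

import hashlib


def _leaf_hash(leaf: bytes) -> bytes:
    return hashlib.sha256(b"\x00" + leaf).digest()


def _node_hash(left: bytes, right: bytes) -> bytes:
    return hashlib.sha256(b"\x01" + left + right).digest()


def build_proof(leaves: list[bytes], index: int) -> tuple[bytes, list[tuple[bytes, bool]]]:
    """Full-node side: return the root and the sibling path for leaves[index].

    Each proof step is (sibling_hash, sibling_is_left); an odd node is paired with itself.
    """
    if not 0 <= index < len(leaves):
        raise IndexError("leaf index out of range")
    level = [_leaf_hash(leaf) for leaf in leaves]
    proof: list[tuple[bytes, bool]] = []
    while len(level) > 1:
        if len(level) % 2 == 1:
            level.append(level[-1])
        sibling = index ^ 1  # the other child of the same parent
        proof.append((level[sibling], sibling < index))
        level = [_node_hash(level[i], level[i + 1]) for i in range(0, len(level), 2)]
        index //= 2
    return level[0], proof


def verify_inclusion(root: bytes, leaf: bytes, proof: list[tuple[bytes, bool]]) -> bool:
    """Light-client side: O(log n) hashes against a root taken from a trusted header."""
    node = _leaf_hash(leaf)
    for sibling, sibling_is_left in proof:
        node = _node_hash(sibling, node) if sibling_is_left else _node_hash(node, sibling)
    return node == root


accounts = [b"alice=70", b"bob=50", b"carol=10"]
root, proof = build_proof(accounts, 1)
print(verify_inclusion(root, b"bob=50", proof), verify_inclusion(root, b"bob=5000", proof))  # True False
```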

Regulatory Alignment and Privacy

As decentralized markets mature, the integration of regulatory requirements into the Transaction Verification layer becomes inevitable. This does not mean a return to centralization, but rather the development of privacy-preserving compliance tools. Zero-knowledge proofs can be used to verify that a participant meets certain criteria, such as being a non-sanctioned entity or an accredited investor, without revealing their identity or financial history.

This balance between privacy and compliance will be the defining challenge for the next generation of derivative systems.

- Protocols will integrate zero-knowledge identity proofs directly into the verification pipeline to meet global compliance standards.

- Recursive proofs will allow entire transaction histories to be compressed into a single, succinct proof that is cheap to verify.

- Hardware-accelerated proving will reduce the latency of cryptographic proofs, narrowing the gap between trade execution and verifiable settlement.

The adversarial nature of the crypto environment ensures that Transaction Verification will remain under constant pressure. Automated agents and sophisticated exploiters will continue to probe for weaknesses in consensus logic and smart contract execution. The resilience of the financial system depends on the continuous refinement of these verification mechanisms, ensuring they can withstand both market shocks and targeted attacks. The transition to a decentralized future is a process of hardening these cryptographic foundations until they are as reliable as the laws of physics.