Essence

Transaction Validation Processes function as the definitive cryptographic gatekeeping mechanism within decentralized ledgers. These protocols ensure that every state transition, whether a simple transfer or a complex derivative execution, adheres to the pre-defined consensus rules of the network. Without these mechanisms, the integrity of the ledger collapses under the weight of double-spending or unauthorized state changes.

Transaction validation acts as the cryptographic arbiter that enforces network state integrity by verifying the legitimacy of every requested ledger update.

At the mechanical level, these processes involve the rigorous checking of digital signatures, balance availability, and adherence to smart contract logic. When a user initiates an action, such as exercising a crypto option, the network nodes must verify that the transaction is cryptographically signed by the owner of the private key and that the account possesses sufficient collateral. This validation is the bedrock of trustless finance, replacing centralized clearinghouses with automated, code-based verification.

Origin

The architectural roots of these validation systems trace back to the foundational design of distributed hash chains.

Early systems utilized proof-of-work, where the computational cost of finding a valid block served as the primary validation proxy. This ensured that only participants contributing energy to the network could influence the order of transactions, effectively solving the problem of decentralized synchronization without a central authority.
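
The proof-of-work check described above can be sketched in a few lines. This is a minimal illustration, not any network's actual block format: the header bytes, the 8-byte nonce encoding, and the toy difficulty are assumptions chosen for the example. The double SHA-256 hash follows Bitcoin's choice of hash function.

```python
import hashlib
from typing import Optional


def block_hash(header: bytes, nonce: int) -> int:
    """Double SHA-256 of header + nonce, read as a big-endian integer."""
    payload = header + nonce.to_bytes(8, "big")  # hypothetical toy layout
    digest = hashlib.sha256(hashlib.sha256(payload).digest()).digest()
    return int.from_bytes(digest, "big")


def is_valid_block(header: bytes, nonce: int, target: int) -> bool:
    """Verification is one hash: cheap for every node to repeat."""
    return block_hash(header, nonce) < target


def mine(header: bytes, target: int, max_nonce: int = 2**32) -> Optional[int]:
    """Search is brute force: costly for the block producer."""
    for nonce in range(max_nonce):
        if is_valid_block(header, nonce, target):
            return nonce
    return None


# Toy difficulty (assumption): about 1 in 2**12 hashes falls below the target.
TARGET = 1 << (256 - 12)
nonce = mine(b"example-header", TARGET)
```

The asymmetry is the point: `mine` needs thousands of attempts on average, while `is_valid_block` confirms the result with a single hash.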

- Proof of Work established the initial paradigm where computational energy expenditure secured transaction ordering and validation.

- Proof of Stake emerged as a capital-efficient alternative, substituting energy-intensive mining with validator economic commitment.

- Smart Contract Logic introduced programmable validation, allowing networks to enforce complex financial rules autonomously.

As decentralized finance matured, the requirements for validation shifted from simple balance verification to complex state transitions. The evolution of Ethereum and subsequent high-throughput chains required validation mechanisms that could handle the computational overhead of decentralized derivatives, leading to the development of sophisticated execution environments that separate transaction sequencing from state computation.

Theory

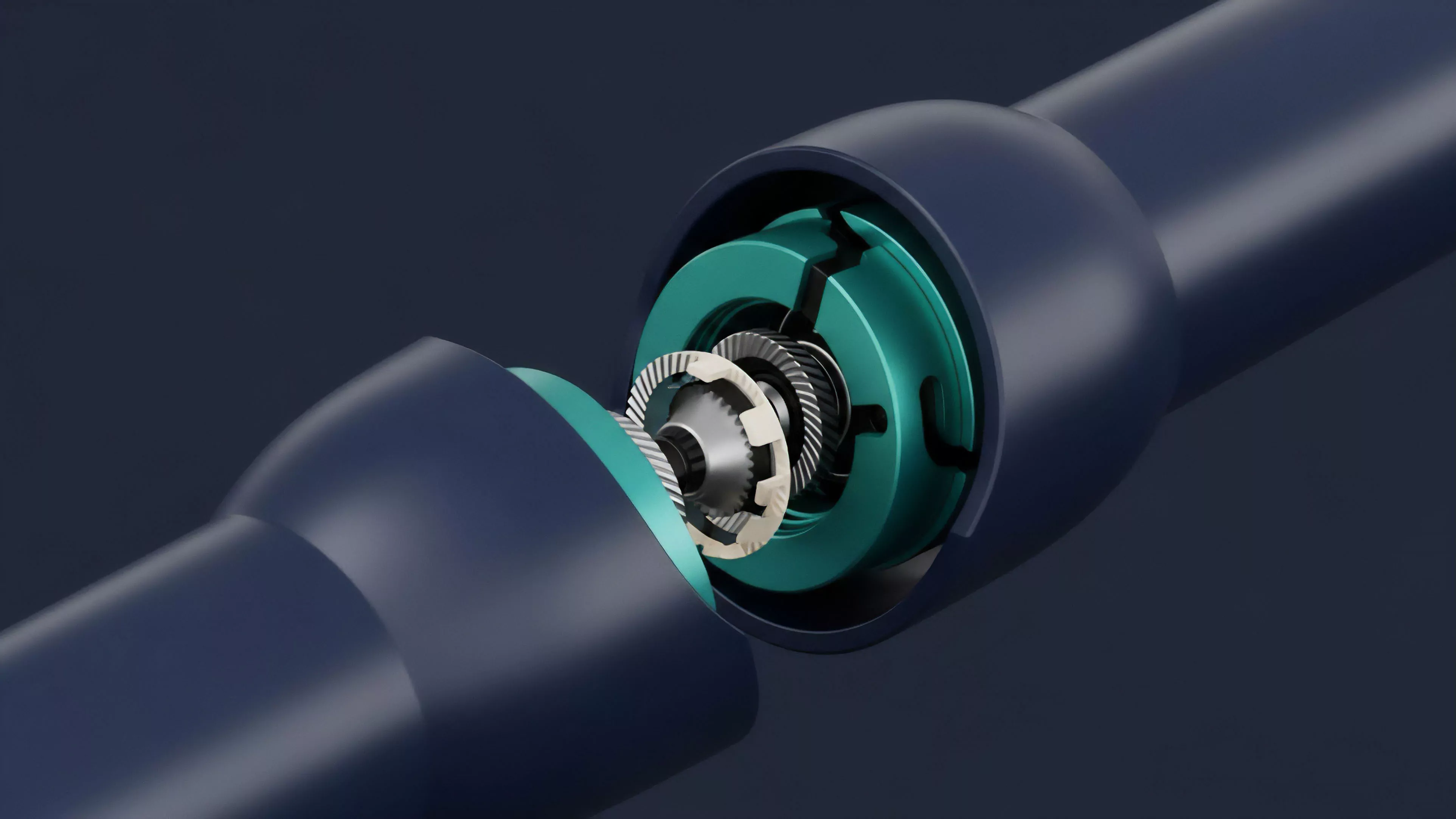

The mechanics of validation rest on the interplay between state transition functions and consensus algorithms. In a decentralized derivative market, a validator node must perform several distinct tasks to ensure the validity of an incoming order.

First, the node performs a signature check, using elliptic curve cryptography to confirm that the transaction was authorized by the holder of the private key corresponding to the sender's public key. Second, it checks state consistency, confirming that the account holds sufficient margin and collateral. Third, it executes the transaction within the virtual machine to ensure it does not violate the protocol’s safety invariants.

| Validation Phase | Primary Objective |
| --- | --- |
| Cryptographic Verification | Confirming authorization and ownership |
| State Consistency Check | Ensuring sufficient margin and collateral |
| Execution Invariant Validation | Preventing contract logic violations |
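
The three phases in the table can be sketched as a single validation function. Everything named here (`Order`, `State`, `validate_order`, `toy_verifier`) is hypothetical and exists only for illustration. In particular, the signature check is passed in as a callable, and the example verifier is an HMAC stand-in so the sketch runs on the standard library alone; it is not a public-key signature scheme and would be replaced by real elliptic curve verification in practice.

```python
import hashlib
import hmac
from dataclasses import dataclass, field
from typing import Callable, Dict


@dataclass(frozen=True)
class Order:
    sender: str
    margin_required: int  # collateral the order would lock
    payload: bytes
    signature: bytes


@dataclass
class State:
    free: Dict[str, int] = field(default_factory=dict)
    locked: Dict[str, int] = field(default_factory=dict)

    def total(self) -> int:
        return sum(self.free.values()) + sum(self.locked.values())


class ValidationError(Exception):
    pass


SignatureVerifier = Callable[[str, bytes, bytes], bool]


def validate_order(order: Order, state: State, verify: SignatureVerifier) -> State:
    # Phase 1: cryptographic verification (authorization and ownership).
    if not verify(order.sender, order.payload, order.signature):
        raise ValidationError("cryptographic verification failed")
    # Phase 2: state consistency (sufficient free collateral).
    if state.free.get(order.sender, 0) < order.margin_required:
        raise ValidationError("insufficient collateral")
    # Phase 3: execute on a copy, then check the invariants on the result.
    candidate = State(dict(state.free), dict(state.locked))
    s, m = order.sender, order.margin_required
    candidate.free[s] = candidate.free.get(s, 0) - m
    candidate.locked[s] = candidate.locked.get(s, 0) + m
    balances = list(candidate.free.values()) + list(candidate.locked.values())
    if any(v < 0 for v in balances) or candidate.total() != state.total():
        raise ValidationError("execution invariant violated")
    return candidate


def toy_verifier(keys: Dict[str, bytes]) -> SignatureVerifier:
    """HMAC stand-in (assumption); NOT an elliptic curve signature check."""
    def verify(sender: str, payload: bytes, signature: bytes) -> bool:
        key = keys.get(sender)
        if key is None:
            return False
        expected = hmac.new(key, payload, hashlib.sha256).digest()
        return hmac.compare_digest(expected, signature)
    return verify
```

Note that an order with a negative `margin_required` passes the first two phases and is only caught by the invariant check, which is why the third phase inspects the resulting state rather than the order alone.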

The systemic risk here involves the propagation of invalid states. If a validation mechanism is flawed, malicious actors can drain liquidity pools by forcing the protocol to accept unauthorized trades. Validator collusion is a further concern: a subset of nodes might prioritize specific transactions to extract value through front-running, directly impacting the fairness of the underlying market microstructure.

Approach

Current validation strategies leverage advanced techniques like zero-knowledge proofs and optimistic execution to scale throughput without sacrificing security.

By using ZK-Rollups, networks can compress thousands of transaction validations into a single proof that is verified on the main chain, significantly reducing the load on individual validators. This shift allows for the creation of high-frequency derivative platforms that mimic the performance of traditional centralized exchanges.

Zero-knowledge proofs enable the validation of large batches of transactions through cryptographic succinctness rather than individual node computation.

The operational reality involves a delicate balance between latency and security. High-frequency options trading requires sub-second finality, forcing protocols to adopt architectures that prioritize rapid sequencing while deferring deep validation to secondary layers. This creates a trade-off where the speed of execution can temporarily outpace the finality of the settlement layer, requiring robust margin engines to handle potential state reversals or invalidations.
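
One way to picture the gap between fast sequencing and slower settlement is a ledger that tracks provisional and finalized balances separately. The sketch below is an assumption-laden toy, not a description of any specific protocol: `OptimisticLedger` and its methods are hypothetical, batch ids are assumed to increase monotonically, and batches are assumed to be finalized in order.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Tuple

Entry = Tuple[int, str, int]  # (batch_id, account, balance delta)


@dataclass
class OptimisticLedger:
    finalized: Dict[str, int]
    pending: List[Entry] = field(default_factory=list)

    def provisional_balance(self, account: str) -> int:
        """Finalized balance plus all sequenced but unsettled deltas."""
        pending_delta = sum(d for _, a, d in self.pending if a == account)
        return self.finalized.get(account, 0) + pending_delta

    def apply(self, batch_id: int, account: str, delta: int) -> None:
        """Fast path: accept the trade against the provisional state."""
        if self.provisional_balance(account) + delta < 0:
            raise ValueError("provisional balance would go negative")
        self.pending.append((batch_id, account, delta))

    def finalize(self, batch_id: int) -> None:
        """Settlement layer accepted the batch: fold it into finalized state."""
        for b, account, delta in self.pending:
            if b == batch_id:
                self.finalized[account] = self.finalized.get(account, 0) + delta
        self.pending = [e for e in self.pending if e[0] != batch_id]

    def revert(self, batch_id: int) -> List[Entry]:
        """Settlement layer rejected the batch: drop it and every later
        batch, since later trades were validated on top of its effects."""
        dropped = [e for e in self.pending if e[0] >= batch_id]
        self.pending = [e for e in self.pending if e[0] < batch_id]
        return dropped
```

A margin engine built on this shape can quote risk against `provisional_balance` while treating only `finalized` as settled, and `revert` returns the dropped entries so affected positions can be re-evaluated.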

Evolution

Validation has transitioned from a simple, consensus-driven process into a multi-layered security stack.

Early iterations focused on basic transaction integrity, whereas modern systems now incorporate modular security components like decentralized oracle networks and automated risk engines. These additions allow the validation process to account for real-world market data, ensuring that derivative liquidations are triggered based on accurate, non-manipulated price feeds.

- Modular Architecture separates sequencing, execution, and settlement to optimize for specific performance goals.

- Oracle Integration feeds real-time market data into the validation process for automated margin management.

- Programmable Privacy utilizes cryptographic techniques to validate transactions without revealing sensitive order flow data.
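
The oracle integration point above can be illustrated with a small sketch of how a liquidation check might resist a single manipulated feed. Taking the median of several independent reports is one common aggregation choice, used here as an assumption; the function names, the minimum report count, and the maintenance ratio are all hypothetical.

```python
from statistics import median
from typing import Sequence


def aggregate_price(reports: Sequence[float], min_reports: int = 3) -> float:
    """Median of independent oracle reports; one outlier cannot move it far."""
    if len(reports) < min_reports:
        raise ValueError("not enough oracle reports to aggregate")
    return median(reports)


def should_liquidate(collateral: float, position_size: float,
                     price: float, maintenance_ratio: float) -> bool:
    """Liquidate when collateral falls below the maintenance margin."""
    notional = abs(position_size) * price
    return collateral < maintenance_ratio * notional


# Two honest feeds and one manipulated feed (illustrative numbers).
reports = [100.0, 101.0, 5000.0]
price = aggregate_price(reports)
liquidate = should_liquidate(collateral=10.0, position_size=1.0,
                             price=price, maintenance_ratio=0.05)
```

With these numbers the median ignores the manipulated report and the position stays open, whereas a simple average of the same three reports would have pushed the required margin above the posted collateral and triggered a liquidation.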

This evolution reflects a broader shift toward institutional-grade infrastructure. The demand for cross-chain validation has necessitated the development of interoperability protocols that can verify state transitions across heterogeneous networks. These bridges act as external validation points, introducing new vectors for systemic contagion if the bridge security is compromised, which underscores the critical importance of secure cross-chain state proofs.
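
A basic building block behind cross-chain state proofs is a Merkle inclusion proof: the destination chain checks that a piece of data belongs to a committed root without seeing the full data set. The sketch below shows only that inclusion step under simplifying assumptions (SHA-256, duplication of the last node on odd levels, one-byte domain-separation prefixes). It does not cover how the root itself is authenticated, which is where bridge security actually lives.

```python
import hashlib
from typing import List, Tuple


def _leaf(data: bytes) -> bytes:
    return hashlib.sha256(b"\x00" + data).digest()


def _node(left: bytes, right: bytes) -> bytes:
    return hashlib.sha256(b"\x01" + left + right).digest()


def _next_level(level: List[bytes]) -> List[bytes]:
    return [_node(level[i], level[i + 1]) for i in range(0, len(level), 2)]


def merkle_root(leaves: List[bytes]) -> bytes:
    if not leaves:
        raise ValueError("at least one leaf is required")
    level = [_leaf(x) for x in leaves]
    while len(level) > 1:
        if len(level) % 2:
            level.append(level[-1])  # pad odd levels (toy convention)
        level = _next_level(level)
    return level[0]


def merkle_proof(leaves: List[bytes], index: int) -> List[Tuple[bytes, bool]]:
    """Return (sibling_hash, sibling_is_left) pairs from leaf up to root."""
    level = [_leaf(x) for x in leaves]
    proof = []
    while len(level) > 1:
        if len(level) % 2:
            level.append(level[-1])
        sibling = index ^ 1
        proof.append((level[sibling], sibling < index))
        level = _next_level(level)
        index //= 2
    return proof


def verify_proof(leaf: bytes, proof: List[Tuple[bytes, bool]], root: bytes) -> bool:
    """Recompute the path to the root using only the leaf and its siblings."""
    node = _leaf(leaf)
    for sibling, sibling_is_left in proof:
        node = _node(sibling, node) if sibling_is_left else _node(node, sibling)
    return node == root
```

The verifier needs only a number of hashes logarithmic in the number of leaves, which is what makes it practical for one network to check a claim about another network's state.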

Horizon

The future of transaction validation lies in the intersection of hardware-accelerated computation and advanced game-theoretic incentive design.

As validation requirements grow more intensive, we will likely see the adoption of specialized hardware, such as FPGAs or ASICs, optimized specifically for verifying complex cryptographic proofs. This will drive down the cost of security while increasing the throughput capacity of decentralized financial systems.

The next generation of validation systems will utilize hardware-accelerated cryptographic proofs to achieve institutional-scale throughput.

Furthermore, the integration of AI-driven risk agents will allow validation processes to become proactive rather than reactive. Instead of merely checking if a transaction adheres to static rules, future validation nodes will analyze the systemic impact of an order on the overall liquidity pool, automatically rejecting trades that threaten the stability of the protocol. This transition will redefine the role of the validator from a passive observer to an active participant in market maintenance.