Essence

Transaction Sequencing Algorithms define the precise order in which pending operations are incorporated into a distributed ledger. These mechanisms function as the gatekeepers of state transition, determining how each pending transaction is applied to the shared ledger state. By dictating the temporal priority of events, they directly influence how economic value is distributed among network participants.

Transaction sequencing algorithms establish the canonical ordering of events, acting as the fundamental arbiter of state transitions in decentralized ledgers.

The core function involves transforming a chaotic mempool of competing requests into a linear, verifiable history. Without a rigorous sequencing logic, decentralized networks succumb to non-deterministic outcomes, rendering financial settlement unpredictable. The design of these algorithms dictates whether a network prioritizes censorship resistance, throughput, or the extraction of value from order flow.

Origin

The genesis of these mechanisms traces back to the fundamental challenge of achieving distributed consensus without a central authority.

Early protocols relied on simple, first-come-first-served logic, where transaction timestamps were loosely coupled to network arrival times. This naive approach proved insufficient as adversarial participants discovered that influencing propagation latency allowed for the exploitation of order priority.

- FIFO Ordering: Early implementations prioritized transactions based on their arrival time at a validator node, assuming network propagation was equitable.

- Gas Price Auctions: The introduction of flexible fee markets allowed participants to signal urgency, shifting the sequence priority toward those willing to pay higher premiums.

- Validator Selection: The transition to proof-based consensus models shifted sequencing power from whoever broadcast first to whoever held the authority to propose the next block.

This evolution demonstrates a clear movement away from passive, network-dependent ordering toward active, incentive-driven selection. As the financial stakes increased, the ability to control the sequence became one of the most valuable commodities in decentralized finance, leading to the sophisticated mechanisms observed in current high-throughput environments.
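The difference between the first two ordering rules in the list can be shown with a minimal sketch. The `PendingTx` type, its fields, and the sample values are hypothetical and chosen only for illustration; real clients use richer rules such as per-account nonce ordering.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PendingTx:
    tx_id: str
    arrival_ms: int  # when this validator first saw the transaction (assumed field)
    fee: int         # fee bid offered by the sender, arbitrary units (assumed field)


def order_fifo(mempool):
    """Arrival-time ordering: the earliest transaction seen goes first."""
    return sorted(mempool, key=lambda tx: tx.arrival_ms)


def order_by_fee(mempool):
    """Fee-auction ordering: highest bid first, arrival time breaks ties."""
    return sorted(mempool, key=lambda tx: (-tx.fee, tx.arrival_ms))


mempool = [
    PendingTx("a", arrival_ms=10, fee=5),
    PendingTx("b", arrival_ms=12, fee=50),
    PendingTx("c", arrival_ms=15, fee=20),
]

print([tx.tx_id for tx in order_fifo(mempool)])    # ['a', 'b', 'c']
print([tx.tx_id for tx in order_by_fee(mempool)])  # ['b', 'c', 'a']
```

The same mempool yields two different histories. Under the fee rule, transaction `a` moves from first to last despite arriving earliest, which is the shift from propagation speed to willingness to pay described above.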

Theory

The mechanics of sequencing rely on the interaction between game theory and network topology. Validators operate as profit-maximizing agents within an adversarial environment, where the objective is to maximize the extraction of value from the sequence they construct.

Mathematical Foundations

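The value of a sequence is often modeled as the sum of its transaction fees plus any additional value the sequencer can extract from the ordering itself, commonly called maximal extractable value (MEV). Sequencing can therefore be viewed as an optimization task, and the common mechanisms differ in what drives priority and in the risk they introduce: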

| Mechanism | Priority Driver | Risk Profile |
| --- | --- | --- |
| Gas Auction | Fee Payment | High Network Congestion |
| Batch Auction | Uniform Price | Reduced Arbitrage Opportunity |
| Time Priority | Propagation Speed | Information Asymmetry |

The sequencing problem functions as an optimization challenge where validators maximize block utility while managing the externalities of transaction ordering.
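One hedged way to write this objective down is shown below. The notation is an assumption introduced for illustration and does not come from any specific protocol: $M$ is the mempool, $S$ the set of transactions selected for the block, $\pi$ an ordering of $S$, $f_t$ the fee paid by transaction $t$, $E(\pi)$ the value extractable from that ordering, $g_t$ the block space consumed by $t$, and $G$ the block capacity.

$$
\max_{S \subseteq M,\ \pi \in \mathrm{Perm}(S)} \; \sum_{t \in S} f_t + E(\pi)
\quad \text{subject to} \quad \sum_{t \in S} g_t \le G
$$

In this sketch the fee term depends only on which transactions are included, while $E(\pi)$ depends on how they are ordered. That second term is why two blocks containing the same transactions can be worth different amounts to the validator.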

These systems often encounter a transparency trade-off, sometimes described as the paradox of decentralization. If the sequencing process is too transparent, participants exploit the visible order flow to the detriment of liquidity providers. If the process is opaque, the system risks becoming a private market for extraction, in which the validator maintains total control over the economic outcomes of users.

Approach

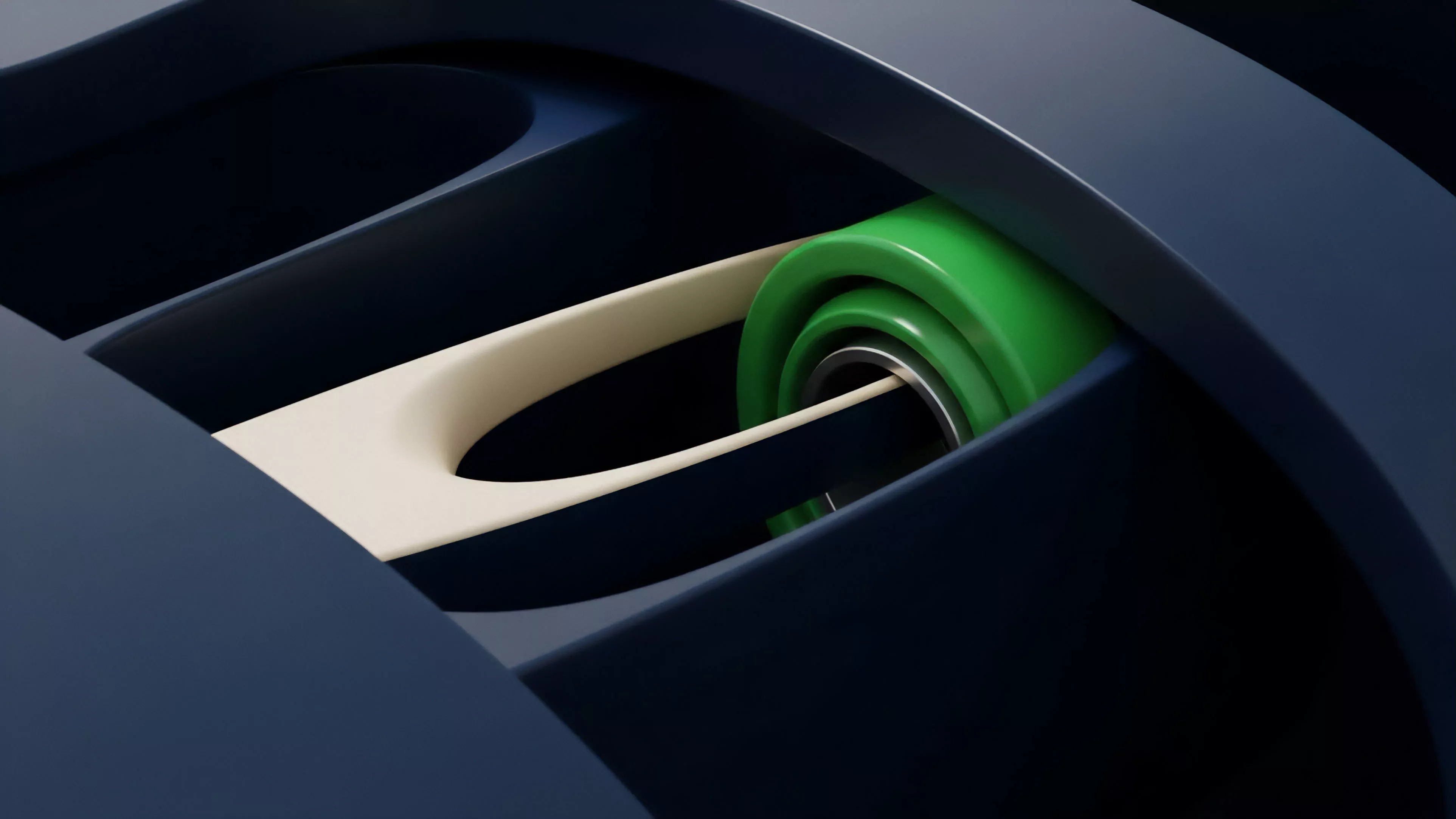

Current implementations utilize a mix of public mempools and private relay channels to manage transaction flow.

The dominant approach involves a hybrid model where public requests compete in a fee-based auction, while sophisticated actors utilize private channels to submit bundles of transactions directly to validators.

Strategic Execution

The modern landscape forces participants to weigh the cost of priority against the probability of inclusion. Transaction Bundling has become the standard for professional actors, ensuring that related operations are executed atomically. This prevents partial execution, which could otherwise result in catastrophic capital loss during high-volatility events.
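The all-or-nothing property of a bundle can be illustrated with a minimal sketch. The account model, `apply_transfer`, and `execute_bundle` are hypothetical names used only for illustration; they are not the API of any relay or client.

```python
class InsufficientFunds(Exception):
    """Raised when a transfer would overdraw the sender (illustrative only)."""


def apply_transfer(balances, sender, recipient, amount):
    if balances.get(sender, 0) < amount:
        raise InsufficientFunds(f"{sender} cannot send {amount}")
    balances[sender] -= amount
    balances[recipient] = balances.get(recipient, 0) + amount


def execute_bundle(balances, bundle):
    """Apply every transfer in the bundle, or none of them.

    Returns (new_balances, True) on success and (original_balances, False)
    if any step fails.
    """
    scratch = dict(balances)  # work on a copy so a failure leaves no trace
    try:
        for sender, recipient, amount in bundle:
            apply_transfer(scratch, sender, recipient, amount)
    except InsufficientFunds:
        return balances, False
    return scratch, True


balances = {"alice": 100, "bob": 0, "carol": 0}
ok_bundle = [("alice", "bob", 60), ("bob", "carol", 60)]
bad_bundle = [("alice", "bob", 60), ("bob", "carol", 80)]  # second leg fails

after_ok, ok = execute_bundle(balances, ok_bundle)
after_bad, bad_ok = execute_bundle(balances, bad_bundle)
print(ok, after_ok)       # True {'alice': 40, 'bob': 0, 'carol': 60}
print(bad_ok, after_bad)  # False {'alice': 100, 'bob': 0, 'carol': 0}
```

In the failing bundle the first transfer would have succeeded on its own. Discarding it along with the failed second leg is what protects a multi-step strategy from being left half-executed.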

- Atomic Bundling: Grouping multiple related transactions into a single unit ensures all succeed or none execute, maintaining the integrity of complex strategies.

- Private Relay: Bypassing the public mempool protects sensitive order flow from predatory searchers, albeit at the cost of centralized infrastructure reliance.

- Threshold Encryption: Emerging techniques aim to hide transaction contents until after they are sequenced, theoretically neutralizing the ability to front-run or sandwich users.
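The idea of ordering transactions before their contents are visible can be sketched with a hash-based commit-reveal scheme. This is a simpler stand-in, not threshold encryption: real threshold schemes rely on a committee that jointly holds the decryption key. The function names and payloads below are hypothetical.

```python
import hashlib
import secrets


def commit(payload: bytes, salt: bytes) -> str:
    """Commitment the user publishes; the salt keeps the payload unguessable."""
    return hashlib.sha256(salt + payload).hexdigest()


def sequence(commitments):
    """The sequencer fixes the order while seeing only opaque commitments.

    Arrival order is used here purely as an assumption of the sketch.
    """
    return list(commitments)


def reveal(ordered, openings):
    """After the order is final, users open their commitments.

    Missing or mismatched openings are dropped rather than executed.
    """
    executed = []
    for c in ordered:
        opening = openings.get(c)
        if opening is None:
            continue
        payload, salt = opening
        if commit(payload, salt) == c:
            executed.append(payload)
    return executed


txs = [b"swap 10 A for B", b"swap 5 B for C"]
salts = [secrets.token_bytes(16) for _ in txs]
commitments = [commit(p, s) for p, s in zip(txs, salts)]

ordered = sequence(commitments)
openings = {c: (p, s) for c, p, s in zip(commitments, txs, salts)}
print(reveal(ordered, openings))  # payloads, in the order fixed before reveal
```

Because the sequencer orders hashes rather than readable transactions, it has nothing to front-run at ordering time. One limitation of this stand-in is that a user can simply decline to reveal, which is the gap that committee-based decryption is meant to close.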

The systemic risk here involves the concentration of sequencing power. When a small number of entities control the majority of block production, their ability to manipulate market prices through transaction ordering becomes a latent threat to the stability of the entire financial layer.

Evolution

The path from simple queueing to sophisticated, market-aware sequencing represents a fundamental shift in blockchain architecture. Initially, developers viewed ordering as a purely technical, neutral task. Today, it is recognized as an economic function that dictates the profitability of almost every derivative product on-chain.

The evolution of sequencing shifts the burden of order management from the network layer to the application layer, forcing protocol designers to account for adversarial order flow.

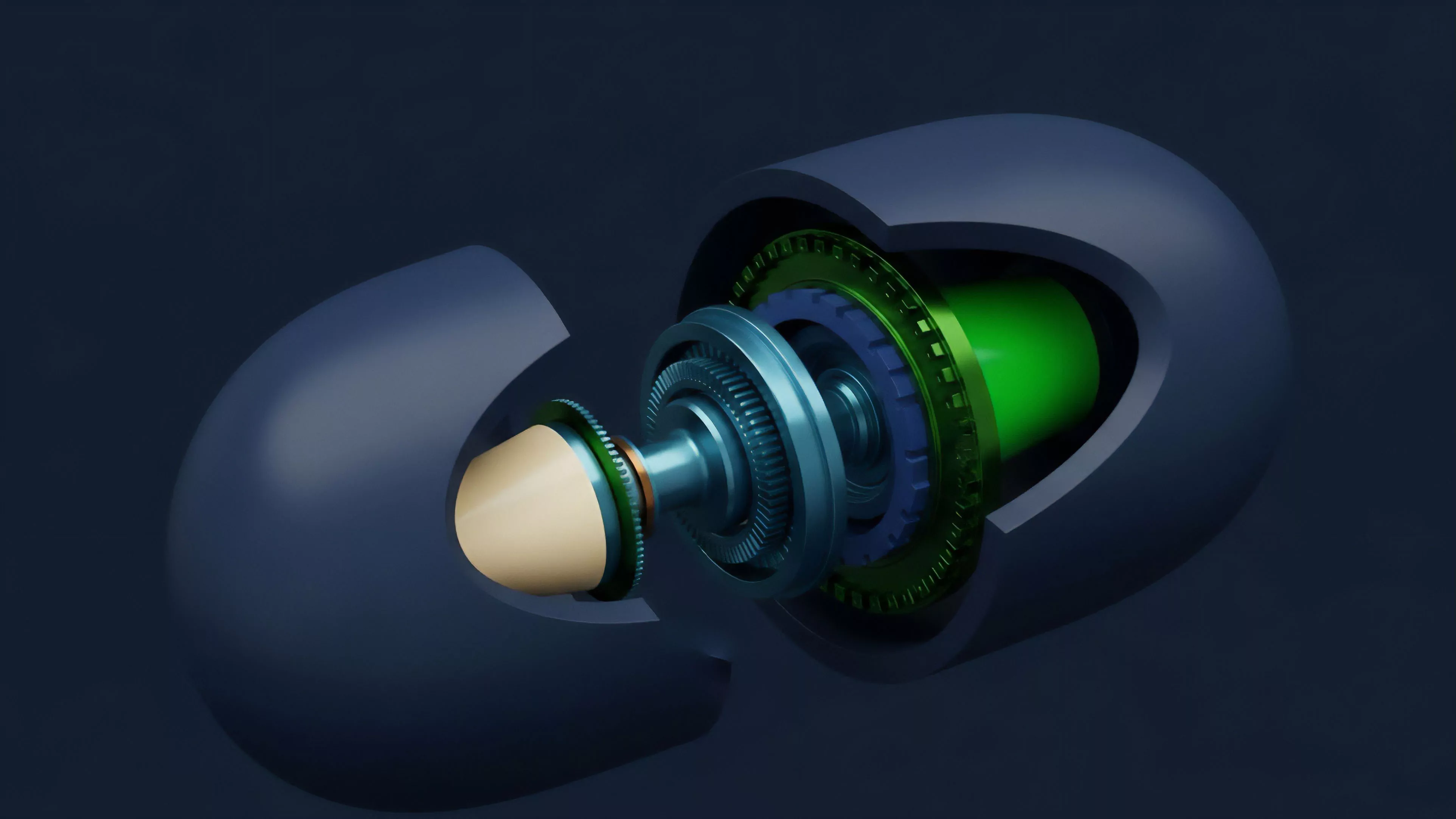

The industry has moved toward modularity, where the sequencing layer is increasingly decoupled from the execution and data availability layers. This allows for specialized sequencing environments that can be optimized for specific financial products, such as high-frequency options or decentralized margin engines.

| Era | Primary Driver | Outcome |
| --- | --- | --- |
| Genesis | Technical Fairness | Unpredictable Latency |
| Expansion | Economic Incentive | Maximal Extractable Value |
| Modular | Application Specialization | Customized Ordering Logic |

This modularity allows for the creation of sequencers that are tailored to the requirements of derivative markets, where precision in execution time is critical for managing Greeks and preventing slippage. The transition indicates a move toward a more fragmented but highly specialized market structure.

Horizon

The future of sequencing lies in the transition toward decentralized, verifiable ordering mechanisms that remove the reliance on individual validators. Cryptographic primitives like Threshold Decryption and Verifiable Delay Functions will likely form the basis for next-generation sequencing, ensuring that transaction order is determined by consensus rather than individual discretion.

The systemic implications are profound. If sequencing becomes truly decentralized and verifiable, the ability to extract value from order flow will be significantly diminished, leading to more efficient markets and tighter spreads for derivatives. This will force participants to compete on the basis of capital efficiency and risk management rather than technical latency or information asymmetry.

The next phase of development will focus on integrating these sequencing layers directly into the security models of the protocols they serve, creating a self-reinforcing loop of transparency and financial integrity.