Essence

Trading Venue Analysis is the rigorous assessment of the infrastructure on which digital asset derivatives change hands. It evaluates the technical, economic, and operational reliability of platforms that facilitate options and futures trading. The objective is to quantify the friction, security, and capital efficiency of a specific venue.

Trading Venue Analysis provides the framework for assessing the operational integrity and market microstructure of digital asset derivative exchanges.

Market participants utilize this lens to distinguish between venues that provide genuine liquidity and those relying on synthetic depth or opaque matching engines. This assessment extends to the underlying settlement mechanisms, collateral management, and the robustness of the liquidation engine during periods of extreme volatility.

Origin

The necessity for Trading Venue Analysis arose from the transition of crypto markets from simple spot exchanges to sophisticated derivative environments. Early platforms operated with minimal oversight, leading to frequent system failures during market stress.

Financial history demonstrates that centralized exchanges often obscure their risk profiles, necessitating a shift toward independent, data-driven scrutiny.

- Systemic Fragility: Early venue architectures lacked standardized risk controls, resulting in cascading liquidations.

- Information Asymmetry: Participants required tools to pierce the veil of reported volume and depth.

- Institutional Requirements: The entry of professional capital demanded verifiable performance metrics for execution venues.

This evolution mirrors the development of traditional equity markets, where venue selection directly impacts execution quality and portfolio survival. The shift toward decentralized venues has only intensified the requirement for analyzing protocol-level security and governance structures.

Theory

The architecture of Trading Venue Analysis rests on three pillars: microstructure efficiency, protocol physics, and systemic risk exposure. Each pillar requires distinct mathematical modeling to capture the true state of a venue.

Microstructure Dynamics

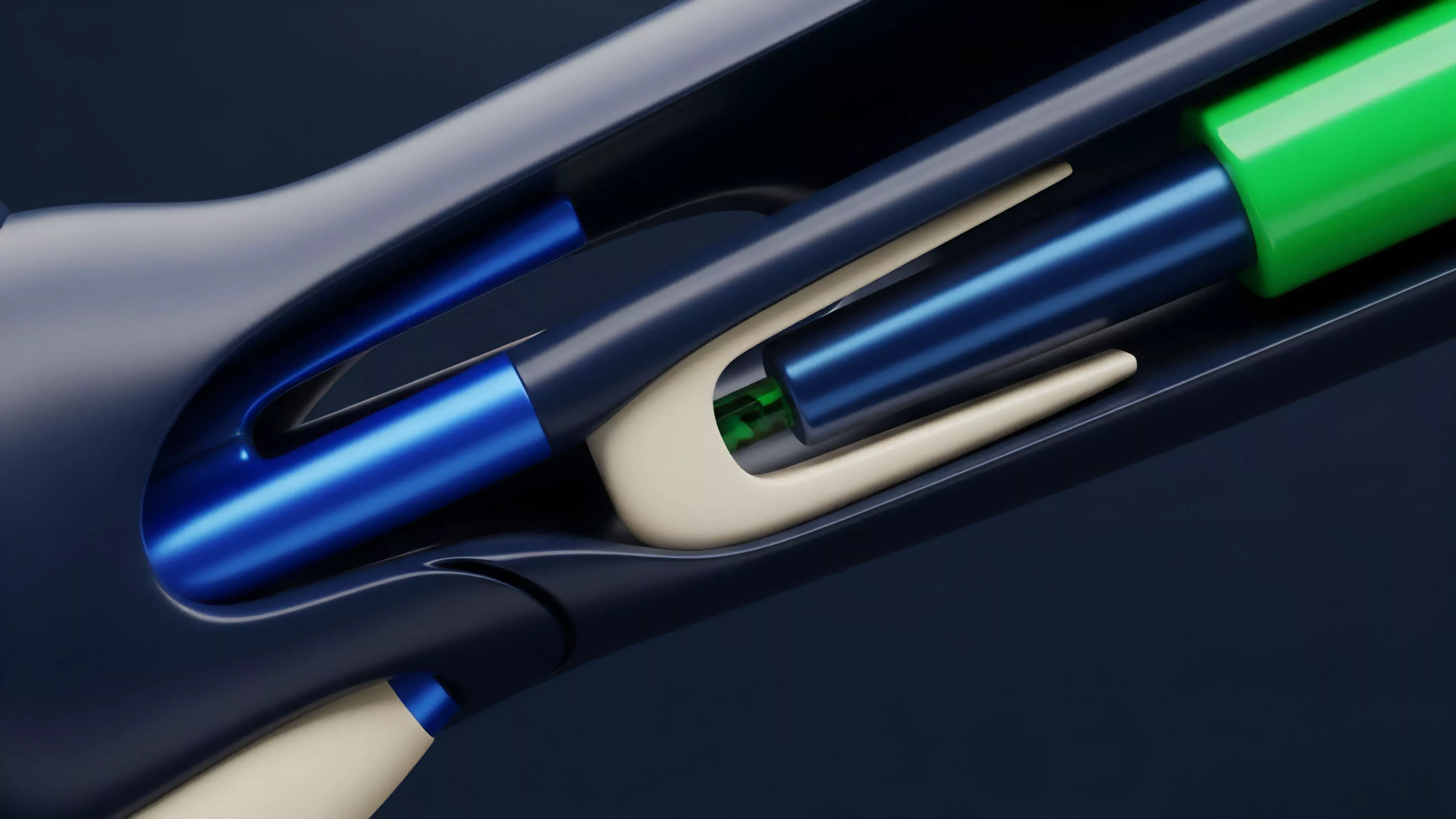

Analyzing order flow involves measuring the bid-ask spread, depth at various price levels, and the latency of order matching engines. High-frequency data reveals the presence of market-making algorithms and the impact of liquidity fragmentation.
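As a rough illustration of the spread and depth measurements described above, the sketch below computes a quoted spread in basis points and the resting size within a band around the mid price. The `Level` type, the function names, and the sample order book are hypothetical and not taken from any venue's API; a real analysis would run over many snapshots rather than one.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Level:
    """One price level of an order book snapshot (hypothetical structure)."""
    price: float
    size: float


def mid_price(bids, asks):
    # Assumes bids are sorted best (highest) first and asks best (lowest) first.
    return (bids[0].price + asks[0].price) / 2.0


def quoted_spread_bps(bids, asks):
    """Best ask minus best bid, expressed in basis points of the mid price."""
    mid = mid_price(bids, asks)
    return (asks[0].price - bids[0].price) / mid * 1e4


def depth_within_bps(bids, asks, band_bps):
    """Total resting size on each side within band_bps of the mid price."""
    mid = mid_price(bids, asks)
    lower = mid * (1 - band_bps / 1e4)
    upper = mid * (1 + band_bps / 1e4)
    bid_depth = sum(level.size for level in bids if level.price >= lower)
    ask_depth = sum(level.size for level in asks if level.price <= upper)
    return bid_depth, ask_depth


# Illustrative snapshot, not real market data.
bids = [Level(99.95, 2.0), Level(99.90, 5.0), Level(99.50, 10.0)]
asks = [Level(100.05, 1.5), Level(100.10, 4.0), Level(100.60, 8.0)]

print(quoted_spread_bps(bids, asks))
print(depth_within_bps(bids, asks, band_bps=20))
```

Comparing depth at several bands (for example 10, 50, and 100 basis points) across venues gives a first view of how much size is genuinely available near the touch versus far from it.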

Protocol Physics

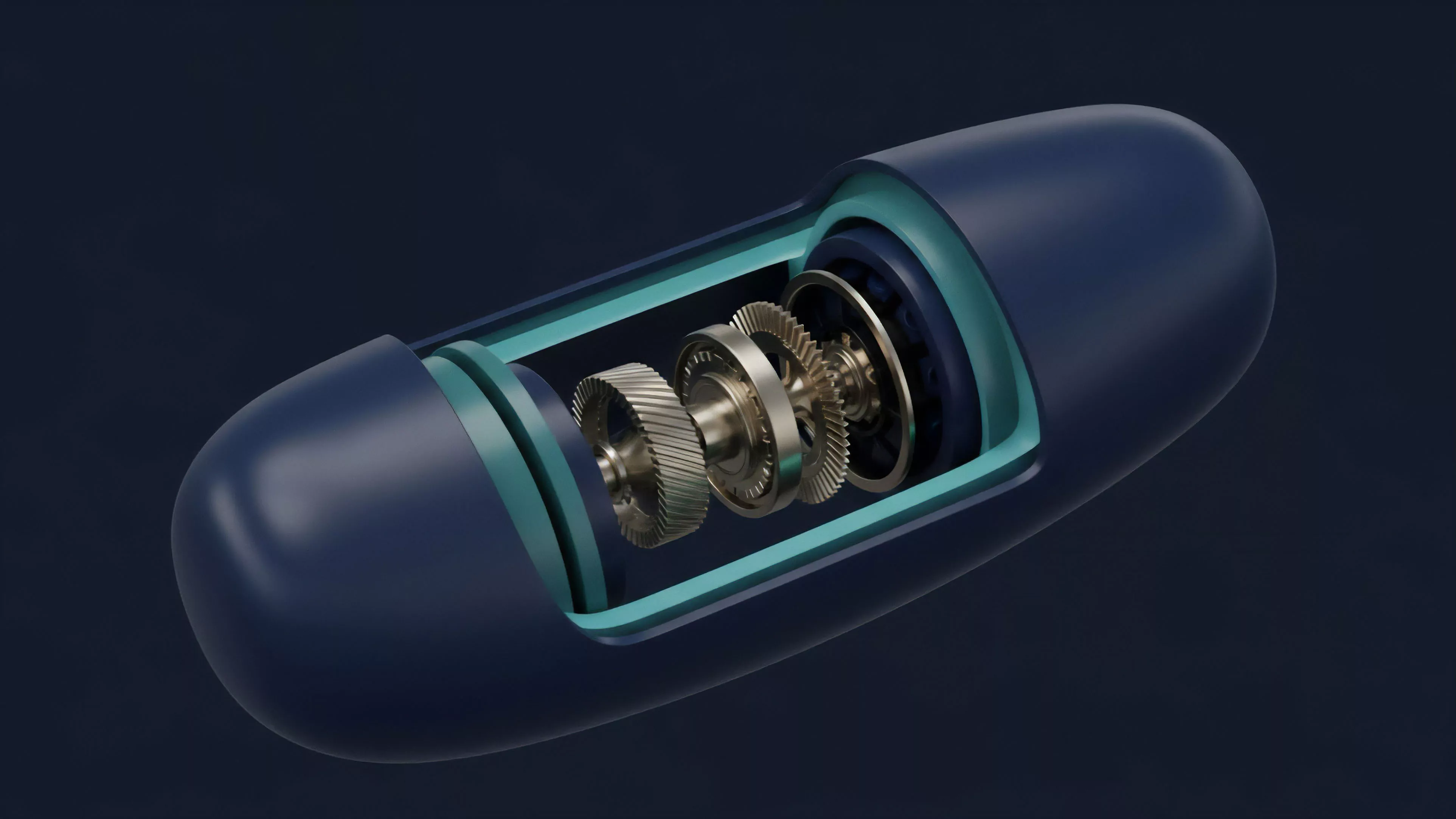

The settlement engine defines the venue’s reliability. Whether using an Automated Market Maker or a centralized order book, the mathematical model governing margin calls and liquidations determines the system’s resistance to tail-risk events.
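To make the role of the margin model concrete, here is a minimal sketch of a liquidation price calculation. It assumes a simplified linear contract with isolated margin, where initial margin is notional divided by leverage and the position is liquidated once equity falls to a maintenance rate times the current notional. Actual venues add fees, funding, tiered maintenance rates, and insurance funds, so treat the formula as an assumption rather than any platform's rule.

```python
def liquidation_price(entry_price, leverage, maintenance_margin_rate, is_long):
    """Price at which equity equals the maintenance requirement.

    Simplified model (an assumption, not a specific venue's formula):
      long:  entry/leverage + (P - entry) = mmr * P
      short: entry/leverage + (entry - P) = mmr * P
    """
    if leverage < 1:
        raise ValueError("leverage must be at least 1")
    if not 0 <= maintenance_margin_rate < 1:
        raise ValueError("maintenance_margin_rate must be in [0, 1)")

    initial_margin_rate = 1.0 / leverage
    if is_long:
        return entry_price * (1 - initial_margin_rate) / (1 - maintenance_margin_rate)
    return entry_price * (1 + initial_margin_rate) / (1 + maintenance_margin_rate)


# Hypothetical parameters: 10x leverage, 0.5% maintenance margin.
long_liq = liquidation_price(100.0, 10, 0.005, is_long=True)
short_liq = liquidation_price(100.0, 10, 0.005, is_long=False)
print(long_liq, short_liq)
```

Under this model, raising the maintenance rate moves the liquidation price closer to entry, which gives the engine a larger buffer to close the position before equity turns negative.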

The stability of a derivative venue depends on the mathematical rigor of its liquidation engine and the transparency of its collateral management.

| Metric | Focus Area |
| --- | --- |
| Execution Latency | Microstructure |
| Liquidation Thresholds | Protocol Physics |
| Counterparty Exposure | Systemic Risk |

Taken together, these metrics allow a probabilistic assessment of a venue’s survival during a black-swan event. For example, blockchain block times bound how quickly an on-chain margin engine can respond, so network congestion that delays transaction inclusion raises the likelihood of late or failed liquidations.
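The link between block times and liquidation risk can be approximated with a back-of-the-envelope calculation. The sketch below assumes price changes scale with the square root of time (a driftless random walk), which tends to understate moves during stressed markets. The function names and the example inputs (80% annualized volatility, 12-second blocks, 25 blocks of congestion) are hypothetical.

```python
import math

SECONDS_PER_YEAR = 365 * 24 * 3600


def liquidation_delay_seconds(block_time_seconds, blocks_to_inclusion):
    """Time until a liquidation transaction is included on-chain."""
    return block_time_seconds * blocks_to_inclusion


def one_sigma_move(annual_volatility, delay_seconds):
    """One-standard-deviation fractional price move over the delay,
    under square-root-of-time scaling (a simplifying assumption)."""
    return annual_volatility * math.sqrt(delay_seconds / SECONDS_PER_YEAR)


# Hypothetical inputs for illustration only.
normal = one_sigma_move(0.80, liquidation_delay_seconds(12, 1))
congested = one_sigma_move(0.80, liquidation_delay_seconds(12, 25))

maintenance_margin_rate = 0.005
print(f"normal:    move is {normal / maintenance_margin_rate:.1%} of the maintenance buffer")
print(f"congested: move is {congested / maintenance_margin_rate:.1%} of the maintenance buffer")
```

In this model a 25-fold increase in inclusion delay multiplies the typical price move by five, so a buffer that is comfortable under normal conditions can be consumed much faster when the network is congested.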

Approach

Modern assessment strategies prioritize quantitative verification over platform marketing. The practitioner constructs a risk profile for each venue by stress-testing its parameters against historical volatility data.

- Data Aggregation: Collecting granular trade and quote data to map the true liquidity surface.

- Stress Testing: Simulating extreme market conditions to evaluate the speed and accuracy of the margin engine.

- Code Auditing: Analyzing smart contract logic to identify potential exploits in the collateral vault.

This approach shifts the focus from superficial user interface metrics to the underlying engine’s ability to maintain solvency. It is a process of mapping the potential for failure before committing capital.
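The stress-testing step can be sketched as a replay of a price path against a simplified margin rule. The example below assumes a long position in a linear contract, the same maintenance rule as a basic isolated-margin model, and a liquidation that fills a fixed number of steps after it is triggered. The `StressResult` type, the function name, and the price path are hypothetical; a real test would use recorded trade data and the venue's documented parameters.

```python
from typing import NamedTuple, Optional, Sequence


class StressResult(NamedTuple):
    liquidated: bool
    trigger_index: Optional[int]
    fill_price: Optional[float]
    bad_debt_per_unit: float


def stress_test_long(prices: Sequence[float], entry_price: float, leverage: float,
                     maintenance_margin_rate: float, delay_steps: int = 0) -> StressResult:
    """Replay a price path and report any shortfall left after liquidation.

    delay_steps models engine or network latency between the trigger and the
    fill (an illustrative assumption, not a measured quantity).
    """
    margin = entry_price / leverage
    for i, price in enumerate(prices):
        equity = margin + (price - entry_price)
        if equity <= maintenance_margin_rate * price:
            fill_index = min(i + delay_steps, len(prices) - 1)
            fill_price = prices[fill_index]
            equity_at_fill = margin + (fill_price - entry_price)
            # Negative equity at the fill is a loss the venue must absorb.
            return StressResult(True, i, fill_price, max(0.0, -equity_at_fill))
    return StressResult(False, None, None, 0.0)


# Made-up falling price path for illustration.
path = [100.0, 95.0, 91.0, 90.0, 85.0, 80.0]
print(stress_test_long(path, 100.0, 10, 0.005, delay_steps=0))
print(stress_test_long(path, 100.0, 10, 0.005, delay_steps=2))
```

Running the same path with different delays shows how the speed of the margin engine, rather than the threshold alone, determines whether a liquidation leaves bad debt behind.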

Evolution

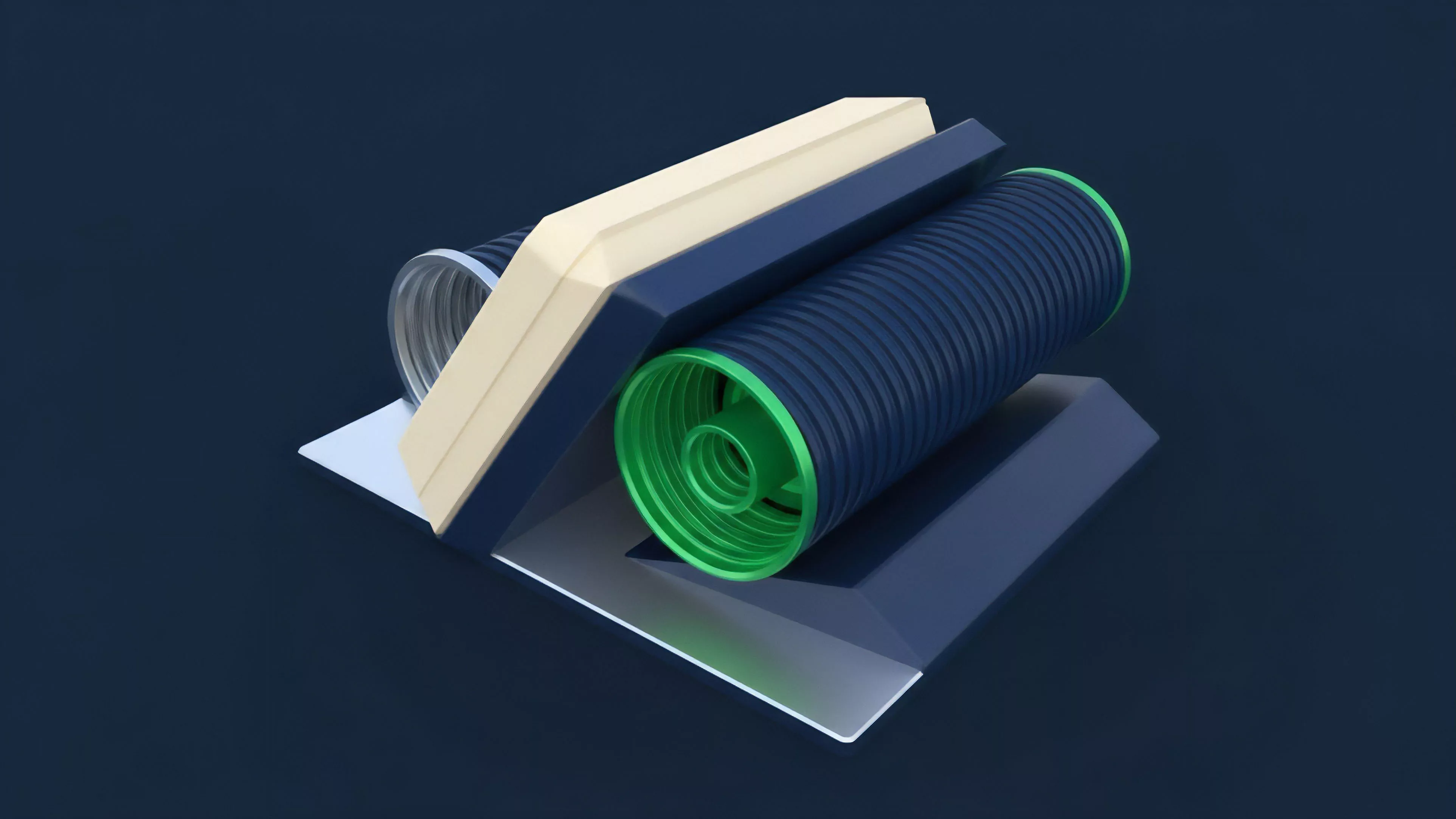

The field has moved from simple uptime monitoring to complex systems risk analysis. Early efforts focused on basic availability, while current standards require deep inspection of incentive structures and governance models.

Institutional Integration

The requirement for regulatory compliance has forced venues to adopt transparent reporting and audited reserves. This shift provides more data points for analysts to construct comprehensive risk models.

Venues now face rigorous scrutiny regarding their collateralization ratios and the technical resilience of their matching engines.

The proliferation of decentralized protocols has introduced new variables, such as governance risk and protocol upgradeability, which require a broader, interdisciplinary approach to analysis. One must consider how the shift from human-managed risk to code-managed risk changes the fundamental nature of counterparty assessment.

Horizon

The future of Trading Venue Analysis lies in real-time, automated monitoring systems that integrate on-chain data with off-chain performance metrics. As markets become more interconnected, the analysis must account for cross-protocol contagion.

| Trend | Implication |
| --- | --- |
| Real-time Auditing | Immediate detection of insolvency |
| Cross-Chain Liquidity | Complex risk propagation modeling |
| Automated Governance | Dynamic risk parameter adjustment |

Predicting the trajectory of venue design involves anticipating how zero-knowledge proofs might enhance privacy without sacrificing the transparency needed for risk analysis. The next phase of development will focus on the creation of standardized, open-source risk frameworks that enable participants to assess venue health with the same precision as traditional financial audits.