Essence

Trading System Validation is the verification process that checks whether automated crypto derivative strategies operate within defined risk parameters and technical constraints. It bridges abstract mathematical models and the unforgiving reality of decentralized execution environments. By subjecting algorithmic logic to synthetic stress tests, participants can identify failure modes before capital is exposed.

Trading System Validation confirms that automated execution logic adheres to intended risk and performance thresholds under adverse market conditions.

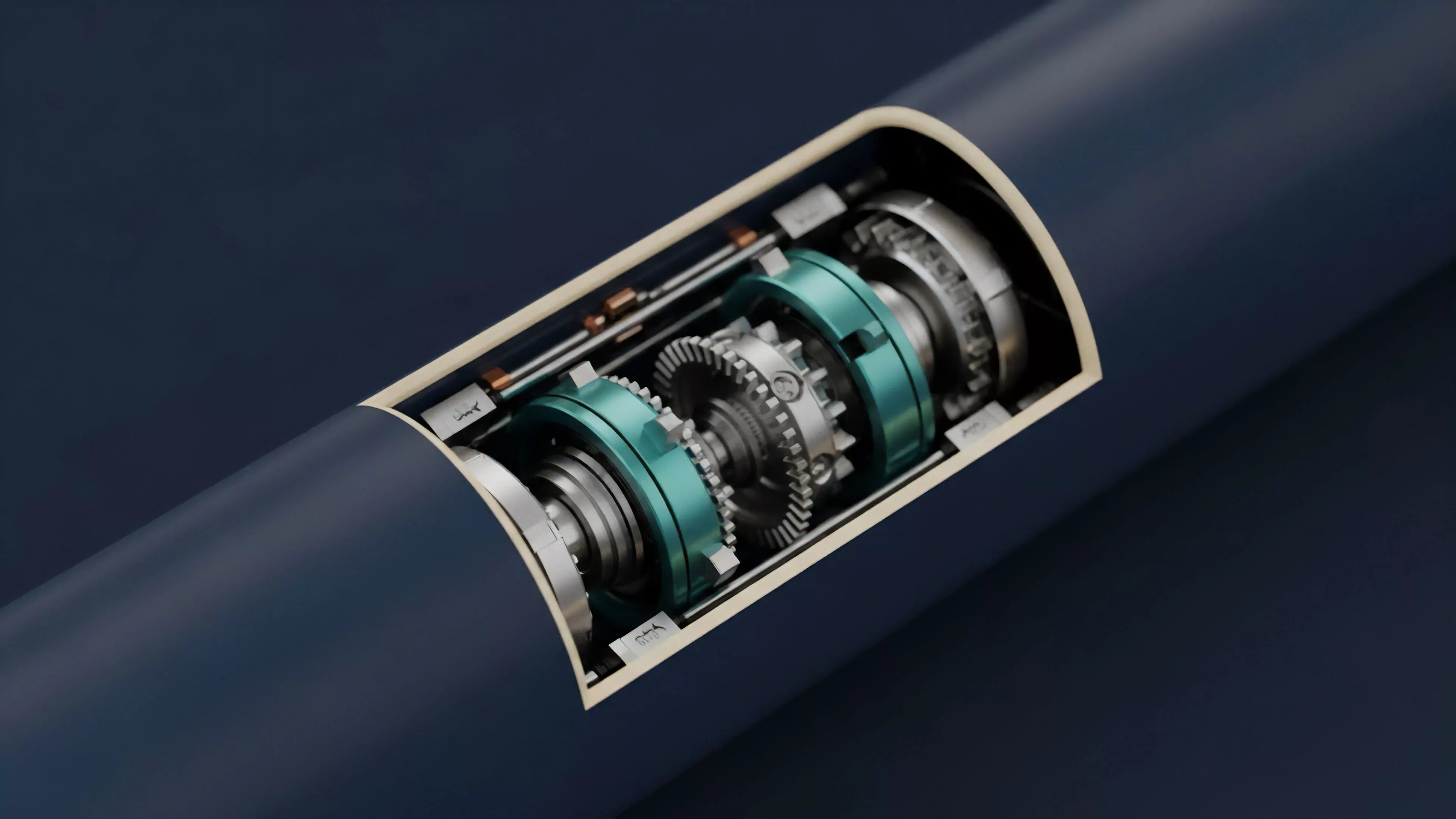

This process centers on the intersection of protocol architecture and market microstructure. A system is only as robust as its ability to maintain margin requirements, handle order flow fragmentation, and manage latency during periods of extreme volatility. Validation turns theoretical profitability into operational reliability by reviewing every state transition within the smart contract layer.

Origin

The requirement for Trading System Validation stems from the historical fragility of early centralized and decentralized derivative venues.

Financial history demonstrates that systemic collapse often originates from unforeseen interactions between leverage, liquidation mechanisms, and market liquidity. Early market participants relied on rudimentary testing, leading to catastrophic losses when code execution diverged from economic intent during high-stress cycles.

- Legacy Finance Models provided the initial framework for backtesting and stress testing, though they lacked the specific constraints of 24/7 blockchain settlement.

- Smart Contract Vulnerabilities introduced a new dimension of risk where technical exploits could bypass intended financial logic, mandating a security-first approach to validation.

- Liquidation Engine Failures across early protocols highlighted the necessity of simulating extreme volatility to ensure collateral sufficiency during rapid price swings.

This evolution reflects a transition from optimistic experimentation to a hardened, engineering-centric perspective. As protocols increased in complexity, the industry recognized that shipping code to production without extensive pre-deployment verification invited failure. Consequently, the focus shifted toward comprehensive simulation environments that replicate the adversarial nature of decentralized markets.

Theory

The theoretical foundation of Trading System Validation rests on probabilistic modeling and adversarial simulation.

A robust validation framework must account for the non-linear relationship between market volatility, liquidity provision, and protocol-level settlement latency. By applying quantitative rigor, architects assess the sensitivity of a strategy to various exogenous shocks, ensuring that the system remains within defined risk limits.

| Parameter | Validation Metric |
| --- | --- |
| Latency Sensitivity | Execution slippage variance under load |
| Liquidation Thresholds | Collateral sufficiency during flash crashes |
| Margin Integrity | Cross-margin correlation risk exposure |
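As a rough illustration of the liquidation-threshold row, the sketch below checks whether a leveraged long position stays above its maintenance margin after an instantaneous price drop. The function names, the linear payoff, and the 5% maintenance requirement are assumptions made for this example and do not describe any specific protocol.

```python
def margin_ratio(collateral, size, entry_price, mark_price):
    """Equity divided by notional for a long position with a linear payoff (assumed)."""
    equity = collateral + size * (mark_price - entry_price)
    return equity / (size * mark_price)


def liquidation_price(collateral, size, entry_price, maintenance=0.05):
    """Mark price at which margin_ratio equals the (assumed) maintenance requirement."""
    return (size * entry_price - collateral) / (size * (1.0 - maintenance))


def survives_shock(collateral, size, entry_price, shock, maintenance=0.05):
    """True if the position is still at or above maintenance after a fractional price move."""
    mark_price = entry_price * (1.0 + shock)
    return margin_ratio(collateral, size, entry_price, mark_price) >= maintenance


# Hypothetical 5x long: 2,000 of collateral backing 1 unit entered at 10,000.
print(survives_shock(2_000, 1, 10_000, -0.10))        # True
print(survives_shock(2_000, 1, 10_000, -0.20))        # False
print(round(liquidation_price(2_000, 1, 10_000), 2))  # 8421.05
```

A fuller validation run would sweep the shock size across many positions and also model fees and funding, which this sketch leaves out.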

Quantitative finance provides the tools for evaluating the Greeks (delta, gamma, vega, and theta) to understand how a system responds to changes in underlying asset prices and time decay. However, in the decentralized domain, these calculations must be reconciled with on-chain settlement speeds. The model is essentially a map of potential future states, and validation tests the durability of that map against the chaotic terrain of real-world order flow.
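To make the Greeks concrete, here is a minimal sketch that computes them for a European call under the Black-Scholes model and then cross-checks the analytical delta against a bump-and-reprice estimate. Black-Scholes with constant volatility and no dividends is an assumption chosen for brevity; it is a simplification for crypto options, and the function names are illustrative.

```python
import math


def _pdf(x):
    return math.exp(-0.5 * x * x) / math.sqrt(2.0 * math.pi)


def _cdf(x):
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def _d1_d2(spot, strike, vol, t, r):
    d1 = (math.log(spot / strike) + (r + 0.5 * vol * vol) * t) / (vol * math.sqrt(t))
    return d1, d1 - vol * math.sqrt(t)


def call_price(spot, strike, vol, t, r=0.0):
    """Black-Scholes price of a European call (t in years, vol annualized)."""
    d1, d2 = _d1_d2(spot, strike, vol, t, r)
    return spot * _cdf(d1) - strike * math.exp(-r * t) * _cdf(d2)


def call_greeks(spot, strike, vol, t, r=0.0):
    """Analytical Greeks: vega per 1.0 change in vol, theta per year."""
    d1, d2 = _d1_d2(spot, strike, vol, t, r)
    sqrt_t = math.sqrt(t)
    return {
        "delta": _cdf(d1),
        "gamma": _pdf(d1) / (spot * vol * sqrt_t),
        "vega": spot * _pdf(d1) * sqrt_t,
        "theta": -spot * _pdf(d1) * vol / (2.0 * sqrt_t)
                 - r * strike * math.exp(-r * t) * _cdf(d2),
    }


def bumped_delta(spot, strike, vol, t, r=0.0, rel_bump=1e-3):
    """Central finite-difference delta, used to validate the closed form."""
    h = spot * rel_bump
    up = call_price(spot + h, strike, vol, t, r)
    down = call_price(spot - h, strike, vol, t, r)
    return (up - down) / (2.0 * h)


greeks = call_greeks(spot=30_000, strike=30_000, vol=0.8, t=0.25)
print(greeks)
print(abs(greeks["delta"] - bumped_delta(30_000, 30_000, 0.8, 0.25)))
```

Comparing a closed-form sensitivity with a numerical bump is a cheap consistency check; it does not account for the settlement latency discussed above, which would have to be modeled separately.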

Quantitative validation models map potential future states to identify the breaking points of a strategy before they encounter live market volatility.

This analytical process requires acknowledging that markets are not static environments but dynamic systems under constant stress from automated agents and human participants. Sometimes, the most elegant mathematical model fails because it ignores the physical reality of gas congestion or the game-theoretic incentives of liquidators. True validation incorporates these externalities, treating the protocol as an adversarial participant rather than a passive ledger.
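The interaction between gas congestion and liquidator incentives can be sketched with simple arithmetic: a liquidator only acts when the liquidation bonus exceeds the transaction cost. The bonus rate, gas usage, and prices below are hypothetical inputs, and real liquidators also face competition and price risk that this example ignores.

```python
GWEI = 1e-9  # one gwei expressed in ETH


def gas_cost_usd(gas_used, gas_price_gwei, eth_price_usd):
    """Transaction cost in USD for a given gas usage and gas price."""
    return gas_used * gas_price_gwei * GWEI * eth_price_usd


def liquidation_is_profitable(debt_repaid_usd, bonus_rate, gas_used,
                              gas_price_gwei, eth_price_usd):
    """True if the liquidation bonus exceeds the gas cost (all inputs assumed)."""
    reward = debt_repaid_usd * bonus_rate
    return reward > gas_cost_usd(gas_used, gas_price_gwei, eth_price_usd)


def min_profitable_debt_usd(bonus_rate, gas_used, gas_price_gwei, eth_price_usd):
    """Smallest debt size a rational liquidator would bother to repay."""
    return gas_cost_usd(gas_used, gas_price_gwei, eth_price_usd) / bonus_rate


# Hypothetical: 500 USD of debt, 5% bonus, 400k gas, ETH at 2,000 USD.
print(liquidation_is_profitable(500, 0.05, 400_000, 20, 2_000))   # True
print(liquidation_is_profitable(500, 0.05, 400_000, 200, 2_000))  # False
```

Under these assumed numbers, a tenfold gas spike leaves every position below roughly 3,200 USD of debt unattractive to liquidate, which is exactly the kind of externality a purely price-based model would miss.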

Approach

Current validation strategies prioritize high-fidelity simulation environments that mirror mainnet conditions.

Developers utilize historical tick data and synthetic order flow to stress-test their systems, focusing on how algorithms manage execution during liquidity droughts. This involves deploying code to testnets, simulating network congestion, and auditing smart contract interactions to ensure that state changes align with the expected financial outcome.
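One way to picture a liquidity-drought test is to replay the same market order against progressively thinner synthetic order books and measure the slippage. The book levels, the `drain_liquidity` helper, and the order size below are made up for illustration; a real harness would use recorded tick data.

```python
def fill_buy(asks, quantity):
    """Walk ask levels (price, size), sorted by ascending price.

    Returns (average fill price, filled quantity); the order may fill partially.
    """
    remaining = quantity
    cost = 0.0
    for price, size in asks:
        take = min(size, remaining)
        cost += take * price
        remaining -= take
        if remaining <= 0:
            break
    filled = quantity - remaining
    if filled <= 0:
        raise ValueError("order book is empty")
    return cost / filled, filled


def slippage_bps(asks, quantity):
    """Slippage of the average fill versus the best ask, in basis points."""
    avg_price, filled = fill_buy(asks, quantity)
    best_ask = asks[0][0]
    return (avg_price - best_ask) / best_ask * 1e4, filled


def drain_liquidity(asks, keep_fraction):
    """Synthetic drought: keep only a fraction of the size at every level."""
    return [(price, size * keep_fraction) for price, size in asks]


book = [(100, 10), (101, 10), (102, 10)]  # hypothetical ask side
for keep in (1.0, 0.5, 0.25):
    bps, filled = slippage_bps(drain_liquidity(book, keep), 12)
    print(keep, round(bps, 2), filled)
```

The partial fill at the thinnest setting is as important as the slippage figure: a strategy that assumes full execution would carry unhedged exposure in that scenario.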

- Backtesting utilizes historical price action to evaluate how a strategy would have performed, providing a baseline for expected return and risk.

- Monte Carlo Simulations generate thousands of potential future market paths, identifying how a system manages extreme tail risk scenarios.

- Formal Verification applies mathematical proofs to smart contract code, showing that specified properties hold for every input covered by the proof model rather than only for the cases a test suite happens to exercise.
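The Monte Carlo item in the list above can be sketched as follows: simulate many price paths and count how often a position's liquidation price is touched within a given horizon. Geometric Brownian motion with zero drift, hourly steps, and the parameter values are assumptions for this example; GBM is known to understate the fat tails seen in crypto markets, so the output is a lower bound at best.

```python
import math
import random


def liquidation_probability(spot, liq_price, vol, horizon_days,
                            n_paths=2_000, steps_per_day=24, seed=7):
    """Fraction of simulated paths that touch liq_price before the horizon.

    Assumes driftless geometric Brownian motion with annualized volatility vol.
    """
    rng = random.Random(seed)  # fixed seed so runs are reproducible
    dt = 1.0 / (365 * steps_per_day)
    n_steps = horizon_days * steps_per_day
    drift = -0.5 * vol * vol * dt
    diffusion = vol * math.sqrt(dt)
    log_barrier = math.log(liq_price / spot)

    hits = 0
    for _ in range(n_paths):
        log_return = 0.0
        for _ in range(n_steps):
            log_return += drift + diffusion * rng.gauss(0.0, 1.0)
            if log_return <= log_barrier:
                hits += 1
                break
    return hits / n_paths


# Hypothetical position liquidated after a 15% drop, checked over one week.
for vol in (0.4, 1.2):
    print(vol, liquidation_probability(10_000, 8_500, vol, horizon_days=7))
```

Swapping the Gaussian step for resampled historical returns or a jump process is a natural next step when the tails matter more than the central estimate.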

This methodical approach minimizes the gap between simulation and execution. It is a demanding process that requires deep familiarity with the underlying blockchain consensus and the specific liquidity characteristics of the chosen derivative instruments. Architects must maintain a healthy skepticism toward their own models, constantly seeking out edge cases that could lead to unexpected behavior during periods of high market activity.

Evolution

Trading System Validation has moved from simple backtesting toward sophisticated, real-time risk monitoring and automated fail-safes.

Earlier iterations focused on basic profitability metrics, whereas modern systems prioritize systemic resilience and capital efficiency. As decentralized protocols become more interconnected, the validation process has expanded to account for contagion risk, where the failure of one protocol impacts the liquidity and stability of another.

Modern validation frameworks prioritize systemic resilience and contagion risk management over simple profitability metrics.
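Contagion risk can be illustrated with a toy cascade model: each protocol holds a capital buffer and fails once its losses from already-failed counterparties exceed that buffer. The protocol names, exposure amounts, and the all-or-nothing loss rule are hypothetical simplifications, not a description of any real system.

```python
def cascade(exposures, buffers, initially_failed):
    """Return the set of protocols that fail after the shock propagates.

    exposures[a][b] is the amount protocol a loses if protocol b fails.
    buffers[a] is the loss protocol a can absorb before failing itself.
    """
    failed = set(initially_failed)
    changed = True
    while changed:  # repeat until no new failure appears
        changed = False
        for name, buffer in buffers.items():
            if name in failed:
                continue
            loss = sum(amount
                       for counterparty, amount in exposures.get(name, {}).items()
                       if counterparty in failed)
            if loss > buffer:
                failed.add(name)
                changed = True
    return failed


# Hypothetical network of four protocols.
buffers = {"stable": 10, "lender": 50, "dex": 30, "vault": 100}
exposures = {
    "lender": {"stable": 60},
    "dex": {"lender": 40},
    "vault": {"dex": 20, "lender": 30},
}
print(sorted(cascade(exposures, buffers, {"stable"})))  # ['dex', 'lender', 'stable']
print(sorted(cascade(exposures, buffers, {"dex"})))     # ['dex']
```

Running the cascade from every possible starting failure gives a crude ranking of which protocols are systemically important under the assumed exposures.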

The integration of cross-chain data feeds and more efficient oracles has enabled a higher degree of precision in validation models. Architects now design systems that can dynamically adjust their risk exposure based on real-time network health metrics. This shift represents a move toward self-correcting financial structures that recognize their own limitations and act to preserve capital during periods of heightened uncertainty.
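A dynamic risk adjustment of this kind might look like the sketch below, which scales target exposure down as gas prices rise or the oracle feed goes stale, and cuts it to zero past hard limits. The two health metrics, the linear scaling, and the threshold values are assumptions picked for illustration.

```python
def exposure_multiplier(gas_price_gwei, oracle_age_s,
                        max_gas_gwei=300.0, max_oracle_age_s=60.0):
    """Return a factor in [0, 1] to apply to the strategy's target exposure.

    Exposure shrinks linearly with network stress and drops to zero once
    either (assumed) hard limit is reached.
    """
    if gas_price_gwei >= max_gas_gwei or oracle_age_s >= max_oracle_age_s:
        return 0.0
    gas_factor = 1.0 - gas_price_gwei / max_gas_gwei
    oracle_factor = 1.0 - oracle_age_s / max_oracle_age_s
    # The weakest health signal determines how much risk is allowed.
    return max(0.0, min(1.0, gas_factor, oracle_factor))


print(exposure_multiplier(0, 0))     # 1.0
print(exposure_multiplier(150, 0))   # 0.5
print(exposure_multiplier(30, 90))   # 0.0
```

Taking the minimum of the factors is a deliberately conservative choice: one degraded signal is enough to reduce risk, regardless of how healthy the others look.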

Horizon

The future of Trading System Validation involves the integration of autonomous, AI-driven stress testing that evolves alongside the market.

As protocols grow in complexity, manual validation will prove insufficient to identify all potential vulnerabilities. Next-generation systems will employ continuous, automated red-teaming to probe for weaknesses in real time, effectively creating an immune system for decentralized finance.

| Development Phase | Primary Objective |
| --- | --- |
| Predictive Simulation | Anticipating liquidity gaps before occurrence |
| Autonomous Auditing | Continuous code verification against market shifts |
| Systemic Risk Mapping | Quantifying inter-protocol contagion pathways |

This evolution will likely redefine the relationship between developers and the systems they deploy. By creating self-validating architectures, the focus will move from reactive patching to proactive, robust design. The ultimate goal is to build financial systems that are not just efficient, but inherently stable and resistant to the structural shocks that have characterized previous market cycles.