Essence

Trading System Calibration represents the precise adjustment of algorithmic parameters and risk thresholds to align automated execution engines with prevailing market microstructure dynamics. It functions as the technical bridge between abstract mathematical models and the volatile reality of decentralized order books.

Trading System Calibration optimizes the alignment between quantitative pricing models and actual market liquidity conditions to ensure execution efficiency.

This practice involves the fine-tuning of latency sensitivities, slippage tolerances, and capital allocation ratios. Without continuous adjustment, strategies decay as market participants adapt their own behaviors, rendering static models obsolete in the face of shifting liquidity and protocol-level constraints.

Origin

The roots of this discipline extend back to high-frequency trading in traditional equity markets, where firms first recognized that order execution quality deteriorates rapidly without constant feedback loops. In decentralized finance, this necessity intensified because on-chain data is fully transparent while miner extractable value (MEV), the profit block producers can capture by reordering, including, or excluding transactions, remains opaque.

- Latency Arbitrage: Early efforts focused on reducing the time between signal generation and transaction inclusion.

- Liquidity Fragmentation: The expansion of decentralized exchanges necessitated calibration across disparate pools to manage cross-venue execution.

- Protocol Interoperability: Modern systems must now calibrate for the variable block times and gas fee volatility inherent to diverse blockchain architectures.

These origins highlight a transition from simple automated trading to complex systems engineering where the infrastructure itself becomes a variable in the risk equation.

Theory

The theoretical framework rests on the assumption that market efficiency is a dynamic state rather than a static equilibrium. Calibration requires mapping the relationship between internal risk parameters and external market signals, often utilizing quantitative sensitivity analysis to identify the optimal operational range.

Mathematical Feedback Loops

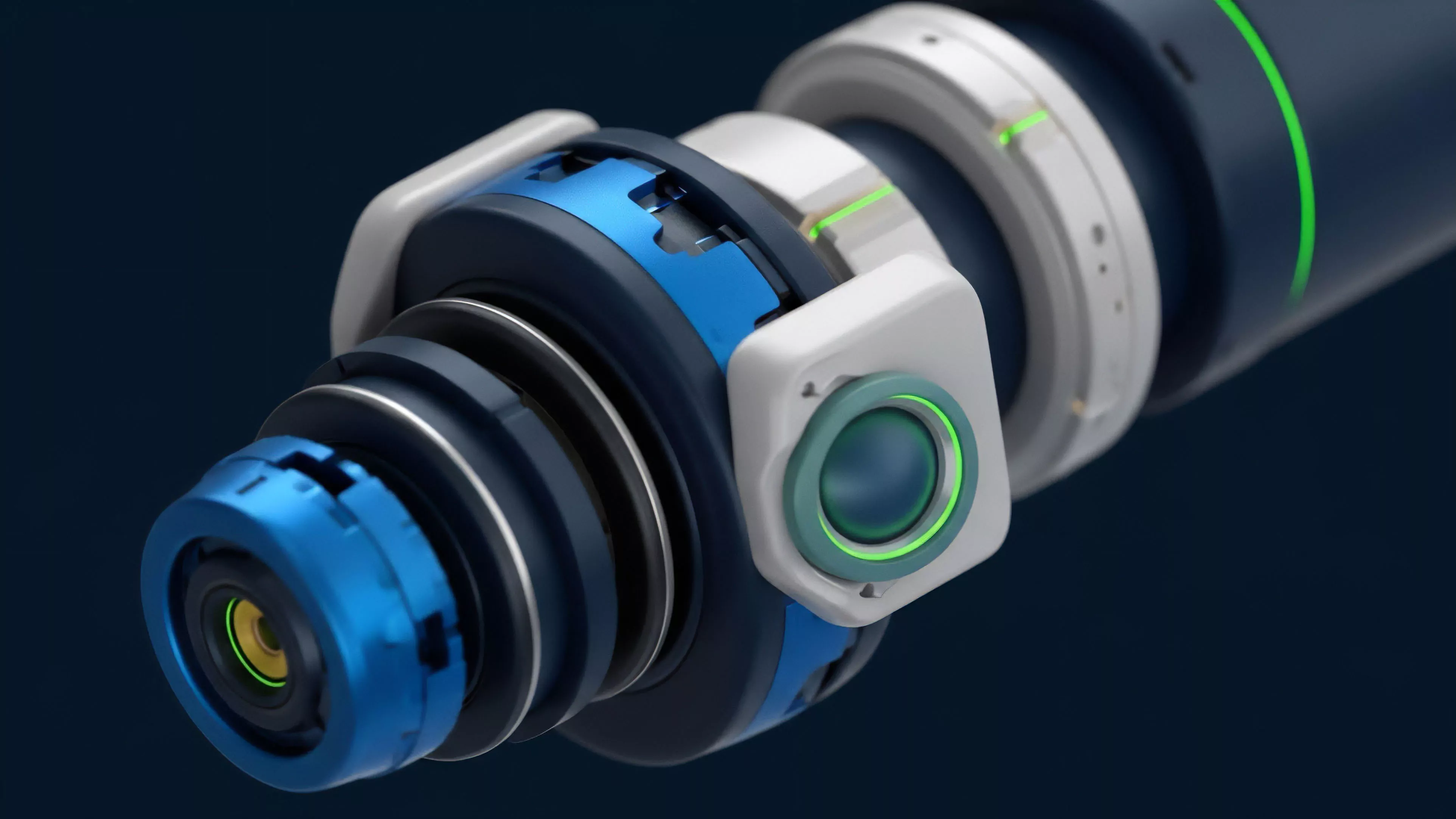

Effective calibration relies on monitoring the Greeks (delta, gamma, vega, and theta) in real time. When realized volatility diverges from implied volatility, the system must recalibrate its hedge ratios to prevent excessive exposure.
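A minimal sketch of that recalibration trigger is shown here. It assumes a single European call hedged with its Black-Scholes delta, daily price observations in a market that trades 365 days a year, and a relative divergence threshold of 10%. The function names, the threshold, and the choice to fall back on realized volatility are illustrative assumptions, not a method prescribed by the text.

```python
import math
from statistics import NormalDist


def realized_vol(prices, periods_per_year=365):
    """Annualized realized volatility from the log returns of a price series."""
    rets = [math.log(b / a) for a, b in zip(prices, prices[1:])]
    mean = sum(rets) / len(rets)
    var = sum((r - mean) ** 2 for r in rets) / (len(rets) - 1)
    return math.sqrt(var * periods_per_year)


def bs_call_delta(spot, strike, vol, t_years, rate=0.0):
    """Black-Scholes delta of a European call without dividends."""
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol ** 2) * t_years) / (
        vol * math.sqrt(t_years)
    )
    return NormalDist().cdf(d1)


def recalibrated_delta(spot, strike, implied_vol, prices, t_years, tolerance=0.10):
    """Return (delta, diverged).

    If realized volatility differs from implied volatility by more than
    `tolerance` (relative), the hedge delta is recomputed with realized
    volatility. Both the threshold and the fallback are assumptions.
    """
    rv = realized_vol(prices)
    diverged = abs(rv - implied_vol) / implied_vol > tolerance
    vol = rv if diverged else implied_vol
    return bs_call_delta(spot, strike, vol, t_years), diverged
```

Calling `recalibrated_delta(100, 100, 0.80, [100, 102, 100, 102, 100], 0.25)` flags a divergence because the sample's realized volatility sits well below the 80% implied figure. A production system would also need to handle a zero-variance price window, which this sketch does not guard against.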

Calibration theory treats market volatility as a non-stationary signal requiring adaptive rather than fixed risk responses.

Behavioral Game Theory

Market participants engage in constant strategic interaction. A calibrated system anticipates adversarial order flow, such as front-running or sandwich attacks, by adjusting transaction sequencing and gas price bidding strategies to mitigate negative impact.

| Component | Calibration Objective | Risk Factor |
|---|---|---|
| Slippage Tolerance | Maximize fill rate | Adverse selection |
| Latency Threshold | Minimize execution lag | Network congestion |
| Margin Buffer | Prevent liquidations | Capital inefficiency |

The complexity of these interactions suggests that systems often suffer from over-fitting to historical data, failing to account for black-swan events where correlation structures break down entirely.
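The slippage trade-off in the table can be made concrete with a small sketch: given slippage observed on past orders (in basis points), pick the smallest tolerance that would have filled a target share of them. The helper names and the sample data are hypothetical, and the result is only as good as the history it is fitted to, which is exactly the over-fitting risk described above.

```python
import math


def tolerance_for_fill_rate(observed_slippage_bps, target_fill_rate):
    """Smallest tolerance (bps) that would have filled at least
    `target_fill_rate` of the historical orders."""
    if not observed_slippage_bps:
        raise ValueError("need at least one observation")
    if not 0 < target_fill_rate <= 1:
        raise ValueError("target_fill_rate must be in (0, 1]")
    ordered = sorted(observed_slippage_bps)
    k = math.ceil(target_fill_rate * len(ordered))
    return ordered[k - 1]


def fill_rate(observed_slippage_bps, tolerance_bps):
    """Share of historical orders whose slippage stayed within the tolerance."""
    filled = sum(s <= tolerance_bps for s in observed_slippage_bps)
    return filled / len(observed_slippage_bps)


# Hypothetical sample of per-order slippage in basis points.
history = [2, 5, 8, 12, 15, 20, 30, 45, 60, 120]
tolerance = tolerance_for_fill_rate(history, 0.5)
```

A wider tolerance raises the fill rate but also accepts worse prices, so the target itself is a calibration parameter rather than a constant.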

Approach

Current methodologies prioritize data-driven loops that ingest real-time order book snapshots and mempool activity. Strategists utilize backtesting frameworks that incorporate synthetic slippage models to simulate how large orders alter the local price surface.
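One simple synthetic slippage model is sketched here, assuming a constant-product pool (the `x * y = k` design used by many decentralized exchanges) with a proportional swap fee. The text does not name a specific model, so the pool type, the 0.3% default fee, and the function names are assumptions chosen for illustration.

```python
def constant_product_fill(reserve_in, reserve_out, amount_in, fee=0.003):
    """Output amount for a swap against a constant-product pool."""
    amount_in_after_fee = amount_in * (1 - fee)
    return reserve_out * amount_in_after_fee / (reserve_in + amount_in_after_fee)


def slippage_bps(reserve_in, reserve_out, amount_in, fee=0.003):
    """Execution cost versus the pre-trade marginal price, in basis points.

    The figure includes the swap fee as well as price impact.
    """
    spot_price = reserve_out / reserve_in
    exec_price = constant_product_fill(reserve_in, reserve_out, amount_in, fee) / amount_in
    return (1 - exec_price / spot_price) * 1e4


# A backtest can sweep order sizes to see how cost grows with size.
impact_curve = [(size, slippage_bps(1_000, 1_000, size)) for size in (1, 10, 50, 100)]
```

In this model an order worth 1% of the input reserve costs roughly 1% in price impact before fees, which gives a backtest a size-dependent cost instead of a flat assumption.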

- Parameter Optimization: Algorithms iteratively test ranges of stop-loss and take-profit levels against simulated market shocks.

- Execution Profiling: Systems analyze historical fill quality to determine the most cost-effective routing protocols.

- Stress Testing: Automated agents subject the system to extreme liquidity depletion scenarios to verify margin engine robustness.
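The parameter optimization step in the list above can be sketched as a grid search over stop-loss and take-profit levels, evaluated on simulated return paths with occasional downward shocks. Everything here is a simplifying assumption: the Gaussian random walk, the shock process, the function names, and the idealized fills at exactly the stop or target level with no gap risk.

```python
import random


def simulate_trade(path, stop_loss, take_profit):
    """Realized return of a long position on a path of cumulative returns.

    Exits at the first step that breaches either level, otherwise at the end.
    """
    for r in path:
        if r <= -stop_loss:
            return -stop_loss
        if r >= take_profit:
            return take_profit
    return path[-1]


def shocked_paths(n_paths, n_steps, vol, shock_prob, shock_size, seed=0):
    """Random-walk paths with occasional downward shocks (seeded, reproducible)."""
    rng = random.Random(seed)
    paths = []
    for _ in range(n_paths):
        level, path = 0.0, []
        for _ in range(n_steps):
            level += rng.gauss(0.0, vol)
            if rng.random() < shock_prob:
                level -= shock_size
            path.append(level)
        paths.append(path)
    return paths


def grid_search(paths, stop_levels, profit_levels):
    """Return (average return, stop_loss, take_profit) for the best pair."""
    best = None
    for sl in stop_levels:
        for tp in profit_levels:
            avg = sum(simulate_trade(p, sl, tp) for p in paths) / len(paths)
            if best is None or avg > best[0]:
                best = (avg, sl, tp)
    return best
```

A call such as `grid_search(shocked_paths(200, 50, 0.01, 0.02, 0.05), [0.02, 0.05, 0.10], [0.02, 0.05, 0.10])` returns the best-scoring pair on those paths. Re-running the search on paths generated with a different seed is a cheap check on whether the chosen levels are stable.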

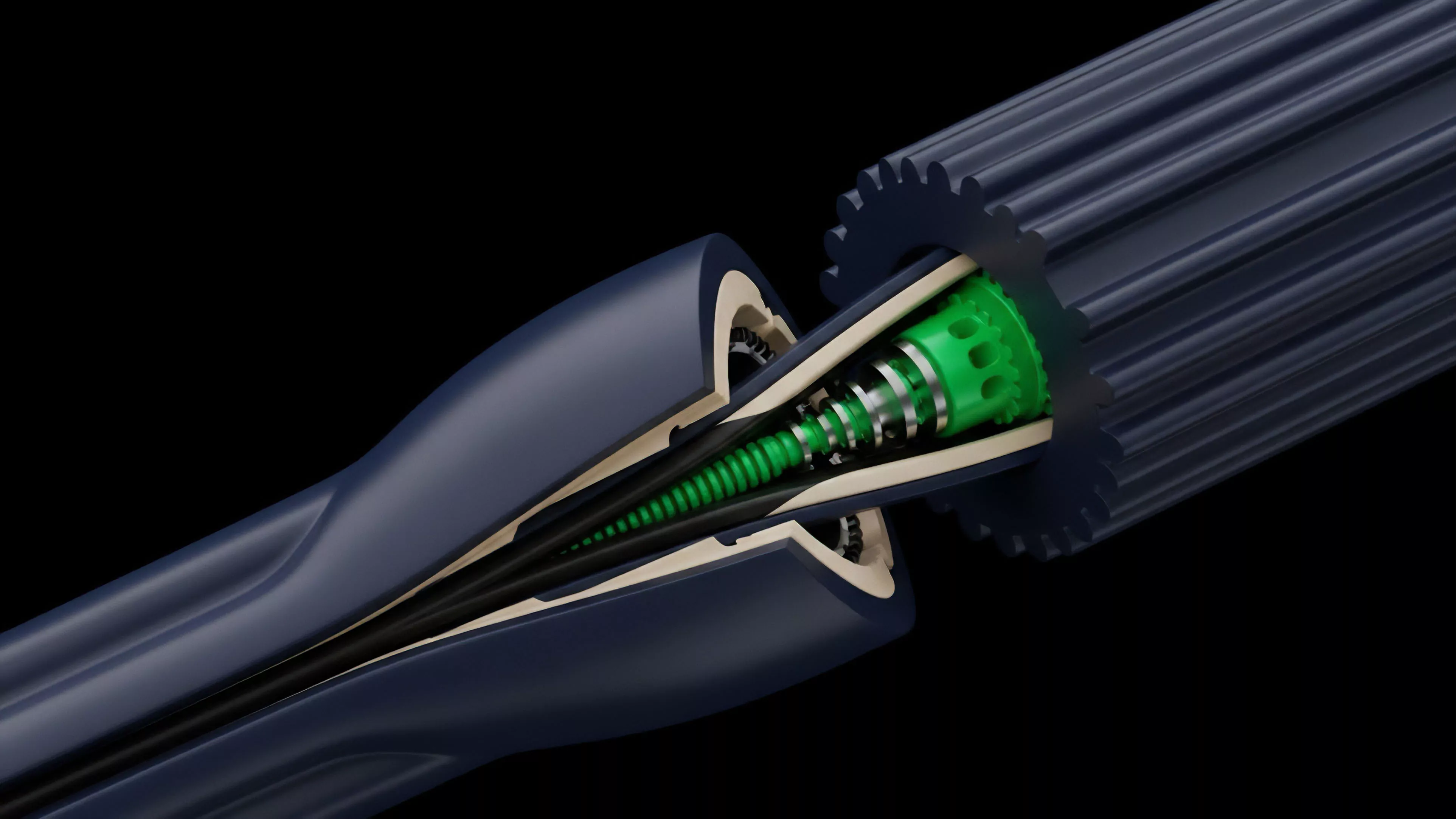

This approach emphasizes the modularity of the trading stack. By isolating the execution engine from the signal generation logic, developers can recalibrate one without compromising the integrity of the other.
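A minimal sketch of that separation follows, assuming the signal logic and the execution engine communicate only through a small `Signal` value. The class names, the threshold rule, and the child-order splitting are hypothetical and serve only to show that execution parameters can be swapped without touching signal code.

```python
from dataclasses import dataclass
from typing import List, Optional, Protocol


@dataclass(frozen=True)
class Signal:
    side: str    # "buy" or "sell"
    size: float  # parent order size in base units


class SignalSource(Protocol):
    def next_signal(self, mid_price: float) -> Optional[Signal]: ...


class ThresholdSignal:
    """Toy signal: trade when price deviates from fair value by more than `edge`."""

    def __init__(self, fair_value: float, edge: float, size: float):
        self.fair_value, self.edge, self.size = fair_value, edge, size

    def next_signal(self, mid_price: float) -> Optional[Signal]:
        if mid_price < self.fair_value - self.edge:
            return Signal("buy", self.size)
        if mid_price > self.fair_value + self.edge:
            return Signal("sell", self.size)
        return None


@dataclass
class ExecutionParams:
    max_child_order: float  # largest slice sent to the venue at once


class ExecutionEngine:
    def __init__(self, params: ExecutionParams):
        self.params = params

    def plan(self, signal: Signal) -> List[float]:
        """Split the parent order into child orders no larger than the cap."""
        if self.params.max_child_order <= 0:
            raise ValueError("max_child_order must be positive")
        remaining, children = signal.size, []
        while remaining > 1e-12:
            child = min(remaining, self.params.max_child_order)
            children.append(child)
            remaining -= child
        return children
```

Recalibrating execution then means assigning a new `ExecutionParams` to the engine; `ThresholdSignal` is never touched, and any other object that satisfies `SignalSource` can be substituted in the same way.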

Evolution

The discipline has shifted from manual, spreadsheet-based tuning to fully autonomous, machine-learning-driven adjustment. Early protocols relied on human intervention to update parameters after major market dislocations, a process too slow for decentralized markets that trade around the clock.

Modern evolution focuses on autonomous calibration agents that dynamically update risk parameters in response to protocol-level volatility.

The integration of on-chain analytics has provided a granular view of participant behavior, allowing systems to adjust in anticipation of liquidation cascades or liquidity provision shifts. This evolution reflects a broader movement toward self-correcting financial systems that minimize human reliance during periods of systemic stress.
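One way to picture a self-correcting parameter is a margin buffer that scales with an exponentially weighted volatility estimate. The sketch below is an assumption-laden illustration, not a description of any specific protocol: the class name, the linear scaling rule, the floor and cap, and the 0.94 decay (a value commonly used for daily data) are all choices made for the example.

```python
import math


class MarginBufferCalibrator:
    """Scales a margin buffer with an EWMA volatility estimate."""

    def __init__(self, base_buffer, target_vol, decay=0.94,
                 min_buffer=0.05, max_buffer=0.50):
        self.base_buffer = base_buffer  # buffer applied when vol equals target_vol
        self.target_vol = target_vol    # per-period volatility the base buffer assumes
        self.decay = decay
        self.min_buffer = min_buffer
        self.max_buffer = max_buffer
        self._var = target_vol ** 2     # start the estimate at the target

    def update(self, period_return):
        """Fold one new return into the variance estimate; return the new buffer."""
        self._var = self.decay * self._var + (1 - self.decay) * period_return ** 2
        return self.buffer

    @property
    def buffer(self):
        scaled = self.base_buffer * math.sqrt(self._var) / self.target_vol
        return min(self.max_buffer, max(self.min_buffer, scaled))


calibrator = MarginBufferCalibrator(base_buffer=0.10, target_vol=0.02)
after_shock = calibrator.update(0.05)  # a 5% move raises the buffer above 10%
```

The floor and cap keep the buffer inside a governed range, so the agent can react without human input while its worst-case behavior stays bounded.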

Horizon

Future development points toward decentralized calibration protocols where community-governed risk parameters adapt via on-chain consensus. This movement aims to remove centralized points of failure, ensuring that the system remains resilient even when individual participants act in ways that deviate from initial assumptions.

The path forward involves utilizing zero-knowledge proofs to calibrate strategies against private liquidity sources while maintaining regulatory compliance. As these systems mature, the distinction between manual strategy management and automated infrastructure maintenance will blur, leading to more robust, self-optimizing decentralized financial architectures.

What unseen feedback loops within the current decentralized derivative infrastructure will define the next major systemic failure or breakthrough in autonomous risk management?