Essence

Throughput Optimization in decentralized derivatives refers to the architectural design and execution strategy employed to maximize the volume of order matching, liquidation processing, and state updates within a specific blockchain or layer-two environment. It represents the limit of capital velocity within a permissionless system, where the ability to settle trades at high frequency directly dictates the viability of complex financial instruments like American options or delta-neutral strategies.

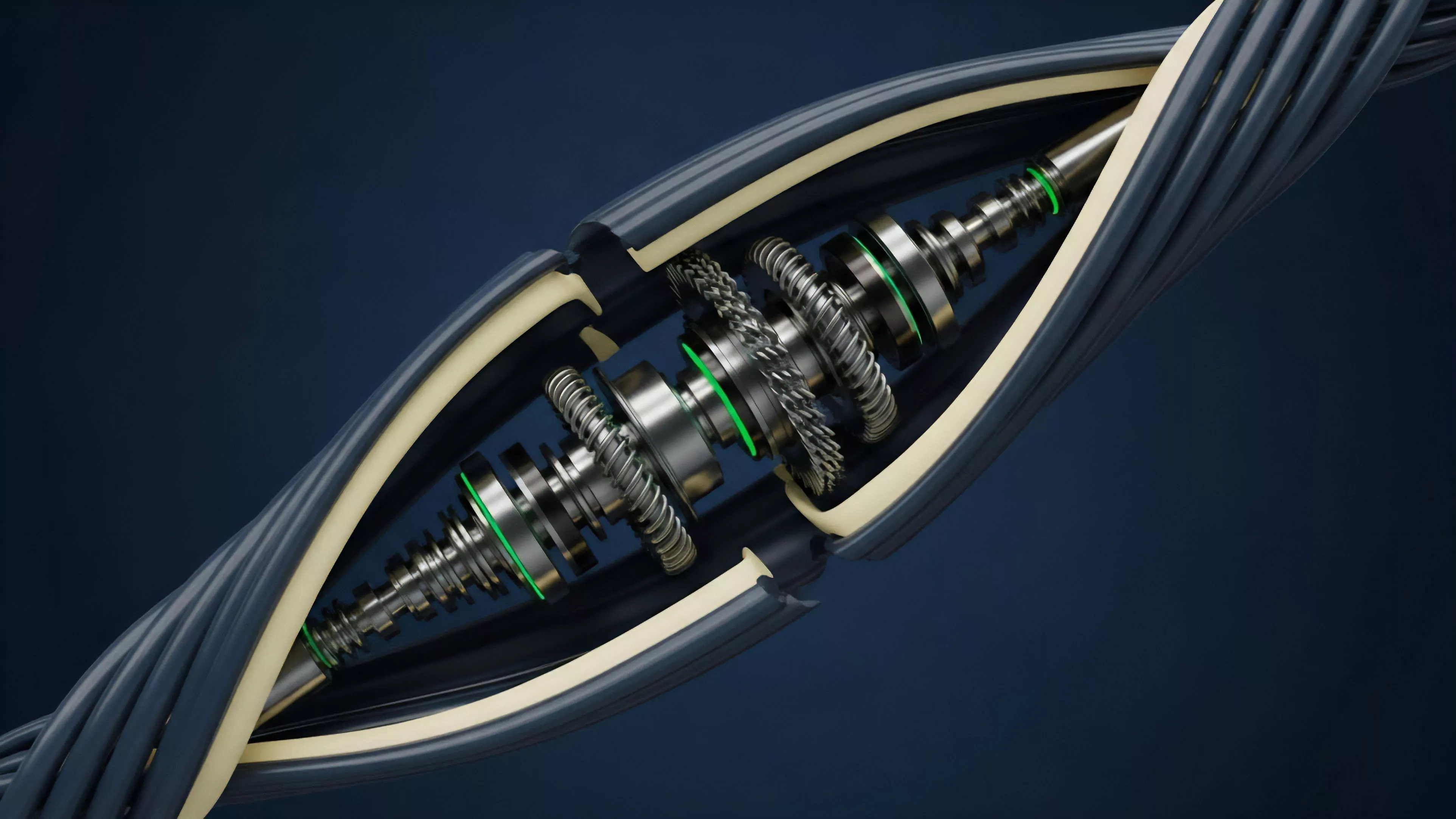

Throughput optimization functions as the mechanical ceiling for market efficiency, defining the maximum rate at which liquidity can react to price discovery.

The core significance lies in reducing the latency between intent and finality. When order flow hits bottlenecks in the underlying consensus mechanism, the resulting slippage exposes market makers to risk they cannot hedge in time. Network performance therefore feeds directly into the cost of hedging volatility.

Systems designed for high-frequency interaction must balance the overhead of decentralized verification with the need for near-instantaneous execution.

Origin

Early iterations of decentralized trading relied on on-chain order books that suffered from extreme congestion during periods of high volatility. Developers observed that standard consensus protocols, designed for security and decentralization, were fundamentally ill-suited for the rapid state transitions required by derivative markets. This conflict necessitated a shift toward specialized scaling solutions.

- Transaction Sequencing emerged as the primary method to order incoming requests before they reached the smart contract layer.

- Off-chain Computation models allowed protocols to move the heavy lifting of matching engines away from the mainnet to improve speed.

- State Channel implementations were tested to enable high-volume, low-latency interaction between two parties without constant broadcast to the ledger.

The evolution began with simple automated market makers, but as demand for more sophisticated hedging instruments grew, the architecture moved toward rollups and custom execution environments. The goal shifted from simple asset swapping to maintaining a consistent, high-performance order flow capable of handling complex Greeks management in real time.

Theory

At the mathematical level, Throughput Optimization is an exercise in minimizing the time-to-settlement for a given set of operations. In an adversarial environment, the system must ensure that the order matching engine remains accurate despite attempts at front-running or malicious congestion. The theoretical framework relies on the interplay between block space availability and the efficiency of the smart contract logic.

| Metric | Impact on Derivatives |
| --- | --- |
| Finality Time | Dictates the speed of liquidation triggering |
| Gas Throughput | Limits the complexity of option pricing formulas |
| State Bloat | Affects the long-term cost of historical data |
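
The link between gas throughput and pricing complexity can be made concrete with rough arithmetic. The sketch below is illustrative only: the function name and every figure (block gas limit, gas per operation, block time) are assumptions chosen for the example, not measurements from any real network.

```python
def max_ops_per_second(block_gas_limit: int, gas_per_op: int, block_time_s: float) -> float:
    """Upper bound on operations per second if each block held nothing else."""
    if gas_per_op <= 0 or block_time_s <= 0:
        raise ValueError("gas_per_op and block_time_s must be positive")
    ops_per_block = block_gas_limit // gas_per_op  # whole operations that fit in one block
    return ops_per_block / block_time_s


# Hypothetical figures: a 30M gas block produced every 2 seconds.
simple_fill = max_ops_per_second(30_000_000, 150_000, 2.0)    # cheap order fill
pricing_heavy = max_ops_per_second(30_000_000, 600_000, 2.0)  # fill with on-chain option pricing

print(simple_fill, pricing_heavy)
```

Under these assumed numbers, quadrupling the gas cost of a single operation cuts the ceiling on operations per second to a quarter, which is why heavier pricing formulas compete directly with order volume.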

The system experiences stress when market participants compete for limited block space during rapid price movements. This forces a trade-off where the protocol must either prioritize high-fee transactions, potentially leading to exclusion, or implement fair-sequencing algorithms. The physics of the protocol determines whether the liquidation engine can function during black swan events, or if it will fail due to network saturation.
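
The trade-off between fee priority and fair sequencing can be sketched with a toy transaction pool. This is a minimal illustration, assuming a hypothetical `PendingTx` record and using first-come-first-served as a stand-in for a fair-ordering policy; production fair-sequencing schemes are considerably more involved.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PendingTx:
    sender: str
    fee: int          # priority fee offered (hypothetical units)
    arrival_seq: int  # order in which the sequencer first saw the transaction


def select_by_fee(pool, capacity):
    """Fill the block with the highest-fee transactions; ties go to earlier arrivals."""
    return sorted(pool, key=lambda tx: (-tx.fee, tx.arrival_seq))[:capacity]


def select_by_arrival(pool, capacity):
    """Fill the block strictly in arrival order, ignoring fees."""
    return sorted(pool, key=lambda tx: tx.arrival_seq)[:capacity]


pool = [
    PendingTx("liquidator", fee=5, arrival_seq=0),
    PendingTx("retail", fee=1, arrival_seq=1),
    PendingTx("arb_bot", fee=50, arrival_seq=2),
    PendingTx("market_maker", fee=20, arrival_seq=3),
]

fee_block = [tx.sender for tx in select_by_fee(pool, capacity=2)]
fifo_block = [tx.sender for tx in select_by_arrival(pool, capacity=2)]
print(fee_block, fifo_block)
```

With room for only two transactions, the fee-ordered block excludes the liquidator and the retail trader even though they arrived first, while the arrival-ordered block includes them and forgoes the higher fees.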

Sometimes the most elegant solution removes the need for global state updates entirely, shifting toward localized execution environments where network propagation delay, ultimately bounded by the speed of light, is the main remaining constraint.

The structural integrity of a derivative protocol depends on the ability to maintain deterministic state updates even under maximum network load.

Approach

Current strategies for achieving higher performance involve isolating the trading logic from the broader network state. By utilizing zero-knowledge proofs, developers can compress large batches of trades into a single, verifiable statement. This method significantly increases the number of positions that can be opened or closed without burdening the base layer.
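
The idea of collapsing a batch into one small statement can be illustrated with a Merkle root over a list of trades. To be clear, this is not a zero-knowledge proof: it is only a hash commitment, shown here as a simplified stand-in for the fixed-size artifact a rollup might post to the base layer. The trade encoding and the function name `merkle_root` are assumptions made for the example.

```python
import hashlib


def _h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def merkle_root(leaves: list[bytes]) -> bytes:
    """Commit to an ordered batch of items with a single 32-byte hash."""
    if not leaves:
        raise ValueError("cannot commit to an empty batch")
    # Distinct prefixes keep leaf hashes and internal-node hashes from colliding.
    level = [_h(b"\x00" + leaf) for leaf in leaves]
    while len(level) > 1:
        if len(level) % 2 == 1:
            level.append(level[-1])  # duplicate the last node on odd-sized levels
        level = [_h(b"\x01" + level[i] + level[i + 1]) for i in range(0, len(level), 2)]
    return level[0]


# Hypothetical batch of 1,000 trades encoded as byte strings.
trades = [f"trader{i}:BUY:1:ETH-PERP".encode() for i in range(1000)]
root = merkle_root(trades)
print(len(trades), "trades ->", len(root), "byte commitment")
```

The commitment stays 32 bytes no matter how many trades are in the batch, and changing any single trade changes the root. A validity proof adds what the commitment alone lacks: evidence that the batch was executed correctly.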

- Parallel Execution allows multiple unrelated order updates to occur simultaneously across different shards.

- Optimistic Rollups assume validity for faster throughput, utilizing fraud proofs to revert invalid states.

- Custom Virtual Machines provide a tailored environment that strips away unnecessary computational overhead.
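
Parallel execution depends on knowing which updates are actually unrelated. The sketch below is one simple way to group updates into conflict-free batches, assuming each update declares the state keys it touches up front; the function name and the key format are hypothetical, and real schedulers vary widely.

```python
def schedule_parallel_batches(updates):
    """Group updates into sequential batches whose members touch disjoint state keys.

    `updates` is an ordered list of (update_id, set_of_state_keys). Updates that
    share a key keep their original relative order across batches.
    """
    batches = []  # batches[i] is a list of update ids that can run concurrently
    touched = []  # touched[i] is the union of state keys used by batches[i]
    for update_id, keys in updates:
        # The update must run after the latest batch it conflicts with.
        slot = 0
        for i in range(len(batches) - 1, -1, -1):
            if touched[i] & keys:
                slot = i + 1
                break
        if slot == len(batches):
            batches.append([])
            touched.append(set())
        batches[slot].append(update_id)
        touched[slot] |= keys
    return batches


# Hypothetical order updates, each tagged with the account and market it modifies.
updates = [
    ("u1", {"alice", "ETH-PERP"}),
    ("u2", {"bob", "BTC-PERP"}),
    ("u3", {"alice", "BTC-PERP"}),
    ("u4", {"carol", "SOL-PERP"}),
]
batches = schedule_parallel_batches(updates)
print(batches)
```

Here `u3` shares state with both `u1` and `u2`, so it waits for the second batch, while the other three updates can run side by side. The more updates contend for the same market or account, the less parallelism is available.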

Market makers and liquidity providers now prioritize protocols that demonstrate a clear pathway to high-speed settlement. The technical architecture must also account for the MEV resistance required to keep the order flow equitable. When the infrastructure is too slow, the market effectively becomes a private club for those who can afford to bribe validators for priority, which defeats the purpose of open financial access.

Evolution

These systems progressed from basic on-chain swaps to highly complex, multi-layered derivative platforms. Early designs were limited by the rigid nature of initial blockchain architectures. As demand for cross-margining and sophisticated risk management grew, the focus turned toward modularity, where the execution layer, settlement layer, and data availability layer are decoupled.

Evolution in this space is characterized by the transition from monolithic, general-purpose chains to purpose-built, high-performance application-specific environments.

This architectural shift enables a level of precision that was previously impossible. We are witnessing the rise of decentralized exchanges that perform with the speed of centralized counterparts while retaining the transparency of on-chain accounting. The industry has learned that security cannot be sacrificed for speed, leading to a focus on cryptographic verification methods that do not rely on centralized trust.

This is a quiet revolution in the history of capital markets, where the plumbing of finance is being rewritten in code that is accessible to anyone with a connection.

Horizon

Future developments will center on the integration of asynchronous communication between different rollups and the base layer. As we move toward a more fragmented yet interconnected landscape, the ability to maintain a consistent state across these boundaries will become the defining feature of high-throughput protocols. The next generation of systems will likely incorporate hardware-level optimizations, such as specialized zero-knowledge hardware acceleration, to push the limits of what is computationally feasible.

| Trend | Implication |
| --- | --- |
| Hardware Acceleration | Sub-millisecond proof generation |
| Cross-Rollup Interoperability | Unified liquidity across ecosystems |
| Automated Risk Engines | Dynamic liquidation threshold adjustments |

The ultimate goal is a global, unified market that operates without the friction of traditional clearing houses. By optimizing for speed and efficiency, we are creating a system that is not only faster but fundamentally more resilient to the systemic risks that plagued legacy financial institutions. How will the interaction between automated, high-speed liquidation engines and human-driven market sentiment shape the volatility regimes of the next decade?