Essence

Systemic Risk Quantification is the mathematical discipline of measuring the potential for cascading failures across decentralized financial networks. It serves as a diagnostic layer, identifying how localized liquidations or protocol insolvencies transmit shocks through interconnected liquidity pools.

Systemic risk quantification measures the probability and magnitude of chain reactions within decentralized financial systems triggered by localized asset volatility.

At its base, this field evaluates the structural fragility inherent in cross-collateralized lending and derivative positions. Unlike traditional finance, where centralized clearinghouses act as shock absorbers, decentralized systems rely on algorithmic liquidations. When these mechanisms fail to execute during high-volatility events, the resulting bad debt feeds back into the system and can threaten the stability of the entire network.

Origin

The need for Systemic Risk Quantification arose from the limitations of early decentralized lending protocols. Initial designs prioritized capital efficiency over failure isolation, creating architectures in which a single asset’s price collapse could trigger widespread insolvency across otherwise unrelated markets. Historical market events, specifically the rapid unwinding of leveraged positions during liquidity crunches, revealed that standard value-at-risk models underestimated the speed of contagion in programmable money.

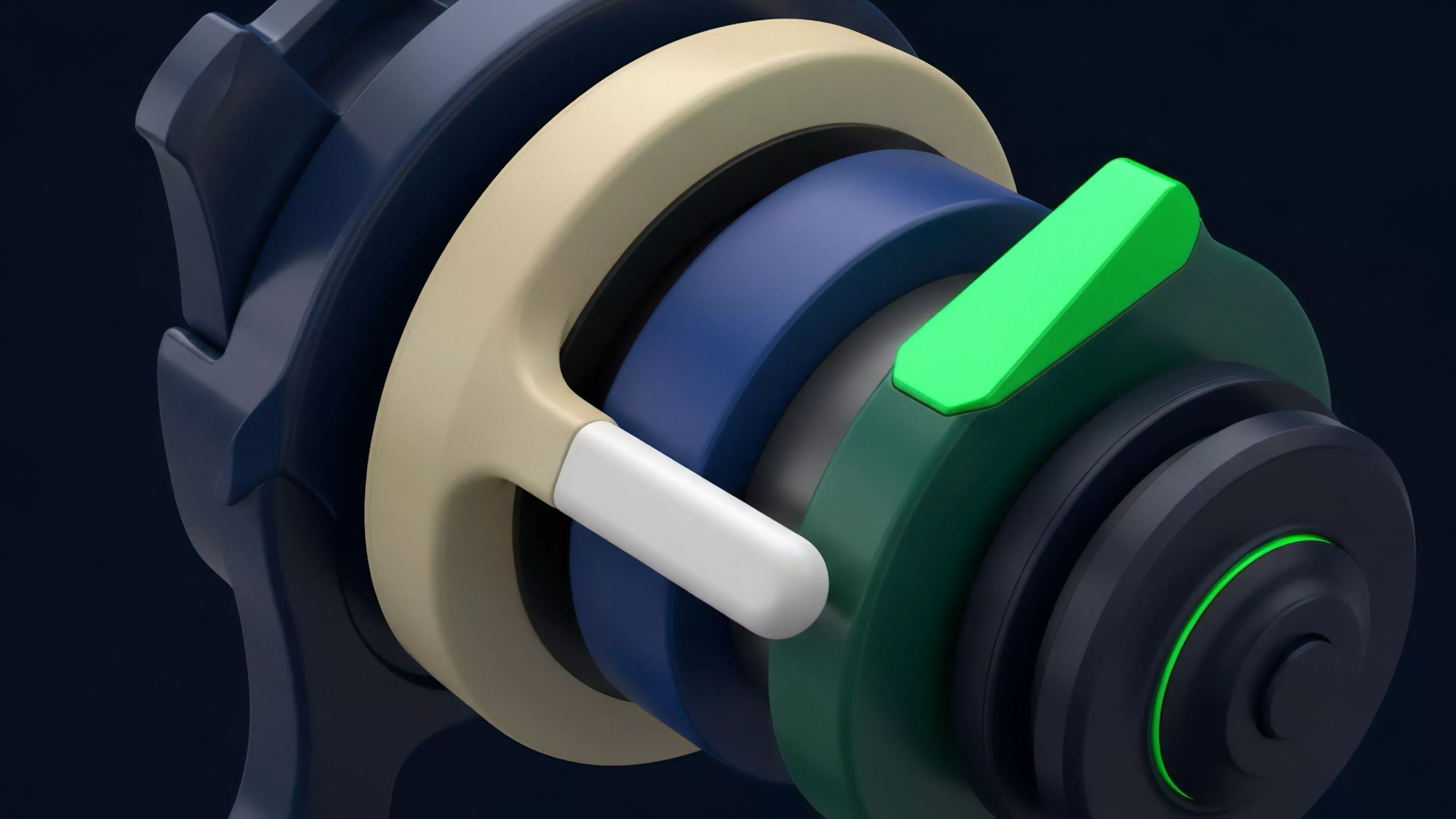

Developers recognized that smart contract composability, while beneficial for innovation, creates dense dependency networks that propagate risk instantaneously.

- Protocol Interconnectivity refers to the practice of using one protocol’s receipt tokens as collateral in another, amplifying leverage across the ecosystem.

- Liquidation Cascades occur when automated selling triggers further price drops, leading to subsequent waves of liquidations in a self-reinforcing cycle.

- Oracle Failure represents the vulnerability where inaccurate price feeds lead to incorrect valuation of collateral, destabilizing the entire system.
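The self-reinforcing cycle described under Liquidation Cascades can be illustrated with a toy simulation. This is a minimal sketch under stated assumptions, not a model of any real protocol: the `Position` class, the linear price-impact rule, and the parameters `liq_threshold` and `impact_per_unit` are all hypothetical choices made for illustration.

```python
from dataclasses import dataclass


@dataclass
class Position:
    collateral: float        # units of the collateral asset
    debt: float              # debt, denominated in the quote currency
    liquidated: bool = False


def simulate_cascade(positions, price, liq_threshold=0.8,
                     impact_per_unit=0.0005, max_rounds=100):
    """Liquidate unsafe positions round by round, applying price impact.

    Assumption: every unit of collateral sold lowers the price by a fixed
    fraction (impact_per_unit). Real market impact is not linear.
    Returns (final_price, rounds, bad_debt).
    """
    bad_debt = 0.0
    rounds = 0
    for _ in range(max_rounds):
        # A position is unsafe when its debt exceeds the allowed share
        # of its collateral value at the current price.
        unsafe = [p for p in positions
                  if not p.liquidated
                  and p.debt > liq_threshold * p.collateral * price]
        if not unsafe:
            break
        rounds += 1
        sold = sum(p.collateral for p in unsafe)
        # Forced selling pushes the price down, which may make
        # further positions unsafe in the next round.
        price = max(price * (1.0 - impact_per_unit * sold), 0.0)
        for p in unsafe:
            p.liquidated = True
            # Shortfall when sale proceeds do not cover the debt.
            bad_debt += max(p.debt - p.collateral * price, 0.0)
    return price, rounds, bad_debt


if __name__ == "__main__":
    book = [Position(100, 7000), Position(100, 7500), Position(100, 7800)]
    # Start from a price that has already been shocked from 100 to 97.
    final_price, rounds, bad_debt = simulate_cascade(book, price=97.0)
    print(f"final price {final_price:.2f} after {rounds} liquidation rounds, "
          f"bad debt {bad_debt:.2f}")
```

In this sketch the initial shock makes only one position unsafe. The price impact of selling its collateral is what pushes a second position below its threshold, which is the feedback the bullet describes.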

Theory

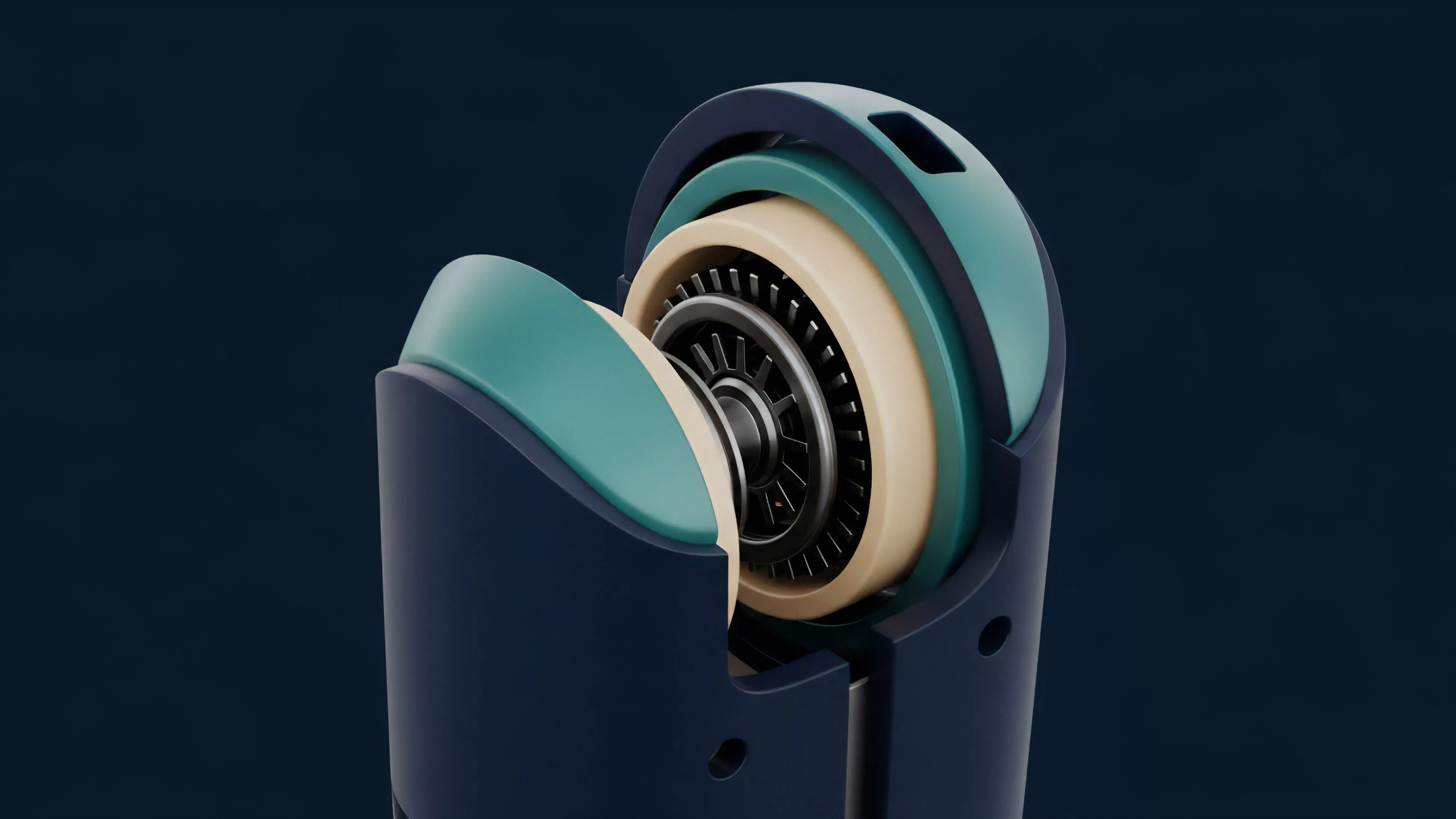

The structural integrity of decentralized derivatives relies on the precise calibration of liquidation thresholds and margin requirements. Quantifying risk requires modeling the interaction between price volatility and the latency of on-chain execution.

Quantitative risk models for crypto derivatives must account for the non-linear relationship between margin depletion and network congestion.

Greeks and Sensitivity Analysis

The application of Delta, Gamma, and Vega in a decentralized context demands adjustment for the absence of a central counterparty. Risk architects must analyze how these sensitivities shift when liquidity providers withdraw funds during market stress.

| Metric | Systemic Significance |
|---|---|
| Delta | Measures immediate exposure to underlying asset price movements. |
| Gamma | Quantifies the rate of change in delta as the underlying price shifts. |
| Vega | Assesses vulnerability to changes in implied volatility across the derivative curve. |

The mathematical modeling of these sensitivities allows for the simulation of tail risk events. By stress-testing protocols against extreme price movements, architects determine the viability of their collateralization ratios.
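As an illustration of how these sensitivities might be computed and stress-tested, the sketch below uses the Black-Scholes formulas for a European call on a non-dividend-paying asset. The choice of Black-Scholes is an assumption made here for simplicity; the text does not prescribe a pricing model, and the function names `bs_call_greeks` and `stress_greeks` are hypothetical.

```python
import math


def _norm_pdf(x):
    return math.exp(-0.5 * x * x) / math.sqrt(2.0 * math.pi)


def _norm_cdf(x):
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def bs_call_greeks(spot, strike, vol, t, r=0.0):
    """Delta, gamma and vega of a European call under Black-Scholes.

    vol is annualized volatility, t is time to expiry in years,
    r is the continuously compounded risk-free rate.
    """
    sqrt_t = math.sqrt(t)
    d1 = (math.log(spot / strike) + (r + 0.5 * vol ** 2) * t) / (vol * sqrt_t)
    delta = _norm_cdf(d1)                      # sensitivity to spot
    gamma = _norm_pdf(d1) / (spot * vol * sqrt_t)  # rate of change of delta
    vega = spot * _norm_pdf(d1) * sqrt_t       # sensitivity to volatility
    return delta, gamma, vega


def stress_greeks(spot, strike, vol, t, spot_shocks, r=0.0):
    """Recompute the Greeks after each relative spot shock (e.g. -0.3 = -30%)."""
    return {shock: bs_call_greeks(spot * (1.0 + shock), strike, vol, t, r)
            for shock in spot_shocks}


if __name__ == "__main__":
    for shock, (delta, gamma, vega) in stress_greeks(
            100.0, 100.0, 0.8, 0.25, [-0.5, -0.3, 0.0, 0.3]).items():
        print(f"shock {shock:+.0%}: delta={delta:.4f} "
              f"gamma={gamma:.5f} vega={vega:.3f}")
```

Repricing the sensitivities under a grid of shocks gives a rough view of how exposure shifts in tail scenarios. It does not capture the liquidity withdrawal effects mentioned above, which would need a separate model.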

Approach

Current practice involves the integration of real-time on-chain analytics with probabilistic simulation models.

This approach prioritizes the monitoring of large, highly leveraged accounts that, if liquidated, would overwhelm the market’s depth.

Order Flow Analysis

Market participants evaluate order flow toxicity to determine the probability of informed trading impacting protocol stability. High toxicity indicates that liquidity providers are likely to face adverse selection, leading them to increase spreads or withdraw capital, which exacerbates systemic fragility.

- Liquidity Depth analysis ensures that collateral can be sold during crises without causing excessive price slippage.

- Concentration Risk monitoring identifies protocols heavily reliant on a small number of large depositors or specific asset classes.

- Margin Sufficiency testing verifies that protocols maintain enough excess capital to cover potential bad debt during extreme volatility.
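Two of the checks in this list reduce to short calculations. The sketch below estimates slippage for a collateral sale and measures depositor concentration. It assumes a fee-free constant-product pool and uses the Herfindahl-Hirschman index as the concentration measure; both are illustrative choices that the text does not specify, and the function names are hypothetical.

```python
def constant_product_slippage(reserve_collateral, reserve_quote, sell_amount):
    """Fractional slippage when selling collateral into an x*y=k pool.

    Assumption: no trading fee. Slippage is measured against the
    pre-trade spot price reserve_quote / reserve_collateral.
    """
    if min(reserve_collateral, reserve_quote, sell_amount) <= 0:
        raise ValueError("reserves and sell amount must be positive")
    spot = reserve_quote / reserve_collateral
    received = reserve_quote * sell_amount / (reserve_collateral + sell_amount)
    return 1.0 - received / (sell_amount * spot)


def herfindahl_index(deposits):
    """Sum of squared deposit shares: 1/n for equal deposits, 1.0 for one depositor."""
    total = sum(deposits)
    if total <= 0:
        raise ValueError("total deposits must be positive")
    return sum((d / total) ** 2 for d in deposits)


if __name__ == "__main__":
    # Selling 100 units into a pool holding 1,000 units of collateral.
    print(f"slippage: {constant_product_slippage(1_000, 2_000_000, 100):.2%}")
    # Three depositors holding 50%, 25% and 25% of a protocol's deposits.
    print(f"concentration: {herfindahl_index([50, 25, 25]):.3f}")
```

In the constant-product case the slippage simplifies to `sell_amount / (reserve_collateral + sell_amount)`, so a liquidation that is large relative to pool depth loses a correspondingly large share of its value.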

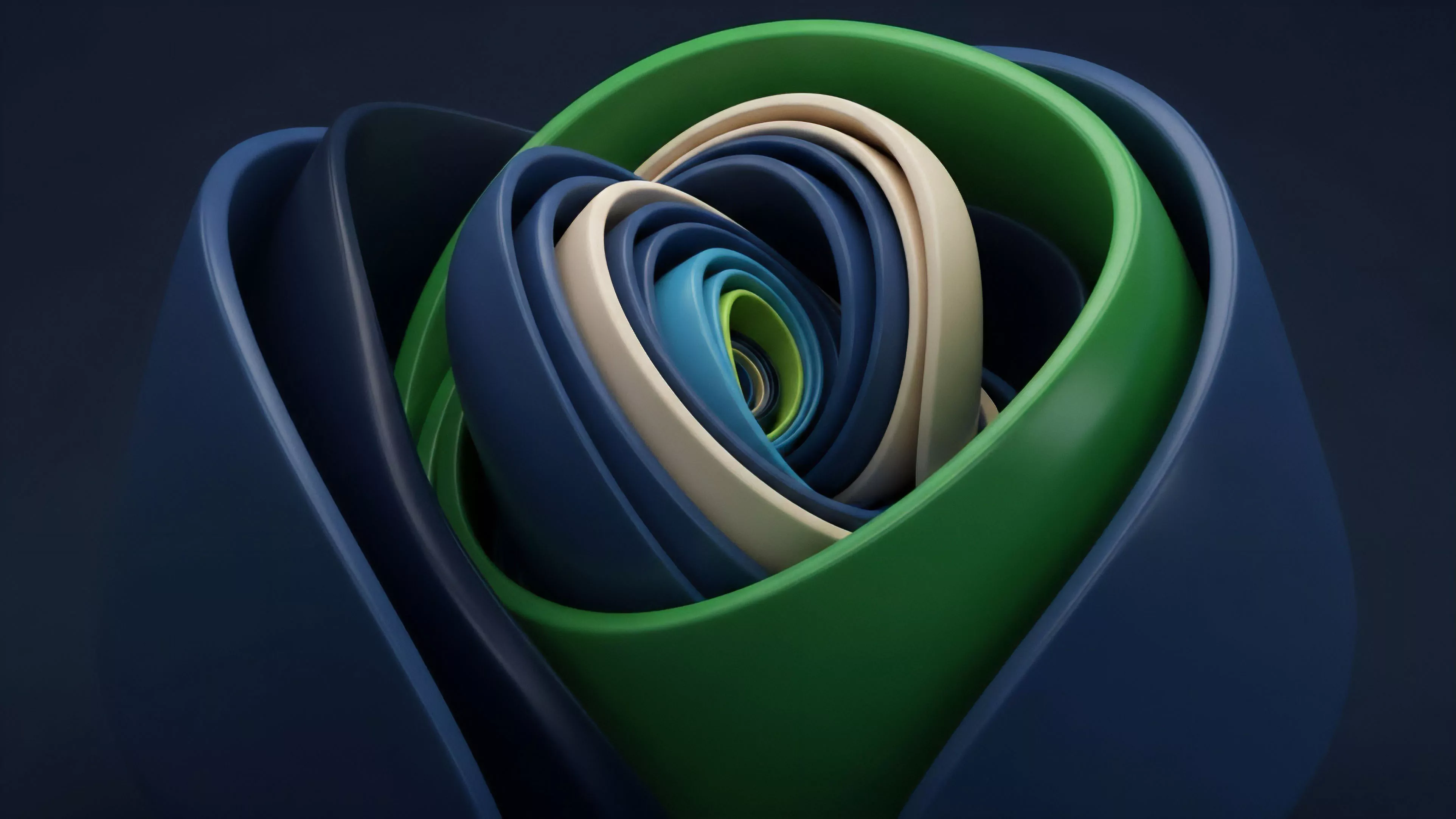

The shift toward cross-margin risk engines marks a significant evolution in how platforms handle exposure. By unifying collateral across multiple positions, these engines attempt to provide a more holistic view of a user’s solvency, though this also increases the potential for a single account to trigger broader system failures.
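The trade-off can be made concrete with a small comparison of isolated and cross-margin checks. This is a simplified sketch: the `Leg` class and both functions are hypothetical, and real engines apply haircuts, netting rules, and partial liquidations that are ignored here.

```python
from dataclasses import dataclass


@dataclass
class Leg:
    margin: float       # collateral posted for this position
    pnl: float          # unrealized profit or loss
    maintenance: float  # minimum equity required to keep the position open


def isolated_liquidations(legs):
    """Indices of positions that fail their own maintenance requirement."""
    return [i for i, leg in enumerate(legs)
            if leg.margin + leg.pnl < leg.maintenance]


def cross_margin_solvent(legs):
    """True when pooled equity covers the summed maintenance requirements."""
    equity = sum(leg.margin + leg.pnl for leg in legs)
    required = sum(leg.maintenance for leg in legs)
    return equity >= required


if __name__ == "__main__":
    hedged = [Leg(100, -80, 50), Leg(100, 60, 50)]
    blown = [Leg(100, -250, 50), Leg(100, 20, 50)]
    for name, legs in (("hedged", hedged), ("blown", blown)):
        print(name, "isolated failures:", isolated_liquidations(legs),
              "cross-margin solvent:", cross_margin_solvent(legs))
```

In the first account, gains on one leg offset losses on the other, so pooling collateral avoids a liquidation that isolated margin would force. In the second, one large loss exhausts the shared collateral and the whole account fails, including the profitable leg.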

Evolution

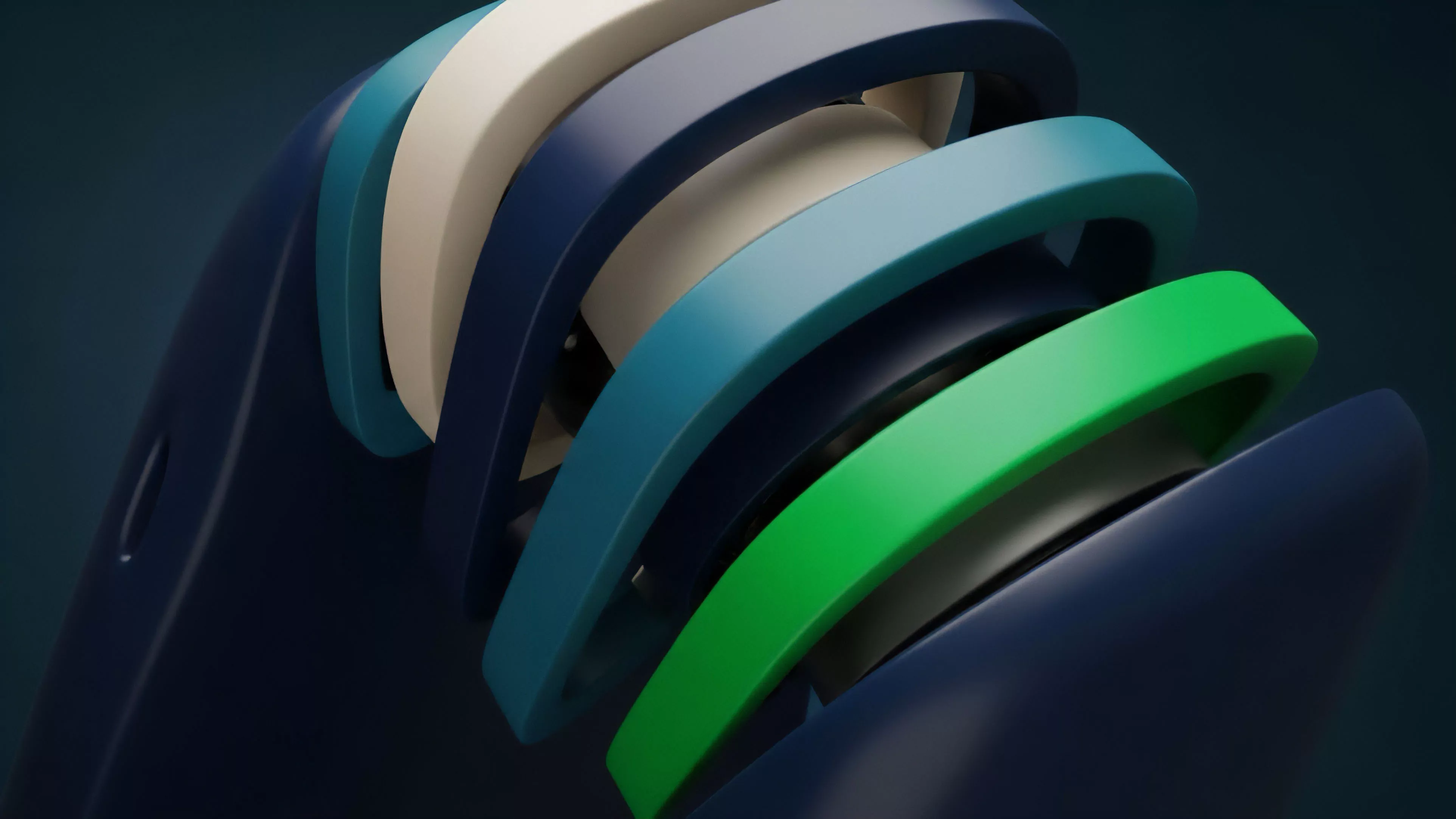

The discipline has evolved from simple over-collateralization requirements to sophisticated, automated risk-mitigation frameworks.

Early platforms operated as silos, whereas modern systems function as integrated, multi-layered environments where risk is managed through dynamic interest rate adjustments and automated circuit breakers. The introduction of algorithmic market makers and decentralized clearing layers has transformed the risk landscape. These systems now incorporate historical volatility data and real-time network throughput metrics to adjust collateral requirements dynamically, reflecting the reality that systemic risk is not a constant but a function of current market state and participant behavior.

Dynamic risk adjustment mechanisms allow protocols to throttle leverage during periods of high market stress to preserve system solvency.
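One simple way to express such a mechanism is to scale the margin requirement with recent realized volatility. The sketch below is an assumption-laden illustration rather than any protocol's actual rule: the linear scaling, the `floor` and `cap` bounds, and the function names are all hypothetical.

```python
import math


def realized_volatility(prices):
    """Sample standard deviation of log returns over the given price series."""
    returns = [math.log(b / a) for a, b in zip(prices, prices[1:])]
    if len(returns) < 2:
        raise ValueError("need at least three prices")
    mean = sum(returns) / len(returns)
    variance = sum((r - mean) ** 2 for r in returns) / (len(returns) - 1)
    return math.sqrt(variance)


def scaled_margin(base_margin, current_vol, reference_vol, floor=0.05, cap=1.0):
    """Scale the margin requirement linearly with volatility, within bounds.

    base_margin applies when current_vol equals reference_vol.
    """
    if reference_vol <= 0:
        raise ValueError("reference_vol must be positive")
    raw = base_margin * current_vol / reference_vol
    return min(max(raw, floor), cap)


def max_leverage(margin):
    """Maximum leverage implied by a fractional margin requirement."""
    return 1.0 / margin


if __name__ == "__main__":
    calm = [100, 101, 100, 101, 100, 101]
    stressed = [100, 110, 95, 108, 90, 105]
    reference = realized_volatility(calm)
    for name, series in (("calm", calm), ("stressed", stressed)):
        margin = scaled_margin(0.10, realized_volatility(series), reference)
        print(f"{name}: margin {margin:.1%}, max leverage {max_leverage(margin):.1f}x")
```

Because maximum leverage is the reciprocal of the margin requirement, raising margin as volatility rises throttles leverage automatically. The cap and floor keep the requirement within a range the protocol can enforce.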

| Era | Primary Risk Management Strategy |
|---|---|
| Foundational | Static over-collateralization ratios. |
| Intermediate | Multi-asset collateral pools and interest rate adjustments. |
| Advanced | Dynamic margin engines and real-time contagion monitoring. |

Horizon

The future of Systemic Risk Quantification lies in the development of decentralized, cross-protocol risk oracles. Such oracles would provide standardized, verifiable data on systemic exposure, allowing for the creation of insurance layers that operate autonomously across the entire decentralized finance stack.

Conjecture on Contagion

The hypothesis is that future systemic crises will be mitigated by the widespread adoption of automated volatility-based margin scaling. Such a framework would adjust required collateral based not on static thresholds but on the real-time, cross-protocol correlation of assets. By mathematically linking collateral requirements to the interconnectedness of the underlying assets, protocols could prevent systemic leverage from accumulating to a critical threshold.

The next generation of risk instruments will likely be programmable insurance tokens that automatically trigger payouts when specific systemic risk indicators are breached, effectively decentralizing the function of a lender of last resort.

One paradox remains open: how can systemic risk be quantified in an environment where the very act of measurement alters the behavior of the market participants being observed?