Essence

Static Analysis Tools function as the automated sentinels of the decentralized financial landscape. These systems inspect smart contract source code and bytecode without executing the underlying logic. By building and traversing the abstract syntax tree or control flow graph of a protocol, these tools identify logical inconsistencies, potential reentrancy vectors, and integer overflows before capital ever interacts with the contract.

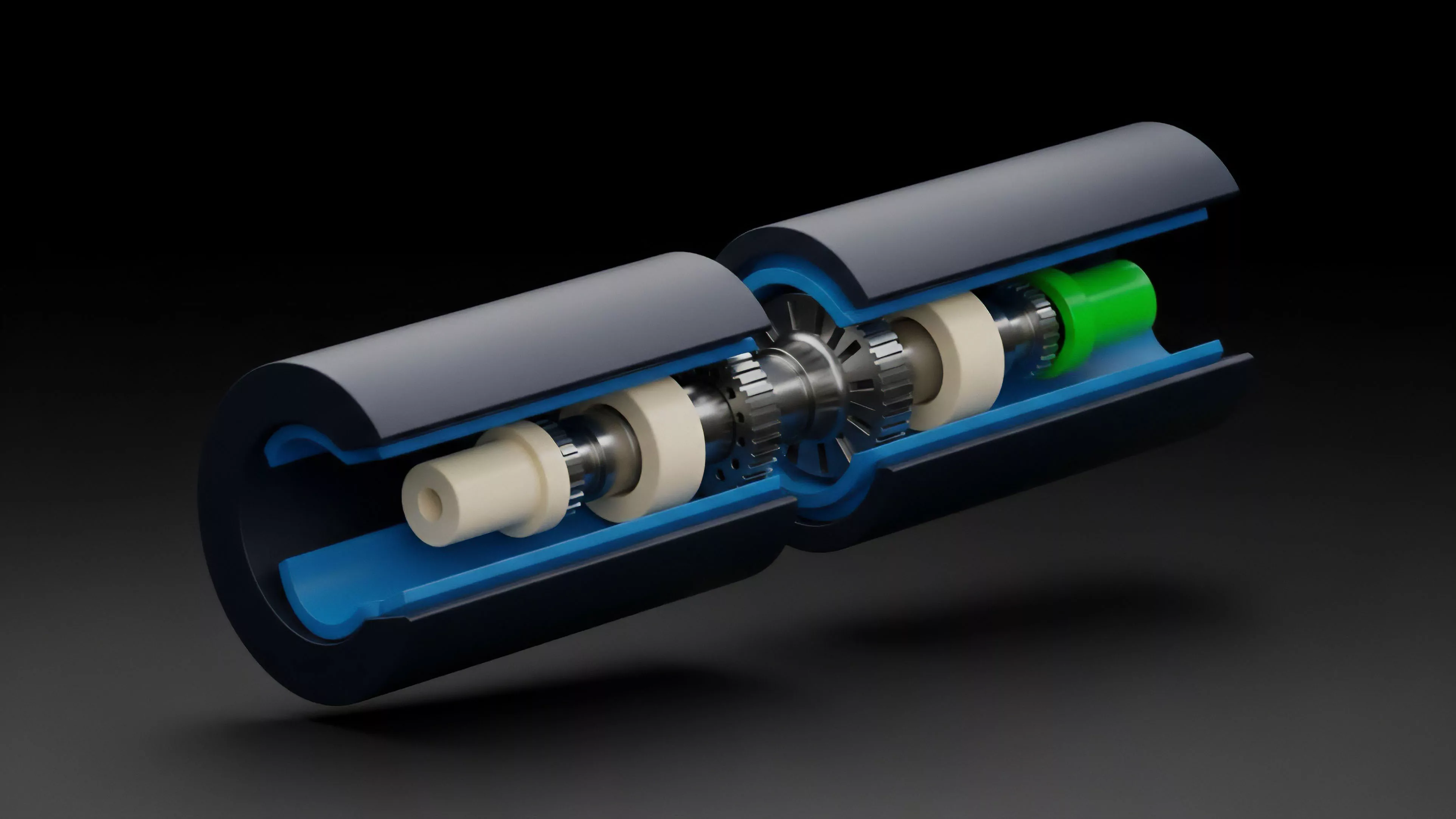

Static analysis provides a deterministic audit of protocol integrity by examining code structure without requiring runtime execution.

The primary value proposition lies in the reduction of systemic risk. In an environment where code constitutes legal and financial authority, these tools serve as the first line of defense against the permanent loss of assets. They give developers the capacity to detect vulnerabilities that manual review can miss, effectively mapping the attack surface of a derivative engine or automated market maker.

Origin

The lineage of Static Analysis Tools traces back to formal verification methods developed for high-assurance software in aerospace and defense.

As decentralized finance emerged, the necessity for robust security migrated from centralized server environments to public, immutable ledgers. The transition occurred when developers recognized that traditional debugging methods failed to address the specific adversarial nature of programmable money.

- Formal Verification provides the mathematical proofs required to guarantee specific properties within a contract.

- Symbolic Execution explores multiple execution paths by treating inputs as symbolic values rather than concrete ones, which exposes hidden edge cases.

- Pattern Matching identifies known vulnerability signatures such as unchecked external calls or insecure access control modifiers.
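Of the three techniques listed above, pattern matching is the simplest to illustrate. The following is a minimal sketch, not how any particular tool works: production analyzers match against an AST or intermediate representation rather than raw text. The rule names, the `scan` function, and the sample contract are all hypothetical and exist only for this example.

```python
import re

# Hypothetical signatures, matched line by line against Solidity source text.
TX_ORIGIN = re.compile(r"\btx\.origin\b")
LOW_LEVEL_CALL = re.compile(r"\.call\s*[({]")
# Crude heuristic: the call result counts as "checked" if the text before the
# call assigns it or wraps it in require(...) / if (...).
RESULT_USED = re.compile(r"=|require\s*\(|if\s*\(")


def scan(source):
    """Return (line_number, rule_name) pairs for each signature hit."""
    findings = []
    for lineno, line in enumerate(source.splitlines(), start=1):
        code = line.split("//", 1)[0]  # ignore trailing line comments
        if TX_ORIGIN.search(code):
            findings.append((lineno, "tx-origin-auth"))
        call = LOW_LEVEL_CALL.search(code)
        if call and not RESULT_USED.search(code[:call.start()]):
            findings.append((lineno, "unchecked-low-level-call"))
    return findings


# Hypothetical contract fragment used only to exercise the scanner.
SAMPLE = """\
contract Vault {
    function withdraw(uint amount) external {
        require(tx.origin == owner);
        msg.sender.call{value: amount}("");
    }
    function safeSend(address to, uint amount) internal {
        (bool ok, ) = to.call{value: amount}("");
        require(ok);
    }
}
"""

if __name__ == "__main__":
    for lineno, rule in scan(SAMPLE):
        print(f"line {lineno}: {rule}")
```

The low computational cost of this approach comes from the fact that each line is inspected once and independently. The same property is its weakness: a text-level heuristic like this one cannot tell whether a flagged call is actually exploitable.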

This evolution represents a shift from reactive patching to proactive architectural validation. Early iterations focused on identifying simple buffer overflows, but contemporary systems now model complex state transitions to ensure that protocol invariants remain intact under varying market conditions.

Theory

The mechanics of Static Analysis Tools rely on the decomposition of smart contracts into intermediate representations. By transforming complex Solidity or Vyper code into a simplified mathematical structure, these tools can apply graph theory to analyze the reachability of specific states.

This allows for the identification of dangerous loops, dead code, and improper privilege escalation.
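The reachability idea can be sketched on a toy control flow graph. The graph below, its block names, and the helper functions are assumptions made for illustration; a real tool would derive the graph from compiler output rather than a hand-written dictionary.

```python
from collections import deque

# Hypothetical control flow graph: basic block -> list of successor blocks.
CFG = {
    "entry": ["check_owner"],
    "check_owner": ["revert", "transfer"],
    "transfer": ["exit"],
    "revert": [],
    "exit": [],
    "legacy_refund": ["exit"],  # no edge leads here, so it is dead code
}


def reachable(cfg, start):
    """Breadth-first search: every block reachable from start."""
    seen = {start}
    queue = deque([start])
    while queue:
        block = queue.popleft()
        for succ in cfg.get(block, []):
            if succ not in seen:
                seen.add(succ)
                queue.append(succ)
    return seen


def dead_blocks(cfg, start="entry"):
    """Blocks that exist in the graph but can never execute."""
    return sorted(set(cfg) - reachable(cfg, start))


def has_cycle(cfg, start="entry"):
    """Depth-first search for a back edge, which indicates a loop."""
    in_progress, done = set(), set()

    def visit(block):
        in_progress.add(block)
        for succ in cfg.get(block, []):
            if succ in in_progress:
                return True  # back edge to a block still on the DFS stack
            if succ not in done and visit(succ):
                return True
        in_progress.discard(block)
        done.add(block)
        return False

    return visit(start)
```

Calling `dead_blocks(CFG)` reports `legacy_refund`, and `has_cycle(CFG)` is false because this graph has no back edge. Detecting that a loop exists is only the first step; deciding whether it is dangerous (for example, unbounded in gas) requires further analysis that this sketch does not attempt.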

Automated code analysis leverages graph theory and state transition modeling to identify structural vulnerabilities before deployment.

The efficacy of these systems depends on the quality of their heuristics and the depth of their path analysis. Adversarial agents continuously probe protocol logic for deviations; therefore, the analysis engine should ideally account for every feasible input combination. Because the number of execution paths grows rapidly with each additional branch, exhaustive coverage is rarely practical, which creates a computational trade-off between the depth of the search and the time required to generate results.

| Methodology | Primary Focus | Computational Cost |
| --- | --- | --- |
| Static Pattern Matching | Known exploit signatures | Low |
| Symbolic Execution | Path-specific logic errors | High |
| Formal Verification | Mathematical proof of invariants | Very High |
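The cost gap between these methodologies follows from how quickly paths multiply. As a rough illustration, the sketch below builds a synthetic graph of `n` consecutive if/else branches and counts the entry-to-exit paths a path-sensitive analysis would need to explore under a depth limit. The `diamond_chain` and `count_paths` helpers and the block naming scheme are hypothetical.

```python
def diamond_chain(n):
    """Build a control flow graph with n consecutive if/else branches."""
    cfg = {}
    for i in range(n):
        cfg[f"b{i}"] = [f"b{i}_then", f"b{i}_else"]
        cfg[f"b{i}_then"] = [f"b{i + 1}"]
        cfg[f"b{i}_else"] = [f"b{i + 1}"]
    cfg[f"b{n}"] = []  # exit block
    return cfg


def count_paths(cfg, block, exit_block, depth_limit):
    """Count paths from block to exit_block that use at most depth_limit edges."""
    if block == exit_block:
        return 1
    if depth_limit == 0:
        return 0  # search budget exhausted before reaching the exit
    return sum(
        count_paths(cfg, succ, exit_block, depth_limit - 1)
        for succ in cfg.get(block, [])
    )


if __name__ == "__main__":
    for n in (1, 5, 10):
        paths = count_paths(diamond_chain(n), "b0", f"b{n}", depth_limit=2 * n)
        print(f"{n} branches -> {paths} paths")
```

Each extra branch doubles the path count, so ten branches already yield 1024 paths. Lowering `depth_limit` bounds the running time but means some paths are never examined, which is the trade-off described above.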

Approach

Current implementation strategies integrate Static Analysis Tools directly into the continuous integration pipeline. Developers treat these scans as a mandatory gate for every pull request, ensuring that no code reaches the mainnet without passing a battery of automated security checks. This process creates a continuous feedback loop between the engineering team and the security architecture.

- Continuous Integration automates the scanning process upon every code commit.

- Custom Rule Sets allow teams to define protocol-specific invariants that must never be violated.

- Cross-Contract Analysis evaluates the security implications of interactions between different liquidity pools or vaults.
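A pull-request gate of this kind can be sketched as a small function that turns a list of findings into a pass or fail exit code. The finding format (`rule`, `severity`, `location` keys), the severity scale, and the default threshold are assumptions for this example and do not correspond to the output of any specific analyzer.

```python
# Hypothetical severity scale, ordered from least to most serious.
SEVERITY_RANK = {"informational": 0, "low": 1, "medium": 2, "high": 3}


def gate(findings, fail_at="medium"):
    """Return (exit_code, blocking_findings) for a CI job.

    exit_code is 1 when any finding meets or exceeds the fail_at severity,
    which a CI runner would treat as a failed check; otherwise it is 0.
    """
    threshold = SEVERITY_RANK[fail_at]
    blocking = [
        finding for finding in findings
        if SEVERITY_RANK[finding["severity"]] >= threshold
    ]
    return (1 if blocking else 0), blocking


# Hypothetical findings, as an analyzer might report them for one pull request.
EXAMPLE_FINDINGS = [
    {"rule": "unused-variable", "severity": "informational", "location": "Vault.sol:12"},
    {"rule": "reentrancy", "severity": "high", "location": "Vault.sol:48"},
]

if __name__ == "__main__":
    code, blocking = gate(EXAMPLE_FINDINGS)
    for finding in blocking:
        print(f"{finding['severity'].upper()}: {finding['rule']} at {finding['location']}")
    print("gate result:", "fail" if code else "pass")
```

In a pipeline, the returned code would typically be passed to `sys.exit` so that the job fails and the merge is blocked. Keeping the threshold as a parameter lets a team start permissive and tighten the gate as existing findings are resolved.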

My assessment of current market standards suggests that reliance on these tools is insufficient if the underlying architectural design lacks fundamental security. The tool detects the error, but the engineer must design the resilience. The most effective strategies utilize these tools to enforce strict coding standards that minimize the probability of human error during the development phase.

Evolution

The trajectory of these tools is moving toward machine-learned anomaly detection.

Initial versions relied on human-defined rules, whereas newer systems can adapt to new exploit vectors by analyzing patterns from historical contract failures. This transition reflects the maturation of decentralized infrastructure from experimental scripts to hardened financial systems.

The shift toward automated anomaly detection represents the next stage in hardening protocols against sophisticated adversarial actors.

Market participants now demand higher transparency regarding the security of derivatives. This demand forces projects to publish audit reports generated by advanced static analysis alongside their documentation. This transparency is no longer optional; it is a fundamental requirement for attracting institutional liquidity and maintaining market trust.

Horizon

Future developments will focus on real-time monitoring and adaptive threat mitigation.

As protocols become increasingly interconnected, the scope of analysis must expand to encompass the entire liquidity stack. We are moving toward a future where automated tools provide instantaneous risk scores based on real-time code changes and market-driven stress tests.

| Development Phase | Primary Objective |
| --- | --- |
| Legacy | Basic syntax and pattern check |
| Current | Deep symbolic execution and invariants |
| Future | Autonomous real-time security monitoring |

The critical challenge remains the gap between theoretical code correctness and operational risk. My conjecture is that the next generation of these tools will integrate market data with code analysis to simulate how liquidity shifts might trigger latent vulnerabilities in a protocol. This would transform static analysis into a dynamic, predictive engine for financial stability.