Essence

Secure Data Validation serves as the cryptographic verification layer that ensures external inputs to decentralized derivative protocols keep their integrity, accuracy, and resistance to tampering. Smart contracts execute deterministically on whatever data they receive. Without this layer, a manipulated price or a failed feed flows straight into settlement logic, which can render complex financial instruments such as options and perpetual swaps unreliable or non-functional.

Secure Data Validation acts as a primary defense against oracle-based exploits by requiring that financial data inputs be cryptographically signed and verified before they can trigger automated contract settlements.

At its core, this process turns raw, untrusted data from off-chain sources into verified inputs that protocols can rely on to execute margin calls, liquidations, and premium calculations. It is the bridge between fast-moving, noisy off-chain markets and the rigid, rule-bound environment of on-chain finance.
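
The following minimal Python sketch makes the sign-then-verify gate concrete. It uses an HMAC-SHA256 tag from the standard library in place of a true digital signature, so the reporter and verifier must share a secret key. Production oracle networks typically use public-key signatures (for example ECDSA or Ed25519) so that anyone can verify a report. The function names, report fields, and the call-payoff settlement hook are all hypothetical.

```python
import hashlib
import hmac
import json


def _canonical(report: dict) -> bytes:
    # Canonical JSON: signer and verifier must hash byte-identical payloads.
    return json.dumps(report, sort_keys=True, separators=(",", ":")).encode()


def sign_report(key: bytes, report: dict) -> str:
    # Stand-in for a signature: an HMAC tag over the canonical payload.
    return hmac.new(key, _canonical(report), hashlib.sha256).hexdigest()


def verify_report(key: bytes, report: dict, tag: str) -> bool:
    # Constant-time comparison avoids leaking how many characters matched.
    return hmac.compare_digest(sign_report(key, report), tag)


def settle_if_valid(key: bytes, report: dict, tag: str, strike: float) -> float:
    # Hypothetical settlement hook: compute a call payoff only from authenticated data.
    if not verify_report(key, report, tag):
        raise ValueError("rejected: report failed authentication")
    return max(report["price"] - strike, 0.0)
```

If anyone alters the price after signing, for example by changing `2150.0` to `2500.0`, verification fails and settlement raises an error instead of paying out on the altered value.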

Origin

The genesis of Secure Data Validation lies in a fundamental architectural limitation of early blockchain networks: they could not natively access off-chain data, including real-time market prices, without relying on centralized, opaque intermediaries. This dependency introduced a single point of failure that contradicted the core promise of decentralization.

Developers recognized that the reliability of a decentralized option contract is strictly bounded by the reliability of its underlying data feed.

- Oracle Problem: The inability of isolated smart contracts to access external data necessitated the creation of decentralized middleware.

- Cryptographic Proofs: Early implementations focused on multi-signature schemes to ensure that data providers remained accountable for their reported price points.

- Aggregated Feeds: The shift toward consensus-based validation models replaced individual feed reliance with decentralized networks of independent node operators.
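
The shift from single feeds to networks of operators can be sketched as a quorum rule. A value is published only when enough distinct, registered operators have reported, and the published value is the median of their reports. This is a simplified illustration. The operator registry, the `quorum` parameter, and the first-report-wins deduplication are assumptions, not a description of any specific network.

```python
from statistics import median


def aggregate_with_quorum(reports, authorized, quorum):
    """reports: iterable of (reporter_id, price). Returns the median price, or None."""
    seen = {}
    for reporter, price in reports:
        # Ignore unknown reporters and duplicate submissions (first report wins).
        if reporter in authorized and reporter not in seen:
            seen[reporter] = price
    # Refuse to publish unless enough independent operators contributed.
    if len(seen) < quorum:
        return None
    return median(seen.values())
```

With registered operators `a` to `e`, an unregistered reporter `x` submitting `500.0` and a duplicate from `a` are both ignored. The result is the median of the three valid reports, or `None` if the quorum is set higher than three.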

These initial architectures sought to mitigate the systemic risk inherent in centralized price feeds, which were frequently susceptible to manipulation during periods of high market volatility. The evolution from basic single-source feeds to robust, decentralized validation frameworks represents a transition toward hardening the financial infrastructure of the entire digital asset space.

Theory

The mathematical rigor of Secure Data Validation relies on distributed consensus algorithms to filter noise and detect adversarial behavior in data streams. When protocols calculate the payoff for an option, the precision of the underlying asset price is paramount; even a minor deviation caused by latency or manipulation can lead to erroneous liquidations or insolvency for the protocol.
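
A small worked example shows how a 1% reporting error changes outcomes. All numbers are hypothetical and chosen so that the true price sits exactly on the liquidation boundary: a position with 10 units of collateral, 16,000 of debt, a 1.25 maintenance ratio, and a call option struck at 1,990.

```python
def call_payoff(price: float, strike: float) -> float:
    # European call payoff at settlement.
    return max(price - strike, 0.0)


def is_liquidatable(collateral_units: float, price: float,
                    debt: float, maintenance_ratio: float) -> bool:
    # Liquidatable when collateral value / debt falls below the maintenance ratio.
    return collateral_units * price / debt < maintenance_ratio


TRUE_PRICE = 2000.0
REPORTED_PRICE = 1980.0  # hypothetical 1% deviation from latency or manipulation
```

At the true price of 2,000, the collateral ratio is exactly 1.25, so the position is safe, and the call pays 10. At the reported 1,980, the ratio falls to 1.2375. The position is liquidated and the call expires worthless, even though nothing in the real market justified either outcome.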

Robust validation frameworks utilize statistical outlier detection and cryptographic commitment schemes to ensure that data inputs remain within acceptable deviation thresholds before protocol execution.
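
One common way to apply outlier detection and deviation thresholds works in two steps. First, discard reports that sit too far from the round's median. Then reject the aggregate if it jumps too far from the last accepted price. The sketch below assumes strictly positive prices and uses illustrative thresholds (`max_dev` of 2%, `max_jump` of 10%) that are not recommendations. The function name is hypothetical.

```python
from statistics import median


def validate_round(prices, last_price, max_dev=0.02, max_jump=0.10):
    """Return an accepted price, or raise if the round looks anomalous.

    Assumes all prices and last_price are positive.
    """
    m = median(prices)
    # Outlier filter: drop reports more than max_dev (relative) from the median.
    kept = [p for p in prices if abs(p - m) / m <= max_dev]
    # Require a strict majority of reports to survive the filter.
    if len(kept) <= len(prices) // 2:
        raise ValueError("too many outliers; no majority agreement")
    candidate = median(kept)
    # Deviation threshold: refuse sudden jumps relative to the last accepted price.
    if abs(candidate - last_price) / last_price > max_jump:
        raise ValueError("price jump exceeds threshold; pause for review")
    return candidate
```

For reports of 100, 101, 99, 100.5, and 150, the 150 report is dropped and the accepted price is 100.25. A round where every reporter agrees on 130 after a last price of 100 is rejected by the jump check. Whether to pause or to accept such a move is a protocol-level policy choice.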

Adversarial participants constantly test the boundaries of these validation systems, attempting to induce price slippage or trigger artificial liquidation events. Consequently, the design of these validation engines must incorporate game-theoretic incentives that penalize malicious reporting while rewarding consistent, high-accuracy data delivery.
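
A stylized version of such an incentive scheme is shown below. Reporters whose values fall within a tolerance of the accepted price earn a fixed reward. Those outside it lose a fraction of their stake. The tolerance, slash fraction, and reward size are hypothetical. Real designs must also allow for honest reporters diverging during fast markets, which this sketch ignores.

```python
def settle_incentives(stakes, reports, accepted,
                      tolerance=0.01, slash_fraction=0.10, reward=1.0):
    """Return updated stakes after one reporting round (hypothetical parameters).

    stakes:  {reporter_id: stake}
    reports: {reporter_id: reported_price}
    accepted: the price the protocol accepted for this round (assumed positive)
    """
    updated = dict(stakes)
    for reporter, price in reports.items():
        error = abs(price - accepted) / accepted
        if error > tolerance:
            # Penalize reports far from the accepted value by slashing stake.
            updated[reporter] -= stakes[reporter] * slash_fraction
        else:
            # Reward consistent, accurate delivery.
            updated[reporter] += reward
    return updated
```

In this design, a manipulative report costs the reporter stake in proportion to its deposit. Lying is therefore unprofitable unless the expected gain from the manipulation exceeds the slashed amount.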

| Validation Mechanism | Primary Benefit | Risk Profile |
| --- | --- | --- |
| Threshold Signatures | High Throughput | Collusion Risk |
| Proof of Stake Consensus | Economic Security | Capital Cost |
| Zero Knowledge Proofs | Data Privacy | Computational Overhead |
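
The "Collusion Risk" entry for threshold signatures comes from their t-of-n structure: any t participants together can act for the whole group. The toy sketch below illustrates that property with Shamir secret sharing, the building block behind many threshold schemes. It is not a signature scheme, not constant-time, and not suitable for real keys. The field size and function names are assumptions. It needs Python 3.8+ for `pow(x, -1, m)`.

```python
import secrets

PRIME = 2**127 - 1  # a Mersenne prime; all arithmetic is done modulo PRIME


def split_secret(secret: int, threshold: int, n_shares: int):
    """Split secret (< PRIME) into n_shares points; any `threshold` of them recover it."""
    # Random polynomial of degree threshold - 1 with f(0) = secret.
    coeffs = [secret] + [secrets.randbelow(PRIME) for _ in range(threshold - 1)]
    shares = []
    for x in range(1, n_shares + 1):
        y = 0
        for c in reversed(coeffs):  # Horner's rule evaluation of f(x)
            y = (y * x + c) % PRIME
        shares.append((x, y))
    return shares


def reconstruct(shares) -> int:
    """Lagrange interpolation of the shares, evaluated at x = 0."""
    secret = 0
    for i, (xi, yi) in enumerate(shares):
        num, den = 1, 1
        for j, (xj, _) in enumerate(shares):
            if i != j:
                num = (num * -xj) % PRIME
                den = (den * (xi - xj)) % PRIME
        secret = (secret + yi * num * pow(den, -1, PRIME)) % PRIME
    return secret
```

With a 3-of-5 split, any three shares reconstruct the secret. The same property that removes single points of failure also means any three colluding holders can act without the other two.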

The architecture of these systems is rarely static. It operates under constant stress from automated agents seeking to exploit the gap between off-chain price discovery and on-chain settlement.
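
One simple guard against the off-chain/on-chain gap is for the consuming contract to reject prices older than a maximum age. An automated agent then cannot settle against a value that the market has already moved away from. The sketch below is a minimal, hypothetical consumer-side check. `max_age_s` is an assumed parameter, and `now` is injectable so the check can be tested deterministically.

```python
import time


def require_fresh(report_ts: float, max_age_s: float, now: float = None) -> float:
    """Return the report's age in seconds, or raise if it is stale or future-dated."""
    now = time.time() if now is None else now
    age = now - report_ts
    if age < 0:
        # A timestamp in the future suggests clock skew or a forged report.
        raise ValueError("report timestamp is in the future")
    if age > max_age_s:
        raise ValueError(f"stale report: {age:.0f}s old exceeds {max_age_s}s limit")
    return age
```

The right `max_age_s` depends on how often the feed updates. If the limit is too loose, stale prices get through. If it is too tight, settlements halt whenever an update arrives slightly late.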

Approach

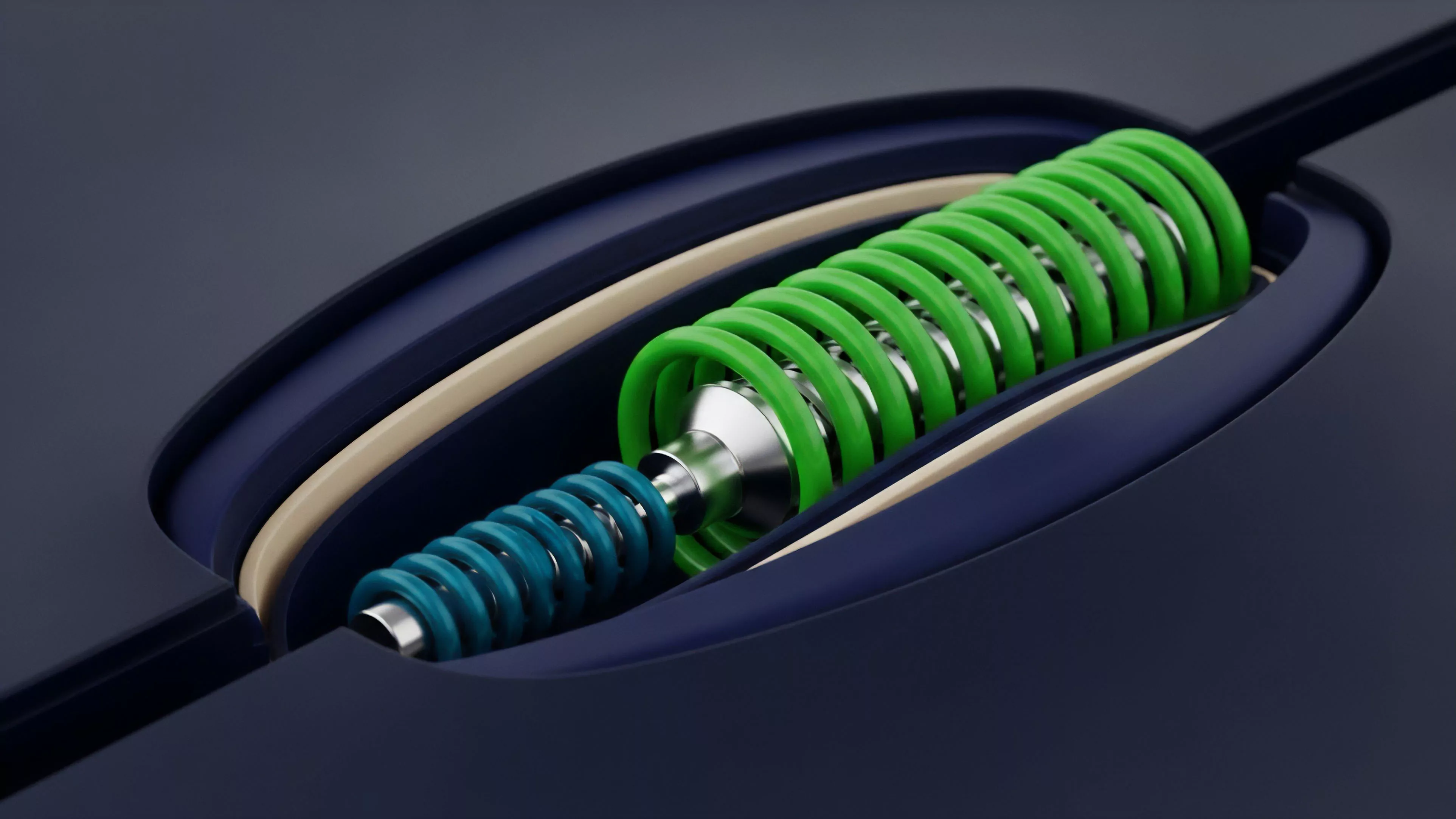

Modern implementations of Secure Data Validation emphasize low latency and modularity to meet the high-frequency demands of options trading. Protocols now use decentralized oracle networks that aggregate data from many independent sources. Weighted median calculations then limit the influence of bad actors, provided those actors control less than half of the total weight.
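
A weighted median can be sketched in a few lines. Sort the reports by value and return the first value at which cumulative weight reaches half of the total. The weights might represent stake or reputation, but that choice is an assumption here. The sketch returns the lower weighted median when weight splits exactly in half.

```python
def weighted_median(values, weights):
    """Lower weighted median: first value whose cumulative weight reaches half the total."""
    if not values or len(values) != len(weights):
        raise ValueError("values and weights must be non-empty and of equal length")
    total = sum(weights)
    if total <= 0:
        raise ValueError("total weight must be positive")
    cumulative = 0.0
    for value, weight in sorted(zip(values, weights)):
        cumulative += weight
        if cumulative >= total / 2:
            return value
```

With equal weights, a single reporter submitting `500.0` against honest reports near 100 does not move the result (101.0). If that reporter controls a majority of the weight, the result becomes 500.0. This is why weighted medians resist only minority manipulation.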

- Decentralized Aggregation: Systems combine multiple independent data streams to create a singular, hardened reference price for derivative settlement.

- Latency Mitigation: Optimized data delivery paths shorten the gap between market events and on-chain settlement, narrowing the window in which traders can front-run stale prices.

- Cryptographic Verification: Every data point is signed by its source, creating a verifiable audit trail that attributes each report to its sender and holds participants accountable for reporting accuracy.
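
A signed data point becomes part of an audit trail once it is recorded in an append-only structure. The minimal sketch below hash-chains entries so that editing any past record breaks verification. A hash chain makes tampering detectable rather than impossible. Practical immutability comes from anchoring the chain head on-chain. The class name is hypothetical, and the `signature` field is treated as an opaque string produced by whatever signing scheme the network uses.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: dict) -> str:
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


class AuditTrail:
    """Append-only log in which each entry commits to the previous entry's hash."""

    def __init__(self):
        self.entries = []

    def append(self, reporter: str, price: float, timestamp: int, signature: str) -> str:
        prev = self.entries[-1]["hash"] if self.entries else GENESIS
        body = {"reporter": reporter, "price": price, "timestamp": timestamp,
                "signature": signature, "prev": prev}
        digest = _digest(body)
        self.entries.append({**body, "hash": digest})
        return digest

    def verify(self) -> bool:
        prev = GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            # Each entry must link to its predecessor and match its own hash.
            if body["prev"] != prev or _digest(body) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True
```

Changing an earlier entry's price, even by a single unit, invalidates that entry's hash and every link after it, so `verify()` returns `False`.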

This approach aims to keep the data powering derivative instruments representative of actual market prices, even during extreme volatility. Maintaining this level of integrity is technically demanding. The system must collect, verify, and aggregate large volumes of data points quickly enough that on-chain prices stay current with off-chain markets.

Evolution

The trajectory of Secure Data Validation has moved from basic, centralized data feeds to highly sophisticated, multi-layered consensus frameworks. Early designs were often rigid, failing to adapt to the rapid expansion of exotic derivative instruments.

Today, the focus has shifted toward cross-chain compatibility and the integration of hardware-based security modules.

The evolution of data validation protocols has progressed from simple, vulnerable single-source feeds to complex, resilient multi-party consensus engines designed for systemic stability.

We are witnessing a shift where validation logic is moving closer to the execution layer itself. This reduces the attack surface and enhances the overall efficiency of decentralized finance by ensuring that data integrity is baked into the protocol architecture from the start. The complexity of these systems continues to grow, mirroring the sophistication of the financial products they enable.

Horizon

The future of Secure Data Validation lies in the integration of real-time, privacy-preserving validation techniques that do not sacrifice performance.

As derivatives markets become increasingly global and interconnected, demand for high-speed data validation that works across jurisdictions and chains is likely to grow sharply.

- Trusted Hardware: Integrating trusted execution environments (TEEs) and hardware security modules (HSMs) directly into data reporting nodes to prevent tampering by node operators or compromised host software.

- Cross-Chain Interoperability: Developing standardized validation protocols that allow derivative markets to access high-fidelity data regardless of the underlying blockchain.

- Dynamic Consensus Models: Implementing adaptive validation mechanisms that automatically tighten security parameters during periods of elevated market volatility.
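
An adaptive mechanism of this kind might use realized volatility as its stress signal. As volatility rises, the sketch below raises the number of reporters required and shortens the maximum accepted price age. The volatility measure, the calm-market baseline, and every bound are hypothetical choices for illustration, not parameters taken from any deployed protocol.

```python
import math
from statistics import pstdev


def realized_volatility(prices):
    """Population standard deviation of log returns over the window."""
    if len(prices) < 2:
        raise ValueError("need at least two prices")
    returns = [math.log(b / a) for a, b in zip(prices, prices[1:])]
    return pstdev(returns)


def security_params(prices, calm_vol=0.005, base_quorum=3, max_quorum=7,
                    base_max_age_s=60.0, min_max_age_s=5.0):
    """Tighten validation requirements as volatility rises (all thresholds hypothetical)."""
    vol = realized_volatility(prices)
    stress = max(1.0, vol / calm_vol)  # 1.0 in calm markets, grows with volatility
    quorum = min(max_quorum, math.ceil(base_quorum * stress))
    max_age_s = max(min_max_age_s, base_max_age_s / stress)
    return {"volatility": vol, "quorum": quorum, "max_age_s": max_age_s}
```

A flat price window leaves the baseline in place (a quorum of 3, a 60-second age limit). A window swinging between 90 and 115 pushes both to their strictest bounds (a quorum of 7, 5 seconds). The floor and ceiling prevent the mechanism from demanding more reporters than exist or a freshness limit no feed can meet.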

The ultimate goal remains a financial infrastructure in which data integrity is enforced by cryptographic and economic guarantees rather than by human oversight. This transformation is essential for scaling decentralized derivatives to match the volume and complexity of traditional institutional finance.