Essence

Scalability Testing Methodologies are the validation frameworks applied to decentralized financial infrastructure to determine the upper limits of transaction throughput, latency, and state growth before systemic failure occurs. These methodologies quantify how a protocol manages concurrent order flow, margin updates, and clearing processes under extreme load. They indicate whether a decentralized venue can support institutional-grade derivative activity without degrading into network congestion or consensus stalls.

Scalability testing methodologies establish the operational threshold for decentralized venues by measuring throughput limits and latency under peak market stress.

The core objective is to stress-test the consensus mechanism and state machine to identify bottlenecks in data propagation or smart contract execution. By simulating synthetic volume spikes, engineers observe how margin engines and liquidation triggers behave when block space becomes scarce. This assessment provides the data needed to determine whether the protocol maintains its financial integrity during high-volatility events, when liquidity providers and traders simultaneously execute thousands of positions.

Origin

These methodologies emerged from the early struggles of public blockchain networks to process simple token transfers during periods of high demand. Early developers identified transaction finality and throughput capacity as the primary constraints for decentralized finance. They moved from basic load testing to distributed systems analysis, adapting techniques from high-frequency trading infrastructure to the constraints of distributed ledger technology.

The intellectual roots lie in traditional systems engineering combined with game theory applications. As protocols began supporting complex derivatives, the requirement shifted from measuring raw transfers to evaluating the latency of complex state updates within automated market makers. The following factors drove this evolution:

- Protocol throughput constraints necessitated specialized testing for parallel execution environments.

- Latency-sensitive derivative markets required validation of sub-second settlement times.

- Adversarial network conditions forced the adoption of stress-testing frameworks simulating malicious actors attempting to clog the network.

Theory

The theoretical framework for Scalability Testing Methodologies relies on queueing theory and probabilistic modeling to predict system behavior under non-linear stress. When the gas demanded by pending transactions exceeds the block gas limit, the protocol becomes congested and inclusion priority is determined by the fee market. The marginal cost of computation then becomes a primary metric for assessing whether a protocol can handle sustained institutional volume without failing.

Queueing theory applied to blockchain architecture reveals how transaction backlogs impact the precision of margin calls and liquidation execution speed.
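The queueing argument can be made concrete with a deliberately crude model. The sketch below treats the chain as a single M/M/1 queue, which is an assumption made for illustration: real blocks serve transactions in batches and order them by fee, so the numbers are indicative only. The function name `mm1_metrics` and its parameters are hypothetical.

```python
def mm1_metrics(arrival_rate: float, service_rate: float) -> dict:
    """Steady-state M/M/1 metrics.

    arrival_rate: mean transactions submitted per second (lambda).
    service_rate: mean transactions the chain can include per second (mu).
    Assumption: Poisson arrivals and exponential service, one server.
    """
    if arrival_rate >= service_rate:
        # With lambda >= mu the backlog grows without bound.
        raise ValueError("unstable queue: arrival rate must be below service rate")
    utilization = arrival_rate / service_rate
    return {
        "utilization": utilization,
        # Mean number of transactions in the system (queued plus in service).
        "mean_backlog": utilization / (1.0 - utilization),
        # Mean time from submission to inclusion, in seconds.
        "mean_time_in_system_s": 1.0 / (service_rate - arrival_rate),
    }


if __name__ == "__main__":
    for load in (80.0, 95.0, 99.0):
        print(load, mm1_metrics(load, 100.0))
```

In this model, moving from 80 to 99 transactions per second against a capacity of 100 raises the mean time in system from 0.05 s to 1 s, which is the non-linear behavior that makes margin calls imprecise near saturation.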

Mathematical modeling of systemic risk requires observing the propagation delay of transactions across global validator sets. If the time required to achieve consensus finality exceeds the time required for price discovery in external spot markets, on-chain prices lag the external market and the protocol becomes susceptible to arbitrage exploitation. The following table summarizes the technical parameters essential for these evaluations:

| Parameter | Measurement Metric | Systemic Significance |
| --- | --- | --- |
| Throughput | Transactions Per Second | Market Liquidity Capacity |
| Latency | Time To Finality | Derivative Pricing Accuracy |
| State Growth | Storage Overhead Per Block | Long-term Node Sustainability |
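As a rough illustration, the three parameters in the table can be computed from per-block samples collected during a test run. The `BlockSample` fields and both function names below are hypothetical, and the comparison against an external price discovery interval is a simplified reading of the finality condition described above.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class BlockSample:
    tx_count: int           # transactions included in the block
    block_time_s: float     # seconds since the previous block
    finality_s: float       # seconds from inclusion to finality
    state_delta_bytes: int  # net storage added by the block


def summarize(blocks: list) -> dict:
    """Aggregate throughput, latency, and state growth over a test run."""
    total_tx = sum(b.tx_count for b in blocks)
    total_time = sum(b.block_time_s for b in blocks)
    return {
        "tps": total_tx / total_time,
        "mean_finality_s": mean(b.finality_s for b in blocks),
        "state_growth_bytes_per_block": mean(b.state_delta_bytes for b in blocks),
    }


def finality_lags_price_discovery(mean_finality_s: float, price_discovery_s: float) -> bool:
    """True when finality is slower than the external market's price updates."""
    return mean_finality_s > price_discovery_s


if __name__ == "__main__":
    run = [BlockSample(100, 2.0, 4.0, 1000), BlockSample(300, 2.0, 6.0, 3000)]
    stats = summarize(run)
    print(stats)
    print(finality_lags_price_discovery(stats["mean_finality_s"], 1.0))
```

Means hide tail behavior, so a real evaluation would likely report percentiles as well.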

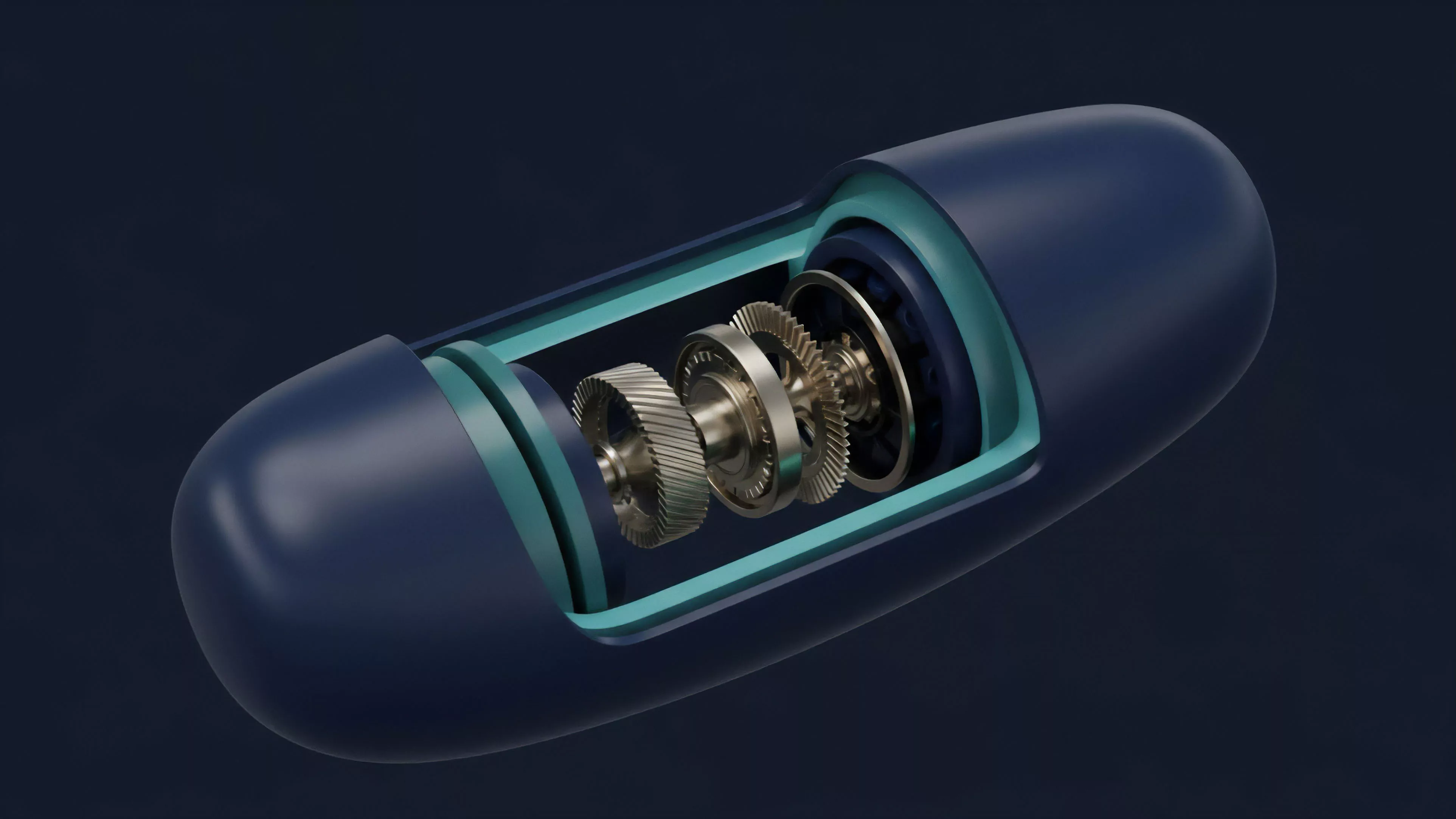

The approach resembles how hydraulic engineers stress-test levees: pressure is applied until a structural failure reveals the true capacity of the material. This perspective allows architects to design fault-tolerant margin engines that remain operational even when the underlying consensus layer is saturated with low-priority activity.

Approach

Current approaches involve deploying synthetic load generators that mimic diverse participant behaviors, ranging from high-frequency market makers to retail users. These generators inject high-entropy transaction streams into testnets, monitoring for consensus drift or smart contract execution errors. The analysis focuses on the critical path of a derivative trade: from order submission to clearing and eventual settlement.

- Load simulation involves creating high-volume traffic patterns that mirror historical market crashes.

- Resource contention analysis identifies how CPU and memory usage spike during peak derivative activity.

- Liquidation efficiency testing measures the response time of automated engines when collateral values drop rapidly.

Synthetic load generators enable the simulation of extreme market events to verify that margin engines execute liquidations within defined temporal bounds.
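A minimal sketch of the idea follows. It generates a seeded transaction stream with a volume spike and measures how many blocks each liquidation waits before inclusion. The participant mix, the function names, and the first-in-first-out inclusion rule are all assumptions made for illustration; a real fee market would order transactions by fee rather than arrival.

```python
import random
from collections import deque


def generate_load(n_ticks: int, base_rate: int, spike_start: int,
                  spike_mult: int, seed: int = 0):
    """Yield one list of (submit_tick, kind) transactions per tick.

    The rate is multiplied by spike_mult from spike_start onward to
    imitate a sudden surge in activity.
    """
    rng = random.Random(seed)  # seeded so that test runs are reproducible
    kinds = ["market_maker", "retail", "liquidation"]
    weights = [0.60, 0.35, 0.05]  # hypothetical participant mix
    for tick in range(n_ticks):
        rate = base_rate * (spike_mult if tick >= spike_start else 1)
        yield [(tick, rng.choices(kinds, weights)[0]) for _ in range(rate)]


def liquidation_delays(load, block_capacity: int) -> list:
    """Include up to block_capacity transactions per block in FIFO order.

    Returns the delay, in blocks, of every liquidation that was included.
    """
    queue = deque()
    delays = []
    for block, txs in enumerate(load):
        queue.extend(txs)
        for _ in range(min(block_capacity, len(queue))):
            submitted, kind = queue.popleft()
            if kind == "liquidation":
                delays.append(block - submitted)
    return delays


if __name__ == "__main__":
    delays = liquidation_delays(generate_load(50, 20, 25, 5), block_capacity=40)
    print("worst liquidation delay in blocks:", max(delays))
```

A test harness built this way would compare the worst observed delay against the protocol's liquidation time bound and fail the run when the bound is exceeded.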

Engineers also employ shadow testing, where production traffic is replayed against a parallel version of the protocol. This method allows for the identification of concurrency bugs that only manifest when thousands of smart contract interactions occur within a single block. The objective is to verify that the financial state remains consistent regardless of the underlying network latency.
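Shadow testing can be sketched as replaying one recorded transaction list through two state-transition functions and comparing a digest of the state after every step. Everything named below (`apply_transfer`, `replay`, `first_divergence`, and the transaction shape) is hypothetical; a real protocol would compare state roots produced by its own execution engine.

```python
import hashlib
import json


def state_digest(state: dict) -> str:
    """Deterministic hash of a JSON-serializable state."""
    encoded = json.dumps(state, sort_keys=True).encode()
    return hashlib.sha256(encoded).hexdigest()


def apply_transfer(state: dict, tx: dict) -> dict:
    """Toy reference transition: move tx['amount'] between two accounts."""
    new_state = dict(state)
    new_state[tx["from"]] = new_state.get(tx["from"], 0) - tx["amount"]
    new_state[tx["to"]] = new_state.get(tx["to"], 0) + tx["amount"]
    return new_state


def replay(apply_tx, transactions: list, initial_state: dict) -> list:
    """Apply each transaction in order and record the digest after each one."""
    state = dict(initial_state)
    digests = []
    for tx in transactions:
        state = apply_tx(state, tx)
        digests.append(state_digest(state))
    return digests


def first_divergence(reference: list, shadow: list):
    """Index of the first transaction where the digests differ, else None."""
    for index, (a, b) in enumerate(zip(reference, shadow)):
        if a != b:
            return index
    return None


if __name__ == "__main__":
    recorded = [{"from": "a", "to": "b", "amount": 10}]
    genesis = {"a": 100, "b": 0}
    ref = replay(apply_transfer, recorded, genesis)
    print(first_divergence(ref, replay(apply_transfer, recorded, genesis)))
```

Reporting the index of the first divergent transaction, rather than only a final mismatch, narrows the search for the offending interaction.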

Evolution

The field has shifted from simple capacity checks toward holistic system resilience testing. Early iterations focused on raw transaction throughput, whereas modern strategies prioritize financial correctness under load. As protocols implement layer-two scaling solutions and sharding architectures, the testing methodologies must now account for cross-chain message passing and asynchronous finality.

- Modular blockchain architectures require testing the interaction between independent data availability and execution layers.

- Advanced consensus algorithms necessitate verification of security guarantees during high-latency network partitions.

- Programmable privacy features introduce overhead that complicates real-time throughput calculations.

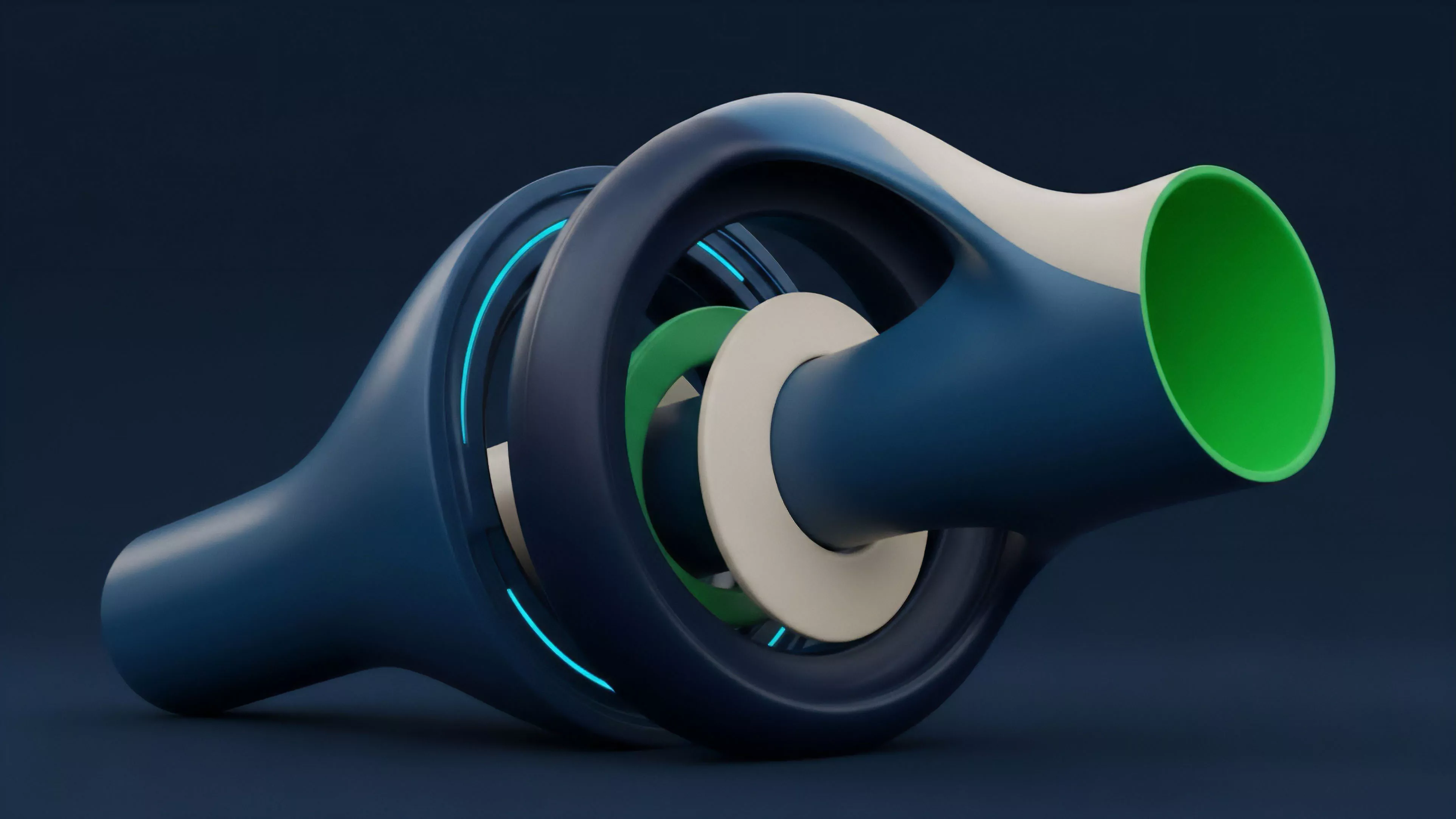

The transition toward asynchronous state machines represents the most significant shift in recent years. This change demands that testers focus on the atomic nature of cross-shard transactions to prevent double-spending or state corruption during high-volume periods. The focus has moved from static performance metrics to dynamic, environment-aware validation.
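One way to express the atomicity concern as a testable property is a conservation invariant: the value held on all shards plus the value in flight must stay constant, and a redelivered message must not credit twice. The sketch below is a toy model under those assumptions. The `Shard` class and the `send`, `deliver`, and `total_value` functions are hypothetical and do not describe any particular protocol's messaging layer.

```python
class Shard:
    """Hypothetical shard holding balances and a replay guard."""

    def __init__(self, balances: dict):
        self.balances = dict(balances)
        self.applied = set()  # message ids already credited on this shard


def send(src: Shard, sender: str, recipient: str, amount: int,
         msg_id: str, in_flight: dict) -> None:
    """Phase 1: debit on the source shard and enqueue a cross-shard message."""
    if src.balances.get(sender, 0) < amount:
        raise ValueError("insufficient balance")
    src.balances[sender] -= amount
    in_flight[msg_id] = (recipient, amount)


def deliver(dst: Shard, msg_id: str, message: tuple, in_flight: dict) -> bool:
    """Phase 2: credit on the destination shard at most once per message id."""
    if msg_id in dst.applied:
        return False  # duplicate delivery is ignored
    recipient, amount = message
    dst.balances[recipient] = dst.balances.get(recipient, 0) + amount
    dst.applied.add(msg_id)
    in_flight.pop(msg_id, None)
    return True


def total_value(shards: list, in_flight: dict) -> int:
    """Value on all shards plus value carried by undelivered messages."""
    on_shards = sum(sum(s.balances.values()) for s in shards)
    pending = sum(amount for _, amount in in_flight.values())
    return on_shards + pending


if __name__ == "__main__":
    a, b, pending = Shard({"alice": 100}), Shard({"bob": 5}), {}
    send(a, "alice", "bob", 40, "m1", pending)
    message = pending["m1"]
    deliver(b, "m1", message, pending)
    deliver(b, "m1", message, pending)  # simulated duplicate delivery
    print(total_value([a, b], pending))
```

Under load, a harness would assert this invariant after every step while randomizing delivery order and injecting duplicates.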

Horizon

Future testing frameworks will likely incorporate AI-driven adversarial agents that autonomously search for edge-case vulnerabilities in protocol logic. These agents will go beyond simple load injection, attempting to manipulate the order flow sequence to trigger protocol-level failures or exploit liquidation delays. The next stage involves the automation of formal verification during the testing phase, ensuring that code properties hold true even under extreme resource constraints.

Integration with cross-protocol liquidity aggregation will define the next standard for scalability. Testing will no longer be limited to a single chain but will encompass the entire interoperable financial stack. This requires a transition toward global state validation, where the stability of one protocol is measured by its ability to remain synchronized with external market feeds and liquidity pools across the entire decentralized landscape.