Essence

Scalability Solutions Research functions as the analytical inquiry into architectural frameworks designed to expand transaction throughput and decrease settlement latency within decentralized ledger systems. These investigations target the inherent trade-offs between decentralization, security, and computational efficiency, often described as the blockchain trilemma. By modeling how specific protocol upgrades (such as Layer 2 rollups, sharding, or state channels) alter the underlying network physics, researchers determine the viability of sustaining high-frequency derivative trading environments on-chain.

Scalability solutions research quantifies the technical boundaries that dictate how decentralized networks process financial volume while maintaining state integrity.

The primary objective involves reducing the per-transaction cost and time-to-finality to levels competitive with centralized high-frequency trading venues. This requires deep technical analysis of consensus mechanisms, data availability layers, and execution environments. Without these advancements, decentralized derivative markets remain constrained by network congestion, which prevents the effective deployment of sophisticated automated market-making strategies and arbitrage operations.

Origin

The genesis of Scalability Solutions Research traces back to the realization that monolithic blockchain architectures possess hard throughput ceilings.

Early participants observed that as network demand surged, transaction fees became prohibitive for all but the most lucrative financial activities, effectively pricing out smaller participants and limiting the liquidity depth required for robust options markets. This limitation served as the primary driver for engineers to look beyond the base layer.

- Transaction Congestion: High demand periods on legacy networks forced participants to pay premiums, highlighting the need for efficient off-chain processing.

- Latency Sensitivity: Financial derivatives, particularly options, require rapid price discovery and order matching that exceed the capabilities of initial decentralized architectures.

- State Bloat: The cumulative history of every transaction created storage burdens, necessitating new approaches to data management and validation.

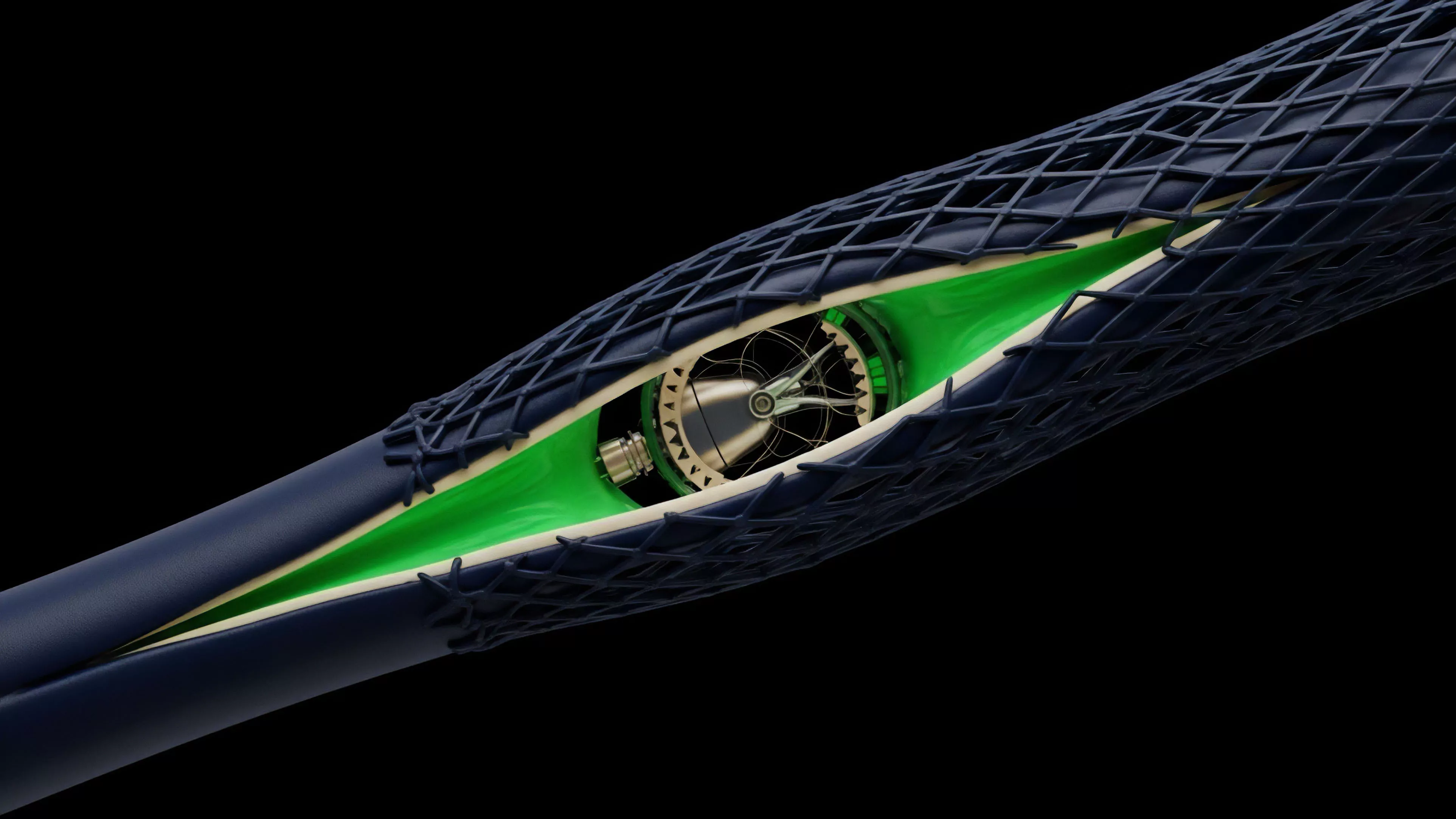

Researchers began analyzing how to shift computational load while maintaining cryptographic proof of validity. This shift marked the transition from viewing blockchains as simple value transfer systems to seeing them as programmable financial backbones. The early exploration of state channels and sidechains provided the initial proof-of-concept that transaction execution could occur outside the primary consensus loop, provided that the final settlement remained anchored to the secure base layer.
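A minimal sketch of the state-channel pattern described above, assuming two parties, integer balances, and a hypothetical base-layer rule that settles the valid co-signed state with the highest nonce. Signatures and the dispute timeout are omitted, and `ChannelState`, `PaymentChannel`, and `settle_on_chain` are illustrative names rather than any real protocol's API.

```python
from dataclasses import dataclass
from typing import Sequence

@dataclass(frozen=True)
class ChannelState:
    """A snapshot both parties have co-signed (signatures omitted in this sketch)."""
    nonce: int
    balance_a: int
    balance_b: int

class PaymentChannel:
    """Two-party channel whose balance updates happen entirely off-chain."""

    def __init__(self, deposit_a: int, deposit_b: int):
        self.total = deposit_a + deposit_b  # locked on the base layer when the channel opens
        self.latest = ChannelState(0, deposit_a, deposit_b)

    def pay(self, a_to_b: bool, amount: int) -> ChannelState:
        """Apply an off-chain transfer and bump the nonce; no base-layer transaction."""
        s = self.latest
        if amount <= 0:
            raise ValueError("amount must be positive")
        payer_balance = s.balance_a if a_to_b else s.balance_b
        if amount > payer_balance:
            raise ValueError("insufficient channel balance")
        delta = -amount if a_to_b else amount  # change applied to party A's balance
        self.latest = ChannelState(s.nonce + 1, s.balance_a + delta, s.balance_b - delta)
        return self.latest

def settle_on_chain(total: int, submitted: Sequence[ChannelState]) -> ChannelState:
    """Hypothetical dispute rule: the valid state with the highest nonce wins."""
    valid = [
        s for s in submitted
        if s.balance_a >= 0 and s.balance_b >= 0 and s.balance_a + s.balance_b == total
    ]
    if not valid:
        raise ValueError("no valid state submitted")
    return max(valid, key=lambda s: s.nonce)
```

For example, after `ch = PaymentChannel(100, 50)`, a payment of 30 from A and a payment of 10 back from B produce states with nonces 1 and 2; if one party submits the stale nonce-1 state, `settle_on_chain` still returns the nonce-2 state with balances 80 and 70. Only the opening deposit and the final settlement touch the base layer.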

Theory

The theoretical underpinnings of Scalability Solutions Research rely on the rigorous application of computational complexity theory and cryptographic verification.

Analysts evaluate how different protocols manage the state transition function. A critical focus remains on zero-knowledge proofs, which allow a network to verify the correctness of a massive batch of transactions without requiring the full computational cost of processing each individual transaction on the main chain.
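To make the amortization argument concrete, the sketch below compares the base-layer cost per transaction of direct execution against a batch covered by one succinct proof. It assumes a simple linear model (a fixed verification cost per batch plus a per-transaction data cost); the parameter values in the usage note are hypothetical abstract cost units, not measured gas figures.

```python
import math

def batched_cost_per_tx(batch_size: int, fixed_verify_cost: float,
                        per_tx_data_cost: float) -> float:
    """Base-layer cost per transaction when one proof covers the whole batch."""
    if batch_size <= 0:
        raise ValueError("batch_size must be positive")
    # Verification is paid once and shared; data is still posted per transaction.
    return fixed_verify_cost / batch_size + per_tx_data_cost

def break_even_batch(fixed_verify_cost: float, per_tx_data_cost: float,
                     direct_cost_per_tx: float):
    """Smallest batch size whose per-transaction cost is strictly below direct execution.

    Returns None if posting the data alone costs as much as executing directly.
    """
    margin = direct_cost_per_tx - per_tx_data_cost
    if margin <= 0:
        return None
    return math.floor(fixed_verify_cost / margin) + 1
```

With a hypothetical fixed cost of 500,000 units, 200 units of data per transaction, and 50,000 units for direct execution, `batched_cost_per_tx(1000, 500_000, 200)` gives 700.0 and `break_even_batch(500_000, 200, 50_000)` gives 11. In this model, large batches push the per-transaction cost toward the data-cost floor, which is one way to see why data availability becomes the binding constraint.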

Theoretical frameworks in scalability research focus on decoupling transaction execution from global consensus to enable high-throughput financial settlement.

This domain also incorporates behavioral game theory to model how incentive structures within these new architectures affect validator and sequencer behavior. In an adversarial environment, the security of a scalability solution depends on ensuring that participants are economically motivated to act honestly. The following table outlines the comparative performance parameters often scrutinized in this research.

| Architecture Type | Throughput Capacity | Security Model | Latency Profile |
| --- | --- | --- | --- |
| Optimistic Rollups | Moderate | Fraud Proofs | Delayed Settlement (challenge window) |
| Zero-Knowledge Rollups | High | Validity Proofs | Final once the proof is verified on the base layer |
| State Channels | Very High | Multi-sig Anchoring | Near Instant |
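The incentive argument in the paragraph before the table can be written as a simple expected-value check. The sketch assumes a single actor who gains `gain` from an invalid state transition if it goes unchallenged, loses a posted `bond` if a fraud proof or other check catches it with probability `p_detect`, and otherwise earns nothing extra. Real protocols add rewards, delays, and collusion, so this illustrates the modeling style rather than a security analysis.

```python
def cheating_expected_value(gain: float, bond: float, p_detect: float) -> float:
    """Expected payoff of attempting an invalid state transition."""
    if not 0.0 <= p_detect <= 1.0:
        raise ValueError("p_detect must be a probability")
    return (1.0 - p_detect) * gain - p_detect * bond

def is_incentive_compatible(gain: float, bond: float, p_detect: float) -> bool:
    """Honest behavior is (weakly) preferred when cheating has non-positive expected value."""
    return cheating_expected_value(gain, bond, p_detect) <= 0.0

def minimum_bond(gain: float, p_detect: float) -> float:
    """Smallest bond that makes cheating unprofitable in expectation."""
    if not 0.0 < p_detect <= 1.0:
        raise ValueError("p_detect must be in (0, 1]")
    return (1.0 - p_detect) * gain / p_detect
```

With a potential gain of 1,000,000 and a 99% detection probability, `minimum_bond(1_000_000, 0.99)` is roughly 10,101; a bond of 5,000 fails `is_incentive_compatible`, while 20,000 passes. The model also shows why designs that push `p_detect` toward 1 (for instance, by relying on at least one honest challenger) can tolerate much smaller bonds.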

The mathematical rigor applied to these models mirrors the precision required for option pricing models. Just as one must account for volatility and time decay, a researcher must account for the propagation delay and the cost of generating proofs within the protocol architecture.
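Following the option-pricing analogy, the sketch below adds up the delay components before a batch is final on the base layer, then prices that delay as a simple carry cost on locked collateral, much as one would price time. The inputs in the usage note (0.5 s propagation, 12 s base-layer block time, 600 s proof generation, a seven-day challenge window, a 5% annual rate) are assumptions chosen for illustration; real values vary by network and prover.

```python
SECONDS_PER_YEAR = 365 * 24 * 3600

def optimistic_finality_s(propagation_s: float, l1_block_s: float,
                          challenge_window_s: float) -> float:
    """Batch must reach the base layer, then survive the full challenge window."""
    return propagation_s + l1_block_s + challenge_window_s

def zk_finality_s(propagation_s: float, proof_generation_s: float,
                  l1_block_s: float) -> float:
    """Batch is final once its validity proof is generated and verified on the base layer."""
    return propagation_s + proof_generation_s + l1_block_s

def carry_cost(notional: float, annual_rate: float, delay_s: float) -> float:
    """Opportunity cost of capital locked for delay_s seconds at a simple annual rate."""
    return notional * annual_rate * delay_s / SECONDS_PER_YEAR
```

With these inputs, `optimistic_finality_s(0.5, 12, 7 * 24 * 3600)` is 604812.5 seconds and `zk_finality_s(0.5, 600, 12)` is 612.5 seconds; locking 1,000,000 of collateral through the optimistic window at 5% costs about 958.9 in this simple model, versus roughly 1 for the validity-proof path.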

Approach

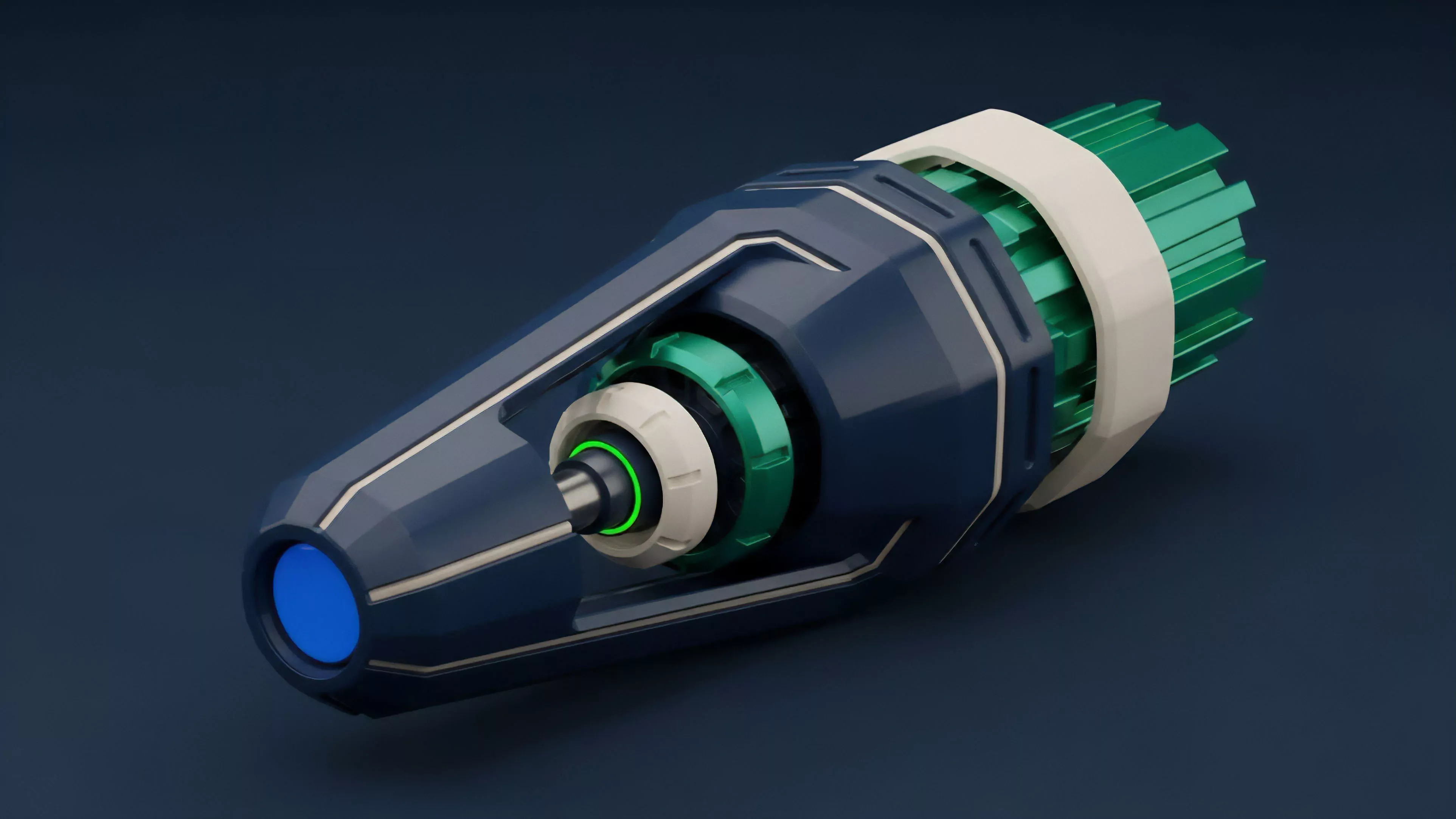

Current methodologies prioritize data availability and sequencer decentralization as the most pressing operational hurdles. Professionals analyze how the off-chain components of a solution communicate with the main layer, often focusing on the efficiency of blob storage and the mitigation of data withholding risks.
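One common way to reason about data withholding is data availability sampling: light clients request random chunks of an erasure-coded blob and treat any missing chunk as evidence of withholding. The sketch assumes samples are drawn independently and uniformly with replacement, and that an adversary must withhold a fraction `withheld_fraction` of chunks to make the data unrecoverable. The 25% figure in the usage note is an illustrative assumption; the real threshold depends on the erasure-coding scheme.

```python
import math

def detection_probability(withheld_fraction: float, samples: int) -> float:
    """Probability that at least one of `samples` random queries hits a withheld chunk."""
    if not 0.0 < withheld_fraction <= 1.0:
        raise ValueError("withheld_fraction must be in (0, 1]")
    return 1.0 - (1.0 - withheld_fraction) ** samples

def samples_needed(withheld_fraction: float, target_confidence: float) -> int:
    """Smallest sample count whose detection probability reaches target_confidence."""
    if not 0.0 < withheld_fraction < 1.0:
        raise ValueError("withheld_fraction must be in (0, 1)")
    if not 0.0 < target_confidence < 1.0:
        raise ValueError("target_confidence must be in (0, 1)")
    # Solve 1 - (1 - f)^k >= c for k:  k >= log(1 - c) / log(1 - f)
    return math.ceil(math.log(1.0 - target_confidence) / math.log(1.0 - withheld_fraction))
```

If an adversary must withhold at least 25% of chunks, `samples_needed(0.25, 0.999999)` returns 49, so a few dozen small queries per client bound the chance of missing a withholding attack at about one in a million, independent of the blob's size in this model.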

This is a technical pursuit in which each reduction in latency can translate into improved capital efficiency for derivative protocols.

- Execution Environment Analysis: Evaluating how virtual machines handle parallel transaction processing to minimize bottlenecks.

- Liquidity Fragmentation Assessment: Modeling the systemic risk introduced when liquidity is spread across multiple interoperable networks.

- Validator Incentive Modeling: Quantifying the economic rewards necessary to ensure consistent and secure proof generation.
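Execution environment analysis often starts from read and write sets: two transactions can run in parallel only if neither writes state that the other reads or writes. The sketch below groups transactions into parallel "waves" while keeping every conflicting pair in its original order, so the outcome matches serial execution. It assumes access sets are declared up front, which is a simplification, and `Tx`, `make_tx`, and `schedule_waves` are hypothetical names.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Tx:
    name: str
    reads: frozenset
    writes: frozenset

def make_tx(name: str, reads=(), writes=()) -> Tx:
    return Tx(name, frozenset(reads), frozenset(writes))

def conflicts(a: Tx, b: Tx) -> bool:
    """Write-write, write-read, or read-write overlap on any state key."""
    return bool(a.writes & (b.reads | b.writes)) or bool(b.writes & a.reads)

def schedule_waves(txs: list) -> list:
    """Place each transaction in the earliest wave after all earlier conflicting ones."""
    levels = []
    waves = []
    for i, tx in enumerate(txs):
        level = 0
        for j in range(i):
            if conflicts(txs[j], tx):
                level = max(level, levels[j] + 1)
        levels.append(level)
        if level == len(waves):
            waves.append([])
        waves[level].append(tx.name)
    return waves
```

For example, if `t1` writes `A`, `t2` writes `B`, `t3` reads `A`, and `t4` reads `B`, the scheduler returns `[['t1', 't2'], ['t3', 't4']]`: four transactions complete in two sequential steps. Hot keys, such as a single heavily traded order book, collapse the waves back toward serial execution, which is where the bottlenecks tend to appear.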

My professional assessment indicates that we are currently at a stage where the focus has moved from simple throughput gains to the optimization of the user experience for institutional-grade liquidity providers. The challenge lies in ensuring that these systems do not introduce new, opaque points of failure that could lead to systemic contagion. The architecture must remain robust under extreme market stress, where high volatility induces massive order flow spikes that test the limits of the protocol’s capacity to settle.
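The stress scenario above can be illustrated with a basic fluid queue: when order flow arrives faster than the protocol can settle it, the excess carries over as backlog. The sketch assumes a fixed settlement capacity per interval and arrivals measured in the same units; the numbers in the usage note are hypothetical.

```python
def backlog_series(arrivals: list, capacity: float) -> list:
    """Unsettled transactions left after each interval, given a fixed capacity."""
    if capacity <= 0:
        raise ValueError("capacity must be positive")
    backlog = 0.0
    series = []
    for arriving in arrivals:
        # Work available this interval = carried-over backlog + new arrivals.
        backlog = max(0.0, backlog + arriving - capacity)
        series.append(backlog)
    return series

def worst_case_wait(arrivals: list, capacity: float) -> float:
    """Approximate extra delay (in intervals) for an order joining the peak backlog."""
    return max(backlog_series(arrivals, capacity), default=0.0) / capacity
```

With a capacity of 200 per second and arrivals of `[100, 100, 500, 500, 100, 100]`, the backlog is `[0.0, 0.0, 300.0, 600.0, 500.0, 400.0]` and `worst_case_wait` is 3.0 seconds. The backlog persists after the spike ends, which is the window in which delayed liquidations could feed the contagion risk described above.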

Evolution

The trajectory of Scalability Solutions Research has shifted from academic speculation to highly competitive engineering.

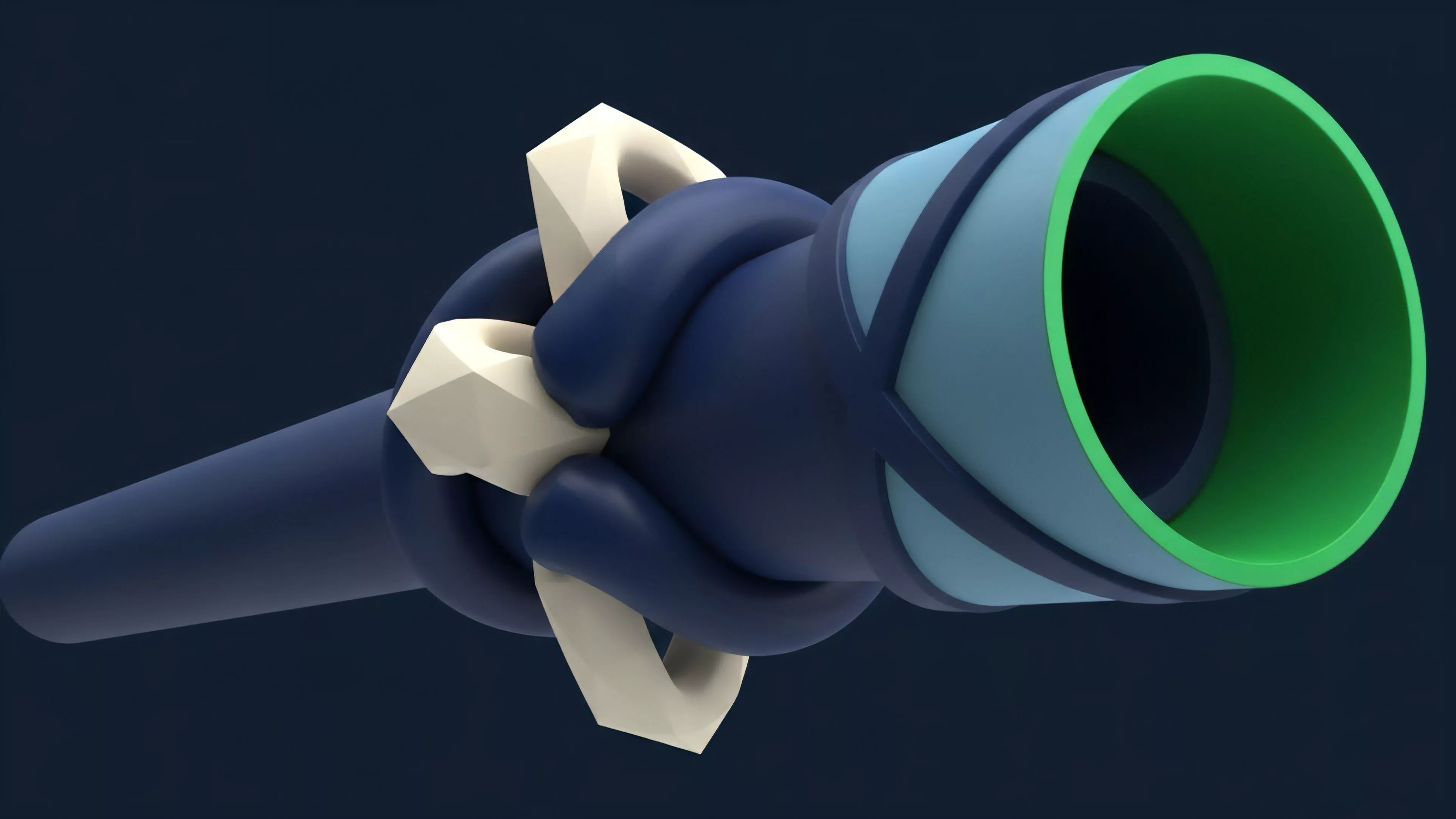

Initially, the community viewed scalability as a binary problem: either a protocol was fast or it was secure. Today, the discourse centers on modular blockchain stacks, where execution, consensus, and data availability are handled by specialized, decoupled layers. This modularity allows for rapid iteration and the specialization of components.
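A minimal sketch of the modular idea, assuming three hypothetical interfaces (`ExecutionLayer`, `DataAvailabilityLayer`, `SettlementLayer`) and toy in-memory implementations. The point is that `produce_block` depends only on the interfaces, so any layer can be swapped for a specialized component without touching the others; none of these names correspond to a specific project's API, and the SHA-256 digest stands in for a real state commitment.

```python
import hashlib
import json
from typing import Protocol

class ExecutionLayer(Protocol):
    def execute(self, state: dict, txs: list) -> dict: ...

class DataAvailabilityLayer(Protocol):
    def publish(self, blob: bytes) -> str: ...

class SettlementLayer(Protocol):
    def commit(self, state_root: str, blob_id: str) -> None: ...

class TransferExecutor:
    """Toy execution layer: applies (sender, receiver, amount) transfers."""

    def execute(self, state: dict, txs: list) -> dict:
        new_state = dict(state)
        for sender, receiver, amount in txs:
            if amount <= 0 or new_state.get(sender, 0) < amount:
                continue  # invalid transfers are skipped in this toy model
            new_state[sender] -= amount
            new_state[receiver] = new_state.get(receiver, 0) + amount
        return new_state

class InMemoryDA:
    """Toy data availability layer: stores blobs keyed by their SHA-256 digest."""

    def __init__(self) -> None:
        self.blobs: dict = {}

    def publish(self, blob: bytes) -> str:
        blob_id = hashlib.sha256(blob).hexdigest()
        self.blobs[blob_id] = blob
        return blob_id

class RecordingSettlement:
    """Toy settlement layer: records (state root, blob id) commitments in order."""

    def __init__(self) -> None:
        self.commitments: list = []

    def commit(self, state_root: str, blob_id: str) -> None:
        self.commitments.append((state_root, blob_id))

def state_root(state: dict) -> str:
    """Deterministic digest of the state (a stand-in for a Merkle root)."""
    return hashlib.sha256(json.dumps(state, sort_keys=True).encode()).hexdigest()

def produce_block(state: dict, txs: list, execution: ExecutionLayer,
                  da: DataAvailabilityLayer, settlement: SettlementLayer) -> dict:
    """Run one block through three decoupled layers."""
    new_state = execution.execute(state, txs)
    blob_id = da.publish(json.dumps(txs).encode())
    settlement.commit(state_root(new_state), blob_id)
    return new_state
```

Replacing `InMemoryDA` with a client for a dedicated data availability network, for instance, would leave `produce_block` and the other two layers unchanged.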

The evolution of scalability research has transitioned from monolithic network constraints to modular architectures optimized for specific financial workflows.

This evolution mirrors the development of traditional financial exchanges, which also moved from physical trading floors to highly optimized electronic systems. We are witnessing the maturation of the decentralized sequencing market, where the entities responsible for ordering transactions must now compete on speed and reliability. This is where the physics of the protocol meets the reality of the market: the most efficient sequencers will capture the most order flow, creating a self-reinforcing cycle of liquidity and stability.

Horizon

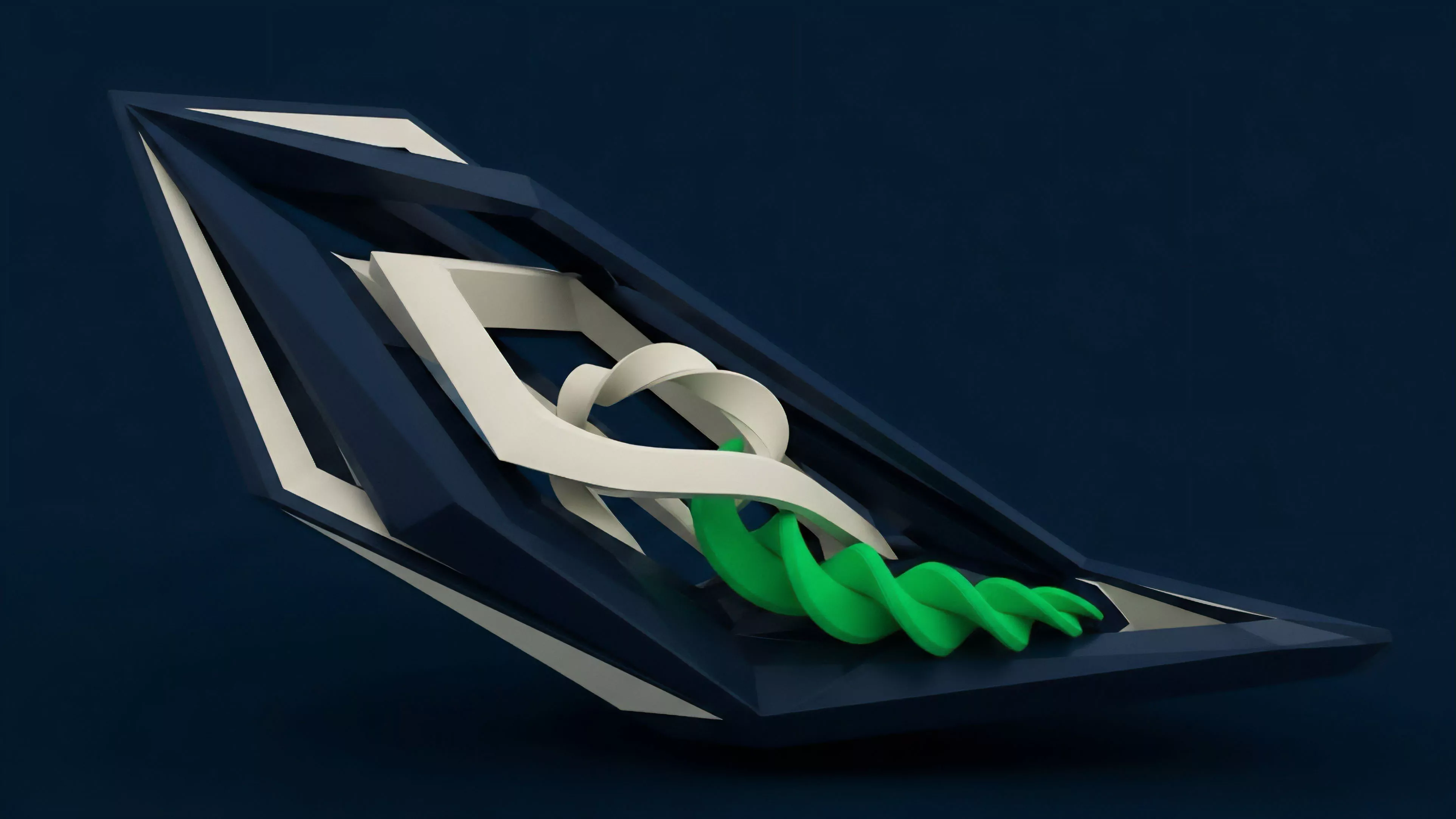

The future of Scalability Solutions Research lies in the total integration of cross-chain atomic settlement and the realization of shared sequencing networks.

As these technologies mature, the distinction between disparate networks will diminish, creating a unified global liquidity pool for derivative instruments. The next generation of research will address the limits of asynchronous communication between these layers, aiming to reduce the risk of state inconsistency.

- Shared Sequencer Networks: Establishing decentralized infrastructures that order transactions across multiple rollups to minimize latency.

- Recursive Proof Aggregation: Implementing advanced cryptographic techniques to compress millions of transactions into a single, highly efficient proof.

- Automated Risk Mitigation: Developing protocol-level mechanisms that automatically adjust margin requirements based on network throughput capacity during periods of extreme volatility.
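The automated risk mitigation item can be sketched as a margin multiplier keyed to network utilization. The rule below is a hypothetical example, not an existing protocol's mechanism: margin stays at its base level until utilization crosses a threshold, then rises linearly with a chosen sensitivity up to a cap, on the reasoning that liquidations may settle late when the network is near capacity. The default parameter values are illustrative assumptions.

```python
def margin_requirement(base_margin: float, utilization: float,
                       threshold: float = 0.8, sensitivity: float = 2.0,
                       max_multiplier: float = 3.0) -> float:
    """Hypothetical throughput-aware initial margin, as a fraction of notional.

    utilization: observed demand divided by settlement capacity (may exceed 1).
    """
    if not 0.0 <= threshold < 1.0:
        raise ValueError("threshold must be in [0, 1)")
    if base_margin <= 0 or sensitivity < 0 or max_multiplier < 1.0:
        raise ValueError("invalid parameters")
    # No adjustment below the threshold; linear add-on above it, capped.
    excess = max(0.0, utilization - threshold)
    multiplier = min(1.0 + sensitivity * excess, max_multiplier)
    return base_margin * multiplier
```

With the default parameters, a 10% base margin stays at 10% at 50% utilization, rises to about 13% at 95% utilization, and is held at 30% by the cap under extreme overload.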

The ultimate goal remains the creation of a financial system that is not limited by the computational capacity of its individual nodes. The ability to execute complex derivative strategies with the same speed as centralized counterparts, while retaining the transparency and self-custody of decentralized systems, is the benchmark for success. This research path is the only viable route toward achieving the scale required for a truly global, permissionless financial market. What paradox emerges when the pursuit of absolute scalability compromises the auditability of the underlying ledger state?