Essence

Risk Assessment Modeling functions as the structural bedrock for decentralized derivatives, translating opaque market volatility into quantifiable probability distributions. This discipline replaces intuition with rigorous mathematical frameworks to determine the solvency of margin engines and the integrity of clearing mechanisms. Participants rely on these models to ascertain the likelihood of adverse price movements exceeding collateral buffers, ensuring that systemic shocks remain contained within defined liquidity pools.

Risk Assessment Modeling quantifies market uncertainty to secure collateralized derivative protocols against insolvency.

The primary utility lies in its ability to synthesize heterogeneous data, ranging from on-chain liquidity depth to cross-exchange order flow, into actionable sensitivity metrics. By mapping the interplay between asset correlation, liquidation latency, and validator consensus speed, these models establish the boundaries of safe leverage. Protocols lacking sophisticated, real-time assessment capabilities frequently succumb to recursive liquidation cascades, where automated deleveraging forces price deviations that trigger further liquidations, ultimately draining the underlying treasury.
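The recursive cascade described above can be illustrated with a toy model. The sketch below is a minimal illustration under stated assumptions, not any protocol's actual engine: the `Position` type, the `simulate_cascade` function, and the linear `impact_per_unit` price-impact rule are all hypothetical names and simplifications introduced here.

```python
from dataclasses import dataclass


@dataclass
class Position:
    size: float       # units of collateral sold if the position is liquidated
    liq_price: float  # the position is liquidated at or below this price


def simulate_cascade(price, positions, impact_per_unit):
    """Return (final_price, liquidated_count) once the cascade settles.

    Assumption: each forced sale moves the price down linearly by
    impact_per_unit * size. Real price impact is rarely linear.
    """
    # Positions closest to the current price are hit first.
    remaining = sorted(positions, key=lambda p: p.liq_price, reverse=True)
    liquidated = 0
    while remaining and remaining[0].liq_price >= price:
        pos = remaining.pop(0)
        # Forced selling pushes the price lower, which may expose the
        # next position in the queue to liquidation.
        price = max(price - impact_per_unit * pos.size, 0.0)
        liquidated += 1
    return price, liquidated


book = [Position(2, 95), Position(2, 94), Position(2, 80)]
final_price, count = simulate_cascade(95.0, book, impact_per_unit=0.5)
# final_price is 93.0 and count is 2: the first sale triggers the second.
```

In this example the second position would have survived at a price of 95; it is liquidated only because the first forced sale moved the price to 94. That feedback is the property a static collateral ratio does not capture.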

Origin

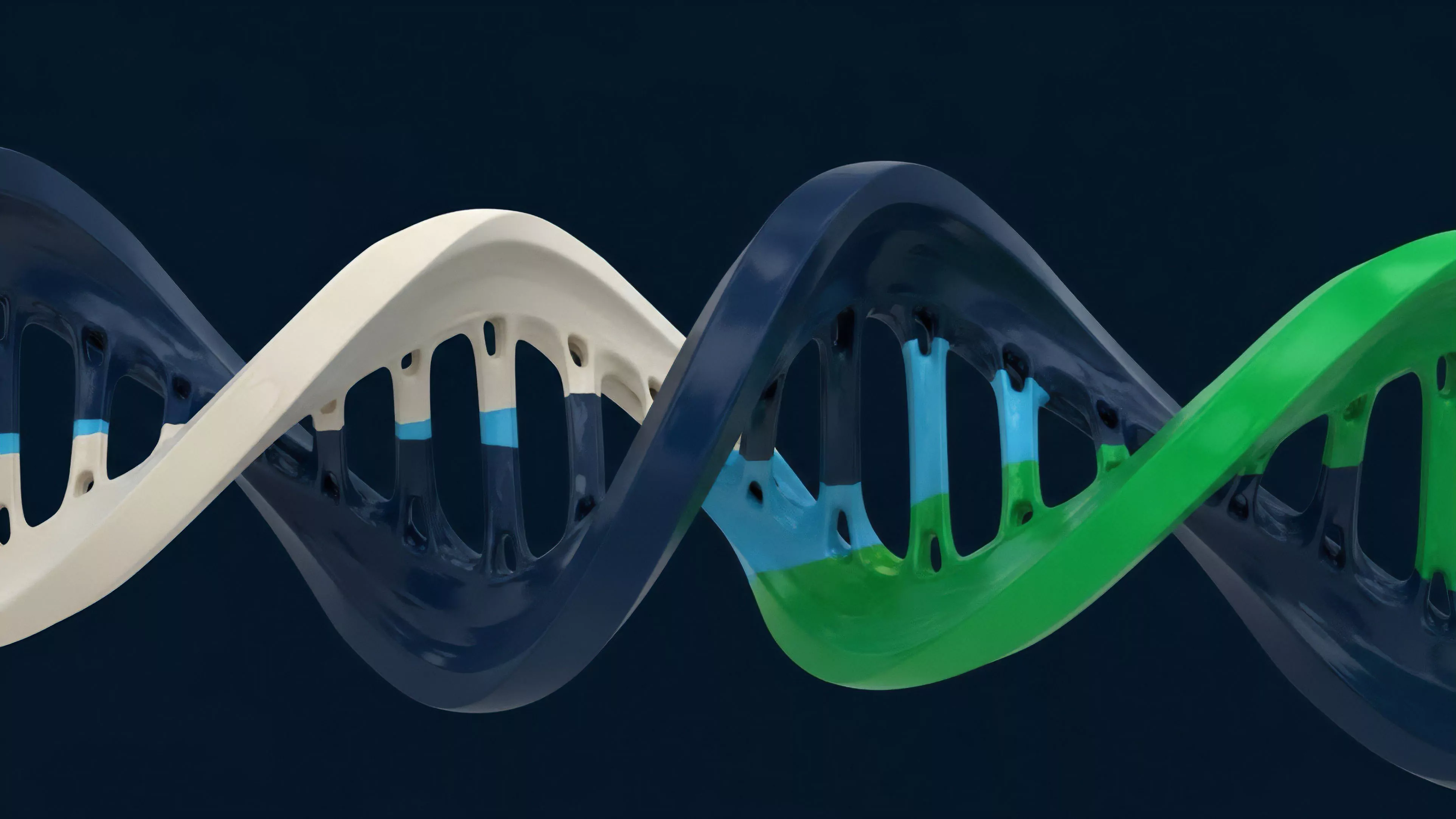

The lineage of Risk Assessment Modeling within decentralized finance traces back to the early implementation of over-collateralized lending and automated market maker designs.

Initial iterations borrowed heavily from traditional finance, applying Gaussian-based models to assets characterized by non-normal, fat-tailed distributions. These early frameworks often failed to account for the unique liquidity fragmentation and high-frequency volatility inherent to digital asset exchanges.

- Black-Scholes adaptations provided the initial, albeit limited, foundation for pricing crypto options by assuming continuous trading and log-normal returns.

- Value at Risk (VaR) frameworks were subsequently introduced to estimate potential portfolio losses over specific time horizons, though they struggled with the rapid contagion patterns of crypto markets.

- Liquidation Engine architectures evolved from basic threshold-based triggers to dynamic, volatility-adjusted models that account for slippage and gas-constrained execution times.
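To make the contrast between Gaussian assumptions and fat-tailed returns concrete, the sketch below compares a historical VaR estimate with a Gaussian one on a small, made-up return sample. The function names, the sample data, and the quantile convention are assumptions chosen for illustration; they are not drawn from any particular protocol or library.

```python
import math
from statistics import NormalDist, mean, stdev


def historical_var(returns, confidence=0.99):
    """Loss level taken from the empirical distribution of past returns."""
    losses = sorted(-r for r in returns)
    # Index of the empirical quantile, clamped to the worst observed loss.
    index = min(math.ceil(confidence * len(losses)) - 1, len(losses) - 1)
    return losses[index]


def gaussian_var(returns, confidence=0.99):
    """Loss level implied by fitting a normal distribution to the returns."""
    mu, sigma = mean(returns), stdev(returns)
    return -(mu + sigma * NormalDist().inv_cdf(1 - confidence))


# Hypothetical sample: mostly quiet periods plus a single 30% crash.
sample = [0.01] * 95 + [-0.02] * 4 + [-0.30]
hist = historical_var(sample, confidence=0.995)
gauss = gaussian_var(sample, confidence=0.995)
# On this sample the Gaussian estimate is far below the observed crash.
```

The result depends heavily on the sample and the confidence level, so this should be read as a demonstration of the failure mode rather than a general ranking of the two methods.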

These origins highlight a transition from static, legacy-inspired metrics toward dynamic, protocol-native approaches. The realization that blockchain-based assets operate within an adversarial environment necessitated a departure from models that assume stable, efficient markets. Developers shifted focus toward modeling the mechanics of liquidation queues and the resilience of oracle feeds, recognizing that the security of a derivative instrument is only as robust as the data providing its valuation.

Theory

The theoretical structure of Risk Assessment Modeling centers on the sensitivity of derivative contracts to underlying price fluctuations and exogenous shocks.

Analysts use the Greeks from quantitative finance to map how option values change relative to spot price (Delta), volatility (Vega), and the passage of time (Theta). In decentralized contexts, these sensitivities are complicated by the non-linear relationship between collateral value and protocol solvency.
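As a reference point, the three sensitivities named above have closed forms under the Black-Scholes model. The sketch below assumes a European call on an asset with no dividends or funding payments; the function name `call_greeks` and its parameter names are chosen here for illustration.

```python
import math
from statistics import NormalDist

_N = NormalDist()  # standard normal distribution


def call_greeks(spot, strike, rate, vol, years):
    """Delta, Vega and Theta (per year) of a European call, Black-Scholes."""
    sqrt_t = math.sqrt(years)
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol**2) * years) / (vol * sqrt_t)
    d2 = d1 - vol * sqrt_t
    delta = _N.cdf(d1)                  # sensitivity to spot price
    vega = spot * _N.pdf(d1) * sqrt_t   # sensitivity to volatility
    theta = (-spot * _N.pdf(d1) * vol / (2 * sqrt_t)
             - rate * strike * math.exp(-rate * years) * _N.cdf(d2))
    return {"delta": delta, "vega": vega, "theta": theta}


greeks = call_greeks(spot=100, strike=100, rate=0.0, vol=0.2, years=1.0)
```

These formulas inherit the continuous-trading and log-normal assumptions of the model, so in a protocol setting they are better treated as a baseline than as a solvency guarantee.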

Mathematical modeling of risk sensitivities enables protocols to dynamically adjust margin requirements based on real-time market stress.

The model must account for the following structural components:

| Component | Function |
|---|---|
| Liquidation Thresholds | Defines the point where collateral is insufficient to cover potential losses. |
| Volatility Skew | Captures the market pricing of tail risks and asymmetric probability distributions. |
| Oracle Latency | Models the delay between on-chain price updates and actual market execution. |
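Two of these components, the liquidation threshold and oracle latency, can be combined in a simple check. The sketch below is one possible formulation, not a standard: `latency_buffer` and `is_liquidatable` are hypothetical helpers, and the buffer uses square-root-of-time Gaussian scaling, which understates fat-tailed moves.

```python
import math
from statistics import NormalDist

SECONDS_PER_YEAR = 365 * 24 * 3600


def latency_buffer(annual_vol, latency_seconds, confidence=0.99):
    """Fractional price move that could occur unseen during the oracle delay.

    Assumption: returns scale with the square root of time and are normal.
    """
    z = NormalDist().inv_cdf(confidence)
    return z * annual_vol * math.sqrt(latency_seconds / SECONDS_PER_YEAR)


def is_liquidatable(collateral_value, debt_value, liq_threshold, buffer=0.0):
    """True when haircut collateral no longer covers the debt."""
    return collateral_value * (1 - buffer) * liq_threshold < debt_value


# A position that passes on the reported price can fail once the price
# is haircut by a (hypothetical) 2% staleness buffer.
on_reported_price = is_liquidatable(126, 100, liq_threshold=0.8)
with_buffer = is_liquidatable(126, 100, liq_threshold=0.8, buffer=0.02)
```

The design choice here is to fold latency into the collateral haircut rather than the threshold itself, which keeps the threshold a fixed protocol parameter while the buffer moves with market conditions.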

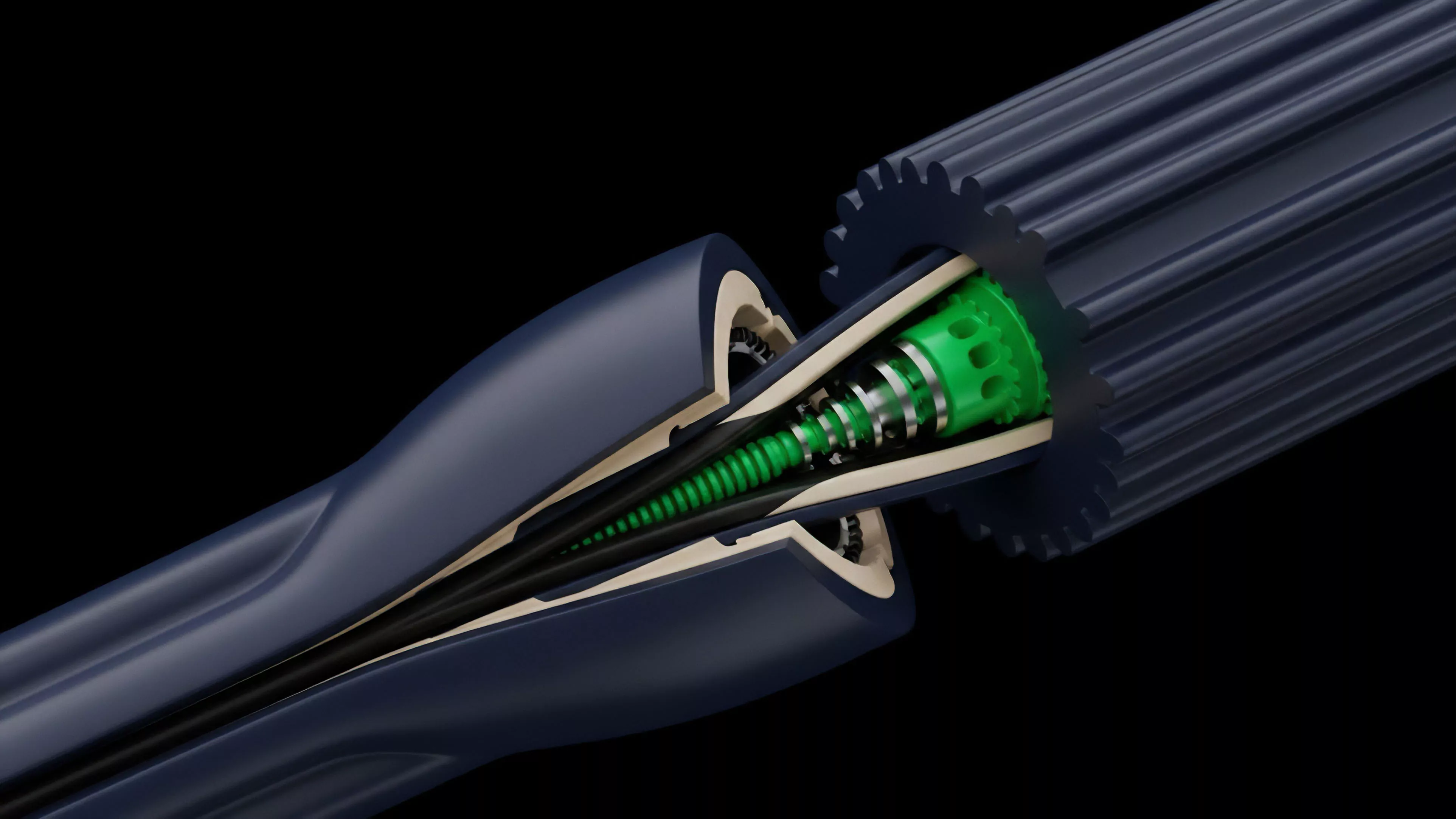

The mathematical rigor here involves stochastic calculus applied to discrete, high-frequency environments. One might observe that the behavior of an automated liquidation agent mirrors the physical constraints of a fluid dynamics system, where pressure (liquidation demand) builds until the conduit (the smart contract) reaches its throughput limit. This analogy serves to clarify why static models fail; they treat the market as a closed vessel rather than a high-pressure system subject to unpredictable ruptures.

When the underlying blockchain consensus experiences congestion, the model’s assumption of instantaneous settlement collapses, exposing the protocol to significant tail risk.
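The throughput constraint can be sketched as a simple queue. The model below is an illustrative assumption, not a description of any specific chain: `liquidation_backlog` is a hypothetical helper, and it treats capacity as a fixed number of liquidations per block.

```python
def liquidation_backlog(arrivals_per_block, capacity_per_block):
    """Return the number of pending liquidations after each block."""
    backlog, history = 0, []
    for arrivals in arrivals_per_block:
        # New demand joins the queue; at most `capacity_per_block`
        # liquidations are executed before the block closes.
        backlog = max(backlog + arrivals - capacity_per_block, 0)
        history.append(backlog)
    return history


demand = [2, 3, 10, 12, 4, 1]  # hypothetical liquidations triggered per block
normal = liquidation_backlog(demand, capacity_per_block=5)
congested = liquidation_backlog(demand, capacity_per_block=2)
# normal    -> [0, 0, 5, 12, 11, 7]
# congested -> [0, 1, 9, 19, 21, 20]
```

Every block a position spends in the backlog is a block during which its collateral can keep losing value, which is why a model that assumes instantaneous settlement understates tail risk under congestion.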

Approach

Modern practitioners deploy Risk Assessment Modeling through a multi-layered architecture that combines off-chain computation with on-chain enforcement. The primary approach involves running high-fidelity simulations, often Monte Carlo or agent-based models, to stress-test protocol health against extreme market scenarios. These simulations inform the parameters for margin maintenance and insurance fund allocation.

- Real-time Order Flow Analysis tracks the concentration of open interest and the proximity of large positions to liquidation zones.

- Stress Testing Frameworks simulate 30% to 50% price shocks to evaluate the exhaustion rate of liquidity pools and the effectiveness of socialized loss mechanisms.

- Adversarial Simulation models the behavior of malicious actors who might attempt to manipulate oracle feeds or exploit gas latency during periods of high volatility.
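A stress test of the kind listed above can be sketched as a small Monte Carlo loop. The example assumes price shocks drawn uniformly between 30% and 50% and a book of `(collateral, debt)` pairs; both the uniform distribution and the helper names `bad_debt` and `fund_exhaustion_rate` are assumptions made for illustration.

```python
import random


def bad_debt(positions, shock):
    """Debt left uncovered after collateral is repriced by a fractional shock."""
    total = 0.0
    for collateral, debt in positions:
        total += max(debt - collateral * (1 - shock), 0.0)
    return total


def fund_exhaustion_rate(positions, insurance_fund, trials=10_000, seed=0):
    """Share of simulated shocks whose bad debt exceeds the insurance fund."""
    rng = random.Random(seed)  # seeded so that runs are reproducible
    exhausted = 0
    for _ in range(trials):
        shock = rng.uniform(0.30, 0.50)  # assumed shock distribution
        if bad_debt(positions, shock) > insurance_fund:
            exhausted += 1
    return exhausted / trials


# Hypothetical book of (collateral value, debt value) pairs.
book = [(150, 100), (200, 100), (130, 100)]
rate = fund_exhaustion_rate(book, insurance_fund=30)
```

A production framework would replace the uniform draw with a calibrated or adversarial scenario generator and would account for liquidation slippage, but the structure (sample a shock, reprice collateral, compare the shortfall with the fund) stays the same.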

This approach demands constant reconciliation between the theoretical model and actual market microstructure, in particular the gap between the idealized pricing model and the slippage realized on-chain. If the model indicates a high probability of solvency, yet the order book depth is insufficient to absorb a liquidation, the protocol remains functionally insolvent.

This is where the pricing model becomes truly elegant, and dangerous if ignored.
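The gap between mark-price solvency and realized solvency can be shown by walking an order book. The sketch below assumes a static snapshot of bids given as `(price, size)` pairs; `liquidation_proceeds` is a hypothetical helper, and the numbers are invented.

```python
def liquidation_proceeds(bids, quantity):
    """Sell `quantity` into the bids; return (proceeds, unfilled_quantity)."""
    proceeds, remaining = 0.0, quantity
    # Best (highest) bids are consumed first.
    for price, size in sorted(bids, key=lambda level: level[0], reverse=True):
        if remaining <= 0:
            break
        fill = min(size, remaining)
        proceeds += fill * price
        remaining -= fill
    return proceeds, remaining


bids = [(100, 5), (98, 5), (90, 10)]  # hypothetical order book snapshot
debt = 1180
mark_value = 12 * 100                  # 1200: the model says the debt is covered
realized, unfilled = liquidation_proceeds(bids, 12)
# realized is 1170.0 with nothing unfilled: below the 1180 owed.
```

Valued at the best bid, the collateral covers the debt; sold into the actual depth, it falls short by 10. The position is solvent in the model and insolvent in execution.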

Evolution

The trajectory of Risk Assessment Modeling has moved from simple collateral ratios to complex cross-margin and portfolio-based risk engines. Early systems utilized a siloed approach, assessing each position in isolation. Current iterations prioritize systemic interconnectedness, acknowledging that a collapse in one protocol can rapidly propagate through others via shared collateral assets or common liquidity providers.
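The difference between the siloed and portfolio-based approaches can be sketched as two margin functions. This is a simplified illustration: the flat `margin_rate`, the scenario grid, and the function names are assumptions, and real portfolio engines use far richer scenario sets.

```python
def isolated_margin(positions, margin_rate):
    """Margin charged per position, ignoring offsets between positions."""
    return sum(abs(notional) * margin_rate for _, notional in positions)


def portfolio_margin(positions, scenarios):
    """Worst-case loss of the whole book across joint shock scenarios."""
    worst = 0.0
    for shocks in scenarios:
        # Profit and loss of the full book if every asset moves as specified.
        pnl = sum(notional * shocks[asset] for asset, notional in positions)
        worst = max(worst, -pnl)
    return worst


# Hypothetical book: a long and a mostly offsetting short in ETH, plus long BTC.
book = [("ETH", 1000), ("ETH", -800), ("BTC", 500)]
scenarios = [
    {"ETH": -0.1, "BTC": -0.1},
    {"ETH": 0.1, "BTC": -0.1},
    {"ETH": -0.1, "BTC": 0.1},
    {"ETH": 0.1, "BTC": 0.1},
]
siloed = isolated_margin(book, margin_rate=0.1)   # about 230
netted = portfolio_margin(book, scenarios)        # about 70
```

The portfolio figure is lower because the offsetting ETH legs are netted, but it is only as good as the scenario set: correlations that break under stress are exactly the contagion channel the surrounding text describes.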

Dynamic risk management evolves by integrating cross-protocol data to anticipate systemic contagion before it manifests.

This shift is driven by the maturation of tokenomics and value-accrual design, in which governance models now explicitly tie protocol stability to token incentives. The evolution is visible in the transition toward decentralized insurance and autonomous hedging strategies that respond to volatility spikes. We now see the emergence of modular risk layers that allow different protocols to share a unified assessment framework, reducing the redundancy and fragmentation that previously hindered market efficiency.

The challenge lies in maintaining this modularity without introducing new, hidden points of failure within the interlinked chain of smart contracts.

Horizon

Future developments in Risk Assessment Modeling will likely focus on predictive, machine-learning-based frameworks that adapt to changing market regimes without human intervention. Integrating consensus-layer behavior (protocol physics) into these models will allow for finer control over settlement speed, potentially utilizing layer-two sequencing to preemptively manage liquidation risk before it impacts the base layer.

| Future Metric | Expected Impact |
|---|---|
| Consensus-Aware Risk | Adjusts margin requirements based on current network congestion levels. |
| Predictive Liquidity | Forecasts liquidity depth across multiple chains to optimize execution. |
| Autonomous Hedging | Deploys protocol-owned liquidity to dampen volatility and prevent cascades. |

The ultimate goal is a self-healing financial system where risk parameters are not fixed by governance votes but are continuously optimized by the underlying protocol logic. This evolution necessitates a deep integration of behavioral game theory to ensure that incentive structures align with the goal of systemic resilience. The next generation of models will treat volatility not as an external variable to be managed, but as a core protocol parameter to be internalized and hedged within the automated architecture.