Essence

Regulatory Data Standards function as the codified linguistic and structural bridge between decentralized cryptographic primitives and traditional financial oversight mechanisms. They establish common syntax, data schemas, and reporting protocols for derivative instruments, ensuring that on-chain activity remains intelligible to off-chain compliance engines. These standards transform opaque, permissionless transaction logs into structured, actionable intelligence required for institutional participation and systemic risk monitoring.

Regulatory Data Standards provide the essential linguistic framework that translates decentralized derivative activity into transparent, compliant financial intelligence.

At the architectural level, these standards define the precise format for trade lifecycle events, including issuance, clearing, settlement, and margin management. By enforcing uniformity in data fields such as Counterparty Identifier, Asset Classification, and Notional Value, they enable cross-protocol interoperability. This alignment reduces the information asymmetry that historically plagued the integration of digital asset markets with global regulatory frameworks.
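As a minimal sketch of what such a uniform record could look like, the Python below models one lifecycle event with the three fields named above. The class names, field names, and sample identifier are illustrative assumptions, not taken from any published standard.

```python
from dataclasses import dataclass, asdict
from decimal import Decimal
from enum import Enum


class LifecycleEvent(Enum):
    """Trade lifecycle stages named in the text."""
    ISSUANCE = "issuance"
    CLEARING = "clearing"
    SETTLEMENT = "settlement"
    MARGIN_MANAGEMENT = "margin_management"


@dataclass(frozen=True)
class TradeEventRecord:
    # All field names are hypothetical; a real standard would define its own.
    event: LifecycleEvent
    counterparty_id: str        # e.g. a 20-character LEI-style identifier
    asset_classification: str   # e.g. "perpetual_swap" or "option"
    notional_value: Decimal     # Decimal avoids float rounding in reports
    notional_currency: str

    def to_report(self) -> dict:
        """Flatten the record into plain strings for a reporting payload."""
        row = asdict(self)
        row["event"] = self.event.value
        row["notional_value"] = str(self.notional_value)
        return row


record = TradeEventRecord(
    event=LifecycleEvent.SETTLEMENT,
    counterparty_id="HYPOTHETICALLEI00001",  # placeholder, not a real LEI
    asset_classification="perpetual_swap",
    notional_value=Decimal("250000.00"),
    notional_currency="USD",
)
report = record.to_report()
```

Because every venue would emit the same field names and types, a downstream consumer can read `report` without knowing which protocol produced it.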

Origin

The genesis of these standards resides in the collision between the rapid proliferation of decentralized finance protocols and the established requirements of Global Derivatives Regulation. Early decentralized markets operated with bespoke data structures, creating fragmented liquidity and severe challenges for risk assessment. As institutional capital sought entry, the lack of standardized reporting became the primary barrier to regulatory approval and broader market adoption.

- Systemic Fragility: Early decentralized derivative venues lacked standardized data, hindering effective risk oversight during market volatility.

- Institutional Mandates: Regulators demanded parity between decentralized and traditional markets, necessitating the adoption of existing standards like the Legal Entity Identifier.

- Protocol Proliferation: The rapid growth of diverse automated market makers required a unified schema to enable accurate reporting across heterogeneous blockchain environments.

Initial attempts at standardization focused on mapping the ISO 20022 financial messaging standard onto smart contract events. This effort sought to harmonize the high-velocity, permissionless nature of crypto-derivatives with the rigid, batch-processed requirements of traditional finance. The resulting shift prioritized technical compatibility, ensuring that cryptographic proofs could serve as verifiable audit trails for global regulators.

Theory

The theoretical underpinnings of Regulatory Data Standards rely on the convergence of Distributed Ledger Technology and quantitative financial modeling. These standards ensure that every derivative trade produces a verifiable, immutable record that captures the parameters needed to compute risk sensitivities such as Delta, Gamma, and Vega. By embedding these requirements directly into the protocol’s smart contract logic, systems move from reactive reporting to proactive, algorithmic compliance.
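To illustrate why standardized trade parameters matter for sensitivity analysis, the sketch below computes Delta, Gamma, and Vega for a European call. It assumes the Black-Scholes model with no dividends, which the text does not prescribe; the function and parameter names are hypothetical.

```python
import math


def _norm_pdf(x: float) -> float:
    """Standard normal density."""
    return math.exp(-0.5 * x * x) / math.sqrt(2.0 * math.pi)


def _norm_cdf(x: float) -> float:
    """Standard normal cumulative distribution via the error function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def call_greeks(spot: float, strike: float, rate: float,
                vol: float, years: float) -> dict:
    """Black-Scholes Greeks for a European call (assumed model, no dividends)."""
    sqrt_t = math.sqrt(years)
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol ** 2) * years) / (vol * sqrt_t)
    return {
        "delta": _norm_cdf(d1),                       # sensitivity to spot
        "gamma": _norm_pdf(d1) / (spot * vol * sqrt_t),  # sensitivity of delta to spot
        "vega": spot * _norm_pdf(d1) * sqrt_t,        # sensitivity to volatility
    }


# Inputs that a standardized trade record would need to expose.
greeks = call_greeks(spot=100.0, strike=100.0, rate=0.0, vol=0.2, years=1.0)
```

Every input here (spot, strike, rate, volatility, time to expiry) has to be recoverable from the reported record, which is the practical reason a schema must capture them consistently.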

Standardization of derivative data enables precise risk sensitivity analysis, transforming decentralized protocols into predictable, transparent financial instruments.

Effective implementation relies on the following structural parameters:

| Standard Component | Functional Objective |
| --- | --- |
| Transaction Schema | Uniform data entry for trade execution |
| Identity Framework | Verified participant classification |
| Reporting API | Real-time data synchronization with regulators |

This approach assumes that adversarial participants will exploit any lack of clarity in reporting requirements to engage in Regulatory Arbitrage. Consequently, the standards are designed to be protocol-agnostic, preventing venue-specific logic from obfuscating the underlying risk exposure. By mandating transparency at the point of trade, these standards align the incentives of market participants with the requirements of stable, systemic financial operation.
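The protocol-agnostic idea can be sketched as a normalization step: two venues report the same economic exposure in different shapes, and adapters map both into one record so net exposure cannot hide behind venue-specific encoding. Both venue payload formats and all field names below are invented for illustration.

```python
from collections import defaultdict
from decimal import Decimal


def normalize_venue_a(event: dict) -> dict:
    """Hypothetical venue A reports size and price separately, plus a side string."""
    notional = Decimal(event["size"]) * Decimal(event["px"])
    sign = 1 if event["side"] == "long" else -1
    return {"counterparty_id": event["trader"],
            "signed_notional_usd": sign * notional}


def normalize_venue_b(event: dict) -> dict:
    """Hypothetical venue B reports USD notional directly, with a +1/-1 direction."""
    return {"counterparty_id": event["account"],
            "signed_notional_usd": event["direction"] * Decimal(event["notionalUsd"])}


def net_exposure(records: list) -> dict:
    """Sum signed notional per counterparty across all venues."""
    totals = defaultdict(Decimal)
    for rec in records:
        totals[rec["counterparty_id"]] += rec["signed_notional_usd"]
    return dict(totals)


records = [
    normalize_venue_a({"trader": "LEI-X", "size": "2", "px": "30000", "side": "long"}),
    normalize_venue_b({"account": "LEI-X", "notionalUsd": "45000.5", "direction": -1}),
]
exposure = net_exposure(records)
```

Once both trades share one schema, the long position on one venue and the short on the other net out under a single counterparty identifier.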

Approach

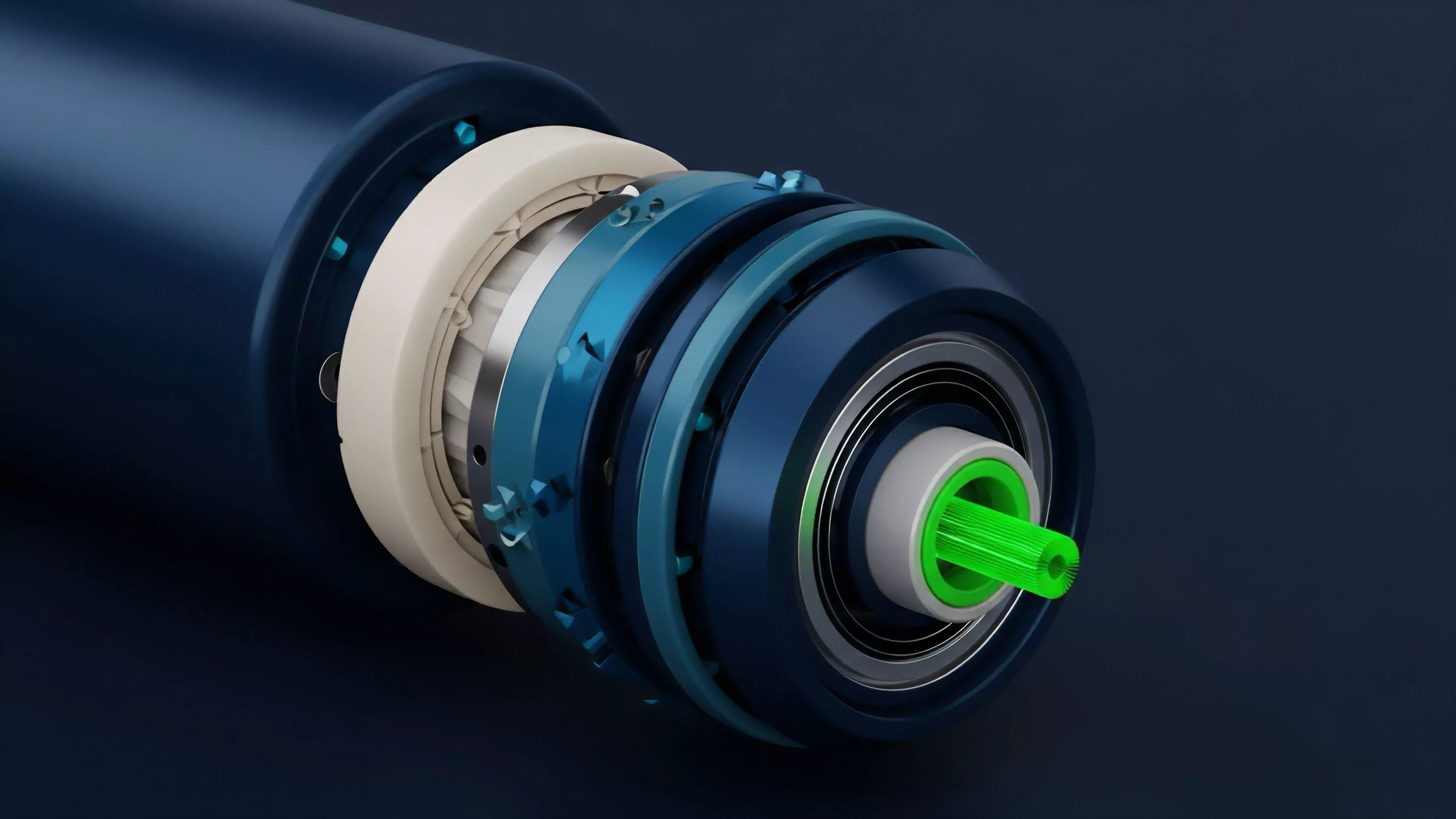

Modern implementation strategies prioritize On-Chain Compliance by integrating reporting logic directly into the protocol’s settlement engine. This approach treats regulatory data as a first-class citizen within the Protocol Physics, ensuring that trades failing to meet specified schema requirements are rejected at the execution layer. This design shifts the burden of proof from post-trade reconciliation to real-time, automated verification.
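The reject-at-execution behavior can be illustrated with an off-chain Python sketch of the check a settlement engine might run before accepting a trade. This is a simulation under assumed field names and rules, not smart contract code from any particular protocol.

```python
# Assumed schema: field name -> required Python type (hypothetical).
REQUIRED_FIELDS = {
    "counterparty_id": str,
    "asset_classification": str,
    "notional_value": int,   # integer minor units, as contracts often use
}


class SchemaViolation(Exception):
    """Raised when a trade does not satisfy the reporting schema."""


def validate(trade: dict) -> None:
    for name, expected_type in REQUIRED_FIELDS.items():
        if name not in trade:
            raise SchemaViolation(f"missing field: {name}")
        if not isinstance(trade[name], expected_type):
            raise SchemaViolation(f"wrong type for field: {name}")
    if trade["notional_value"] <= 0:
        raise SchemaViolation("notional_value must be positive")


def execute_trade(trade: dict, ledger: list) -> int:
    """Validate first; only a compliant trade is appended to the ledger."""
    validate(trade)
    ledger.append(trade)
    return len(ledger) - 1   # index of the settled trade


ledger = []
settled_index = execute_trade(
    {"counterparty_id": "CP-1", "asset_classification": "option",
     "notional_value": 500_000},
    ledger,
)
```

A trade missing any required field raises `SchemaViolation` before the ledger is touched, so non-compliant data never needs to be reconciled after the fact.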

Automated on-chain compliance integrates reporting requirements into the protocol logic, ensuring immediate transparency for every derivative transaction.

The current methodology involves three distinct layers of data management:

- Protocol-Level Encoding: Embedding standard data fields into the smart contract state machine to ensure data integrity from inception.

- Middleware Aggregation: Utilizing decentralized oracles or indexers to structure raw on-chain events into regulatory-compliant formats without compromising decentralization.

- Institutional Interface: Establishing secure, permissioned gateways that provide regulators with read-only access to the aggregated, standardized data stream.
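The three layers above can be sketched as a small pipeline: raw events stand in for protocol-level output, an aggregation function plays the middleware role, and a read-only wrapper approximates the institutional interface. The event shape and function names are assumptions made for this example; a real deployment would use an indexer and access controls rather than in-memory objects.

```python
from collections import defaultdict
from types import MappingProxyType

# Layer 1 (protocol-level encoding): events already carry standard fields.
raw_events = [
    {"block": 100, "counterparty_id": "CP-1", "asset_classification": "option", "notional_value": 500},
    {"block": 101, "counterparty_id": "CP-1", "asset_classification": "option", "notional_value": 250},
    {"block": 101, "counterparty_id": "CP-2", "asset_classification": "perpetual_swap", "notional_value": 900},
]


def aggregate(events: list) -> list:
    """Layer 2 (middleware): group raw events into per-counterparty report rows."""
    totals = defaultdict(int)
    for event in events:
        key = (event["counterparty_id"], event["asset_classification"])
        totals[key] += event["notional_value"]
    return [
        {"counterparty_id": cp, "asset_classification": ac, "total_notional": total}
        for (cp, ac), total in sorted(totals.items())
    ]


def regulator_view(rows: list) -> tuple:
    """Layer 3 (institutional interface): expose rows as read-only mappings."""
    return tuple(MappingProxyType(row) for row in rows)


view = regulator_view(aggregate(raw_events))
```

Attempting `view[0]["total_notional"] = 0` raises `TypeError`, which mirrors the read-only access described for the institutional gateway.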

This architecture addresses the inherent tension between privacy and compliance by employing zero-knowledge proofs. These allow participants to demonstrate adherence to Regulatory Data Standards (such as confirming identity or collateral sufficiency) without revealing sensitive, proprietary trading strategies or personal information. The system architecture becomes a resilient mechanism for proving validity rather than merely recording history.

Evolution

The trajectory of these standards reflects a transition from voluntary, fragmented adoption toward mandatory, protocol-integrated frameworks. Early iterations were limited to simple data tagging, which proved insufficient for complex derivative products like Perpetual Swaps or Options. As the market matured, the focus shifted to the rigorous definition of Data Interoperability, ensuring that different chains could communicate risk metrics effectively.

The technical landscape shifted from simple log analysis to complex, cross-chain state proofs.

The current phase is defined by the hardening of Systemic Risk monitoring capabilities. Regulators now require granular data on leverage ratios, collateral quality, and concentration risk, which has forced protocols to upgrade their internal accounting engines. This shift demonstrates a broader trend: integrating decentralized systems into the global financial fabric is not elective, but a requirement for withstanding the scrutiny that accompanies Macro-Crypto Correlation cycles.
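Two of the metrics named above can be computed directly from standardized position data. The sketch below uses assumed definitions, since the text does not fix them: leverage as notional divided by collateral, and concentration as both the largest counterparty's share and a Herfindahl-style sum of squared shares.

```python
def leverage_ratio(notional: float, collateral: float) -> float:
    """Assumed definition: total notional exposure per unit of collateral."""
    if collateral <= 0:
        raise ValueError("collateral must be positive")
    return notional / collateral


def concentration(positions: dict) -> dict:
    """Share-based concentration measures over absolute notional per counterparty."""
    total = sum(abs(v) for v in positions.values())
    if total == 0:
        raise ValueError("no open notional")
    shares = [abs(v) / total for v in positions.values()]
    return {
        "top_share": max(shares),               # largest single counterparty
        "hhi": sum(s * s for s in shares),      # sum of squared shares
    }


# Hypothetical open notional by counterparty.
positions = {"CP-1": 600.0, "CP-2": 300.0, "CP-3": 100.0}
metrics = concentration(positions)
lev = leverage_ratio(notional=1000.0, collateral=200.0)
```

Both calculations depend on every protocol reporting notional and collateral in the same units, which is the accounting upgrade the paragraph describes.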

Horizon

Future development will focus on the creation of autonomous, regulatory-aware protocols capable of adjusting their own risk parameters based on standardized data feeds. These systems will likely utilize Machine Learning to detect anomalies in order flow that signal potential systemic contagion, triggering automated circuit breakers that satisfy both protocol safety and regulatory mandates. The ultimate objective is a self-regulating, transparent global derivatives market where data standards are not just requirements, but foundational features of the protocol design itself.
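The anomaly-triggered circuit breaker can be illustrated with a deliberately simple stand-in: a z-score test on order flow rather than a Machine Learning model. The class name, warm-up length, and threshold are arbitrary assumptions chosen for the example.

```python
from statistics import mean, pstdev


def is_anomalous(history: list, latest: float, threshold: float = 3.0) -> bool:
    """Flag a value more than `threshold` standard deviations from the mean."""
    sigma = pstdev(history)
    if sigma == 0:
        return latest != history[0]
    return abs(latest - mean(history)) / sigma > threshold


class CircuitBreaker:
    """Halts once an anomalous order-flow observation arrives (illustrative)."""

    def __init__(self, warmup: int = 5, threshold: float = 3.0):
        self.warmup = warmup          # observations needed before testing
        self.threshold = threshold
        self.history = []
        self.halted = False

    def observe(self, order_flow: float) -> bool:
        if self.halted:
            return True
        if len(self.history) >= self.warmup and is_anomalous(
            self.history, order_flow, self.threshold
        ):
            self.halted = True        # anomalous value is not added to history
        else:
            self.history.append(order_flow)
        return self.halted


breaker = CircuitBreaker()
normal_flow = [100.0, 102.0, 98.0, 101.0, 99.0, 100.0]
states = [breaker.observe(x) for x in normal_flow]
tripped = breaker.observe(500.0)      # a sudden spike in order flow
```

A production system would replace `is_anomalous` with whatever detection model it trusts; the point of the sketch is only that the trigger consumes a standardized feed and flips a protocol-level halt flag.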

This evolution will likely lead to the complete disappearance of the distinction between on-chain and off-chain reporting. As cryptographic verification becomes the standard for all financial interactions, Regulatory Data Standards will dissolve into the underlying infrastructure, providing a frictionless, high-speed, and inherently compliant environment for the next generation of global capital markets.