Essence

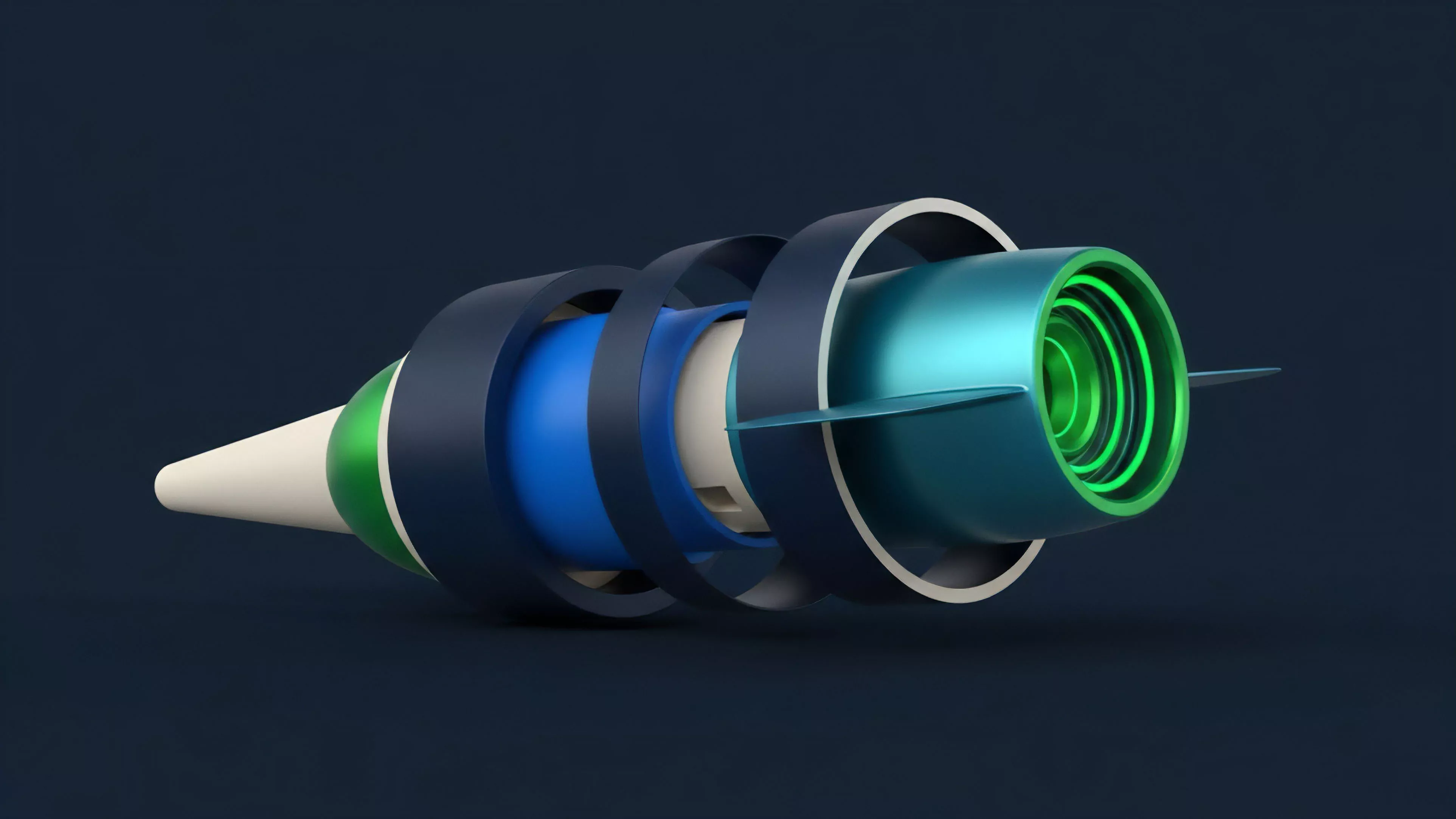

Real-Time Rate Feeds constitute the fundamental sensory apparatus for decentralized derivative markets. These mechanisms ingest raw, fragmented liquidity data from disparate trading venues and synthesize it into a singular, authoritative stream of pricing information. Without this continuous pulse, the automated engines governing margin, collateralization, and liquidation would operate in a state of blind uncertainty, rendering complex financial instruments impossible to sustain.

Real-Time Rate Feeds function as the connective tissue between volatile asset markets and the rigid execution requirements of smart contract protocols.

At the mechanical level, these feeds solve the problem of asynchronous price discovery. By aggregating order flow from multiple exchanges, they provide a reliable mark-to-market value that is resistant to localized manipulation or flash-crash artifacts. The integrity of a decentralized options platform relies entirely on the precision and latency of these data inputs.

When the feed fails, the entire risk management framework collapses, exposing participants to systemic contagion.

Origin

The necessity for Real-Time Rate Feeds arose from the limitations of early decentralized finance protocols that relied on single-source price data. These rudimentary systems were vulnerable to arbitrageurs who could manipulate thin order books on isolated exchanges to trigger cascading liquidations. The market learned through painful, repeated cycles of protocol insolvency that a single point of failure in data acquisition equates to a total loss of user capital.

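- Oracle Decentralization: Early attempts to mitigate risk relied on simple multi-signature schemes or centralized oracle providers, which still concentrated trust in a small set of signers and later gave way to decentralized oracle networks.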

- Volume-Weighted Averaging: Protocols began incorporating volume-weighted average pricing to dampen the impact of anomalous spikes.

- Adversarial Evolution: Market participants actively exploited latency gaps between on-chain settlement and off-chain spot prices, forcing developers to prioritize sub-second feed updates.
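To illustrate the volume-weighted averaging described above, the sketch below computes a cross-venue VWAP. The venue names, prices, and volumes are hypothetical. It shows that a spike printed on a thin order book carries little weight, because little volume traded there.

```python
from dataclasses import dataclass

@dataclass
class VenueQuote:
    venue: str     # hypothetical venue label
    price: float   # last traded price on that venue
    volume: float  # volume traded over the averaging window

def vwap(quotes: list[VenueQuote]) -> float:
    """Volume-weighted average price across venues: sum(p * v) / sum(v)."""
    total_volume = sum(q.volume for q in quotes)
    if total_volume <= 0:
        raise ValueError("no volume to weight by")
    return sum(q.price * q.volume for q in quotes) / total_volume

quotes = [
    VenueQuote("venue_a", 100.0, 500.0),
    VenueQuote("venue_b", 100.2, 300.0),
    VenueQuote("venue_c", 130.0, 2.0),  # thin book with a manipulated print
]
simple_mean = sum(q.price for q in quotes) / len(quotes)
print(f"VWAP={vwap(quotes):.2f}  simple mean={simple_mean:.2f}")
```

A simple mean of the same three prices is pulled toward the manipulated print (about 110.07). The VWAP stays close to the prices on the liquid venues (about 100.15).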

This transition marked a shift from trusting a single, centralized entity to relying on cryptographically verified, distributed networks of data nodes. The focus moved toward ensuring that the price reported on-chain accurately reflects the global equilibrium of supply and demand.

Theory

The architecture of a robust Real-Time Rate Feed rests on the mathematical principle of consensus under adversarial conditions. A decentralized network of nodes pulls data from multiple exchanges, and the system combines these reports into an estimate of the true market price, typically applying statistical filters to discard outliers that do not align with broader market behavior.
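The text does not name a specific outlier filter. One common choice, used here as an assumption, is to take the median of node reports and discard any report farther from it than `k` times the median absolute deviation (MAD). The cutoff `k` and the name `filtered_median` are illustrative.

```python
import statistics

def filtered_median(prices: list[float], k: float = 3.0) -> float:
    """Aggregate node reports, discarding outliers by a median/MAD rule.

    A report is kept if |p - median| <= k * MAD, where MAD is the median of
    absolute deviations from the median. k is an assumed tuning parameter.
    """
    if not prices:
        raise ValueError("no prices reported")
    med = statistics.median(prices)
    mad = statistics.median([abs(p - med) for p in prices])
    # With k >= 1, at least half of the reports always survive this test.
    inliers = [p for p in prices if abs(p - med) <= k * mad]
    return statistics.median(inliers)

reports = [100.1, 100.0, 99.9, 100.2, 250.0]  # one node reports a wild value
print(filtered_median(reports))
```

With these sample reports, the 250.0 submission is dropped and the result is the median of the four remaining values. The median is preferred over the mean because a minority of manipulated reports cannot drag it arbitrarily far.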

The accuracy of a derivative pricing model is constrained by the statistical quality and update frequency of its underlying data inputs.

Quantitative pricing models, such as the Black-Scholes framework as applied to digital assets, require precise inputs for their volatility calculations. When rate feeds exhibit high jitter or latency, the resulting Greeks (such as Delta, Gamma, and Vega) become unreliable. This forces market makers to widen their spreads to compensate for the risk of stale pricing, which ultimately degrades capital efficiency across the entire platform.
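A small numerical sketch makes the stale-pricing problem concrete. It computes the standard Black-Scholes delta, gamma, and vega for a European call with no dividends, then compares a fresh spot price against one that lags by 3%. The option parameters are hypothetical.

```python
import math

def norm_cdf(x: float) -> float:
    """Standard normal CDF via the error function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))

def norm_pdf(x: float) -> float:
    """Standard normal density."""
    return math.exp(-0.5 * x * x) / math.sqrt(2.0 * math.pi)

def call_greeks(spot: float, strike: float, vol: float, t: float, r: float = 0.0):
    """Black-Scholes delta, gamma and vega of a European call (no dividends).

    vol and r are annualized; t is time to expiry in years.
    Vega is per unit of volatility (multiply by 0.01 for per-vol-point).
    """
    sqrt_t = math.sqrt(t)
    d1 = (math.log(spot / strike) + (r + 0.5 * vol ** 2) * t) / (vol * sqrt_t)
    delta = norm_cdf(d1)
    gamma = norm_pdf(d1) / (spot * vol * sqrt_t)
    vega = spot * norm_pdf(d1) * sqrt_t
    return delta, gamma, vega

# Hypothetical weekly at-the-money call on a volatile asset.
strike, vol, t = 100.0, 0.8, 7 / 365
fresh = call_greeks(spot=100.0, strike=strike, vol=vol, t=t)
stale = call_greeks(spot=97.0, strike=strike, vol=vol, t=t)  # feed lagging by 3%
print(f"delta fresh={fresh[0]:.3f} stale={stale[0]:.3f}")
```

Near the money, delta is sensitive to spot, so a few percent of feed lag visibly changes the hedge ratio a market maker would compute. That is the risk they price into wider spreads.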

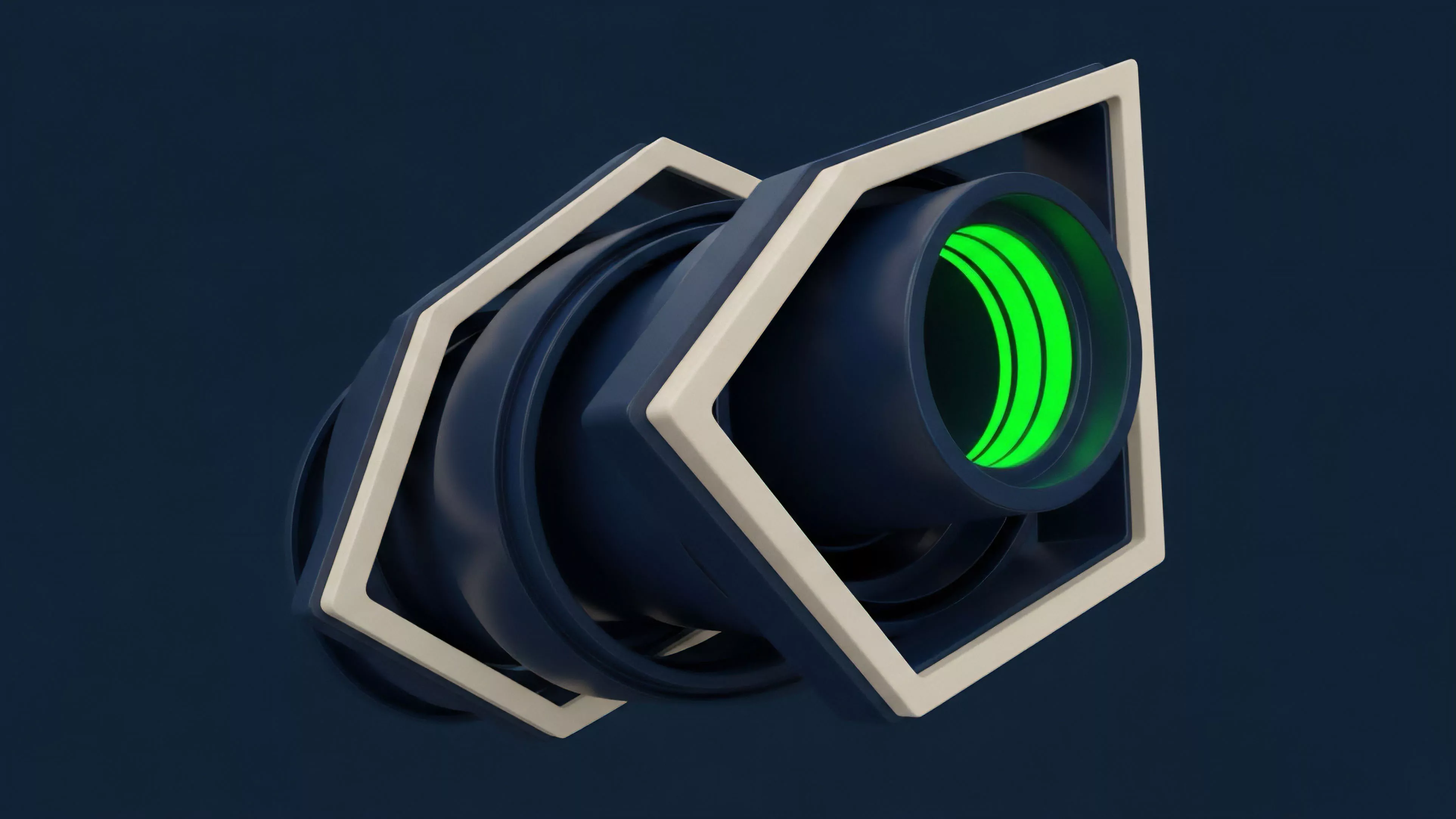

| Component | Functional Responsibility |
| --- | --- |
| Data Aggregator | Normalizes raw exchange order book snapshots. |
| Consensus Engine | Validates data points against peer node submissions. |
| Latency Filter | Discards stale information to maintain temporal integrity. |
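The three components in the table can be sketched as stages of a single node's reporting step. This is an illustrative reading, not a reference implementation. The sketch assumes the following, all hypothetical:

* normalization means reducing each snapshot to its mid price;
* "stale" means older than a fixed age;
* peer validation means staying within a relative tolerance of the peer median.

The names `BookSnapshot` and `node_report` and the thresholds are illustrative as well.

```python
import statistics
import time
from dataclasses import dataclass
from typing import Optional

@dataclass
class BookSnapshot:
    venue: str
    best_bid: float
    best_ask: float
    timestamp: float  # seconds since epoch at which the venue produced the snapshot

def normalize(snap: BookSnapshot) -> float:
    """Data Aggregator step: reduce a raw book snapshot to a mid price."""
    return (snap.best_bid + snap.best_ask) / 2.0

def latency_filter(snaps: list[BookSnapshot], now: float, max_age_s: float) -> list[BookSnapshot]:
    """Latency Filter step: drop snapshots older than max_age_s seconds."""
    return [s for s in snaps if now - s.timestamp <= max_age_s]

def agrees_with_peers(local: float, peer_prices: list[float], tolerance: float) -> bool:
    """Consensus Engine step: check the local value against the peer median."""
    peer_median = statistics.median(peer_prices)
    return abs(local - peer_median) / peer_median <= tolerance

def node_report(snaps, peer_prices, now=None, max_age_s=2.0, tolerance=0.005) -> Optional[float]:
    """Run the three stages; return None if nothing valid can be reported."""
    now = time.time() if now is None else now
    mids = [normalize(s) for s in latency_filter(snaps, now, max_age_s)]
    if not mids:
        return None
    local = statistics.median(mids)
    return local if agrees_with_peers(local, peer_prices, tolerance) else None

snaps = [
    BookSnapshot("venue_a", 99.9, 100.1, timestamp=999.5),
    BookSnapshot("venue_b", 100.0, 100.2, timestamp=999.8),
    BookSnapshot("venue_c", 90.0, 91.0, timestamp=990.0),  # 10 s old: filtered out
]
print(node_report(snaps, peer_prices=[100.02, 100.08, 100.04], now=1000.0))
```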

The interplay between these components is governed by game-theoretic incentives. Nodes are incentivized to provide accurate data to maintain their reputation and receive protocol rewards, while the system penalizes nodes whose submissions deviate significantly from the median consensus.
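The reward-and-penalty scheme can be reduced to a per-round settlement rule. The sketch below assumes a fixed reward for nodes within a relative band of the median and a proportional stake slash for nodes outside it. The band, reward, slash fraction, and function name are hypothetical; real protocols differ in how they score reputation and size penalties.

```python
import statistics

def settle_round(
    submissions: dict[str, float],
    stakes: dict[str, float],
    reward: float = 1.0,
    max_rel_dev: float = 0.01,
    slash_frac: float = 0.10,
) -> tuple[float, dict[str, float]]:
    """Score one reporting round against the median consensus.

    Nodes within max_rel_dev of the median earn a fixed reward; nodes beyond
    it lose slash_frac of their stake. All parameters are illustrative.
    """
    median = statistics.median(submissions.values())
    new_stakes = dict(stakes)
    for node, price in submissions.items():
        if abs(price - median) / median <= max_rel_dev:
            new_stakes[node] += reward
        else:
            new_stakes[node] -= slash_frac * stakes[node]
    return median, new_stakes

submissions = {"node_a": 100.0, "node_b": 100.3, "node_c": 100.1, "node_d": 112.0}
stakes = {node: 50.0 for node in submissions}
median, new_stakes = settle_round(submissions, stakes)
print(median, new_stakes)
```

Anchoring the penalty to the median rather than the mean means a single deviant node cannot shift the reference point it is judged against.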

Approach

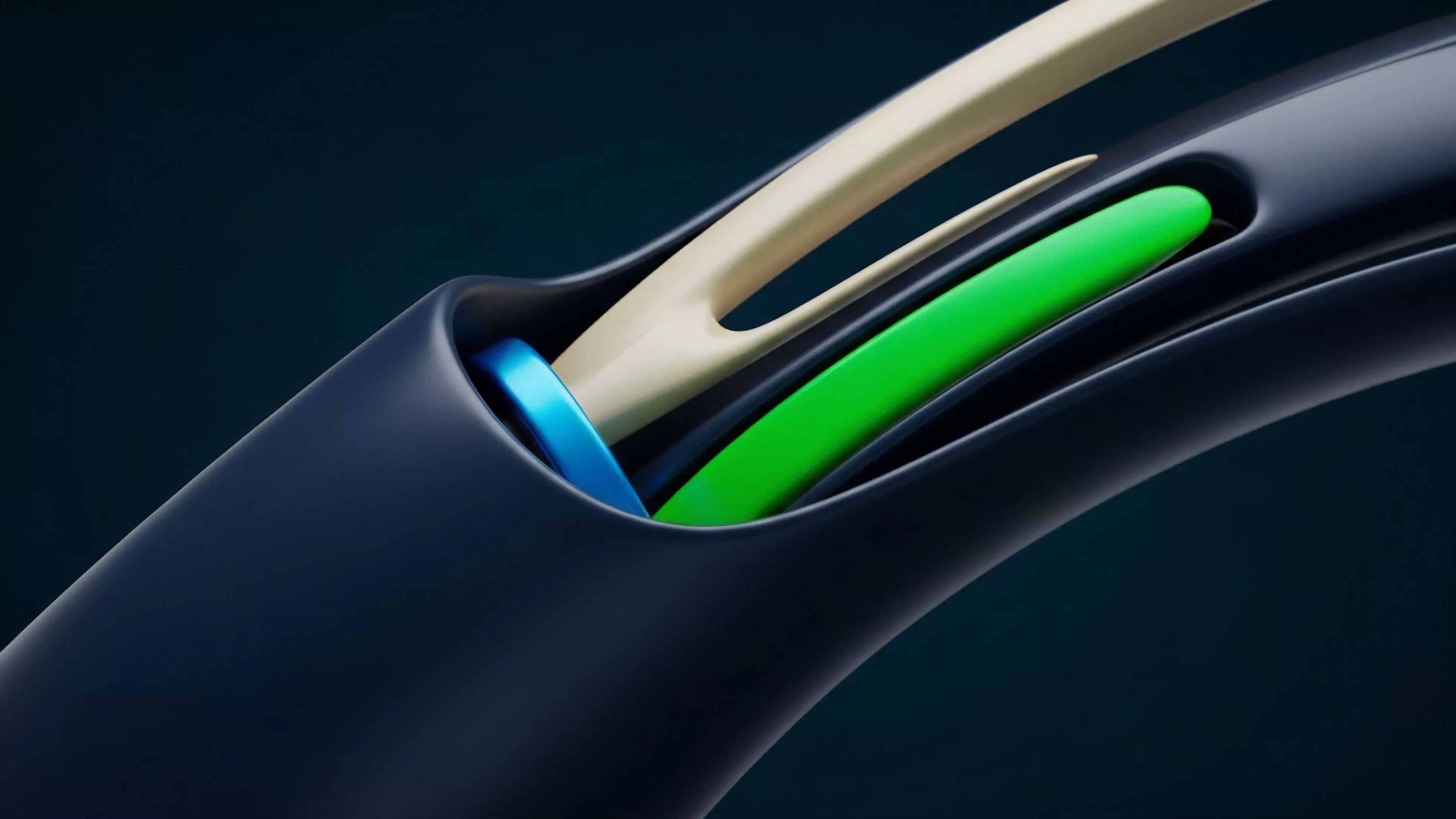

Current implementation strategies focus on minimizing the time delta between spot market execution and on-chain settlement. Modern protocols utilize high-performance, off-chain computation to process massive datasets, pushing only the final, aggregated price update to the blockchain.

This separation of compute and storage allows for the frequency of updates necessary to support high-leverage trading.

Effective risk management requires rate feeds capable of capturing tail-risk volatility during periods of extreme market stress.

Market makers and liquidators rely on these feeds to calculate maintenance margin requirements in real time. If the feed lags during a period of high volatility, the protocol may fail to trigger liquidations until the account is already insolvent, leading to bad debt that must be socialized among other participants. My professional concern remains the tendency of developers to prioritize throughput over the cryptographic security of the data source, a compromise that invites disaster.
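The lag-to-bad-debt failure can be reproduced with a minimal maintenance margin check. The sketch assumes the following, all hypothetical:

* a linear perpetual-style position;
* a flat 5% maintenance margin rate;
* a 5-second staleness limit;
* a policy of refusing to act on a stale feed.

The names `Position` and `should_liquidate` are illustrative as well.

```python
from dataclasses import dataclass

@dataclass
class Position:
    size: float         # units of the underlying; positive = long
    entry_price: float  # average entry price
    collateral: float   # collateral posted, in quote currency

def equity(pos: Position, mark: float) -> float:
    """Collateral plus unrealized PnL at the feed's mark price."""
    return pos.collateral + pos.size * (mark - pos.entry_price)

def maintenance_margin(pos: Position, mark: float, mm_rate: float) -> float:
    """Minimum equity required to keep the position open."""
    return abs(pos.size) * mark * mm_rate

def should_liquidate(pos: Position, mark: float, feed_age_s: float,
                     mm_rate: float = 0.05, max_feed_age_s: float = 5.0) -> bool:
    """Liquidate when equity falls below maintenance margin.

    Refuses to decide on a stale feed instead of silently using it.
    """
    if feed_age_s > max_feed_age_s:
        raise RuntimeError("rate feed is stale; pausing liquidation decisions")
    return equity(pos, mark) < maintenance_margin(pos, mark, mm_rate)

# Hypothetical 10x long: 10 units at 100 with 100 of collateral.
pos = Position(size=10.0, entry_price=100.0, collateral=100.0)
print(should_liquidate(pos, mark=97.0, feed_age_s=1.0))  # lagging feed still shows 97
print(equity(pos, 88.0))                                 # true price already at 88
```

At the lagged mark the position looks healthy, while at the true price its equity is already negative; that shortfall is the bad debt. The staleness guard only helps when the feed's age is known. A feed that keeps publishing lagged values passes the check, which is why update frequency during volatility matters as much as the guard itself.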

Evolution

The transition from static, batch-processed price updates to continuous streaming data represents the most significant shift in derivative protocol design.

Early systems published periodic snapshots, leaving significant windows of opportunity for predatory behavior. Today, the industry has shifted toward streaming models in which updates are triggered by significant price movements, effectively creating a self-adjusting temporal resolution.

- Static Snapshots: Infrequent updates that created exploitable gaps for traders.

- Continuous Streaming: High-frequency data transmission triggered by price deviation thresholds.

- Cross-Chain Aggregation: Synthesis of pricing data from disparate blockchain environments to prevent regional price dislocation.
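The deviation-triggered streaming model can be sketched as a push rule. The feed publishes when the off-chain price moves more than a threshold away from the last on-chain value. It also publishes on a fixed heartbeat, so that a quiet market does not leave the on-chain price unrefreshed indefinitely. This deviation-plus-heartbeat pattern is one common oracle design. The 0.5% threshold, the one-hour heartbeat, and the names `PushPolicy`, `should_push`, and `simulate` are illustrative assumptions.

```python
from dataclasses import dataclass

@dataclass
class PushPolicy:
    deviation_threshold: float = 0.005  # relative move that forces an update (0.5%)
    heartbeat_s: float = 3600.0         # maximum time between updates, in seconds

def should_push(policy: PushPolicy, last_price: float, last_at: float,
                price: float, now: float) -> bool:
    """Decide whether an off-chain price should be written on-chain."""
    if now - last_at >= policy.heartbeat_s:
        return True  # heartbeat: refresh even if the price is flat
    return abs(price - last_price) / last_price >= policy.deviation_threshold

def simulate(policy: PushPolicy, ticks: list[tuple[float, float]],
             start_price: float, start_at: float = 0.0) -> list[tuple[float, float]]:
    """Replay (time, price) ticks and return the ones that trigger an update."""
    last_price, last_at = start_price, start_at
    pushed = []
    for now, price in ticks:
        if should_push(policy, last_price, last_at, price, now):
            pushed.append((now, price))
            last_price, last_at = price, now
    return pushed

ticks = [(1.0, 100.1), (2.0, 100.3), (3.0, 100.6), (4.0, 100.7), (5.0, 99.9)]
print(simulate(PushPolicy(), ticks, start_price=100.0))
```

With the sample ticks, only the moves at t=3 and t=5 cross the threshold, so two updates reach the chain instead of five. Small jitter is absorbed off-chain, while any move large enough to matter for margin is published promptly.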

This evolution has been driven by the increasing sophistication of automated trading agents. These agents act as a constant stress test for the infrastructure, identifying and exploiting any inconsistency in the rate feed. Consequently, the design of these systems now reflects an adversarial mindset where security and responsiveness are treated as a unified requirement.

Horizon

The future of Real-Time Rate Feeds lies in the integration of zero-knowledge proofs to verify the authenticity of data directly from exchange APIs. This would allow protocols to ingest high-fidelity data without relying on an intermediary oracle network, significantly reducing trust assumptions. We are moving toward a world where the provenance of every price point is cryptographically provable, from the exchange matching engine to the final on-chain settlement. The challenge remains in scaling these cryptographic proofs without sacrificing the latency requirements of active derivative markets. As we refine our ability to handle these data volumes, the distinction between on-chain and off-chain liquidity will continue to blur, fostering a truly global, unified market for digital asset derivatives.