Foundational Nature

Real-Time Market Monitoring functions as the requisite nervous system for digital asset derivatives, providing the continuous data streams necessary to maintain protocol solvency and market efficiency. This process involves the high-frequency acquisition and processing of order book depth, trade execution velocity, and liquidation events across fragmented liquidity pools. In the adversarial environment of decentralized finance, where code execution is final and latency determines survival, the ability to observe and react to price dislocations in milliseconds is a structural requirement.

Continuous observation of market variables ensures that risk engines can adjust collateral requirements and liquidation thresholds before systemic failure occurs.

The primary objective of this surveillance is the maintenance of a transparent and accurate volatility surface. By tracking every bid and ask across multiple venues, Real-Time Market Monitoring allows participants to identify toxic order flow and adverse selection risks. This constant vigilance is mandatory for market makers who must hedge their Delta and Gamma exposures in a 24/7 trading environment where traditional market closes do not exist.

- Order Book Depth: Tracking the volume of limit orders at various price levels to assess immediate liquidity and potential slippage.

- Trade Velocity: Measuring the frequency and size of market orders to detect momentum shifts or institutional accumulation.

- Liquidation Feeds: Observing forced closures of leveraged positions, which often signal local price bottoms or cascading volatility.

- Funding Rates: Monitoring the periodic payments between long and short positions in perpetual swaps to gauge market bias.
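
As an illustration of the order book depth item above, the following minimal sketch estimates the slippage of a market buy by walking the ask side of a book. The function name `estimate_buy_slippage` and the sample book are assumptions made for this example and do not come from any particular exchange API.

```python
from typing import List, Tuple

Level = Tuple[float, float]  # (price, size) for one order book level


def estimate_buy_slippage(asks: List[Level], quantity: float) -> float:
    """Relative slippage of a market buy versus the best ask.

    `asks` must be sorted from lowest to highest price.
    """
    if quantity <= 0:
        raise ValueError("quantity must be positive")
    if not asks:
        raise ValueError("order book side is empty")

    best_ask = asks[0][0]
    remaining = quantity
    cost = 0.0
    for price, size in asks:
        fill = min(remaining, size)  # take what this level can provide
        cost += fill * price
        remaining -= fill
        if remaining <= 0:
            break
    if remaining > 0:
        raise ValueError("not enough visible depth to fill the order")

    average_price = cost / quantity
    return (average_price - best_ask) / best_ask


# Illustrative book: 1 unit at 100, 2 units at 101, 5 units at 102.
example_asks = [(100.0, 1.0), (101.0, 2.0), (102.0, 5.0)]
example_slippage = estimate_buy_slippage(example_asks, 3.0)
```

Buying 3 units against this book fills at an average price of roughly 100.67, about 0.67% above the best ask. A monitoring system would typically recompute such a figure on every book update.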

Historical Development

The transition from legacy batch processing to Real-Time Market Monitoring mirrors the broader shift from centralized, opaque financial systems to transparent, distributed ledgers. In traditional equity markets, price discovery was often confined to specific trading hours, with risk assessments performed during overnight settlement cycles. The birth of Bitcoin and the subsequent explosion of the crypto derivatives market necessitated a move toward a continuous, T+0 settlement model.

Early implementations of market surveillance in the digital asset space were limited by the throughput of public APIs and the high latency of early blockchain networks. As the volume of perpetual swaps and options grew, the need for more sophisticated data ingestion became apparent. The March 2020 liquidity crisis served as a significant turning point, revealing that static risk models were insufficient for managing the rapid de-leveraging events characteristic of crypto markets.

The move from periodic risk assessment to constant surveillance was driven by the inherent volatility and 24/7 nature of decentralized auctions.

This historical trajectory led to the creation of specialized data providers and decentralized indexing protocols. These systems were designed to handle the massive influx of on-chain events and off-chain order book updates, transforming raw data into actionable risk metrics. The focus shifted from simply knowing the current price to understanding the probability of future price movements based on real-time order flow and whale movements.

Theoretical Framework

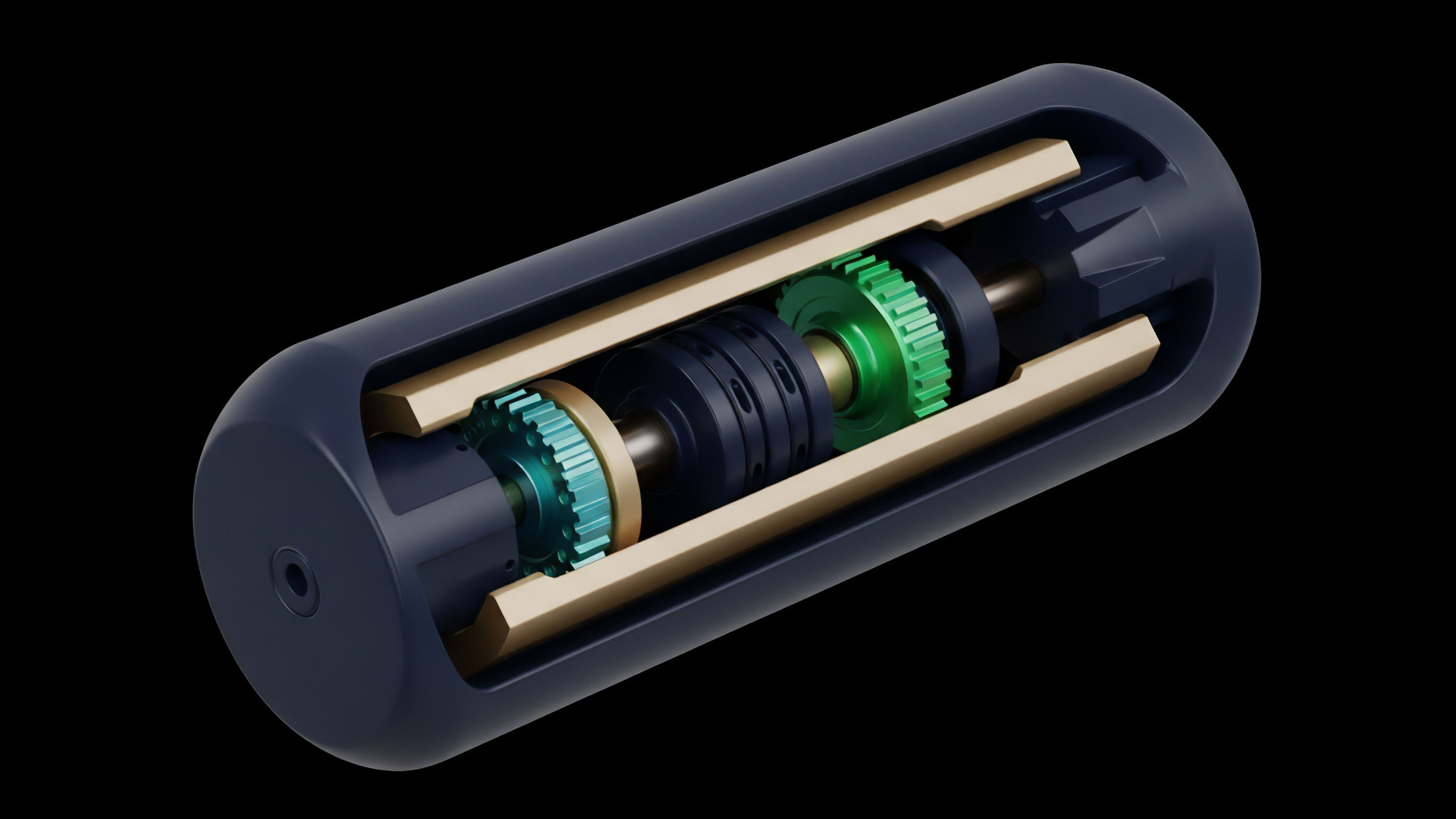

The mathematical architecture of Real-Time Market Monitoring is grounded in information theory and market microstructure analysis.

It relies on the continuous calculation of the Greeks (Delta, Gamma, Vega, and Theta) to quantify the sensitivity of option prices to underlying market changes. Unlike traditional markets where these values might be updated sporadically, the crypto environment demands a fluid model that accounts for the high-velocity shifts in implied volatility.

Microstructure and Order Flow Toxicity

A primary theoretical component is the Volume-Synchronized Probability of Informed Trading (VPIN). This metric allows risk engines to detect when informed traders are entering the market, which often precedes significant price moves. By monitoring the imbalance between buy and sell volume in real-time, Real-Time Market Monitoring identifies periods of high toxicity where liquidity providers are at risk of being picked off by more informed participants.
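
The volume-imbalance idea behind VPIN can be sketched as follows. This is a simplified version, not the full published method: it assumes every trade already carries an aggressor-side flag (as many crypto venues report), whereas the original formulation estimates buy and sell volume statistically. The names `vpin`, `bucket_volume` and `window` are chosen for this example.

```python
from collections import deque
from typing import Deque, Iterable, Optional, Tuple

Trade = Tuple[float, bool]  # (size, is_buy) where is_buy marks a taker buy


def vpin(trades: Iterable[Trade], bucket_volume: float, window: int) -> Optional[float]:
    """Average absolute buy/sell imbalance over the last `window` volume buckets.

    Trades are packed into buckets of equal traded volume; a large trade may
    span several buckets. Returns None until `window` buckets are complete.
    """
    imbalances: Deque[float] = deque(maxlen=window)
    buy = sell = filled = 0.0
    for size, is_buy in trades:
        remaining = size
        while remaining > 0:
            take = min(remaining, bucket_volume - filled)
            if is_buy:
                buy += take
            else:
                sell += take
            filled += take
            remaining -= take
            if bucket_volume - filled <= 1e-12:  # bucket is full
                imbalances.append(abs(buy - sell))
                buy = sell = filled = 0.0
    if len(imbalances) < window:
        return None
    return sum(imbalances) / (window * bucket_volume)


# Two buckets of 20 units: the first is balanced, the second is buy-heavy.
sample_trades = [(10.0, True), (10.0, False), (15.0, True), (5.0, False)]
sample_vpin = vpin(sample_trades, bucket_volume=20.0, window=2)
```

Values near 0 indicate balanced flow, while values near 1 indicate one-sided flow. The threshold at which a risk engine treats the reading as toxic is a calibration choice that this sketch does not address.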

| Metric | Description | Systemic Significance |
|---|---|---|
| Delta Sensitivity | Rate of change in option price relative to underlying asset price. | Determines the immediate hedging requirements for market makers. |
| Gamma Exposure | Rate of change in Delta relative to underlying asset price. | Signals potential for accelerated volatility during large price moves. |
| Vega Sensitivity | Rate of change in option price relative to implied volatility. | Measures the portfolio risk associated with shifts in market uncertainty. |
| Theta Decay | Rate of change in option price relative to time to expiration. | Informs the cost of maintaining long volatility positions. |

Mathematical models that incorporate real-time Greek sensitivities are a primary defense against the rapid de-leveraging cycles of crypto derivatives.
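
To make the four sensitivities concrete, here is a minimal sketch that computes them for a European call under the standard Black-Scholes-Merton assumptions (constant volatility, no dividends). The function names and the sample inputs are assumptions for illustration. A production engine would likely use a model suited to the specific contract.

```python
import math
from typing import Dict


def norm_pdf(x: float) -> float:
    """Standard normal density."""
    return math.exp(-0.5 * x * x) / math.sqrt(2.0 * math.pi)


def norm_cdf(x: float) -> float:
    """Standard normal cumulative distribution."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def call_price(spot: float, strike: float, rate: float, vol: float, t: float) -> float:
    """Black-Scholes-Merton price of a European call; t is in years."""
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol**2) * t) / (vol * math.sqrt(t))
    d2 = d1 - vol * math.sqrt(t)
    return spot * norm_cdf(d1) - strike * math.exp(-rate * t) * norm_cdf(d2)


def call_greeks(spot: float, strike: float, rate: float, vol: float, t: float) -> Dict[str, float]:
    """Delta, Gamma, Vega and Theta (per year) of a European call."""
    sqrt_t = math.sqrt(t)
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol**2) * t) / (vol * sqrt_t)
    d2 = d1 - vol * sqrt_t
    return {
        "delta": norm_cdf(d1),
        "gamma": norm_pdf(d1) / (spot * vol * sqrt_t),
        "vega": spot * norm_pdf(d1) * sqrt_t,
        "theta": -spot * norm_pdf(d1) * vol / (2.0 * sqrt_t)
        - rate * strike * math.exp(-rate * t) * norm_cdf(d2),
    }


# Illustrative at-the-money option: one year to expiry, 20% volatility.
greeks = call_greeks(spot=100.0, strike=100.0, rate=0.0, vol=0.2, t=1.0)
```

Because these are closed-form expressions, they can be recomputed on every price tick at little cost. The heavier work in practice tends to be aggregating them across a large portfolio.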

Volatility Surface Dynamics

The theory also encompasses the observation of the volatility smile and skew. In a healthy market, these curves are relatively stable, but in periods of stress, they can shift violently. Real-Time Market Monitoring tracks these shifts to identify mispriced options and arbitrage opportunities.

This requires re-evaluating pricing models such as Black-Scholes-Merton, and where no closed-form solution exists numerically solving the underlying partial differential equation, at sub-second intervals so that theoretical prices remain aligned with the market.
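
One way to keep a theoretical price aligned with the market is to invert the pricing model for implied volatility each time a quote changes. The sketch below does this by bisection for a European call under Black-Scholes-Merton assumptions. The search bounds, tolerance and sample inputs are arbitrary choices for illustration.

```python
import math


def bsm_call(spot: float, strike: float, rate: float, vol: float, t: float) -> float:
    """Black-Scholes-Merton price of a European call; t is in years."""
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol**2) * t) / (vol * math.sqrt(t))
    d2 = d1 - vol * math.sqrt(t)
    cdf = lambda x: 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))
    return spot * cdf(d1) - strike * math.exp(-rate * t) * cdf(d2)


def implied_vol(market_price: float, spot: float, strike: float, rate: float, t: float,
                lo: float = 1e-6, hi: float = 5.0, tol: float = 1e-8) -> float:
    """Volatility at which the model price matches `market_price`.

    Bisection works because the call price increases monotonically with
    volatility. The bounds `lo` and `hi` are assumed search limits.
    """
    if not bsm_call(spot, strike, rate, lo, t) <= market_price <= bsm_call(spot, strike, rate, hi, t):
        raise ValueError("market price is outside the range reachable by the model")
    while hi - lo > tol:
        mid = 0.5 * (lo + hi)
        if bsm_call(spot, strike, rate, mid, t) < market_price:
            lo = mid  # model too cheap: volatility must be higher
        else:
            hi = mid
    return 0.5 * (lo + hi)


# Round trip: price an option at 80% volatility, then recover that volatility.
quote = bsm_call(spot=30000.0, strike=32000.0, rate=0.0, vol=0.8, t=30 / 365)
recovered_vol = implied_vol(quote, spot=30000.0, strike=32000.0, rate=0.0, t=30 / 365)
```

Repeating this inversion across strikes and expiries yields the points of a volatility surface. Bisection is chosen here for robustness. Faster root finders exist but need more care near the bounds.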

Technical Execution

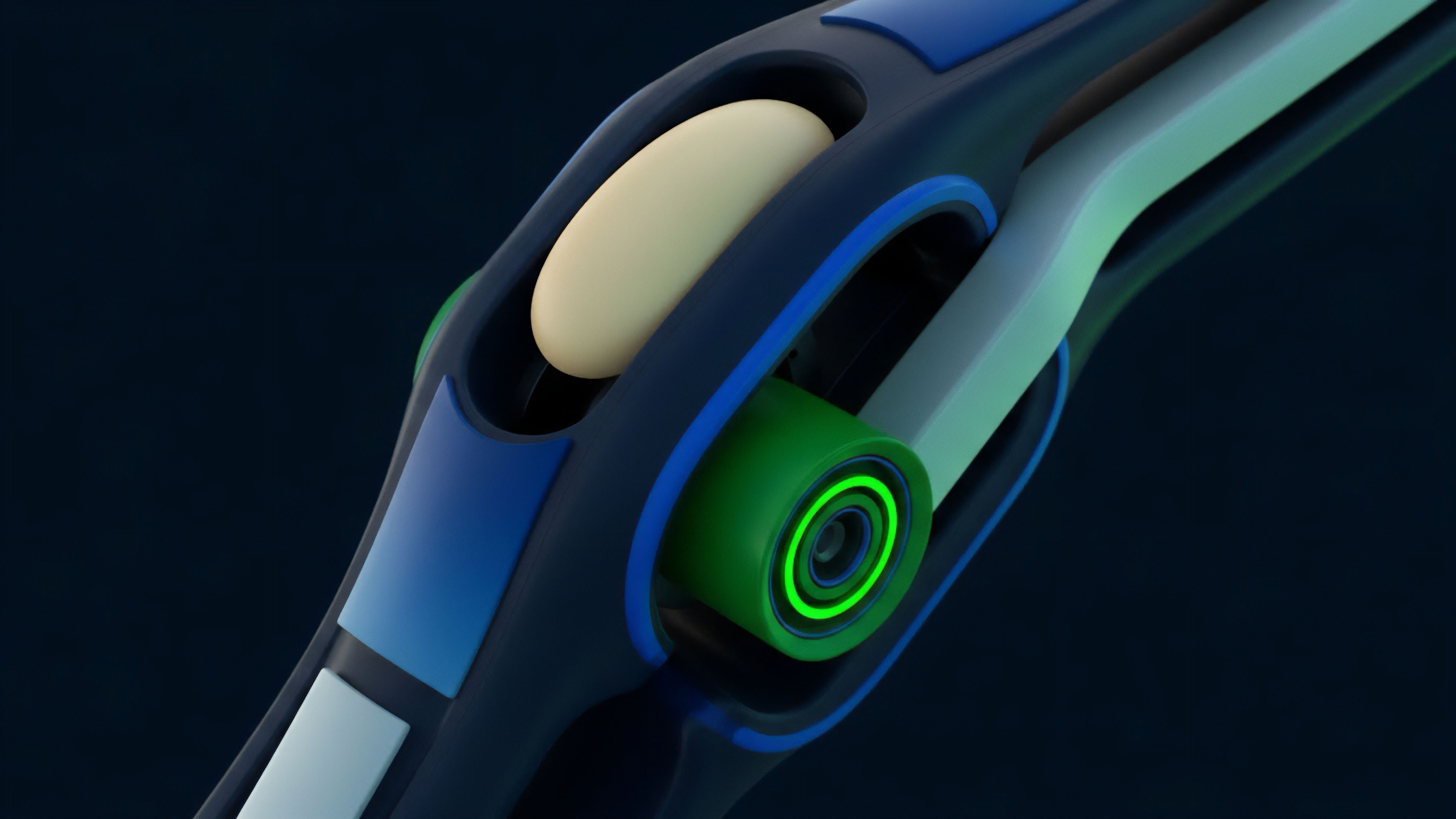

Operationalizing Real-Time Market Monitoring requires a high-performance technology stack capable of handling millions of messages per second. The technical implementation focuses on minimizing the time between a market event and the subsequent risk calculation. This is achieved through the use of WebSockets for low-latency data streaming and gRPC for efficient inter-service communication.

Data Ingestion and Normalization

The first step in the execution process is the ingestion of raw data from multiple centralized and decentralized exchanges. Each venue has its own data format and rate limits, requiring a robust normalization layer. This layer converts disparate data points into a unified format that can be consumed by the risk engine.
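
A normalization layer of this kind might look like the following sketch. Both venue message formats, the venue names and the symbol map are hypothetical, invented only to show two differently shaped payloads converging on one record type.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict


@dataclass(frozen=True)
class NormalizedTrade:
    venue: str
    symbol: str
    price: float
    size: float
    is_buy: bool
    timestamp_ms: int


# Hypothetical mapping from venue-specific tickers to one internal symbol.
SYMBOL_MAP = {
    ("venue_a", "BTC-PERP"): "BTC-USD-PERP",
    ("venue_b", "XBTUSD"): "BTC-USD-PERP",
}


def from_venue_a(msg: Dict[str, Any]) -> NormalizedTrade:
    # Hypothetical format: string numbers, explicit side, millisecond timestamps.
    return NormalizedTrade(
        venue="venue_a",
        symbol=SYMBOL_MAP[("venue_a", msg["s"])],
        price=float(msg["p"]),
        size=float(msg["q"]),
        is_buy=msg["side"] == "buy",
        timestamp_ms=int(msg["ts"]),
    )


def from_venue_b(msg: Dict[str, Any]) -> NormalizedTrade:
    # Hypothetical format: signed amount (negative = sell), timestamps in seconds.
    amount = float(msg["amount"])
    return NormalizedTrade(
        venue="venue_b",
        symbol=SYMBOL_MAP[("venue_b", msg["market"])],
        price=float(msg["price"]),
        size=abs(amount),
        is_buy=amount > 0,
        timestamp_ms=int(round(float(msg["time"]) * 1000)),
    )


NORMALIZERS: Dict[str, Callable[[Dict[str, Any]], NormalizedTrade]] = {
    "venue_a": from_venue_a,
    "venue_b": from_venue_b,
}


def normalize(venue: str, msg: Dict[str, Any]) -> NormalizedTrade:
    """Dispatch a raw message to the adapter registered for its venue."""
    if venue not in NORMALIZERS:
        raise ValueError(f"no normalizer registered for {venue!r}")
    return NORMALIZERS[venue](msg)


trade_a = normalize("venue_a", {"s": "BTC-PERP", "p": "65000.5", "q": "0.25", "side": "buy", "ts": 1700000000123})
trade_b = normalize("venue_b", {"market": "XBTUSD", "price": 65001.0, "amount": -0.1, "time": 1700000000.5})
```

Keeping one small adapter per venue means a format change at one exchange touches only its own function, while the risk engine continues to consume `NormalizedTrade` records.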

- WebSocket Clusters: Distributed nodes that maintain persistent connections to exchange servers to receive real-time updates.

- Stream Processing: Using tools like Apache Kafka or Flink to process and aggregate data in transit.

- In-Memory Databases: Storing the current state of the order book in Redis or similar systems for microsecond retrieval.

- Hardware Acceleration: Utilizing FPGAs or GPUs to perform the heavy mathematical lifting required for Greek calculations.
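
The in-memory order book state mentioned above can be sketched with plain dictionaries standing in for Redis or a similar store. The class below applies incremental level updates, with the common convention (assumed here) that a size of zero deletes a price level. All names are illustrative.

```python
from typing import Dict, Optional


class OrderBook:
    """Current state of one market, kept entirely in process memory."""

    def __init__(self) -> None:
        self.bids: Dict[float, float] = {}  # price -> resting size
        self.asks: Dict[float, float] = {}

    def apply(self, side: str, price: float, size: float) -> None:
        """Apply one level update; a size of 0 removes the level."""
        if side not in ("bid", "ask"):
            raise ValueError("side must be 'bid' or 'ask'")
        levels = self.bids if side == "bid" else self.asks
        if size == 0:
            levels.pop(price, None)
        else:
            levels[price] = size

    def best_bid(self) -> Optional[float]:
        return max(self.bids) if self.bids else None

    def best_ask(self) -> Optional[float]:
        return min(self.asks) if self.asks else None

    def mid_price(self) -> Optional[float]:
        bid, ask = self.best_bid(), self.best_ask()
        if bid is None or ask is None:
            return None
        return (bid + ask) / 2.0


book = OrderBook()
for update in [("bid", 99.0, 4.0), ("bid", 99.5, 1.0), ("ask", 100.5, 2.0), ("ask", 101.0, 3.0)]:
    book.apply(*update)
book.apply("bid", 99.5, 0.0)  # the top bid is cancelled or filled
```

Scanning for the best price with `max` and `min` is linear in the number of levels, which is fine for a sketch. A latency-sensitive system would more likely keep the levels in a sorted structure.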

Comparative Ingestion Methods

| Method | Latency Profile | Throughput Capacity | Reliability |
|---|---|---|---|
| REST API Polling | High (100 ms+) | Low | Medium |
| WebSocket Streams | Low (10-50 ms) | High | High |
| Direct Exchange Feeds | Ultra-Low (<1 ms) | Very High | Very High |
| On-Chain Indexing | Variable (block time) | Medium | High (Verifiable) |

The execution of these strategies allows for the creation of a real-time risk dashboard. This dashboard provides a comprehensive view of the market, including the current funding rates, liquidation thresholds, and the overall health of the liquidity pools. By automating the response to specific data triggers, protocols can execute defensive measures, such as pausing trading or adjusting margin requirements, without human intervention.
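
The automated response to data triggers could be structured as a set of threshold rules evaluated on every snapshot. In the sketch below, the field names, the action labels and every threshold value are hypothetical placeholders. Real limits would come from a protocol's own risk parameters.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class MarketSnapshot:
    mark_price: float
    index_price: float
    liquidations_last_minute: int
    funding_rate: float  # per funding interval, as a fraction


@dataclass
class RiskLimits:
    # All defaults are illustrative assumptions, not recommended values.
    max_price_deviation: float = 0.02
    max_liquidations_per_minute: int = 50
    max_abs_funding_rate: float = 0.003


def evaluate_triggers(snapshot: MarketSnapshot, limits: RiskLimits) -> List[str]:
    """Return the defensive actions that the current snapshot calls for."""
    actions: List[str] = []
    deviation = abs(snapshot.mark_price - snapshot.index_price) / snapshot.index_price
    if deviation > limits.max_price_deviation:
        actions.append("pause_trading")
    if snapshot.liquidations_last_minute > limits.max_liquidations_per_minute:
        actions.append("raise_margin_requirements")
    if abs(snapshot.funding_rate) > limits.max_abs_funding_rate:
        actions.append("alert_funding_rate")
    return actions


calm = MarketSnapshot(mark_price=100.1, index_price=100.0, liquidations_last_minute=3, funding_rate=0.0001)
stressed = MarketSnapshot(mark_price=97.0, index_price=100.0, liquidations_last_minute=120, funding_rate=0.0001)
calm_actions = evaluate_triggers(calm, RiskLimits())
stressed_actions = evaluate_triggers(stressed, RiskLimits())
```

Returning action labels rather than executing them directly keeps the rule evaluation easy to test and leaves the decision of how to act (on-chain call, alert, manual review) to a separate component.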

Systemic Shift

The development of Real-Time Market Monitoring has moved from simple price tracking to a complex, multi-layered analysis of systemic health.

Initially, monitoring was centralized, with individual traders and exchanges running their own proprietary systems. This created information asymmetries where those with better data had a significant advantage over retail participants. The rise of decentralized finance has democratized access to high-quality market data.

Protocols like The Graph and various blockchain explorers allow anyone to monitor on-chain activity in real-time. This transparency has led to a shift in how risk is managed. Instead of relying on the word of a centralized intermediary, users can verify the solvency of a protocol by looking at the real-time collateralization ratios and liquidation history.
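
The solvency check described here reduces to simple arithmetic once position data has been read from the chain. The sketch below assumes that data is already available as plain records. The `Vault` type, the 150% minimum ratio and the sample figures are illustrative assumptions, not the parameters of any specific protocol.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Vault:
    owner: str
    collateral_amount: float  # units of the collateral asset
    debt_value: float         # debt denominated in the quote currency


def collateralization_ratio(vault: Vault, collateral_price: float) -> float:
    """Collateral value divided by debt; infinite when there is no debt."""
    if vault.debt_value == 0:
        return float("inf")
    return vault.collateral_amount * collateral_price / vault.debt_value


def undercollateralized(vaults: List[Vault], collateral_price: float, min_ratio: float = 1.5) -> List[str]:
    """Owners whose vaults fall below the assumed minimum ratio."""
    return [v.owner for v in vaults if collateralization_ratio(v, collateral_price) < min_ratio]


vaults = [
    Vault(owner="alice", collateral_amount=10.0, debt_value=10_000.0),
    Vault(owner="bob", collateral_amount=10.0, debt_value=15_000.0),
]
at_risk_now = undercollateralized(vaults, collateral_price=2_000.0)
at_risk_after_drop = undercollateralized(vaults, collateral_price=1_400.0)
```

At a collateral price of 2,000, only the second vault is below the threshold. After a drop to 1,400, both are. Re-running the check on each new price is what turns a static snapshot into continuous verification.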

Transparency in data ingestion has shifted the power balance from centralized gatekeepers to the broader participant network.

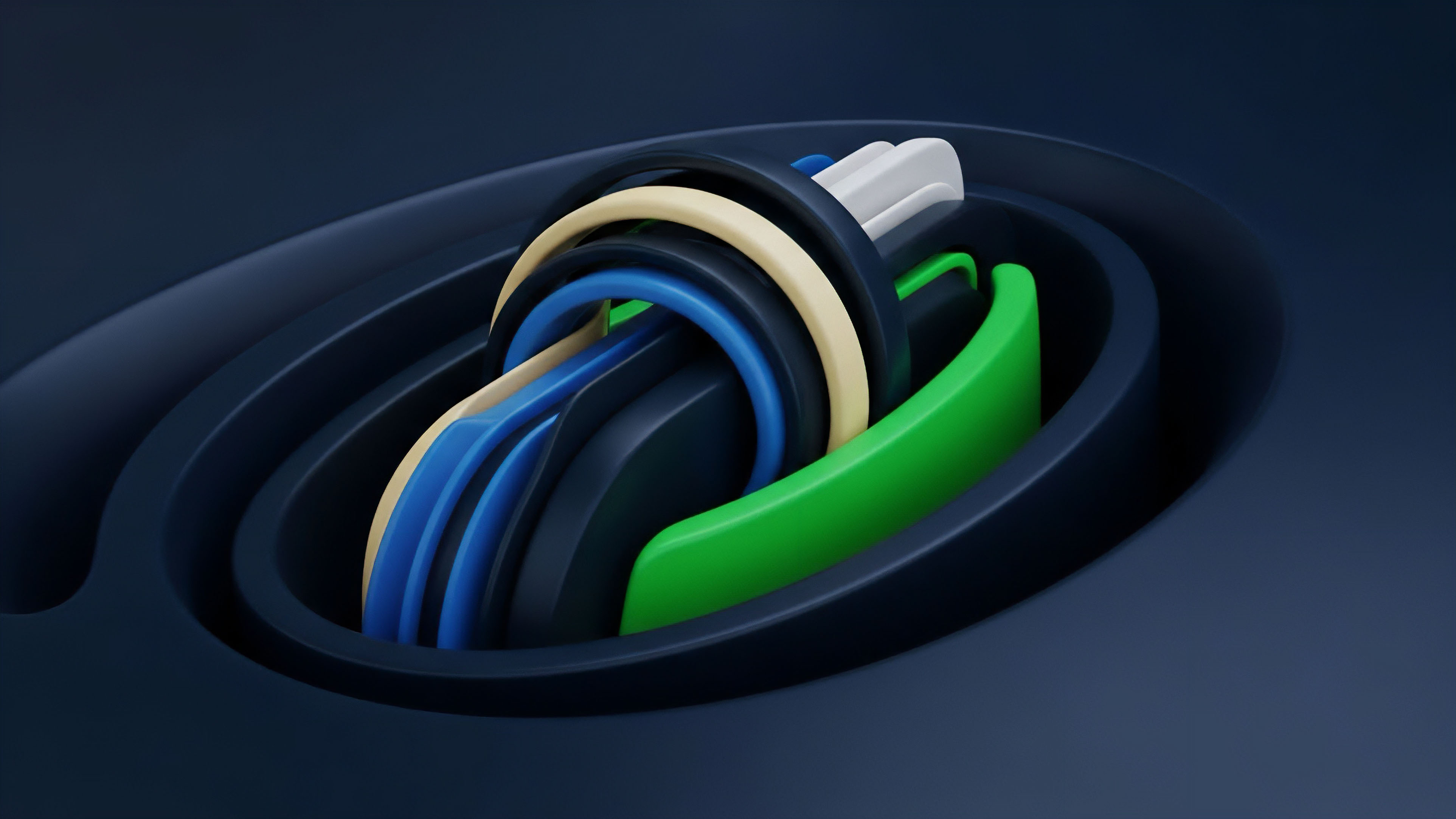

Another major shift is the move toward cross-venue monitoring. As liquidity has fragmented across different Layer 1 and Layer 2 networks, the ability to monitor multiple chains simultaneously has become vital. This has led to the development of cross-chain aggregators, built alongside the bridges that connect these networks, that provide a unified view of the entire crypto environment.

This systemic shift ensures that a liquidity crisis on one chain can be detected and mitigated before it spreads to others.

Future Path

The future of Real-Time Market Monitoring lies in the integration of predictive modeling and artificial intelligence. Current systems are primarily reactive, responding to events after they have occurred. The next generation of monitoring tools will use machine learning to identify patterns that precede volatility, allowing for proactive risk management.

Predictive Risk Engines

These advanced systems will analyze historical data and real-time order flow to predict the probability of a liquidation cascade. By identifying the specific price levels where large amounts of leverage are concentrated, Real-Time Market Monitoring can provide early warning signals to the market. This will lead to more stable and resilient decentralized exchanges, as they will be able to adjust their parameters before a crisis hits.
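
Locating the price levels where leverage is concentrated can be approximated by estimating each position's liquidation price and summing notional per price bin. The sketch below uses a simplified isolated-margin approximation that ignores fees and funding. The formula, the 0.5% maintenance margin rate and the sample positions are assumptions for illustration.

```python
import math
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Position:
    entry_price: float
    leverage: float
    notional: float
    is_long: bool


def liquidation_price(pos: Position, maintenance_margin_rate: float = 0.005) -> float:
    """Approximate liquidation price: the initial margin (1 / leverage) is
    consumed down to the maintenance margin. Fees and funding are ignored."""
    buffer = 1.0 / pos.leverage - maintenance_margin_rate
    if pos.is_long:
        return pos.entry_price * (1.0 - buffer)
    return pos.entry_price * (1.0 + buffer)


def liquidation_clusters(positions: List[Position], bin_width: float) -> Dict[float, float]:
    """Total notional that would be liquidated within each price bin.

    Keys are the lower edge of each bin.
    """
    clusters: Dict[float, float] = defaultdict(float)
    for pos in positions:
        bin_floor = math.floor(liquidation_price(pos) / bin_width) * bin_width
        clusters[bin_floor] += pos.notional
    return dict(clusters)


positions = [
    Position(entry_price=100.0, leverage=10.0, notional=50_000.0, is_long=True),
    Position(entry_price=100.4, leverage=10.0, notional=30_000.0, is_long=True),
    Position(entry_price=100.0, leverage=5.0, notional=20_000.0, is_long=False),
]
clusters = liquidation_clusters(positions, bin_width=1.0)
```

In this example the two long positions both liquidate between 90 and 91, so that bin carries 80,000 of notional, while the short sits alone near 119. A predictive engine could weight such clusters by their distance from the current price to rank cascade risk.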

MEV-Aware Monitoring

As Maximal Extractable Value (MEV, originally Miner Extractable Value) continues to play a larger role in the crypto markets, monitoring systems must become MEV-aware. This involves tracking the activity of searchers and builders to understand how their actions are impacting price discovery and execution quality. Future monitoring tools will provide detailed analysis of how MEV is being extracted and how it affects the overall efficiency of the market.

- AI-Driven Liquidation Prevention: Algorithms that identify at-risk positions and offer automated deleveraging options.

- Cross-Chain Risk Parity: Systems that monitor and balance risk across multiple blockchain networks in real-time.

- Zero-Knowledge Monitoring: Using ZK-proofs to provide verifiable market data without revealing sensitive trade information.

- Sentiment Integration: Incorporating real-time social media and news data into quantitative risk models.

The trajectory of this field is toward a fully automated, self-healing financial system. In this future, Real-Time Market Monitoring will not just be a tool for traders, but the foundational infrastructure that ensures the stability and security of the entire decentralized economy. By continuously observing and adapting to market conditions, these systems will enable the creation of more complex and capital-efficient financial instruments.
