Architectural Definition

Real-Time Data Rendering is the near-instantaneous translation of raw cryptographic state transitions and order book updates into actionable visual and computational structures. This process minimizes the latency between the execution of a trade and its representation within the decision-making interface of a participant. Within the high-stakes environment of crypto options, where implied volatility can shift within milliseconds, the ability to project these changes with minimal delay can determine the difference between capital preservation and total liquidation.

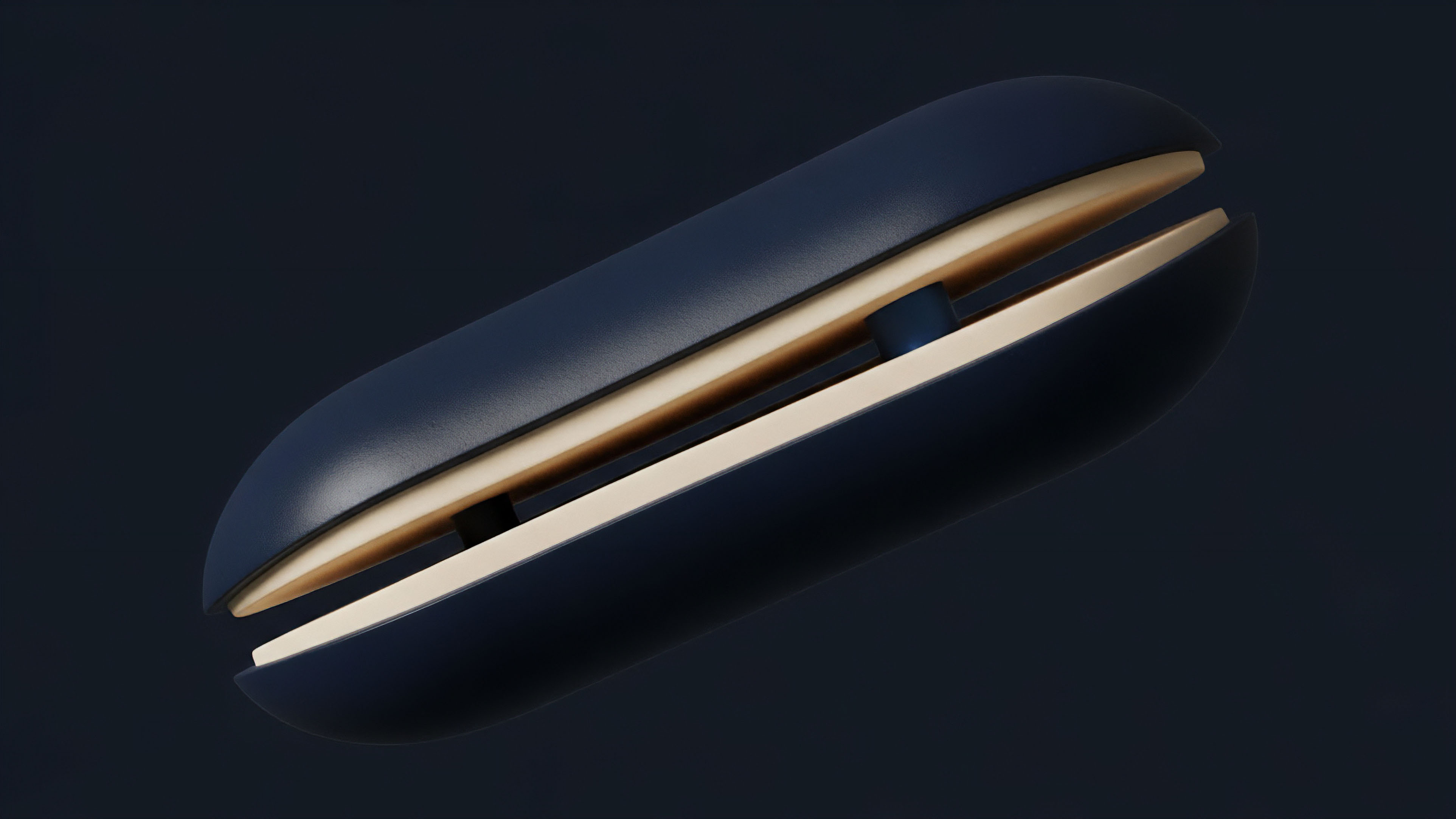

The technical architecture of this rendering process relies on high-throughput data pipelines that bypass traditional polling mechanisms. Instead of requesting data at fixed intervals, modern systems use push-based protocols to stream updates as they occur on the underlying ledger or matching engine. This creates a living representation of market liquidity, allowing for the precise calibration of risk parameters in an environment characterized by adversarial actors and automated liquidation bots.
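The push model can be illustrated with a minimal in-process sketch. `MarketFeed` is a hypothetical stand-in for a real streaming transport, and the update fields (`strike`, `iv`) are assumptions chosen for illustration. Consumers register a callback once and are then notified on every update, rather than asking for data on a timer.

```python
from typing import Callable, Dict, List

Update = Dict[str, float]


class MarketFeed:
    """Hypothetical in-process stand-in for a push-based stream."""

    def __init__(self) -> None:
        self._subscribers: List[Callable[[Update], None]] = []

    def subscribe(self, callback: Callable[[Update], None]) -> None:
        # The consumer registers interest once; no polling loop is needed.
        self._subscribers.append(callback)

    def publish(self, update: Update) -> None:
        # The producer pushes each update to every subscriber as it occurs.
        for callback in self._subscribers:
            callback(update)


received: List[Update] = []
feed = MarketFeed()
feed.subscribe(received.append)

# Illustrative values only, not real market data.
feed.publish({"strike": 3000.0, "iv": 0.62})
feed.publish({"strike": 3000.0, "iv": 0.65})
```

In a production system the `publish` side would sit behind a network protocol, but the control flow is the same: the data source decides when the consumer runs.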

The immediate projection of market state through low-latency rendering allows participants to react to volatility shifts before traditional price feeds reflect the change.

The functional significance of this technology extends to the stabilization of decentralized finance. When liquidators can view real-time health ratios across a lending protocol or an options vault, they can execute necessary interventions with surgical precision. This transparency reduces the probability of cascading failures, as the system remains legible even during periods of extreme market stress.
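As a rough sketch of what a liquidator's view might compute, the snippet below derives a health ratio per position and lists those that have fallen below 1.0. The formula (collateral value times a liquidation threshold, divided by debt), the `Position` fields, and the 0.8 threshold are assumptions for illustration; actual protocols define these quantities in their own ways.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Position:
    owner: str
    collateral_units: float  # amount of the collateral asset
    debt_value: float        # debt expressed in the quote currency


def health_ratio(pos: Position, price: float, liq_threshold: float = 0.8) -> float:
    """Assumed definition: risk-adjusted collateral value divided by debt."""
    if pos.debt_value == 0:
        return float("inf")
    return pos.collateral_units * price * liq_threshold / pos.debt_value


def liquidatable(positions: List[Position], price: float,
                 liq_threshold: float = 0.8) -> List[str]:
    """Owners whose health ratio is below 1.0 at the given price."""
    return [p.owner for p in positions
            if health_ratio(p, price, liq_threshold) < 1.0]


# Hypothetical positions, for illustration only.
positions = [
    Position("alice", 10.0, 12000.0),
    Position("bob", 10.0, 20000.0),
]
```

Re-evaluating `liquidatable(positions, price)` on every price update is what keeps the view current during a fast move.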

- Latency Minimization: The reduction of the time interval between a state change on the blockchain and its appearance on the user interface.

- State Synchronization: The alignment of the local client view with the global consensus state of the network.

- Computational Efficiency: The ability to process thousands of updates per second without overwhelming the hardware of the end-user.

Historical Genesis

The lineage of Real-Time Data Rendering traces back to the mechanical ticker tapes of the late 19th century, which provided the first serialized stream of price information. In the digital asset era, this requirement intensified due to the 24/7 nature of the markets and the lack of a centralized closing bell. Early crypto exchanges struggled with “ghost orders” and lagging interfaces, leading to the development of specialized WebSocket architectures designed to handle the massive message frequency generated by high-frequency trading algorithms.

The transition from centralized to decentralized environments introduced new complexities. In the early days of decentralized finance, users relied on block explorers that were often several minutes behind the actual state of the chain. The introduction of indexing protocols and specialized RPC nodes allowed developers to extract and render data with increasing speed.

This evolution was driven by the necessity of managing complex derivative positions that require constant monitoring of the underlying asset price and the resulting Greeks.

The transition from pull-based data requests to push-based streaming marked the beginning of professional-grade decentralized trading environments.

Current systems have moved beyond simple price displays to the rendering of complex multidimensional surfaces. This shift mirrors the evolution of professional options desks in traditional finance, where the visualization of the volatility smile and skew is a requirement for any serious market-making operation. The decentralized version of this rendering must also account for the unique properties of blockchain data, such as block times and finality risks.

Theoretical Framework

The physics of Real-Time Data Rendering is governed by the relationship between data throughput and the refresh rate of the human or algorithmic observer.

In a market where the underlying state changes with every block, or with every sub-second update in the case of Layer 2 solutions, the rendering engine must prioritize the most relevant information to avoid cognitive or computational overload. This involves applying the Nyquist-Shannon sampling theorem to market data: updates must be sampled at more than twice the highest frequency component of interest so that the underlying volatility is represented without aliasing. The mathematical modeling of these systems often employs asynchronous processing techniques.

By decoupling the data ingestion layer from the rendering layer, developers ensure that a sudden spike in market activity does not freeze the user interface. This is particularly relevant for crypto options, where a single price move can trigger a flurry of updates across the entire strike price spectrum.
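One way to sketch this decoupling is a coalescing buffer: the ingestion side overwrites the latest value per instrument, and the rendering side drains a snapshot at its own pace. The class name, the instrument keys, and the use of a lock-protected dictionary are assumptions for illustration, not a description of any particular terminal.

```python
import threading
from typing import Dict


class CoalescingBuffer:
    """Keeps only the latest value per key between two render passes."""

    def __init__(self) -> None:
        self._lock = threading.Lock()
        self._pending: Dict[str, float] = {}

    def ingest(self, key: str, value: float) -> None:
        # Called by the ingestion layer for every incoming update.
        with self._lock:
            self._pending[key] = value

    def drain(self) -> Dict[str, float]:
        # Called by the render loop; swaps in an empty dict and returns
        # everything that changed since the previous frame.
        with self._lock:
            snapshot, self._pending = self._pending, {}
        return snapshot


buf = CoalescingBuffer()
# A burst of 1000 updates for one instrument (hypothetical key)...
for i in range(1000):
    buf.ingest("ETH-3000-C", 0.60 + i * 1e-4)
buf.ingest("ETH-3200-C", 0.58)

# ...costs the renderer a single redraw per instrument.
frame = buf.drain()
```

The render cost per frame is bounded by the number of instruments, not by the number of messages, which is what keeps the interface responsive during a spike.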

| Parameter | Legacy Polling | Modern Streaming |
|---|---|---|
| Update Frequency | Periodic (Seconds) | Event-Driven (Milliseconds) |
| Data Integrity | Prone to Stale State | High Fidelity Synchronization |
| Bandwidth Usage | High (Redundant Requests) | Efficient (Differential Updates) |
| User Experience | Fragmented and Lagging | Fluid and Reactive |
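The "Differential Updates" entry can be made concrete with a small sketch. The snippet assumes a common convention in which each delta is a `(price, size)` pair and a size of zero removes the level. That convention and the function name are assumptions; a given venue may encode deltas differently.

```python
from typing import Dict, List, Tuple


def apply_deltas(book: Dict[float, float],
                 deltas: List[Tuple[float, float]]) -> Dict[float, float]:
    """Apply (price, size) deltas in place; size 0 deletes the level."""
    for price, size in deltas:
        if size == 0:
            book.pop(price, None)
        else:
            book[price] = size
    return book


# Local bid-side state: price -> resting size (illustrative values).
bids = {100.0: 5.0, 99.5: 2.0}

# One message carries only what changed, not the whole book.
apply_deltas(bids, [(100.0, 3.0), (99.5, 0.0), (99.0, 7.0)])
best_bid = max(bids)
```

Only three levels cross the wire here, regardless of how deep the full book is.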

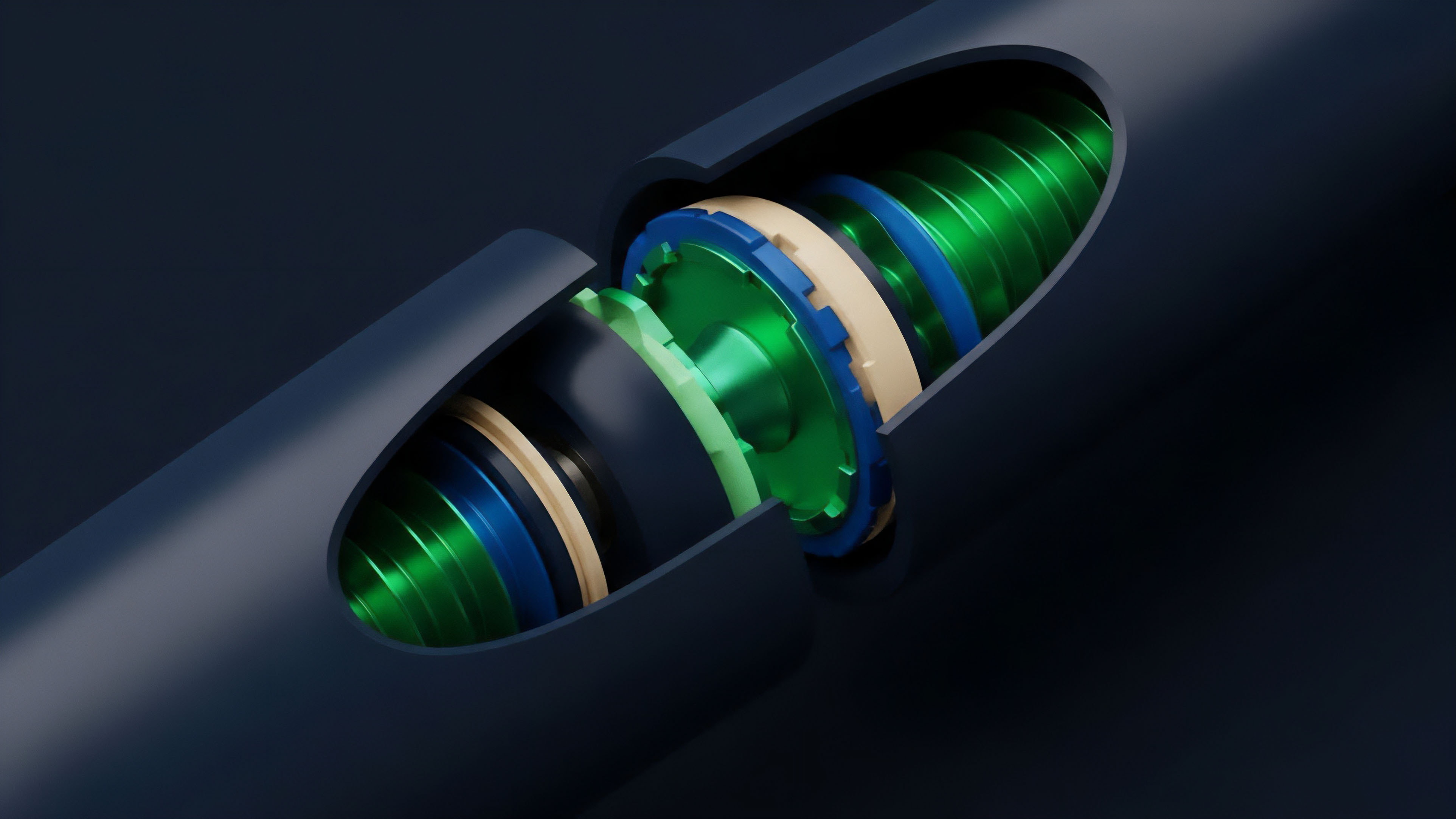

Furthermore, the rendering of the Volatility Surface requires the continuous recalculation of the Black-Scholes model or its variants across hundreds of strike prices and expiration dates. This demands significant client-side or server-side computational power. The goal is to provide a visual representation that allows a trader to identify mispriced options or hedging opportunities instantly.
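A minimal sketch of the per-point computation is shown below: a Black-Scholes call price and an implied volatility recovered by bisection. It assumes European calls with no dividends, and the bisection bounds and tolerance are arbitrary choices; a production engine would likely use a faster solver and a model variant suited to the venue.

```python
from math import erf, exp, log, sqrt


def norm_cdf(x: float) -> float:
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + erf(x / sqrt(2.0)))


def bs_call(spot: float, strike: float, t: float, rate: float, vol: float) -> float:
    """Black-Scholes price of a European call (no dividends)."""
    d1 = (log(spot / strike) + (rate + 0.5 * vol * vol) * t) / (vol * sqrt(t))
    d2 = d1 - vol * sqrt(t)
    return spot * norm_cdf(d1) - strike * exp(-rate * t) * norm_cdf(d2)


def implied_vol(price: float, spot: float, strike: float, t: float, rate: float,
                lo: float = 1e-4, hi: float = 5.0, tol: float = 1e-8) -> float:
    """Invert bs_call for vol by bisection; the call price rises with vol."""
    for _ in range(200):
        mid = 0.5 * (lo + hi)
        if bs_call(spot, strike, t, rate, mid) > price:
            hi = mid
        else:
            lo = mid
        if hi - lo < tol:
            break
    return 0.5 * (lo + hi)


def vol_surface(quotes, spot: float, rate: float):
    """Map {(strike, expiry_years): call_price} to implied vols."""
    return {key: implied_vol(px, spot, key[0], key[1], rate)
            for key, px in quotes.items()}
```

Calling `vol_surface` on each batch of quotes yields one implied volatility per (strike, expiry) point, which is the grid a surface plot would draw from.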

Mathematical models for real-time risk assessment require a continuous stream of high-fidelity data to maintain accuracy during periods of high volatility.

Technical Execution

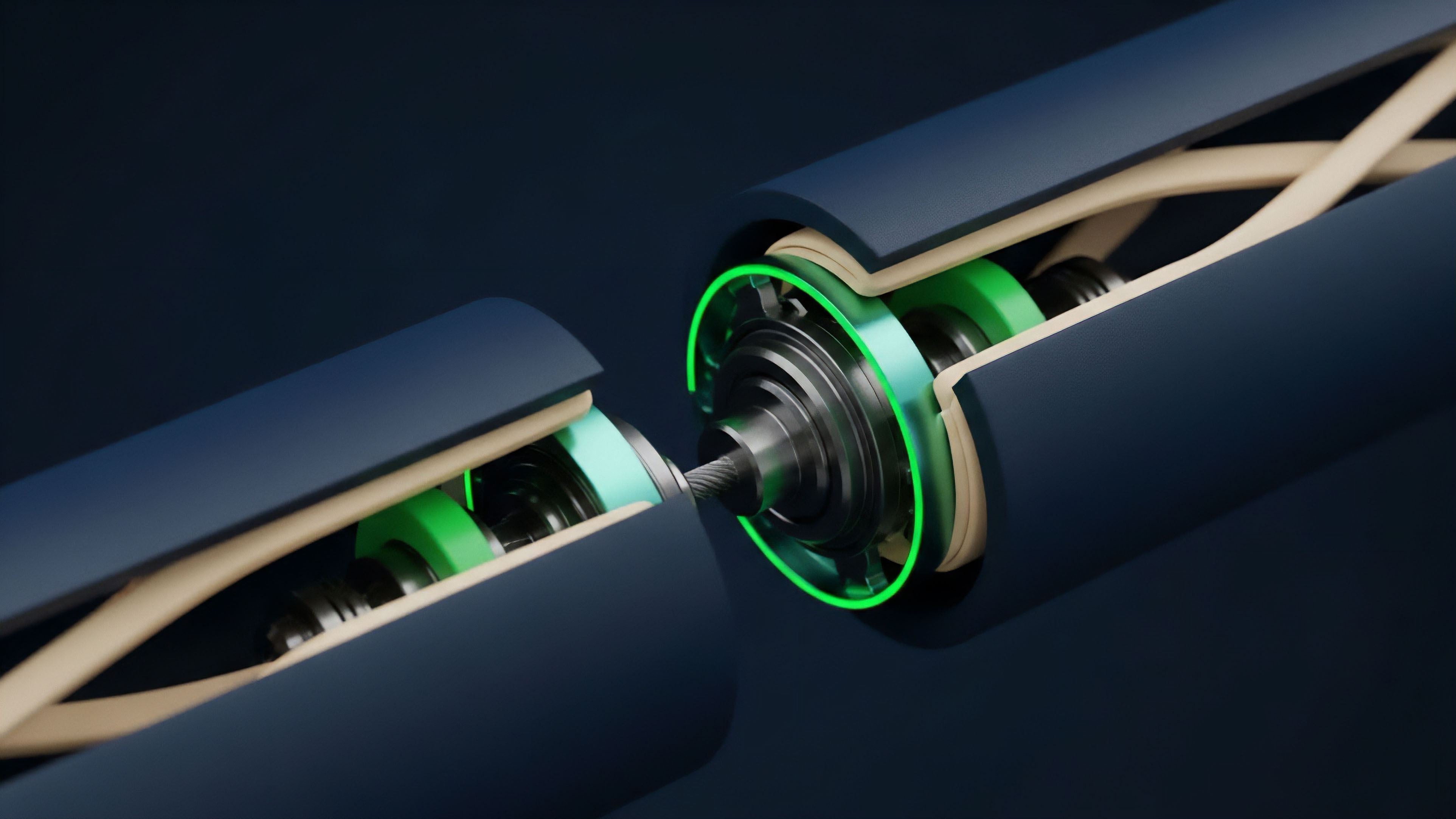

Current methodologies for Real-Time Data Rendering involve a multi-layered stack designed for maximum speed and reliability. At the base layer, high-performance RPC providers deliver raw blockchain events. These events are then processed by indexers or custom backends that transform the raw hex data into structured JSON or binary formats.
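The hex-to-structured step might look like the sketch below. It relies on the fact that statically sized values in EVM event data are laid out as 32-byte big-endian words; the event layout itself (a strike followed by a premium, both scaled by 10^8) is hypothetical and chosen only for illustration.

```python
import json
from typing import Dict, List


def decode_words(hex_data: str) -> List[int]:
    """Split a hex payload into 32-byte words and parse each as an unsigned int."""
    raw = hex_data[2:] if hex_data.startswith("0x") else hex_data
    if len(raw) % 64 != 0:
        raise ValueError("payload is not a whole number of 32-byte words")
    return [int(raw[i:i + 64], 16) for i in range(0, len(raw), 64)]


def decode_trade(hex_data: str) -> Dict[str, float]:
    """Hypothetical event layout: [strike, premium], both scaled by 1e8."""
    strike_raw, premium_raw = decode_words(hex_data)
    return {"strike": strike_raw / 10**8, "premium": premium_raw / 10**8}


# Build a synthetic payload rather than quoting a real transaction.
payload = "0x" + format(3000 * 10**8, "064x") + format(125 * 10**6, "064x")
record = decode_trade(payload)
as_json = json.dumps(record)
```

The structured record is what travels onward to the front end; the scaling factors would come from the contract's documentation in a real pipeline.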

The front-end application consumes these streams via WebSockets or gRPC, utilizing frameworks like React or specialized graphics libraries to update the display without a full page reload. Professional trading terminals often employ hardware acceleration to handle the rendering of complex charts and order books. This is especially true for Order Flow Visualization, which tracks the “footprint” of trades as they hit the bid or ask.

By rendering these events in real-time, traders can identify the presence of large institutional buyers or sellers and adjust their strategies accordingly.

- Event Listeners: Specialized scripts that monitor the blockchain for specific smart contract events related to options minting, trading, or liquidation.

- Data Normalization: The process of converting diverse data formats from multiple chains into a single, unified schema for rendering.

- State Management: The use of local caches to store the current market state, allowing for instantaneous updates as new data arrives.

- Visual Optimization: The application of techniques like “throttling” and “debouncing” to ensure the interface remains responsive during extreme market events.
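The throttling technique named in the last item can be sketched as a wrapper that lets a redraw through at most once per interval. The class name and the injectable `clock` parameter are assumptions made so the example can be run deterministically; the default uses `time.monotonic`.

```python
import time
from typing import Callable, List, Optional


class Throttle:
    """Invoke fn at most once per min_interval seconds; drop calls in between."""

    def __init__(self, fn: Callable[..., None], min_interval: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self._fn = fn
        self._min_interval = min_interval
        self._clock = clock
        self._last: Optional[float] = None

    def __call__(self, *args) -> bool:
        now = self._clock()
        if self._last is not None and now - self._last < self._min_interval:
            return False  # too soon after the previous redraw: skip
        self._last = now
        self._fn(*args)
        return True


# A fake clock makes the behavior reproducible.
fake_now = [0.0]
drawn: List[float] = []
redraw = Throttle(drawn.append, min_interval=0.1, clock=lambda: fake_now[0])

for t, price in [(0.00, 100.0), (0.05, 100.5), (0.12, 101.0), (0.15, 101.5)]:
    fake_now[0] = t
    redraw(price)
```

This leading-edge variant can drop the final value of a burst, so it is usually paired with a trailing redraw or with a state cache that the next frame reads from. Debouncing is the complementary technique: it waits until updates have stopped for a quiet period before acting.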

The use of Vectorized Graphics allows for the smooth rendering of complex curves, such as the gamma profile of an options portfolio. As the price of the underlying asset moves, the entire curve shifts in real-time, providing the trader with an immediate understanding of their changing risk exposure. This level of technical sophistication is now a standard requirement for any platform competing for professional liquidity.
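The curve being drawn can be sketched as follows, assuming Black-Scholes gamma (which is identical for calls and puts) and a portfolio expressed as `(quantity, strike, expiry_years, vol)` legs. The leg values and the spot grid are hypothetical.

```python
from math import exp, log, pi, sqrt
from typing import List, Tuple

Leg = Tuple[float, float, float, float]  # (quantity, strike, expiry_years, vol)


def norm_pdf(x: float) -> float:
    """Standard normal probability density function."""
    return exp(-0.5 * x * x) / sqrt(2.0 * pi)


def bs_gamma(spot: float, strike: float, t: float, rate: float, vol: float) -> float:
    """Black-Scholes gamma of a European option (same for calls and puts)."""
    d1 = (log(spot / strike) + (rate + 0.5 * vol * vol) * t) / (vol * sqrt(t))
    return norm_pdf(d1) / (spot * vol * sqrt(t))


def portfolio_gamma(spot: float, legs: List[Leg], rate: float = 0.0) -> float:
    """Net gamma: quantity-weighted sum over all legs at the given spot."""
    return sum(qty * bs_gamma(spot, k, t, rate, vol) for qty, k, t, vol in legs)


# Hypothetical book: long 10 of the 3000 strike, short 5 of the 3400 strike.
legs = [(10.0, 3000.0, 30 / 365, 0.70), (-5.0, 3400.0, 30 / 365, 0.75)]

# One (spot, gamma) point per grid price; this is the curve the UI redraws.
profile = [(float(s), portfolio_gamma(float(s), legs)) for s in range(2400, 3801, 100)]
```

When the underlying price or the implied volatilities change, recomputing `profile` and handing the points to the graphics layer is what produces the shifting curve.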

Systemic Transformation

The shift toward Real-Time Data Rendering has fundamentally altered the competitive structure of the crypto derivatives market.

In the past, information asymmetry was often a function of who had the fastest connection to a centralized exchange’s API. In the current decentralized environment, the advantage has shifted to those who can most effectively aggregate and render data fragmented across multiple protocols and chains. This transformation has led to the rise of “Super-Aggregators” that provide a unified interface for trading options across different liquidity pools.

These platforms rely on sophisticated rendering engines to show the best available price and the deepest liquidity in real-time. The result is a more efficient market where price discrepancies are quickly closed by arbitrageurs who are alerted to opportunities by their high-speed data feeds.
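The aggregation step can be sketched as a reduction over per-venue quotes for the same option. The venue names and prices below are hypothetical, and the sketch ignores fees, size, and settlement differences that a real aggregator would need to account for.

```python
from typing import Dict, Tuple

Quote = Tuple[float, float]  # (bid, ask) for one option on one venue


def best_across_venues(quotes: Dict[str, Quote]) -> Dict[str, Tuple[str, float]]:
    """Return the venue and price of the highest bid and the lowest ask."""
    bid_venue = max(quotes, key=lambda v: quotes[v][0])
    ask_venue = min(quotes, key=lambda v: quotes[v][1])
    return {
        "bid": (bid_venue, quotes[bid_venue][0]),
        "ask": (ask_venue, quotes[ask_venue][1]),
    }


def crossed(best: Dict[str, Tuple[str, float]]) -> bool:
    """True when the best bid exceeds the best ask across venues."""
    return best["bid"][1] > best["ask"][1]


# Hypothetical quotes for the same contract on three venues.
quotes = {
    "venue_a": (41.0, 43.0),
    "venue_b": (42.5, 44.0),
    "venue_c": (40.0, 42.0),
}
best = best_across_venues(quotes)
```

A crossed result is the kind of discrepancy an arbitrageur would be alerted to: here the highest bid sits on one venue and a lower ask on another.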

| Feature | Early DeFi Interfaces | Advanced Derivative Terminals |
|---|---|---|
| Data Source | Single RPC Node | Multi-Source Aggregation |
| Refresh Logic | Manual Page Refresh | Automatic WebSocket Push |
| Risk Metrics | Static Price Only | Real-Time Greeks and IV |
| Execution Speed | Slow and Uncertain | Rapid and Validated |

The democratization of these tools means that individual traders now have access to the same quality of information that was previously reserved for institutional desks. This has increased the overall sophistication of the market, as participants are better equipped to manage complex strategies like delta-neutral hedging or volatility arbitrage. The systemic risk is also reduced, as participants can see the buildup of leverage and the proximity of liquidation levels across the entire network.

Future Trajectory

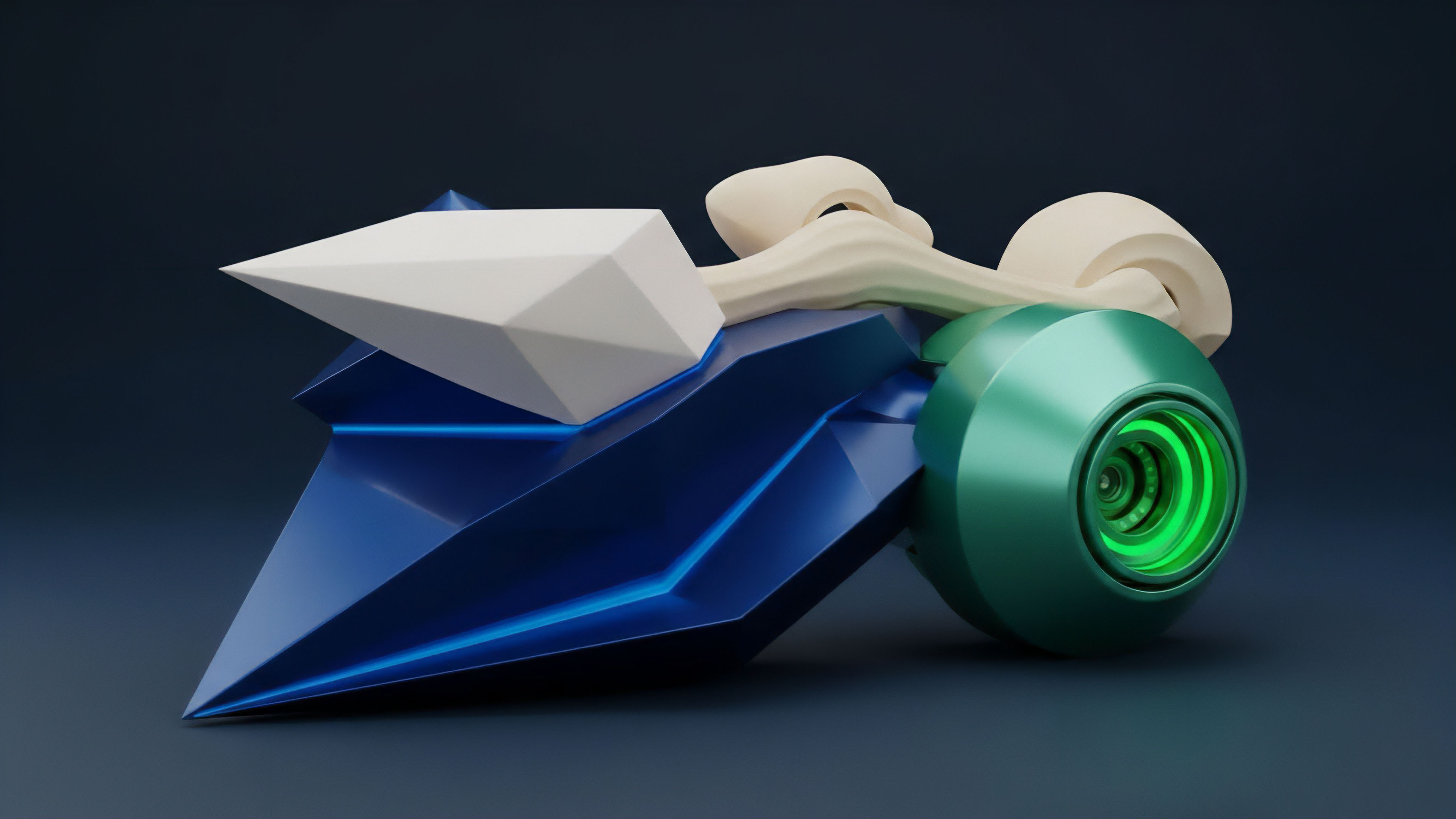

The next phase of Real-Time Data Rendering will likely involve the integration of predictive analytics and machine learning directly into the visualization layer. Instead of just showing what is happening now, future interfaces will render probabilistic models of what might happen in the next several minutes. This will include the rendering of “Liquidation Heatmaps” that show where large clusters of stop-losses or margin calls are located, providing a visual map of potential volatility triggers.

Another area of development is the use of Zero-Knowledge Proofs to verify the integrity of the data being rendered. This ensures that the information shown on the screen is a true representation of the on-chain state, protecting users from malicious front-ends or compromised data providers. As the complexity of the crypto financial system increases, the need for verifiable and instantaneous information will only grow.

We are also seeing the emergence of Immersive Rendering Environments, where traders can visualize the entire options market as a three-dimensional space. In this environment, the “height” of a surface might represent implied volatility, while the “color” represents trading volume. This allows for the rapid identification of patterns and anomalies that would be impossible to spot on a traditional two-dimensional chart. The goal is to transform the abstract math of derivatives into a tangible, navigable landscape.

The ultimate destination is a state of “Zero-Latency Consensus,” where the rendering of the market state is perfectly synchronized across all participants globally. While physical limits on the speed of light make this a challenge, the development of localized edge computing and advanced synchronization protocols will bring us closer to this ideal. In such a world, the distinction between the market and its representation will effectively vanish.
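The “Liquidation Heatmaps” mentioned in this section reduce, at their simplest, to bucketing liquidation prices and summing the notional in each bucket. The sketch below assumes the input is a list of `(liquidation_price, notional)` pairs that some upstream component has already derived; the values and the bucket width are hypothetical.

```python
from collections import defaultdict
from typing import Dict, List, Tuple


def liquidation_heatmap(levels: List[Tuple[float, float]],
                        bucket_size: float) -> Dict[float, float]:
    """Sum notional per price bucket; keys are the lower bucket edges."""
    heat: Dict[float, float] = defaultdict(float)
    for price, notional in levels:
        bucket = (price // bucket_size) * bucket_size
        heat[bucket] += notional
    return dict(sorted(heat.items()))


# Hypothetical (liquidation price, notional) pairs.
levels = [
    (2810.0, 1.5e6),
    (2840.0, 0.5e6),
    (2990.0, 3.0e6),
    (3120.0, 0.25e6),
]
heat = liquidation_heatmap(levels, bucket_size=100.0)
hottest = max(heat, key=heat.get)
```

A renderer would map each bucket's total to a color intensity along the price axis, so that dense clusters stand out as potential volatility triggers.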

Glossary

Financial Operating System Design

Adversarial Environment Modeling

Gamma Exposure Tracking

High-Throughput Data Pipelines

Decentralized Options Protocols

Latency Arbitrage Strategies

Delta Neutral Hedging

Systemic Contagion Prevention

Risk Sensitivity Analysis