Essence

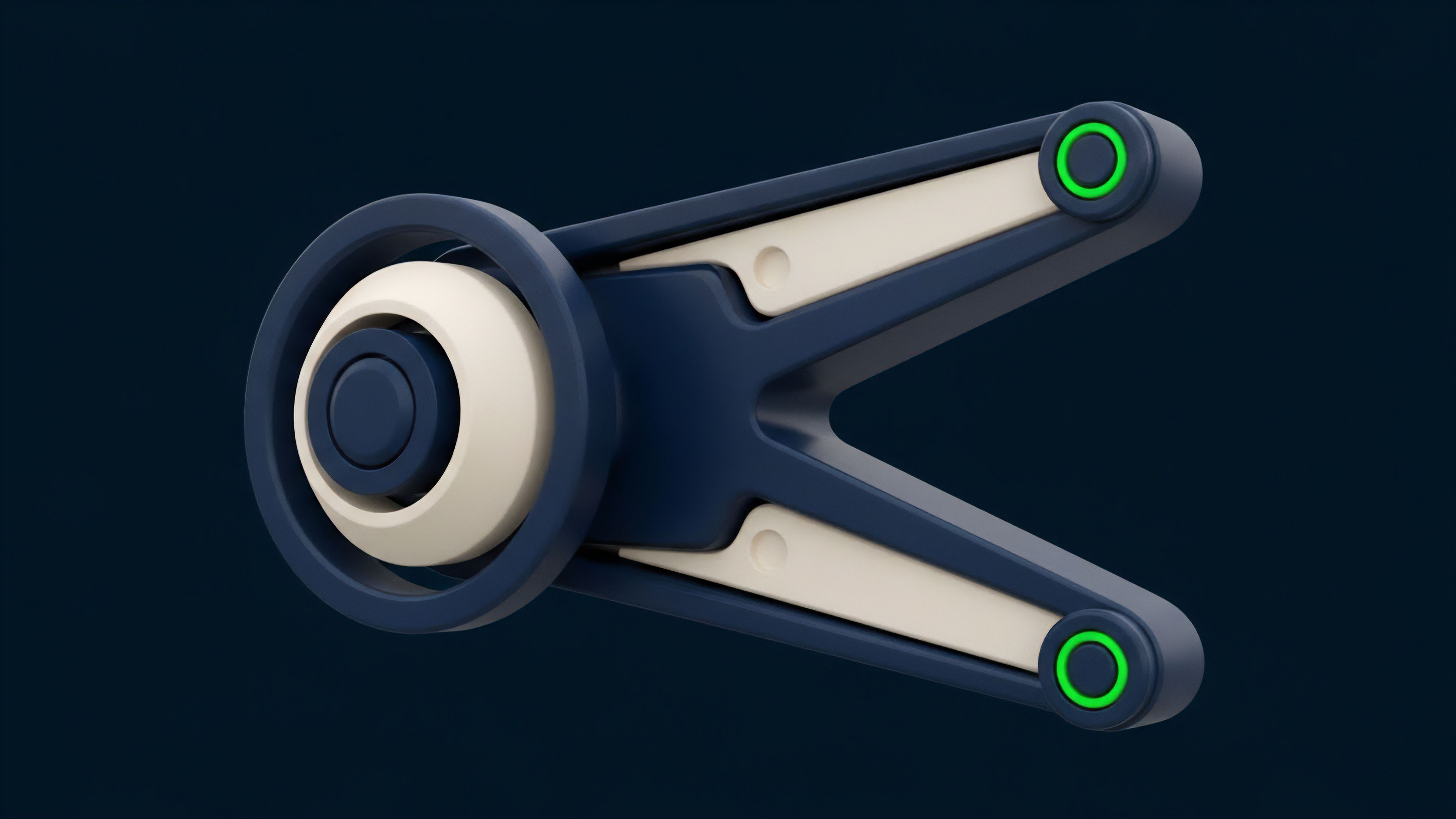

The operational integrity of crypto options protocols hinges on a continuous, accurate stream of market data: the real-time data feed. These feeds are the primary interface between off-chain price movements and the on-chain logic of a smart contract. For derivatives, a simple price feed is insufficient; the protocol requires a richer input, the implied volatility surface.

This surface is a dynamic, multi-dimensional representation of market expectations regarding future price fluctuations, essential for calculating the fair value of an option across various strike prices and expiration dates. A failure in the data feed results in a fundamental breakdown of the options pricing mechanism, creating opportunities for arbitrage and potentially leading to systemic insolvency within the protocol. The feed must not only deliver raw price data but also perform complex calculations to derive this volatility surface in real-time, translating market microstructure into actionable risk parameters for the decentralized application.

Real-time data feeds provide the essential inputs for options pricing models, translating market microstructure into actionable risk parameters for decentralized protocols.

Origin

The concept of real-time data feeds originates in traditional finance, where centralized exchanges and proprietary data vendors like Bloomberg or Refinitiv provide high-speed, low-latency market information. This model assumes a centralized authority responsible for data accuracy and dissemination. The transition to decentralized finance introduced the fundamental “oracle problem,” where smart contracts are isolated from external data sources.

Early DeFi protocols addressed this with simple price feeds, primarily for spot markets and lending protocols. The rise of sophisticated options and perpetual futures markets created a demand for more complex data. Options protocols could not function securely using simple spot prices because the primary determinant of an option’s value is volatility, not just the underlying asset price.

This necessitated the creation of specialized data feeds capable of calculating and delivering implied volatility surfaces to the chain. The evolution of this data infrastructure represents a shift from simple price reporting to complex, pre-calculated risk metric delivery.

Theory

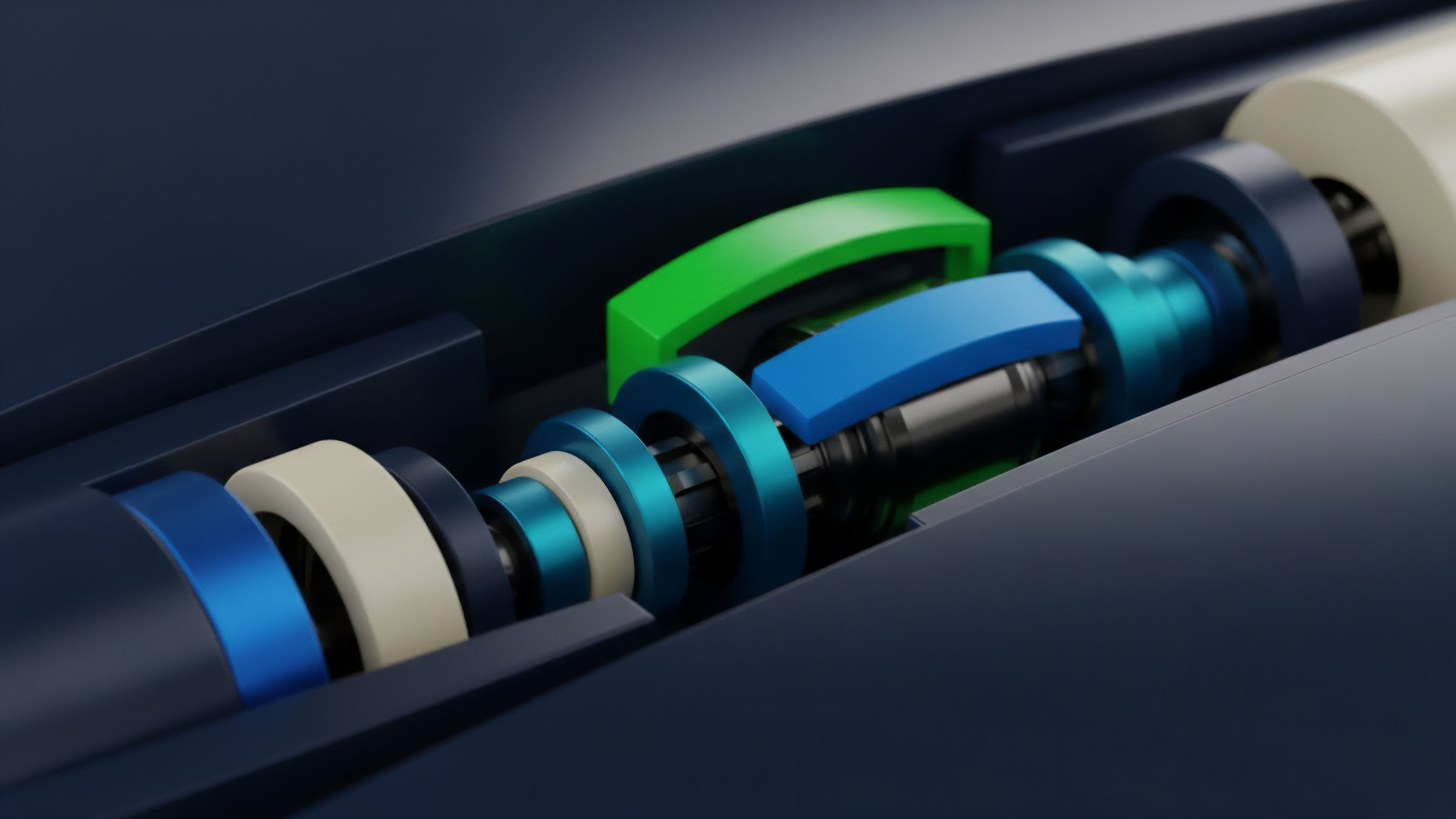

The theoretical foundation for options pricing relies on models like Black-Scholes-Merton, which require several inputs: the current price of the underlying asset, the strike price, time to expiration, the risk-free interest rate, and, most critically, expected future volatility. The data feed’s primary theoretical challenge is to capture and transmit this expected volatility in a verifiable manner.
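As a rough illustration of how these inputs combine, the following is a minimal sketch of the Black-Scholes-Merton formula for a European option on an asset that pays no dividends. The function and parameter names are illustrative assumptions, not part of any particular protocol.

```python
import math


def norm_cdf(x: float) -> float:
    """Standard normal cumulative distribution via the error function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def bs_price(spot: float, strike: float, t: float, rate: float,
             vol: float, kind: str = "call") -> float:
    """Black-Scholes-Merton price of a European option (no dividends).

    t is time to expiration in years; rate and vol are annualized.
    """
    sd = vol * math.sqrt(t)
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol ** 2) * t) / sd
    d2 = d1 - sd
    discounted_strike = strike * math.exp(-rate * t)
    if kind == "call":
        return spot * norm_cdf(d1) - discounted_strike * norm_cdf(d2)
    return discounted_strike * norm_cdf(-d2) - spot * norm_cdf(-d1)


# Example: at-the-money call, one year to expiry, 5% rate, 20% volatility.
call = bs_price(100.0, 100.0, 1.0, 0.05, 0.20)  # about 10.45
```

Every input except volatility is directly observable, which is why the volatility estimate carried by the feed dominates the quality of the resulting price.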

The volatility surface, which plots implied volatility against different strike prices and maturities, provides a more accurate representation of market sentiment than a single volatility number. This surface often exhibits a “volatility skew,” where options further out of the money have higher implied volatility than at-the-money options, a phenomenon that reflects a market preference for purchasing protection against sharp downward movements. A data feed must accurately model this skew to prevent mispricing.
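Implied volatility is not observed directly; it is the volatility that makes a pricing model reproduce a quoted option price. The sketch below backs it out of a call price by bisection, assuming Black-Scholes-Merton pricing with no dividends. The function names and the search bounds are illustrative assumptions.

```python
import math


def call_price(spot: float, strike: float, t: float, rate: float, vol: float) -> float:
    """Black-Scholes-Merton price of a European call (no dividends)."""
    def cdf(x: float) -> float:
        return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))

    sd = vol * math.sqrt(t)
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol * vol) * t) / sd
    return spot * cdf(d1) - strike * math.exp(-rate * t) * cdf(d1 - sd)


def implied_vol(price: float, spot: float, strike: float, t: float, rate: float,
                lo: float = 1e-4, hi: float = 5.0, tol: float = 1e-8) -> float:
    """Find the volatility that reproduces `price`, by bisection.

    Works because the call price increases monotonically with volatility.
    """
    if not call_price(spot, strike, t, rate, lo) <= price <= call_price(spot, strike, t, rate, hi):
        raise ValueError("price is outside the range reachable within the vol bounds")
    while hi - lo > tol:
        mid = 0.5 * (lo + hi)
        if call_price(spot, strike, t, rate, mid) < price:
            lo = mid  # model price too low, so volatility must be higher
        else:
            hi = mid
    return 0.5 * (lo + hi)
```

Running this inversion for each quoted strike and maturity yields the points of the surface; skew shows up as different results at different strikes for the same expiry.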

If the data feed fails to capture this real-time skew, a protocol’s risk engine will calculate incorrect margin requirements, leading to potential undercollateralization and systemic risk. The feed’s reliability directly influences the accuracy of the Greeks (delta, gamma, and vega), which are essential for dynamic hedging strategies.

Volatility Surface Dynamics

A robust data feed for options must deliver a precise volatility surface, which serves as the core input for pricing models. The feed’s architecture must address several key dynamics:

- Skew and Smile: The volatility skew represents the difference in implied volatility between options of the same expiration date but different strike prices. The feed must accurately reflect this market bias, which often shows higher implied volatility for out-of-the-money puts.

- Term Structure: This component shows how implied volatility changes across different expiration dates. The feed must capture this forward-looking aspect, as longer-term options often react differently to market events than short-term options.

- Data Smoothing: Raw order book data from multiple exchanges can be noisy. The feed must employ smoothing algorithms to filter out short-term noise and outliers, ensuring a stable and reliable input for on-chain calculations.
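One way to picture how a consumer reads skew and term structure from a delivered surface is a grid lookup. The sketch below assumes the surface arrives as a small grid of implied volatilities indexed by expiry and strike, and uses plain bilinear interpolation; the grid layout and names are hypothetical, and production systems often interpolate in total variance instead.

```python
from bisect import bisect_right


def _bracket(grid, x):
    """Return (i, w) with x = grid[i] * (1 - w) + grid[i + 1] * w, clamped to the grid."""
    x = min(max(x, grid[0]), grid[-1])
    i = min(bisect_right(grid, x) - 1, len(grid) - 2)
    w = (x - grid[i]) / (grid[i + 1] - grid[i])
    return i, w


def surface_vol(strikes, expiries, vols, strike, expiry):
    """Bilinear interpolation on a grid where vols[i][j] belongs to expiries[i], strikes[j].

    Both axes must be sorted ascending and contain at least two points.
    """
    j, wk = _bracket(strikes, strike)
    i, wt = _bracket(expiries, expiry)
    near = vols[i][j] * (1 - wk) + vols[i][j + 1] * wk          # skew at the earlier expiry
    far = vols[i + 1][j] * (1 - wk) + vols[i + 1][j + 1] * wk   # skew at the later expiry
    return near * (1 - wt) + far * wt                           # blend along the term structure


# Hypothetical surface: higher vol for low strikes (skew), lower vol further out (term structure).
strikes = [80.0, 100.0, 120.0]
expiries = [0.1, 0.5]  # years
vols = [
    [0.95, 0.70, 0.75],
    [0.85, 0.68, 0.70],
]
vol_90_mid = surface_vol(strikes, expiries, vols, 90.0, 0.3)  # about 0.795
```

Requests outside the grid are clamped to the nearest edge rather than extrapolated, which is a conservative choice for this sketch and not a recommendation.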

Greeks and Risk Management

The real-time data feed directly impacts the calculation of risk parameters known as the Greeks. These metrics are fundamental to options trading and risk management:

- Delta: Measures the change in option price for a one-unit change in the underlying asset price. The data feed’s accuracy is vital for calculating a portfolio’s delta and executing dynamic hedging strategies.

- Gamma: Measures the rate of change of delta relative to the underlying asset price. A real-time feed helps assess how quickly delta changes, which is essential for managing risk in volatile markets.

- Vega: Measures the sensitivity of an option’s price to changes in implied volatility. The data feed’s ability to capture volatility accurately directly impacts the calculation of vega, allowing protocols to manage exposure to volatility risk.
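The three Greeks above have closed forms under Black-Scholes-Merton. The following minimal sketch assumes a European option on an asset with no dividends; the function name and return layout are illustrative. It makes the dependency explicit: every Greek is a function of the volatility the feed supplies.

```python
import math


def greeks(spot: float, strike: float, t: float, rate: float,
           vol: float, kind: str = "call") -> dict:
    """Closed-form delta, gamma, and vega under Black-Scholes-Merton (no dividends)."""
    sd = vol * math.sqrt(t)
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol ** 2) * t) / sd
    pdf = math.exp(-0.5 * d1 * d1) / math.sqrt(2.0 * math.pi)  # standard normal density at d1
    cdf = 0.5 * (1.0 + math.erf(d1 / math.sqrt(2.0)))          # standard normal CDF at d1
    return {
        "delta": cdf if kind == "call" else cdf - 1.0,  # price change per unit move in spot
        "gamma": pdf / (spot * sd),                     # delta change per unit move in spot
        "vega": spot * pdf * math.sqrt(t),              # price change per 1.00 change in vol
    }


# Example: at-the-money call, one year to expiry, 5% rate, 20% volatility.
g = greeks(100.0, 100.0, 1.0, 0.05, 0.20)
# delta is about 0.637, gamma about 0.0188, vega about 37.5
```

Vega here is expressed per unit of volatility (a move from 0.20 to 1.20); dividing by 100 gives the more commonly quoted sensitivity per volatility point.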

Approach

The implementation of real-time data feeds varies significantly between centralized exchanges (CEXs) and decentralized protocols (DEXs). CEXs operate on a high-speed, low-latency internal network where the matching engine and data feed are tightly coupled. Data is proprietary and disseminated through a single point of truth.

DEXs, conversely, must rely on external data sources, creating a significant architectural challenge known as the oracle problem. The current approach for most decentralized options protocols involves a hybrid model where data aggregation and calculation occur off-chain, with only the final, verified data pushed to the smart contract.

Data Aggregation and Pre-Computation

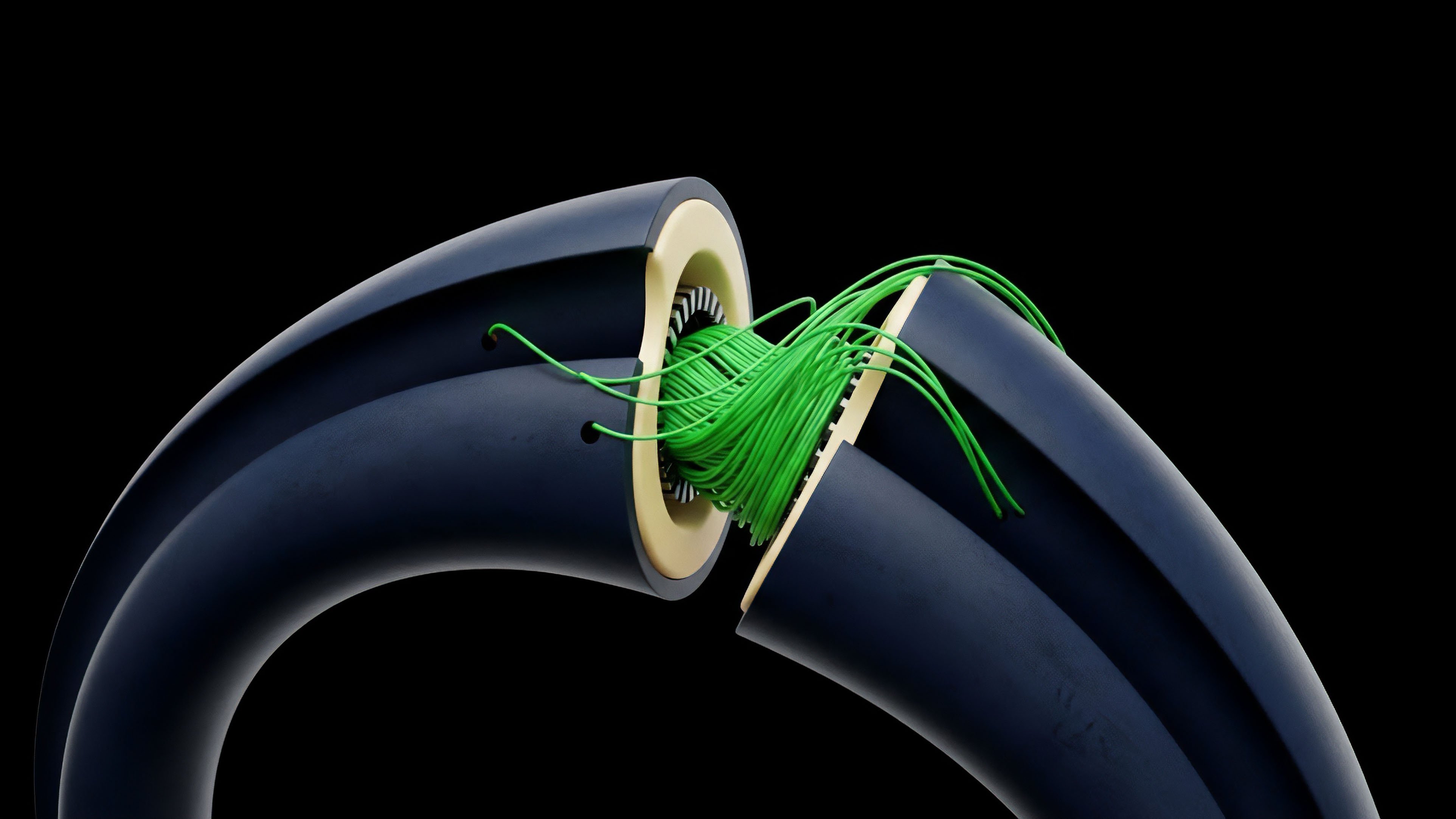

To create a robust feed for options, data providers must aggregate data from multiple centralized and decentralized exchanges. This process involves collecting order book depth, trade history, and implied volatility calculations from various sources. The data provider then calculates a composite implied volatility surface, often using algorithms to weigh sources and filter outliers.
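The weighting and outlier filtering described here could take many forms. As one hedged sketch, the function below drops quotes that sit too far from the cross-venue median and then takes a weighted average of the rest. The 10% band, the use of volume as the weight, and all names are assumptions chosen for illustration.

```python
from statistics import median


def composite_iv(quotes, max_dev: float = 0.10) -> float:
    """Combine per-venue implied vols for one strike and expiry.

    quotes: list of (implied_vol, weight) pairs, e.g. weight = traded volume.
    Quotes further than max_dev (as a fraction) from the median are discarded.
    """
    mid = median(iv for iv, _ in quotes)
    kept = [(iv, w) for iv, w in quotes if w > 0 and abs(iv - mid) <= max_dev * mid]
    if not kept:
        raise ValueError("no quotes survived the outlier filter")
    total_weight = sum(w for _, w in kept)
    return sum(iv * w for iv, w in kept) / total_weight


# Hypothetical venue quotes; the last one is far from the rest and is filtered out.
quotes = [(0.62, 500.0), (0.60, 300.0), (0.64, 200.0), (1.50, 50.0)]
iv = composite_iv(quotes)  # about 0.618
```

Filtering against the median before weighting means a thinly traded venue with an extreme quote cannot move the composite, whatever weight it reports.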

This pre-computation step is crucial because calculating complex pricing models directly on-chain is prohibitively expensive due to gas costs. The pre-computed risk metrics are then delivered to the protocol via a decentralized oracle network. This approach significantly reduces on-chain computation and allows for more frequent updates.

CEX Vs. DEX Data Feed Architectures

| Feature | Centralized Exchange (CEX) Data Feed | Decentralized Exchange (DEX) Data Feed |
|---|---|---|
| Source of Truth | Internal matching engine and order book. | Decentralized oracle network aggregating external sources. |
| Latency Profile | Sub-millisecond latency; proprietary high-speed feeds. | Higher latency due to oracle consensus mechanisms; dependent on block times. |
| Data Security Model | Trust-based model; relies on exchange integrity. | Cryptographic verification and economic incentives to prevent manipulation. |
| Data Granularity | Full order book depth and last trade data. | Aggregated price and implied volatility surface. |

Evolution

The evolution of data feeds for crypto options mirrors the increasing sophistication of the derivatives market itself. Early attempts to launch decentralized options struggled due to reliance on simplistic data feeds that failed to capture the complexity of volatility dynamics. The first generation of protocols used spot price feeds, which led to significant vulnerabilities when markets experienced rapid changes in implied volatility.

The market quickly realized that a simple price feed, even if accurate, provided insufficient data for options pricing. The next generation of protocols shifted to specialized data feeds that calculate implied volatility surfaces off-chain and deliver them to the protocol. This move toward pre-computation was essential to overcome the high cost and latency of on-chain calculation.

The current state involves highly specialized data providers that offer granular, high-frequency data streams, specifically tailored for options protocols. The focus has moved from simple data reporting to a real-time risk engine, where the feed itself performs complex calculations to determine margin requirements and liquidation thresholds.

The data feed’s evolution reflects a transition from simple price reporting to complex, pre-calculated risk metric delivery, driven by the need for capital efficiency in decentralized markets.

Horizon

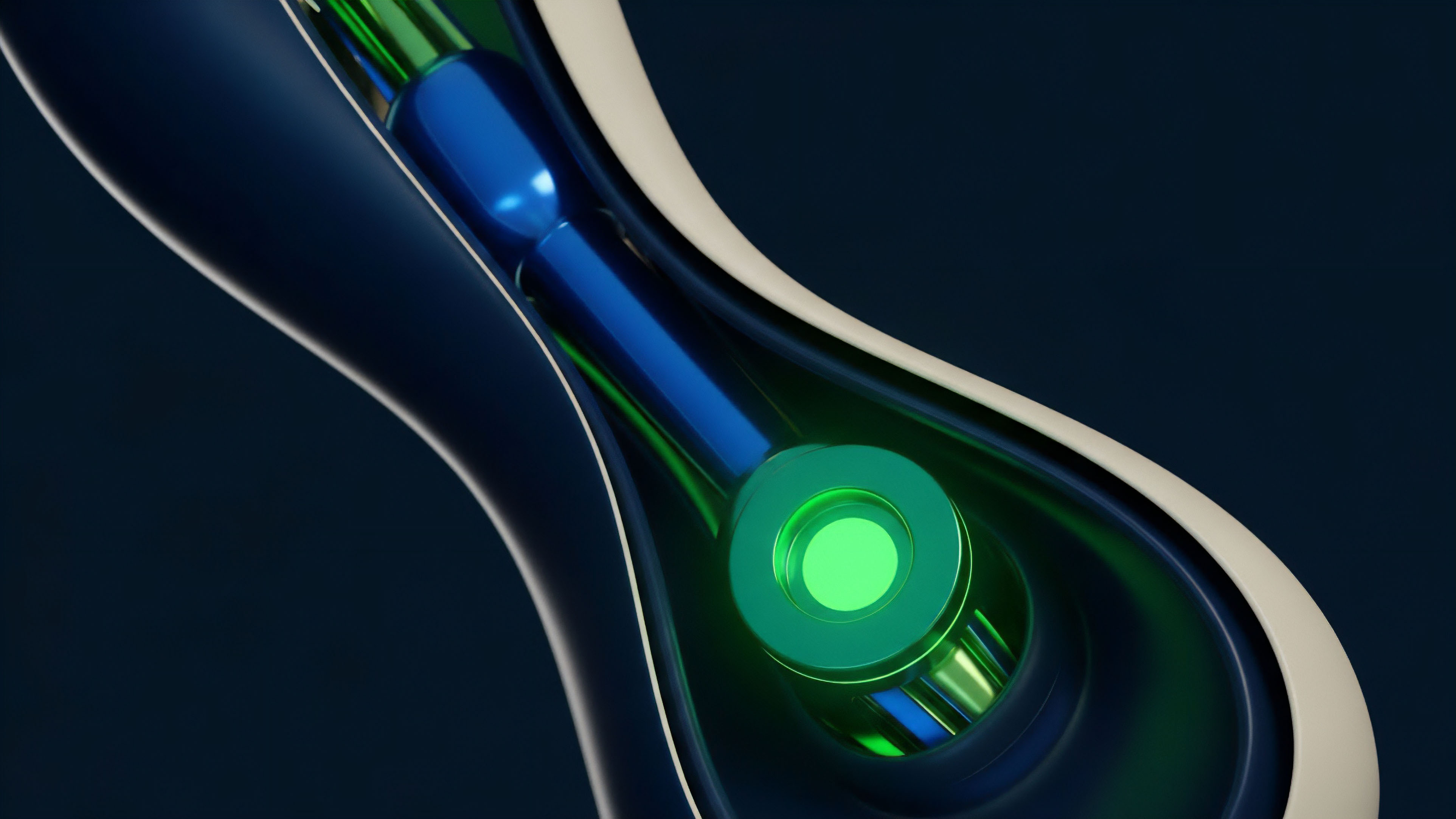

Looking ahead, the next generation of real-time data feeds will move beyond simple data aggregation to predictive analytics and real-time risk modeling. We will see a shift toward decentralized data streams that offer more than just price data; they will deliver real-time calculations of risk metrics and predictive volatility surfaces. The focus will be on reducing data latency to near-CEX levels while maintaining decentralization.

This requires advancements in decentralized oracle networks (DONs) that can perform complex computations off-chain in a verifiable manner. The goal is to provide real-time margin calculations and automated liquidations based on a continuously updated risk model, rather than relying on lagging price triggers. The data feed will become a real-time risk engine, enabling proactive risk management and allowing protocols to handle complex derivatives with greater capital efficiency.

Future Architectural Developments

The future of options data feeds will be defined by three key architectural shifts:

- Specialized Volatility Oracles: New oracle designs will emerge specifically for options, focusing on delivering high-frequency updates to the implied volatility surface. These oracles will use advanced machine learning models to predict future volatility and feed these predictions back into the pricing models.

- Decentralized Data Streaming: The move toward high-frequency data requires a shift away from periodic block-based updates. New protocols will implement decentralized streaming architectures that allow data to flow continuously, reducing the latency gap between CEXs and DEXs.

- Pre-computation and Risk Modeling: Data feeds will increasingly perform complex risk calculations off-chain, such as calculating Value at Risk (VaR) and Expected Shortfall, to provide protocols with real-time risk metrics for automated collateral management.
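To make the last item concrete, here is a minimal sketch of historical Value at Risk and Expected Shortfall computed from a set of profit-and-loss scenarios. The scenario values are made up for illustration, and the tail-count convention used is one simple choice among several in common use.

```python
def var_es(pnl, level: float = 0.95):
    """Historical VaR and Expected Shortfall, both reported as positive losses.

    pnl: profit-and-loss outcomes (negative = loss) from historical or simulated scenarios.
    level: confidence level; the worst (1 - level) share of outcomes forms the tail.
    """
    losses = sorted((-x for x in pnl), reverse=True)          # largest loss first
    n_tail = max(1, int(len(losses) * (1.0 - level) + 1e-9))  # at least one scenario in the tail
    tail = losses[:n_tail]
    value_at_risk = tail[-1]                 # smallest loss that still falls in the tail
    expected_shortfall = sum(tail) / n_tail  # average loss across the tail
    return value_at_risk, expected_shortfall


# Twenty hypothetical scenario outcomes for a portfolio.
pnl = [-50, -30, -20, -10, -5, 0, 1, 2, 3, 4, 5, 6, 7, 8, 9, 10, 12, 15, 20, 25]
var_90, es_90 = var_es(pnl, level=0.90)  # tail holds the two worst outcomes: VaR 30, ES 40.0
```

Expected Shortfall is never smaller than VaR at the same level, because it averages the losses at and beyond the VaR threshold.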

Systemic Risk and Data Integrity

The reliance on real-time data feeds introduces new forms of systemic risk. A single point of failure in the data feed or a manipulation attack can lead to widespread protocol insolvency. The future challenge is to ensure data integrity and security through a combination of economic incentives, cryptographic verification, and robust data source diversification.

The data feed must be resistant to market manipulation, where an attacker artificially inflates or deflates prices on a single exchange to trigger liquidations. This requires aggregation methods that tolerate the failure or manipulation of any single source.

The data feed’s role is evolving from a passive data source to an active risk management system, capable of pre-computation and predictive modeling to prevent systemic failure.
